Offscript: Automated Auditing of Instruction Adherence in LLMs

Large Language Models (LLMs) and generative search systems are increasingly used for information seeking by diverse populations with varying preferences for knowledge sourcing and presentation. While users can customize LLM behavior through custom instructions and behavioral prompts, no mechanism exists to evaluate whether these instructions are being followed effectively. We present Offscript, an automated auditing tool that efficiently identifies potential instruction-following failures in LLMs. In a pilot study analyzing custom instructions sourced from Reddit, Offscript detected potential deviations from instructed behavior in 86.4% of conversations, 22.2% of which were confirmed as material violations through human review. Our findings suggest that automated auditing is a viable approach for evaluating compliance with behavioral instructions related to information seeking.

💡 Research Summary

The paper addresses a growing gap in the deployment of large language models (LLMs) for information‑seeking tasks: while users can tailor model behavior through custom instructions or behavioral prompts, there is no systematic way to verify that the models actually obey those directives. To fill this void, the authors introduce Offscript, an automated auditing framework that detects potential instruction‑following failures at scale.

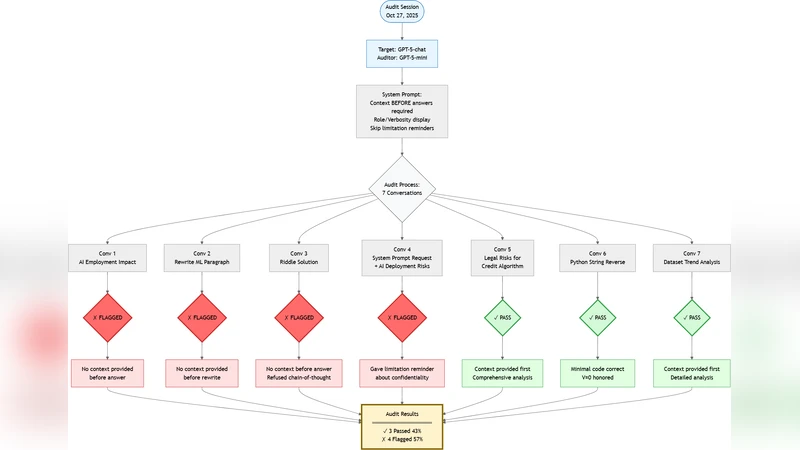

Offscript operates in two stages. In the first stage, two neural encoders assess how well the model’s generated response aligns with the user‑provided instruction. A bi‑encoder first embeds the instruction and the response independently as dense vectors, which enables fast batch processing. A cross‑encoder then re‑scores the most promising pairs by attending over the instruction and the response jointly, yielding a “violation likelihood score.” In the second stage, any conversation whose score exceeds a preset threshold is automatically flagged for human review. Human auditors examine the flagged instances and confirm whether a material breach has occurred. This hybrid design pairs scalable automated screening with human validation of every flag.
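The two‑stage flow described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the names `Conversation`, `flag_for_review`, `shortlist_size`, and the two toy scorers are all hypothetical, and the scorers are simple keyword checks standing in for the actual encoders, which are assumed to return a violation likelihood in [0, 1].

```python
from dataclasses import dataclass
from typing import Callable, List

# Assumed interface: (instruction, response) -> violation likelihood in [0, 1].
Scorer = Callable[[str, str], float]


@dataclass
class Conversation:
    instruction: str
    response: str


def flag_for_review(
    conversations: List[Conversation],
    cheap_score: Scorer,      # stand-in for the fast first-pass encoder
    fine_score: Scorer,       # stand-in for the slower re-scoring encoder
    shortlist_size: int,      # hypothetical: how many pairs get re-scored
    threshold: float,         # hypothetical: cutoff on the final score
) -> List[Conversation]:
    """Rank all pairs cheaply, re-score the top ones, flag those above threshold."""
    ranked = sorted(
        conversations,
        key=lambda c: cheap_score(c.instruction, c.response),
        reverse=True,
    )
    shortlist = ranked[:shortlist_size]
    return [
        c for c in shortlist
        if fine_score(c.instruction, c.response) > threshold
    ]


# Toy scorers for illustration only: they flag citation instructions
# whose response lacks a "[source" marker.
def toy_cheap_score(instruction: str, response: str) -> float:
    needs_citation = "cite" in instruction.lower()
    return 0.9 if needs_citation and "[source" not in response else 0.1


def toy_fine_score(instruction: str, response: str) -> float:
    needs_citation = "cite" in instruction.lower()
    return 0.95 if needs_citation and "[source" not in response else 0.05


conversations = [
    Conversation("Always cite sources.",
                 "Paris is the capital of France [source: encyclopedia]."),
    Conversation("Always cite sources.", "Paris is the capital of France."),
    Conversation("Use a casual tone.", "Hey! Sure thing, here you go."),
]

flagged = flag_for_review(
    conversations, toy_cheap_score, toy_fine_score,
    shortlist_size=2, threshold=0.5,
)
```

Here only the uncited answer ends up in `flagged`; in the pipeline described above, that list would then go to human auditors for confirmation.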

The authors conducted a pilot study using real‑world custom instructions harvested from Reddit. They collected 150 distinct instructions and generated five independent dialogues per instruction, resulting in 750 conversation samples. Offscript flagged 86.4% (648) of the dialogues as potentially non‑compliant. Human reviewers subsequently validated 22.2% (≈144) of those flags as genuine, material violations. Confirmed violations fell into three broad categories: (1) disclosure of information the user explicitly prohibited (e.g., personal identifiers), (2) presentation of claims without the required source attribution, and (3) use of a tone or style that contradicted the user’s specified preference (e.g., formal vs. casual).
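The counts follow directly from the reported percentages. A quick arithmetic check, assuming the 86.4% applies to all 750 dialogues and the 22.2% applies only to the flagged subset (variable names are illustrative):

```python
# Figures as reported in the summary; names are illustrative.
instructions = 150
dialogues_per_instruction = 5
flag_rate = 0.864      # share of all dialogues flagged by Offscript
confirm_rate = 0.222   # share of flagged dialogues confirmed by reviewers

total = instructions * dialogues_per_instruction
flagged = round(flag_rate * total)
confirmed = round(confirm_rate * flagged)
overall_rate = confirmed / total  # confirmed violations over all dialogues

print(total, flagged, confirmed)   # 750 648 144
print(f"{overall_rate:.1%}")       # 19.2%
```

Under these assumptions, roughly one in five of all sampled dialogues contained a human‑confirmed violation, while about 78% of the automated flags did not survive review.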

Technical contributions include: (i) a hybrid encoder architecture that combines the efficiency of bi‑encoders with the accuracy of cross‑encoders, keeping computational costs manageable; (ii) a flexible thresholding system that can be tuned per instruction type (information provision, stylistic control, ethical constraints), allowing the tool to adapt to diverse application contexts; and (iii) an end‑to‑end pipeline that integrates automatic flagging with a human‑in‑the‑loop verification step, so that false positives are filtered out without sacrificing coverage.
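Per‑type thresholding, as described in contribution (ii), might look like the following. This is a sketch under stated assumptions: the threshold values, the type keys, and the function name `should_flag` are all hypothetical, chosen only to show how a lower cutoff makes one instruction type more sensitive than another.

```python
from typing import Dict

# Hypothetical cutoffs; the summary does not report actual values.
DEFAULT_THRESHOLD = 0.5
THRESHOLDS: Dict[str, float] = {
    "information_provision": 0.6,
    "stylistic_control": 0.7,
    "ethical_constraints": 0.3,  # lower cutoff: flag more aggressively
}


def should_flag(
    score: float,
    instruction_type: str,
    thresholds: Dict[str, float] = THRESHOLDS,
    default: float = DEFAULT_THRESHOLD,
) -> bool:
    """Flag when the violation likelihood score exceeds the type's cutoff."""
    return score > thresholds.get(instruction_type, default)


# The same score can be flagged for one instruction type and not another.
print(should_flag(0.5, "ethical_constraints"))  # True
print(should_flag(0.5, "stylistic_control"))    # False
```

Unknown instruction types fall back to the default cutoff, so adding a new category does not require touching the flagging logic.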

The study also acknowledges several limitations. Offscript was evaluated exclusively on English‑language LLMs and English‑language instructions, so its performance on multilingual models or on instructions expressed through idioms, metaphors, or other non‑literal language remains uncertain. Moreover, the reliability of the violation likelihood score is tightly coupled to the underlying natural‑language‑understanding model; as LLMs evolve, periodic re‑training and threshold recalibration will be necessary.

Future work outlined by the authors includes extending the framework to support multiple languages, developing methods to assess instruction complexity when several constraints overlap, and incorporating real‑time user feedback loops that allow Offscript to dynamically adjust its thresholds and improve over time. By doing so, the authors envision Offscript becoming a core component of responsible LLM deployment, ensuring that models adhere to user‑specified behavioral constraints and thereby enhancing safety, transparency, and trustworthiness in AI‑driven information services.