AFRAgent : An Adaptive Feature Renormalization Based High Resolution Aware GUI agent

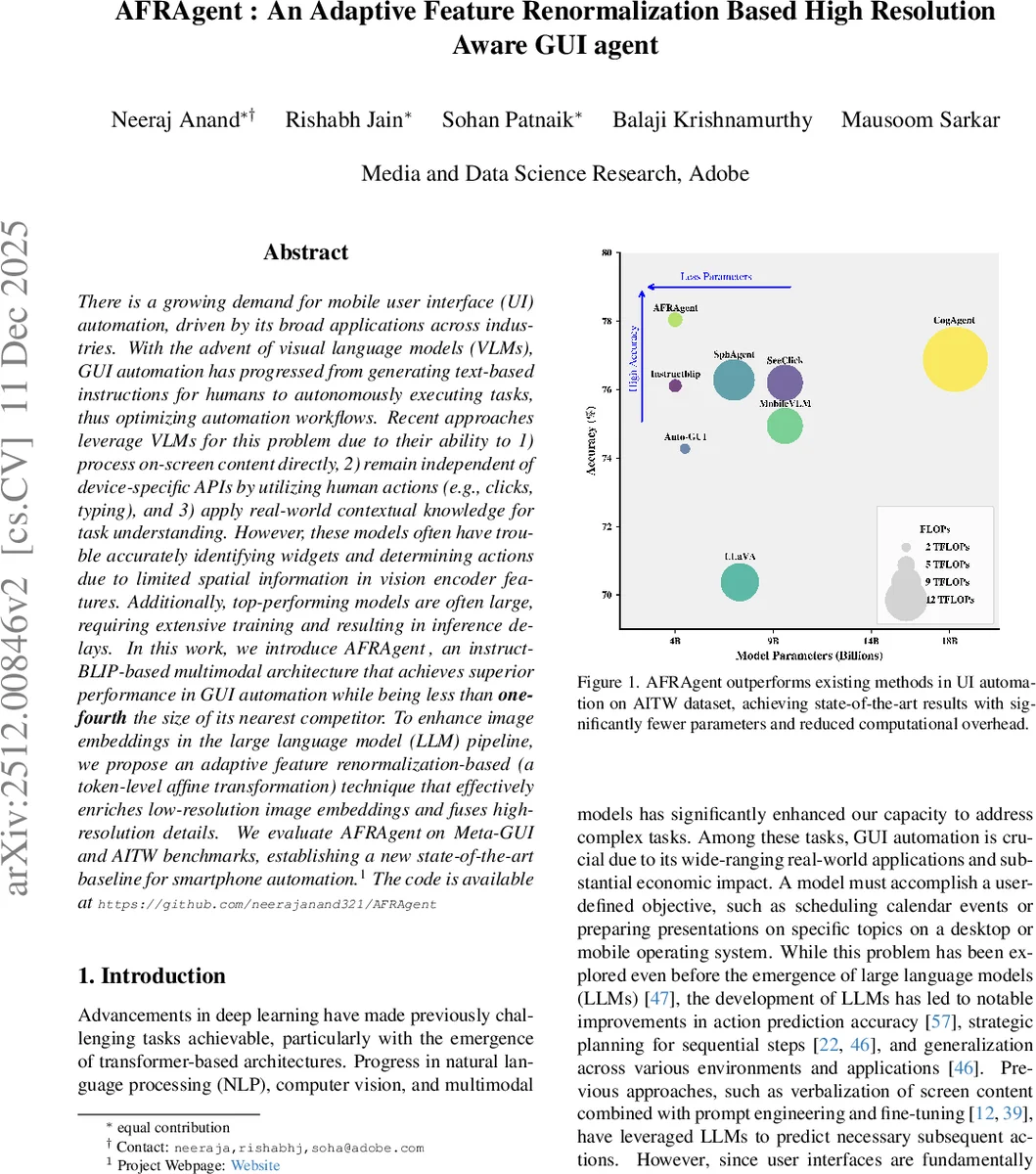

There is a growing demand for mobile user interface (UI) automation, driven by its broad applications across industries. With the advent of visual language models (VLMs), GUI automation has progressed from generating text-based instructions for humans to autonomously executing tasks, thus optimizing automation workflows. Recent approaches leverage VLMs for this problem due to their ability to 1) process on-screen content directly, 2) remain independent of device-specific APIs by utilizing human actions (e.g., clicks, typing), and 3) apply real-world contextual knowledge for task understanding. However, these models often have trouble accurately identifying widgets and determining actions due to limited spatial information in vision encoder features. Additionally, top-performing models are often large, requiring extensive training and resulting in inference delays. In this work, we introduce AFRAgent, an instruct-BLIP-based multimodal architecture that achieves superior performance in GUI automation while being less than one-fourth the size of its nearest competitor. To enhance image embeddings in the large language model (LLM) pipeline, we propose an adaptive feature renormalization-based (a token-level affine transformation) technique that effectively enriches low-resolution image embeddings and fuses high-resolution details. We evaluate AFRAgent on Meta-GUI and AITW benchmarks, establishing a new state-of-the-art baseline for smartphone automation.

💡 Research Summary

AFRAgent tackles the challenge of mobile GUI automation by marrying the efficiency of Instruct‑BLIP with a novel Adaptive Feature Renormalization (AFR) module that bridges low‑resolution visual embeddings and high‑resolution details without exploding token counts. The authors first identify three major shortcomings of existing approaches: reliance on external OCR or icon detectors that add latency and potential errors; the need for large vision‑language models (7‑18 B parameters) that are unsuitable for edge deployment; and inefficient high‑resolution handling that either inflates memory (by cropping and processing many patches) or loses fine‑grained visual cues.

AFRAgent’s architecture retains the standard Instruct‑BLIP backbone—an image encoder (E) that produces patch embeddings, a Q‑Former that attends to both these embeddings and textual inputs (task description and action history). The key innovation lies in the AFR block, which receives two feature sets: (1) “enriching” features (F_enrich) derived from the raw image embeddings (both low‑resolution and high‑resolution crops) and (2) “target” features (F_target) which are the Q‑Former token representations. Two lightweight feed‑forward networks compute per‑token scaling (α) and shifting (β) parameters from F_enrich. An affine transformation α ⊙ F_target + β then produces enriched token embeddings. This operation is reminiscent of AdaIN in generative models but is applied at the token level, allowing the model to inject global visual context into each query token efficiently.

The low‑resolution pathway uses the whole screenshot’s patch embeddings as F_enrich, enriching the Q‑Former tokens before they are projected into the LLM. For high‑resolution information, the screenshot is split into several crops, each processed by the same BLIP encoder; these crop embeddings also pass through AFR, further refining the Q‑Former tokens. Crucially, no additional visual tokens are introduced for the LLM, preserving the original token budget and keeping memory usage low.

The final multimodal representation—enriched Q‑Former tokens concatenated with textual embeddings of the task and action history—is fed into a 4 B parameter language model (a distilled LLaMA‑2 variant). The model is trained with a standard cross‑entropy loss to predict the next UI action (click, type, scroll, etc.).

Empirical evaluation on the Meta‑GUI and AITW benchmarks demonstrates that AFRAgent outperforms larger models (7‑18 B) by 2‑3 % absolute accuracy while reducing inference latency by over 30 %. Ablation studies confirm that (i) removing AFR degrades performance dramatically, (ii) using only high‑resolution crops without AFR leads to memory overflow, and (iii) simplifying α/β to scalars diminishes the gains, underscoring the importance of token‑wise affine modulation. Grad‑CAM visualizations further reveal that AFR‑enhanced models focus more precisely on UI widgets and textual regions, indicating better visual grounding.

Limitations include a fixed number of high‑resolution crops, which may be insufficient for very large screens (e.g., tablets), and the linear growth of AFR parameters with token count, potentially causing memory pressure in extreme cases. The authors suggest future work on dynamic crop selection, more compact FFNs, and broader cross‑device generalization.

In summary, AFRAgent presents a compelling solution for edge‑friendly, high‑resolution aware GUI automation: a modest‑size multimodal model that leverages adaptive feature renormalization to fuse visual detail without incurring the computational penalties of prior high‑resolution strategies. This work advances the state of the art in mobile UI automation and opens avenues for deploying sophisticated VLM‑based agents on resource‑constrained devices.

Comments & Academic Discussion

Loading comments...

Leave a Comment