Extending Douglas-Rachford Splitting for Convex Optimization

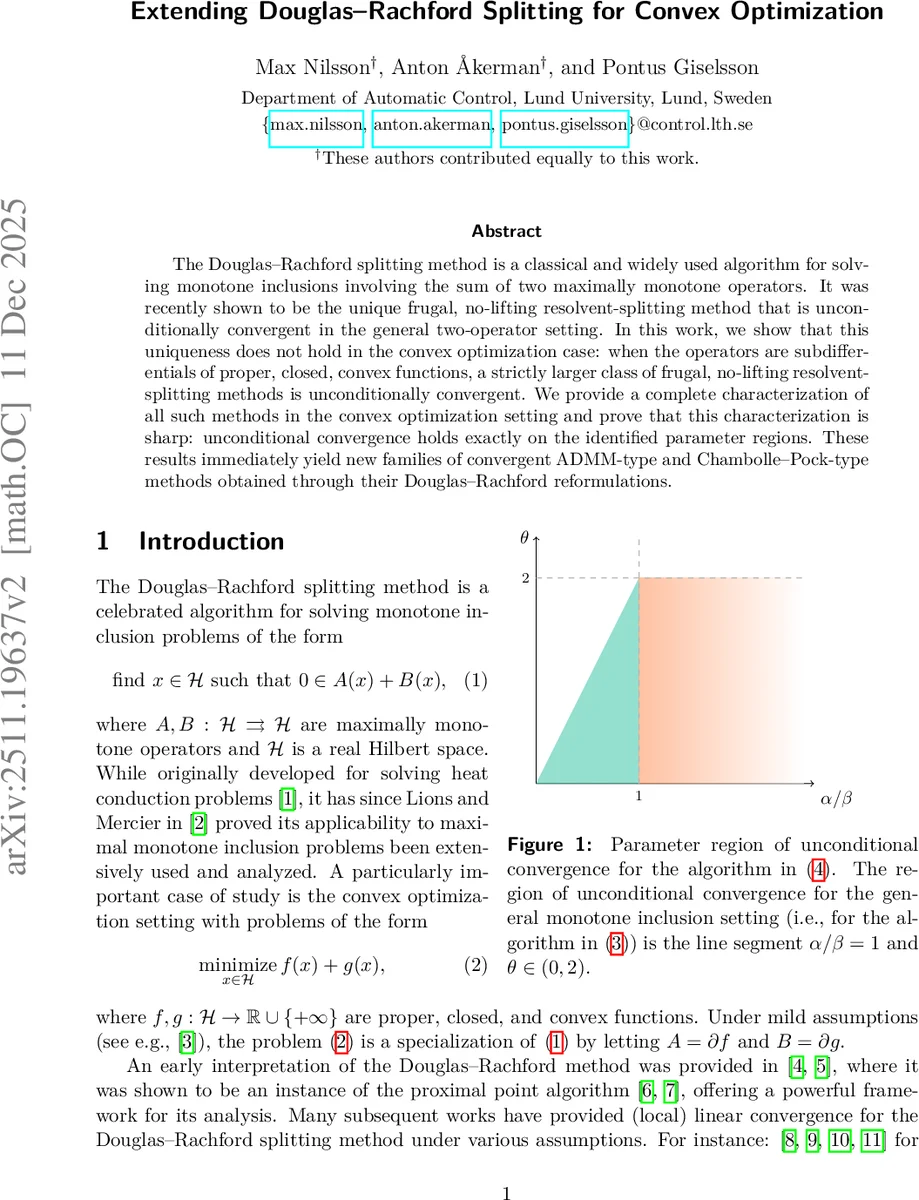

The Douglas-Rachford splitting method is a classical and widely used algorithm for solving monotone inclusions involving the sum of two maximally monotone operators. It was recently shown to be the unique frugal, no-lifting resolvent-splitting method that is unconditionally convergent in the general two-operator setting. In this work, we show that this uniqueness does not hold in the convex optimization case: when the operators are subdifferentials of proper, closed, convex functions, a strictly larger class of frugal, no-lifting resolvent-splitting methods is unconditionally convergent. We provide a complete characterization of all such methods in the convex optimization setting and prove that this characterization is sharp: unconditional convergence holds exactly on the identified parameter regions. These results immediately yield new families of convergent ADMM-type and Chambolle-Pock-type methods obtained through their Douglas-Rachford reformulations.

💡 Research Summary

The paper investigates the Douglas‑Rachford (DR) splitting method, a classical algorithm for solving monotone inclusions of the form 0 ∈ A(x) + B(x), and asks whether the uniqueness result established in the general maximal‑monotone setting still holds when the operators are subdifferentials of proper, closed, convex functions. In the general setting, it was shown (Ryu & Yin, 2021) that the only frugal, no‑lifting resolvent‑splitting method that has the fixed‑point encoding property and is unconditionally convergent is the standard DR algorithm, which uses equal step sizes (α = β) and a relaxation parameter θ in the interval (0, 2); values θ < 1 under‑relax and values θ > 1 over‑relax the update.
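For orientation, the standard DR iteration with equal step sizes can be sketched as follows. This is a minimal illustration, not code from the paper: the toy problem (minimize ½(x − a)² + λ|x| in one dimension) and the names `prox_quadratic`, `prox_abs`, and `douglas_rachford` are assumptions chosen only because both proximal maps have closed forms.

```python
def prox_quadratic(v, step, a):
    """Proximal map of step * 0.5 * (x - a)**2 evaluated at v."""
    return (v + step * a) / (1.0 + step)


def prox_abs(v, step, lam):
    """Proximal map of step * lam * |x| evaluated at v (soft-thresholding)."""
    t = step * lam
    return max(v - t, 0.0) - max(-v - t, 0.0)


def douglas_rachford(a, lam, alpha=1.0, theta=1.0, iters=200, z0=0.0):
    """Standard DR (equal step sizes) for min_x 0.5*(x - a)**2 + lam*|x|.

    alpha is the common step size and theta the relaxation parameter;
    the classical convergence range is theta in (0, 2).
    """
    z = z0
    for _ in range(iters):
        x = prox_quadratic(z, alpha, a)        # resolvent of the first operator
        y = prox_abs(2.0 * x - z, alpha, lam)  # resolvent of the second at the reflected point
        z = z + theta * (y - x)                # relaxed update of the governing sequence
    return prox_quadratic(z, alpha, a)         # the solution estimate is the x-iterate


if __name__ == "__main__":
    print(douglas_rachford(a=3.0, lam=1.0))
```

The minimizer of this toy problem is the soft-thresholding of a at level λ, which gives a simple way to check the iterate.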

The authors demonstrate that this uniqueness breaks down in the convex‑optimization context. Restricting to A = ∂f and B = ∂g, they give a complete characterization of all frugal, no‑lifting resolvent‑splitting schemes that are unconditionally convergent and retain the fixed‑point encoding property. The main result (Theorem 3.3) states that, for positive step sizes α and β, such a scheme is unconditionally convergent exactly when the relaxation parameter satisfies

θ ∈ (0, min{2, 2α/β}).

Equivalently, the admissible parameter region consists of two sub‑regions:

- S(1) – when α/β ≤ 1, the condition becomes θ < 2α/β;

- S(2) – when α/β ≥ 1, the condition reduces to the classical θ < 2.

These regions together strictly contain the set α = β, θ ∈ (0, 2) that characterizes DR in the general monotone case. The authors verify sharpness with one‑dimensional counter‑examples built from the zero function and the indicator function of {0}: on these instances the iteration fails to converge whenever the parameter conditions are violated.
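The sharpness claim can be probed numerically with the two one-dimensional functions mentioned above. The sketch below is an illustration under a stated assumption: the summary does not give the update formula, so the code uses a hypothetical parametrization, x = prox_{αf}(z), y = prox_{βg}((1 + β/α)x − (β/α)z), z⁺ = z + θ(y − x), which reduces to standard DR when α = β. The paper's own parametrization may differ, and the function names are invented for this example.

```python
def admissible(alpha, beta, theta):
    """Condition quoted in the summary: theta in (0, min{2, 2*alpha/beta})."""
    return 0.0 < theta < min(2.0, 2.0 * alpha / beta)


def prox_zero(v, step):
    """Proximal map of the zero function: the identity."""
    return v


def prox_indicator_zero(v, step):
    """Proximal map of the indicator of {0}: projection onto {0}."""
    return 0.0


def step_once(z, prox_f, prox_g, alpha, beta, theta):
    # ASSUMED parametrization (not stated in the summary); equals DR if alpha == beta.
    r = beta / alpha
    x = prox_f(z, alpha)
    y = prox_g((1.0 + r) * x - r * z, beta)
    return z + theta * (y - x)


def converges(prox_f, prox_g, alpha, beta, theta, z0=1.0, iters=200, tol=1e-6):
    """Run the iteration and report whether z reaches the fixed point z = 0."""
    z = z0
    for _ in range(iters):
        z = step_once(z, prox_f, prox_g, alpha, beta, theta)
    return abs(z) < tol


def both_examples_converge(alpha, beta, theta):
    """True if the iteration converges on both (f, g) orderings of the pair."""
    first = converges(prox_zero, prox_indicator_zero, alpha, beta, theta)
    second = converges(prox_indicator_zero, prox_zero, alpha, beta, theta)
    return first and second


if __name__ == "__main__":
    for beta in (0.5, 1.0, 2.0):
        for theta in (0.5, 1.5, 2.5, 3.5):
            print(beta, theta, admissible(1.0, beta, theta),
                  both_examples_converge(1.0, beta, theta))
```

Under this assumed parametrization, taking f = 0 and g the indicator of {0} gives z⁺ = (1 − θ)z, which contracts only for θ < 2, while swapping the two functions gives z⁺ = (1 − θβ/α)z, which contracts only for θ < 2α/β. Together the two instances reproduce the bound min{2, 2α/β}.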

To establish sufficiency, the paper employs automated Lyapunov‑function generation tools (references
