ObjectAdd: Adding Objects into Image via a Training-Free Diffusion Modification Fashion

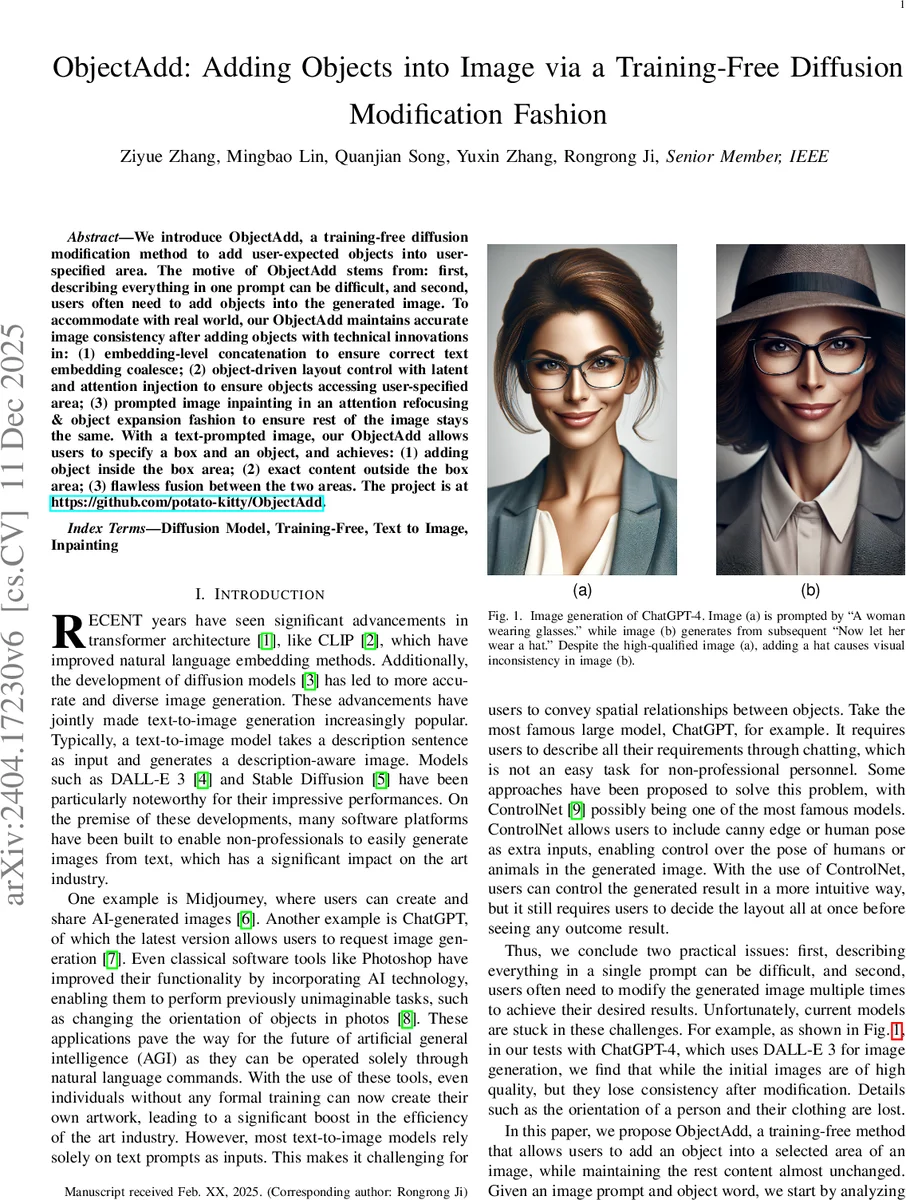

We introduce ObjectAdd, a training-free diffusion modification method to add user-expected objects into user-specified area. The motive of ObjectAdd stems from: first, describing everything in one prompt can be difficult, and second, users often need to add objects into the generated image. To accommodate with real world, our ObjectAdd maintains accurate image consistency after adding objects with technical innovations in: (1) embedding-level concatenation to ensure correct text embedding coalesce; (2) object-driven layout control with latent and attention injection to ensure objects accessing user-specified area; (3) prompted image inpainting in an attention refocusing & object expansion fashion to ensure rest of the image stays the same. With a text-prompted image, our ObjectAdd allows users to specify a box and an object, and achieves: (1) adding object inside the box area; (2) exact content outside the box area; (3) flawless fusion between the two areas

💡 Research Summary

ObjectAdd introduces a training‑free method for editing images generated by large text‑to‑image diffusion models such as Stable Diffusion or DALL‑E 3. The goal is to let a user specify a rectangular region (a mask) and an object word, and then insert that object precisely inside the region while keeping the rest of the image unchanged. The authors identify three core challenges: (1) concatenating two textual prompts (the original description P and the added object W) leads to embedding confusion because CLIP‑style encoders use self‑attention; (2) ensuring the added object appears exactly within the user‑drawn box; (3) preserving the original content outside the box after the edit.

To solve (1), ObjectAdd encodes P and W separately with a CLIP text encoder, then concatenates the resulting embeddings while sharing the CLS token. This “embedding‑level concatenation” prevents the two prompts from interfering with each other, providing a clean combined embedding E{P,W} for downstream diffusion.

For (2), the method injects information at both the latent and attention levels. First, a dedicated diffusion run with only W produces a latent Ĩ₀. Then, a training‑free backward guidance technique (similar to the one in “DragDiffusion”) is applied: the cross‑attention map for the object token k is compared with the down‑sampled mask Mγ, and a loss based on their overlap is minimized by gradient descent on the latent at selected diffusion steps. This forces the object’s attention to concentrate inside the mask. Simultaneously, early diffusion steps amplify the same cross‑attention map (forward guidance) so the object is strongly conditioned on the masked region. The combination of backward and forward guidance yields precise spatial control without any model fine‑tuning.

Addressing (3), the authors propose a “prompted image inpainting” stage that runs in the middle of the diffusion process. They observe that the object‑related attention map becomes highly responsive; they cluster this map to extract an object‑centric sub‑figure that largely overlaps the mask. Seed pixels are chosen at the boundary of this sub‑figure, and neighboring non‑object pixels are recovered if they are close to the seeds. By applying attention refocusing and object expansion in a single diffusion step, the background outside the mask is effectively restored to its original appearance, achieving seamless fusion between edited and untouched regions.

ObjectAdd also extends to real‑image editing. The input photograph is first inverted to latent noise using an improved inversion scheme (building on DDIM inversion and recent refinements). The mask is replaced by a segmentation of the real image, and the same three‑module pipeline is applied, resulting in high‑quality object insertion while preserving photographic details.

Experiments on a variety of prompts (adding hats to faces, inserting animals into landscapes, placing furniture in indoor scenes) demonstrate that ObjectAdd outperforms baselines such as ControlNet, InstructPix2Pix, and DragDiffusion in terms of object placement accuracy, background fidelity, and overall visual realism. Quantitative metrics (CLIP‑Score, LPIPS) and user studies confirm the superiority of the proposed approach.

In summary, ObjectAdd achieves “add‑modify‑preserve” by (i) cleanly merging text embeddings, (ii) jointly steering latent and cross‑attention representations to enforce layout constraints, and (iii) using attention‑based inpainting to keep the untouched area unchanged. The method requires no additional training data, works with off‑the‑shelf diffusion models, and can be readily integrated into interactive image‑editing tools. Future work may explore multi‑object editing, video frame consistency, and real‑time UI integration.

Comments & Academic Discussion

Loading comments...

Leave a Comment