IEEE TRANSACTIONS ON LEARNING TECHNOLOGIES - PREPRINT

Developing and Evaluating a Large Language Model-Based Automated Feedback System Grounded in Evidence-Centered Design for Supporting Physics Problem Solving

Holger Maus, Paul Tschisgale, Fabian Kieser, Stefan Petersen, Peter Wulff

Abstract: Generative AI offers new opportunities for individualized and adaptive learning, particularly through large language model (LLM)-based feedback systems. While LLMs can produce effective feedback for relatively straightforward conceptual tasks, delivering high-quality feedback for tasks that require advanced domain expertise, such as physics problem solving, remains a substantial challenge. This study presents the design of an LLM-based feedback system for physics problem solving grounded in evidence-centered design (ECD) and evaluates its performance within the German Physics Olympiad. Participants assessed the usefulness and accuracy of the generated feedback, which was generally perceived as useful and highly accurate. However, an in-depth analysis revealed that the feedback contained factual errors in 20% of cases, and these errors often went unnoticed by the students. We discuss the risks associated with uncritical reliance on LLM-based feedback systems and outline potential directions for generating more adaptive and reliable LLM-based feedback in the future.

Index Terms: Generative Artificial Intelligence, Large Language Models, Evidence-Centered Design, Problem Solving, Automated Feedback, Human-AI Interaction.

I. INTRODUCTION

Recent advances in artificial intelligence (AI), particularly in large language models (LLMs), have opened promising opportunities to provide automated, individualized, and meaningful feedback in a range of domains, including computer science and physics [1]–[3]. To date, however, most of these systems, and the corresponding research, have focused primarily on advancing students’ factual knowledge and conceptual understanding. It remains largely unclear to what extent such systems can also assess and provide feedback on more complex and multifaceted activities, such as problem solving, which is widely recognized as a key 21st-century skill and considered particularly important for individuals pursuing (computer) science-related careers [4], [5].

Holger Maus, Paul Tschisgale, and Stefan Petersen are with the Department of Physics Education, Leibniz Institute for Science and Mathematics Education, Kiel, Germany.

Fabian Kieser is with the Department of Physics Education Research, Free University of Berlin, Berlin, Germany.

Peter Wulff is with the Department of Physics and Physics Education Research, Ludwigsburg University of Education, Ludwigsburg, Germany.

This work was supported by the Klaus-Tschira-Stiftung (project WasP) under Grant No. 00.001.2023.

© 2025 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/republishing this material for advertising or promotional purposes, creating new collective works, for resale or redistribution to servers or lists, or reuse of any copyrighted component of this work in other works.

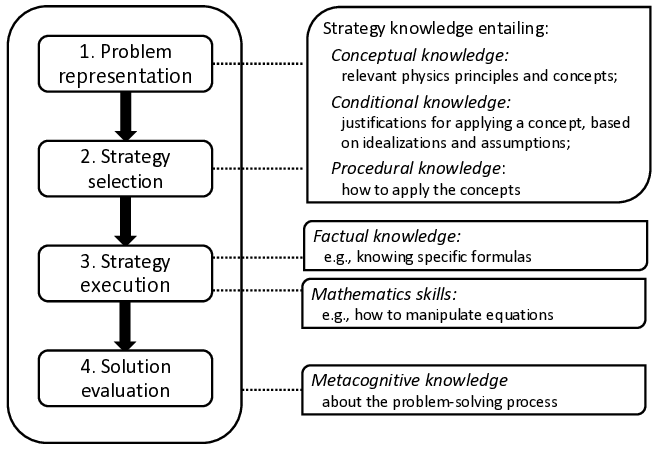

To become a proficient problem solver, continuous and targeted deliberate practice is required [6]. In particular, formative feedback has been shown to play a crucial role in developing students’ problem-solving abilities [7]. Generating high-quality automated feedback, in turn, requires an accurate assessment of students’ problem-solving abilities. This poses a considerable challenge, as problem solving is inherently complex: it involves the integration of multiple types of knowledge and skills. Evidence for these knowledge types and skills needs to be identified in students’ written problem solutions, interpreted in light of the specific problem at hand, and then appropriately addressed in the feedback. Designing a feedback system that captures this complexity in a valid and reliable manner is therefore a task that requires domain expertise and assessment skills.

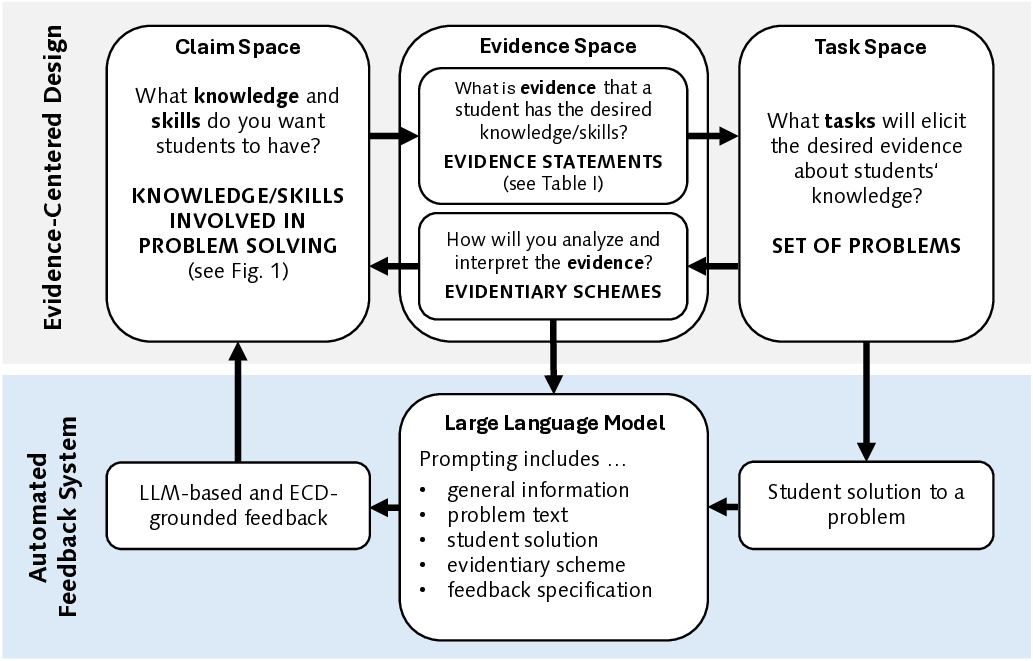

Evidence-Centered Design (ECD) [8] offers a promising framework for tackling this challenge. By systematically linking the types of knowledge and skills involved in problem solving with the respective evidence observable in students’ problem solutions, ECD provides a structure to assess students’ problem solutions and guide feedback generation. In the context of LLM-generated feedback, ECD can serve as a guiding framework to constrain and direct the LLM. LLMs are known to be prone to producing average responses (e.g., responses not tied to the specifics of an expert’s problem solution) or to confabulating information entirely [9]. Hence, rather than producing surface-level or holistic feedback, LLMs can be prompted using the ECD approach to identify specific forms of evidence (e.g., relevant concepts, missing assumptions or reasoning steps, wrong formulas) and map them t

…(Full text truncated)…
