📝 Original Info

- Title: Generative Modeling from Black-box Corruptions via Self-Consistent Stochastic Interpolants

- ArXiv ID: 2512.10857

- Date: 2025-12-11

- Authors: Chirag Modi, Jiequn Han, Eric Vanden-Eijnden, Joan Bruna

📝 Abstract

Transport-based methods have emerged as a leading paradigm for building generative models from large, clean datasets. However, in many scientific and engineering domains, clean data are often unavailable: instead, we only observe measurements corrupted through a noisy, ill-conditioned channel. A generative model for the original data thus requires solving an inverse problem at the level of distributions. In this work, we introduce a novel approach to this task based on Stochastic Interpolants: we iteratively update a transport map between corrupted and clean data samples using only access to the corrupted dataset as well as black-box access to the corruption channel. Under appropriate conditions, this iterative procedure converges towards a self-consistent transport map that effectively inverts the corruption channel, thus enabling a generative model for the clean data. We refer to the resulting method as the self-consistent stochastic interpolant (SCSI). It (i) is computationally efficient compared to variational alternatives, (ii) is highly flexible, handling arbitrary nonlinear forward models with only black-box access, and (iii) enjoys theoretical guarantees. We demonstrate superior performance on inverse problems in natural image processing and scientific reconstruction, and establish convergence guarantees of the scheme under appropriate assumptions.

📄 Full Content

Generative Modeling from Black-box Corruptions via Self-Consistent Stochastic Interpolants

Chirag Modi¹*, Jiequn Han²*, Eric Vanden-Eijnden³,¹, Joan Bruna¹,²

¹ New York University

² Flatiron Institute

³ Machine Learning Lab, Capital Fund Management

December 12, 2025

Abstract

Transport-based methods have emerged as a leading paradigm for building generative models from large, clean datasets. However, in many scientific and engineering domains, clean data are often unavailable: instead, we only observe measurements corrupted through a noisy, ill-conditioned channel. A generative model for the original data thus requires solving an inverse problem at the level of distributions. In this work, we introduce a novel approach to this task based on Stochastic Interpolants: we iteratively update a transport map between corrupted and clean data samples using only access to the corrupted dataset as well as black-box access to the corruption channel. Under appropriate conditions, this iterative procedure converges towards a self-consistent transport map that effectively inverts the corruption channel, thus enabling a generative model for the clean data. We refer to the resulting method as the self-consistent stochastic interpolant (SCSI). It (i) is computationally efficient compared to variational alternatives, (ii) is highly flexible, handling arbitrary nonlinear forward models with only black-box access, and (iii) enjoys theoretical guarantees. We demonstrate superior performance on inverse problems in natural image processing and scientific reconstruction, and establish convergence guarantees of the scheme under appropriate assumptions.

Contents

1

Introduction

2

2

Preliminaries

4

2.1

Stochastic Interpolants

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

4

2.2

Problem Setup . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

5

3

Self-Consistent Stochastic Interpolants

6

3.1

Iterative Scheme for Self-Consistency

. . . . . . . . . . . . . . . . . . . . . . . . . . . . .

6

3.2

Truncated Inner-Loop Optimization for Efficiency . . . . . . . . . . . . . . . . . . . . . . .

7

4

Theoretical Analysis

7

4.1

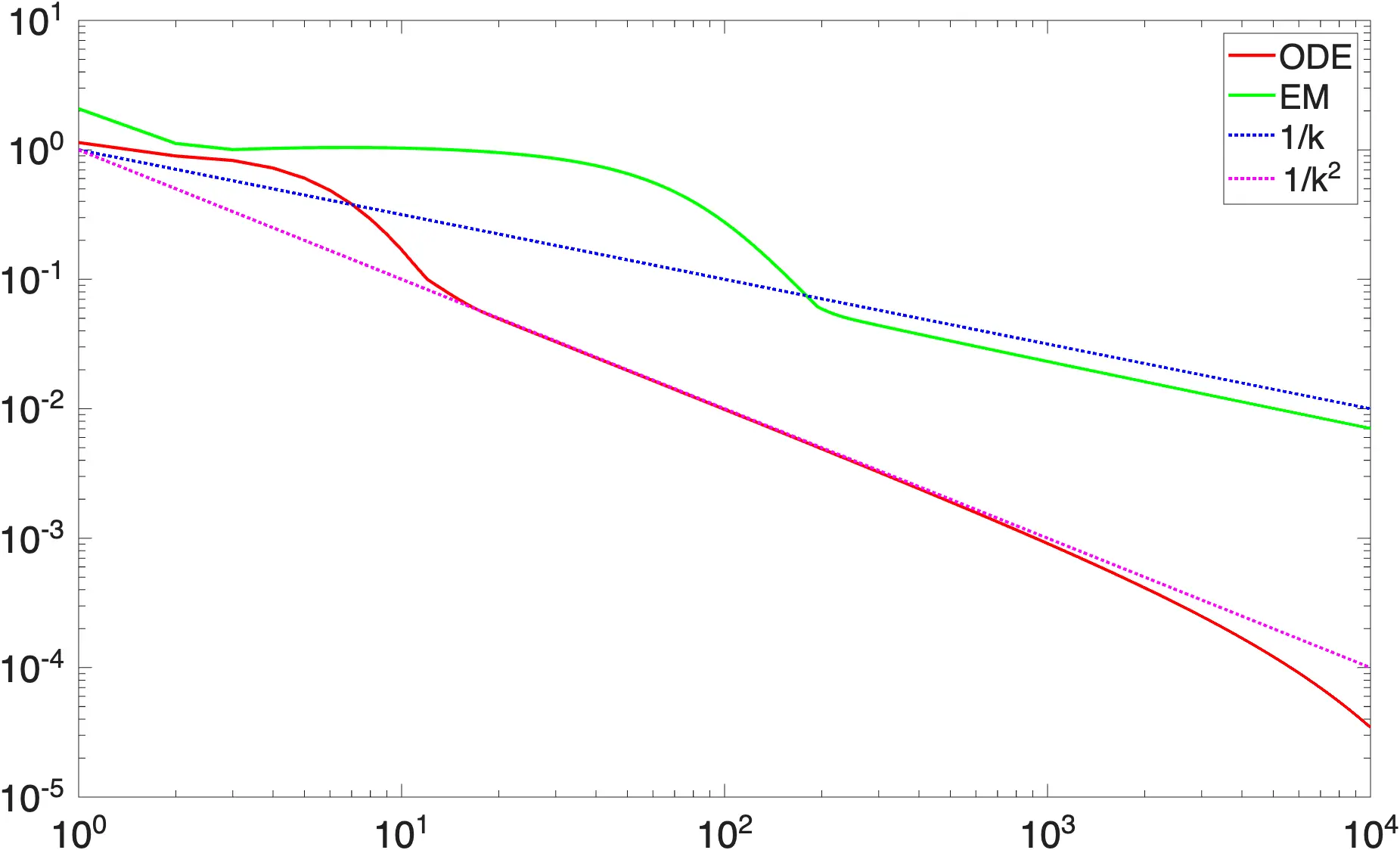

Contraction in Wasserstein Metric . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

7

4.2

Contraction in KL Divergence

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

8

∗Equal contribution.

1

arXiv:2512.10857v1 [cs.LG] 11 Dec 2025

5

Case Study: AWGN with Gaussian prior

10

6

Experiments

13

6.1

Low Dimensional Synthetic Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

13

6.2

Imaging Tasks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

14

6.3

Quasar Spectra

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

16

7

Conclusion

17

A Proofs

21

A.1

Proof of Proposition 4 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

21

A.2

Proof of Proposition 8 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

21

A.3

Proof of Theorem 9 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

22

A.4

Proof of Proposition 13 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

26

B

Detailed Algorithm Description

26

B.1

Algorithm Pseudocode . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

26

B.2

Conditional Generation via Lifting . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

27

C Implementation Details

27

C.1

Architecture of Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

27

C.2

Training Parameters . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

28

D Additional Results

29

D.1

Impact of Network Size . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

29

D.2

SDE Results . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

30

D.3 Additional Metrics for Restored Performance Comparison . . . . . . . . . . . . . . . . . . .

31

D.4 Varying Levels of Corruption . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

31

D.5

Non-Gaussian Noise: Gaussian Blurring with Poisson noise

. . . . . . . . . . . . . . . . .

33

1 Introduction

Generative modeling has become a central aspect of high-dimensional learning. Transport-based methods, including diffusion-based models [HJA20, SSDK+21] and flow-based models [AVE23, LCBH+22, LGL23], have emerged as leading frameworks with a wide range of applications from natural image synthesis [RBL+22] to molecular design [WJB+23]. These methods rely on access to clean samples 𝑥 ∼ 𝜋 of the target distribution, which are plentiful in many machine learning tasks. However, in many scientific and engineering applications, such clean data of interest are unavailable. Instead, we only observe corrupted measurements 𝑦 through a forward map 𝑦 = F(𝑥) that is typically noisy and ill-conditioned. Examples include med

…(Full text truncated)…
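To make the self-consistency idea from the abstract concrete, the following is a minimal toy sketch and not the paper's algorithm. It assumes a one-dimensional, zero-mean Gaussian prior and an additive white Gaussian noise channel, and it replaces the learned stochastic interpolant with a closed-form linear map that matches variances between two zero-mean Gaussians. The function names `corrupt`, `fit_linear_transport`, and `self_consistent_scale`, as well as the noise level and sample size, are hypothetical choices made for illustration. The loop only touches the corrupted samples and calls the channel as a black box.

```python
import numpy as np


def corrupt(x, rng, sigma=1.0):
    """Hypothetical black-box channel: additive white Gaussian noise."""
    return x + sigma * rng.standard_normal(x.shape)


def fit_linear_transport(source, target):
    """Scale a such that a * source has the same variance as target.

    Stand-in for a learned transport map; valid only under the
    zero-mean 1D Gaussian assumption of this toy example.
    """
    return np.sqrt(np.var(target) / np.var(source))


def self_consistent_scale(y_obs, rng, n_iters=50):
    """Iterate: restore, re-corrupt via the black box, refit the map."""
    a = 1.0  # initial map is the identity
    for _ in range(n_iters):
        x_hat = a * y_obs            # current guess of clean samples
        y_sim = corrupt(x_hat, rng)  # push the guess through the channel
        # Refit a map that sends simulated corrupted data to the guess.
        a = fit_linear_transport(y_sim, x_hat)
    return a


rng = np.random.default_rng(0)
x_true = 2.0 * rng.standard_normal(200_000)  # clean data, never seen by the loop
y_obs = corrupt(x_true, rng)                 # the only data the loop uses

a = self_consistent_scale(y_obs, rng)
x_restored = a * y_obs

# The restored samples match the clean distribution in variance,
# not the individual clean samples.
print("restored std:", np.std(x_restored), "clean std:", np.std(x_true))
```

In this toy setting the variance of the restored samples follows a simple recursion whose nonzero fixed point is the clean variance, so the restored standard deviation approaches the clean one even though no clean sample enters the loop. Nonlinear channels and non-Gaussian data would need a learned map in place of `fit_linear_transport`.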

Reference

This content is AI-processed based on ArXiv data.