📝 Original Info

- Title: LabelFusion: Learning to Fuse LLMs and Transformer Classifiers for Robust Text Classification

- ArXiv ID: 2512.10793

- Date: 2025-12-11

- Authors: Michael Schlee, Christoph Weisser, Timo Kivimäki, Melchizedek Mashiku, Benjamin Saefken

📝 Abstract

LabelFusion is a fusion ensemble for text classification that learns to combine a traditional transformer-based classifier (e.g., RoBERTa) with one or more Large Language Models (LLMs such as OpenAI GPT, Google Gemini, or DeepSeek) to deliver accurate and cost-aware predictions across multi-class and multi-label tasks. The package provides a simple high-level interface (AutoFusionClassifier) that trains the full pipeline end-to-end with minimal configuration, and a flexible API for advanced users. Under the hood, LabelFusion integrates vector signals from both sources by concatenating the ML backbone's embeddings with the LLM-derived per-class scores (obtained through structured prompt-engineering strategies) and feeds this joint representation into a compact multi-layer perceptron (FusionMLP) that produces the final prediction. This learned fusion approach captures complementary strengths of LLM reasoning and traditional transformer-based classifiers, yielding robust performance across domains (92.4% accuracy on AG News and 92.3% on 10-class Reuters 21578 topic classification) while enabling practical trade-offs between accuracy, latency, and cost.


📄 Full Content

LABELFUSION: LEARNING TO FUSE LLMS AND TRANSFORMER CLASSIFIERS FOR ROBUST TEXT CLASSIFICATION

Michael Schlee

Centre for Statistics

Georg-August-Universität Göttingen

Germany

Christoph Weisser

Centre for Statistics

Georg-August-Universität Göttingen

Germany

Timo Kivimäki

Department of Politics and International Studies

University of Bath

Bath, UK

Melchizedek Mashiku

Tanaq Management Services LLC

Contracting Agency to the Division of Viral Diseases

Centers for Disease Control and Prevention

Chamblee, Georgia, USA

Benjamin Saefken

Institute of Mathematics

Clausthal University of Technology

Clausthal-Zellerfeld, Germany

December 12, 2025

ABSTRACT

LabelFusion is a fusion ensemble for text classification that learns to combine a traditional transformer-based classifier (e.g., RoBERTa) with one or more Large Language Models (LLMs such as OpenAI GPT, Google Gemini, or DeepSeek) to deliver accurate and cost-aware predictions across multi-class and multi-label tasks. The package provides a simple high-level interface (AutoFusionClassifier) that trains the full pipeline end-to-end with minimal configuration, and a flexible API for advanced users. Under the hood, LabelFusion integrates vector signals from both sources by concatenating the ML backbone’s embeddings with the LLM-derived per-class scores (obtained through structured prompt-engineering strategies) and feeds this joint representation into a compact multi-layer perceptron (FusionMLP) that produces the final prediction. This learned fusion approach captures complementary strengths of LLM reasoning and traditional transformer-based classifiers, yielding robust performance across domains (92.4% accuracy on AG News and 92.3% on 10-class Reuters 21578 topic classification) while enabling practical trade-offs between accuracy, latency, and cost.

Keywords Natural Language Processing · Text Classification · Large Language Models · Ensemble Learning · Multi-class · Multi-label

arXiv:2512.10793v1 [cs.CL] 11 Dec 2025

A PREPRINT - DECEMBER 12, 2025

1 Introduction

Modern text classification spans diverse scenarios, from sentiment analysis [1, 2, 3] to complex topic tagging [4, 5, 6, 7], often under constraints that vary per deployment (throughput, cost ceilings, data privacy). While transformer classifiers such as BERT/RoBERTa achieve strong supervised performance [8, 9], frontier LLMs can excel in low-data, ambiguous, or cross-domain settings [10]. Typically, no single model family is uniformly best: LLMs are powerful but comparatively costly, whereas fine-tuned transformers are efficient but may struggle with out-of-distribution cases or extremely limited training examples.

LabelFusion addresses this gap by: (1) exposing a minimal “AutoFusion” interface that trains a learned combination of an ML backbone and one or more LLMs; (2) supporting both multi-class and multi-label classification; (3) providing a lightweight fusion learner that directly fits on LLM scores and ML embeddings; and (4) integrating cleanly with existing ensemble utilities. Researchers and practitioners can therefore leverage LLMs where they add value while retaining the speed and determinism of transformer models.

2 State of the Field

In applied NLP, common tools such as scikit-learn [11] and Hugging Face Transformers [12] offer strong baselines but do not provide a learned fusion of LLMs with supervised transformers. Orchestration frameworks (e.g., LangChain) focus on tool use rather than classification ensembles. LabelFusion contributes a focused, production-minded implementation of a small learned combiner that operates on per-class signals from both model families.

3 Functionality and Design

LabelFusion consists of three layers:

• ML component: a RoBERTa-style classifier produces per-class logits for input texts.

• LLM component(s): provider-specific classifiers (OpenAI, Gemini, DeepSeek) return per-class scores. Scores can be cached to minimize API calls when cache locations are provided.

• Fusion component: a compact MLP concatenates information-rich ML embeddings and LLM scores and outputs fused logits. The ML backbone is trained/fine-tuned with a small learning rate; the fusion MLP uses a higher rate, enabling rapid adaptation without destabilizing the encoder.
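To make the fusion step concrete, the following is a minimal sketch of a concatenate-then-MLP forward pass in plain Python. It is not the package's FusionMLP implementation: the function names (`init_linear`, `linear`, `fuse`), the single ReLU hidden layer, the toy dimensions, and the random weights are all assumptions chosen for illustration. The sketch applies a softmax for multi-class outputs and an element-wise sigmoid for multi-label outputs, which is the conventional choice for those two settings but is not stated in the text above.

```python
import math
import random


def init_linear(n_in, n_out, rng):
    """Return (weights, bias) for a dense layer with small random weights."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
    bias = [0.0] * n_out
    return weights, bias


def linear(x, layer):
    """Apply y = W x + b to a single input vector."""
    weights, bias = layer
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def softmax(logits):
    """Numerically stable softmax, used here for multi-class outputs."""
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(z):
    """Numerically stable logistic function, used here for multi-label outputs."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fuse(ml_embedding, llm_scores, hidden_layer, output_layer, multi_label=False):
    """Concatenate both signals, apply one ReLU hidden layer, return probabilities."""
    joint = list(ml_embedding) + list(llm_scores)  # joint representation
    hidden = [max(0.0, h) for h in linear(joint, hidden_layer)]
    logits = linear(hidden, output_layer)  # fused logits, one per class
    if multi_label:
        return [sigmoid(z) for z in logits]
    return softmax(logits)


# Toy sizes (assumptions): 8-dim embedding, 4 classes, 16 hidden units.
rng = random.Random(0)
EMBED_DIM, NUM_CLASSES, HIDDEN = 8, 4, 16
hidden_layer = init_linear(EMBED_DIM + NUM_CLASSES, HIDDEN, rng)
output_layer = init_linear(HIDDEN, NUM_CLASSES, rng)

ml_embedding = [rng.gauss(0.0, 1.0) for _ in range(EMBED_DIM)]  # stand-in for encoder output
llm_scores = [0.05, 0.80, 0.10, 0.05]  # stand-in for one LLM's per-class scores

multi_class_probs = fuse(ml_embedding, llm_scores, hidden_layer, output_layer)
multi_label_probs = fuse(ml_embedding, llm_scores, hidden_layer, output_layer, multi_label=True)
```

In a trained system the weights would be fitted on labeled data, with the encoder and the fusion layers given separate learning rates as described in the list above. Here the weights are random, so the outputs only demonstrate the shapes and the two output modes.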

3.1

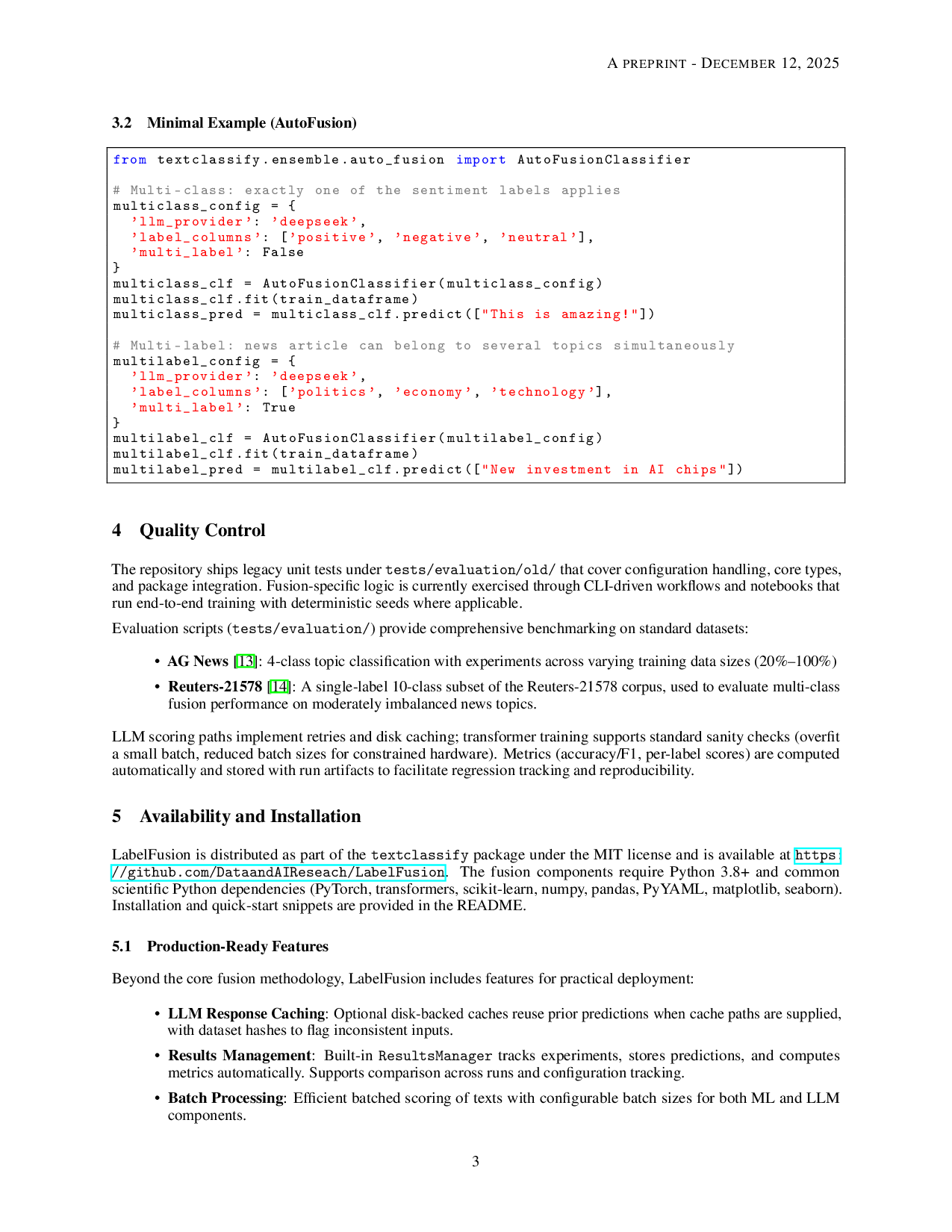

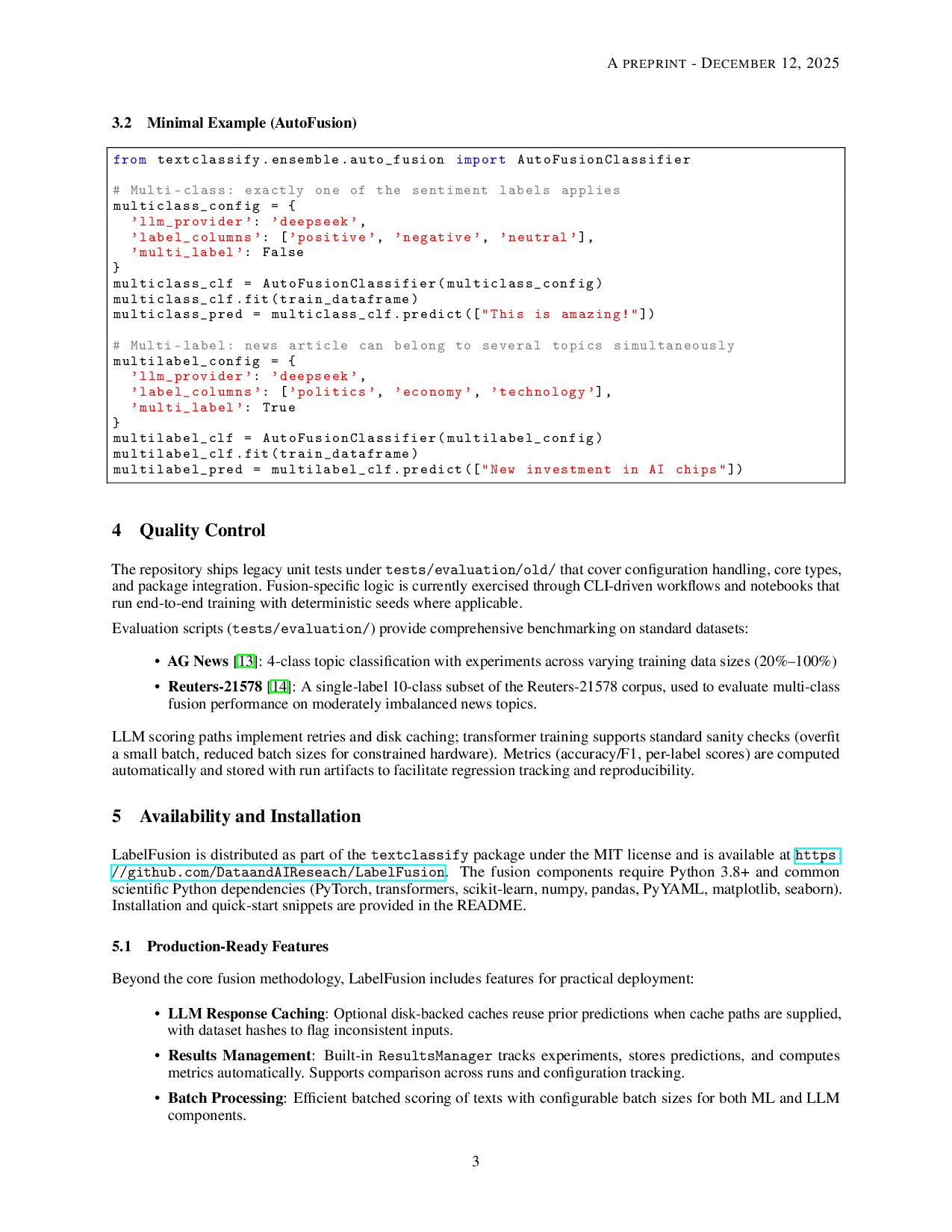

Key Features

• Multi-class and multi-label support with consistent data structures and unified training pipeline.

• Optional LLM response caching reuses on-disk predictions when cache paths are supplied, with dataset-hash validation to guard against stale files.

• Batched scoring processes multiple texts efficiently with configurable batch sizes for both ML tokenization and LLM API calls.

• Results management via ResultsManager tracks experiments, stores predictions, computes metrics, and enables reproducible research workflows.

• Flexible interfaces: command-line training via train_fusion.py with YAML configs for research, or a minimal AutoFusion API for quick deployment.

• Composable design: LabelFusion can serve as a st

…(Full text truncated)…
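The caching behaviour listed among the key features (reuse on-disk LLM predictions, but only when a dataset hash confirms the file belongs to the same inputs) can be sketched as follows. This is an illustration under stated assumptions, not the package's code: the helper names `dataset_hash` and `load_or_score`, the JSON file layout, the use of SHA-256, and the `fake_llm_scores` stand-in for a paid API call are all hypothetical.

```python
import hashlib
import json
import os
import tempfile


def dataset_hash(texts):
    """Fingerprint the input texts (order-sensitive) with SHA-256."""
    digest = hashlib.sha256()
    for text in texts:
        digest.update(text.encode("utf-8"))
        digest.update(b"\x00")  # separator so ["ab", "c"] differs from ["a", "bc"]
    return digest.hexdigest()


def load_or_score(texts, cache_path, score_fn):
    """Return cached scores if the stored hash matches; otherwise rescore and rewrite."""
    fingerprint = dataset_hash(texts)
    if cache_path and os.path.exists(cache_path):
        with open(cache_path, "r", encoding="utf-8") as handle:
            cached = json.load(handle)
        if cached.get("dataset_hash") == fingerprint:
            return cached["scores"]  # cache hit: no API call needed
    scores = score_fn(texts)  # cache miss or stale file
    if cache_path:
        with open(cache_path, "w", encoding="utf-8") as handle:
            json.dump({"dataset_hash": fingerprint, "scores": scores}, handle)
    return scores


# Stand-in for an LLM provider call; it counts invocations so cache hits are visible.
calls = {"count": 0}


def fake_llm_scores(texts):
    calls["count"] += 1
    return [[0.5, 0.5] for _ in texts]


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "llm_scores.json")
    train = ["stocks rally", "team wins final"]
    first = load_or_score(train, path, fake_llm_scores)   # miss: scores computed and stored
    second = load_or_score(train, path, fake_llm_scores)  # hit: scores read from disk
    calls_after_reuse = calls["count"]
    load_or_score(train + ["new text"], path, fake_llm_scores)  # hash mismatch: rescored
    calls_after_change = calls["count"]
```

Hashing the texts themselves, rather than trusting the file name, means a cache written for one split cannot silently be reused for another. A production version would likely also fold the model name and prompt template into the fingerprint, since changing either invalidates the stored scores.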

