ChronusOmni: Improving Time Awareness of Omni Large Language Models

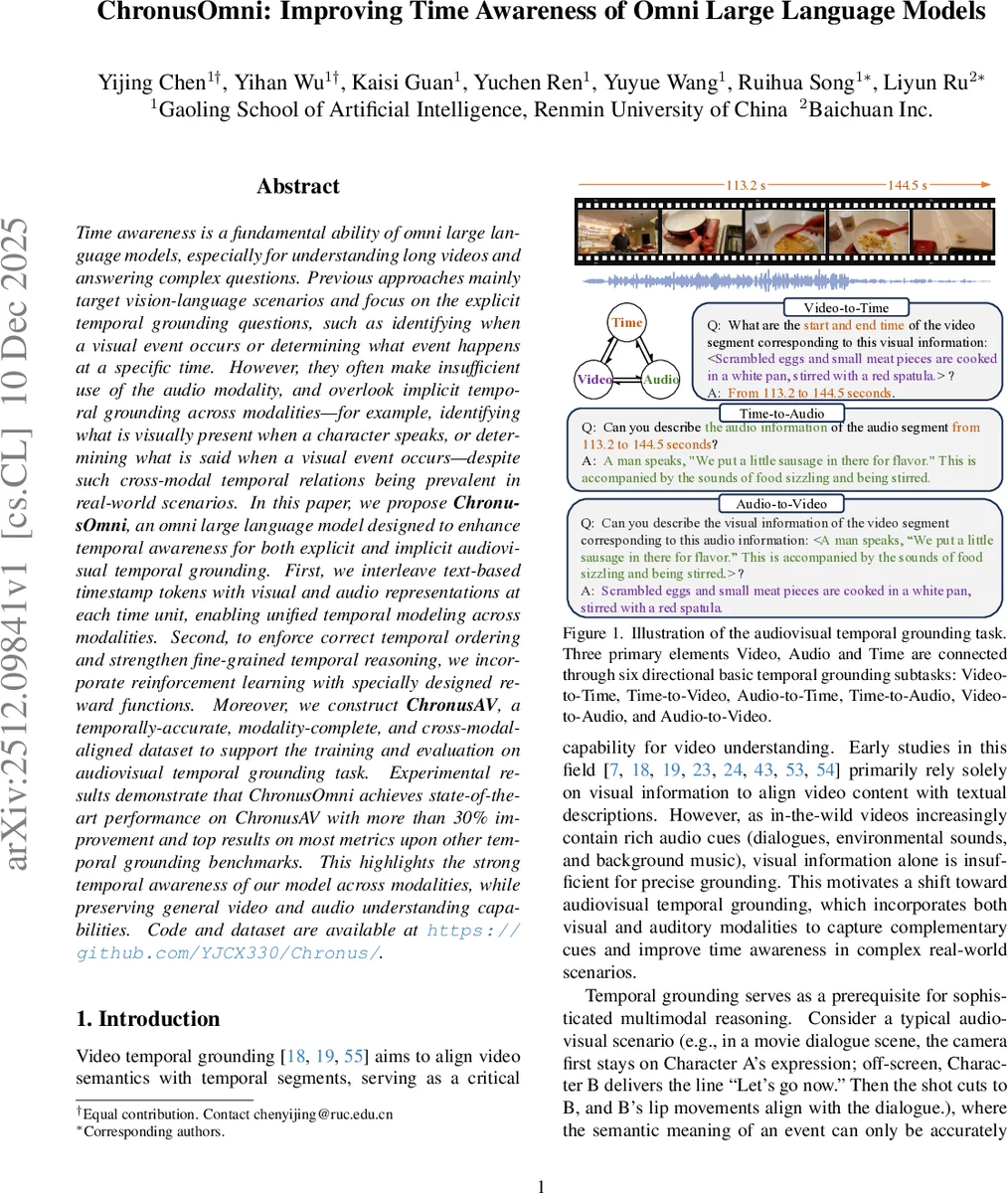

Time awareness is a fundamental ability of omni large language models, especially for understanding long videos and answering complex questions. Previous approaches mainly target vision-language scenarios and focus on the explicit temporal grounding questions, such as identifying when a visual event occurs or determining what event happens at aspecific time. However, they often make insufficient use of the audio modality, and overlook implicit temporal grounding across modalities–for example, identifying what is visually present when a character speaks, or determining what is said when a visual event occurs–despite such cross-modal temporal relations being prevalent in real-world scenarios. In this paper, we propose ChronusOmni, an omni large language model designed to enhance temporal awareness for both explicit and implicit audiovisual temporal grounding. First, we interleave text-based timestamp tokens with visual and audio representations at each time unit, enabling unified temporal modeling across modalities. Second, to enforce correct temporal ordering and strengthen fine-grained temporal reasoning, we incorporate reinforcement learning with specially designed reward functions. Moreover, we construct ChronusAV, a temporally-accurate, modality-complete, and cross-modal-aligned dataset to support the training and evaluation on audiovisual temporal grounding task. Experimental results demonstrate that ChronusOmni achieves state-of-the-art performance on ChronusAV with more than 30% improvement and top results on most metrics upon other temporal grounding benchmarks. This highlights the strong temporal awareness of our model across modalities, while preserving general video and audio understanding capabilities.

💡 Research Summary

This paper introduces ChronusOmni, an Omni Large Language Model (LLM) designed to significantly enhance temporal awareness, specifically for the complex task of audiovisual temporal grounding. The core challenge addressed is the limitation of prior models, which primarily focused on explicit temporal grounding (e.g., “when does a visual event happen?”) within vision-language contexts, often underutilizing audio and neglecting implicit cross-modal temporal relations (e.g., “what is seen when a specific sound is heard?”).

ChronusOmni’s innovation is threefold. First, it proposes a novel temporal-interleaved tokenization scheme. Instead of using learnable temporal embeddings or auxiliary time encoders, it represents absolute timestamps as plain text tokens (e.g., “second {113.2}”). These timestamp tokens are explicitly interleaved with the visual tokens (from a frame) and audio tokens (from a corresponding segment) at each sampled time point, forming a unified sequence like

Comments & Academic Discussion

Loading comments...

Leave a Comment