ReMoSPLAT: Reactive Mobile Manipulation Control on a Gaussian Splat

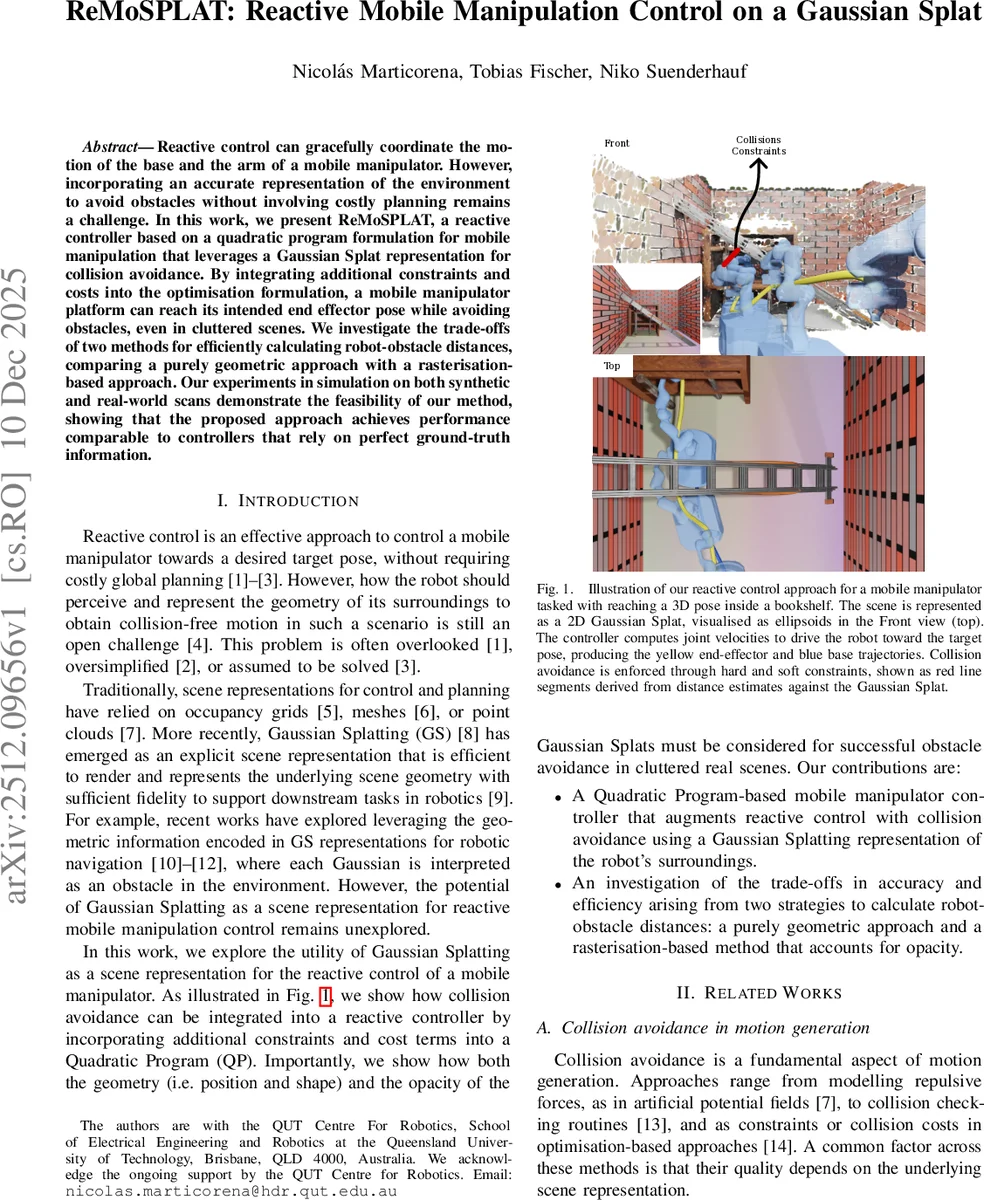

Reactive control can gracefully coordinate the motion of the base and the arm of a mobile manipulator. However, incorporating an accurate representation of the environment to avoid obstacles without involving costly planning remains a challenge. In this work, we present ReMoSPLAT, a reactive controller based on a quadratic program formulation for mobile manipulation that leverages a Gaussian Splat representation for collision avoidance. By integrating additional constraints and costs into the optimisation formulation, a mobile manipulator platform can reach its intended end effector pose while avoiding obstacles, even in cluttered scenes. We investigate the trade-offs of two methods for efficiently calculating robot-obstacle distances, comparing a purely geometric approach with a rasterisation-based approach. Our experiments in simulation on both synthetic and real-world scans demonstrate the feasibility of our method, showing that the proposed approach achieves performance comparable to controllers that rely on perfect ground-truth information.

💡 Research Summary

This paper presents ReMoSPLAT, a novel reactive controller for mobile manipulators that leverages a 3D Gaussian Splatting (GS) representation of the environment for real-time collision avoidance. The core challenge addressed is integrating an accurate, sensor-derived world model into a reactive control loop without resorting to computationally expensive global planning.

The methodology builds upon a holistic Quadratic Program (QP) formulation for mobile manipulation. The robot’s geometry is approximated by a set of spheres. The key innovation lies in how distances between these spheres and the environment are computed using the GS model. The authors investigate two complementary strategies:

1) A Sphere-to-Ellipsoid method that computes the geometric minimum distance between a robot sphere and the surface of individual 3D Gaussians (treated as ellipsoids). This approach is efficient but ignores the opacity attribute of Gaussians, potentially leading to reactions to non-visible surfaces.

2) A Depth Rasterization method that places virtual cameras on each robot sphere. It renders depth images of the GS scene from six viewpoints using a median-depth rasterization technique, which aggregates opacity along a ray to find the most probable surface location. The closest pixel in these depth maps then provides the distance estimate. This method inherently accounts for opacity, providing a more accurate measure of distance to the visible surface.
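The sphere-to-ellipsoid distance described above can be illustrated with a minimal sketch. It is not the authors' implementation: the function name, the argument layout, and the bisection solver are assumptions made for illustration, and the ellipsoid semi-axes are assumed to be some chosen multiple of each Gaussian's standard deviations (the summary does not say which iso-level is used). The sketch moves the sphere centre into the ellipsoid's local frame, finds the closest surface point by solving a one-dimensional root problem, and subtracts the sphere radius.

```python
import math


def sphere_to_ellipsoid_distance(sphere_center, sphere_radius,
                                 ell_center, ell_rotation, ell_semi_axes,
                                 iters=60):
    """Gap between a robot sphere and an ellipsoid surface (illustrative sketch).

    ell_rotation is a 3x3 nested list whose columns are the ellipsoid axes
    expressed in the world frame. ell_semi_axes are the semi-axis lengths
    (assumed here to be a scaled copy of the Gaussian's standard deviations).
    """
    # Express the sphere centre in the ellipsoid frame: p = R^T (c - mu).
    diff = [sphere_center[i] - ell_center[i] for i in range(3)]
    p = [sum(ell_rotation[r][c] * diff[r] for r in range(3)) for c in range(3)]
    a = ell_semi_axes

    # Sphere centre inside the ellipsoid: this sketch does not compute the
    # exact penetration depth and simply reports a conservative negative gap.
    if sum((p[i] / a[i]) ** 2 for i in range(3)) <= 1.0:
        return -sphere_radius

    # The closest surface point is x_i = a_i^2 p_i / (a_i^2 + t), where t > 0
    # is the root of f below. f decreases monotonically for t >= 0, so plain
    # bisection is enough (an assumed solver choice, not from the paper).
    def f(t):
        return sum((a[i] * p[i] / (a[i] ** 2 + t)) ** 2 for i in range(3)) - 1.0

    lo, hi = 0.0, max(a) * math.sqrt(sum(v * v for v in p))  # f(lo) > 0 >= f(hi)
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if f(mid) > 0.0:
            lo = mid
        else:
            hi = mid
    t = 0.5 * (lo + hi)

    closest = [a[i] ** 2 * p[i] / (a[i] ** 2 + t) for i in range(3)]
    centre_dist = math.sqrt(sum((p[i] - closest[i]) ** 2 for i in range(3)))
    return centre_dist - sphere_radius


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
gap = sphere_to_ellipsoid_distance([5.0, 0.0, 0.0], 0.5,
                                   [0.0, 0.0, 0.0], identity, [1.0, 2.0, 3.0])
```

In a controller, this query would be evaluated for every robot sphere against the nearby Gaussians and the minimum kept. Note that opacity plays no role anywhere in the computation, which is the limitation the summary attributes to this method.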

The calculated distance and its gradient direction are then integrated into the QP controller. For imminent collisions, they are formulated as linear inequality constraints on joint velocities, enforcing hard safety limits. For obstacles further away, they are incorporated as linear cost terms that encourage the robot to move away from them, promoting smoother, more natural avoidance behavior.
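As a rough illustration of how a distance and its gradient direction can become linear terms in joint velocities, the sketch below builds either an inequality row or a linear cost row for one robot sphere. The summary does not give the exact expressions, so everything here is an assumption: the velocity-damper form of the constraint, the linear ramp used to weight the cost, the threshold names `d_stop`, `d_influence`, and `d_cost`, and the function name itself.

```python
def avoidance_terms(distance, normal, jac_point,
                    d_stop=0.05, d_influence=0.3, d_cost=1.0, gain=1.0):
    """Linear avoidance term for one robot sphere (illustrative sketch).

    normal    : unit vector pointing from the obstacle towards the sphere,
                i.e. the direction in which the distance grows.
    jac_point : 3xN translational Jacobian of the sphere centre, as nested lists.

    Returns (kind, row, bound):
      ("constraint", row, bound) encodes  row . qdot <= bound
      ("cost", row, None)        encodes a term  row . qdot  to minimise
      ("none", None, None)       when the obstacle is too far to matter
    """
    n_joints = len(jac_point[0])
    # Rate of change of the distance is linear in qdot: d_dot = (n^T J) qdot.
    ddot_row = [sum(normal[k] * jac_point[k][j] for k in range(3))
                for j in range(n_joints)]

    if distance <= d_influence:
        # Assumed velocity-damper form: the approach speed -d_dot is capped
        # by a bound that shrinks to zero as the distance reaches d_stop.
        row = [-v for v in ddot_row]
        bound = gain * (distance - d_stop) / (d_influence - d_stop)
        return "constraint", row, bound

    if distance <= d_cost:
        # Assumed soft term: minimising -w * d_dot rewards moving away, with
        # a weight w that ramps from 0 at d_cost up to 1 at d_influence.
        w = (d_cost - distance) / (d_cost - d_influence)
        return "cost", [-w * v for v in ddot_row], None

    return "none", None, None


# Hypothetical 2-joint example: joint 0 moves the sphere along x, joint 1 along y.
jac = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
kind, row, bound = avoidance_terms(0.175, [1.0, 0.0, 0.0], jac)
```

The returned rows would be stacked into the inequality matrix or added to the linear cost vector of whichever QP solver is used. Splitting the behaviour by distance mirrors the summary's description: hard limits for imminent collisions, gentle repulsion for obstacles further away.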

Experiments conducted in simulation using both synthetic scenes and GS models reconstructed from real-world LiDAR scans demonstrate the feasibility of the approach. The controller successfully navigates the mobile manipulator to target end-effector poses in cluttered environments. The depth rasterization method proves to be more robust in scenes with imperfect GS reconstructions (e.g., containing low-opacity splats), achieving a success rate comparable to a baseline controller that uses perfect ground-truth geometry. The sphere-to-ellipsoid method, while faster, can fail in such scenarios by reacting to insubstantial splats. The work concludes that Gaussian Splatting is a viable and rich scene representation for reactive robot control, effectively bridging the gap between high-fidelity neural scene representations and real-time motion generation requirements. Future work includes deployment on a physical platform and extension to dynamic environments.
