Super4DR: 4D Radar-centric Self-supervised Odometry and Gaussian-based Map Optimization

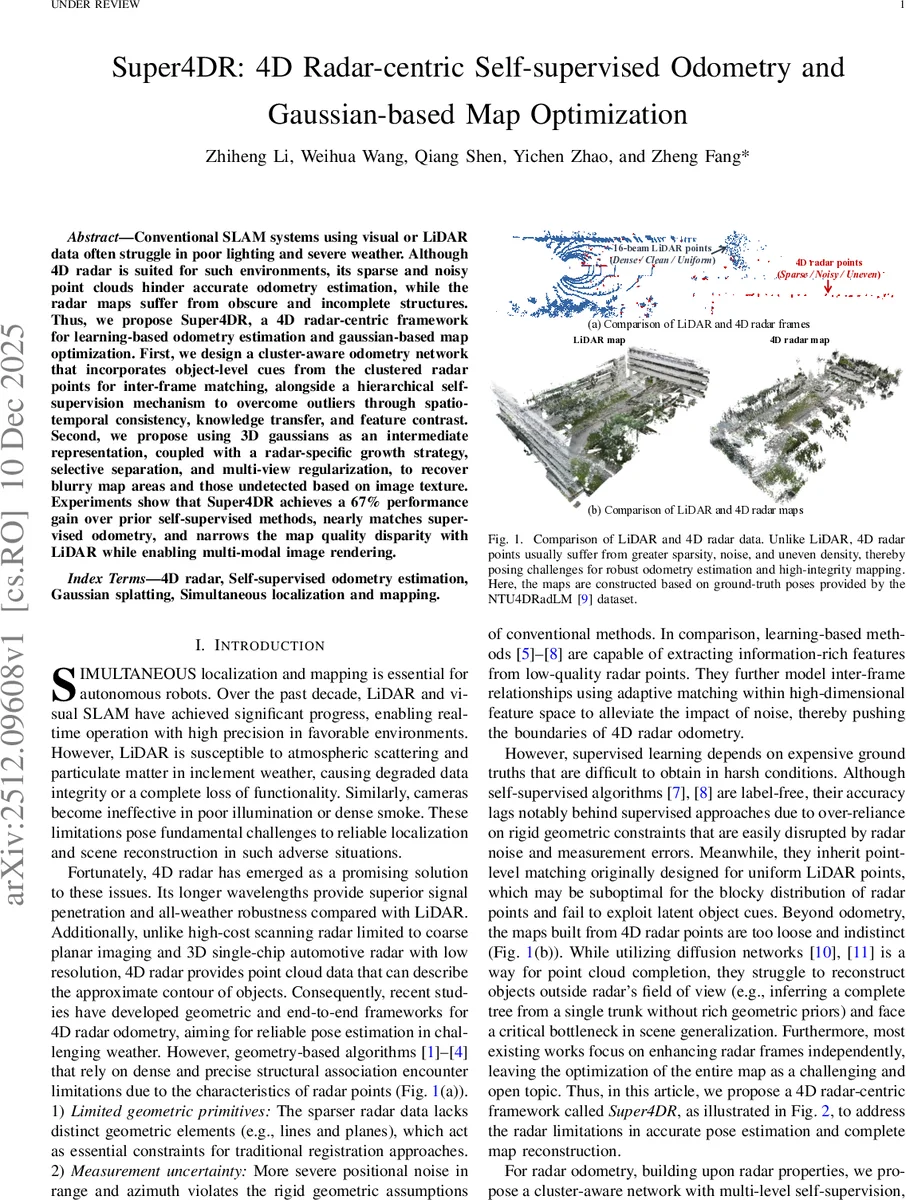

Conventional SLAM systems using visual or LiDAR data often struggle in poor lighting and severe weather. Although 4D radar is well suited to such environments, its sparse and noisy point clouds hinder accurate odometry estimation, and radar maps suffer from blurry and incomplete structures. We therefore propose Super4DR, a 4D radar-centric framework for learning-based odometry estimation and Gaussian-based map optimization. First, we design a cluster-aware odometry network that incorporates object-level cues from clustered radar points for inter-frame matching, together with a hierarchical self-supervision mechanism that overcomes outliers through spatio-temporal consistency, knowledge transfer, and feature contrast. Second, we use 3D Gaussians as an intermediate representation, coupled with a radar-specific growth strategy, selective separation, and multi-view regularization, to recover blurry map areas, as well as areas the radar failed to detect, guided by image texture. Experiments show that Super4DR achieves a 67% performance gain over prior self-supervised methods, nearly matches supervised odometry, and narrows the map-quality gap with LiDAR while enabling multi-modal image rendering.

💡 Research Summary

Super4DR presents a comprehensive 4D radar-centric framework that simultaneously tackles two long-standing challenges in adverse-environment robotics: robust odometry from sparse, noisy radar point clouds, and the generation of high-quality maps from those same data. The authors first introduce a cluster-aware odometry network that leverages object-level cues obtained by clustering radar returns. Raw points and cluster-level features are fed into a point-cluster encoder, and a temporal-fusion ego-motion decoder then estimates the motion between frames. The network thus learns to match frames at the object scale rather than at the level of individual points, which is crucial given the clumped, block-like distribution of radar data.
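The summary does not say which clustering algorithm groups the radar returns. As a minimal sketch, assuming a DBSCAN-style radius clustering with no minimum cluster size, the snippet below groups points into connected clusters and summarizes each one by its centroid and size, the kind of object-level cue a point-cluster encoder could consume. The functions `euclidean_clusters` and `cluster_features` are hypothetical illustrations, not part of Super4DR.

```python
import math
from collections import deque


def euclidean_clusters(points, radius):
    """Group 3D points connected by chains of neighbours within `radius`.

    Equivalent to DBSCAN with min_samples=1 (an assumption, not the paper's
    clustering). Returns a list of clusters, each a list of point indices.
    The O(N^2) neighbour search is fine for a sketch, not for real-time use.
    """
    n = len(points)
    labels = [-1] * n
    clusters = []
    for seed in range(n):
        if labels[seed] != -1:
            continue  # already assigned to an earlier cluster
        cid = len(clusters)
        labels[seed] = cid
        members = [seed]
        queue = deque([seed])
        while queue:  # breadth-first growth of the current cluster
            i = queue.popleft()
            for j in range(n):
                if labels[j] == -1 and math.dist(points[i], points[j]) <= radius:
                    labels[j] = cid
                    members.append(j)
                    queue.append(j)
        clusters.append(members)
    return clusters


def cluster_features(points, clusters):
    """Per-cluster centroid and point count, a simple stand-in for object-level cues."""
    feats = []
    for members in clusters:
        k = len(members)
        centroid = tuple(sum(points[i][d] for i in members) / k for d in range(3))
        feats.append({"centroid": centroid, "size": k})
    return feats


pts = [(0.0, 0.0, 0.0), (0.3, 0.0, 0.0), (0.6, 0.1, 0.0), (5.0, 5.0, 0.0), (5.2, 5.0, 0.0)]
clusters = euclidean_clusters(pts, radius=0.5)
print(clusters)  # → [[0, 1, 2], [3, 4]]
```

The chained growth matters here: points 0 and 2 are farther apart than the radius, but both join the same cluster through point 1, which is how sparse radar returns on one object end up grouped together.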

Training is performed without ground-truth poses, using a multi-level self-supervision scheme. The cluster-weighted distance loss assigns higher importance to large, stable clusters, encouraging the network to align dominant structures. The column-occupancy loss exploits the vertical scanning nature of radar by comparing occupancy statistics column-wise, enforcing global spatial consistency despite point-level noise. A geometry-based teacher module provides soft pose labels from a refined ICP-style algorithm, and these labels are incorporated via a distance-weighted Kabsch alignment. A feature contrast loss maximizes the distance between non-corresponding point pairs in the learned feature space, making the features more discriminative for matching. Finally, a constant-acceleration motion model imposes temporal smoothness on the predicted trajectory. Together, these losses suppress outliers and reduce drift, letting the network reach odometry accuracy close to that of supervised methods while remaining fully self-supervised.
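Two of these training signals reduce to short closed-form computations. The sketch below shows a weighted rigid alignment in the plane (a 2D simplification of the Kabsch step; the paper works with full 3D poses) and a constant-acceleration smoothness penalty on per-frame translations. The weighting rule in `distance_weights` and the exact form of `const_accel_loss` are assumptions for illustration, not the authors' definitions.

```python
import math


def weighted_kabsch_2d(src, dst, weights):
    """Closed-form weighted rigid alignment in the plane.

    Finds the angle theta and translation t minimising
    sum_i w_i * ||R(theta) src_i + t - dst_i||^2. Returns (theta, (tx, ty)).
    """
    w_sum = sum(weights)
    # Weighted centroids of both point sets
    sx = sum(w * p[0] for w, p in zip(weights, src)) / w_sum
    sy = sum(w * p[1] for w, p in zip(weights, src)) / w_sum
    dx = sum(w * q[0] for w, q in zip(weights, dst)) / w_sum
    dy = sum(w * q[1] for w, q in zip(weights, dst)) / w_sum
    # Weighted dot and cross terms of the centred sets give the optimal angle
    dot = cross = 0.0
    for w, p, q in zip(weights, src, dst):
        ax, ay = p[0] - sx, p[1] - sy
        bx, by = q[0] - dx, q[1] - dy
        dot += w * (ax * bx + ay * by)
        cross += w * (ax * by - ay * bx)
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation maps the rotated source centroid onto the target centroid
    t = (dx - (c * sx - s * sy), dy - (s * sx + c * sy))
    return theta, t


def distance_weights(dists, scale=1.0):
    """Hypothetical weighting: down-weight correspondences with large distances."""
    return [1.0 / (1.0 + d / scale) for d in dists]


def const_accel_loss(translations):
    """Mean squared deviation from a constant-acceleration prediction.

    Treats each entry as a per-frame relative translation v_k and predicts
    v_{k+1} = 2 v_k - v_{k-1}; the residual is v_{k+1} - 2 v_k + v_{k-1}.
    """
    total, count = 0.0, 0
    for k in range(1, len(translations) - 1):
        prev, cur, nxt = translations[k - 1], translations[k], translations[k + 1]
        total += sum((n - 2 * c + p) ** 2 for p, c, n in zip(prev, cur, nxt))
        count += 1
    return total / count if count else 0.0
```

With exact correspondences any positive weights recover the true motion; the weights only matter once the correspondences are noisy, which is where down-weighting unreliable pairs pays off.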

For map reconstruction, the paper adapts 3D Gaussian Splatting (3DGS) to the radar domain. Applying 3DGS directly to raw radar points is insufficient because radar data are unevenly distributed and carry significant measurement uncertainty. To overcome this, the authors devise a radar-specific Gaussian growth strategy. First, depth priors from a visual foundation model are used to generate synthetic ground-level Gaussians, addressing the common "ground fragmentation" problem in radar maps. Next, a geometry-aware densification process splits and interpolates Gaussians based on local surface normals and curvature, creating denser structures where the original radar points are sparse. Selective separation then masks sky Gaussians, preventing floating artifacts while preserving valuable large Gaussians for later completion. Multi-view regularization leverages overlapping RGB or thermal camera views to jointly optimize the Gaussian parameters, ensuring that the rendered images agree with all available observations and enforcing global geometric consistency. After optimization, the refined Gaussians are converted back into a point cloud by taking their centers, yielding a complete, dense radar map that retains radar's all-weather robustness while gaining LiDAR-like structural fidelity.
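The radar-specific growth strategy is more elaborate than what fits here, but the basic Gaussian operations it builds on can be sketched. The snippet below is a simplified illustration, not Super4DR's method: it splits oversized Gaussians along their longest axis using the 1.6 scale-reduction factor of vanilla 3DGS (which additionally requires a large positional gradient before splitting and samples child positions randomly; both details are simplified away), leaves Gaussians flagged as sky untouched, and exports the map as Gaussian centers. The `Gaussian` class, its axis-aligned scales, and the `is_sky` flag (assumed to come from an external sky mask) are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass
class Gaussian:
    center: tuple      # (x, y, z) mean of the Gaussian
    scale: tuple       # per-axis standard deviations (axis-aligned for simplicity)
    opacity: float
    is_sky: bool = False  # hypothetical flag from an external sky mask


def split_gaussian(g, factor=1.6):
    """Replace one large Gaussian by two smaller ones along its longest axis.

    Children are placed deterministically at +/- one standard deviation
    instead of being sampled, and every scale is shrunk by `factor`.
    """
    axis = max(range(3), key=lambda i: g.scale[i])
    offset = g.scale[axis]
    children = []
    for sign in (-1.0, 1.0):
        center = list(g.center)
        center[axis] += sign * offset
        children.append(replace(g, center=tuple(center),
                                scale=tuple(s / factor for s in g.scale)))
    return children


def densify(gaussians, max_scale):
    """Split every non-sky Gaussian whose largest axis exceeds max_scale."""
    out = []
    for g in gaussians:
        if not g.is_sky and max(g.scale) > max_scale:
            out.extend(split_gaussian(g))
        else:
            out.append(g)  # sky and small Gaussians are kept unchanged
    return out


def to_point_cloud(gaussians, min_opacity=0.1):
    """Export the map as Gaussian centers, skipping sky and near-transparent ones."""
    return [g.center for g in gaussians
            if not g.is_sky and g.opacity >= min_opacity]
```

Keeping sky Gaussians out of both densification and export mirrors the role the summary gives selective separation: they can still help rendering during optimization without polluting the final geometric map.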

Extensive experiments on the public NTU-4DRadLM dataset and on self-collected sequences captured in rain, fog, snow, and low-light conditions demonstrate the effectiveness of Super4DR. The self-supervised odometry achieves a 67% performance gain over prior self-supervised radar odometry methods (RaFlow, SelfR-O) and nearly matches state-of-the-art supervised methods. In terms of map quality, the Gaussian-based reconstruction attains PSNR and SSIM scores close to those of LiDAR-derived maps, and visual inspection shows markedly clearer building facades, road edges, and vegetation structures. The framework also supports both RGB and thermal imaging; in low-light scenarios, thermal-guided Gaussian optimization further improves rendering quality, confirming the method's multimodal flexibility.
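For reference, the rendering metric and the headline percentage can be computed as below. PSNR follows its standard definition, 10·log10(MAX²/MSE); reading the 67% figure as a fractional error reduction is an assumption, since the summary does not state how the gain is defined. Images are taken as flat sequences of intensities in [0, `max_val`] to keep the sketch dependency-free.

```python
import math


def psnr(rendered, reference, max_val=1.0):
    """Peak signal-to-noise ratio in dB between two equally sized images,
    given as flat sequences of intensities in [0, max_val]."""
    if len(rendered) != len(reference):
        raise ValueError("images must have the same number of pixels")
    mse = sum((a - b) ** 2 for a, b in zip(rendered, reference)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_val ** 2 / mse)


def relative_improvement(baseline_err, new_err):
    """Fractional error reduction: (baseline - new) / baseline."""
    return (baseline_err - new_err) / baseline_err
```

Under that reading, a 67% gain means the new error is roughly one third of the baseline's, e.g. `relative_improvement(2.0, 0.66)` gives 0.67.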

The authors release their code and a multimodal dataset collected with a handheld platform, providing a valuable benchmark for future 4D radar SLAM research. By integrating a radar‑aware self‑supervised odometry pipeline with a novel Gaussian map optimizer, Super4DR establishes a new paradigm for all‑weather autonomous navigation, opening avenues for real‑time deployment, dynamic object handling, and large‑scale urban mapping under conditions where traditional LiDAR or vision systems fail.
