Gradient-Guided Learning Network for Infrared Small Target Detection

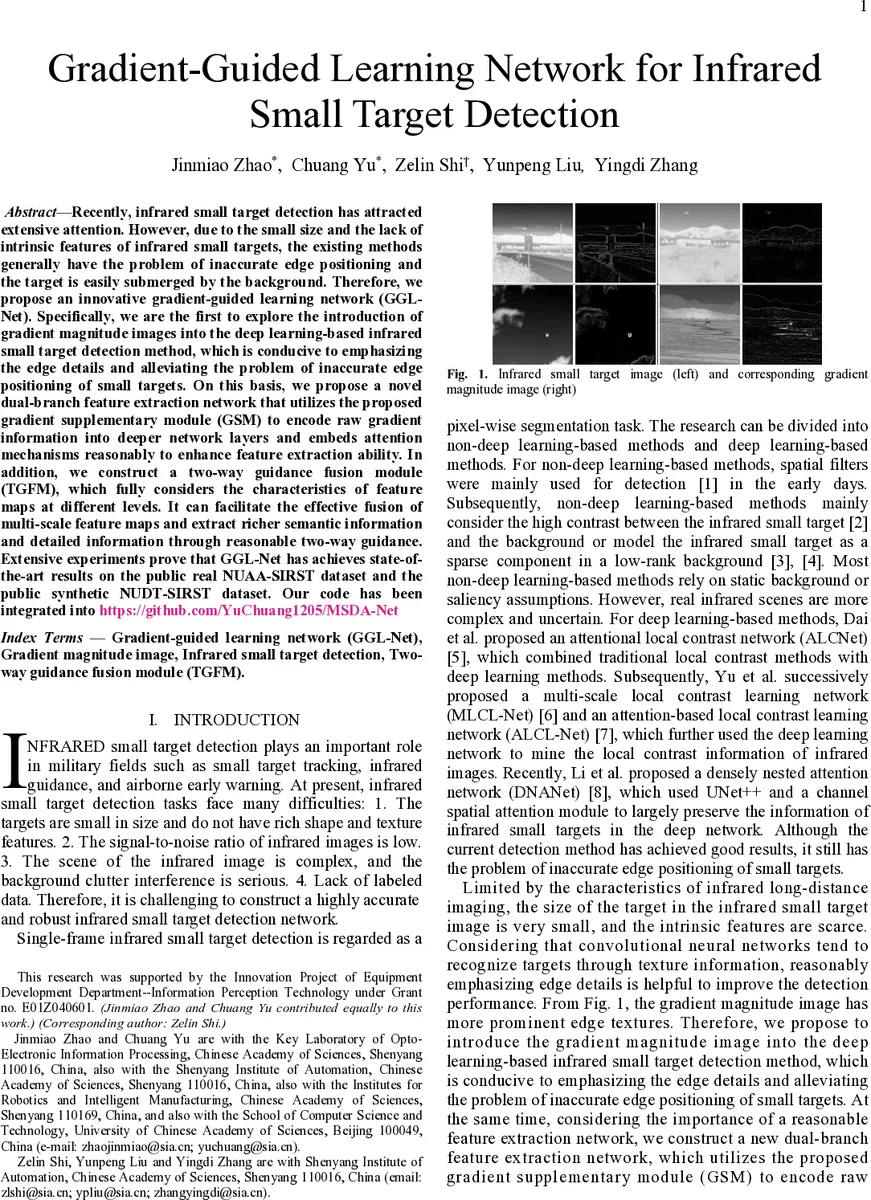

Recently, infrared small target detection has attracted extensive attention. However, due to the small size and the lack of intrinsic features of infrared small targets, the existing methods generally have the problem of inaccurate edge positioning and the target is easily submerged by the background. Therefore, we propose an innovative gradient-guided learning network (GGL-Net). Specifically, we are the first to explore the introduction of gradient magnitude images into the deep learning-based infrared small target detection method, which is conducive to emphasizing the edge details and alleviating the problem of inaccurate edge positioning of small targets. On this basis, we propose a novel dual-branch feature extraction network that utilizes the proposed gradient supplementary module (GSM) to encode raw gradient information into deeper network layers and embeds attention mechanisms reasonably to enhance feature extraction ability. In addition, we construct a two-way guidance fusion module (TGFM), which fully considers the characteristics of feature maps at different levels. It can facilitate the effective fusion of multi-scale feature maps and extract richer semantic information and detailed information through reasonable two-way guidance. Extensive experiments prove that GGL-Net has achieves state-of-the-art results on the public real NUAA-SIRST dataset and the public synthetic NUDT-SIRST dataset. Our code has been integrated into https://github.com/YuChuang1205/MSDA-Net

💡 Research Summary

The paper addresses two persistent challenges in infrared small‑target detection: inaccurate edge localization and easy submergence of tiny targets in cluttered backgrounds. Existing deep‑learning approaches typically rely solely on raw infrared images, which lack sufficient texture and edge cues for such minute objects. To overcome this, the authors introduce a novel Gradient‑Guided Learning Network (GGL‑Net) that explicitly incorporates gradient magnitude images as an additional input modality, thereby emphasizing edge details.

GGL‑Net consists of three main components: (1) a dual‑branch feature extraction backbone, (2) a local contrast learning module, and (3) a two‑way guided fusion module (TGFM). The dual‑branch backbone contains a primary branch of five stages, each stage comprising six 3×3 convolutions split into three blocks, residual connections, and SE‑Attention (channel‑wise attention). The supplementary branch processes multi‑scale gradient magnitude maps (produced by Sobel‑type operators) through max‑pooling. The two branches are fused via a Gradient Supplementary Module (GSM), which includes a G‑Block (gradient‑specific convolutions) and a residual (Res) block. Unlike naïve element‑wise addition, the Res block allows richer gradient information to be injected into deeper layers, as demonstrated by a 1.28% IoU drop when it is removed.

The local contrast learning module, adopted from previous ALCL‑Net work, extracts local contrast cues that further highlight the disparity between tiny targets and surrounding background, complementing the edge‑focused gradient input.

TGFM tackles the classic multi‑scale fusion dilemma: low‑level features carry fine spatial detail but lack semantic context, while high‑level features are semantically rich but spatially coarse. TGFM applies spatial attention (SAM) to low‑level features, guiding high‑level representations, and channel attention (CAM) to high‑level features, guiding low‑level representations. The attention mechanisms are combined via element‑wise multiplication, as formalized in equations (1)–(3). Hyper‑parameter r controls the reduction ratio in the shared MLP of CAM; experiments show r = 8 yields a good trade‑off between parameter count and performance, with negligible impact on final metrics.

To mitigate class imbalance between background and target pixels, the authors employ a Soft‑IoU loss, directly optimizing the overlap between predicted probability maps and binary masks.

Experiments are conducted on two public datasets: NUAA‑SIRST (real infrared images, 427 samples, 96 for testing) and NUDT‑SIRST (synthetic images with five background types). Images are resized to 512×512 (NUAA) and 256×256 (NUDT). Training uses an RTX 2080Ti, batch size 4, learning rate 1e‑4, for 500 epochs. Evaluation metrics include pixel‑level IoU and normalized IoU (nIoU), as well as target‑level probability of detection (Pd), false alarm rate (Fa), and 3D‑ROC curves.

Ablation studies reveal: (a) Combining original and gradient inputs (Original+Gradient) yields the best performance (IoU = 0.8142, nIoU = 0.7858), outperforming using either modality alone; (b) The Res structure within GSM is essential, as replacing it with simple addition reduces IoU by 1.28%; (c) TGFM’s two‑way attention outperforms plain addition, channel‑only (CAM), or spatial‑only (SAM) variants, delivering a 0.99% IoU gain.

Comparative results on NUAA‑SIRST show GGL‑Net surpasses state‑of‑the‑art methods: IoU improvements of 7.53% over ALCNet, 5.44% over MLCL‑Net, 3.83% over DNANet, and 2.78% over ALCL‑Net. nIoU gains range from 1.55% to 7.97%. Inference time remains modest (~0.018 s per image), offering a favorable accuracy‑speed trade‑off; GGL‑Net is roughly 3.5× faster than DNANet while delivering higher accuracy.

On NUDT‑SIRST, GGL‑Net achieves the highest scores under both 1:1 and 7:3 train‑test splits, with IoU reaching 0.842 and nIoU 0.814 in the latter case, consistently outperforming competing approaches.

In summary, GGL‑Net demonstrates that explicitly feeding gradient magnitude information into a deep network, combined with a carefully designed dual‑branch architecture and bidirectional attention‑based fusion, markedly improves infrared small‑target detection. The method delivers superior edge localization, higher detection rates, and robust performance across real and synthetic datasets, while maintaining practical inference speed. Future work may explore model compression for embedded platforms and integration of additional modalities (e.g., thermal‑visible fusion) to further enhance robustness in diverse operational scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment