World in a Frame: Understanding Culture Mixing as a New Challenge for Vision-Language Models

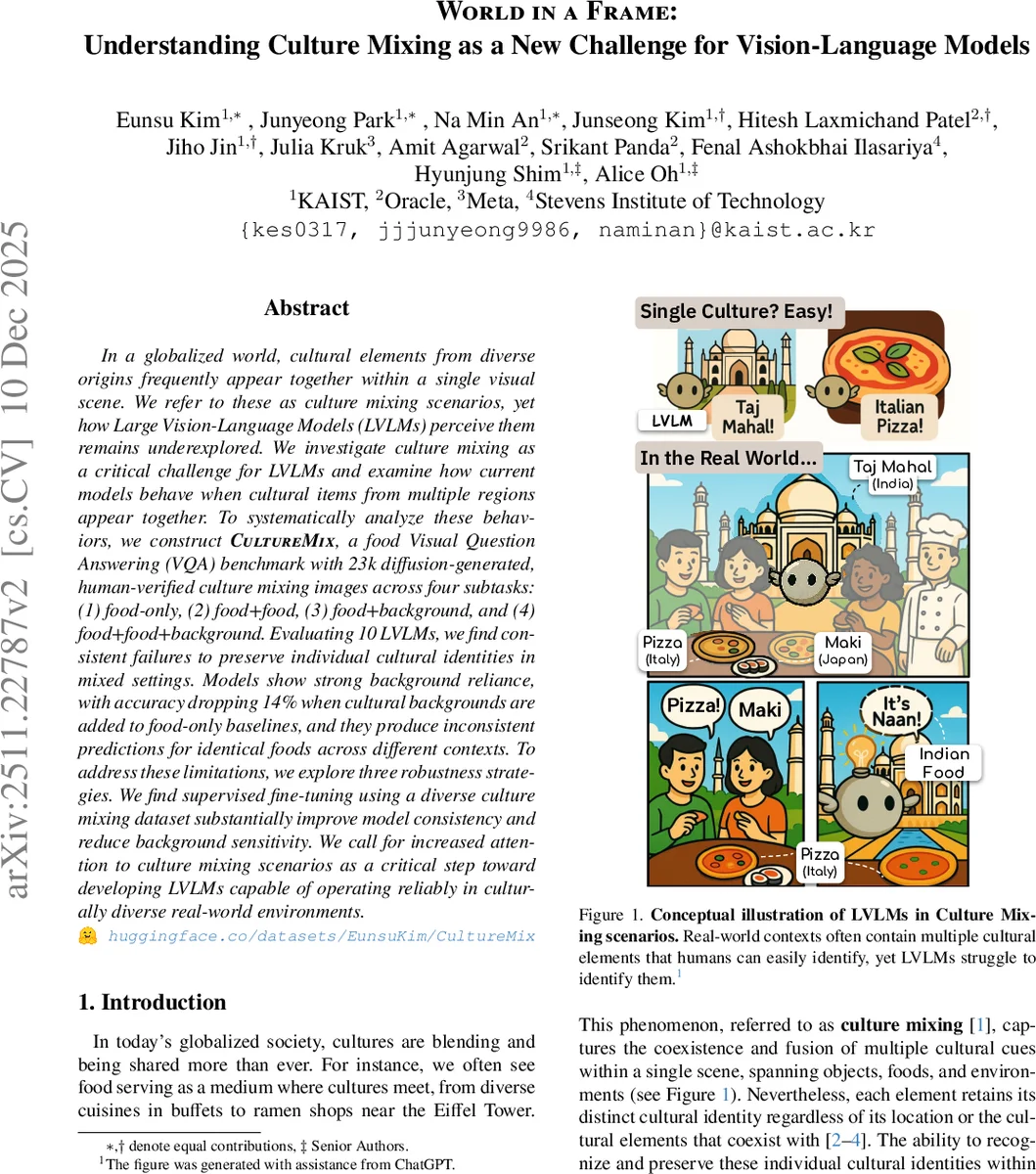

In a globalized world, cultural elements from diverse origins frequently appear together within a single visual scene. We refer to these as culture mixing scenarios, yet how Large Vision-Language Models (LVLMs) perceive them remains underexplored. We investigate culture mixing as a critical challenge for LVLMs and examine how current models behave when cultural items from multiple regions appear together. To systematically analyze these behaviors, we construct CultureMix, a food Visual Question Answering (VQA) benchmark with 23k diffusion-generated, human-verified culture mixing images across four subtasks: (1) food-only, (2) food+food, (3) food+background, and (4) food+food+background. Evaluating 10 LVLMs, we find consistent failures to preserve individual cultural identities in mixed settings. Models show strong background reliance, with accuracy dropping 14% when cultural backgrounds are added to food-only baselines, and they produce inconsistent predictions for identical foods across different contexts. To address these limitations, we explore three robustness strategies. We find supervised fine-tuning using a diverse culture mixing dataset substantially improve model consistency and reduce background sensitivity. We call for increased attention to culture mixing scenarios as a critical step toward developing LVLMs capable of operating reliably in culturally diverse real-world environments.

💡 Research Summary

The paper tackles a previously under‑explored aspect of large vision‑language models (LVLMs): their ability to understand “culture mixing” scenes, where visual elements from multiple cultural origins appear together. The authors define culture mixing as the coexistence of distinct cultural cues—objects, foods, and environments—within a single image, and argue that each cue retains its own cultural identity regardless of surrounding context. To systematically evaluate LVLM performance on this problem, they introduce a new benchmark called CultureMix, which is a visual question‑answering (VQA) dataset focused on food items.

CultureMix consists of 23,000 diffusion‑generated images and 100 real‑world images, all human‑verified. The dataset is organized into four subtasks that vary the type of cultural distractors: (1) Single Food (SF) – a single dish on a neutral background, (2) Multiple Food (MF) – two dishes side‑by‑side, introducing food‑type distractors, (3) Single Food with Background (SFB) – a single dish placed in a culturally specific background (street or landmark), and (4) Multiple Food with Background (MFB) – both food and background distractors combined. For each target food, the authors create pairings that span three levels of “cultural distance”: same country, different countries within the same continent, and different continents. This design enables a fine‑grained analysis of how both object‑level and scene‑level cues affect model predictions.

The synthetic images are generated using editing‑based text‑to‑image diffusion models (FLUX.1‑K and Qwen‑Image‑Edit). Seed food images are drawn from multicultural VQA datasets (WorldCuisines, WorldWideDishes) covering 30 countries across four continents, while background seeds come from landmark and street photo collections representing five continents. A rigorous human‑in‑the‑loop pipeline validates each generated image, ensuring that the final dataset maintains high visual fidelity and correct cultural labeling.

For evaluation, ten LVLMs are tested: two proprietary models (GPT‑5 and Gemini‑2.5‑Pro) and eight open‑source models ranging from 8 B to 78 B parameters (InternVL series, Ovis2.5‑9B, QwenVL3‑8B/32B, Molmo‑72B). Models are prompted with a VQA query: “What is the name of the food, and which country is it most closely associated with?” In MF and MFB settings the target food is placed on the left, and the prompt explicitly references “left food.” Food‑name accuracy is measured via a weighted Jaccard n‑gram similarity (70 % bigram, 30 % unigram) with a 0.4 threshold; country accuracy uses exact string matching with known name variations.

Results show a consistent drop in performance from SF to the more complex subtasks. Across all models, MF causes a modest decline, while SFB leads to the largest degradation: on average country accuracy falls by 13 percentage points and food‑name accuracy by 7 points when a cultural background is added. The ordering of difficulty is generally SF ≈ MF > MFB ≈ SFB. Proprietary models (GPT‑5, Gemini‑2.5‑Pro) outperform open‑source counterparts, but even they suffer notable background sensitivity. Heatmap analyses reveal systematic biases toward high‑resource (“WEIRD”) countries: African and many Asian dishes are frequently mis‑identified as India or China, while European and North American dishes are often labeled as the United States. Misclassifications tend to stay within the same continent, indicating that models rely on coarse geographic cues rather than fine‑grained cultural knowledge.

To mitigate these issues, the authors explore three strategies: (1) training‑free prompt engineering, (2) zero‑shot correction using image‑text matching scores, and (3) supervised fine‑tuning (SFT) on a curated culture‑mixing dataset. The supervised approach yields the most substantial gains, reducing background reliance and improving consistency of predictions for the same food across different contexts. Nevertheless, performance gaps remain, especially when cultural distance is large or when both food and background distractors are present.

The paper concludes that current LVLMs are not yet robust enough for real‑world, culturally diverse environments. It calls for broader inclusion of mixed‑culture data during pre‑training and fine‑tuning, as well as the development of evaluation benchmarks like CultureMix that explicitly test cross‑cultural reasoning. The released dataset and analysis provide a valuable foundation for future work aimed at building LVLMs that can accurately recognize and respect multiple cultural identities within a single visual scene.

Comments & Academic Discussion

Loading comments...

Leave a Comment