Flow-Aided Flight Through Dynamic Clutters From Point To Motion

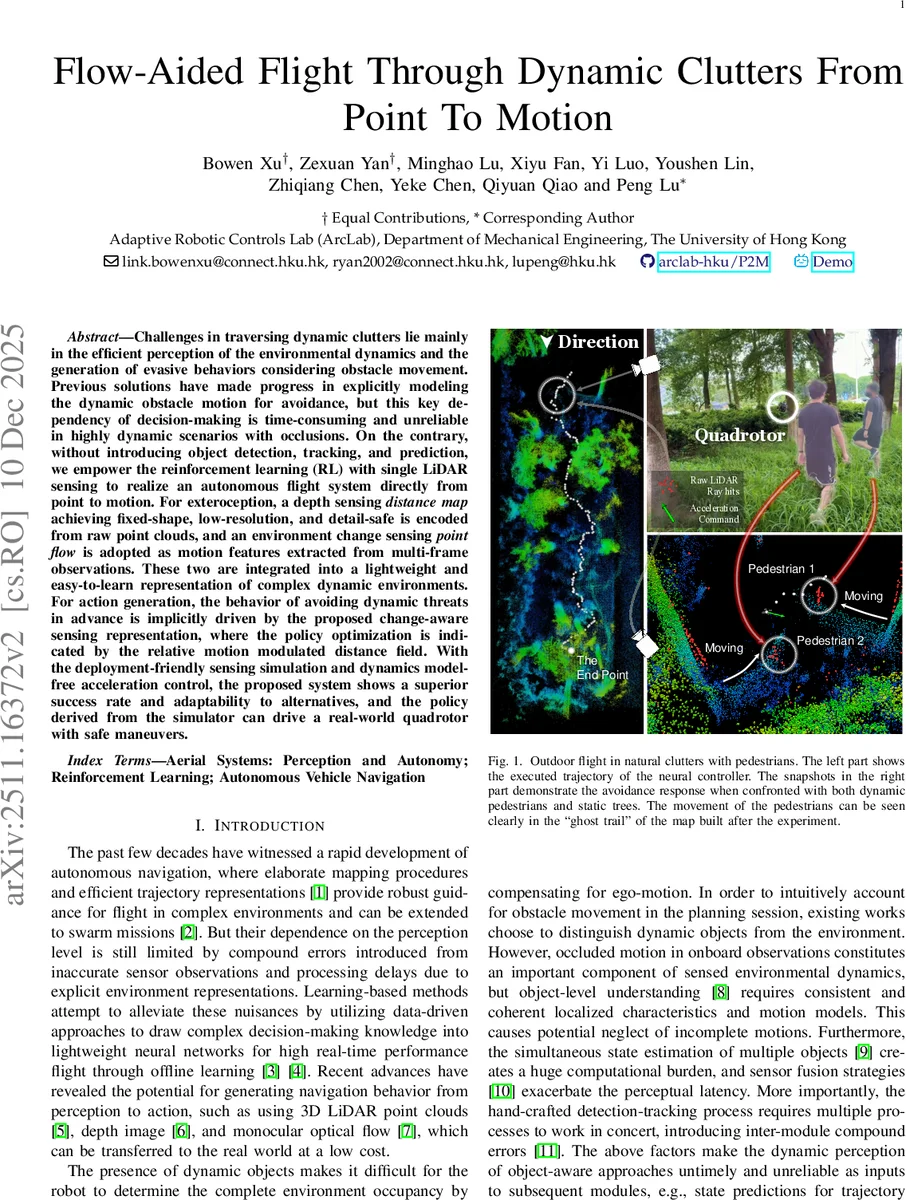

Challenges in traversing dynamic clutters lie mainly in the efficient perception of the environmental dynamics and the generation of evasive behaviors considering obstacle movement. Previous solutions have made progress in explicitly modeling the dynamic obstacle motion for avoidance, but this key dependency of decision-making is time-consuming and unreliable in highly dynamic scenarios with occlusions. On the contrary, without introducing object detection, tracking, and prediction, we empower the reinforcement learning (RL) with single LiDAR sensing to realize an autonomous flight system directly from point to motion. For exteroception, a depth sensing distance map achieving fixed-shape, low-resolution, and detail-safe is encoded from raw point clouds, and an environment change sensing point flow is adopted as motion features extracted from multi-frame observations. These two are integrated into a lightweight and easy-to-learn representation of complex dynamic environments. For action generation, the behavior of avoiding dynamic threats in advance is implicitly driven by the proposed change-aware sensing representation, where the policy optimization is indicated by the relative motion modulated distance field. With the deployment-friendly sensing simulation and dynamics model-free acceleration control, the proposed system shows a superior success rate and adaptability to alternatives, and the policy derived from the simulator can drive a real-world quadrotor with safe maneuvers.

💡 Research Summary

The paper presents a novel autonomous flight system that bypasses traditional object‑centric perception pipelines and directly learns to avoid dynamic obstacles using only a single 2‑D LiDAR sensor. The authors introduce a compact, two‑channel sensing representation: (1) a low‑resolution distance map and (2) a point‑flow field derived from consecutive LiDAR scans. The distance map is generated by ray‑casting raw point clouds into a fixed‑size (108 × 18) grid, selecting the nearest point in each cell, and then down‑sampling to a 36 × 6 grayscale image. This “nearest‑point” strategy preserves the most hazardous obstacle details while drastically reducing data volume.

To capture environmental motion, three successive distance‑map frames are stacked as a pseudo‑RGB image and fed to a pre‑trained NeuFlowV2 network, which estimates pixel‑wise optical flow. The flow is temporally smoothed over five frames, scaled to match the distance‑map resolution, and concatenated with the distance map, yielding a (3, 36, 6) tensor that encodes both static geometry and relative motion.

The combined tensor is processed by a lightweight convolutional encoder (three Conv layers followed by a 128‑unit fully‑connected layer). The extracted visual features are fused with three additional state inputs: the unit vector toward the goal, the current velocity, and the previous action. This fused vector passes through a two‑layer MLP (256 units each) and feeds an actor‑critic architecture that directly outputs a three‑dimensional acceleration command. By operating at the acceleration level, the policy remains model‑free: it does not rely on an explicit dynamics model, allowing the same policy to be deployed on different quadrotors despite variations in mass, inertia, or motor characteristics.

The reinforcement‑learning reward function balances safety, goal achievement, state regularization, and dynamic obstacle avoidance. State regularization uses a logarithmic “soft‑limit” function to gently penalize violations of velocity, acceleration, altitude, and jerk constraints, encouraging smooth, feasible trajectories. The dynamic‑obstacle term is tightly coupled with the flow‑modulated distance field: as the relative motion indicated by the flow brings an obstacle closer, the penalty rises sharply, incentivizing the policy to execute anticipatory maneuvers rather than reactive ones.

Training is performed in a high‑fidelity simulator that reproduces realistic LiDAR noise, occlusions, and moving pedestrians. The authors evaluate the learned policy against several baselines, including object‑based detection‑tracking‑prediction pipelines and static‑depth‑image RL methods. In diverse scenarios with varying numbers of moving pedestrians and static trees, the proposed method achieves a markedly higher success rate, lower collision frequency, and faster convergence.

For real‑world validation, the policy trained entirely in simulation is transferred to a physical quadrotor equipped with a 2‑D LiDAR and an IMU. State estimation combines LiDAR point clouds and inertial data, providing a robust substitute for ground‑truth states used during training. In outdoor experiments at the University of Hong Kong campus, the drone successfully navigates among moving pedestrians, maintaining safe distances and reaching the target without any fine‑tuning of the policy. The system runs at approximately 50 Hz, demonstrating real‑time feasibility.

Key contributions of the work are:

- Efficient LiDAR representation that merges a low‑resolution distance map with a point‑flow field, preserving critical obstacle details while encoding environmental dynamics without explicit object detection.

- Change‑aware reinforcement learning where the reward is shaped by the flow‑modulated distance field, enabling the policy to learn anticipatory avoidance behaviors from implicit motion cues.

- Model‑free acceleration control and seamless sim‑to‑real transfer, allowing the same learned policy to be deployed on different quadrotors without re‑training or dynamics identification.

The authors acknowledge limitations such as dependence on the pre‑trained flow estimator (which may be sensitive to LiDAR characteristics) and the restriction to a 2‑D field of view. Future work could extend the approach to 360° LiDAR, incorporate online adaptation of the flow network, or integrate higher‑level planning for long‑range navigation.

Overall, the paper demonstrates that a carefully designed, lightweight perception stack combined with end‑to‑end reinforcement learning can achieve robust, real‑time autonomous flight in highly dynamic, cluttered environments, offering a promising pathway for low‑cost, high‑performance drone navigation.

Comments & Academic Discussion

Loading comments...

Leave a Comment