An Offline Mobile Conversational Agent for Mental Health Support: Learning from Emotional Dialogues and Psychological Texts with Student-Centered Evaluation

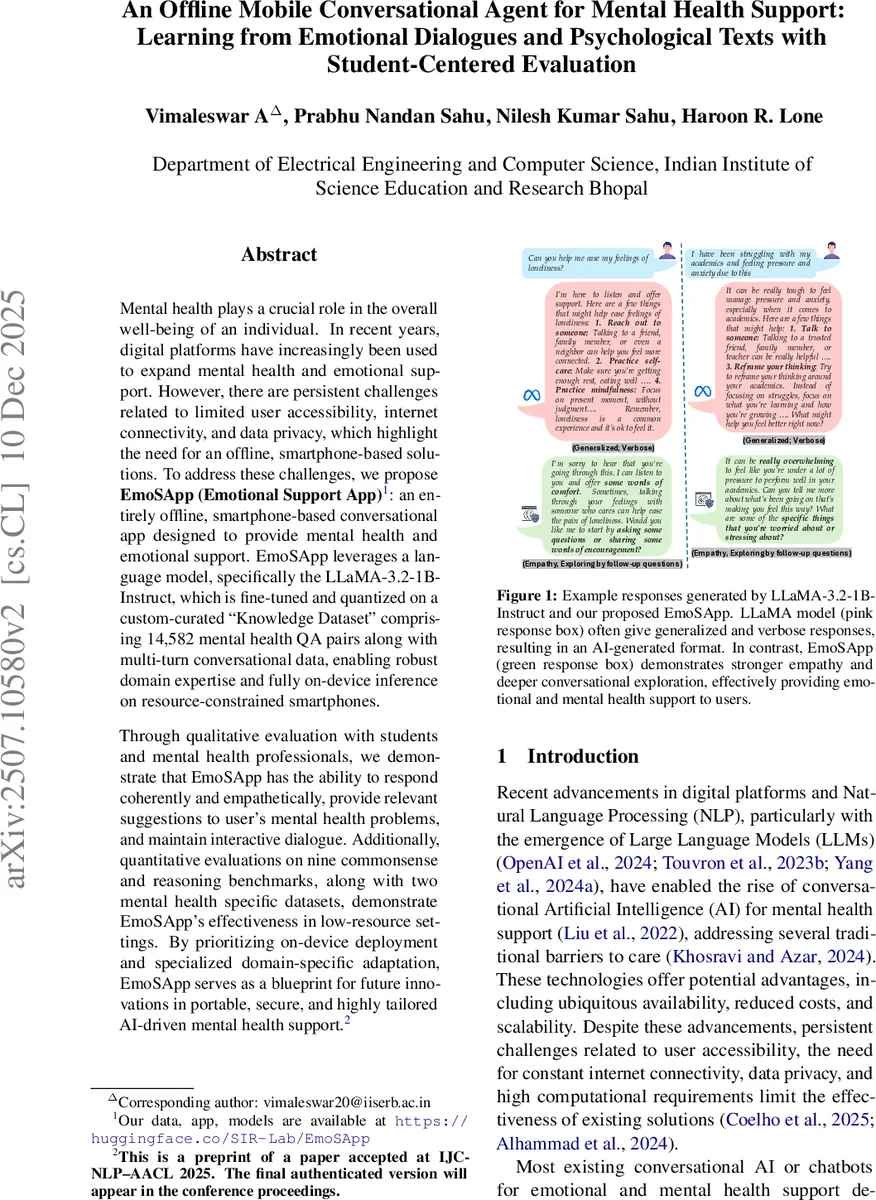

Mental health plays a crucial role in the overall well-being of an individual. In recent years, digital platforms have increasingly been used to expand access to mental health and emotional support. However, persistent challenges related to limited user accessibility, internet connectivity, and data privacy highlight the need for offline, smartphone-based solutions. To address these challenges, we propose EmoSApp (Emotional Support App): an entirely offline, smartphone-based conversational app designed to provide mental health and emotional support. EmoSApp leverages a language model, specifically LLaMA-3.2-1B-Instruct, which is fine-tuned and quantized on a custom-curated “Knowledge Dataset” comprising 14,582 mental health QA pairs along with multi-turn conversational data, enabling robust domain expertise and fully on-device inference on resource-constrained smartphones. Through qualitative evaluation with students and mental health professionals, we demonstrate that EmoSApp can respond coherently and empathetically, provide relevant suggestions for users’ mental health problems, and maintain interactive dialogue. Additionally, quantitative evaluations on nine commonsense and reasoning benchmarks, along with two mental health specific datasets, demonstrate EmoSApp’s effectiveness in low-resource settings. By prioritizing on-device deployment and specialized domain-specific adaptation, EmoSApp serves as a blueprint for future innovations in portable, secure, and highly tailored AI-driven mental health support.

💡 Research Summary

The paper introduces EmoSApp, an entirely offline, smartphone‑based conversational agent designed to provide mental‑health and emotional support, particularly for students. Starting from the open‑source LLaMA‑3.2‑1B‑Instruct model (≈1.2 B parameters), the authors fine‑tune it on a custom “Knowledge Dataset” comprising 14,582 mental‑health question‑answer pairs, together with two multi‑turn conversational corpora. The material is drawn from psychology textbooks, existing emotional‑support datasets (ESConv, ServeForEmo), and curated expert material. This dual focus supplies both factual domain knowledge and the nuanced emotional strategies required for empathetic dialogue.

Three fine‑tuning and quantization pipelines are explored: (1) Full fine‑tuning, which updates all parameters but demands substantial GPU memory and yields a model too large for typical smartphones; (2) LoRA + Post‑Training Quantization (PTQ), which inserts low‑rank adapters to reduce trainable parameters and then quantizes weights to INT4 and activations to INT8, but suffers noticeable performance degradation; and (3) QA‑T‑LoRA, which combines LoRA adapters with Quantization‑Aware Training (QAT) that simulates low‑precision arithmetic during training. After training, the model is converted to a fully quantized INT4‑weight/INT8‑activation format. QA‑T‑LoRA achieves performance comparable to full fine‑tuning while shrinking model size by 55 % (to 1.03 GB) and increasing inference speed by 3.7× (13.5 tokens/s, TTFT ≈ 5.7 s) on an Android 15 device with 6 GB RAM.
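The two ideas combined in pipeline (3) can be illustrated with a minimal sketch in plain Python. It is not the authors' implementation: the function names `lora_trainable_params` and `fake_quantize`, the 2048 × 2048 layer shape, the rank of 8, and the choice of symmetric quantization with a single scale per weight list are all assumptions made for illustration. The first function counts the parameters a low‑rank adapter adds to one weight matrix; the second shows the quantize‑then‑dequantize ("fake quantization") step that quantization‑aware training uses to expose the model to INT4 rounding error during training.

```python
def lora_trainable_params(d_in, d_out, rank):
    """Parameters in a LoRA adapter pair: A is (rank x d_in), B is (d_out x rank)."""
    return rank * (d_in + d_out)


def fake_quantize(values, num_bits=4):
    """Round floats to a signed num_bits integer grid, then map them back to floats.

    Assumption: symmetric quantization with one scale for the whole list.
    Returns (dequantized_values, scale).
    """
    qmax = 2 ** (num_bits - 1) - 1       # 7 for INT4
    qmin = -(2 ** (num_bits - 1))        # -8 for INT4
    max_abs = max(abs(v) for v in values)
    if max_abs == 0.0:
        # Nothing to scale; return the values unchanged with a dummy scale.
        return list(values), 1.0
    scale = max_abs / qmax
    # Quantize: divide by the scale, round to the nearest integer, clamp to range.
    quantized = [min(qmax, max(qmin, round(v / scale))) for v in values]
    # Dequantize: the forward pass sees these rounded values instead of the originals.
    return [q * scale for q in quantized], scale


# Hypothetical 2048 x 2048 projection with a rank-8 adapter.
full = 2048 * 2048
adapter = lora_trainable_params(2048, 2048, 8)
print(f"adapter params: {adapter} ({100 * adapter / full:.2f}% of the full matrix)")

# Illustrative weights only; not taken from any real model.
weights = [7.0, -3.4, 1.2, 0.0]
dequantized, scale = fake_quantize(weights)
print("scale:", scale)
print("weights seen during training:", dequantized)
```

In this sketch the rounding error per weight is at most half the scale, which is the error the training loop learns to tolerate before the model is converted to a real INT4 format. Production toolchains typically use finer‑grained (for example per‑group) scales and also fake‑quantize activations, which this example omits.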

Quantitative evaluation spans nine general commonsense/reasoning benchmarks (e.g., CommonsenseQA, PIQA) and two mental‑health‑specific datasets (Mental Health QA, EmpatheticDialogues). Across these, the QA‑T‑LoRA model matches or exceeds the fully fine‑tuned baseline, especially in empathy‑related metrics on the domain datasets. Qualitative assessment involves 30 university students and five mental‑health professionals who rate responses on empathy, relevance, and conversational flow using a 5‑point Likert scale. EmoSApp consistently outperforms a vanilla LLaMA‑based chatbot, achieving an average gain of over one point in empathy and strategy‑driven questioning.

Implementation details include the use of PyTorch torchtune for fine‑tuning and ExecuTorch for on‑device inference. The authors report that the model runs entirely offline, eliminating any need for internet connectivity and ensuring that all conversation logs remain on the device, thereby addressing privacy concerns inherent in cloud‑based solutions.

The paper’s contributions are fourfold: (i) delivery of a functional offline mental‑health app tailored to students; (ii) a multi‑source dataset fusion strategy that blends factual knowledge with emotional‑support dialogue; (iii) systematic exploration of fine‑tuning and quantization techniques, demonstrating that QA‑T‑LoRA offers the best trade‑off between performance and resource usage; and (iv) extensive human and benchmark evaluations confirming that the system provides coherent, empathetic, and context‑appropriate support in low‑resource settings.

Limitations include the modest size of the curated dataset, which may restrict generalization to more complex psychiatric conditions, and residual quantization artifacts that could affect highly sensitive counseling scenarios. Moreover, the user study is confined to a student and expert cohort, leaving broader demographic validation open.

Future work is suggested in three directions: scaling the dataset via large‑language‑model‑generated but human‑verified dialogues, integrating multimodal cues (voice tone, facial expression) for richer emotion detection, and employing federated learning to enable continual on‑device personalization without compromising privacy. Overall, EmoSApp represents a practical blueprint for deploying privacy‑preserving, domain‑specialized AI mental‑health assistants on everyday smartphones, potentially expanding access to supportive care in regions with limited connectivity or resources.
