Make LVLMs Focus: Context-Aware Attention Modulation for Better Multimodal In-Context Learning

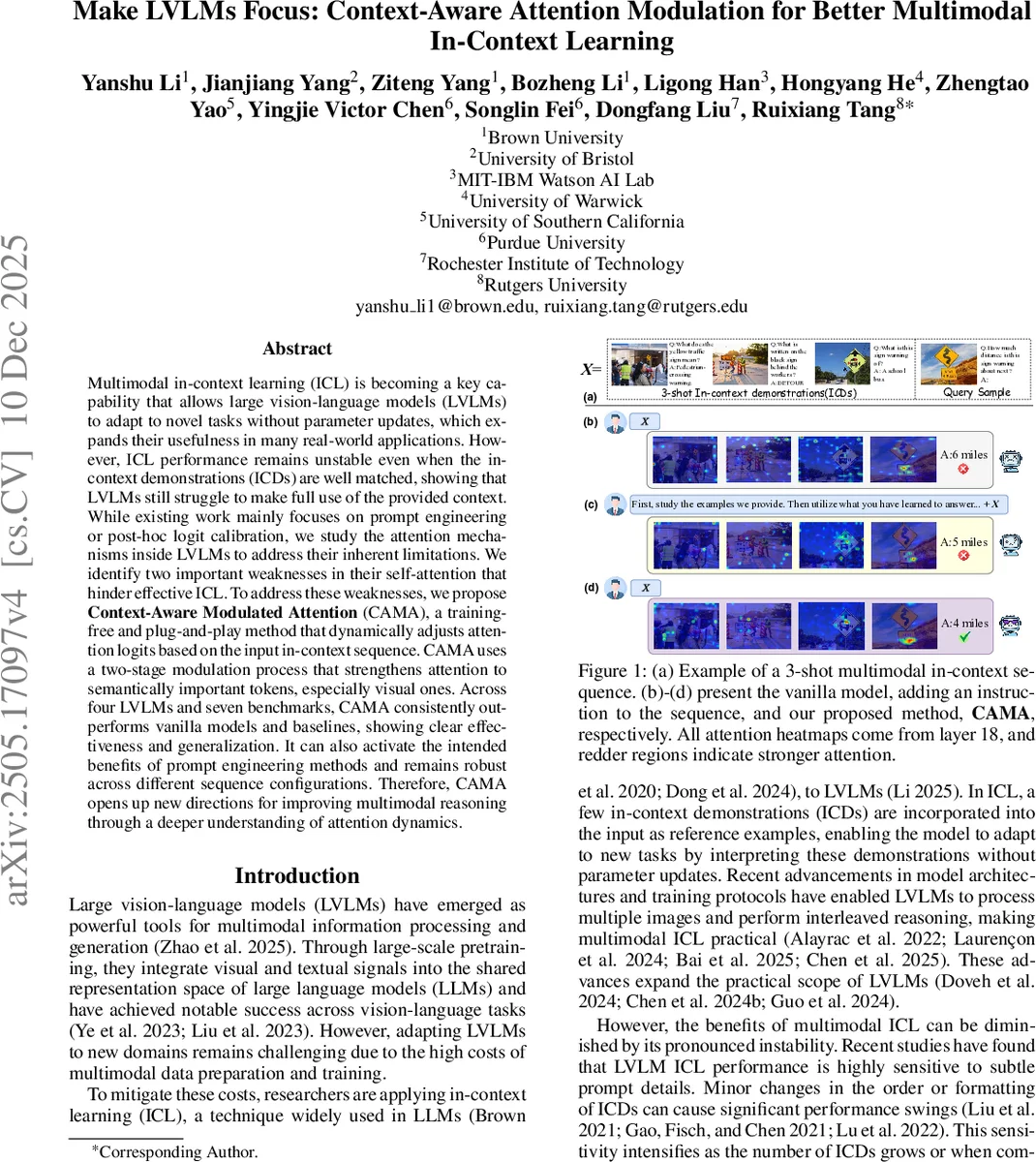

Multimodal in-context learning (ICL) is becoming a key capability that allows large vision-language models (LVLMs) to adapt to novel tasks without parameter updates, which expands their usefulness in many real-world applications. However, ICL performance remains unstable even when the in-context demonstrations (ICDs) are well matched, showing that LVLMs still struggle to make full use of the provided context. While existing work mainly focuses on prompt engineering or post-hoc logit calibration, we study the attention mechanisms inside LVLMs to address their inherent limitations. We identify two important weaknesses in their self-attention that hinder effective ICL. To address these weaknesses, we propose Context-Aware Modulated Attention (CAMA), a training-free and plug-and-play method that dynamically adjusts attention logits based on the input in-context sequence. CAMA uses a two-stage modulation process that strengthens attention to semantically important tokens, especially visual ones. Across four LVLMs and seven benchmarks, CAMA consistently outperforms vanilla models and baselines, showing clear effectiveness and generalization. It can also activate the intended benefits of prompt engineering methods and remains robust across different sequence configurations. Therefore, CAMA opens up new directions for improving multimodal reasoning through a deeper understanding of attention dynamics.

💡 Research Summary

The paper investigates why large vision‑language models (LVLMs) often exhibit unstable performance when performing multimodal in‑context learning (ICL), even when the provided in‑context demonstrations (ICDs) are well‑matched to the query. By probing the internal self‑attention mechanisms of LVLMs, the authors identify two distinct deficits that hinder effective ICL.

First, in the shallow layers of the transformer decoder, LVLMs fail to align visual tokens with the semantic content of their paired text. The authors quantify this “intra‑ICD alignment” by measuring the Intersection‑over‑Union (IoU) between the top‑attended image regions (derived from attention maps) and manually annotated bounding boxes that correspond to the textual description. Correctly answered examples show significantly higher alignment scores than incorrect ones, especially in layers 2‑4.

Second, in the middle layers, LVLMs do not prioritize the most relevant ICDs for a given query. To capture this “query‑centric routing” deficit, the authors compute a contribution score (𝑠_contrib) that reflects the proportion of information flow from each ICD to the answer tokens. Correct examples display a sharp rise in this score starting around layer 10 and remaining high through layer 20, whereas wrong examples keep the score low across all layers. Moreover, the position of an ICD in the sequence matters: earlier ICDs receive less attention and contribution, amplifying both deficits.

Based on these observations, the authors propose Context‑Aware Modulated Attention (CAMA), a training‑free, plug‑and‑play method that dynamically reshapes attention logits during inference. CAMA consists of two stages, each targeting one of the identified deficits.

Stage I – Intra‑ICD Grounding (shallow layers). For each ICD, CAMA first identifies the visual tokens that are most semantically linked to the accompanying question‑answer pair. Instead of naïve metrics such as summing text‑to‑image attention scores or using raw embedding similarity, the method leverages cross‑attention strength between text and image tokens to compute a grounding score. The identified key visual tokens receive an amplification factor (e.g., multiplying their attention logits by a scalar α > 1). This boosts early visual perception, ensuring that the model’s initial layers focus on the most informative image regions.

Stage II – Query‑Centric Routing (middle layers). At a deeper set of decoder layers, CAMA operates at the attention‑head level. It first measures, for each head, how strongly the query tokens attend to each ICD’s tokens. Then it rescales the logits of those heads according to a cross‑modal similarity between the query and each ICD (e.g., CLIP‑based text‑image similarity). Heads that show strong query‑to‑ICD attention are given higher weights for ICDs that are more relevant, effectively rerouting the information flow so that the most useful demonstrations dominate the reasoning process.

Both stages are applied as a simple mathematical transformation of the attention matrices; no model parameters are altered, and no additional training data or fine‑tuning is required. Consequently, CAMA can be attached to any existing LVLM as a lightweight module.

The authors evaluate CAMA on four state‑of‑the‑art LVLMs (LLaVA‑NeXT‑7B, Idefics2‑8B, QwenVL‑7B, InternVL‑2‑7B) across seven multimodal benchmarks, including VQA‑v2, OK‑VQA, GQA, VizWiz, A‑OKVQA, VCR, and a hard VQA split. Across all settings, CAMA yields consistent accuracy improvements of roughly 3–5 percentage points. When combined with prompt‑engineering techniques (e.g., adding “Answer in one word”), CAMA further amplifies gains, outperforming either approach alone. Importantly, the method is robust to variations in ICD ordering and the number of demonstrations, mitigating the previously observed position bias.

Ablation studies confirm that each stage contributes uniquely: Stage I alone improves shallow‑layer alignment but does not fully close the performance gap; Stage II alone enhances middle‑layer routing but suffers from weak early visual grounding. Only the combination of both stages achieves the full benefit.

The paper acknowledges limitations: CAMA is currently tailored to question‑answering formats and may need adaptation for longer, dialogic, or generative multimodal tasks. Moreover, the grounding and similarity scores rely on existing cross‑attention and CLIP embeddings; future work could explore richer semantic alignment mechanisms.

In summary, the work provides a clear diagnosis of why LVLMs struggle with multimodal ICL and introduces a practical, training‑free solution that dynamically modulates attention based on the input context. By addressing both visual‑text alignment and query‑centric routing, CAMA substantially stabilizes and improves ICL performance, offering an immediately applicable tool for enhancing the reasoning capabilities of current large vision‑language models.

Comments & Academic Discussion

Loading comments...

Leave a Comment