Two Causal Principles for Improving Visual Dialog

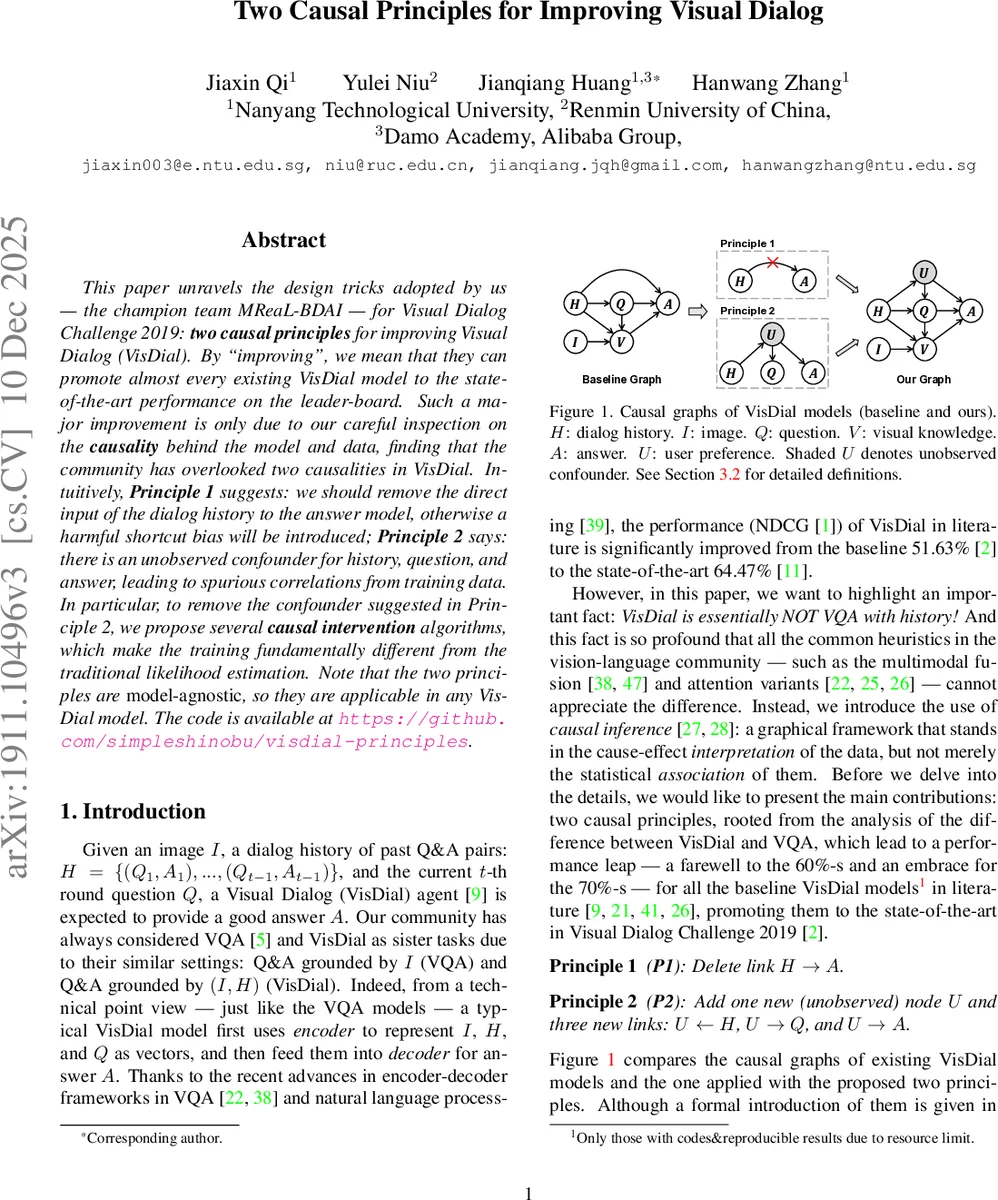

This paper unravels the design tricks adopted by us, the champion team MReaL-BDAI, for Visual Dialog Challenge 2019: two causal principles for improving Visual Dialog (VisDial). By “improving”, we mean that they can promote almost every existing VisDial model to the state-of-the-art performance on the leader-board. Such a major improvement is only due to our careful inspection on the causality behind the model and data, finding that the community has overlooked two causalities in VisDial. Intuitively, Principle 1 suggests: we should remove the direct input of the dialog history to the answer model, otherwise a harmful shortcut bias will be introduced; Principle 2 says: there is an unobserved confounder for history, question, and answer, leading to spurious correlations from training data. In particular, to remove the confounder suggested in Principle 2, we propose several causal intervention algorithms, which make the training fundamentally different from the traditional likelihood estimation. Note that the two principles are model-agnostic, so they are applicable in any VisDial model. The code is available at https://github.com/simpleshinobu/visdial-principles.

💡 Research Summary

The paper “Two Causal Principles for Improving Visual Dialog” presents a systematic causal analysis of the Visual Dialog (VisDial) task and introduces two model‑agnostic principles that dramatically boost the performance of existing VisDial systems. The authors first observe that VisDial differs fundamentally from Visual Question Answering (VQA) because it incorporates a dialog history (H) alongside the image (I) and the current question (Q). In the original data collection protocol, annotators were prohibited from copying previous Q&A pairs, meaning that the correct answer should depend only on Q and visual knowledge extracted from I, not directly on H. Nevertheless, most prior models feed H directly into the answer decoder, creating a shortcut path H → A that introduces a “history‑length bias” and other spurious correlations.

Principle 1 (P1) therefore removes the direct edge H → A from the causal graph, forcing the model to rely on the mediated path H → Q → A. This aligns the model with the true generative process: H helps resolve co‑references in Q, and Q together with visual features V determines A. Empirically, cutting the H → A link eliminates the tendency of models to produce answers whose length mirrors the average length of previous answers, leading to more semantically appropriate responses.

Principle 2 (P2) addresses a hidden confounder U that simultaneously influences both Q and A. Because VisDial is a human‑in‑the‑loop task, user preferences, conversational style, and subtle contextual cues act as an unobserved variable that biases both the question formulation and the answer selection. In causal terms, U creates a fork Q ← U → A, opening a back‑door path that makes P(A|Q) spuriously correlated even when Q has no causal effect on A. To obtain the true causal effect of (I, H, Q) on A, the authors apply Pearl’s do‑calculus, estimating P(A|do(I, H, Q)) instead of the conventional likelihood P(A|I, H, Q). Since U is not observable, they propose practical approximations: (1) Monte‑Carlo sampling over possible U values, (2) gradient‑based de‑confounding that adjusts the loss to counteract the inferred influence of U, and (3) re‑weighting of candidate answers to diminish U‑driven preferences.

The paper validates these principles on four representative VisDial baselines: Late Fusion (LF), Hierarchical Co‑Attention (HCIAE), Co‑Attention (CoAtt), and Recursive Visual Attention (RvA). Applying P1 alone yields NDCG gains of roughly 12–14 percentage points; adding P2 pushes the improvements to 15–16 pp across all models. On the official test‑server leaderboard, a simple LF ensemble surpasses the previous champion by 0.2 pp, while a more sophisticated ensemble exceeds it by 0.9 pp, establishing new state‑of‑the‑art results for every single‑model baseline. Qualitative analyses show that the models no longer over‑fit to history length or to user‑specific lexical preferences, producing answers that are more grounded in the image and the current question.

In conclusion, the two causal principles constitute a universal roadmap for VisDial: (i) eliminate direct history‑to‑answer shortcuts, and (ii) actively intervene on the causal graph to block hidden user‑preference confounders. Because the principles are independent of any particular encoder‑decoder architecture, they can be readily incorporated into future multimodal dialog systems and potentially other interactive vision‑language tasks where human bias plays a hidden role. The work demonstrates how causal inference, traditionally applied in epidemiology or economics, can yield concrete performance gains in modern AI benchmarks.

Comments & Academic Discussion

Loading comments...

Leave a Comment