A Theory of Relation Learning and Cross-domain Generalization

People readily generalize knowledge to novel domains and stimuli. We present a theory, instantiated in a computational model, based on the idea that cross-domain generalization in humans is a case of analogical inference over structured (i.e., symbolic) relational representations. The model is an extension of the LISA and DORA models of relational inference and learning. The resulting model learns both the content and format (i.e., structure) of relational representations from non-relational inputs without supervision. When augmented with the capacity for reinforcement learning, it leverages these representations to learn individual domains and then generalizes to new domains on the first exposure (i.e., zero-shot learning) via analogical inference. We demonstrate the capacity of the model to learn structured relational representations from a variety of simple visual stimuli, and to perform cross-domain generalization between video games (Breakout and Pong) and between several psychological tasks. We demonstrate that the model’s learning trajectory closely mirrors that of children as they learn about relations, accounting for phenomena from the literature on the development of children’s reasoning and analogy making. The model’s ability to generalize between domains demonstrates the flexibility afforded by representing domains in terms of their underlying relational structure, rather than simply in terms of the statistical relations between their inputs and outputs.

💡 Research Summary

The paper proposes a cognitive theory of human cross‑domain generalization and implements it in a computational model that extends the LISA (Learning and Inference with Schemas and Analogies) and DORA (Discovery of Relations by Analogy) architectures. The central claim is that humans do not rely on purely statistical associations to transfer knowledge between superficially dissimilar domains; instead they perform analogical inference over structured symbolic relational representations that encode both the semantic content of a relation (e.g., “left‑of”) and a compositional format that binds arguments to relational roles.

Model Overview

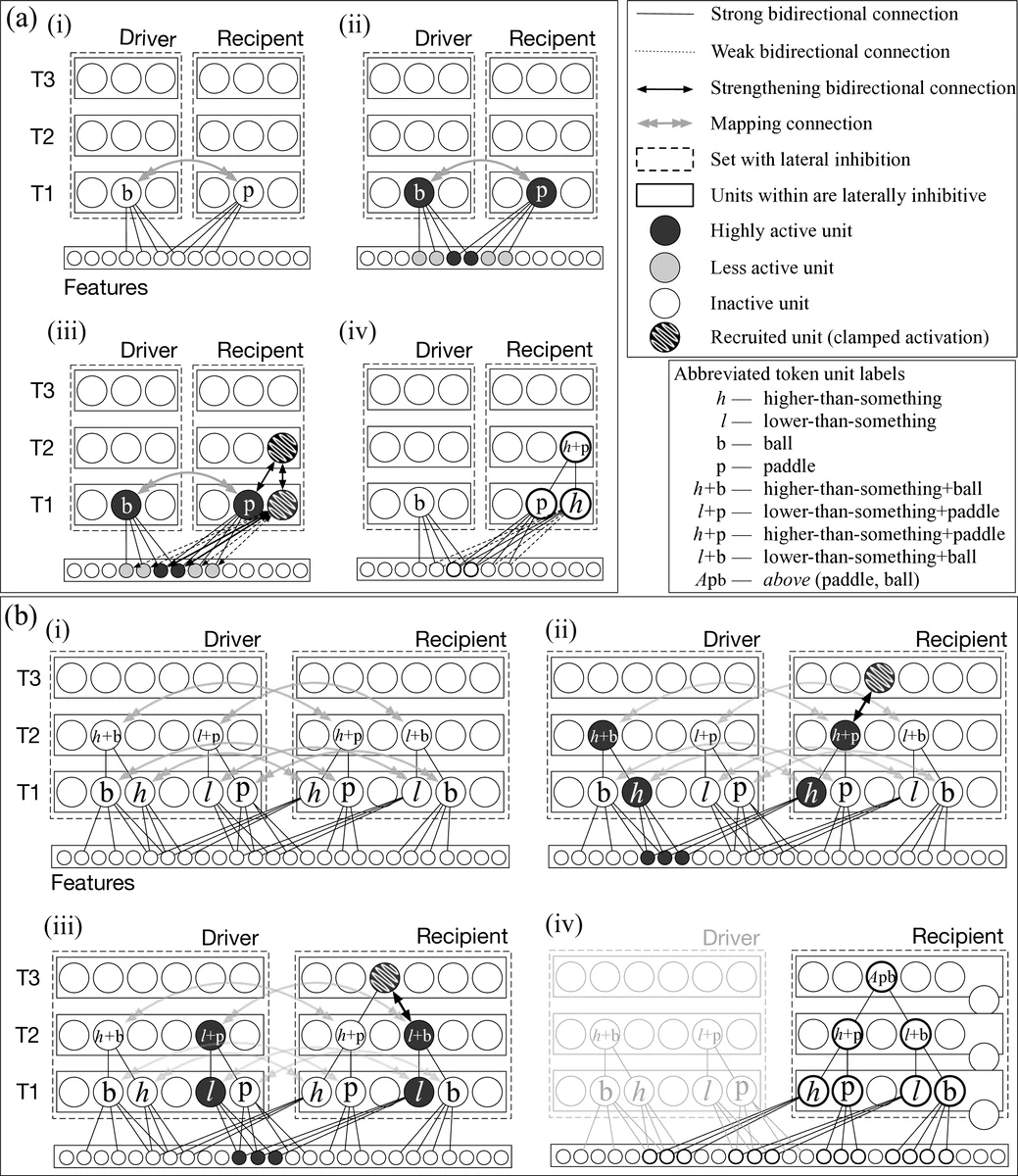

The model consists of three interacting components: (1) an unsupervised relational‑learning module that extracts relational units from simple, non‑relational visual inputs using oscillatory binding mechanisms; (2) a reinforcement‑learning (RL) controller that selects which relational unit to apply in a given situation and translates the selected relation into concrete actions; and (3) an analogical‑mapping subsystem that copies a relational structure learned in one domain and instantiates it with new arguments in another, thereby achieving zero‑shot transfer.

In the first stage, the model is shown simple visual scenes (arrays of shapes varying in position and color). Through phase‑synchronization of neural oscillations, the system discovers invariant patterns that fire whenever a particular relation holds, regardless of the specific objects or coordinates involved. These patterns constitute relational units that simultaneously encode (a) the content of the relation (its meaning) and (b) its format (role‑binding structure). No external labels or supervision are required; learning is driven by comparing examples and retaining the features they share, so that object‑specific details drop out while the relational invariants remain.
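
A toy illustration of how a relational invariant could be extracted without labels, under loudly stated assumptions: each object is a small set of features, comparing two objects on the x dimension adds hypothetical relative features ("less-x", "more-x"), and intersecting feature sets across examples keeps only what every instance shares. All names are hypothetical; this is not the LISA/DORA algorithm, only a sketch of why object-specific features drop out while relational ones survive.

```python
from functools import reduce

def compare_on_x(a, b):
    """Hypothetical magnitude comparison on x, returning relative features for a and b."""
    if a["x"] < b["x"]:
        return {"less-x"}, {"more-x"}
    if a["x"] > b["x"]:
        return {"more-x"}, {"less-x"}
    return {"same-x"}, {"same-x"}

def features(obj, relative):
    """Object-specific features (colour, shape) plus the relative features from comparison."""
    return {obj["colour"], obj["shape"]} | relative

def discover_roles(pairs):
    """Intersect each argument position's feature sets across all examples."""
    first_sets, second_sets = [], []
    for a, b in pairs:
        rel_a, rel_b = compare_on_x(a, b)
        first_sets.append(features(a, rel_a))
        second_sets.append(features(b, rel_b))
    # Only features present in every example survive the intersection.
    return reduce(set.intersection, first_sets), reduce(set.intersection, second_sets)

# Two unlabelled scenes in which the first object happens to be left of the second.
EXAMPLES = [
    ({"colour": "red", "shape": "square", "x": 1}, {"colour": "blue", "shape": "circle", "x": 5}),
    ({"colour": "green", "shape": "circle", "x": 2}, {"colour": "red", "shape": "triangle", "x": 9}),
]
print(discover_roles(EXAMPLES))  # ({'less-x'}, {'more-x'})
```

Colours and shapes differ across the scenes and vanish; what remains is a pair of role-like feature sets that could be read as the two roles of a left-of relation.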

The second stage integrates the learned relational units with an RL agent. The agent receives a state description (e.g., the positions of the paddle and ball), represents it in terms of learned relations such as “paddle‑left‑of‑ball”, and its policy selects the relation relevant to the decision and maps it to a motor command (move paddle right). The reward signal (game score, task success) updates the policy, allowing the system to learn which relations are useful in which contexts. Because the policy operates over relational states rather than raw inputs, this coupling combines the flexibility of structured symbolic representations with the trial‑and‑error learning of RL.
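
A minimal sketch of reward-driven learning over relational states rather than coordinates. Assumptions: the state labels, actions, and reward rule are hypothetical; the summary describes a policy network, which this sketch swaps for a lookup table; and the update is a one-step (contextual-bandit) value update, i.e., Q-learning with no discounted future term.

```python
import random

STATES = ["paddle-left-of-ball", "paddle-right-of-ball", "paddle-aligned-with-ball"]
ACTIONS = ["move-left", "move-right", "stay"]
MOVES = {"move-left": -1, "move-right": 1, "stay": 0}

def relation(paddle_x, ball_x):
    """Describe the scene by a relation rather than raw coordinates."""
    if paddle_x < ball_x:
        return "paddle-left-of-ball"
    if paddle_x > ball_x:
        return "paddle-right-of-ball"
    return "paddle-aligned-with-ball"

def reward(paddle_x, ball_x, action):
    """+1 if the move brings the paddle closer to (or keeps it under) the ball, else -1."""
    before = abs(paddle_x - ball_x)
    after = abs(paddle_x + MOVES[action] - ball_x)
    return 1.0 if after < before or after == 0 else -1.0

def train(steps=2000, alpha=0.1, epsilon=0.2, seed=0):
    """Epsilon-greedy one-step value updates on a table indexed by relation."""
    rng = random.Random(seed)
    q = {s: {a: 0.0 for a in ACTIONS} for s in STATES}
    for _ in range(steps):
        paddle_x, ball_x = rng.randint(0, 9), rng.randint(0, 9)
        s = relation(paddle_x, ball_x)
        a = rng.choice(ACTIONS) if rng.random() < epsilon else max(q[s], key=q[s].get)
        q[s][a] += alpha * (reward(paddle_x, ball_x, a) - q[s][a])
    return q

def greedy_policy(q):
    """Pick the highest-valued action for each relational state."""
    return {s: max(q[s], key=q[s].get) for s in STATES}

print(greedy_policy(train()))
# {'paddle-left-of-ball': 'move-right', 'paddle-right-of-ball': 'move-left', 'paddle-aligned-with-ball': 'stay'}
```

Because the table is indexed by relations, it has three rows no matter how wide the screen is; the same policy applies to any paddle and ball positions that stand in the same relation.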

Zero‑Shot Cross‑Domain Transfer

The analogical‑mapping component treats a relational structure as a template. When the model encounters a new domain (e.g., Pong after training on Breakout), it maps the template “paddle‑left‑of‑ball → move paddle right” onto the new objects and geometry of Pong. Because the template’s role structure does not depend on the particular objects filling its roles, the mapping requires only a substitution of arguments; the underlying rule remains unchanged. Empirically, the model performed well on Pong on its first exposure, without any Pong‑specific training, whereas deep‑Q‑network agents typically need thousands of episodes to reach comparable proficiency.
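
The substitution step can be sketched as copying a rule's structure and swapping its fillers through an object correspondence. Here the correspondence is found by pairing objects that fill the same role of the same relation, a greedy toy rather than LISA's mapping mechanism. The relation names ("less-than" along the paddle's axis of motion, "move-toward-more") are hypothetical and chosen to be axis-neutral, since a Breakout paddle moves horizontally and a standard Pong paddle moves vertically.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Proposition:
    relation: str
    args: tuple  # fillers, in the order of the relation's roles

def find_mapping(source_facts, target_facts):
    """Pair objects filling the same role of the same relation (greedy toy; first match wins)."""
    mapping = {}
    for s in source_facts:
        for t in target_facts:
            if s.relation == t.relation and len(s.args) == len(t.args):
                for a, b in zip(s.args, t.args):
                    mapping.setdefault(a, b)
    return mapping

def map_rule(rule, mapping):
    """Copy the rule's relational structure, substituting mapped fillers."""
    return tuple(Proposition(p.relation, tuple(mapping.get(x, x) for x in p.args)) for p in rule)

# Hypothetical rule learned in Breakout: if the paddle's position is less than the
# ball's along the paddle's axis of motion, move the paddle toward "more".
breakout_rule = (
    Proposition("less-than", ("breakout-paddle", "breakout-ball")),
    Proposition("move-toward-more", ("breakout-paddle",)),
)
breakout_facts = [Proposition("less-than", ("breakout-paddle", "breakout-ball"))]
pong_facts = [Proposition("less-than", ("pong-paddle", "pong-ball"))]

mapping = find_mapping(breakout_facts, pong_facts)
pong_rule = map_rule(breakout_rule, mapping)
print(mapping)       # {'breakout-paddle': 'pong-paddle', 'breakout-ball': 'pong-ball'}
print(pong_rule[1])  # Proposition(relation='move-toward-more', args=('pong-paddle',))
```

No relation in the rule changes; only the fillers are swapped, which is why the transferred rule can be used before any Pong-specific learning.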

Developmental Alignment

Simulations of the model’s learning trajectory were compared with developmental data on children’s acquisition of relational concepts. The model first learns simple spatial relations (above/below, left/right), then more complex relational schemas (simultaneity, causality), mirroring the order observed in child development studies. This suggests that the model’s staged acquisition of relational representations offers a plausible account of how relational knowledge develops in children.

Comparison with Implicit‑Relation Approaches

The authors contrast their explicit, structured approach with recent deep learning methods that aim to learn relations implicitly in weight matrices (e.g., Relation Networks, Graph Neural Networks). While implicit methods can solve within‑distribution tasks, they often fail when presented with out‑of‑distribution combinations or novel object configurations. The paper argues that such failures stem from the lack of a format in which relational roles are represented independently of their fillers and bound to them dynamically; without explicit role binding, the learned “relations” cannot be recombined flexibly.
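
To make the recombination argument concrete, here is a deliberately simplified contrast (not a model of Relation Networks or GNNs): a conjunctive code with one dedicated unit per combination seen during training, versus a role-filler code in which role units and object units are separate and bound at run time. The object names and the `bind` helper are hypothetical.

```python
from itertools import product

OBJECTS = ["ball", "paddle", "brick"]
SEEN = [("ball", "paddle"), ("paddle", "brick")]  # (first-role filler, second-role filler)

# Conjunctive code: one dedicated unit per whole combination seen in training.
conjunctive_units = {f"left-of({a},{b})" for a, b in SEEN}

def bind(relation, *fillers):
    """Role-filler code: pair separate role units with object units at run time."""
    return tuple((f"{relation}.role{i}", f) for i, f in enumerate(fillers, start=1))

# Every ordered pair of distinct objects is expressible with the role-filler code.
representable = [bind("left-of", a, b) for a, b in product(OBJECTS, repeat=2) if a != b]

novel = ("brick", "ball")
print(f"left-of({novel[0]},{novel[1]})" in conjunctive_units)  # False
print(bind("left-of", *novel))  # (('left-of.role1', 'brick'), ('left-of.role2', 'ball'))
print(len(conjunctive_units), len(representable))  # 2 6
```

Two role units plus three object units are enough to express all six ordered pairs, including ones never observed, whereas the conjunctive code can only express the two it was trained on.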

Neural Oscillations and Binding

A notable contribution is the use of oscillatory phase‑locking as a mechanistic account of how the brain might achieve invariant relational binding. The authors link this to empirical findings on gamma‑band synchrony during relational reasoning, proposing a testable neurophysiological prediction.
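
A toy illustration of binding by phase, following the summary's description: units that fire at (nearly) the same phase of a cycle are read as bound. The phases, the tolerance, and the unit names are arbitrary assumptions; this is a lookup on hand-set numbers, not a simulation of oscillatory neural dynamics.

```python
import math

TWO_PI = 2 * math.pi

def phase_distance(a, b):
    """Smallest angular distance between two phases, in radians."""
    d = abs(a - b) % TWO_PI
    return min(d, TWO_PI - d)

def read_bindings(phases, roles, tol=0.2):
    """Bind each role unit to the closest-in-phase non-role unit, if within tol radians."""
    fillers = [u for u in phases if u not in roles]
    bindings = {}
    for r in roles:
        best = min(fillers, key=lambda f: phase_distance(phases[r], phases[f]))
        if phase_distance(phases[r], phases[best]) <= tol:
            bindings[r] = best
    return bindings

ROLES = ["left-of.role1", "left-of.role2"]

# Hypothetical phases: role1 fires with "paddle"; role2 fires half a cycle later with "ball".
phases = {"left-of.role1": 0.0, "paddle": 0.05,
          "left-of.role2": math.pi, "ball": math.pi + 0.04}
print(read_bindings(phases, ROLES))
# {'left-of.role1': 'paddle', 'left-of.role2': 'ball'}

# Same units, fillers' phases exchanged: now the ball is bound to the first role.
swapped = dict(phases, paddle=math.pi + 0.04, ball=0.05)
print(read_bindings(swapped, ROLES))
# {'left-of.role1': 'ball', 'left-of.role2': 'paddle'}
```

Exchanging the fillers' phases turns paddle-left-of-ball into ball-left-of-paddle without changing any unit, which is the property the binding mechanism is meant to provide.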

Limitations and Future Directions

Current work is limited to two‑dimensional visual inputs and simple game environments. Extending the framework to language, higher‑order abstract reasoning, and multimodal domains will require richer relational vocabularies and possibly hierarchical composition mechanisms. Moreover, the reliance on hand‑crafted reward structures suggests a need for meta‑learning or intrinsic motivation signals to support more autonomous transfer. Finally, direct neuroimaging comparisons between the model’s oscillatory dynamics and human EEG/MEG data are proposed as a next step.

Conclusion

By integrating unsupervised relational extraction, reinforcement learning, and analogical mapping, the model demonstrates human‑like zero‑shot generalization across disparate domains and reproduces developmental patterns of relational learning. The work offers a concrete computational instantiation of the claim that structured symbolic relations, rather than statistical correlations, underlie the remarkable flexibility of human cognition, and it provides a roadmap for building AI systems with comparable generalization capabilities.
