📝 Original Info

- Title: SimWorld-Robotics: Synthesizing Photorealistic and Dynamic Urban Environments for Multimodal Robot Navigation and Collaboration

- ArXiv ID: 2512.10046

- Date: 2025-12-10

- Authors: Yan Zhuang, Jiawei Ren, Xiaokang Ye, Jianzhi Shen, Ruixuan Zhang, Tianai Yue, Muhammad Faayez, Xuhong He, Ziqiao Ma, Lianhui Qin, Zhiting Hu, Tianmin Shu

📝 Abstract

Recent advances in foundation models have shown promising results in developing generalist robotics that can perform diverse tasks in open-ended scenarios given multimodal inputs. However, current work has been mainly focused on indoor, household scenarios. In this work, we present SimWorld-Robotics (SWR), a simulation platform for embodied AI in large-scale, photorealistic urban environments. Built on Unreal Engine 5, SWR procedurally generates unlimited photorealistic urban scenes populated with dynamic elements such as pedestrians and traffic systems, surpassing prior urban simulations in realism, complexity, and scalability. It also supports multi-robot control and communication. With these key features, we build two challenging robot benchmarks: (1) a multimodal instruction-following task, where a robot must follow vision-language navigation instructions to reach a destination in the presence of pedestrians and traffic; and (2) a multi-agent search task, where two robots must communicate to cooperatively locate and meet each other. Unlike existing benchmarks, these two new benchmarks comprehensively evaluate a wide range of critical robot capacities in realistic scenarios, including (1) multimodal instructions grounding, (2) 3D spatial reasoning in large environments, (3) safe, long-range navigation with people and traffic, (4) multi-robot collaboration, and (5) grounded communication. Our experimental results demonstrate that state-of-the-art models, including vision-language models (VLMs), struggle with our tasks, lacking robust perception, reasoning, and planning abilities necessary for urban environments.

📄 Full Content

SimWorld-Robotics: Synthesizing Photorealistic and Dynamic Urban Environments for Multimodal Robot Navigation and Collaboration

Yan Zhuang¹, Jiawei Ren²*, Xiaokang Ye²*, Jianzhi Shen³, Ruixuan Zhang³, Tianai Yue³, Muhammad Faayez³, Xuhong He⁴, Xiyan Zhang³, Ziqiao Ma⁵, Lianhui Qin²‡, Zhiting Hu²‡, Tianmin Shu³‡

¹University of Virginia, ²UC San Diego, ³Johns Hopkins University, ⁴Carnegie Mellon University, ⁵University of Michigan

Abstract

Recent advances in foundation models have shown promising results in

developing generalist robotics that can perform diverse tasks in open-ended

scenarios given multimodal inputs.

However, current work has been mainly

focused on indoor, household scenarios.

In this work, we present SimWorld-

Robotics (SWR), a simulation platform for embodied AI in large-scale,

photorealistic urban environments. Built on Unreal Engine 5, SWR procedurally

generates unlimited photorealistic urban scenes populated with dynamic elements

such as pedestrians and traffic systems, surpassing prior urban simulations in

realism, complexity, and scalability.

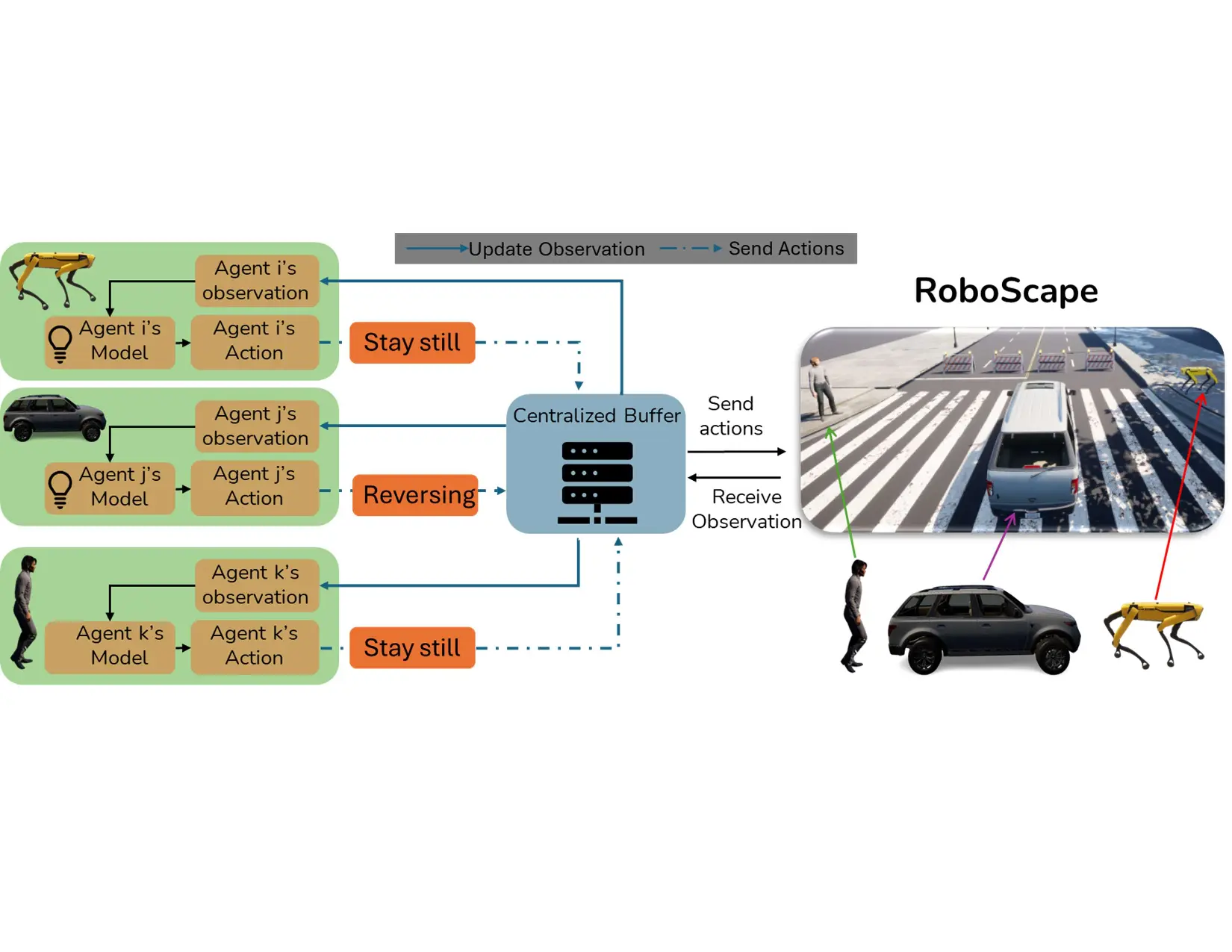

It also supports multi-robot control and

communication.

With these key features, we build two challenging robot

benchmarks: (1) a multimodal instruction-following task, where a robot must

follow vision-language navigation instructions to reach a destination in the

presence of pedestrians and traffic; and (2) a multi-agent search task, where two

robots must communicate to cooperatively locate and meet each other. Unlike

existing benchmarks, these two new benchmarks comprehensively evaluate a wide

range of critical robot capacities in realistic scenarios, including (1) multimodal

instructions grounding, (2) 3D spatial reasoning in large environments, (3) safe,

long-range navigation with people and traffic, (4) multi-robot collaboration, and

(5) grounded communication. Our experimental results demonstrate that state-

of-the-art models, including vision-language models (VLMs), struggle with our

tasks, lacking robust perception, reasoning, and planning abilities necessary for

urban environments.

Project website: SimWorld-Robotics

1 Introduction

There has been tremendous progress in engineering general-purpose robots that can follow human instructions and perform open-ended tasks [2, 28, 15, 27, 46], thanks to advances in robot foundation models. Training these models requires a large amount of data, much of which can be generated in high-fidelity embodied simulators such as Habitat 3 [40], RoboTHOR [10], TDW [14], VirtualHome [38], Virtual Community [61], and BEHAVIOR [27]. The models can also be systematically evaluated in diverse scenarios created in these simulators. However, current embodied simulators for robotics have focused on tabletop [35, 58, 34, 22, 59] or household tasks [48, 27, 26, 47, 46]. In this work, we study how to create a realistic and scalable embodied simulator for outdoor robotics tasks.

* Equal contribution. ‡ Equal advising.

39th Conference on Neural Information Processing Systems (NeurIPS 2025).

arXiv:2512.10046v3 [cs.AI] 23 Jan 2026

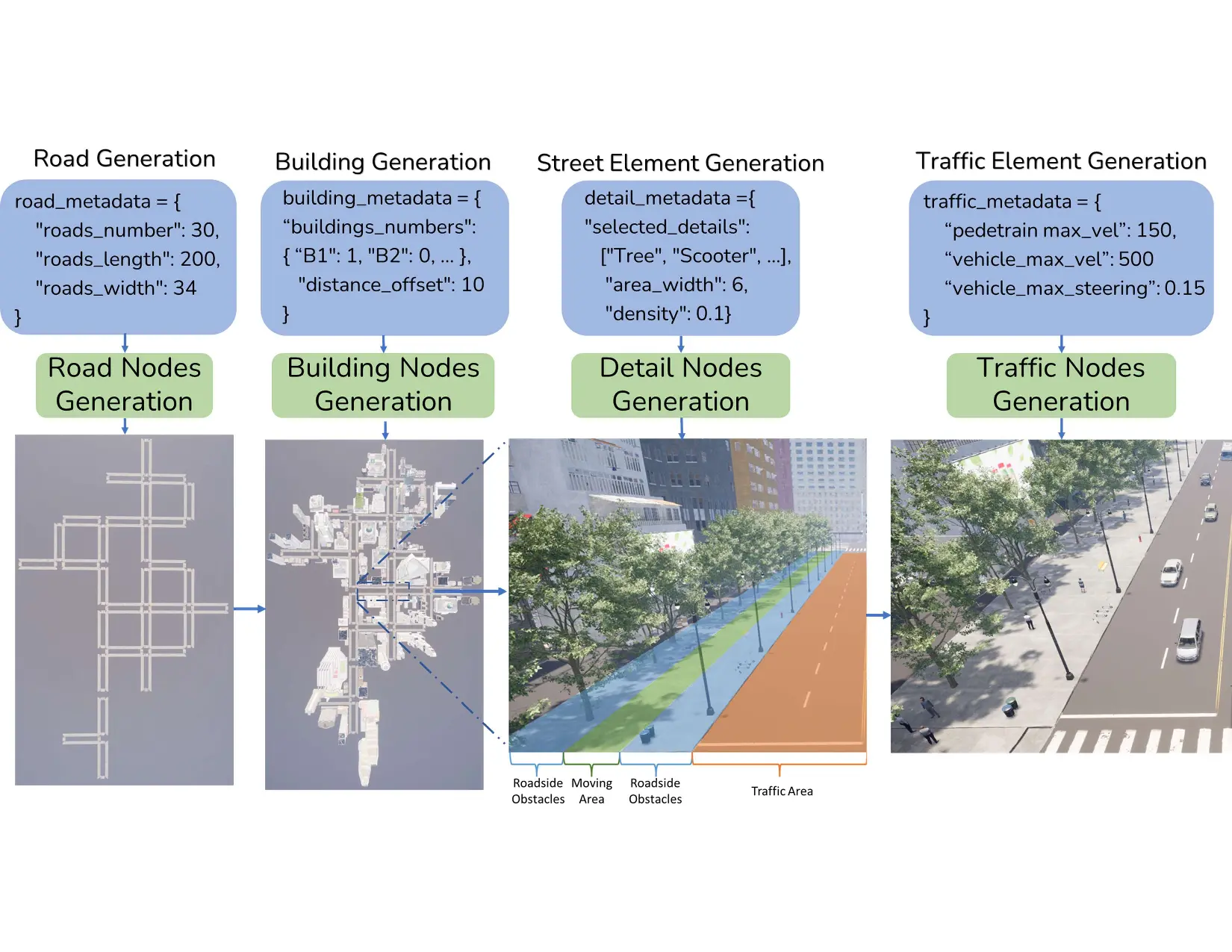

Figure 1: Overview of SimWorld-Robotics (SWR). Built upon Unreal Engine 5, SWR is a simulation platform for large-scale, photorealistic, and dynamic urban environments. It offers diverse high-fidelity building and object assets, supports embodied agents with rich action spaces, includes a background traffic system powered by city-scale waypoint generation, and enables comprehensive city procedural generation.

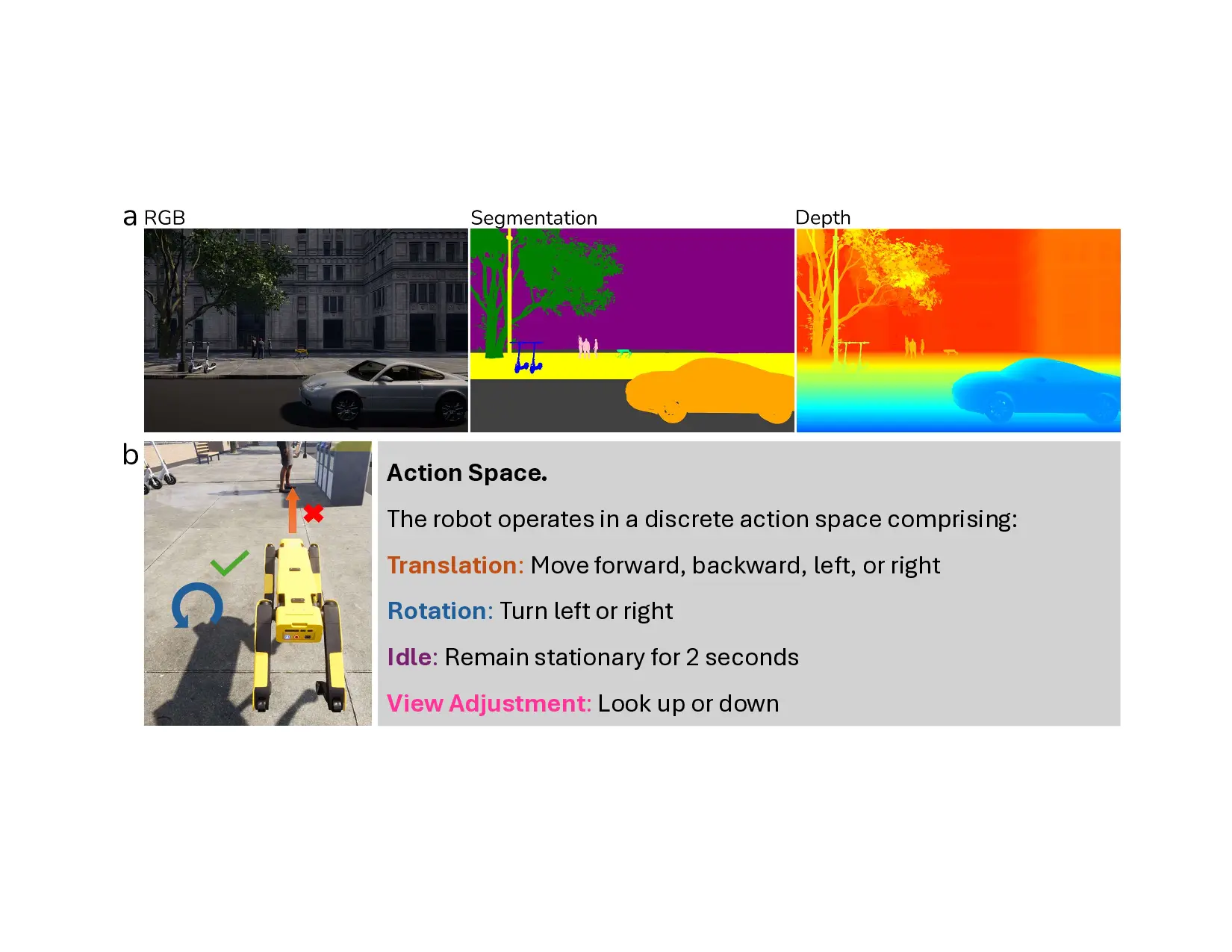

Compared to indoor scenarios, robotics in outdoor environments, particularly large urban environments, introduces additional challenges, such as (1) 3D perception, spatial reasoning, and grounding in large environments; (2) safe navigation in dynamic scenes with people and traffic; (3) long-range spatial memory; and (4) multi-agent collaboration and communication within a task.

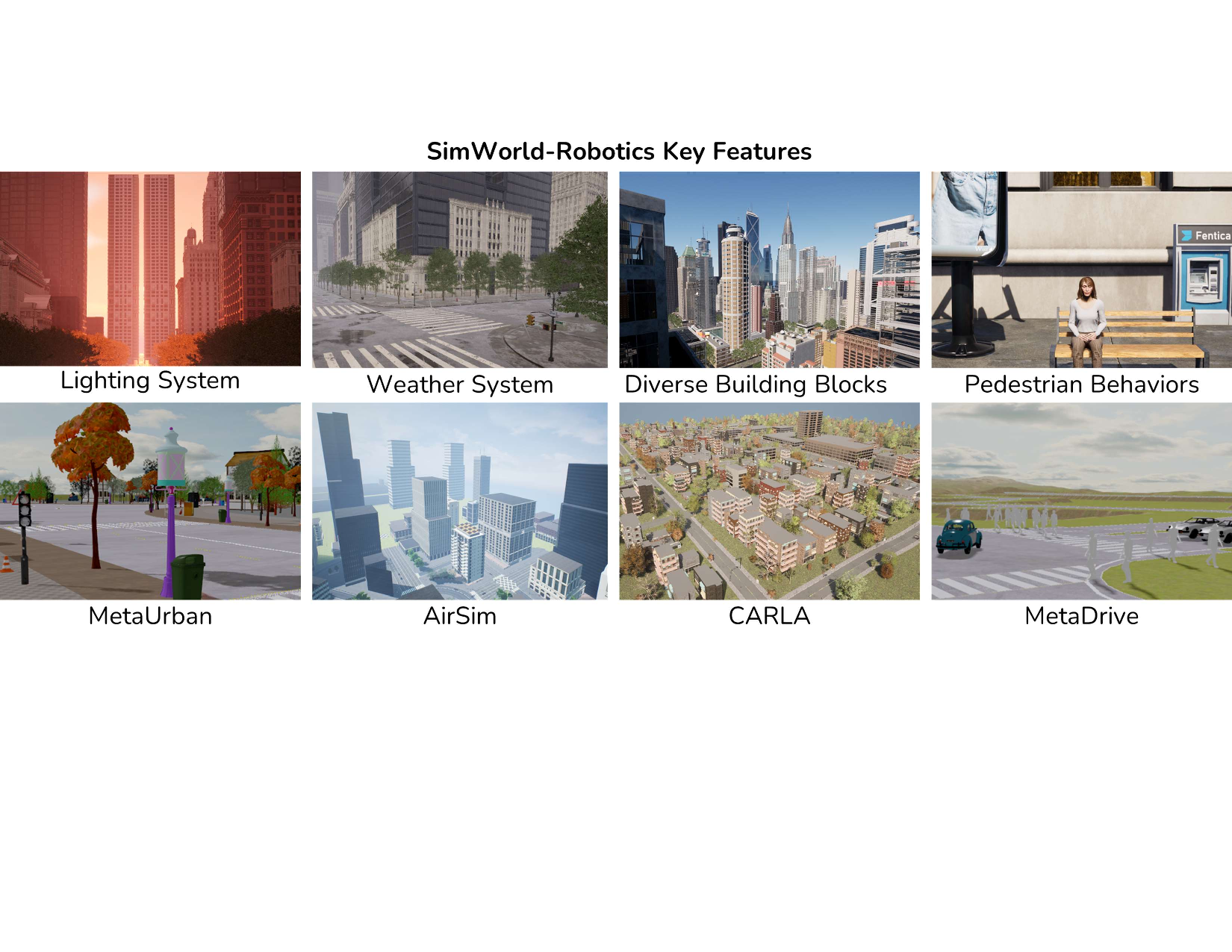

Several urban simulators have been developed in recent years. However, they lack the realism, customizability, scalability, and versatility needed to address the critical challenges faced by real-world robotics in urban environments. For instance, well-known simulators such as AirSim [45] and CARLA [12] mainly focus on autonomous driving. While they support some manual customization of the provided city environments, they do not support procedural generation of city environments. They also do not support flexible control of embodied agents other than vehicles, such as mobile robots or pedestrians. More recent city simulators, such as MetaDrive [29] and MetaUrban [56], significantly improve scalability. However, their simulated environments still lack photorealism, as shown in Figure 2.

Therefore, we introduce SimWorld-Robotics (SWR), a new embodied AI simulation platform for large-scale, photorealistic, and dynamic urban environments. As illustrated in Figure 1, SWR offers diverse high-fidelity building and object assets, multiple types of embodied agents with rich action spaces, a waypoint-based background traffic system, and a c

…(Full text truncated)…
