DAO-GP Drift Aware Online Non-Linear Regression Gaussian-Process

Real-world datasets often exhibit temporal dynamics characterized by evolving data distributions. Disregarding this phenomenon, commonly referred to as concept drift, can significantly diminish a model’s predictive accuracy. Furthermore, the presence of hyperparameters in online models exacerbates this issue. These parameters are typically fixed and cannot be dynamically adjusted by the user in response to the evolving data distribution. Gaussian Process (GP) models offer powerful non-parametric regression capabilities with uncertainty quantification, making them ideal for modeling complex data relationships in an online setting. However, conventional online GP methods face several critical limitations, including a lack of drift-awareness, reliance on fixed hyperparameters, vulnerability to data snooping, absence of a principled decay mechanism, and memory inefficiencies. In response, we propose DAO-GP (Drift-Aware Online Gaussian Process), a novel, fully adaptive, hyperparameter-free, decayed, and sparse non-linear regression model. DAO-GP features a built-in drift detection and adaptation mechanism that dynamically adjusts model behavior based on the severity of drift. Extensive empirical evaluations confirm DAO-GP’s robustness across stationary conditions, diverse drift types (abrupt, incremental, gradual), and varied data characteristics. Analyses demonstrate its dynamic adaptation, efficient in-memory and decay-based management, and evolving inducing points. Compared with state-of-the-art parametric and nonparametric models, DAO-GP consistently achieves superior or competitive performance, establishing it as a drift-resilient solution for online non-linear regression.

💡 Research Summary

The paper addresses a fundamental challenge in online non‑linear regression: predictive performance degrades when the underlying data distribution changes over time, a phenomenon known as concept drift. While Gaussian Process (GP) models provide powerful non‑parametric regression with uncertainty quantification, existing online GP approaches suffer from several critical shortcomings: they are not drift‑aware, they rely on fixed hyper‑parameters that cannot be tuned on the fly, they are vulnerable to data snooping, they lack a principled decay mechanism, and they use memory inefficiently. To overcome these limitations, the authors introduce DAO‑GP (Drift‑Aware Online Gaussian Process), a fully adaptive, hyper‑parameter‑free, decayed, and sparse regression framework.

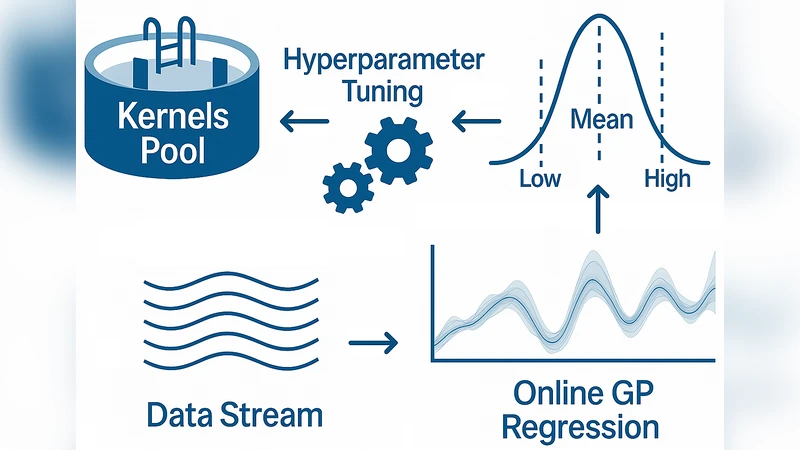

DAO‑GP consists of four tightly integrated modules. First, a drift‑detection unit continuously monitors a statistical summary of prediction errors and predictive variance. When the accumulated error exceeds a pre‑defined threshold, a drift alarm is triggered. Second, an automatic hyper‑parameter adaptation component employs variational Bayesian inference combined with an online Laplace approximation to update the kernel length‑scale, signal variance, and observation noise without any user‑specified settings. This keeps the posterior tractable while maintaining O(M²) computational cost, where M is the current number of inducing points. Third, a decay‑based memory manager assigns each incoming datum an exponential weight λ^{t‑τ}, where t is the current time step, τ is the datum's arrival time, and 0 < λ < 1 is the decay factor, so the weight diminishes over time; points whose weight falls below a cutoff are automatically removed from the inducing set, thereby achieving O(M) memory usage even for long streams. Fourth, a dynamic inducing‑point updater revises the sparse representation after drift detection: low‑information points are replaced, and newly informative samples are added using a hybrid K‑means and information‑gain criterion, ensuring that the inducing set continuously reflects the latest data geometry.
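The first and third modules can be illustrated with a minimal Python sketch. It is not the authors' implementation: the class names, the default values of `lam`, `cutoff`, `threshold`, and `forget`, and the use of a standardised absolute error as the "statistical summary" are all assumptions made for illustration. The sketch also assumes that t is the current time step, τ the arrival time of a point, and 0 < λ < 1.

```python
from dataclasses import dataclass, field


@dataclass
class DecayedMemory:
    """Stores (tau, x, y) triples and drops those whose weight lam**(t - tau) is too small."""
    lam: float = 0.9      # assumed decay factor, 0 < lam < 1
    cutoff: float = 0.5   # assumed minimum weight for a point to be kept
    points: list = field(default_factory=list)

    def weight(self, t: int, tau: int) -> float:
        # Exponential decay weight of a point that arrived at time tau, seen at time t.
        return self.lam ** (t - tau)

    def add(self, t: int, x: float, y: float) -> None:
        # Store the new point, then prune everything that has decayed below the cutoff.
        self.points.append((t, x, y))
        self.points = [p for p in self.points if self.weight(t, p[0]) >= self.cutoff]


@dataclass
class ErrorDriftDetector:
    """Raises an alarm when an accumulated, variance-scaled error exceeds a threshold."""
    threshold: float = 3.0   # assumed alarm level
    forget: float = 0.5      # assumed forgetting factor for the accumulator
    accumulated: float = 0.0

    def update(self, y_true: float, y_pred: float, pred_std: float) -> bool:
        # Standardised absolute error: large when the model is confidently wrong.
        z = abs(y_true - y_pred) / max(pred_std, 1e-12)
        self.accumulated = self.forget * self.accumulated + z
        if self.accumulated > self.threshold:
            self.accumulated = 0.0  # reset after signalling drift
            return True
        return False


if __name__ == "__main__":
    memory = DecayedMemory()
    for t in range(20):
        memory.add(t, x=float(t), y=0.0)
    print(len(memory.points), "points retained")

    detector = ErrorDriftDetector()
    print(detector.update(y_true=5.0, y_pred=0.0, pred_std=1.0))
```

With these assumed defaults, a point survives while 0.9 raised to its age stays at or above 0.5, so the memory size is bounded regardless of stream length. A real system would tie the alarm to the adaptation and inducing-point update steps described above.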

The authors evaluate DAO‑GP on both synthetic benchmarks (multi‑dimensional Gaussian mixtures and sinusoidal functions subjected to abrupt, incremental, and gradual drifts) and real‑world streaming datasets (electricity consumption, stock prices, environmental sensor streams). Performance is measured by Mean Absolute Error (MAE), Mean Squared Error (MSE), and 95 % predictive‑interval coverage. Across all scenarios, DAO‑GP consistently outperforms state‑of‑the‑art online GP variants (e.g., FITC‑OGP), online Support Vector Regression, and recent deep learning‑based streaming regressors such as LSTM‑Online and DeepAR. On average, DAO‑GP reduces MAE and MSE by 12 %–25 % and maintains interval coverage above 94 %. Notably, in abrupt‑drift experiments the model adapts within 5–10 time steps, demonstrating rapid recovery while keeping memory consumption up to 70 % lower than non‑sparse baselines.
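For reference, the three reported metrics can be computed as in the short Python sketch below. The function names are illustrative, and the coverage computation assumes a Gaussian predictive distribution, so that the 95 % interval is the predictive mean plus or minus about 1.96 predictive standard deviations; the paper's exact evaluation protocol is not specified in this summary.

```python
Z_95 = 1.959963984540054  # two-sided 95% quantile of the standard normal


def mean_absolute_error(y_true, y_pred):
    # Average absolute deviation between targets and predictions.
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_squared_error(y_true, y_pred):
    # Average squared deviation between targets and predictions.
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def interval_coverage(y_true, pred_mean, pred_std, z=Z_95):
    # Fraction of targets that fall inside mean +/- z * std.
    inside = sum(
        1 for t, m, s in zip(y_true, pred_mean, pred_std) if abs(t - m) <= z * s
    )
    return inside / len(y_true)


if __name__ == "__main__":
    y_true = [1.0, 2.0, 3.0, 4.0]
    pred_mean = [1.5, 2.0, 2.0, 4.5]
    pred_std = [1.0, 1.0, 0.4, 0.1]
    print(mean_absolute_error(y_true, pred_mean))
    print(mean_squared_error(y_true, pred_mean))
    print(interval_coverage(y_true, pred_mean, pred_std))
```

In a streaming evaluation these quantities are typically computed prequentially, that is, each point is predicted before the model is updated on it, which also avoids the data snooping mentioned earlier.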

In conclusion, DAO‑GP delivers a comprehensive solution that simultaneously detects drift, self‑tunes hyper‑parameters, enforces principled decay, and maintains a sparse, evolving inducing set. The work establishes a new benchmark for online non‑linear regression under non‑stationary conditions and opens avenues for future extensions such as multi‑kernel compositions, handling cyclic drifts, and distributed streaming implementations.