Chain-of-Image Generation: Toward Monitorable and Controllable Image Generation

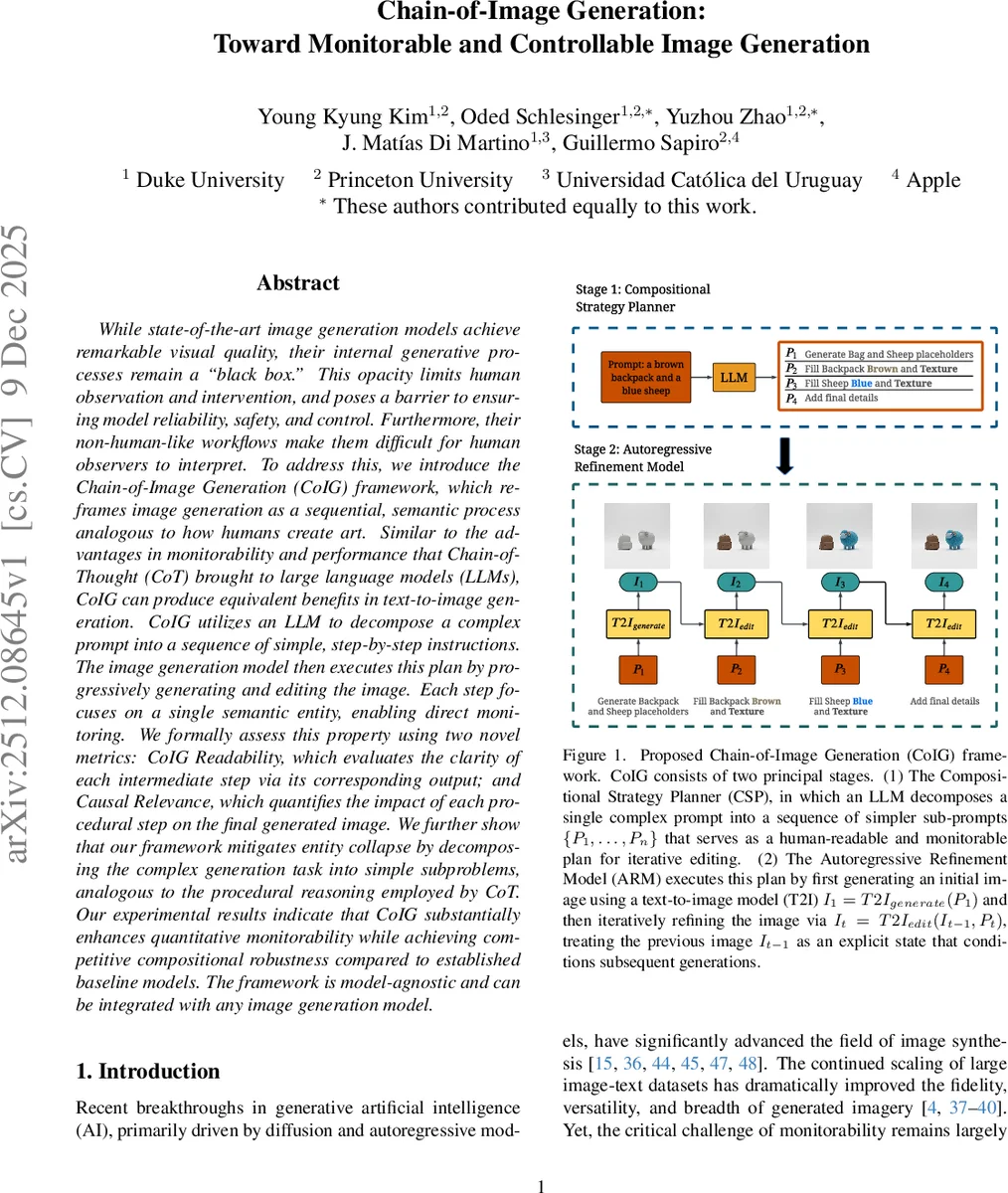

While state-of-the-art image generation models achieve remarkable visual quality, their internal generative processes remain a “black box.” This opacity limits human observation and intervention, and poses a barrier to ensuring model reliability, safety, and control. Furthermore, their non-human-like workflows make them difficult for human observers to interpret. To address this, we introduce the Chain-of-Image Generation (CoIG) framework, which reframes image generation as a sequential, semantic process analogous to how humans create art. Similar to the advantages in monitorability and performance that Chain-of-Thought (CoT) brought to large language models (LLMs), CoIG can produce equivalent benefits in text-to-image generation. CoIG utilizes an LLM to decompose a complex prompt into a sequence of simple, step-by-step instructions. The image generation model then executes this plan by progressively generating and editing the image. Each step focuses on a single semantic entity, enabling direct monitoring. We formally assess this property using two novel metrics: CoIG Readability, which evaluates the clarity of each intermediate step via its corresponding output; and Causal Relevance, which quantifies the impact of each procedural step on the final generated image. We further show that our framework mitigates entity collapse by decomposing the complex generation task into simple subproblems, analogous to the procedural reasoning employed by CoT. Our experimental results indicate that CoIG substantially enhances quantitative monitorability while achieving competitive compositional robustness compared to established baseline models. The framework is model-agnostic and can be integrated with any image generation model.

💡 Research Summary

This paper, “Chain-of-Image Generation: Toward Monitorable and Controllable Image Generation,” addresses a critical limitation in state-of-the-art text-to-image (T2I) models: the opacity of their internal generative processes. While these models produce images of remarkable visual quality, their simultaneous or non-semantic refinement of pixels makes the generation process a “black box,” hindering human observation, intervention, and ultimately, trust. To solve this, the authors introduce the Chain-of-Image Generation (CoIG) framework, which reimagines image generation as a sequential, semantic process akin to how a human artist creates a painting—sketching major subjects first, then progressively adding details.

The CoIG framework operates in two principal stages. First, a Compositional Strategy Planner (CSP), powered by a Large Language Model (LLM), decomposes a single complex user prompt (e.g., “a brown backpack and a blue sheep”) into a logical sequence of simpler sub-prompts (e.g., “1. Generate placeholder for backpack and sheep,” “2. Fill the backpack brown,” “3. Fill the sheep blue”). This sequence serves as a human-readable and verifiable plan for the generation process before any image is created. Second, an Autoregressive Refinement Model (ARM) executes this plan. A T2I model generates an initial image from the first sub-prompt. Then, for each subsequent sub-prompt, it performs an editing operation, using the previous image as an explicit condition to refine it step-by-step (I_t = T2I_edit(I_{t-1}, P_t)). This creates a series of intermediate images, each representing a monitorable state in the construction of the final scene.

A core contribution of the paper is the formalization and quantitative evaluation of monitorability for image generation, inspired by similar concepts in LLMs. The authors propose two novel metrics:

- CoIG Readability: Measures how clearly each intermediate image reflects the intent of its corresponding sub-prompt. It is evaluated automatically using a Multimodal LLM (MLLM) to verify the presence and attributes of the targeted semantic entity in each step’s output.

- Causal Relevance: Assesses whether each step has a demonstrable and persistent causal effect on the final image. This is tested by perturbing a sub-prompt at an intermediate step (e.g., changing “red bowl” to “blue bowl”) and checking if the change appears in that step’s image and persists through to the final output.

Furthermore, the paper identifies and analyzes a specific failure mode termed “Entity Collapse,” where models fail to correctly bind distinct attributes to multiple similar entities (e.g., merging two dogs with different colored collars into one). The authors argue that CoIG mitigates this issue by decomposing the complex binding task into a sequence of simpler sub-problems, analogous to how Chain-of-Thought helps LLMs solve complex reasoning.

Experimental results demonstrate that the CoIG framework significantly enhances quantitative monitorability, achieving superior scores in both Readability and Causal Relevance compared to baseline prompt-refinement methods like Promptist and RPG. Crucially, it maintains competitive performance on standard compositional robustness benchmarks (T2I-CompBench), showing that the gains in transparency do not come at the cost of generation quality. The framework is model-agnostic, designed to be integrated with various existing T2I models, and provides a foundational step towards more reliable, safe, and controllable generative AI systems by making their creative process observable and steerable.

Comments & Academic Discussion

Loading comments...

Leave a Comment