NumCoKE: Ordinal-Aware Numerical Reasoning over Knowledge Graphs with Mixture-of-Experts and Contrastive Learning

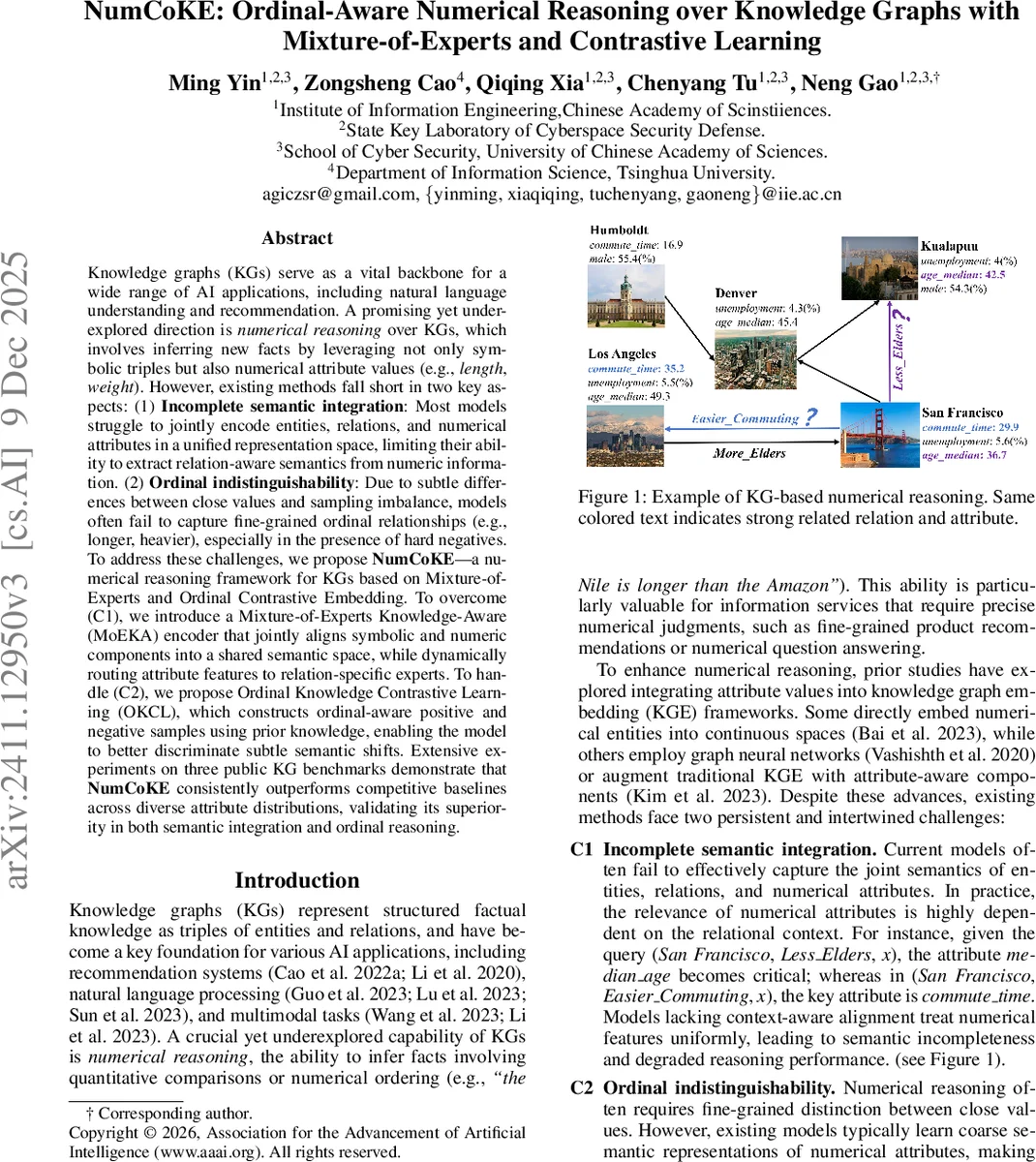

Knowledge graphs (KGs) serve as a vital backbone for a wide range of AI applications, including natural language understanding and recommendation. A promising yet underexplored direction is numerical reasoning over KGs, which involves inferring new facts by leveraging not only symbolic triples but also numerical attribute values (e.g., length, weight). However, existing methods fall short in two key aspects. (C1) Incomplete semantic integration: most models struggle to jointly encode entities, relations, and numerical attributes in a unified representation space, limiting their ability to extract relation-aware semantics from numeric information. (C2) Ordinal indistinguishability: due to subtle differences between close values and sampling imbalance, models often fail to capture fine-grained ordinal relationships (e.g., longer, heavier), especially in the presence of hard negatives. To address these challenges, we propose NumCoKE, a numerical reasoning framework for KGs based on Mixture-of-Experts and Ordinal Contrastive Embedding. To overcome (C1), we introduce a Mixture-of-Experts Knowledge-Aware (MoEKA) encoder that jointly aligns symbolic and numeric components into a shared semantic space, while dynamically routing attribute features to relation-specific experts. To handle (C2), we propose Ordinal Knowledge Contrastive Learning (OKCL), which constructs ordinal-aware positive and negative samples using prior knowledge, enabling the model to better discriminate subtle semantic shifts. Extensive experiments on three public KG benchmarks demonstrate that NumCoKE consistently outperforms competitive baselines across diverse attribute distributions, validating its superiority in both semantic integration and ordinal reasoning.

💡 Research Summary

NumCoKE addresses two fundamental shortcomings of existing knowledge graph (KG) embedding methods when dealing with numerical attributes: (1) incomplete semantic integration of entities, relations, and numeric values, and (2) inability to distinguish fine‑grained ordinal relationships among close numeric values. To solve these problems, the authors propose a two‑component framework.

The first component, the Mixture‑of‑Experts Knowledge‑Aware (MoEKA) encoder, extracts a raw feature vector for each entity and passes it through K expert networks, each producing a perspective‑specific embedding. A relation‑conditioned gating mechanism then computes a soft weight for each expert by combining learned projections, Gaussian noise, and a relation‑specific temperature term. The weighted sum of the expert outputs yields a relation‑aware entity embedding, so numeric attribute information is routed dynamically to the most relevant experts and entities, relations, and numbers are aligned in a shared semantic space.
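The gating step described above can be sketched as follows. This is a minimal NumPy illustration, not the authors' implementation: the summary does not give the exact equations, so the single-layer `tanh` experts, the softplus-scaled noise, the sigmoid-based temperature, and every name (`moeka_encode`, `w_gate`, `w_noise`, `w_temp`) are assumptions made for this example.

```python
import numpy as np

rng = np.random.default_rng(0)

def softmax(x):
    # Numerically stable softmax over a 1-D array.
    e = np.exp(x - x.max())
    return e / e.sum()

def moeka_encode(entity_feat, relation_emb, experts, w_gate, w_noise, w_temp, train=True):
    """Relation-conditioned mixture of K experts (hypothetical sketch)."""
    # Each expert is assumed to be one linear layer followed by tanh.
    expert_out = np.stack([np.tanh(W @ entity_feat + b) for W, b in experts])  # (K, d_out)

    # Learned projection of the relation embedding to one logit per expert.
    logits = w_gate @ relation_emb  # (K,)
    if train:
        # Gaussian noise with a learned, softplus-positive scale (assumed form).
        noise_scale = np.log1p(np.exp(w_noise @ relation_emb))
        logits = logits + rng.standard_normal(logits.shape) * noise_scale

    # Relation-specific temperature, kept in (0.1, 1.1) by a shifted sigmoid (assumed form).
    temperature = 0.1 + 1.0 / (1.0 + np.exp(-(w_temp @ relation_emb)))
    gate = softmax(logits / temperature)  # soft weights over experts

    # The weighted sum of expert outputs is the relation-aware entity embedding.
    return gate @ expert_out, gate

# Toy dimensions, chosen only for illustration.
d_in, d_out, d_rel, K = 6, 4, 5, 3
experts = [(rng.normal(0, 0.3, (d_out, d_in)), np.zeros(d_out)) for _ in range(K)]
w_gate = rng.normal(0, 0.3, (K, d_rel))
w_noise = rng.normal(0, 0.3, (K, d_rel))
w_temp = rng.normal(0, 0.3, d_rel)

entity_feat = rng.normal(size=d_in)
relation_emb = rng.normal(size=d_rel)
emb, gate = moeka_encode(entity_feat, relation_emb, experts, w_gate, w_noise, w_temp)
```

A lower temperature sharpens the gate toward a single expert, while a higher one spreads weight more evenly across experts; setting `train=False` disables the noise for deterministic inference.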

The second component, Ordinal Knowledge Contrastive Learning (OKCL), goes beyond binary positive‑negative sampling. It first generates conventional positive (E⁺) and negative (E⁻) samples from KG triples, then selects the top‑k most cosine‑similar samples for each anchor to form ordinal‑aware positive and negative sets. These sets are blended with the anchor embedding using coefficients α and β sampled from a uniform distribution, producing mixed embeddings that preserve ordinal proximity. A triplet‑style loss enforces d(anchor, positive) < d(anchor, negative), encouraging the model to capture subtle ordering such as “184 cm is longer than 183 cm.”
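As a rough illustration of the OKCL steps above (top‑k cosine selection, blending with the anchor, and a triplet‑style loss), the sketch below uses NumPy. It rests on several assumptions not stated in the summary: α and β are drawn from U(0, 1), the blend is a convex combination of anchor and sample, distance is Euclidean, and the loss uses a hypothetical `margin`. All function names are invented for this example.

```python
import numpy as np

def top_k_similar(anchor, candidates, k):
    # Return the k candidates with the highest cosine similarity to the anchor.
    a = anchor / np.linalg.norm(anchor)
    c = candidates / np.linalg.norm(candidates, axis=1, keepdims=True)
    order = np.argsort(-(c @ a))
    return candidates[order[:k]]

def mix_with_anchor(anchor, samples, coeff):
    # Convex blend: coeff = 1 returns the anchor, coeff = 0 returns the sample.
    return coeff * anchor + (1.0 - coeff) * samples

def triplet_loss(anchor, positives, negatives, margin=0.5):
    # Hinge on d(anchor, positive) - d(anchor, negative) + margin.
    d_pos = np.linalg.norm(positives - anchor, axis=1)
    d_neg = np.linalg.norm(negatives - anchor, axis=1)
    return float(np.maximum(0.0, d_pos - d_neg + margin).mean())

rng = np.random.default_rng(0)
dim, k = 8, 3
anchor = rng.normal(size=dim)
pos_pool = anchor + 0.1 * rng.normal(size=(10, dim))  # stand-in for E+
neg_pool = rng.normal(size=(10, dim))                 # stand-in for E-

hard_pos = top_k_similar(anchor, pos_pool, k)
hard_neg = top_k_similar(anchor, neg_pool, k)  # negatives most similar to the anchor

alpha = rng.uniform(0.0, 1.0, size=(k, 1))  # one coefficient per positive (assumed range)
beta = rng.uniform(0.0, 1.0, size=(k, 1))   # one coefficient per negative (assumed range)
mixed_pos = mix_with_anchor(anchor, hard_pos, alpha)
mixed_neg = mix_with_anchor(anchor, hard_neg, beta)

loss = triplet_loss(anchor, mixed_pos, mixed_neg)
```

Selecting the negatives that are most similar to the anchor is what makes them "hard": the loss is zero only once every mixed positive sits closer to the anchor than its paired mixed negative by at least the margin.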

Numeric values themselves are embedded via a learnable linear transformation and a per‑attribute embedding vector; missing values receive a dedicated learnable missing‑value embedding instead of a fixed placeholder. A Knowledge Perceptual Attention module projects entity and relation embeddings onto an attribute subspace, combines them with learnable mixing coefficients, and applies multi‑head attention to compute attribute‑wise attention scores. The resulting attribute‑aware vectors are concatenated and fused with the relation‑conditioned entity embedding through a gated linear layer, producing the final attribute‑enriched entity representation.
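The numeric‑value embedding with a learnable missing‑value vector might look like the sketch below. It is an assumption‑laden illustration: the summary does not say how the linear transformation and the per‑attribute vector are combined (a sum is assumed here), whether the missing‑value embedding is shared across attributes (a single shared vector is assumed), or how missing values are encoded in the input (NaN is used here). The class name `NumericAttributeEmbedder` is hypothetical.

```python
import numpy as np

class NumericAttributeEmbedder:
    """Embed one scalar per attribute; NaN marks a missing value (hypothetical sketch)."""

    def __init__(self, num_attributes, dim, seed=0):
        rng = np.random.default_rng(seed)
        # Per-attribute linear transformation of the scalar value: v * weight + bias.
        self.weight = rng.normal(0, 0.1, (num_attributes, dim))
        self.bias = np.zeros((num_attributes, dim))
        # Per-attribute embedding vector identifying which attribute the value belongs to.
        self.attr_emb = rng.normal(0, 0.1, (num_attributes, dim))
        # Single learnable vector used in place of any missing value (assumed shared).
        self.missing_emb = rng.normal(0, 0.1, dim)

    def __call__(self, values):
        values = np.asarray(values, dtype=float)    # (num_attributes,)
        missing = np.isnan(values)
        filled = np.where(missing, 0.0, values)     # avoid propagating NaN
        out = filled[:, None] * self.weight + self.bias + self.attr_emb
        out[missing] = self.missing_emb             # overwrite rows of missing attributes
        return out                                  # (num_attributes, dim)

embedder = NumericAttributeEmbedder(num_attributes=3, dim=4)
# Example entity: height 1.84, weight missing, age 0.30 (values assumed pre-normalized).
attr_vectors = embedder([1.84, np.nan, 0.30])
```

In a trained model these arrays would be parameters updated by gradient descent; here they are only randomly initialized to show the data flow.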

Experiments are conducted on three public KG benchmarks (FB15k‑237, YAGO‑3, and a custom dataset covering discrete, continuous, and skewed numeric distributions) and compare against strong baselines such as TransE, RotatE, ConvE, KBLRN, LiteralE, NRN, and RAKGE. NumCoKE consistently outperforms all baselines across standard metrics (Mean Rank, Hits@1/3/10, MRR), achieving improvements of 5–12 percentage points in MRR and 7–15 percentage points in Hits@10. The gains are especially pronounced on test sets with hard negatives, demonstrating the effectiveness of OKCL in handling close‑value confusion. Ablation studies show that using multiple experts (K = 4–8) and a relation‑specific temperature around 0.3–0.5 yields the most stable performance.
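For readers unfamiliar with the ranking metrics named above, the following sketch shows their standard definitions, computed from the rank assigned to the correct entity in each test query. The `ranks` list is made‑up toy data for illustration, not a result from the paper.

```python
def mean_rank(ranks):
    # Average position of the correct entity; lower is better.
    return sum(ranks) / len(ranks)

def mean_reciprocal_rank(ranks):
    # Average of 1/rank; higher is better, maximum 1.0.
    return sum(1.0 / r for r in ranks) / len(ranks)

def hits_at_k(ranks, k):
    # Fraction of queries whose correct entity is ranked in the top k.
    return sum(1 for r in ranks if r <= k) / len(ranks)

# Toy ranks (1 = correct entity ranked first); illustrative only.
ranks = [1, 2, 4, 10, 50]

mr = mean_rank(ranks)
mrr = mean_reciprocal_rank(ranks)
hits1, hits3, hits10 = (hits_at_k(ranks, k) for k in (1, 3, 10))
```

Because MRR and Hits@k are fractions between 0 and 1, a gain "in percentage points" refers to the absolute difference between two such scores after scaling by 100.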

The paper also discusses limitations: the expert gating may lose discriminative power when the KG contains very few relation types, and the Top‑k cosine selection in OKCL can be sensitive to highly imbalanced or noisy attribute distributions. Extending the framework to multimodal literals (text, images) is left for future work.

In summary, NumCoKE introduces a novel mixture‑of‑experts encoder that dynamically aligns numeric attributes with relational context, and an ordinal‑aware contrastive learning scheme that captures fine‑grained ordering. The combined approach delivers state‑of‑the‑art results on diverse KG benchmarks, offering a practical foundation for applications such as fine‑grained recommendation, medical diagnosis, and geographic information systems where numerical reasoning is essential.
