📝 Original Info

- Title: GTAvatar: Bridging Gaussian Splatting and Texture Mapping for Relightable and Editable Gaussian Avatars

- ArXiv ID: 2512.09162

- Date: 2025-12-09

- Authors: Kelian Baert, Mae Younes, Francois Bourel, Marc Christie, Adnane Boukhayma

📝 Abstract

GTAvatar broadens applications of monocular Gaussian Splatting head avatars beyond reenactment and relighting, enabling interactive editing of textures for precise control of intrinsic appearance, while preserving training efficiency, rendering speed and visual fidelity.


📄 Full Content

GTAvatar: Bridging Gaussian Splatting and Texture Mapping for Relightable and Editable Gaussian Avatars

Kelian Baert

Mae Younes

Francois Bourel

Marc Christie

Adnane Boukhayma

Univ Rennes, Inria, CNRS, IRISA, France

Figure 1 panel labels: Reconstruct, Edit & Relight, PBR Material Support, Animate, Input video.

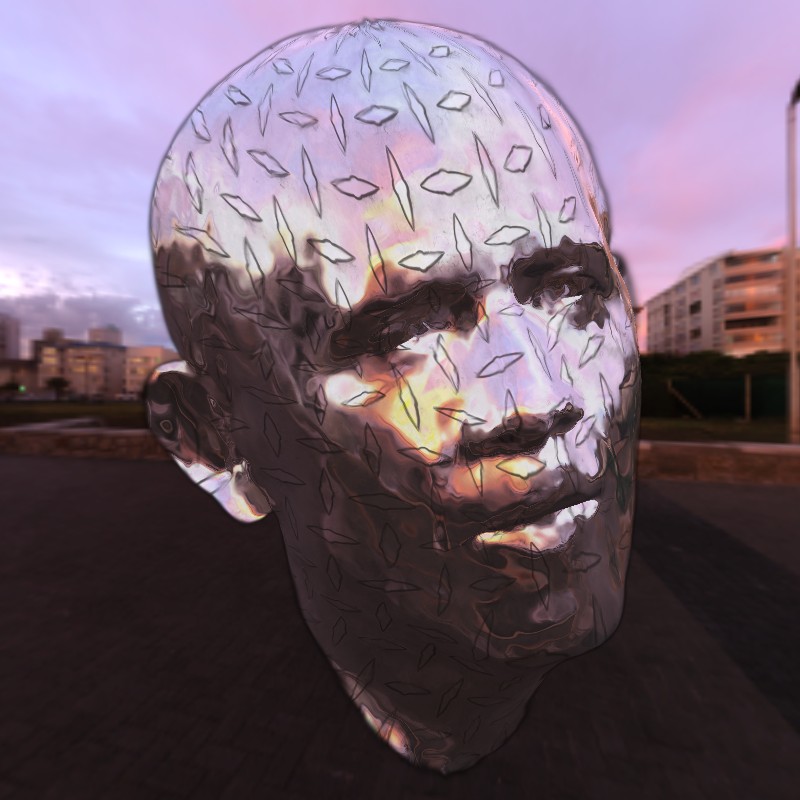

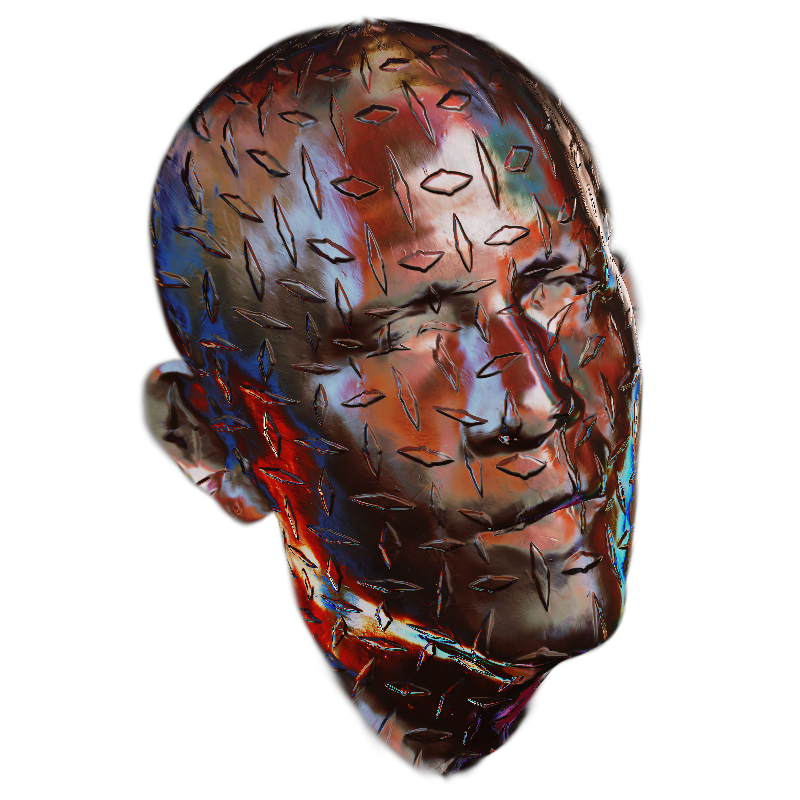

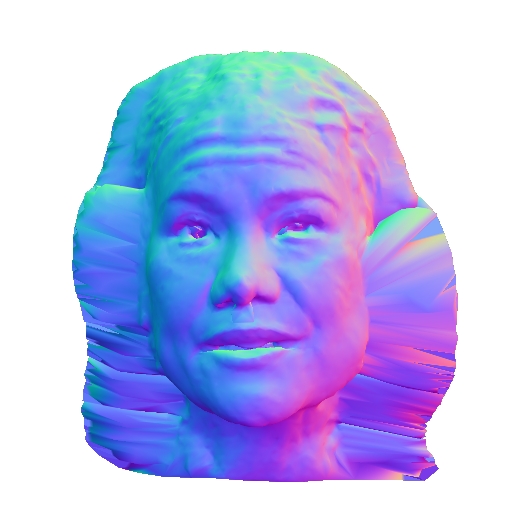

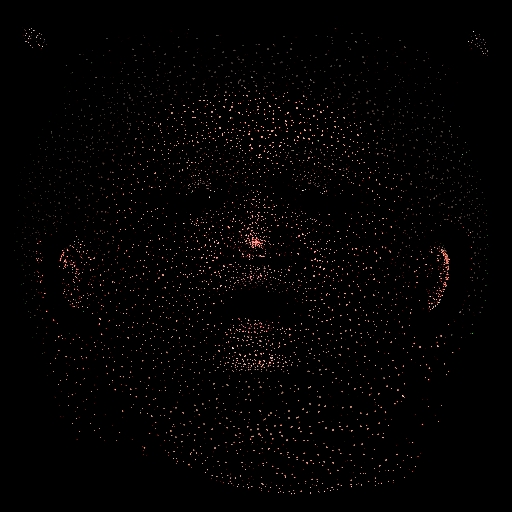

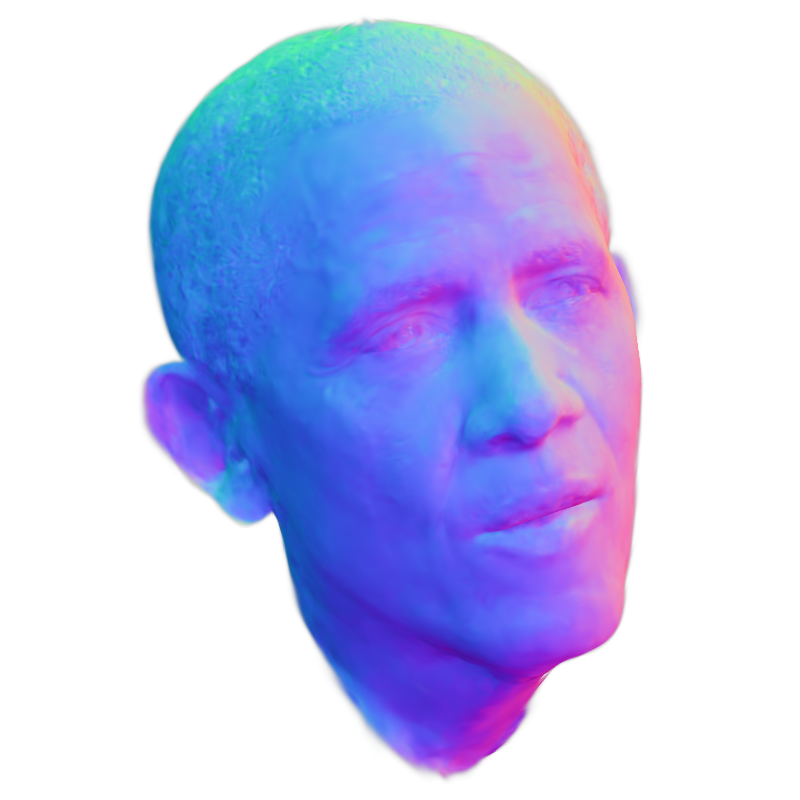

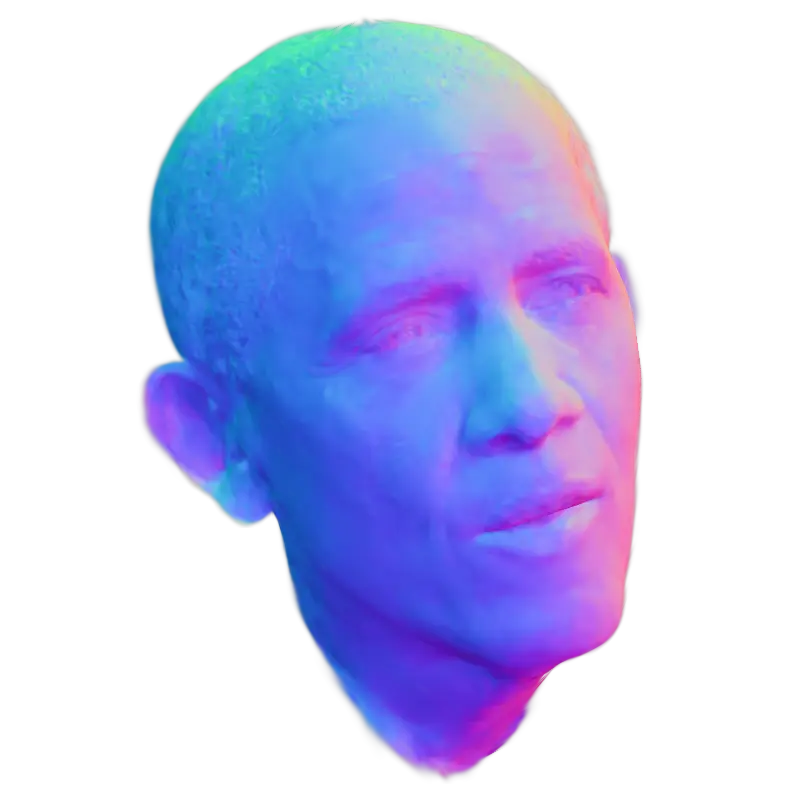

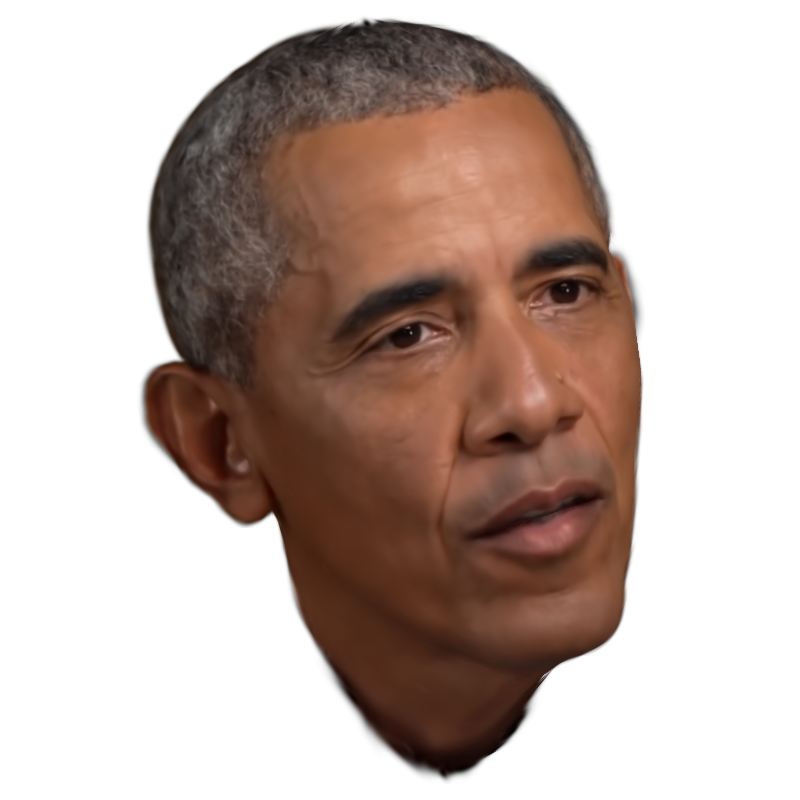

Figure 1: GTAvatar broadens applications of monocular Gaussian Splatting head avatars beyond reenactment and relighting, enabling interactive editing of textures for precise control of intrinsic appearance, while preserving training efficiency, rendering speed and visual fidelity.

Abstract

Recent advancements in Gaussian Splatting have enabled increasingly accurate reconstruction of photorealistic head avatars, opening the door to numerous applications in visual effects, videoconferencing, and virtual reality. This, however, comes with the lack of intuitive editability offered by traditional triangle mesh–based methods. In contrast, we propose a method that combines the accuracy and fidelity of 2D Gaussian Splatting with the intuitiveness of UV texture mapping. By embedding each canonical Gaussian primitive’s local frame into a patch in the UV space of a template mesh in a computationally efficient manner, we reconstruct continuous editable material head textures from a single monocular video on a conventional UV domain. Furthermore, we leverage an efficient physically based reflectance model to enable relighting and editing of these intrinsic material maps. Through extensive comparisons with state-of-the-art methods, we demonstrate the accuracy of our reconstructions, the quality of our relighting results, and the ability to provide intuitive controls for modifying an avatar’s appearance and geometry via texture mapping without additional optimization.

1. Introduction

Gaussian Splatting-based avatars have revolutionized the capture and rendering of digital humans by delivering an unprecedented level of photorealism with real-time rendering capability, enabling new possibilities for reanimating content from video inputs. However, this realism comes with a trade-off: unlike traditional texture-map-based modeling, Gaussian avatars offer little flexibility for intuitive appearance editing. This gap becomes critical in practice. In film and visual effects, artists routinely adjust the smallest details of a face: smoothing skin to create a flawless appearance, removing distracting high-frequency features, or sculpting age and character through wrinkles, scars and bruises. In gaming and virtual production, creators seek the same level of control to personalize avatars with tattoos, makeup, or stylized patterns using artist-friendly tools that operate directly on material texture maps. Without such editing capabilities, even the most realistic avatar remains a fixed reproduction, rather than a medium for creative expression.

arXiv:2512.09162v1 [cs.CV] 9 Dec 2025

This limitation stems from the fact that each Gaussian splat carries its own color or material properties in isolation, without the shared structure that texture maps naturally provide. As a result, the surface lacks the local coherence needed to treat it as a continuous canvas, making it difficult, if not impossible, to apply edits consistently, whether to a small region of the face or to its overall appearance. Conversely, while mesh-based 3D inverse rendering methods [MHS∗22, BZH∗23, BBC∗24] inherit a coherent, de facto UV domain suited to artist-friendly edits, they can suffer from topological rigidity when representing high-frequency details, and their reconstruction capacity is hampered by the limitations of differentiable rasterization when handling complex or translucent geometries (cf. Table 1). Furthermore, naively embedding Gaussians in the UV domain leads to discontinuous texture maps that are hard to edit successfully, as witnessed in our results (e.g. Figure 10) and also in [ZWL∗25]. The authors of FATE [ZWL∗25] proposed a second U-Net neural baking stage to alleviate this issue in the context of non-relightable avatars.
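To make the contrast between isolated per-splat attributes and a shared texture concrete, here is a minimal sketch in plain Python. It is only an illustration of the general idea, not the paper's implementation: the function `bilinear_sample`, the 4x4 single-channel texture, and the splat UV positions are all hypothetical. It shows that painting one texel of a shared map changes every splat that samples near it, whereas per-splat values stay untouched unless each splat is edited individually.

```python
# Illustrative sketch only: all names and values are hypothetical and are
# not taken from the GTAvatar implementation.

def bilinear_sample(texture, u, v):
    """Sample a 2D grid of floats at continuous UV coordinates in [0, 1]."""
    h, w = len(texture), len(texture[0])
    # Clamp UV and map it to continuous texel coordinates.
    x = min(max(u, 0.0), 1.0) * (w - 1)
    y = min(max(v, 0.0), 1.0) * (h - 1)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0
    # Interpolate along u on the two neighbouring rows, then along v.
    top = texture[y0][x0] * (1 - fx) + texture[y0][x1] * fx
    bottom = texture[y1][x0] * (1 - fx) + texture[y1][x1] * fx
    return top * (1 - fy) + bottom * fy

# Three neighbouring splats, identified by an assumed UV location each.
splat_uvs = [(0.30, 0.30), (0.35, 0.30), (0.40, 0.35)]

# Representation A: every splat stores its own albedo in isolation.
per_splat_albedo = [0.5, 0.5, 0.5]

# Representation B: splats read albedo from one shared 4x4 texture.
texture = [[0.5] * 4 for _ in range(4)]

# A single "brush stroke": darken one texel of the shared texture.
texture[1][1] = 0.0

# All splats near that texel pick up the edit coherently.
shared_albedo = [bilinear_sample(texture, u, v) for u, v in splat_uvs]
print(per_splat_albedo)  # unchanged by the texture edit
print(shared_albedo)     # every value is now darker than 0.5
```

In representation A, the same edit would require locating and modifying each affected splat by hand, which is the lack of local coherence described above.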

Our goal is to reconstruct a head avatar from a single monocular RGB video that can be rendered in real time, relit under arbitrary environment maps, animated with new poses and expressions, and directly edited through texture mapping, yet without the added postprocessing complexity of training a two-stage model or baking lighting information directly into the texture.

Following seminal work [QKS∗24, LKB∗25a], we adopt FLAME-anchored [LBB∗17] Gaussian splats. Our key observation is that, unlike vanilla 3DGS [KKLD23a], which operates directly in screen space, the 2DGS variant [HYC∗24] defines a local splat coordinate frame and computes ray–splat intersections to query kernels. We leverage this property to map ray–splat intersections into the UV domain via an approximate orthographic proj

…(Full text truncated)…
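The ray–splat intersection mentioned above can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not the authors' code: it intersects a ray with the plane of a planar splat, expresses the hit point in the splat's local frame, and evaluates a standard Gaussian kernel there. The final step, `local_to_uv`, is a purely hypothetical affine patch map with a made-up 2x2 matrix; the source text is truncated before it describes the actual mapping into the UV domain, so that function is only a placeholder for it.

```python
import math

# Illustrative sketch only: function names, parameters and numbers are
# hypothetical and are not taken from the 2DGS or GTAvatar code bases.

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def ray_splat_local_coords(origin, direction, center, t_u, t_v, s_u, s_v):
    """Intersect a ray with a planar splat and return local (u, v), or None.

    The splat is the plane through `center` spanned by the orthonormal
    tangents `t_u` and `t_v`, with per-axis scales `s_u` and `s_v`.
    """
    normal = cross(t_u, t_v)
    denom = dot(direction, normal)
    if abs(denom) < 1e-8:  # ray is parallel to the splat plane
        return None
    t = dot(sub(center, origin), normal) / denom
    if t <= 0.0:           # intersection lies behind the ray origin
        return None
    hit = tuple(o + t * d for o, d in zip(origin, direction))
    offset = sub(hit, center)
    # Local coordinates, measured in units of the splat's scales.
    return dot(offset, t_u) / s_u, dot(offset, t_v) / s_v

def gaussian_kernel(u, v):
    """Unit-variance 2D Gaussian evaluated in the splat's local frame."""
    return math.exp(-0.5 * (u * u + v * v))

def local_to_uv(u, v, uv_center, jac):
    """ASSUMPTION: a placeholder affine map from local coords to a UV patch."""
    return (uv_center[0] + jac[0][0] * u + jac[0][1] * v,
            uv_center[1] + jac[1][0] * u + jac[1][1] * v)

# One splat facing the camera, two units down the z axis.
local = ray_splat_local_coords(
    origin=(0.0, 0.0, 0.0), direction=(0.05, 0.0, 1.0),
    center=(0.0, 0.0, 2.0), t_u=(1.0, 0.0, 0.0), t_v=(0.0, 1.0, 0.0),
    s_u=0.1, s_v=0.2)
local_u, local_v = local
weight = gaussian_kernel(local_u, local_v)
uv = local_to_uv(local_u, local_v, uv_center=(0.5, 0.5),
                 jac=((0.01, 0.0), (0.0, 0.02)))
print(local, weight, uv)
```

Because the kernel is queried at an explicit point in the splat's own frame, that same point can also be used to look up a texture, which is what distinguishes this formulation from a purely screen-space one.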
