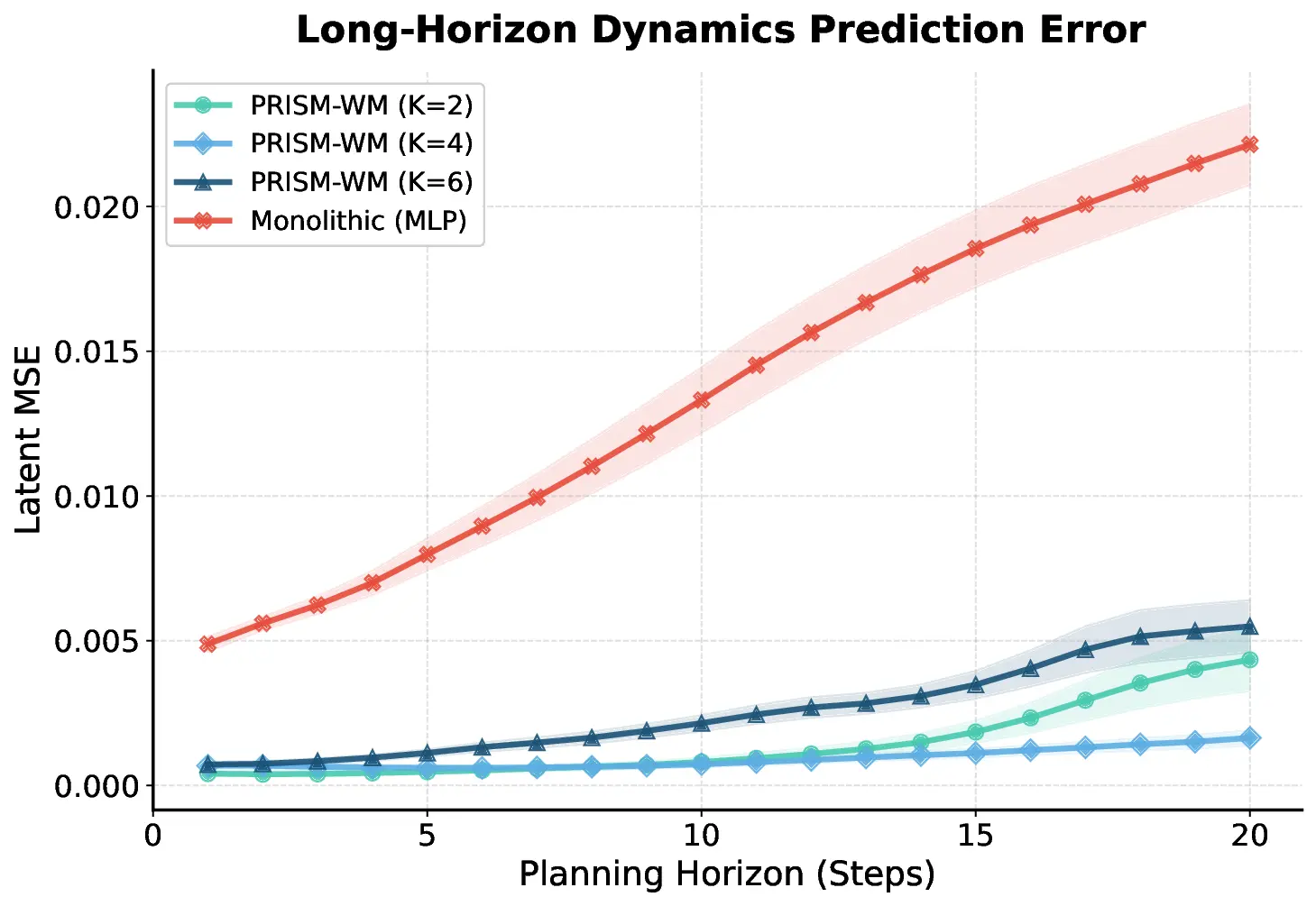

Model-based planning in robotic domains is fundamentally challenged by the hybrid nature of physical dynamics, where continuous motion is punctuated by discrete events such as contacts and impacts. Conventional latent world models typically employ monolithic neural networks that enforce global continuity, inevitably over-smoothing the distinct dynamic modes (e.g., sticking vs. sliding, flight vs. stance). For a planner, this smoothing results in catastrophic compounding errors during long-horizon lookaheads, rendering the search process unreliable at physical boundaries. To address this, we introduce the Prismatic World Model (PRISM-WM), a structured architecture designed to decompose complex hybrid dynamics into composable primitives. PRISM-WM leverages a context-aware Mixture-of-Experts (MoE) framework where a gating mechanism implicitly identifies the current physical mode, and specialized experts predict the associated transition dynamics. We further introduce a latent orthogonalization objective to ensure expert diversity, effectively preventing mode collapse. By accurately modeling the sharp mode transitions in system dynamics, PRISM-WM significantly reduces rollout drift. Extensive experiments on challenging continuous control benchmarks, including high-dimensional humanoids and diverse multi-task settings, demonstrate that PRISM-WM provides a superior high-fidelity substrate for trajectory optimization algorithms (e.g., TD-MPC), proving its potential as a powerful foundational model for next-generation model-based agents.

Deep Dive into Prism World Model: Mode-Separating Mixture of Experts for Hybrid Robot Dynamics.

The efficacy of Model-Based Reinforcement Learning (MBRL) and planning is strictly bounded by the predictive fidelity of the underlying world model (Janner et al. 2019). While latent variable models have enabled planning directly from high-dimensional observations by abstracting away pixel details (Ha and Schmidhuber 2018; Hafner et al. 2025), they face a critical hurdle in robotic domains: the hybrid nature of real-world physics. Legged locomotion and manipulation involve systems that continuously switch between discrete contact modes, resulting in non-smooth and highly non-linear dynamics.

Prevailing approaches typically approximate these complex dynamics using a single, monolithic neural network * These authors contributed equally. † Corresponding author.

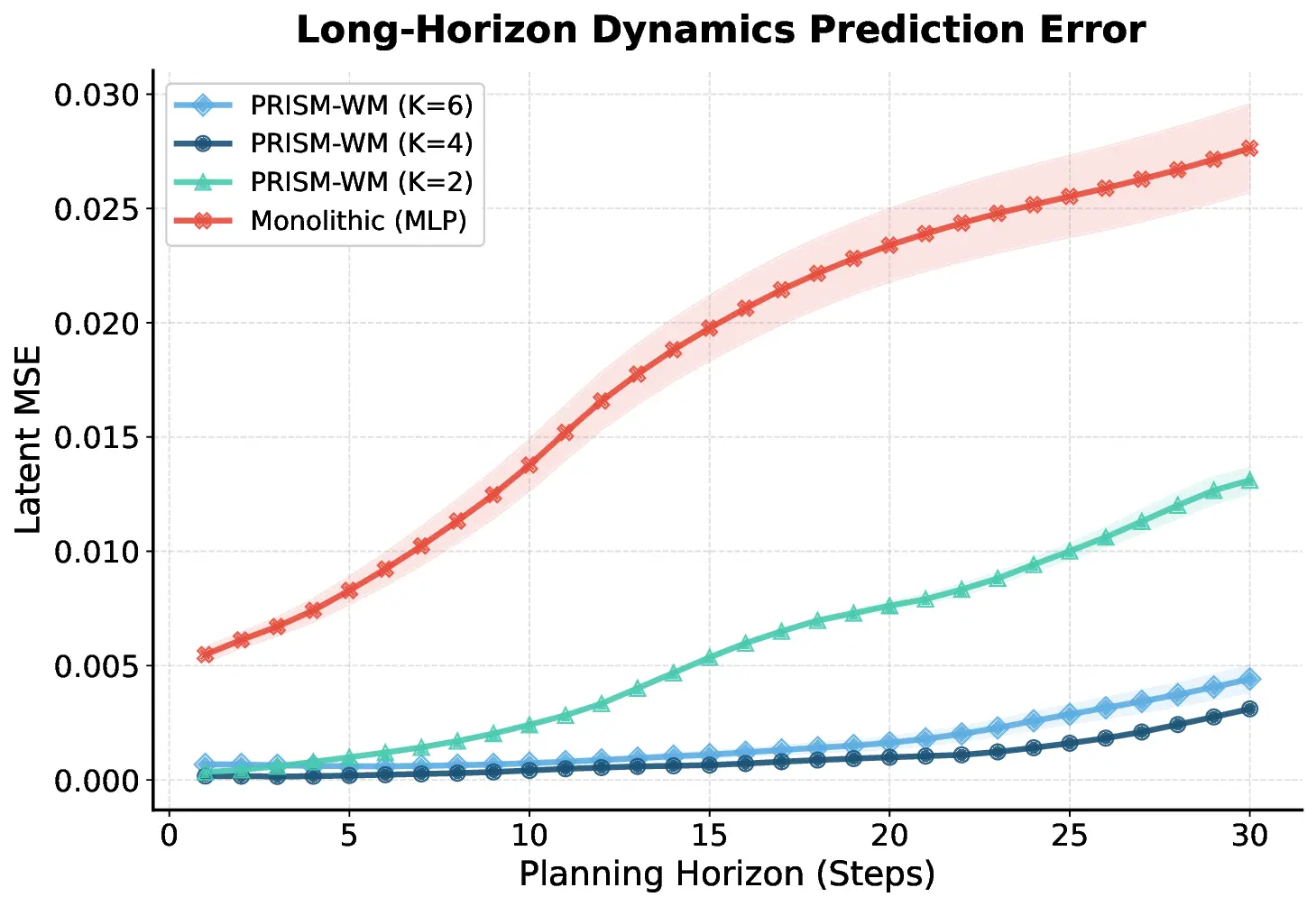

(e.g., an MLP or RNN) (Hansen, Wang, and Su 2022;Georgiev et al. 2024). While theoretically capable of universal approximation, in practice, these models tend to learn an “average” representation that smooths over sharp discontinuities inherent in contact events. For a planner effectively simulating a trajectory (as seen in methods like TD-MPC), this smoothing is fatal. A minor prediction error at a contact boundary leads to rapid divergence of the imagined state from reality. This compounding error forces planners to rely on dangerously short horizons, severely limiting the agent’s ability to reason about long-term consequences.
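The compounding effect can be illustrated with a toy example that is not taken from the paper. The sketch below assumes a hypothetical scalar hybrid system (a smooth drift below a boundary at `x = 1.0` and a discrete reset at it) and a stand-in "monolithic" model that blends the two modes with a sigmoid. The functions `true_step` and `smoothed_step`, their constants, and the blend width are all illustrative assumptions.

```python
import math


def true_step(x):
    """Hybrid toy dynamics (assumed): drift below the boundary, reset at it."""
    return 0.9 * x + 0.2 if x < 1.0 else x - 1.0


def smoothed_step(x, width=0.1):
    """Stand-in for a smooth model: sigmoid blend of the two modes."""
    w = 1.0 / (1.0 + math.exp(-(x - 1.0) / width))  # ~0 far below boundary
    return (1.0 - w) * (0.9 * x + 0.2) + w * (x - 1.0)


def rollout(step, x0, horizon):
    """Open-loop rollout: feed each prediction back in as the next input."""
    xs = [x0]
    for _ in range(horizon):
        xs.append(step(xs[-1]))
    return xs[1:]


horizon = 12
true_traj = rollout(true_step, 0.0, horizon)
model_traj = rollout(smoothed_step, 0.0, horizon)
errors = [abs(a - b) for a, b in zip(true_traj, model_traj)]

for t, err in enumerate(errors, start=1):
    print(f"step {t:2d}  |error| = {err:.4f}")
```

In this toy, the one-step error is negligible far from the boundary, but the blended model drifts away from the true trajectory as the state approaches the mode switch, which is the qualitative behavior the paragraph above describes.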

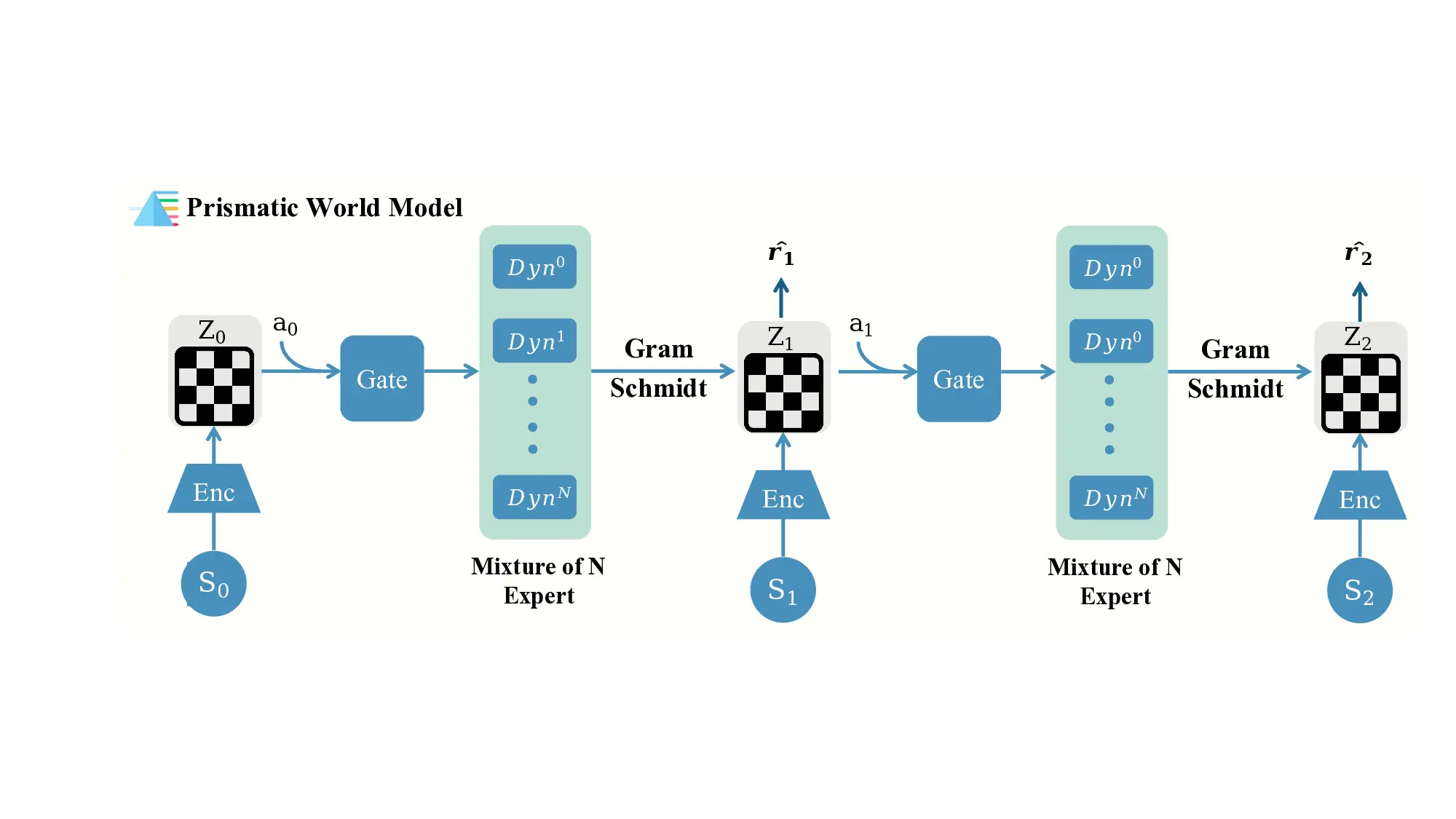

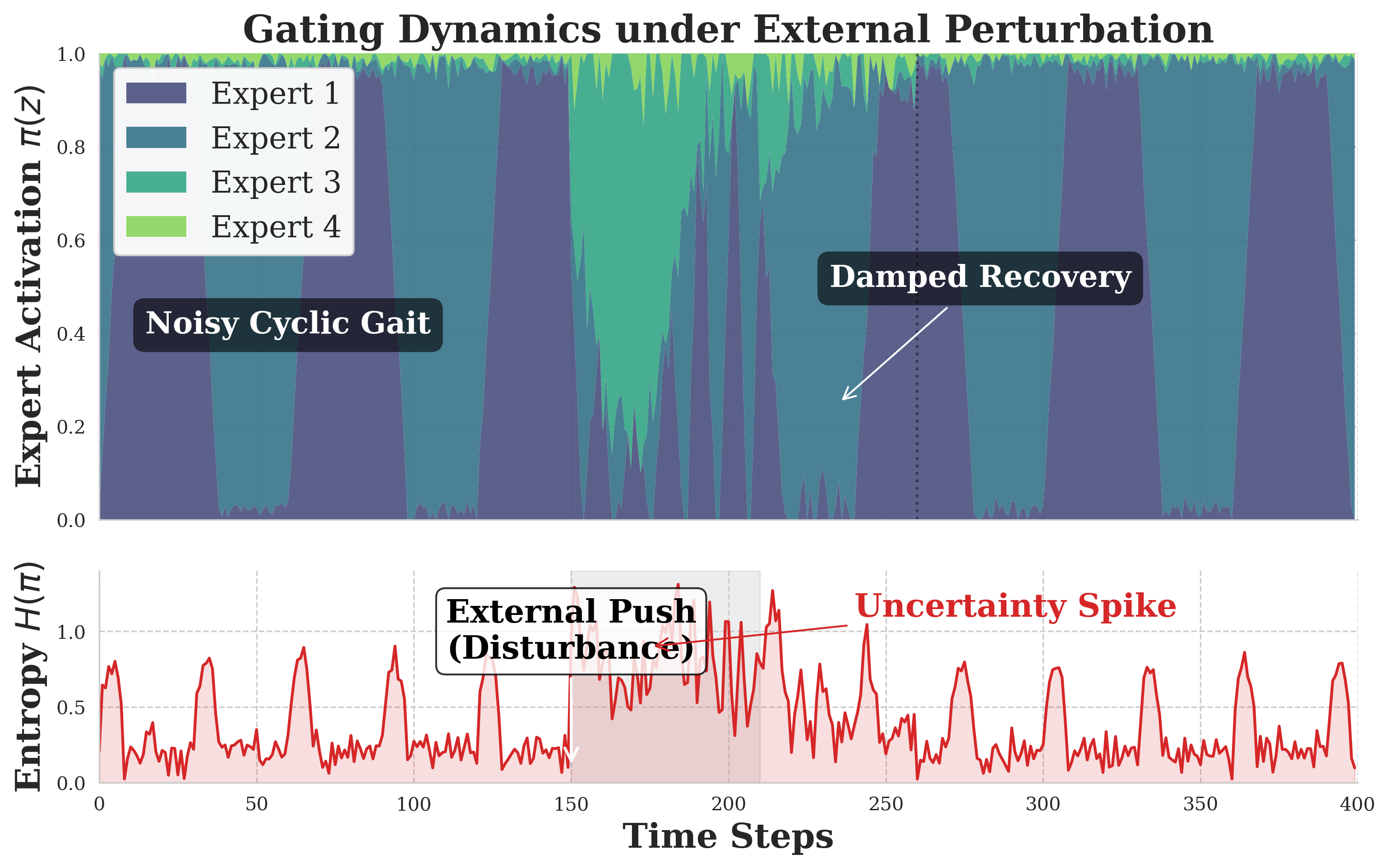

We argue that overcoming these limitations requires a world model with structural priors drawn from hybrid systems. We therefore propose to approximate the underlying physics by learning, from data, a decomposition into specialized latent regimes. Instead of forcing a single function to fit all regimes, PRISM-WM represents the global dynamics as a composition of simpler, learnable “base dynamics.” In this view, the gating network acts as a context-aware switching mechanism that identifies the active physical regime, while the experts capture the continuous flow within each mode, bridging the gap between flexible neural learning and structured symbolic control.
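As a rough sketch of this view, a generic gated mixture of transition functions can be written as follows. The notation is an assumption introduced here for illustration ($z_t$ for the latent state, $a_t$ for the action, $c_t$ for the gating context, $f_k$ for the $k$-th expert, $g_k$ for its gate weight, $W_g$ for the gate parameters); the paper's exact formulation is not reproduced in this excerpt.

$$
\hat{z}_{t+1} = \sum_{k=1}^{K} g_k(c_t)\, f_k(z_t, a_t),
\qquad
g(c_t) = \operatorname{softmax}(W_g c_t),
\qquad
\sum_{k=1}^{K} g_k(c_t) = 1 .
$$

When the gate is nearly one-hot, this reduces to a switched system, $\hat{z}_{t+1} \approx f_{k^\ast}(z_t, a_t)$ with $k^\ast = \arg\max_k g_k(c_t)$, which is the sense in which the gate plays the role of a mode selector.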

In this work, we introduce the Prismatic World Model (PRISM-WM). Just as a prism separates light into its constituent colors, PRISM-WM decomposes the transition function using a context-aware Mixture-of-Experts (MoE) architecture:

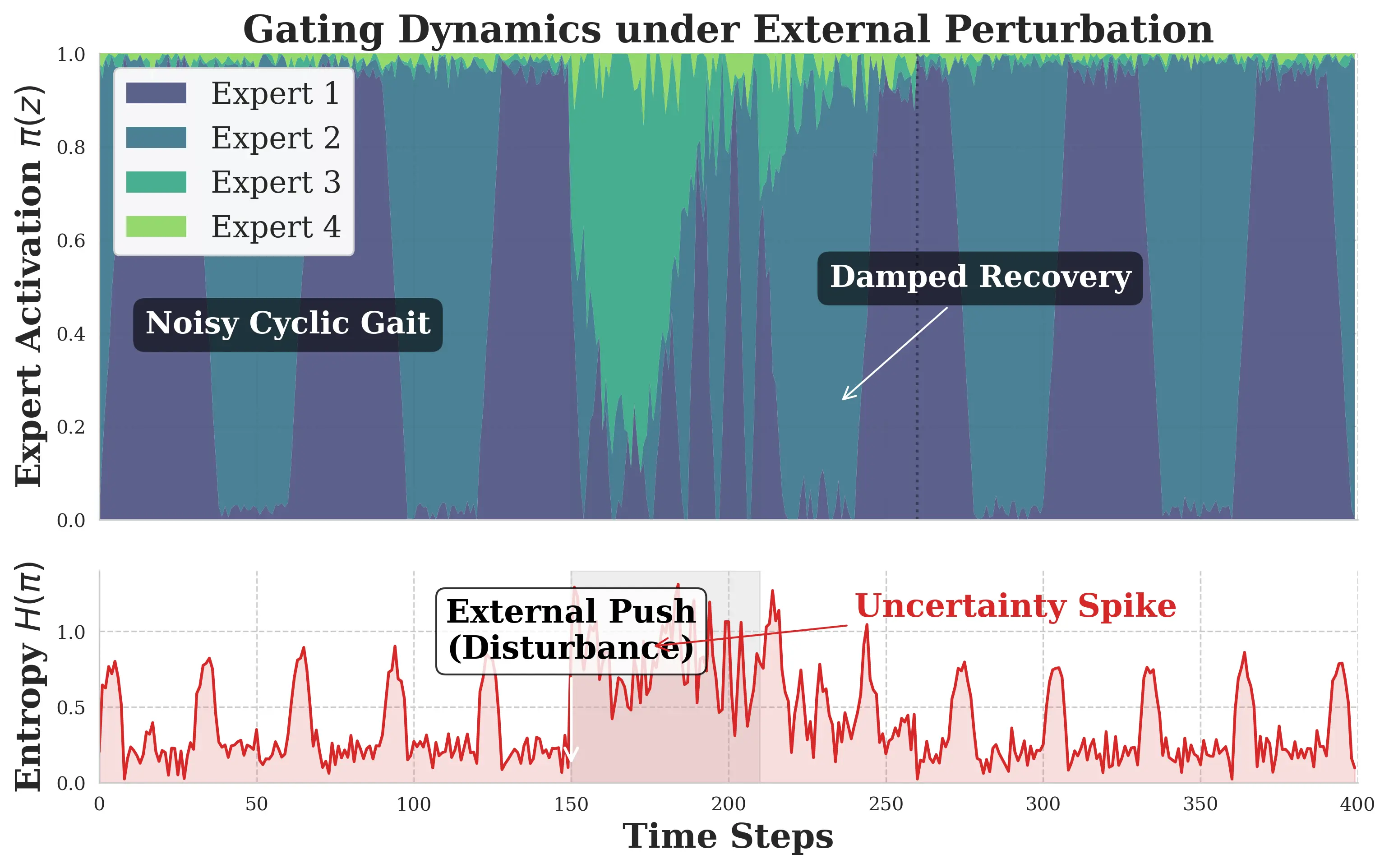

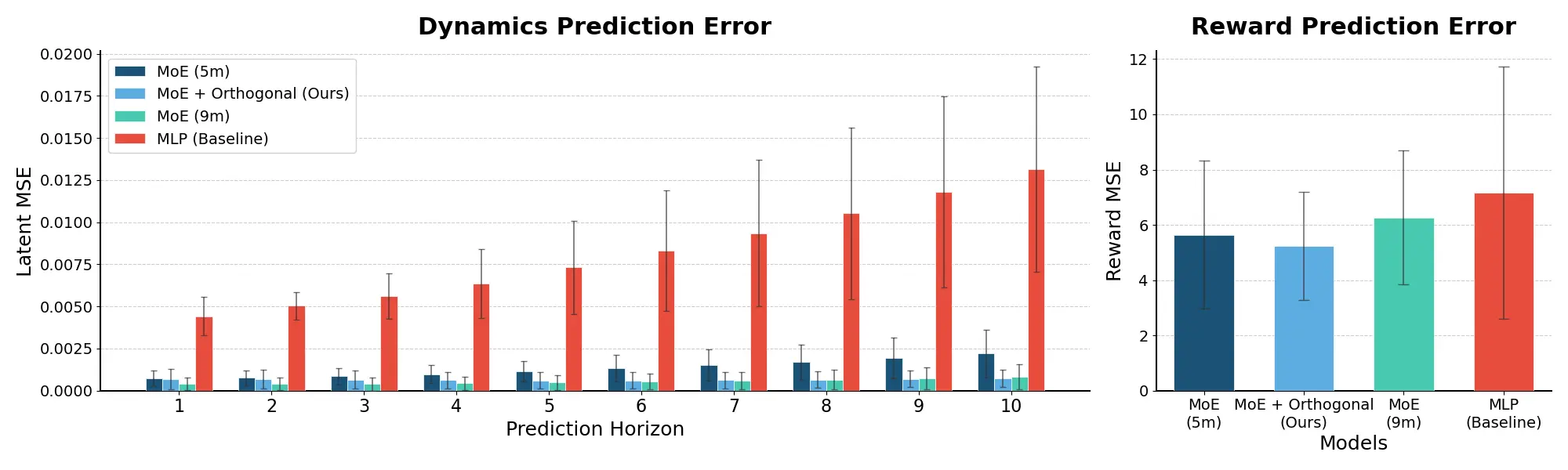

- Mode Identification: A gating network acts as a learnable switching function, analyzing the current context (state, action, or task identity) to implicitly determine the active physical regime (e.g., identifying a “contact” vs. “flight” phase).
- Specialized Dynamics: A set of expert networks compete to model specific local dynamics. By specializing, these experts avoid the interference and smoothing issues of monolithic models.
- Orthogonality for Distinct Primitives: To ensure the learned primitives are structurally distinct, we introduce an orthogonalization layer, encouraging the model to learn a basis of non-redundant dynamic forces.
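The three components above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the paper's implementation: the experts and gate are single random linear maps, the context is the concatenated latent state and action, and Gram-Schmidt is used as one common way to orthogonalize expert outputs (the excerpt does not specify the procedure PRISM-WM uses). All names (`moe_step`, `gate_W`, `expert_Ws`, and the dimensions) are hypothetical.

```python
import math
import random


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def gram_schmidt(vectors, eps=1e-8):
    """Orthonormalize vectors in order; (near-)dependent ones become zero."""
    basis = []
    for v in vectors:
        u = list(v)
        for b in basis:
            proj = dot(u, b)
            u = [ui - proj * bi for ui, bi in zip(u, b)]
        norm = math.sqrt(dot(u, u))
        basis.append([ui / norm for ui in u] if norm > eps else [0.0] * len(u))
    return basis


def moe_step(z, a, gate_W, expert_Ws):
    """One latent transition: gate -> experts -> orthogonalize -> mix."""
    x = z + a                                      # assumed context: [state, action]
    weights = softmax(matvec(gate_W, x))           # mode identification
    outputs = [matvec(W, x) for W in expert_Ws]    # specialized dynamics
    basis = gram_schmidt(outputs)                  # distinct primitives
    delta = [sum(w * b[i] for w, b in zip(weights, basis)) for i in range(len(z))]
    return [zi + di for zi, di in zip(z, delta)], weights


def rand_matrix(rows, cols):
    return [[random.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


random.seed(0)
latent_dim, action_dim, num_experts = 4, 2, 3      # illustrative sizes
in_dim = latent_dim + action_dim
gate_W = rand_matrix(num_experts, in_dim)
expert_Ws = [rand_matrix(latent_dim, in_dim) for _ in range(num_experts)]

z_next, weights = moe_step([0.1, -0.2, 0.3, 0.0], [1.0, -1.0], gate_W, expert_Ws)
print("gate weights:", [round(w, 3) for w in weights])
print("next latent :", [round(v, 3) for v in z_next])
```

Orthonormalizing the outputs discards their magnitudes, so a practical model would likely rescale or apply the constraint to intermediate features instead; the sketch only shows how gating, expert prediction, and orthogonalization compose.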

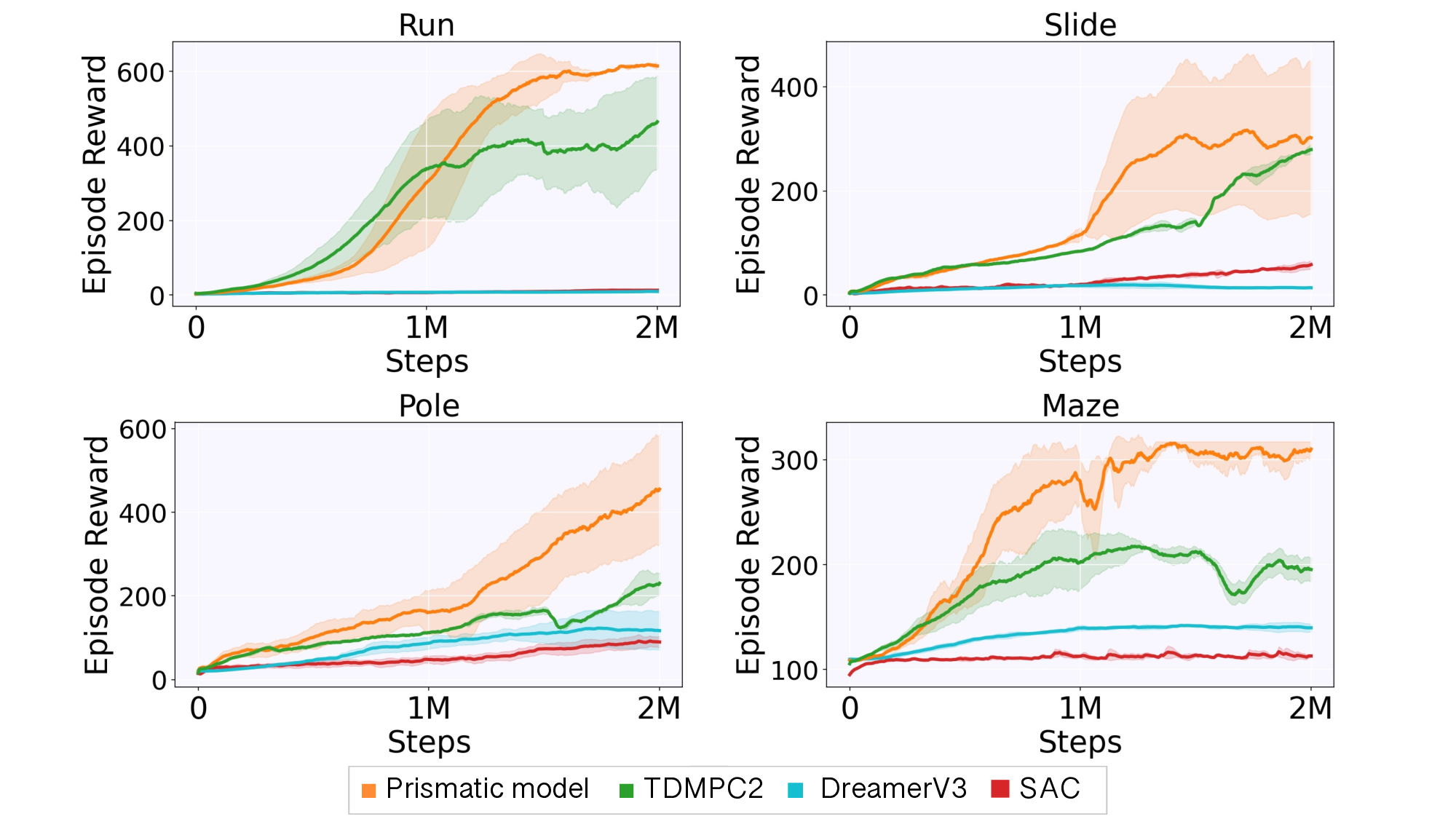

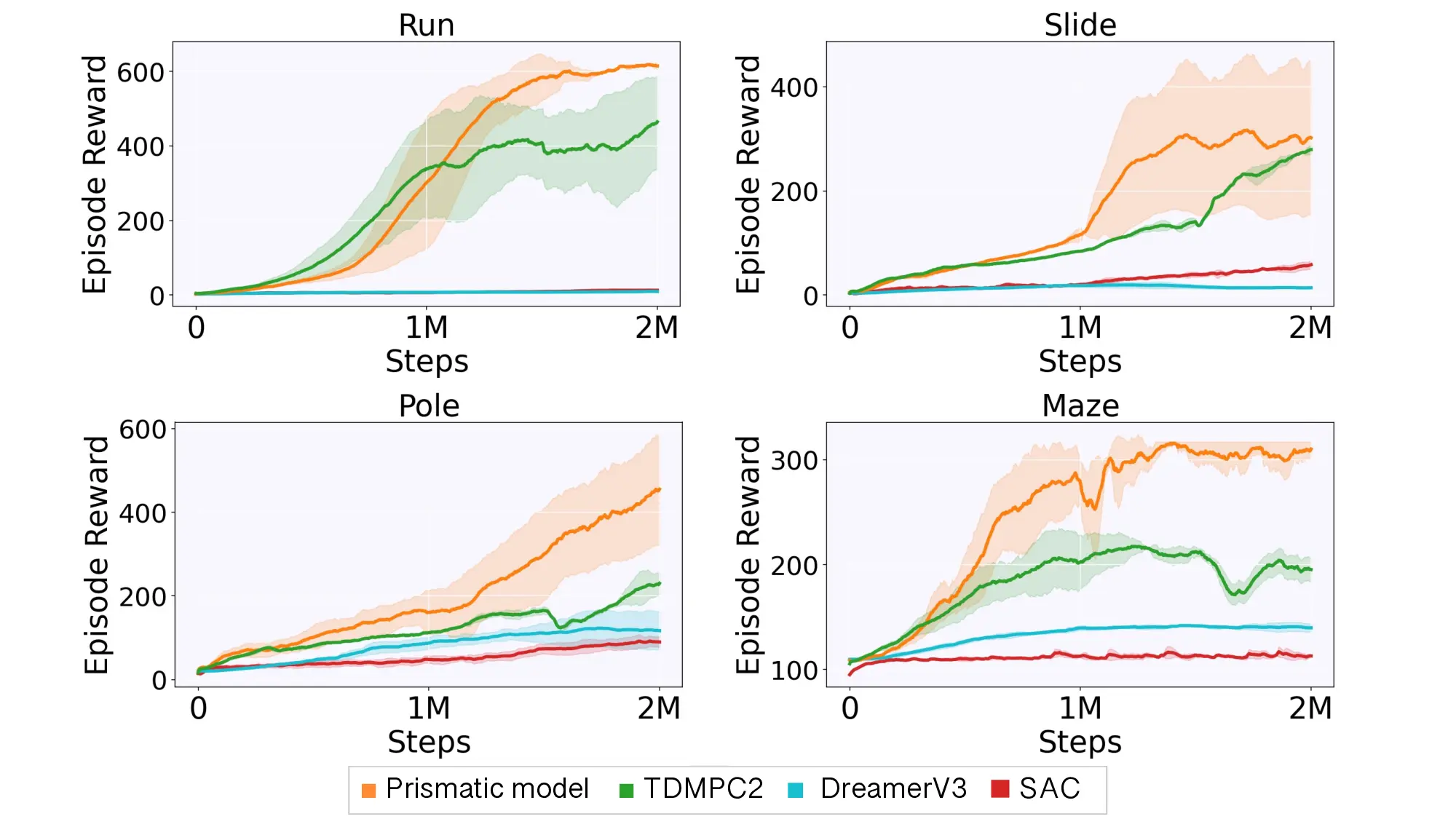

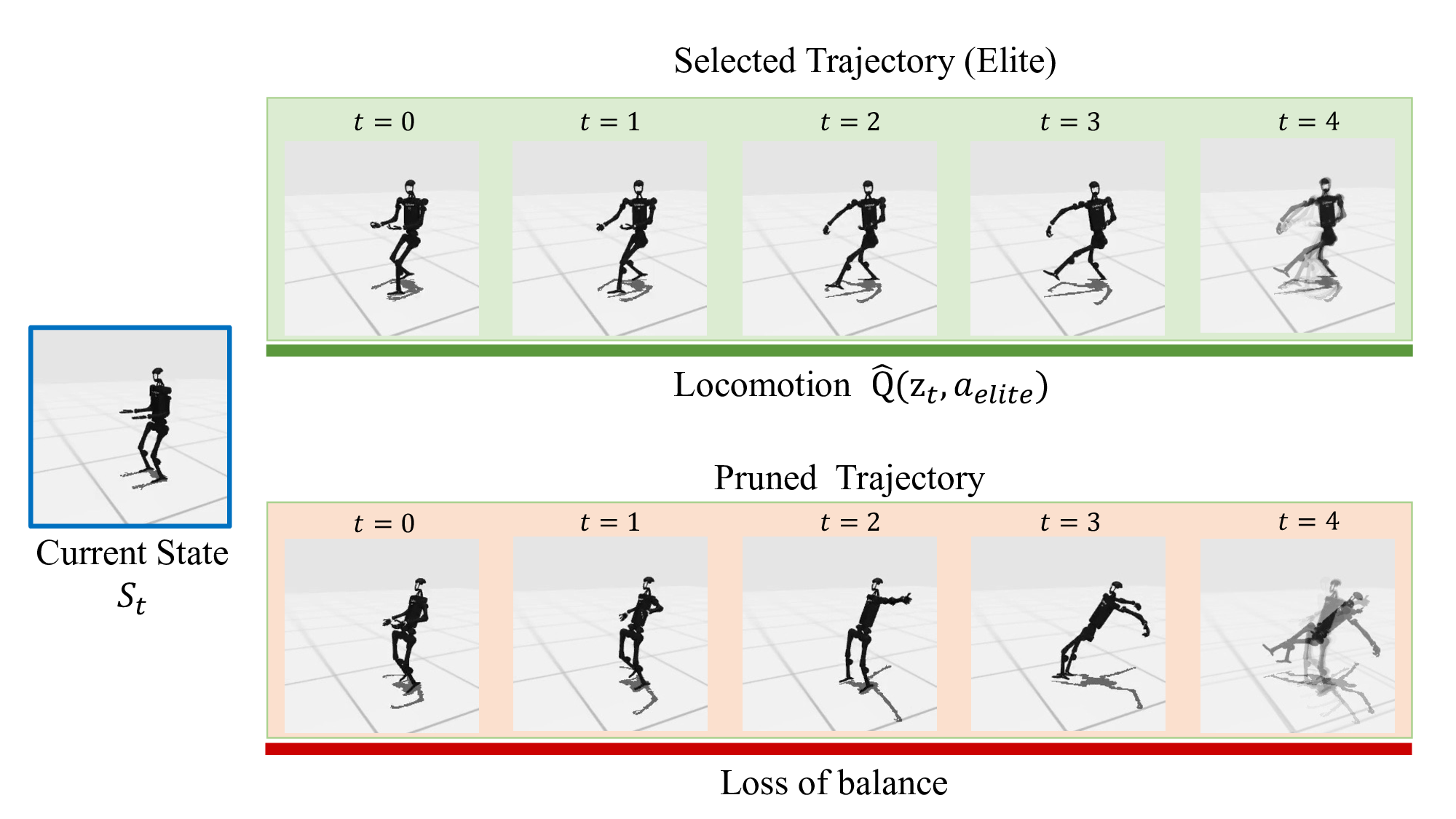

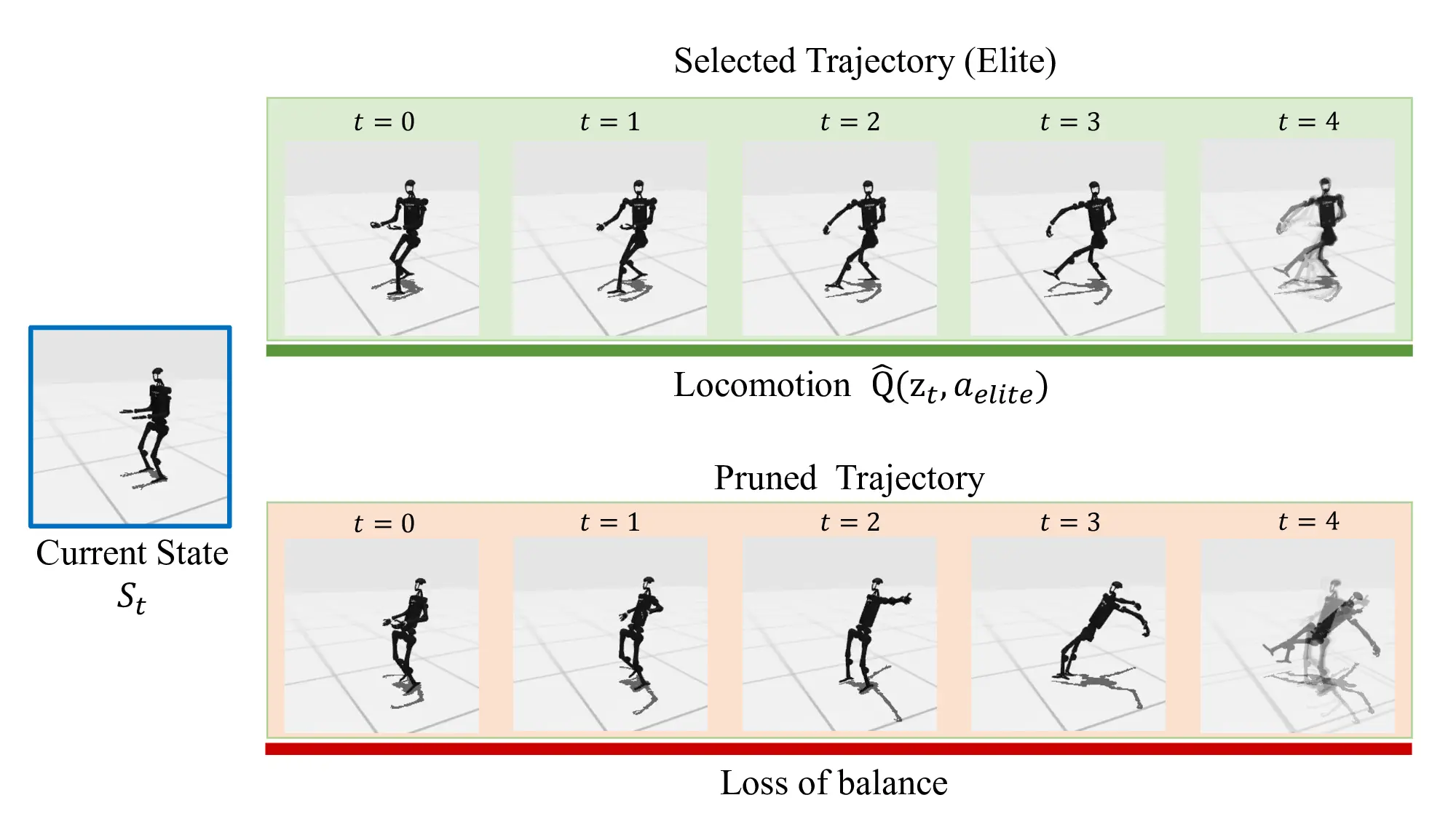

arXiv:2512.08411v1 [cs.AI] 9 Dec 2025

We integrate PRISM-WM into the TD-MPC planning framework (Hansen, Wang, and Su 2022). Our experiments across challenging domains, including high-dimensional Humanoid control and multi-task benchmarks, demonstrate that:

1. PRISM-WM significantly lowers prediction error over longer horizons compared to monolithic baselines.
2. The model spontaneously learns to cluster states into meaningful physical modes (e.g., locomotion vs. balance) without explicit supervision.
3. By providing a more accurate causal model, PRISM-WM enables the planner to solve complex tasks that monolithic baselines fail to master.

Our work is situated at the intersection of two significant research areas: Mixture-of-Experts architectures and Model-Based Reinforcement Learning. We review key developments in both fields to contextualize our contributions.

The MoE framework (Jacobs et al. 1991) routes each input to a few specialised experts, an idea that unlocked trillion-parameter LLMs. Subsequent studies on capacity balance and scaling laws (Du et al. 2022; Zoph et al. 2022; Clark, Fedus, et al. 2022; Riquelme et al. 2021; Zhou et al. 2022) have cemented MoE as the default path to ultra-large language models. In the context of reinforcement learning, MoE has been used to address various challenges: orthogonalised experts for multi-task transfer (Hendawy, Peters, and D’Eramo 2023), attention-routed multi-task policies (Cheng et al. 2023), probabilistic actor ensembles (Ren et al. 2021), and other variants for continual or hierarchical RL (Akrour, Tateo, and Peters 2021; D’Eramo et al. 2020; Wang, Li, and Ma 2022; Zhou et al. 2021). These works apply MoE to policies or value functions. Some approaches, such as NMOE (Karnachan et al. 2020), have explored nested mixtures for identifying hybrid dynamical systems. However, in contrast to NMOE’s hierarchical structure for system identification, our PRISM-WM employs a flat, context-aware architecture re

…(Full text truncated)…
