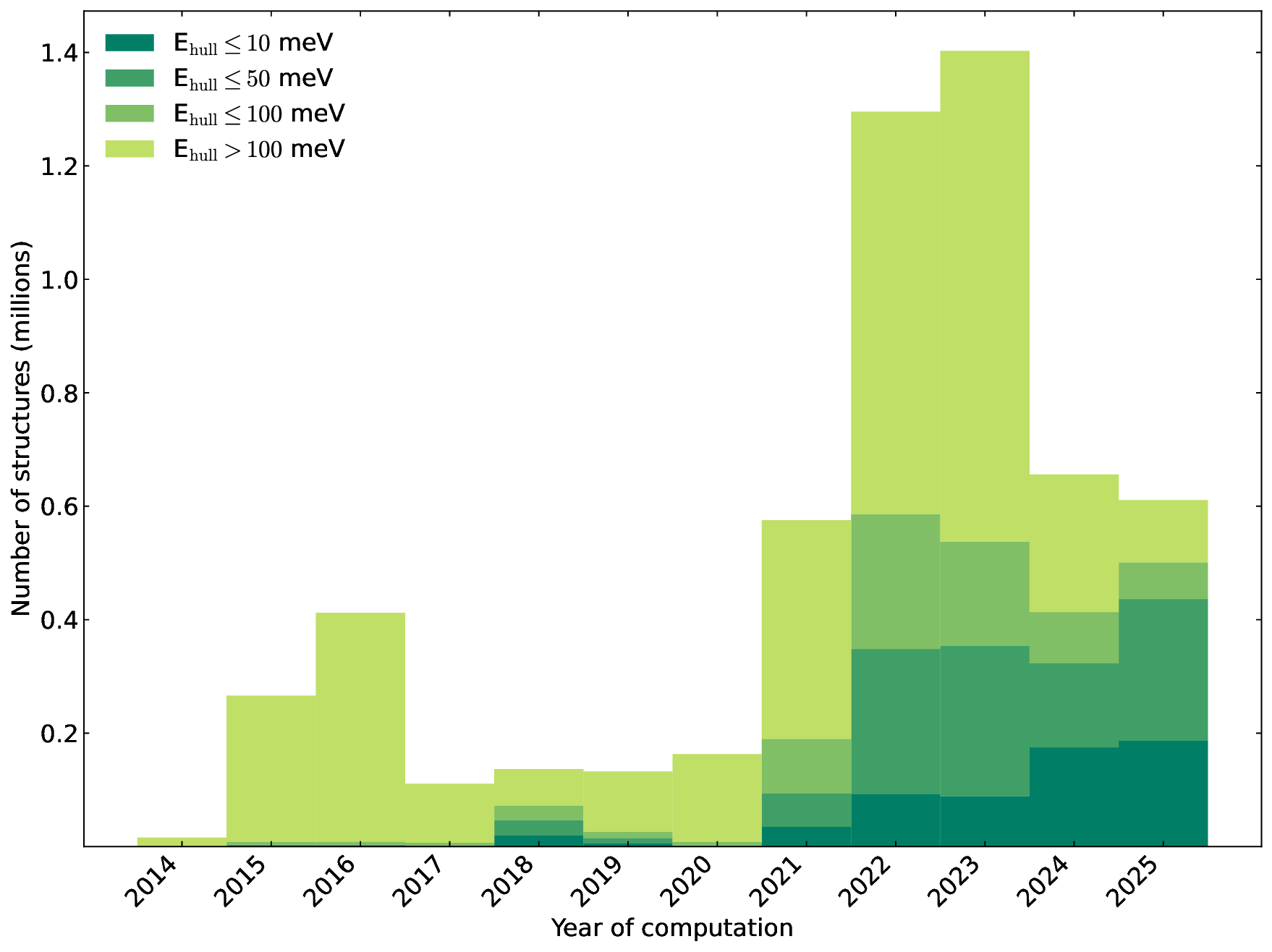

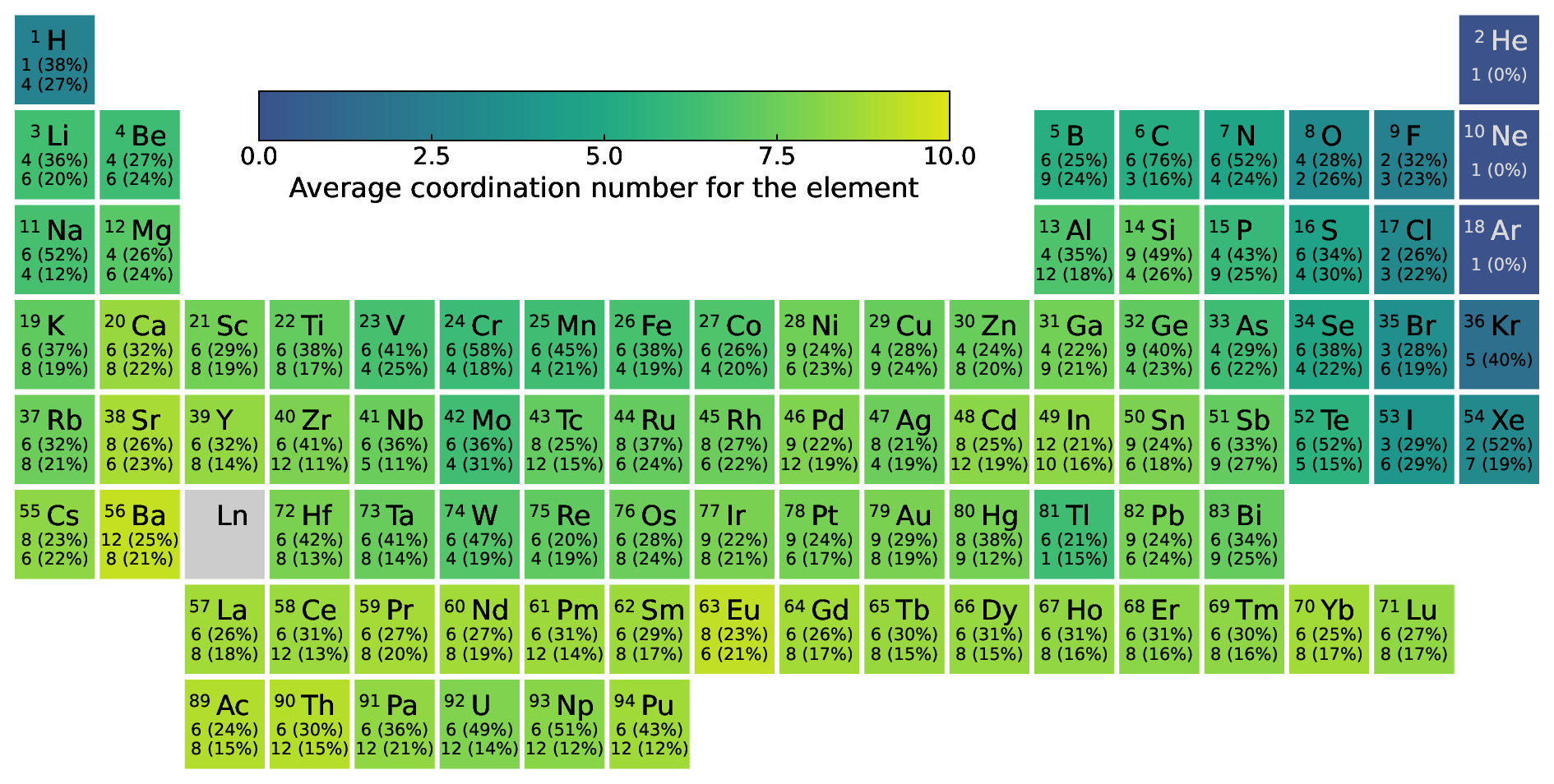

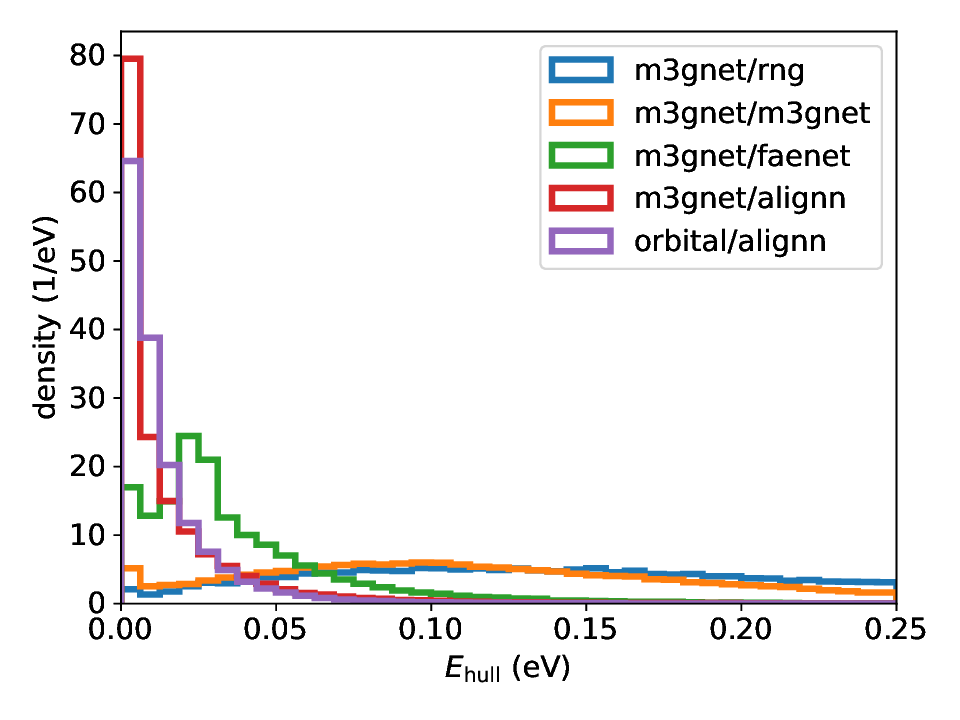

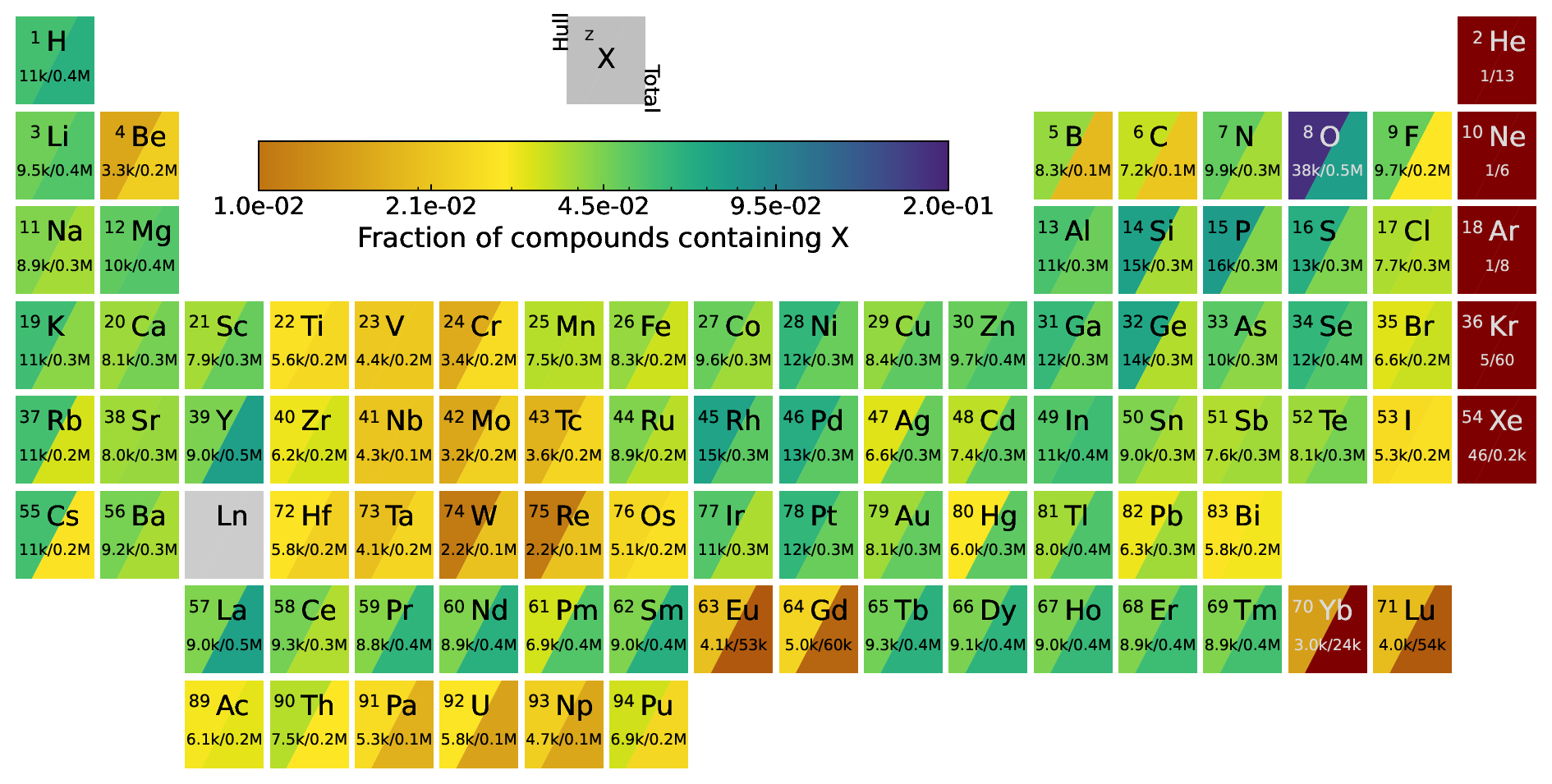

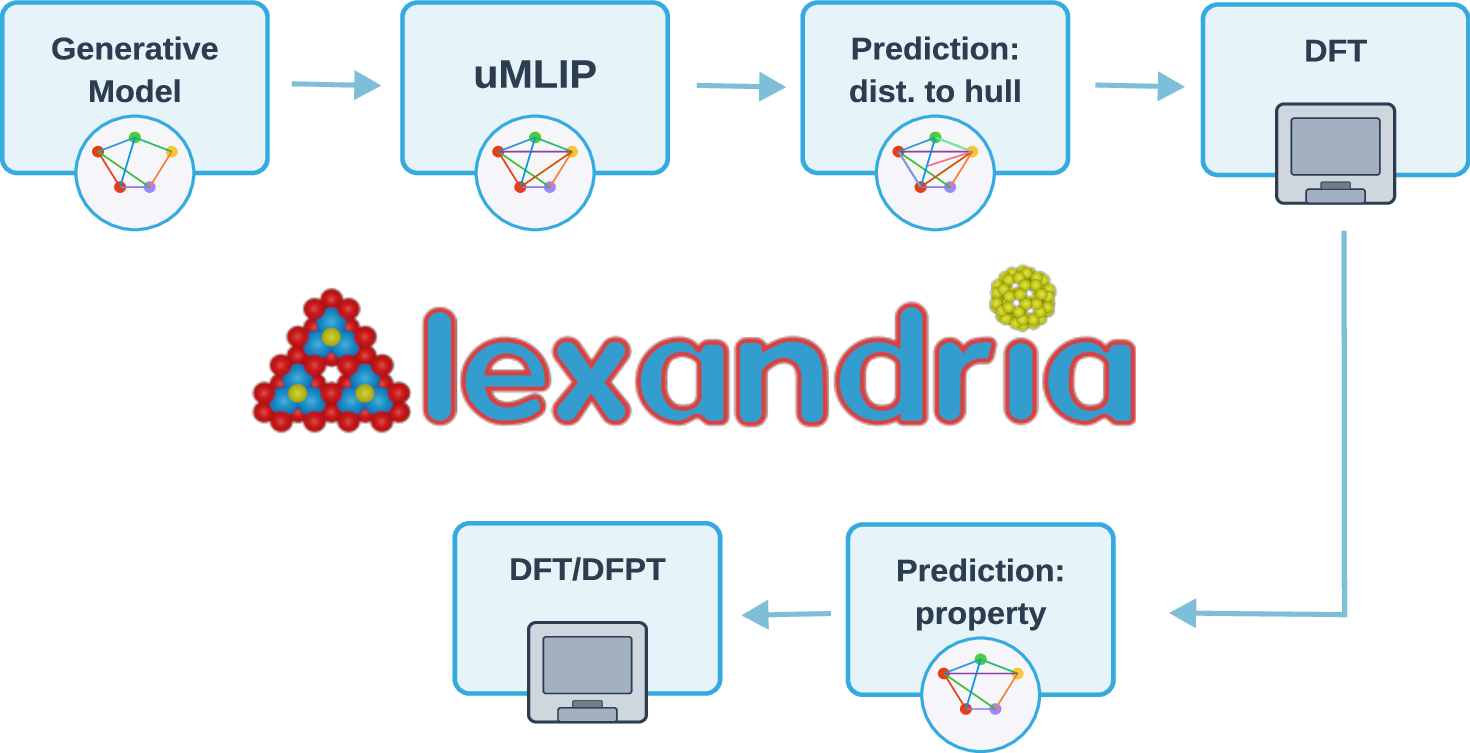

AI-Driven Expansion and Application of the Alexandria Database

Théo Cavignac,1 Jonathan Schmidt,2 Pierre-Paul De Breuck,1 Antoine Loew,1 Tiago F. T. Cerqueira,3 Hai-Chen Wang,1 Anton Bochkarev,4 Yury Lysogorskiy,4 Aldo H. Romero,5 Ralf Drautz,4 Silvana Botti,1,∗ and Miguel A. L. Marques1,†

1 Research Center Future Energy Materials and Systems of the University Alliance Ruhr and ICAMS, Ruhr University Bochum, Universitätsstraße 150, D-44801 Bochum, Germany

2 Department of Materials, ETH Zürich, Zürich, CH-8093, Switzerland

3 CFisUC, Department of Physics, University of Coimbra, Rua Larga, 3004-516 Coimbra, Portugal

4 ICAMS, Ruhr-Universität Bochum, Universitätstrasse 150, 44801 Bochum, Germany and ACEworks GmbH, Hagen-Hof-Weg 1, 44797 Bochum, Germany

5 Department of Physics, West Virginia University, Morgantown, WV 26506, USA

(Dated: December 11, 2025)

We present a novel multi-stage workflow for computational materials discovery that achieves a

99% success rate in identifying compounds within 100 meV/atom of thermodynamic stability, with

a threefold improvement over previous approaches. By combining the Matra-Genoa generative

model, Orb-v2 universal machine learning interatomic potential, and ALIGNN graph neural

network for energy prediction, we generated 119 million candidate structures and added 1.3

million DFT-validated compounds to the Alexandria database, including 74 thousand new stable

materials.

The expanded Alexandria database now contains 5.8 million structures with 175

thousand compounds on the convex hull.

Predicted structural disorder rates (37–43%) match

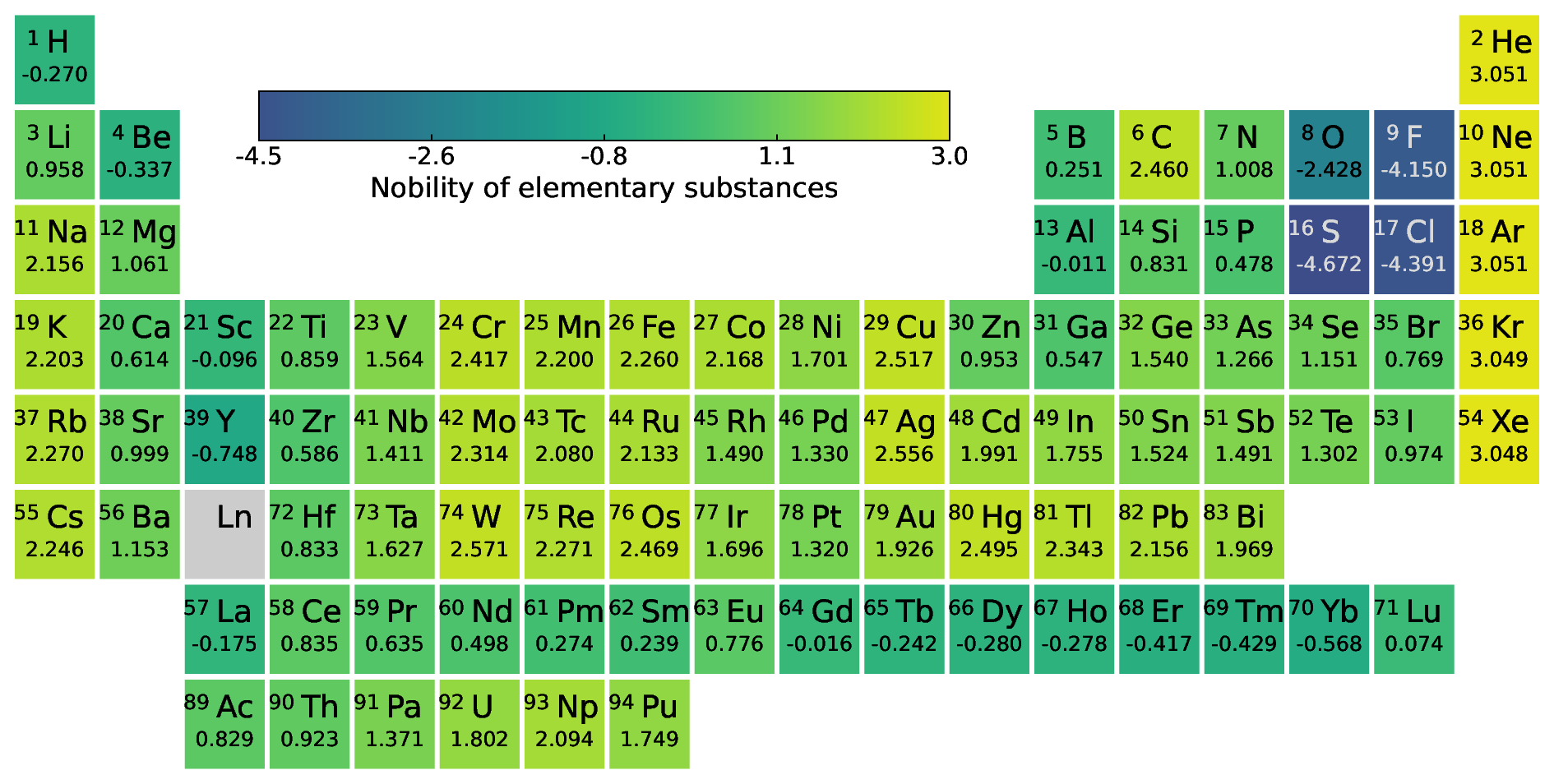

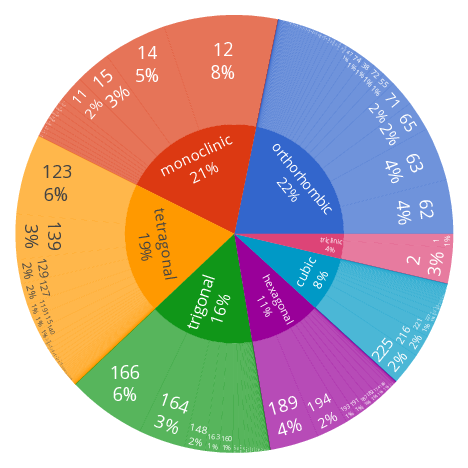

experimental databases, unlike other recent AI-generated datasets. Analysis reveals fundamental

patterns in space group distributions, coordination environments, and phase stability networks,

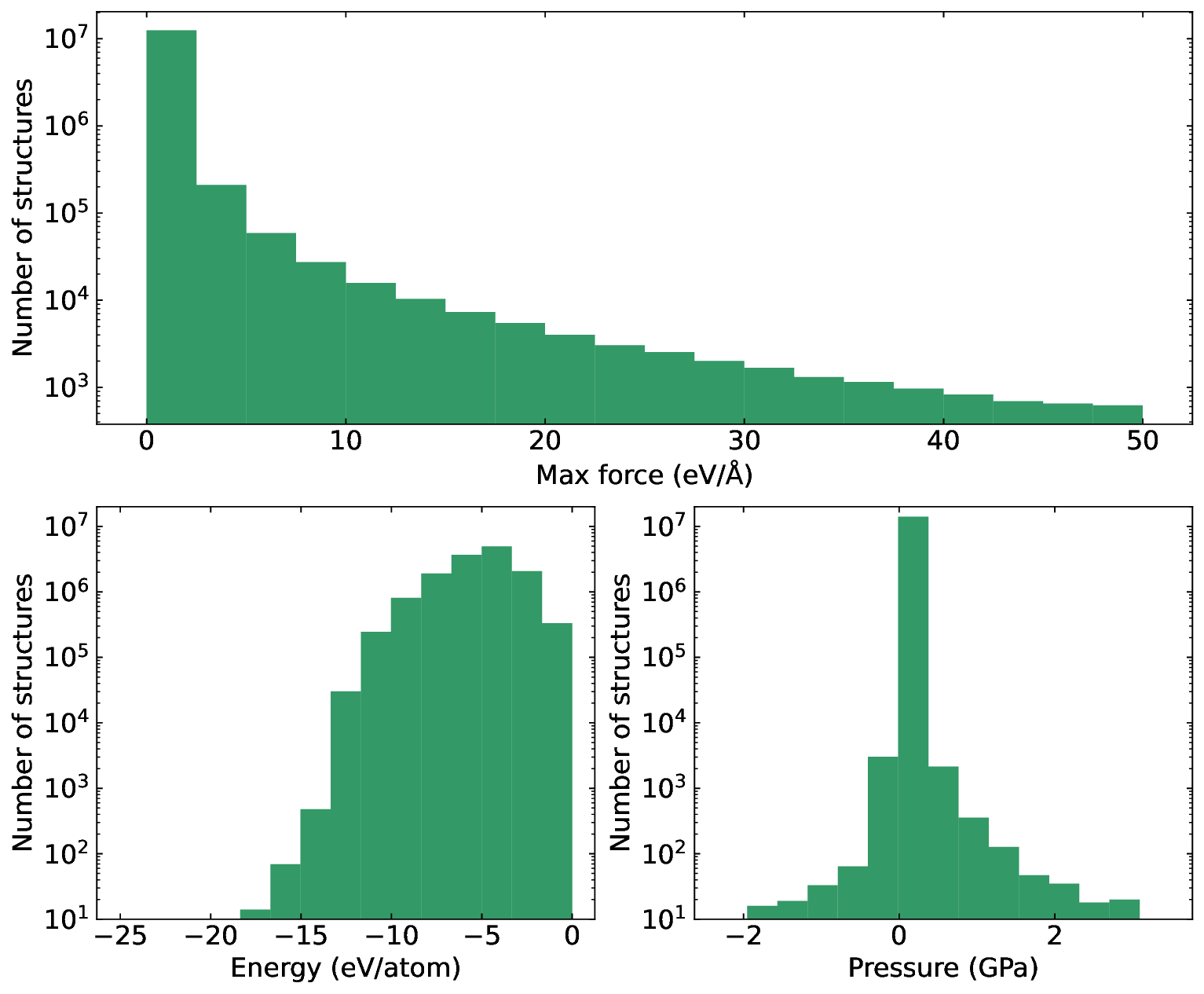

including sub-linear scaling of convex hull connectivity. We release the complete dataset, including

sAlex25 with 14 million out-of-equilibrium structures containing forces and stresses for training

universal force fields.

We demonstrate that fine-tuning a GRACE model on this data improves

benchmark accuracy. All data, models, and workflows are freely available under Creative Commons

licenses.

I. INTRODUCTION

Recent advances in the availability of large materials databases serve as evidence of the success of high-throughput (HT) materials discovery [1–8] and provide reservoirs of hypothetical compounds that are thermodynamically stable or close to stability. Despite these advances, sampling the vast combinatorial space of possible materials remains computationally intensive and infeasible by brute force. Fortunately, the development of machine learning (ML) models has greatly accelerated this process [9–13]. Conversely, we have also witnessed a dramatic increase in the data available to train new models [3, 7, 8, 14]. This positive feedback loop has significantly accelerated materials discovery for technological applications ranging from energy storage to catalysis and electronics.

The Alexandria database represents one of the largest collections of ab initio calculations and is the largest open database for thermodynamically stable materials. Currently, it encompasses approximately 5.8 million structures calculated using density functional theory (DFT), of which 175 thousand structures lie on the convex hull.
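To make the notions of "on the convex hull" and "within 100 meV/atom of thermodynamic stability" concrete, the following is a minimal sketch for a hypothetical binary system A–B. It is an illustration only and not the procedure used to build Alexandria, which the text above does not specify. The function names, the toy compositions, and the formation energies are all assumptions; real multi-component systems require a higher-dimensional hull, for which established tools such as pymatgen's phase-diagram module are normally used.

```python
from typing import Dict, List, Tuple

# (fraction of element B, formation energy in eV/atom); all values are made up.
Point = Tuple[float, float]


def lower_hull(entries: List[Point]) -> List[Point]:
    """Lower convex hull of formation energy vs. composition (monotone chain)."""
    # Keep only the lowest-energy entry at each composition.
    best: Dict[float, float] = {}
    for x, e in entries:
        best[x] = min(e, best.get(x, e))
    hull: List[Point] = []
    for p in sorted(best.items()):
        # Drop the last vertex while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def energy_above_hull(x: float, e: float, hull: List[Point]) -> float:
    """Vertical distance (eV/atom) from an entry to the hull at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            e_hull = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
            return e - e_hull
    raise ValueError("composition outside the range spanned by the hull")


# Hypothetical entries; the elemental references sit at zero formation energy.
entries = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.40), (0.25, -0.15), (0.75, -0.05)]
hull = lower_hull(entries)

THRESHOLD = 0.100  # 100 meV/atom, the stability window quoted in the abstract
near_stable = [(x, e) for x, e in entries
               if energy_above_hull(x, e, hull) <= THRESHOLD]
```

In this toy data set only the compound at x = 0.5 and the two elemental references lie on the hull. The entry at x = 0.25 sits 50 meV/atom above it and would count as near-stable, while the entry at x = 0.75 sits 150 meV/atom above it and would not.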

The previous iteration of this dataset has been extensively utilized by the scientific community. For instance, all universal force-field models within the top five [10, 14–18] of the Matbench Discovery ranking [19] were trained using Alexandria data. Some of these force fields have advanced to the point where they can reliably predict structural, vibrational, and thermal properties [20], defect energies [21, 22], and infrared spectra [23], thereby unlocking new avenues for exploring physical phenomena.

∗ silvana.botti@rub.de

† miguel.marques@rub.de

Beyond training machine-learning force fields, Alexandria has also been employed in the development of generative models [24]. In addition to serving as a training resource, this extensive database provides a valuable foundation for data-driven design of functional materials. For instance, subsets of Alexandria have been used to identify novel dielectric semiconductors [25], novel two-dimensional materials [26], and hard magnets [27]. Furthermore, state-of-the-art machine-learning–assisted high-throughput design frameworks for conventional high-Tc superconductors leverage Alexandria as their primary search space [28–30].

A major challenge in the growth of Alexandria is to generate, with a high success rate, novel structures that are potentially located near the convex hull. In standard prototype-based high-throughput studies, the success rate is of the order of 0.1% [31]. To increase the success rate of standard HT searches, Wang et al. [32] used data-driven chemical similarity measures to guide systematic substitution, i.e., replacing elements in stable or near-stable compounds with similar elements. This


…(Full text truncated)…
