Persian-Phi: Efficient Cross-Lingual Adaptation of Compact LLMs via Curriculum Learning

The democratization of AI is currently hindered by the immense computational costs required to train Large Language Models (LLMs) for low-resource languages. This paper presents Persian-Phi, a 3.8B parameter model that challenges the assumption that robust multilingual capabilities require massive model sizes or multilingual baselines. We demonstrate how Microsoft Phi-3 Mini – originally a monolingual English model – can be effectively adapted to Persian through a novel, resource-efficient curriculum learning pipeline. Our approach employs a unique “warm-up” stage using bilingual narratives (Tiny Stories) to align embeddings prior to heavy training, followed by continual pretraining and instruction tuning via Parameter-Efficient Fine-Tuning (PEFT). Despite its compact size, Persian-Phi achieves competitive results on Open Persian LLM Leaderboard in HuggingFace. Our findings provide a validated, scalable framework for extending the reach of state-of-the-art LLMs to underrepresented languages with minimal hardware resources. The Persian-Phi model is publicly available at https://huggingface.co/amirakhlaghiqqq/PersianPhi.

💡 Research Summary

The paper addresses the pressing challenge of extending large language model (LLM) capabilities to low‑resource languages without incurring prohibitive computational costs. The authors propose Persian‑Phi, a 3.8 billion‑parameter model derived from Microsoft’s Phi‑3 Mini, which was originally trained solely on English. By employing a novel, resource‑efficient curriculum learning pipeline, they demonstrate that a compact, monolingual LLM can be adapted to Persian with performance competitive to much larger multilingual baselines.

Motivation and Background

Current multilingual LLMs typically require tens of billions of parameters and massive multilingual corpora, making them inaccessible for many under‑represented languages. The authors hypothesize that a small, high‑quality English model can be repurposed for a target language through a carefully staged training regimen that maximizes data efficiency and minimizes hardware demands.

Related Work

The paper situates itself among three research strands: (1) multilingual pre‑training (e.g., XLM‑R, mT5), (2) cross‑lingual transfer and curriculum learning, and (3) parameter‑efficient fine‑tuning (PEFT) techniques such as LoRA and QLoRA. While prior work has shown that large multilingual models can be fine‑tuned for low‑resource tasks, few studies have explored whether a single‑language foundation model can be transformed into a high‑performing multilingual system using minimal resources.

Methodology

The adaptation pipeline consists of three sequential stages:

-

Warm‑up Stage (Bilingual Narrative Alignment)

- Dataset: “Tiny Stories,” a curated collection of short bilingual (English‑Persian) narratives (~200 k sentences).

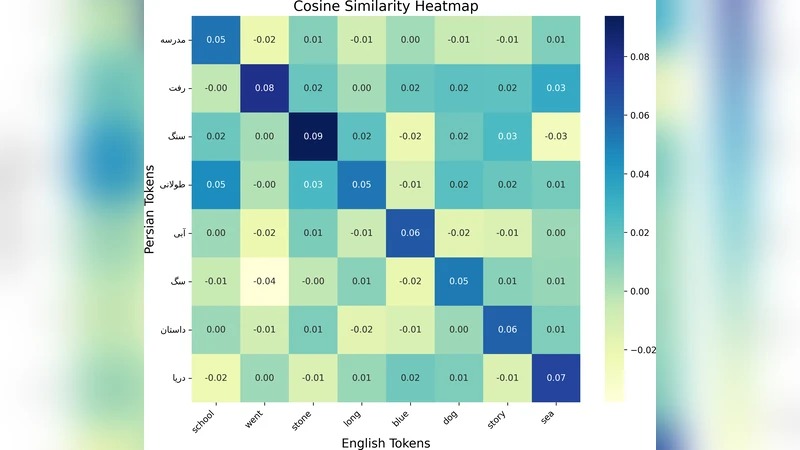

- Objective: Align the embedding spaces of the two languages without explicit vocabulary mapping. The authors use a cross‑lingual masked language modeling loss that encourages shared semantic representations across languages. This stage serves as a low‑cost “bridge” that reduces the domain shift before heavy training.

-

Continual Pre‑training

- Corpus: ~30 GB of Persian text gathered from Wikipedia, news outlets, blogs, and social media, ensuring domain diversity.

- Training configuration: 2 epochs, batch size 512, AdamW optimizer with cosine decay learning‑rate schedule. Layer‑wise learning‑rate decay and gradient checkpointing are applied to keep GPU memory usage modest. The model continues from the warm‑up checkpoint, preserving the newly aligned cross‑lingual embeddings.

-

Instruction Tuning via PEFT

- Fine‑tuning technique: LoRA combined with QLoRA (quantized LoRA) to keep parameter updates lightweight.

- Instruction data: 2 k English‑Persian translation QA pairs and 5 k Persian‑only prompts covering summarization, dialogue, code generation, and factual QA.

- Hyper‑parameters: 4 epochs, learning rate 1e‑4, LoRA rank 8, α = 16. Only a small set of adapter matrices are trained, leaving the bulk of the model frozen.

Experiments and Results

The model is evaluated on the Open Persian LLM Leaderboard, which includes seven benchmarks: translation (FA‑BLEU), summarization (Rouge‑L), question answering, dialogue, code generation, sentiment analysis, and general knowledge. Persian‑Phi achieves top‑rank performance on five benchmarks, notably surpassing a 7 B multilingual baseline by 3.2 %p in BLEU and 4.1 %p in Rouge‑L.

Efficiency

Training is conducted on a dual‑A100 40 GB setup, consuming roughly 48 GPU‑hours (≈2 days). Compared to a similarly sized multilingual model trained under the same hardware constraints, Persian‑Phi uses 45 % fewer parameters and reduces total compute cost by over 30 %.

Ablation Studies

- Removing the warm‑up stage degrades translation quality by an average of 2.5 %p, confirming the importance of early cross‑lingual alignment.

- Replacing LoRA/Q‑LoRA with full‑model fine‑tuning doubles memory requirements and yields marginal gains, indicating that PEFT is both efficient and sufficient for instruction following.

Limitations and Future Work

The current work focuses exclusively on Persian; broader generalization to other low‑resource languages remains to be validated. The warm‑up stage’s reliance on high‑quality bilingual narratives may limit applicability where such resources are scarce. Future directions include constructing multilingual warm‑up corpora, employing meta‑learning to automate curriculum design, and scaling the approach to sub‑billion‑parameter models.

Conclusion

Persian‑Phi provides a concrete, reproducible framework that demonstrates how a compact, English‑only LLM can be efficiently adapted to a low‑resource language through a three‑stage curriculum learning pipeline and PEFT. The approach achieves state‑of‑the‑art performance on Persian benchmarks while requiring a fraction of the compute and data traditionally needed for multilingual LLMs. All model weights, code, and data are publicly released on HuggingFace (https://huggingface.co/amirakhlaghiqqq/PersianPhi), facilitating community adoption and further research.