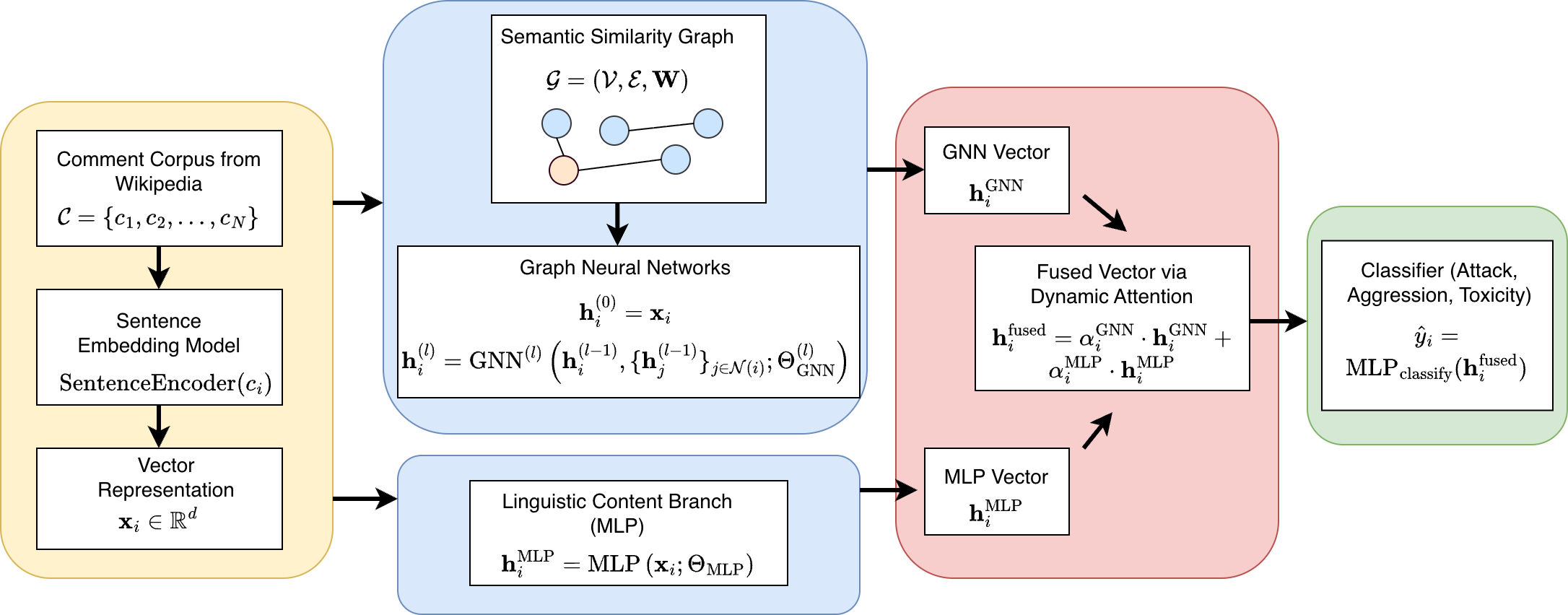

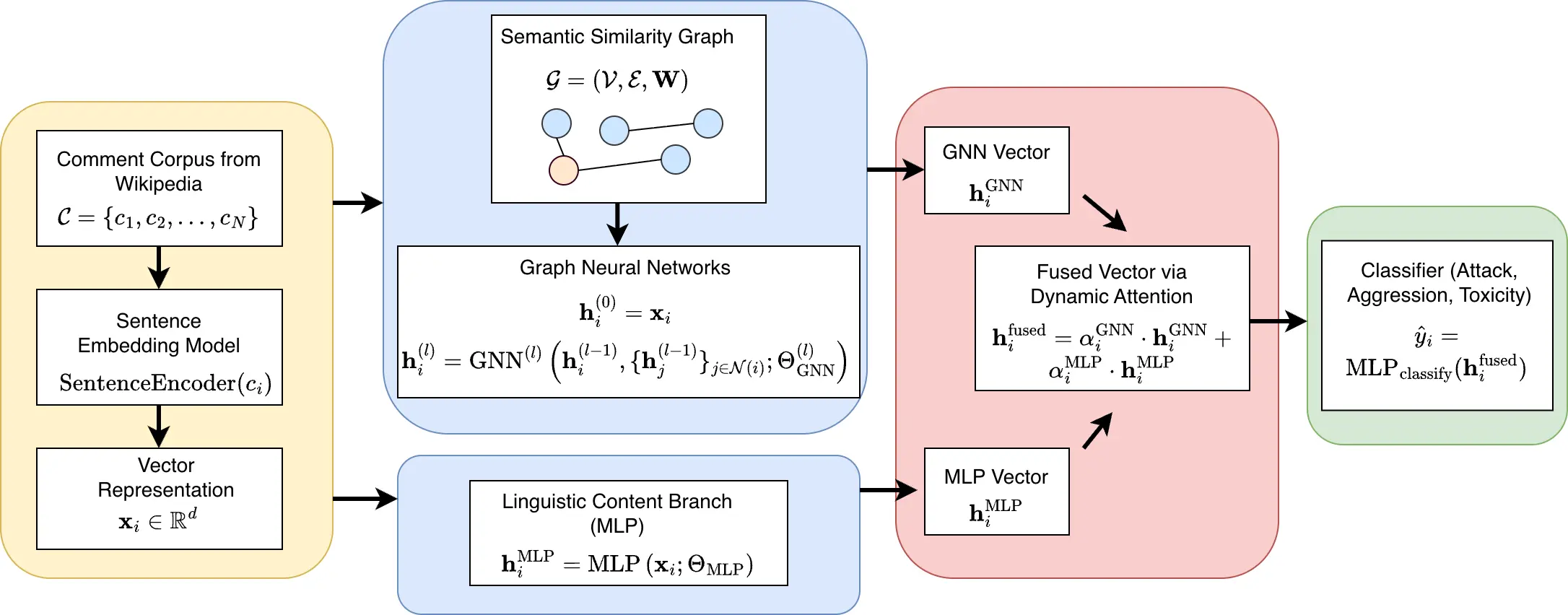

Online incivility has emerged as a widespread and persistent problem in digital communities, imposing substantial social and psychological burdens on users. Although many platforms attempt to curb incivility through moderation and automated detection, existing approaches often remain limited in both accuracy and efficiency. To address this challenge, we propose a Graph Neural Network (GNN) framework for detecting three types of uncivil behavior (i.e., toxicity, aggression, and personal attacks) within the English Wikipedia community. Our model represents each user comment as a node, with textual similarity between comments defining the edges, allowing the network to jointly learn from both linguistic content and relational structures among comments. We also introduce a dynamically adjusted attention mechanism that adaptively balances nodal and topological features during information aggregation. Empirical evaluations demonstrate that our proposed architecture outperforms 12 state-of-the-art Large Language Models (LLMs) across multiple metrics while requiring significantly lower inference cost. These findings highlight the crucial role of structural context in detecting online incivility and help address the limitations of text-only LLM paradigms in behavioral prediction. All datasets and comparative outputs will be made publicly available in our repository to support further research and reproducibility.

Deep Dive into Graph Neural Network-Based Structural Analysis for Detecting Incivility in Wikipedia Comments.

Online communities have become integral to both organizational and public communication. However, their rapid expansion has also led to a rise in incivility and other forms of antisocial behavior. According to the Cyberbullying Research Center, 33.8% of teenagers aged 12-17 in the United States have experienced online incivility. Abusive text and hostile online interactions, often regarded as forms of "brutal cyber violence" [1,2], threaten not only the health and reputation of online communities but also the mental well-being of users, contributing to anxiety, depression, and even suicidal tendencies [3,4]. Curbing online incivility efficiently and effectively is therefore an urgent priority for online platforms and for society at large. Although most online platforms have implemented mechanisms to restrain uncivil behavior, prior studies suggest that existing systems for moderating personal attacks and cyberbullying remain weak [5]. For example, more than 30% of user comments on major U.S. news sites still exhibit some degree of incivility [6]. Much of the research in this domain has focused on detecting uncivil messages with text classification models [7,1]. However, these models often struggle to generalize because of evolving linguistic patterns, obfuscated spellings, and context dependence, and because they lack the relational or conversational context in which a comment appears.

To address these challenges, this paper proposes a novel Graph Neural Network (GNN)-based framework for identifying uncivil comments in online communities. Specifically, we represent each user comment as a node and define edges based on textual similarity, enabling the network to jointly learn from both the linguistic content of comments and the structural relationships among them. During classification, this relational architecture aggregates information from both nodal features (text embeddings) and topological features (inter-comment structure) [8,9]. To enhance model expressiveness, we further introduce a dynamically adjusted attention mechanism that adaptively balances these two sources of information during message passing. To evaluate our model, we use the Wikipedia Detox Project dataset [10], which contains an annotated subset of 100,000 Wikipedia comments labeled along three key dimensions of online incivility: (1) personal attacks, which capture direct interpersonal hostility; (2) aggression, which captures the intensity and tone of hostile intent; and (3) toxicity, which measures the extent to which a comment undermines constructive dialogue. Each comment is independently labeled by approximately ten crowdworkers, providing a robust foundation for modeling online incivility. For benchmarking, we compare our GNN-based model with twelve state-of-the-art Large Language Models (LLMs), which have demonstrated remarkable success in text understanding tasks. Experimental results show that our model consistently outperforms these LLMs across multiple evaluation metrics (e.g., AUC, accuracy, precision, recall, and F1 score) while incurring substantially lower training and inference costs. Robustness tests further confirm that both topological and nodal features contribute significantly to the model's predictive performance.
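To make the graph construction concrete, the following Python sketch builds an undirected comment graph from precomputed text embeddings. The paper states only that edges are defined by textual similarity. The use of cosine similarity, the k-nearest-neighbour rule, and the names `cosine`, `build_similarity_graph`, `k`, and `threshold` are our own illustrative assumptions and are not the authors' implementation.

```python
import math
from typing import Dict, List, Set, Tuple


def cosine(u: List[float], v: List[float]) -> float:
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def build_similarity_graph(
    embeddings: List[List[float]], k: int = 5, threshold: float = 0.0
) -> Dict[int, Set[int]]:
    """Link each comment node to its k most similar comments (hypothetical kNN rule).

    An edge is kept only if its similarity exceeds `threshold`. Each edge is added
    in both directions, so the resulting adjacency is undirected.
    """
    n = len(embeddings)
    adj: Dict[int, Set[int]] = {i: set() for i in range(n)}
    for i in range(n):
        scored: List[Tuple[float, int]] = [
            (cosine(embeddings[i], embeddings[j]), j) for j in range(n) if j != i
        ]
        # Highest similarity first; ties broken by node index for determinism.
        scored.sort(key=lambda pair: (-pair[0], pair[1]))
        for sim, j in scored[:k]:
            if sim > threshold:
                adj[i].add(j)
                adj[j].add(i)
    return adj


if __name__ == "__main__":
    # Toy 2-d "embeddings": comments 0/1 and comments 2/3 are near-duplicates.
    toy = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
    print(build_similarity_graph(toy, k=1))  # {0: {1}, 1: {0}, 2: {3}, 3: {2}}
```

A kNN rule keeps node degree bounded, so each comment aggregates from a small set of its closest textual neighbours. The quadratic pairwise loop shown here is only practical for small examples. At the scale of 100,000 comments, an approximate nearest-neighbour index would likely be needed.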

Our contributions are twofold. First, we propose a novel GNN-based framework for online incivility detection that integrates both linguistic and relational structures among user comments. The proposed dynamic attention mechanism enables the model to adaptively balance nodal and topological information during message aggregation, enhancing both predictive performance and interpretability. Second, we conduct a comprehensive empirical evaluation against 12 state-of-the-art LLMs, demonstrating that our approach achieves superior performance while requiring significantly lower computational cost [11,12,13,14,15]. Although LLMs have shown strong capabilities in text-based tasks, they remain computationally expensive and often fail to capture the structural dynamics that underlie social interactions. By releasing our code and model outputs, this paper offers practical value by enabling online communities to more effectively identify and mitigate uncivil behavior, thereby contributing to healthier and more constructive online environments.
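This section does not specify the exact form of the dynamically adjusted attention mechanism. One plausible reading, sketched below purely as an assumption, uses a learned scalar gate per node. The gate interpolates between the comment's own embedding (nodal features) and an attention-weighted average of its neighbours' embeddings (topological features). The dot-product attention scores, the sigmoid gate, and the parameters `gate_w` and `gate_b` are hypothetical stand-ins for learned weights.

```python
import math
from typing import Dict, List, Set, Tuple

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a non-empty list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(z: float) -> float:
    """Logistic function, written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def attend_neighbors(i: int, features: List[Vector], adj: Dict[int, Set[int]]) -> Vector:
    """Attention-weighted mean of neighbour features (the topological signal).

    Scores are plain dot products with node i; an isolated node gets a zero vector.
    """
    neighbors = sorted(adj[i])
    dim = len(features[i])
    if not neighbors:
        return [0.0] * dim
    scores = [sum(a * b for a, b in zip(features[i], features[j])) for j in neighbors]
    weights = softmax(scores)
    return [sum(w * features[j][d] for w, j in zip(weights, neighbors)) for d in range(dim)]


def gated_update(
    i: int,
    features: List[Vector],
    adj: Dict[int, Set[int]],
    gate_w: Vector,  # hypothetical learned gate weights, length 2 * dim
    gate_b: float,   # hypothetical learned gate bias
) -> Tuple[Vector, float]:
    """Blend node i's own features with its neighbour aggregate via a scalar gate.

    alpha near 1 -> rely on the comment's own text (nodal features);
    alpha near 0 -> rely on similar comments (topological features).
    """
    x = features[i]
    if len(gate_w) != 2 * len(x):
        raise ValueError("gate_w must have length 2 * feature dimension")
    agg = attend_neighbors(i, features, adj)
    z = sum(w * v for w, v in zip(gate_w, x + agg)) + gate_b
    alpha = sigmoid(z)
    updated = [alpha * a + (1.0 - alpha) * b for a, b in zip(x, agg)]
    return updated, alpha
```

With an all-zero gate (`gate_w` all zeros, `gate_b = 0`), `alpha` is 0.5, and the node's new representation is the midpoint of its own features and its neighbour aggregate. Training would push `alpha` toward 1 for comments whose text alone is decisive. It would push `alpha` toward 0 where similar comments carry more signal. Inspecting the per-node `alpha` is one way such a gate could support interpretability.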

The remainder of this paper is organized as follows. In the next section, we review related work on online incivility detection and graph neural network methods. We then describe our proposed graph-based framework, detailing the graph construction and the dynamic attention mechanism. Following this, we present the experimental setup, data description, and results. We conclude by discussing potential limitations and proposing avenues for future research.

Our study intersects two main threads of the literature: (1) online incivility and toxic behavior detection, and (2) graph neural network implementations in online communities. In this section, we review both areas and highli
