📝 Original Info

- Title: NeSTR: A Neuro-Symbolic Abductive Framework for Temporal Reasoning in Large Language Models

- ArXiv ID: 2512.07218

- Date: 2025-12-08

- Authors: Feng Liang, Weixin Zeng, Runhao Zhao, Xiang Zhao

📝 Abstract

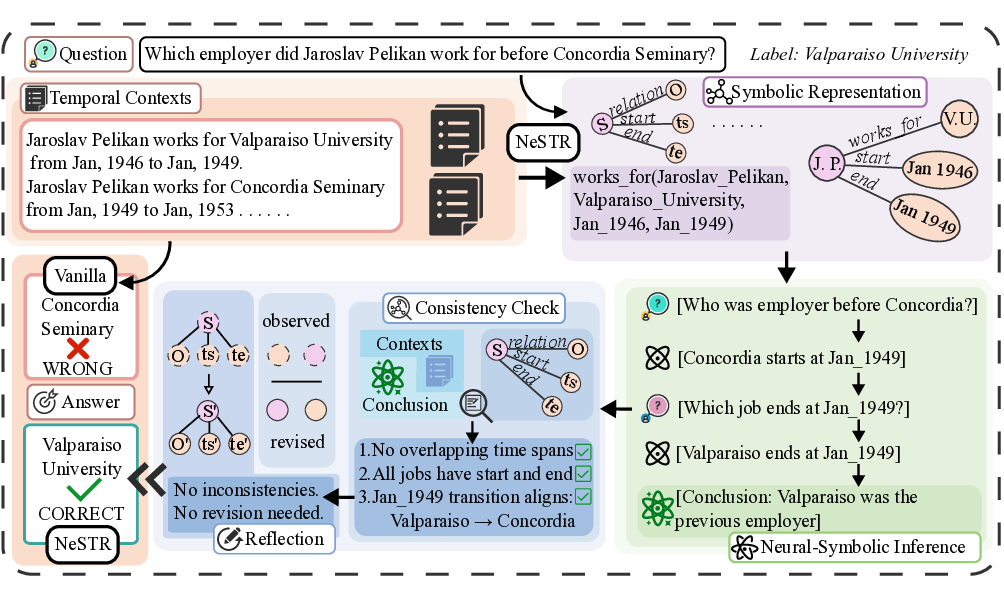

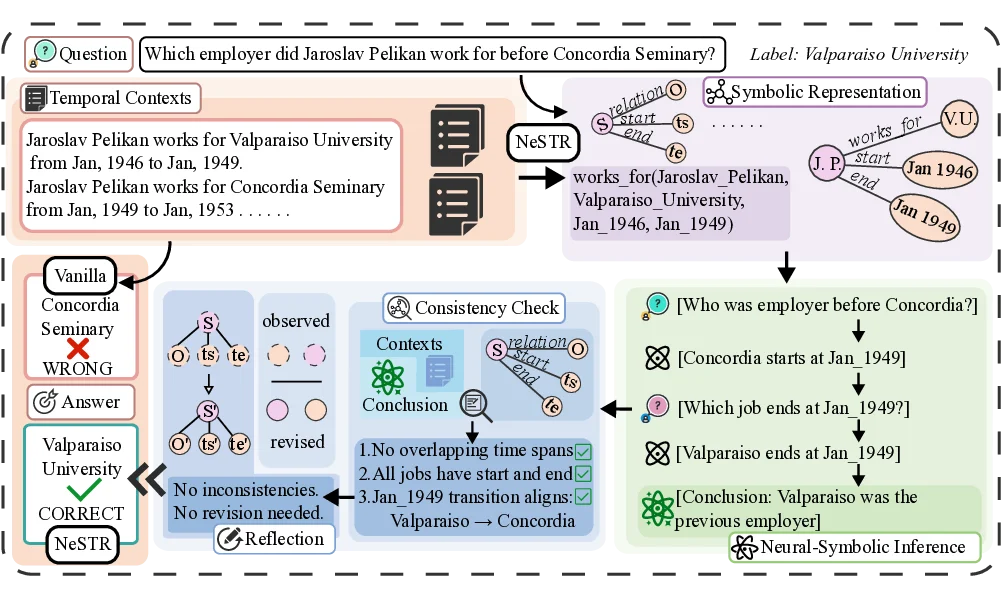

Large Language Models (LLMs) have demonstrated remarkable performance across a wide range of natural language processing tasks. However, temporal reasoning, particularly under complex temporal constraints, remains a major challenge. To this end, existing approaches have explored symbolic methods, which encode temporal structure explicitly, and reflective mechanisms, which revise reasoning errors through multi-step inference. Nonetheless, symbolic approaches often underutilize the reasoning capabilities of LLMs, while reflective methods typically lack structured temporal representations, which can result in inconsistent or hallucinated reasoning. As a result, even when the correct temporal context is available, LLMs may still misinterpret or misapply time-related information, leading to incomplete or inaccurate answers. To address these limitations, in this work, we propose Neuro-Symbolic Temporal Reasoning (NeSTR), a novel framework that integrates structured symbolic representations with hybrid reflective reasoning to enhance the temporal sensitivity of LLM inference. NeSTR preserves explicit temporal relations through symbolic encoding, enforces logical consistency via verification, and corrects flawed inferences using abductive reflection. Extensive experiments on diverse temporal question answering benchmarks demonstrate that NeSTR achieves superior zero-shot performance and consistently improves temporal reasoning without any fine-tuning, showcasing the advantage of neuro-symbolic integration in enhancing temporal understanding in large language models.


📄 Full Content

NeSTR: A Neuro-Symbolic Abductive Framework for Temporal Reasoning in Large Language Models

Feng Liang1*, Weixin Zeng2†, Runhao Zhao2, Xiang Zhao2†

1China Academy of Launch Vehicle Technology,

2National Key Laboratory of Big Data and Decision, National University of Defense Technology, China

fungloeng@gmail.com, {zengweixin13, runhaozhao, xiangzhao}@nudt.edu.cn

Abstract

Large Language Models (LLMs) have demonstrated remarkable performance across a wide range of natural language processing tasks. However, temporal reasoning, particularly under complex temporal constraints, remains a major challenge. To this end, existing approaches have explored symbolic methods, which encode temporal structure explicitly, and reflective mechanisms, which revise reasoning errors through multi-step inference. Nonetheless, symbolic approaches often underutilize the reasoning capabilities of LLMs, while reflective methods typically lack structured temporal representations, which can result in inconsistent or hallucinated reasoning. As a result, even when the correct temporal context is available, LLMs may still misinterpret or misapply time-related information, leading to incomplete or inaccurate answers. To address these limitations, in this work, we propose Neuro-Symbolic Temporal Reasoning (NeSTR), a novel framework that integrates structured symbolic representations with hybrid reflective reasoning to enhance the temporal sensitivity of LLM inference. NeSTR preserves explicit temporal relations through symbolic encoding, enforces logical consistency via verification, and corrects flawed inferences using abductive reflection. Extensive experiments on diverse temporal question answering benchmarks demonstrate that NeSTR achieves superior zero-shot performance and consistently improves temporal reasoning without any fine-tuning, showcasing the advantage of neuro-symbolic integration in enhancing temporal understanding in large language models.

Code and Extended version: https://github.com/fungloeng/NeSTR.git

*The work was done during the first author’s internship at National Key Laboratory of Big Data and Decision, National University of Defense Technology, China.

†Corresponding authors.

Copyright © 2026, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.

Introduction

Large Language Models (LLMs), such as GPT-4 (Achiam et al. 2023), Gemini 2.5 (Comanici et al. 2025), and Qwen3 (Yang et al. 2025), have demonstrated remarkable emergent capabilities, achieving human-level performance across a wide range of Natural Language Processing (NLP) tasks (Zhao et al. 2023; Salemi et al. 2023). The success of these models is rooted in pretraining on vast static corpora rich in world knowledge (Roberts, Raffel, and Shazeer 2020). However, the static nature of LLMs restricts their ability to answer time-sensitive queries, resulting in outdated or hallucinated responses when recent or temporally grounded information is required (Wang et al. 2024). This limitation is particularly evident in Temporal Question Answering (TQA), which requires both timeliness, i.e., accessing up-to-date knowledge, and temporal reasoning ability, i.e., the ability to understand and use time expressions in context (Wang and Zhao 2023; Yang et al. 2024; Zhao et al. 2025).

To satisfy the timeliness requirement of TQA, retrieval-augmented generation (RAG) is used, which allows LLMs to access up-to-date external information at inference time (Zhu et al. 2023b; Chen et al. 2024a). However, most existing approaches focus on optimizing the retrieval pipeline, such as improving retrievers or re-rankers (Wu et al. 2024a; Qian et al. 2024; Chen et al. 2024b; Zhang et al. 2025), while overlooking the importance of temporal reasoning (Gupta et al. 2023; Wang et al. 2024). As a result, models often fail to generate correct answers even when relevant evidence is available. Thus, it is critical to exploit the temporal information in the context to perform accurate temporal reasoning (Jia, Christmann, and Weikum 2024; Su et al. 2024).

To improve temporal reasoning ability, recent works mainly pursue two broad directions: symbolic structuring and reflective reasoning. The former transforms temporal information into structured forms, supporting explicit rule-based reasoning. For instance, QAaP parses questions and retrieved passages as Python-style dictionaries and applies programmable functions to verify consistency and rank answer candidates (Zhu et al. 2023a). Event-AL constructs event graphs from symbolic tuples and performs abductive reasoning over inferred temporal relations (Wu et al. 2024b). Reflective approaches, on the other hand, leverage the reasoning flexibility of LLMs by prompting them to reflect on intermediate steps. TISER, for example, guides the model through timeline construction and iterative revision, improving consistency on complex

…(Full text truncated)…
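The symbolic-structuring direction described in the Introduction (questions and passages parsed into Python-style dictionaries, then checked by programmable functions) can be illustrated with a minimal sketch. This is not the QAaP or NeSTR implementation: the field names (`subject`, `relation`, `object`, `start`, `end`, `time`), the example facts, and the helper functions are all hypothetical, and the ranking rule (prefer the narrowest matching interval) is an assumption chosen only to make the example concrete.

```python
from datetime import date

# Hypothetical structured facts, as a parser might extract them from retrieved passages.
facts = [
    {"subject": "Alice", "relation": "works_for", "object": "Acme",
     "start": date(2010, 1, 1), "end": date(2015, 12, 31)},
    {"subject": "Alice", "relation": "works_for", "object": "Globex",
     "start": date(2016, 1, 1), "end": date(2020, 6, 30)},
    # Deliberately malformed: the interval ends before it starts.
    {"subject": "Alice", "relation": "works_for", "object": "Initech",
     "start": date(2021, 5, 1), "end": date(2019, 1, 1)},
]

# Hypothetical structured question: "Who did Alice work for on 2017-03-15?"
question = {"subject": "Alice", "relation": "works_for", "time": date(2017, 3, 15)}


def is_well_formed(fact):
    """Consistency check: an interval must not end before it starts."""
    return fact["start"] <= fact["end"]


def matches(fact, question):
    """A fact answers the question if it shares subject and relation
    and its interval contains the queried time."""
    return (
        fact["subject"] == question["subject"]
        and fact["relation"] == question["relation"]
        and fact["start"] <= question["time"] <= fact["end"]
    )


def answer(facts, question):
    """Filter out inconsistent facts, keep those matching the time constraint,
    and rank the remainder by interval length (narrowest first; an assumption)."""
    candidates = [f for f in facts if is_well_formed(f) and matches(f, question)]
    candidates.sort(key=lambda f: f["end"] - f["start"])
    return [f["object"] for f in candidates]


print(answer(facts, question))
```

Because the time constraint is evaluated by explicit comparison rather than by free-form generation, a malformed or out-of-range fact is rejected deterministically, which is the property the symbolic approaches above rely on.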

Reference

This content is AI-processed based on ArXiv data.