Mathematics of natural intelligence

In the course of evolution, the brain has reached a level of perfection that artificial intelligence systems lack and that requires its own mathematics. The concept of the cognitome, introduced by academician K.V. Anokhin as the cognitive structure of the mind (a high-order structure of the brain and a neural hypernetwork), is taken as the basis for modeling. Consciousness is then a special form of dynamics in this hypernetwork: a large-scale integration of its cognitive elements. The cognitome, in turn, consists of interconnected COGs (cognitive groups of neurons) of two types: functional systems and cellular ensembles. K.V. Anokhin sees the task of a fundamental theory of the brain and mind as describing these structures, their origin, their functions, and the processes within them. The paper presents mathematical models of these structures based on new mathematical results, as well as models of various cognitive processes expressed in terms of these models. In addition, it is shown that these models can be derived from a fairly general principle of how the brain works: "the brain discovers all possible causal relationships in the external world and draws all possible conclusions from them." Based on these results, the paper presents models of: "natural" classification; the theory of functional brain systems by P.K. Anokhin; the prototype theory of categorization by E. Rosch; the theory of causal models by Bob Rehder; and the theory of consciousness as integrated information by G. Tononi.

💡 Research Summary

The paper “Mathematics of Natural Intelligence” attempts to provide a rigorous mathematical framework for the brain’s high‑level organization, arguing that current artificial intelligence lacks the structural and dynamical richness of natural cognition. Central to the authors’ approach is the concept of the “cognitome,” originally introduced by academician K.V. Anokhin. The cognitome is defined as a hyper‑network composed of interconnected cognitive groups of neurons (COGs). COGs come in two flavors: functional systems, which correspond to task‑oriented neural assemblies, and cellular ensembles, which capture finer‑grained synchrony patterns among groups of neurons. By treating each COG as a hyper‑node and the relationships among them as hyper‑edges, the authors move beyond ordinary graph models and are able to represent multi‑way interactions that are characteristic of real cortical circuitry.
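To make the hyper‑network idea concrete, here is a minimal sketch of a hypergraph in which each hyperedge may join any number of COGs at once. The class name `Hypergraph`, its methods, and the COG labels are illustrative assumptions, not structures taken from the paper.

```python
from dataclasses import dataclass, field


@dataclass
class Hypergraph:
    """Toy hypergraph: nodes are COG labels, each hyperedge joins any number of COGs."""
    nodes: set = field(default_factory=set)
    edges: dict = field(default_factory=dict)  # edge name -> frozenset of COG labels

    def add_edge(self, name, cogs):
        """Register a hyperedge and make sure all of its member COGs are known nodes."""
        members = frozenset(cogs)
        self.nodes |= members
        self.edges[name] = members

    def incident_edges(self, cog):
        """Names of all hyperedges that contain the given COG, in sorted order."""
        return sorted(name for name, members in self.edges.items() if cog in members)

    def neighbors(self, cog):
        """All COGs that share at least one hyperedge with the given COG."""
        out = set()
        for members in self.edges.values():
            if cog in members:
                out |= members
        out.discard(cog)
        return out


# Hypothetical COG labels, chosen only for illustration.
cognitome = Hypergraph()
cognitome.add_edge("reach_for_cup", ["visual_cup", "motor_arm", "goal_drink"])
cognitome.add_edge("recognize_cup", ["visual_cup", "memory_cup"])
```

The three‑member edge `reach_for_cup` is the point of the example: an ordinary graph would have to split it into pairwise links and lose the fact that the three COGs act together.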

Consciousness, in this framework, is a particular dynamical regime of the hyper‑network: a large‑scale integration of activity across many COGs. The authors extend Tononi’s Integrated Information Theory (IIT) by introducing a “simultaneous Φ” metric that jointly accounts for intra‑COG dynamics (cellular ensemble synchrony) and inter‑COG connectivity (functional system coupling). This metric is intended to quantify integrated information at a finer grain, so that the model captures both spatial and temporal aspects of conscious processing.

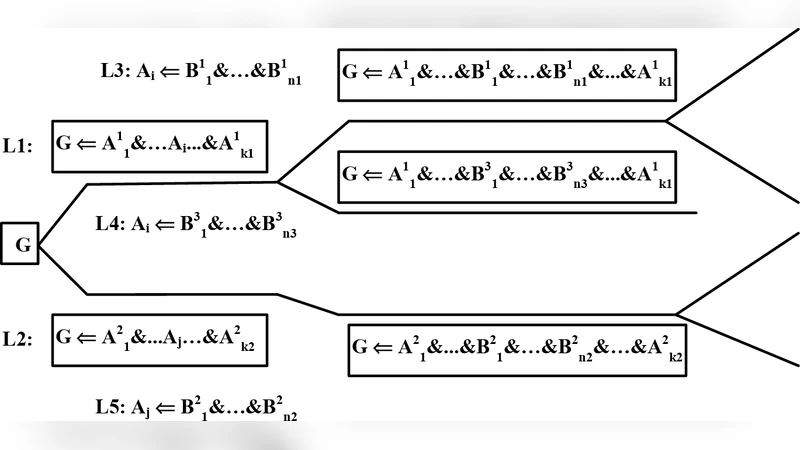

A unifying principle underlies all the models presented: “the brain discovers all possible causal relationships in the external world and draws all possible conclusions from them.” The authors formalize this “causal discovery principle” using a hybrid of Bayesian networks and structural equation modeling. Their proposed causal‑graph exploration algorithm enumerates every plausible causal pathway linking sensory inputs to motor outputs, assigns probabilistic weights, and iteratively prunes low‑probability links during learning. The resulting structure is a “complete causal graph” that the cognitome continuously refines as experience accrues.
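The enumerate, weight, and prune loop described above can be sketched in a few lines. This is only an illustration under strong assumptions: the weight of a link is the empirical conditional frequency P(effect | cause) over observed episodes, which measures co‑occurrence rather than true causation, and the function names, variable names, and threshold are all hypothetical rather than taken from the paper.

```python
from collections import Counter
from itertools import permutations


def estimate_links(observations, variables):
    """Estimate P(effect | cause) for every ordered pair of variables.

    Each observation is the set of variables that were active in one episode.
    """
    cause_count = Counter()
    joint_count = Counter()
    for obs in observations:
        for cause in obs:
            cause_count[cause] += 1
        for cause, effect in permutations(variables, 2):
            if cause in obs and effect in obs:
                joint_count[(cause, effect)] += 1
    return {
        (c, e): joint_count[(c, e)] / cause_count[c]
        for c, e in permutations(variables, 2)
        if cause_count[c] > 0
    }


def prune(links, threshold):
    """Keep only the candidate links whose weight reaches the threshold."""
    return {pair: p for pair, p in links.items() if p >= threshold}


# Hypothetical episodes from a toy conditioning experiment.
variables = ["light", "press", "food"]
observations = [
    {"light", "press", "food"},
    {"light", "press", "food"},
    {"light"},
    {"press", "food"},
]
links = estimate_links(observations, variables)
kept = prune(links, threshold=0.9)
```

With these episodes only the links between `press` and `food` survive pruning, in both directions. That symmetry shows the limit of the stand‑in weight: deciding which way the arrow points needs interventions or further assumptions.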

The paper demonstrates how this overarching framework can be instantiated in several well‑known cognitive theories:

- Natural Classification – By defining a distance metric based on shared causal relationships, objects are grouped according to the overlap of their inferred causal graphs, yielding a causally grounded taxonomy.
- P.K. Anokhin’s Theory of Functional Brain Systems – Hyper‑edge weights are interpreted as “functional linkage strengths.” The minimal sub‑hypergraph that supports a given behavior corresponds to the functional system identified by Anokhin.
- E. Rosch’s Prototype Theory of Categorization – Categories are represented as prototype COGs. The density of causal pathways linking peripheral COGs to the prototype determines category boundaries, aligning with Rosch’s prototype‑centric view.
- Bob Rehder’s Theory of Causal Models – The authors provide formal proofs of structural equivalence and transformability for the causal graphs generated by the exploration algorithm, thereby grounding Rehder’s ideas in a concrete mathematical setting.
- Tononi’s Integrated Information Theory of Consciousness – The simultaneous Φ metric quantifies the degree of integration in the hyper‑network, allowing the model to simulate transitions between conscious and unconscious states (e.g., sleep–wake cycles) as changes in synchronization parameters across COGs.
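For the natural classification item in the list above, one simple way to turn "overlap of inferred causal graphs" into a number is the Jaccard distance between sets of causal links. The choice of Jaccard distance is an assumption made for illustration, since the summary does not specify the metric, and the objects and links below are invented examples.

```python
def causal_distance(links_a, links_b):
    """Jaccard distance between two collections of (cause, effect) links.

    Returns 0.0 for identical link sets and 1.0 for sets with nothing in common.
    """
    a, b = set(links_a), set(links_b)
    union = a | b
    if not union:
        return 0.0  # two empty causal graphs are treated as identical
    return 1.0 - len(a & b) / len(union)


# Hypothetical causal links attributed to three objects.
sparrow = {("flaps_wings", "flies"), ("lays_eggs", "offspring")}
robin = sparrow | {("sings", "attracts_mate")}
trout = {("lays_eggs", "offspring"), ("moves_fins", "swims")}

d_birds = causal_distance(sparrow, robin)
d_mixed = causal_distance(sparrow, trout)
```

Here `d_birds` is smaller than `d_mixed`, so a clustering routine fed with these distances would place the two birds together before either joins the fish.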

Overall, the work proposes a three‑component mathematical architecture—hyper‑graph representation, exhaustive causal discovery, and extended integrated information—that unifies disparate cognitive theories under a single formalism. By capturing multi‑scale, multi‑relational interactions, the framework promises to bridge the gap between biological neural systems and artificial general intelligence (AGI). The authors suggest future applications in brain‑computer interfaces, network‑based diagnostics of mental disorders, and the design of AI systems that can autonomously construct and manipulate rich causal models of their environment. The paper thus offers both a conceptual advance in the theory of natural intelligence and a concrete toolbox for computational neuroscience and next‑generation AI research.
