Large Language Model (LLM) agents are emerging to transform daily life. However, existing LLM agents primarily follow a reactive paradigm, relying on explicit user instructions to initiate services, which increases both physical and cognitive workload. In this paper, we propose ProAgent, the first end-to-end proactive agent system that harnesses massive sensory contexts and LLM reasoning to deliver proactive assistance. ProAgent first employs a proactive-oriented context extraction approach with on-demand tiered perception to continuously sense the environment and derive hierarchical contexts that incorporate both sensory and persona cues. ProAgent then adopts a context-aware proactive reasoner to map these contexts to user needs and tool calls, providing proactive assistance. We implement ProAgent on Augmented Reality (AR) glasses with an edge server and extensively evaluate it on a real-world testbed, a public dataset, and through a user study. Results show that ProAgent achieves up to 33.4% higher proactive prediction accuracy, 16.8% higher tool-calling F1 score, and notable improvements in user satisfaction over state-of-the-art baselines, marking a significant step toward proactive assistants. A video demonstration of ProAgent is available at https://youtu.be/pRXZuzvrcVs.

Deep Dive into ProAgent: Harnessing On-Demand Sensory Contexts for Proactive LLM Agent Systems

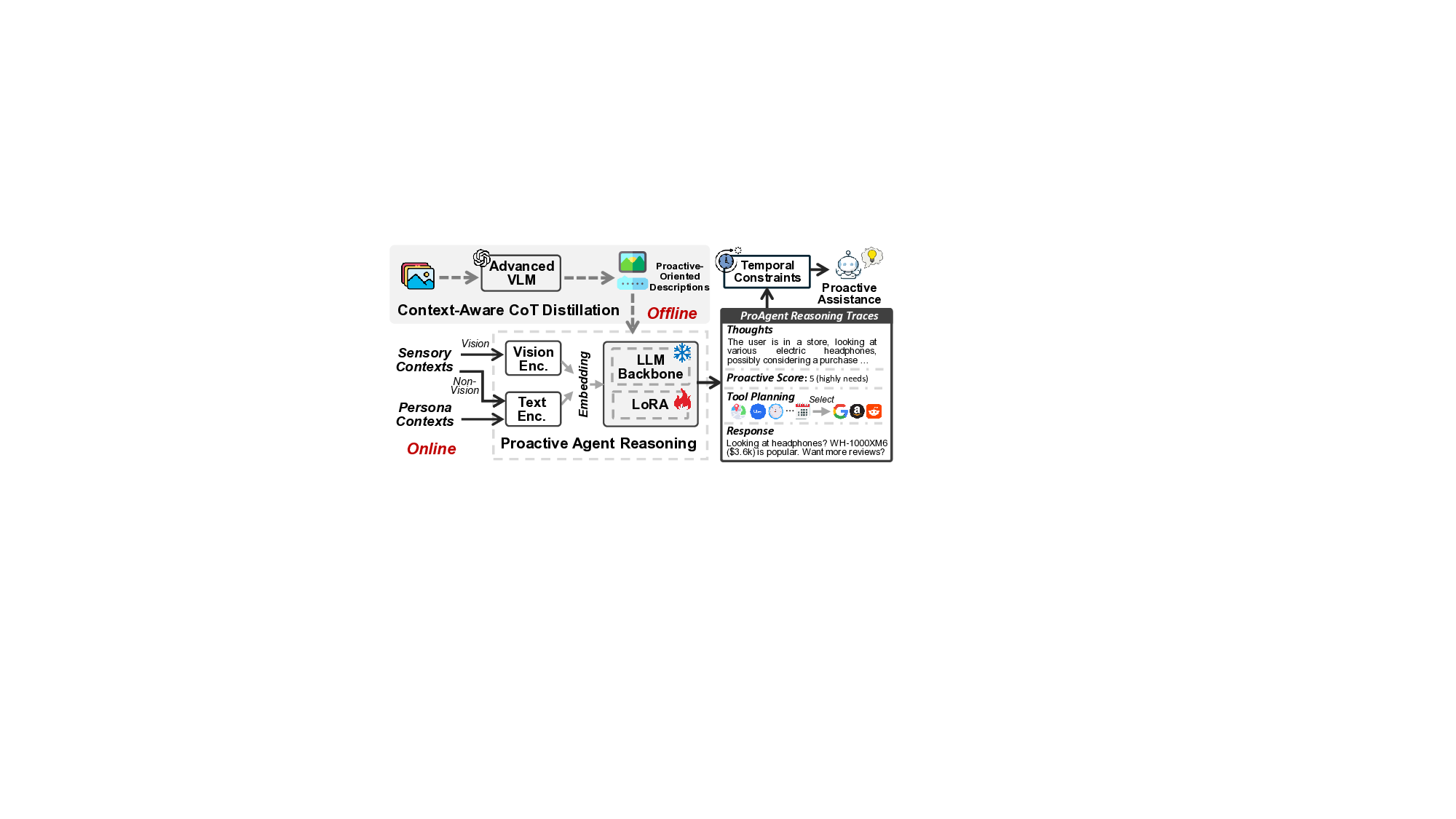


Large Language Model (LLM) agents [29] have the potential to revolutionize human life due to their advanced reasoning and interaction with the physical world, enabling new forms of personal assistance such as mobile task automation [49], home sensor coordination [11], and healthcare support [42]. The global market for devices with agents is expected to approach USD 200 billion within the next decade [9].

Most existing LLM agent systems follow a reactive paradigm, relying on explicit user instructions to initiate their services [23,45,49,61]. Users must manually invoke the agent, typically by taking out a smartphone and issuing instructions, which requires excessive and repetitive human effort. This reactive nature not only increases physical workload but also elevates cognitive burden, as users risk missing timely information during live interactions or attention-intensive tasks. In contrast, proactive agents are designed to autonomously perceive their environments, anticipate user needs, and deliver timely assistance, thereby reducing both physical and cognitive workload [36]. Shifting from largely passive reactive agents to proactive agent systems with the autonomy to perceive, reason, and unobtrusively assist therefore holds great potential.

Figure 1 (example dialogues): "I want to buy the headphones. Can you find a cheaper price and some reviews?" / "Sure, I'll help search online for prices and reviews…"; "As I talked with my friend, we want to plan a trip this weekend. Can you suggest a good option?" / "Sure, to plan a trip, I'll help check the weather and your agenda…"

Although earlier personal assistants with notification features exhibit basic proactivity, they are confined to rule-based workflows without agent reasoning, e.g., fall or abnormal heart rate alerts [5,25]. While recent work explores proactive LLM agents [36], it is limited to perceiving enclosed desktop environments and offering assistance in computer-use scenarios such as coding. Some agents (e.g., Google's Magic Cue [1]) can provide predefined, text-based assistance within specific apps and functions. In contrast, humans are continuously immersed in massive "contexts" that can be sensed through wearable and mobile devices, and harnessing these contexts to enhance the proactivity of LLM agents holds great potential. Moreover, this seamless, hands-free perception closely aligns with the objective of proactive assistants: reducing both physical and cognitive workload. Although recent works [52,56] explore proactive agents with sensor data, they focus only on limited scenarios and ignore system overhead in real-world deployments.

To bridge this research gap, we propose a proactive agent system that harnesses the rich contexts surrounding humans to proactively deliver unobtrusive assistance. As shown in Fig. 1, unlike reactive LLM agents, which only initiate tasks upon receiving explicit user instructions [33,45,49], proactive LLM agents must continuously perceive their environment to anticipate user intentions and provide unobtrusive assistance. We summarize the unique challenges as follows.

| System | Paradigm | | | | |
|---|---|---|---|---|---|
| [49] | Reactive | ✔ | ✔ | ✘ | ✘ |
| AutoIoT [45] | Reactive | ✔ | ✔ | ✘ | ✘ |
| SocialMind [52] | Proactive | ✔ | ✘ | ✔ | ✘ |
| Proactive Agent [36] | Proactive | ✔ | ✘ | ✘ | ✘ |
| ContextLLM [41] | N.A. | | | | |

First, delivering timely and content-appropriate proactive assistance requires deriving intention-related cues from massive sensor data. Although recent work has explored LLMs and visual LLMs (VLMs) [31] to interpret sensor readings for general purposes [17,50,59], extracting proactive-oriented cues from massive, multi-dimensional, and heterogeneous sensor data remains challenging. Second, unlike existing LLM agents that follow a reactive paradigm based on explicit text instructions [33,49], a context-aware proactive agent must map sensor contexts to user intentions, including the timing of proactive actions and the required tool calls, which challenges LLM reasoning, especially for models running on edge platforms. Third, to avoid missing service opportunities, proactive agents must continuously sense the in-situ contexts surrounding humans and perform ongoing LLM reasoning, creating significant system overhead, especially on resource-constrained mobile devices.
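To make the second challenge concrete, here is a minimal sketch that assumes a hypothetical setup in which the reasoner is prompted to reply in JSON with a `need_help` flag (the timing decision) and a `tool_calls` list (the content decision). The field names, the `ToolCall`/`ProactiveDecision` types, the `parse_decision` helper, and the tool names `get_weather`/`get_agenda` are all illustrative assumptions, not ProAgent's actual interface. The design choice shown is that malformed or ambiguous model output defaults to staying silent, since an unwanted interruption is costly for a proactive assistant.

```python
import json
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToolCall:
    # Hypothetical tool invocation: a tool name plus keyword arguments.
    name: str
    args: dict = field(default_factory=dict)


@dataclass
class ProactiveDecision:
    # "Timing": should the agent intervene at this moment?
    need_help: bool
    # "Content": which tools to call if it does intervene.
    tool_calls: List[ToolCall] = field(default_factory=list)


def parse_decision(raw: str) -> ProactiveDecision:
    """Turn a model reply into a decision, staying silent on malformed output."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ProactiveDecision(need_help=False)
    # Anything other than an explicit {"need_help": true, ...} object means "do not interrupt".
    if not isinstance(data, dict) or data.get("need_help") is not True:
        return ProactiveDecision(need_help=False)
    items = data.get("tool_calls", [])
    if not isinstance(items, list):
        items = []
    calls: List[ToolCall] = []
    for item in items:
        # Skip entries without a string tool name; keep args only if they form a dict.
        if isinstance(item, dict) and isinstance(item.get("name"), str):
            args = item.get("args", {})
            calls.append(ToolCall(item["name"], args if isinstance(args, dict) else {}))
    return ProactiveDecision(need_help=True, tool_calls=calls)


if __name__ == "__main__":
    # Hypothetical reply for the weekend-trip example: check weather and agenda.
    reply = ('{"need_help": true, "tool_calls": ['
             '{"name": "get_weather", "args": {"day": "Saturday"}}, '
             '{"name": "get_agenda"}]}')
    decision = parse_decision(reply)
    print(decision.need_help, [c.name for c in decision.tool_calls])
    # True ['get_weather', 'get_agenda']
```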

In this study, we introduce ProAgent, the first end-to-end proactive agent system that integrates multisensory perception and LLM reasoning to deliver proactive assistance. ProAgent first adopts an on-demand tiered perception strategy that coordinates always-on, low-cost sensors with on-demand, higher-cost sensors to continuously capture proactive-relevant cues. ProAgent then employs a proactive-oriented context extraction approach to derive hierarchical contexts from the massive sensor data, incorporating both sensory and persona cues. Finally, it adopts a context-aware proactive reasoner using VLMs, mapping hierarchical contexts to user needs, including the timing and content of assistance, and the calling of

…(Full text truncated)…
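The on-demand tiered perception strategy described above (always-on, low-cost sensors coordinating on-demand, higher-cost sensors) can be illustrated with a minimal gating loop. This is a sketch under stated assumptions, not ProAgent's implementation: the cheap signals (a normalized audio energy and an IMU motion magnitude), the `capture` callback standing in for an expensive sensor such as a camera frame, and the threshold and cooldown values are all hypothetical placeholders. The expensive sensor fires only when a cheap cue crosses a threshold, and a cooldown bounds how often it can fire.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class CheapReading:
    # Hypothetical always-on, low-cost signals sampled at time t (seconds).
    t: float
    audio_energy: float  # e.g., normalized microphone energy in [0, 1]
    motion: float        # e.g., change in IMU acceleration magnitude


class TieredPerception:
    """Gate a high-cost sensor behind low-cost triggers plus a cooldown."""

    def __init__(self, capture: Callable[[float], str],
                 audio_thresh: float = 0.6, motion_thresh: float = 1.5,
                 cooldown_s: float = 10.0) -> None:
        self.capture = capture            # hypothetical expensive capture (e.g., camera frame)
        self.audio_thresh = audio_thresh  # placeholder thresholds, not tuned values
        self.motion_thresh = motion_thresh
        self.cooldown_s = cooldown_s
        self._last_capture: Optional[float] = None

    def should_capture(self, r: CheapReading) -> bool:
        # A cheap cue must fire AND enough time must have passed since the last capture.
        triggered = r.audio_energy >= self.audio_thresh or r.motion >= self.motion_thresh
        cooled = self._last_capture is None or r.t - self._last_capture >= self.cooldown_s
        return triggered and cooled

    def step(self, r: CheapReading) -> Optional[str]:
        # Only pay for the expensive sensor when a cheap cue suggests something changed.
        if self.should_capture(r):
            self._last_capture = r.t
            return self.capture(r.t)
        return None


if __name__ == "__main__":
    tp = TieredPerception(capture=lambda t: f"frame@{t:.0f}s")
    stream = [CheapReading(0.0, 0.1, 0.2), CheapReading(2.0, 0.8, 0.1),
              CheapReading(5.0, 0.9, 0.0), CheapReading(14.0, 0.2, 2.0)]
    frames: List[str] = []
    for reading in stream:
        out = tp.step(reading)
        if out is not None:
            frames.append(out)
    print(frames)  # ['frame@2s', 'frame@14s']
```

In this toy stream the reading at 5 s is suppressed by the cooldown even though its audio cue fires, which is the energy-versus-responsiveness trade-off that any such gating policy has to tune.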

This content is AI-processed based on ArXiv data.