Audio-Based Tactile Human-Robot Interaction Recognition

This study explores the use of microphones placed on a robot’s body to detect tactile interactions via sounds produced when the hard shell of the robot is touched. This approach is proposed as an alternative to traditional methods using joint torque sensors or 6-axis force/torque sensors. Two Adafruit I2S MEMS microphones integrated with a Raspberry Pi 4 were positioned on the torso of a Pollen Robotics Reachy robot to capture audio signals from various touch types on the robot arms (tapping, knocking, rubbing, stroking, scratching, and pressing). A convolutional neural network was trained for touch classification on a dataset of 336 pre-processed samples (48 samples per touch type). The model shows high classification accuracy between touch types with distinct acoustic dominant frequencies.

💡 Research Summary

The paper, “Audio-Based Tactile Human-Robot Interaction Recognition,” proposes using acoustic signals to perceive physical touch in Human-Robot Interaction (HRI). Tactile sensing in robotics has traditionally relied on joint torque sensors or 6-axis force/torque sensors. These are effective, but they are expensive and hard to integrate across the whole surface of a robot’s body. The study proposes a cheaper and potentially more scalable alternative: microphones mounted on the robot’s body that pick up the sounds produced when its hard shell is touched.

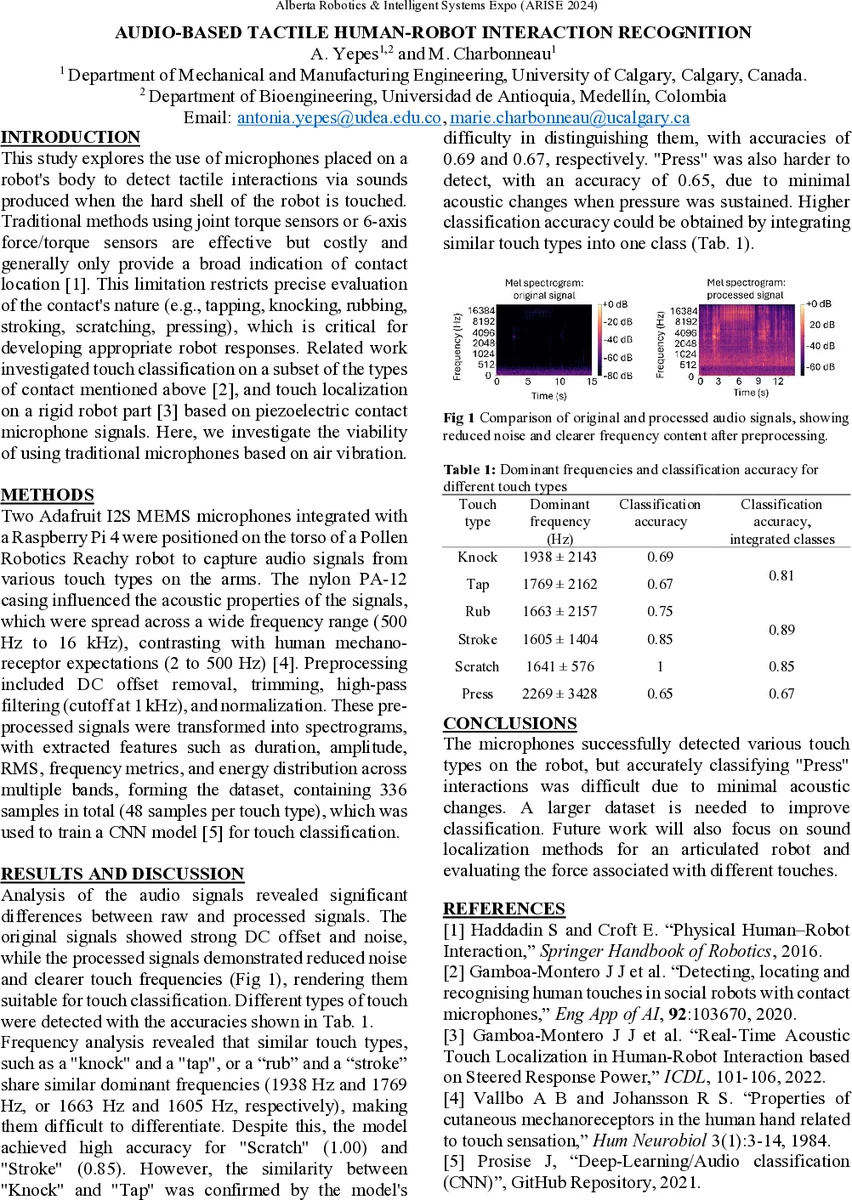

The experimental setup used a Pollen Robotics Reachy robot with two Adafruit I2S MEMS microphones connected to a Raspberry Pi 4. The microphones were placed on the robot’s torso and recorded the sounds produced by touches applied to its arms. Six touch types are named: tapping, knocking, rubbing, stroking, scratching, and pressing. The model was trained on a dataset of 336 pre-processed audio samples, with 48 samples per class. Since 336 / 48 = 7, the dataset evidently contains seven classes, one more than the six touch types named here.
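The quoted figures (336 samples at 48 per class) work out to seven equally sized classes. With a dataset this small, a stratified split keeps every class equally represented in training and evaluation. The following is a minimal sketch of such a split, not the authors' procedure: the integer class labels, the 25% test fraction, and the seed are all assumptions made for illustration.

```python
import numpy as np

def stratified_split(labels, test_fraction=0.25, seed=0):
    """Split sample indices so every class keeps the same train/test ratio."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    train_idx, test_idx = [], []
    for cls in np.unique(labels):
        # Shuffle the indices of this class, then cut off the test share.
        idx = rng.permutation(np.flatnonzero(labels == cls))
        n_test = int(round(len(idx) * test_fraction))
        test_idx.extend(idx[:n_test])
        train_idx.extend(idx[n_test:])
    return np.array(train_idx), np.array(test_idx)

# 336 samples at 48 per class gives 336 // 48 = 7 classes.
n_samples, per_class = 336, 48
n_classes = n_samples // per_class
# Hypothetical integer labels 0..6; the real class names are not assumed here.
labels = np.repeat(np.arange(n_classes), per_class)
train_idx, test_idx = stratified_split(labels)
```

With these assumed settings each class contributes 36 training and 12 test samples.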

The study used a Convolutional Neural Network (CNN) to classify the recordings. CNNs are well suited to audio once the signal has been converted into a time-frequency representation such as a spectrogram, because each touch type then appears as a characteristic two-dimensional pattern. Impulsive contacts such as knocking or tapping, for example, would be expected to produce short bursts, whereas rubbing or stroking produce sustained friction sounds. The reported classification accuracy is high between touch types whose dominant acoustic frequencies are distinct, which suggests that touch types with similar spectra are harder to separate.
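To make the idea of a frequency-based representation and a "dominant frequency" concrete, here is a minimal NumPy sketch of a log-power spectrogram and a dominant-frequency estimate. It is an illustration under assumptions, not the paper's pre-processing: the function names, the Hann window, the 1024-sample frame, and the 256-sample hop are all choices made here, and the signal must be at least one frame long.

```python
import numpy as np

def log_spectrogram(signal, sample_rate, frame_len=1024, hop=256):
    """Return (freqs, log_power) from a Hann-windowed short-time Fourier transform.

    log_power has shape (n_frames, frame_len // 2 + 1), in dB.
    """
    signal = np.asarray(signal, dtype=float)
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    # Slice the signal into overlapping frames and apply the window to each.
    frames = np.stack([signal[i * hop : i * hop + frame_len] * window
                       for i in range(n_frames)])
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    freqs = np.fft.rfftfreq(frame_len, d=1.0 / sample_rate)
    # The small constant avoids log(0) on silent bins.
    return freqs, 10.0 * np.log10(power + 1e-12)

def dominant_frequency(signal, sample_rate, frame_len=1024, hop=256):
    """Frequency bin with the highest log-power averaged over all frames."""
    freqs, log_power = log_spectrogram(signal, sample_rate, frame_len, hop)
    return freqs[np.argmax(log_power.mean(axis=0))]
```

The two-dimensional `log_power` array is the kind of input a CNN could consume as a single-channel image, while `dominant_frequency` gives a quick check of whether two touch types occupy different parts of the spectrum.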

The practical appeal is cost. Inexpensive MEMS microphones and a Raspberry Pi stand in for high-end force sensors, which suggests a route to touch-aware robots at much lower hardware cost. Because microphones on the torso can pick up contact made on the arms, the approach could in principle cover large parts of a robot’s surface with few sensors, acting as a kind of “synthetic skin” through sound.

One open challenge is ambient noise. Because the system relies on microphones, external sounds could interfere with touch recognition. Future work could address this with noise-robust processing and a larger dataset recorded in more realistic acoustic environments. Overall, the paper offers a proof of concept for audio-based tactile sensing as a low-cost, scalable way for robots to perceive human touch.
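One common way to probe the noise concern raised above is to mix recorded touch sounds with background noise at a controlled signal-to-noise ratio (SNR) and re-evaluate the classifier. The sketch below shows only the mixing step. It is an assumption about how such a test might be set up, not something the paper describes, and the function name `mix_at_snr` is hypothetical. The noise clip must be at least as long as the clean clip and must not be silent.

```python
import numpy as np

def mix_at_snr(clean, noise, snr_db):
    """Add noise to a clean clip, scaled so the result has the requested SNR in dB."""
    clean = np.asarray(clean, dtype=float)
    noise = np.asarray(noise, dtype=float)[: len(clean)]
    clean_power = np.mean(clean ** 2)
    noise_power = np.mean(noise ** 2)
    # Choose the gain so that clean_power / (gain**2 * noise_power) == 10**(snr_db / 10).
    gain = np.sqrt(clean_power / (noise_power * 10.0 ** (snr_db / 10.0)))
    return clean + gain * noise
```

Sweeping `snr_db` from high to low values and plotting accuracy against it would show how quickly recognition degrades as the environment gets louder.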
