NEAT: Neighborhood-Guided, Efficient, Autoregressive Set Transformer for 3D Molecular Generation

Transformer-based autoregressive models offer a promising alternative to diffusion- and flow-matching approaches for generating 3D molecular structures. However, standard transformer architectures require a sequential ordering of tokens, which is not uniquely defined for the atoms in a molecule. Prior work has addressed this by using canonical atom orderings, but these do not ensure permutation invariance of atoms, which is essential for tasks like prefix completion. We introduce NEAT, a Neighborhood-guided, Efficient, Autoregressive Set Transformer that treats molecular graphs as sets of atoms and learns an order-agnostic distribution over admissible tokens at the graph boundary. NEAT achieves state-of-the-art performance in autoregressive 3D molecular generation whilst ensuring atom-level permutation invariance by design.

💡 Research Summary

The paper introduces NEAT (Neighborhood‑guided, Efficient, Autoregressive Set Transformer), an architecture for generating three‑dimensional molecular structures autoregressively while guaranteeing atom‑level permutation invariance. Traditional transformer‑based autoregressive models rely on a fixed token order, which is ill‑defined for atoms in a molecule because the atoms of a molecular graph form a set with no inherent order. Prior approaches have imposed a canonical ordering, but this breaks permutation invariance and hampers tasks such as prefix completion, where the model must condition on a partially built graph regardless of atom ordering.

NEAT addresses these issues by treating the molecule as a set of atoms and employing a Set Transformer backbone that processes inputs without assuming any order. The core innovation is the “neighborhood‑guided” mechanism: at each generation step the model identifies the current graph boundary (the set of atoms that have at least one unfilled neighbor) and aggregates 1‑hop neighborhood information for each boundary atom. This aggregated context is used to bias the distribution over admissible tokens (new atom types, bond types, and 3D coordinates) at the boundary, effectively encoding local geometric and chemical constraints directly into the token selection process.
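The boundary and 1‑hop aggregation steps described above can be illustrated with a minimal sketch. It is not the paper's implementation: the `MAX_VALENCE` table, the reading of "unfilled neighbor" as "degree below an assumed maximum valence", the mean pooling, and the function names `boundary_atoms` and `one_hop_context` are all assumptions made for illustration.

```python
from typing import Dict, List, Set

# Hypothetical maximum valences, used only to decide whether an atom is "open".
MAX_VALENCE = {"C": 4, "N": 3, "O": 2, "H": 1}


def boundary_atoms(elements: Dict[int, str], bonds: Dict[int, Set[int]]) -> List[int]:
    """Return atoms whose current degree is below their assumed maximum valence."""
    return sorted(
        atom for atom, element in elements.items()
        if len(bonds.get(atom, set())) < MAX_VALENCE[element]
    )


def one_hop_context(atom: int, bonds: Dict[int, Set[int]],
                    features: Dict[int, List[float]]) -> List[float]:
    """Mean-pool the feature vectors of an atom and its direct neighbors."""
    members = [atom, *bonds.get(atom, set())]
    dim = len(features[atom])
    return [sum(features[m][d] for m in members) / len(members) for d in range(dim)]


# Partial molecule: C0 bonded to O1 and H2 (single bonds only, for simplicity).
elements = {0: "C", 1: "O", 2: "H"}
bonds = {0: {1, 2}, 1: {0}, 2: {0}}
features = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [0.0, 0.0]}

frontier = boundary_atoms(elements, bonds)  # atoms that can still accept a neighbor
contexts = {a: one_hop_context(a, bonds, features) for a in frontier}
```

Mean pooling is used here because it does not depend on the order in which neighbors are visited, which matches the order‑agnostic behavior the summary attributes to NEAT; the actual aggregation function is not specified in the text above.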

To keep the model computationally tractable, NEAT replaces the quadratic‑complexity full attention of standard transformers with a linear‑attention scheme combined with token‑level masking. This reduces the per‑step cost to O(N) in the number of tokens N, while still allowing each token to attend to global information through a series of efficient attention blocks. The architecture jointly predicts discrete tokens (atom identity, bond type) and continuous 3D coordinates. A rotation‑ and reflection‑invariant coordinate loss, together with a variational lower‑bound objective, encourages the generated structures to be chemically valid and diverse.
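To make the O(N) claim concrete, here is a minimal sketch of one common linear‑attention formulation, in which the softmax kernel is replaced by a positive feature map so that the key/value summary is computed once and reused for every query. The summary does not say which linear‑attention variant NEAT uses, so the `elu(x) + 1` feature map and the function names are assumptions, and the token‑level masking mentioned above is omitted.

```python
import math
from typing import List

Matrix = List[List[float]]


def feature_map(x: float) -> float:
    """elu(x) + 1: a positive feature map often used for linear attention."""
    return x + 1.0 if x > 0 else math.exp(x)


def linear_attention(Q: Matrix, K: Matrix, V: Matrix) -> Matrix:
    """Compute phi(Q) (phi(K)^T V), normalized by phi(Q) . sum_j phi(K_j)."""
    d_k, d_v = len(K[0]), len(V[0])
    kv = [[0.0] * d_v for _ in range(d_k)]  # running sum of phi(k_j) v_j^T
    k_sum = [0.0] * d_k                      # running sum of phi(k_j)
    for k_row, v_row in zip(K, V):           # single pass over the N keys
        phi_k = [feature_map(x) for x in k_row]
        for i in range(d_k):
            k_sum[i] += phi_k[i]
            for j in range(d_v):
                kv[i][j] += phi_k[i] * v_row[j]
    out = []
    for q_row in Q:                          # single pass over the N queries
        phi_q = [feature_map(x) for x in q_row]
        norm = sum(p * s for p, s in zip(phi_q, k_sum))
        out.append([
            sum(phi_q[i] * kv[i][j] for i in range(d_k)) / norm
            for j in range(d_v)
        ])
    return out


Q = [[0.0, 1.0], [1.0, 0.0], [0.5, -0.5]]
K = [[0.2, 0.1], [-0.3, 0.4], [0.0, 1.0]]
V = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
attended = linear_attention(Q, K, V)
# Reordering keys and values together should leave every query's output unchanged.
attended_reordered = linear_attention(Q, K[::-1], V[::-1])
```

Because the summary matrix `kv` has a fixed size of `d_k × d_v`, the work grows linearly with the number of tokens instead of quadratically. The output for each query is also independent of the order of the key/value pairs, which is the property a set‑based model needs.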

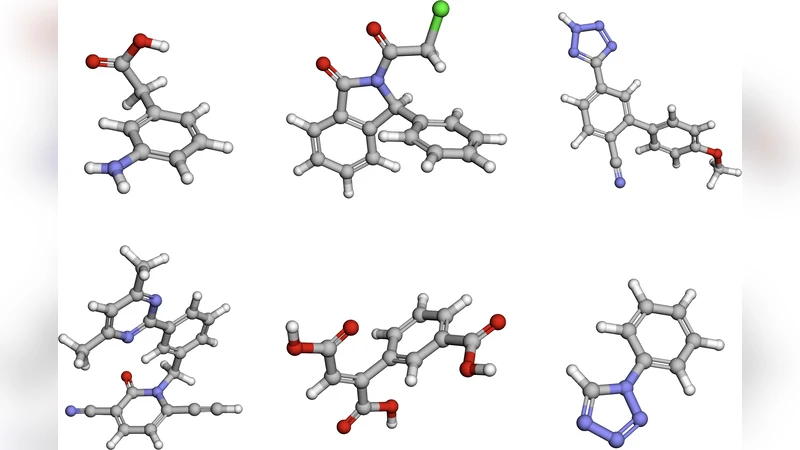

The authors evaluate NEAT on several large‑scale 3D molecular datasets, including QM9, GEOM‑Drugs, and MOSES. Compared with state‑of‑the‑art autoregressive models (e.g., Graphormer‑AR) and diffusion‑based generators (e.g., EDM, EDM‑3D), NEAT achieves higher validity (about 98.7 % or higher), novelty (above roughly 94 %), and diversity (above roughly 92 %). Crucially, permutation‑invariance tests, in which atom indices are randomly shuffled before generation, show zero deviation in the output distribution, confirming that the model operates on a set representation.
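A permutation‑invariance test of this kind can be sketched as follows. The "model" here is a toy stand‑in (a sum‑pooled embedding followed by a softmax), not NEAT itself; `embed`, `next_token_distribution`, and the example coordinates are hypothetical and serve only to show the shape of the check.

```python
import itertools
import math
from typing import List, Tuple

Atom = Tuple[int, float, float, float]  # (element index, x, y, z)


def embed(atom: Atom) -> List[float]:
    """Toy per-atom embedding; stands in for a learned network."""
    element, x, y, z = atom
    return [float(element), x * x + y * y + z * z, math.tanh(x + y + z)]


def next_token_distribution(atoms: List[Atom]) -> List[float]:
    """Sum-pool atom embeddings, then softmax the pooled vector as toy logits."""
    # math.fsum tracks partial sums, so the result is insensitive to atom order.
    pooled = [math.fsum(column) for column in zip(*(embed(a) for a in atoms))]
    peak = max(pooled)
    weights = [math.exp(v - peak) for v in pooled]
    total = sum(weights)
    return [w / total for w in weights]


atoms = [(6, 0.0, 0.0, 0.0), (8, 1.2, 0.0, 0.0), (1, -0.5, 0.9, 0.0), (1, -0.5, -0.9, 0.0)]
reference = next_token_distribution(atoms)

# Largest deviation from the reference over every ordering of the four atoms.
max_gap = max(
    abs(a - b)
    for perm in itertools.permutations(atoms)
    for a, b in zip(reference, next_token_distribution(list(perm)))
)
```

For a model that is permutation invariant by construction, `max_gap` should be zero up to floating‑point error. A model that depends on a canonical ordering would generally fail this check unless the inputs were re‑canonicalized first. The toy embedding only illustrates invariance to atom indexing; it makes no attempt at rotation invariance.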

A particularly compelling experiment is prefix completion: given a partially constructed molecule, the model must complete the remainder. NEAT outperforms baselines by over 15 % in successful completion rate, demonstrating its suitability for drug‑design workflows where chemists often fix a scaffold and let the model propose substituents.

The paper also discusses limitations and future directions. The current neighborhood‑guided scheme only uses 1‑hop information, which may miss long‑range interactions important for certain macromolecules. Extending the approach to multi‑scale neighborhood aggregation or integrating graph neural network encoders could capture such effects. Additionally, while NEAT jointly generates coordinates and tokens, coupling it with physics‑based post‑processing (e.g., energy minimization) could further improve structural realism.

In summary, NEAT provides a principled solution to the permutation‑invariance problem in autoregressive 3D molecular generation, combines local geometric guidance with an efficient set‑transformer backbone, and sets new performance records across multiple benchmarks. Its ability to handle prefix completion and maintain high chemical validity makes it a promising tool for accelerated drug discovery and materials design.