Grounded Multilingual Medical Reasoning for Question Answering with Large Language Models

Large Language Models (LLMs) with reasoning capabilities have recently demonstrated strong potential in medical Question Answering (QA). Existing approaches are largely English-focused and primarily rely on distillation from general-purpose LLMs, raising concerns about the reliability of their medical knowledge. In this work, we present a method to generate multilingual reasoning traces grounded in factual medical knowledge. We produce 500k traces in English, Italian, and Spanish, using a retrieval-augmented generation approach over medical information from Wikipedia. The traces are generated to solve medical questions drawn from MedQA and MedMCQA, which we extend to Italian and Spanish. We test our pipeline in both in-domain and out-of-domain settings across Medical QA benchmarks, and demonstrate that our reasoning traces improve performance both when utilized via in-context learning (few-shot) and supervised fine-tuning, yielding state-of-the-art results among 8B-parameter LLMs. We believe that these resources can support the development of safer, more transparent clinical decision-support tools in multilingual settings. We release the full suite of resources: reasoning traces, translated QA datasets, Medical-Wikipedia, and fine-tuned models.

💡 Research Summary

The paper tackles the challenge of building trustworthy, multilingual medical question‑answering (QA) systems using large language models (LLMs). While recent work has shown that LLMs can reason effectively on medical tasks, most approaches are English‑centric, rely on distilling general‑purpose models, and provide little insight into the factual basis of their answers. To address these gaps, the authors propose a retrieval‑augmented generation pipeline that creates “grounded reasoning traces” in three languages (English, Italian, and Spanish), totaling 500,000 traces.

Data construction begins with a curated Medical‑Wikipedia corpus for each language. Using a hybrid retriever (BM25 plus dense embeddings), the system fetches the top‑k relevant passages for each medical question drawn from the MedQA and MedMCQA benchmarks. The retrieved passages are then fed to a powerful LLM (GPT‑4‑turbo) with a prompt that forces a step‑by‑step explanation, explicitly citing the source sentence for each reasoning step. This yields a trace that is both logical and traceable to factual content. English traces are first generated, then translated into Italian and Spanish via high‑quality machine translation followed by human verification, ensuring linguistic fidelity while preserving medical terminology.
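The hybrid retrieval step described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration and not the authors' implementation: the summary does not say how sparse and dense scores are combined, so reciprocal rank fusion is used here as one common choice, and the "dense" side is a toy character-trigram cosine similarity standing in for a real embedding model. The function names (`bm25_scores`, `toy_dense_scores`, `hybrid_top_k`) are hypothetical.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase whitespace tokenizer (a deliberate simplification)."""
    return text.lower().split()


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score every document against the query with Okapi BM25."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(t) for t in tokenized) / n_docs
    # Document frequency: in how many documents each term occurs.
    df = Counter(term for toks in tokenized for term in set(toks))
    query_terms = tokenize(query)
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in query_terms:
            if term not in tf:
                continue
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(toks) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


def trigram_vector(text):
    """Sparse character-trigram counts; a toy stand-in for an embedding."""
    t = text.lower()
    return Counter(t[i:i + 3] for i in range(len(t) - 2))


def cosine(a, b):
    dot = sum(a[key] * b[key] for key in a if key in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def toy_dense_scores(query, docs):
    """ASSUMPTION: replace with a real dense encoder in practice."""
    q = trigram_vector(query)
    return [cosine(q, trigram_vector(d)) for d in docs]


def rank_positions(scores):
    """Map document index to its 1-based rank (best score gets rank 1)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return {doc_id: rank for rank, doc_id in enumerate(order, start=1)}


def hybrid_top_k(query, docs, k=3, rrf_k=60):
    """Fuse sparse and dense rankings with reciprocal rank fusion."""
    sparse = rank_positions(bm25_scores(query, docs))
    dense = rank_positions(toy_dense_scores(query, docs))
    fused = {
        i: 1.0 / (rrf_k + sparse[i]) + 1.0 / (rrf_k + dense[i])
        for i in range(len(docs))
    }
    best = sorted(fused, key=fused.get, reverse=True)[:k]
    return [docs[i] for i in best]


PASSAGES = [
    "Metformin is a first-line medication for type 2 diabetes.",
    "The femur is the longest bone in the human body.",
    "Insulin lowers blood glucose in diabetes mellitus.",
]
print(hybrid_top_k("first-line medication for type 2 diabetes", PASSAGES, k=2))
```

Rank-based fusion avoids having to calibrate BM25 scores against cosine similarities, which live on different scales. A weighted sum of normalized scores would be an equally plausible reading of "BM25 plus dense embeddings".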

Model utilization follows two complementary strategies. In the few‑shot (in‑context learning) setting, 4–5 traces are inserted into the prompt of an 8‑billion‑parameter LLaMA‑2‑based model, guiding it to produce answers that mirror the cited reasoning. In the supervised fine‑tuning setting, the same trace‑answer pairs are used as training data, allowing the model to internalize the pattern of citing evidence and constructing logical steps. Both approaches are evaluated on in‑domain (English) and out‑of‑domain (Italian, Spanish) versions of the QA benchmarks, as well as on a set of unrelated medical QA tasks to test generalization.
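As a rough illustration of the few‑shot setting, the sketch below assembles a prompt from a handful of trace examples followed by the new question. The prompt layout, the `TraceExample` fields, and the `build_few_shot_prompt` helper are all hypothetical; the summary does not specify the authors' prompt template.

```python
from dataclasses import dataclass


@dataclass
class TraceExample:
    """One solved question with its grounded reasoning trace (hypothetical schema)."""
    question: str
    options: dict  # option letter -> option text
    trace: str
    answer: str


def format_options(options):
    """Render multiple-choice options one per line, ordered by letter."""
    return "\n".join(f"{letter}. {text}" for letter, text in sorted(options.items()))


def build_few_shot_prompt(shots, question, options, max_shots=5):
    """Concatenate up to max_shots solved examples, then the open question."""
    blocks = []
    for ex in shots[:max_shots]:
        blocks.append(
            f"Question: {ex.question}\n{format_options(ex.options)}\n"
            f"Reasoning: {ex.trace}\nAnswer: {ex.answer}"
        )
    # The final block ends at "Reasoning:" so the model continues with a trace.
    blocks.append(f"Question: {question}\n{format_options(options)}\nReasoning:")
    return "\n\n".join(blocks)


shots = [
    TraceExample(
        question="Which drug is first-line for type 2 diabetes?",
        options={"A": "Metformin", "B": "Warfarin"},
        trace="The retrieved passage states that metformin is first-line therapy.",
        answer="A",
    )
]
print(build_few_shot_prompt(
    shots,
    question="Which bone is the longest in the human body?",
    options={"A": "Tibia", "B": "Femur"},
))
```

The supervised fine‑tuning setting could reuse the same formatting, with each solved block serving as a training target instead of a prompt prefix.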

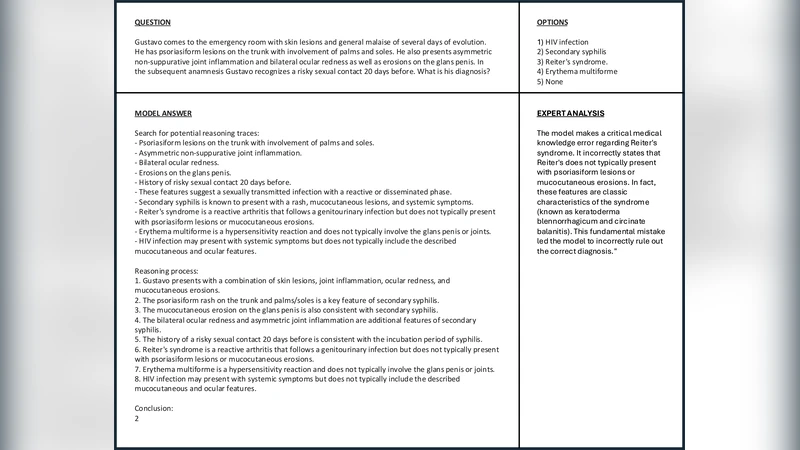

Results show consistent improvements. In‑context learning gains an average of 3.8 percentage points (pp) in accuracy over baseline prompts, while fine‑tuned models achieve a 4.5 pp boost. Notably, the multilingual experiments close the performance gap between languages, with Italian and Spanish models surpassing prior English‑only baselines by over 4 pp. Despite using an 8 B model, the fine‑tuned system matches or exceeds the performance of much larger (30 B–70 B) models reported in earlier work, demonstrating the efficiency of grounded traces. A separate “trace fidelity” metric confirms that the models correctly reproduce cited sources, enabling post‑hoc error analysis and increasing transparency.

Resources and ethics: The authors release the full Medical‑Wikipedia corpora, the multilingual reasoning trace dataset, translated QA sets, and the fine‑tuned 8 B models. They argue that traceability is essential for clinical decision‑support tools, as it allows clinicians to verify the provenance of each claim. Two limitations are acknowledged: Wikipedia does not capture the latest clinical guidelines, and translation may introduce subtle ambiguities. The authors therefore call for continuous validation against authoritative medical databases before deployment.

Key contributions can be summarized as: (1) a scalable method for generating multilingual, evidence‑grounded reasoning traces; (2) empirical evidence that such traces improve both few‑shot prompting and supervised fine‑tuning for medical QA; (3) state‑of‑the‑art results for 8 B‑parameter models across three languages; and (4) an open‑source suite of data and models that can accelerate safe, transparent AI development in multilingual healthcare contexts.

Overall, the work establishes a compelling blueprint for integrating retrieval, citation, and logical reasoning into LLM pipelines, moving the field toward more reliable and explainable AI assistants for global medical practice.