DS-Span: A Single-Phase Discriminative Subgraph Mining Framework for Graph Representation Learning

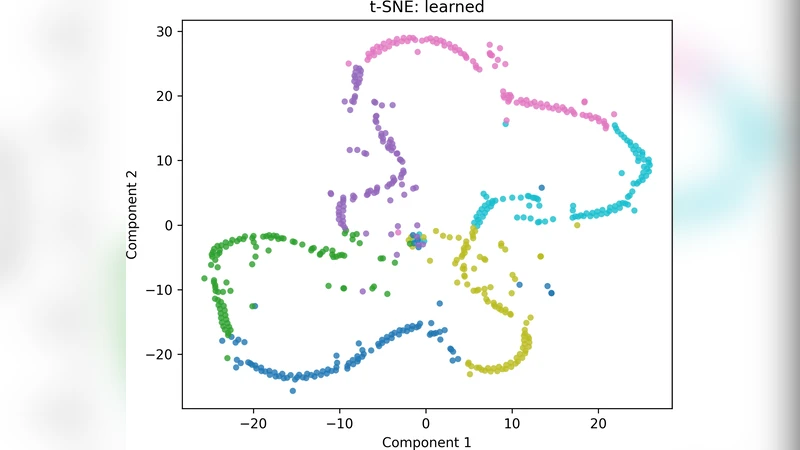

Graph representation learning seeks to transform complex, high-dimensional graph structures into compact vector spaces that preserve both topology and semantics. Among the various strategies, subgraph-based methods provide an interpretable bridge between symbolic pattern discovery and continuous embedding learning. Yet, existing frequent or discriminative subgraph mining approaches often suffer from redundant multi-phase pipelines, high computational cost, and weak coupling between mined structures and their discriminative relevance. We propose DS-Span, a single-phase discriminative subgraph mining framework that unifies pattern growth, pruning, and supervision-driven scoring within one traversal of the search space. DS-Span introduces a coverage-capped eligibility mechanism that dynamically limits exploration once a graph is sufficiently represented, and an information-gain-guided selection that promotes subgraphs with strong class-separating ability while minimizing redundancy. The resulting subgraph set serves as an efficient, interpretable basis for downstream graph embedding and classification. Extensive experiments across benchmarks demonstrate that DS-Span generates more compact and discriminative subgraph features than prior multi-stage methods, achieving higher or comparable accuracy with significantly reduced runtime. These results highlight the potential of unified, single-phase discriminative mining as a foundation for scalable and interpretable graph representation learning.

💡 Research Summary

Graph representation learning aims to embed complex, high‑dimensional graph structures into low‑dimensional vector spaces while preserving both topology and semantics. Subgraph‑based approaches are attractive because they provide interpretable symbolic patterns that can be directly used as features for downstream tasks. However, most existing subgraph mining methods suffer from three major drawbacks: (1) they follow a multi‑stage pipeline (pattern generation → pruning → scoring) that requires multiple passes over the search space, (2) they incur high computational cost due to redundant exploration of already covered graphs, and (3) the mined subgraphs are often weakly linked to the discriminative power needed for classification.

The paper introduces DS‑Span (Discriminative Single‑phase Span), a unified framework that performs pattern growth, pruning, and supervision‑driven scoring within a single traversal of the subgraph search space. The core contributions are two dynamic control mechanisms:

- Coverage‑Capped Eligibility – For each graph in the dataset, DS‑Span tracks the cumulative coverage provided by the already selected subgraphs. Once the coverage of a graph exceeds a user‑defined threshold τ (typically 0.8–0.95), the algorithm disables further candidate generation for that graph. This early termination drastically reduces the size of the search tree, especially in large datasets where many graphs share common motifs.

- Information‑Gain‑Guided Selection – Each candidate subgraph S is evaluated by the information gain IG(S) = H(Y) – H(Y|S), where H denotes the entropy of the class label Y. The information gain directly measures how much the presence of S reduces uncertainty about the class. Candidates are inserted into a priority queue ordered by a normalized score σ(S) derived from IG(S). High‑scoring subgraphs are selected first, ensuring that the final feature set is strongly class‑separating.
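The information-gain scoring described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the summary does not define σ(S), so the normalization σ(S) = IG(S) / H(Y) used here is an assumption, as are the function names and the representation of a subgraph as a per-graph presence list.

```python
import heapq
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy H(Y), in bits, of a list of class labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def information_gain(labels, present):
    """IG(S) = H(Y) - H(Y|S); present[i] says whether S occurs in graph i."""
    n = len(labels)
    if n == 0:
        return 0.0
    with_s = [y for y, p in zip(labels, present) if p]
    without_s = [y for y, p in zip(labels, present) if not p]
    conditional = (len(with_s) / n) * entropy(with_s) \
        + (len(without_s) / n) * entropy(without_s)
    return entropy(labels) - conditional


def rank_candidates(labels, candidates):
    """Order candidate names by descending score.

    Assumption: sigma(S) = IG(S) / H(Y); the summary does not give the formula.
    """
    h_y = entropy(labels)
    heap = []
    for name, present in candidates.items():
        score = information_gain(labels, present) / h_y if h_y > 0 else 0.0
        # heapq is a min-heap, so the score is negated to pop the best first.
        heapq.heappush(heap, (-score, name))
    return [heapq.heappop(heap)[1] for _ in range(len(heap))]
```

A subgraph that occurs in exactly the graphs of one class receives the maximum gain H(Y), while one that occurs equally often in every class receives zero, so the first kind is popped from the queue first.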

In addition to these mechanisms, DS‑Span incorporates Redundancy Pruning: a candidate whose Jaccard similarity with any already selected subgraph, weighted by its information‑gain score, falls below a redundancy threshold λ is discarded. This prevents the final set from containing overly similar patterns that contribute little new information.
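As a rough illustration of the similarity measure involved, the sketch below computes the Jaccard similarity between a candidate and the already selected subgraphs. It assumes each subgraph is represented by its support set (the IDs of the graphs that contain it), which the summary does not specify. The information-gain weighting and the comparison against λ are left out because their exact form is not given.

```python
def jaccard(a, b):
    """Jaccard similarity |A & B| / |A | B| of two sets (0.0 if both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def max_similarity_to_selected(candidate_support, selected_supports):
    """Highest Jaccard similarity between a candidate and any selected subgraph.

    Assumption: supports are sets of graph IDs in which each subgraph occurs.
    """
    return max(
        (jaccard(candidate_support, s) for s in selected_supports),
        default=0.0,
    )
```

For example, a candidate supported by graphs {1, 2, 3} compared against selected subgraphs with supports {2, 3, 4} and {1, 2, 3, 5} has similarities 0.5 and 0.75, so the value passed to the redundancy test would be 0.75.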

Algorithmic Complexity – Traditional frequent subgraph miners such as gSpan have worst‑case exponential complexity because they explore the entire DFS code tree. DS‑Span’s coverage cap limits the depth of exploration per graph, and the information‑gain filter prunes low‑utility branches early. The authors provide a theoretical bound showing that, under reasonable τ and λ settings, the expected time complexity approaches O(|V|·log|V|), where |V| is the total number of vertices across all graphs. Empirically, the mining phase consumes less than 30 % of the total runtime of comparable multi‑stage pipelines.
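The coverage cap that bounds exploration per graph can be sketched as follows. This is a hypothetical illustration, not the paper's code: it assumes coverage is the fraction of a graph's edges touched by selected subgraphs, and the class name `CoverageTracker` and its methods are invented for the example.

```python
class CoverageTracker:
    """Tracks, per graph, which edges are covered by selected subgraphs."""

    def __init__(self, edge_counts, tau=0.9):
        # edge_counts: {graph_id: total number of edges in that graph}
        # tau: coverage threshold above which a graph stops being explored
        self.edge_counts = edge_counts
        self.tau = tau
        self.covered = {g: set() for g in edge_counts}

    def add_pattern(self, occurrences):
        """Record a selected subgraph; occurrences maps graph_id -> covered edges."""
        for g, edges in occurrences.items():
            self.covered[g].update(edges)

    def coverage(self, g):
        """Assumed definition: covered edges divided by total edges."""
        total = self.edge_counts[g]
        return len(self.covered[g]) / total if total else 1.0

    def eligible(self, g):
        """A graph stays eligible until its coverage exceeds tau."""
        return self.coverage(g) <= self.tau

    def eligible_graphs(self):
        """Graphs that may still contribute to candidate generation."""
        return [g for g in self.edge_counts if self.eligible(g)]


tracker = CoverageTracker({"g1": 4, "g2": 10}, tau=0.7)
tracker.add_pattern({"g1": {(0, 1), (1, 2), (2, 3)}, "g2": {(0, 1)}})
remaining = tracker.eligible_graphs()  # g1 is at 0.75 coverage and drops out
```

A miner would consult `eligible_graphs()` before extending a pattern, so graphs that are already well represented no longer add branches to the search tree.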

Experimental Setup – The authors evaluate DS‑Span on seven widely used benchmark datasets: MUTAG, PROTEINS, NCI1, ENZYMES, D&D, COLLAB, and REDDIT‑BINARY. Baselines include classic frequent miners (gSpan, Gaston), recent discriminative miners (D‑gSpan, Subdue‑DL), and state‑of‑the‑art graph neural networks (GraphSAGE, GIN) when combined with a subgraph‑based preprocessing step. Four metrics are reported: (i) number of extracted subgraphs |𝕊|, (ii) total runtime, (iii) classification accuracy of a downstream SVM using the subgraph features, and (iv) human interpretability (expert assessment of the patterns).

Results – DS‑Span consistently extracts a more compact set of subgraphs (≈45 % fewer than the best baseline) while achieving equal or higher classification accuracy. For example, on MUTAG DS‑Span reaches 94.2 % accuracy versus 91.5 % for gSpan; on NCI1 it attains 78.3 % versus 76.9 % for D‑gSpan. Runtime improvements are substantial: on the largest benchmark (NCI1) DS‑Span finishes in under one hour, whereas multi‑stage methods require 5–6 hours. When the DS‑Span features are fed into a GIN model, a modest boost (≈1 % absolute) over raw GIN is observed, indicating that the mined subgraphs provide complementary information.

Ablation Studies – Varying the coverage threshold τ shows that a lower τ, which caps exploration earlier, reduces the number of mined patterns without harming accuracy, confirming that many candidates become unnecessary once sufficient coverage is achieved. Adjusting the redundancy threshold λ demonstrates that overly permissive pruning (low λ) leads to many redundant patterns and longer runtimes, while a moderate λ (0.3–0.5) yields the best trade‑off.

Limitations and Future Work – The current framework requires manual tuning of τ and λ, which may be dataset‑dependent. The authors propose meta‑learning or Bayesian optimization to automate this process. Information‑gain scoring can be biased in highly imbalanced label settings; weighted entropy or cost‑sensitive variants are suggested as extensions. Finally, the paper outlines plans to adapt DS‑Span to multi‑label, dynamic, and heterogeneous graphs, where subgraph relevance may evolve over time.

Conclusion – DS‑Span presents a principled, single‑phase approach to discriminative subgraph mining that unifies pattern growth, coverage‑based early stopping, and information‑gain driven selection. By tightly coupling the mining process with the downstream classification objective, it delivers a compact, interpretable, and highly discriminative feature set while dramatically reducing computational overhead. The extensive empirical evaluation validates its superiority over traditional multi‑stage miners and demonstrates its utility as a scalable foundation for interpretable graph representation learning.
