📝 Original Info

- Title: PRiSM 과학적 추론을 위한 동적 멀티모달 벤치마크

- ArXiv ID: 2512.05930

- Date: 2025-12-05

- Authors: Shima Imani, Seungwhan Moon, Adel Ahmadyan, Lu Zhang, Kirmani Ahmed, Babak Damavandi

📝 Abstract

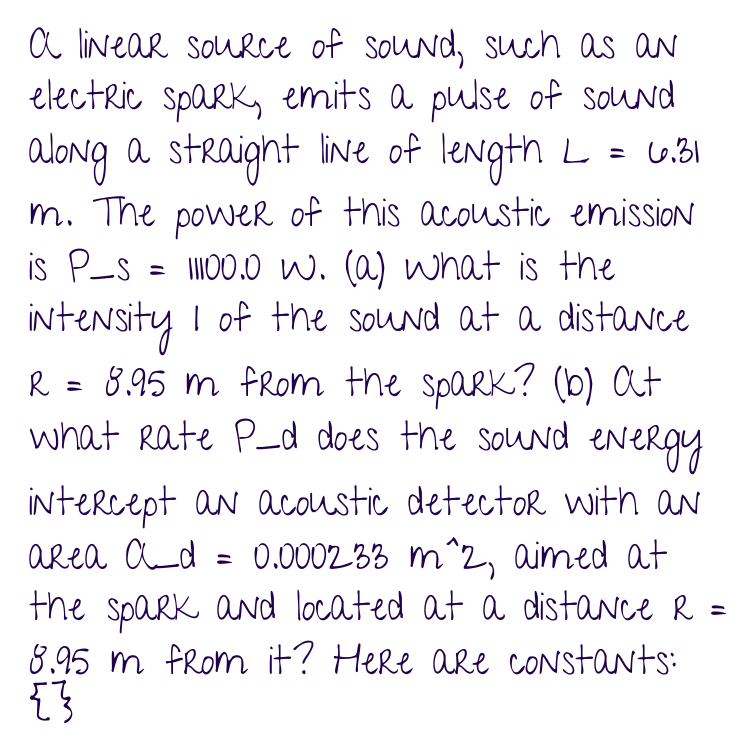

Evaluating vision-language models (VLMs) in scientific domains like mathematics and physics poses unique challenges that go far beyond predicting final answers. These domains demand conceptual understanding, symbolic reasoning, and adherence to formal laws, requirements that most existing benchmarks fail to address. In particular, current datasets tend to be static, lacking intermediate reasoning steps, robustness to variations, or mechanisms for verifying scientific correctness. To address these limitations, we introduce PRiSM, a synthetic, fully dynamic, and multimodal benchmark for evaluating scientific reasoning via grounded Python code. PRiSM includes over 24,750 university-level physics and math problems, and it leverages our scalable agent-based pipeline, PrismAgent, to generate well-structured problem instances. Each problem contains dynamic textual and visual input, a generated figure, alongside rich structured outputs: executable Python code for ground truth generation and verification, and detailed step-by-step reasoning. The dynamic nature and Python-powered automated ground truth generation of our benchmark allow for fine-grained experimental auditing of multimodal VLMs, revealing failure modes, uncertainty behaviors, and limitations in scientific reasoning. To this end, we propose five targeted evaluation tasks covering generalization, symbolic program synthesis, perturbation robustness, reasoning correction, and ambiguity resolution. Through comprehensive evaluation of existing VLMs, we highlight their limitations and showcase how PRiSM enables deeper insights into their scientific reasoning capabilities.

💡 Deep Analysis

Deep Dive into PRiSM 과학적 추론을 위한 동적 멀티모달 벤치마크.

Evaluating vision-language models (VLMs) in scientific domains like mathematics and physics poses unique challenges that go far beyond predicting final answers. These domains demand conceptual understanding, symbolic reasoning, and adherence to formal laws, requirements that most existing benchmarks fail to address. In particular, current datasets tend to be static, lacking intermediate reasoning steps, robustness to variations, or mechanisms for verifying scientific correctness. To address these limitations, we introduce PRiSM, a synthetic, fully dynamic, and multimodal benchmark for evaluating scientific reasoning via grounded Python code. PRiSM includes over 24,750 university-level physics and math problems, and it leverages our scalable agent-based pipeline, PrismAgent, to generate well-structured problem instances. Each problem contains dynamic textual and visual input, a generated figure, alongside rich structured outputs: executable Python code for ground truth generation and ve

📄 Full Content

PRiSM: An Agentic Multimodal Benchmark

for Scientific Reasoning via

Python-Grounded Evaluation

Shima Imani, Seungwhan Moon, Adel Ahmadyan, Lu Zhang, Kirmani Ahmed, Babak

Damavandi

Meta Reality Lab

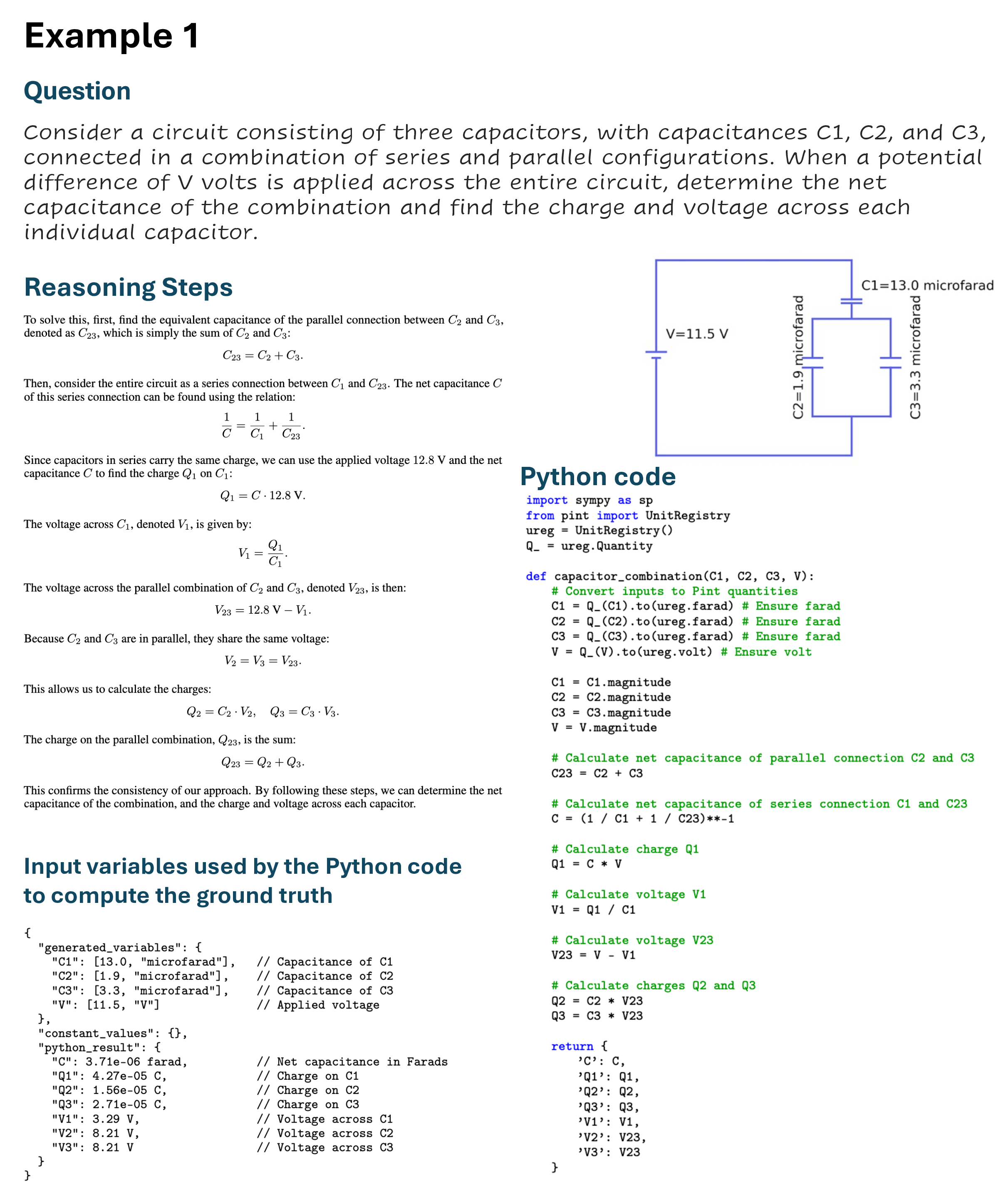

Evaluating vision-language models (VLMs) in scientific domains like mathematics and physics poses unique

challenges that go far beyond predicting final answers. These domains demand conceptual understanding,

symbolic reasoning, and adherence to formal laws, requirements that most existing benchmarks fail to address.

In particular, current datasets tend to be static, lacking intermediate reasoning steps, robustness to variations, or

mechanisms for verifying scientific correctness. To address these limitations, we introduce PRiSM, a synthetic,

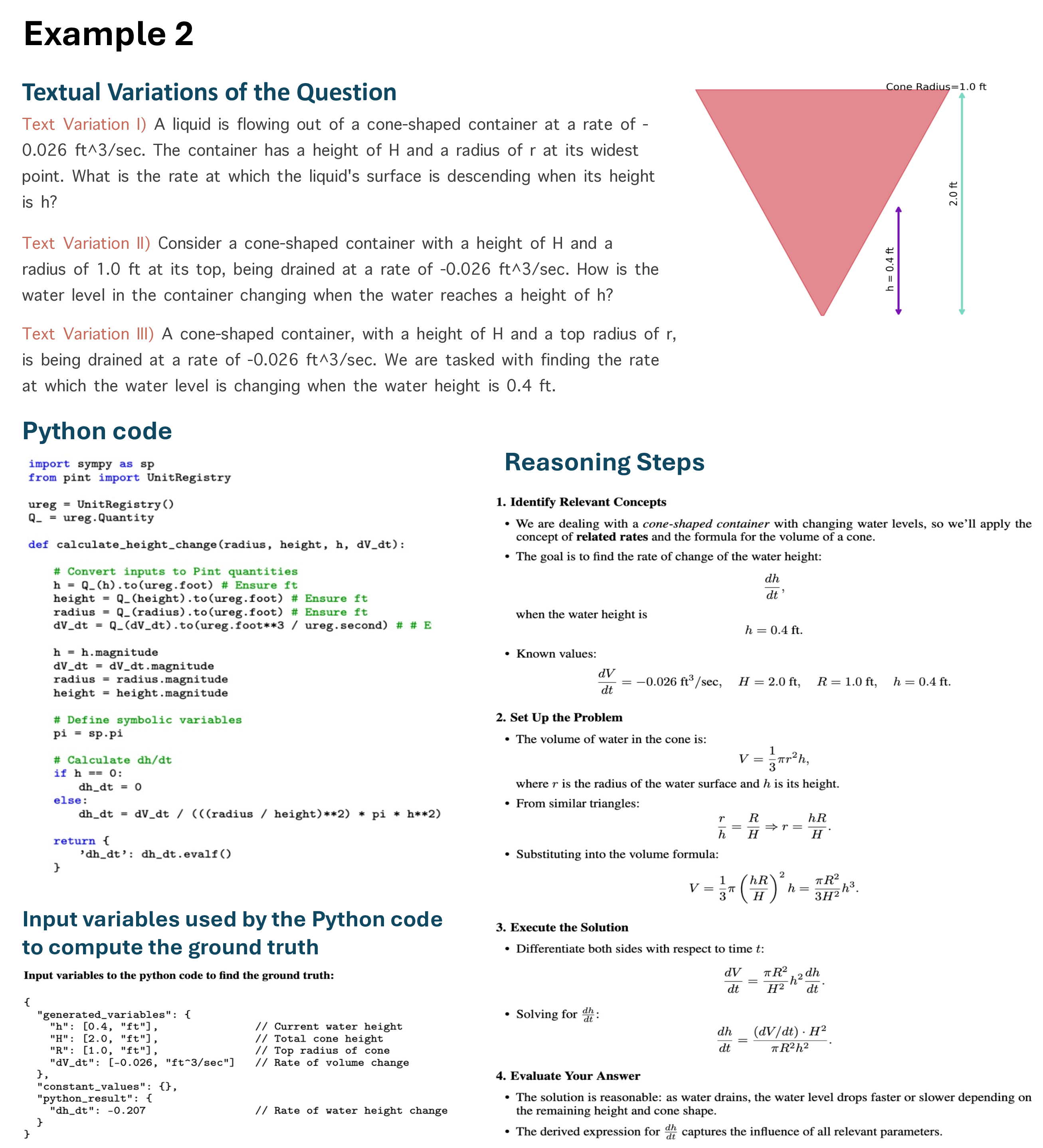

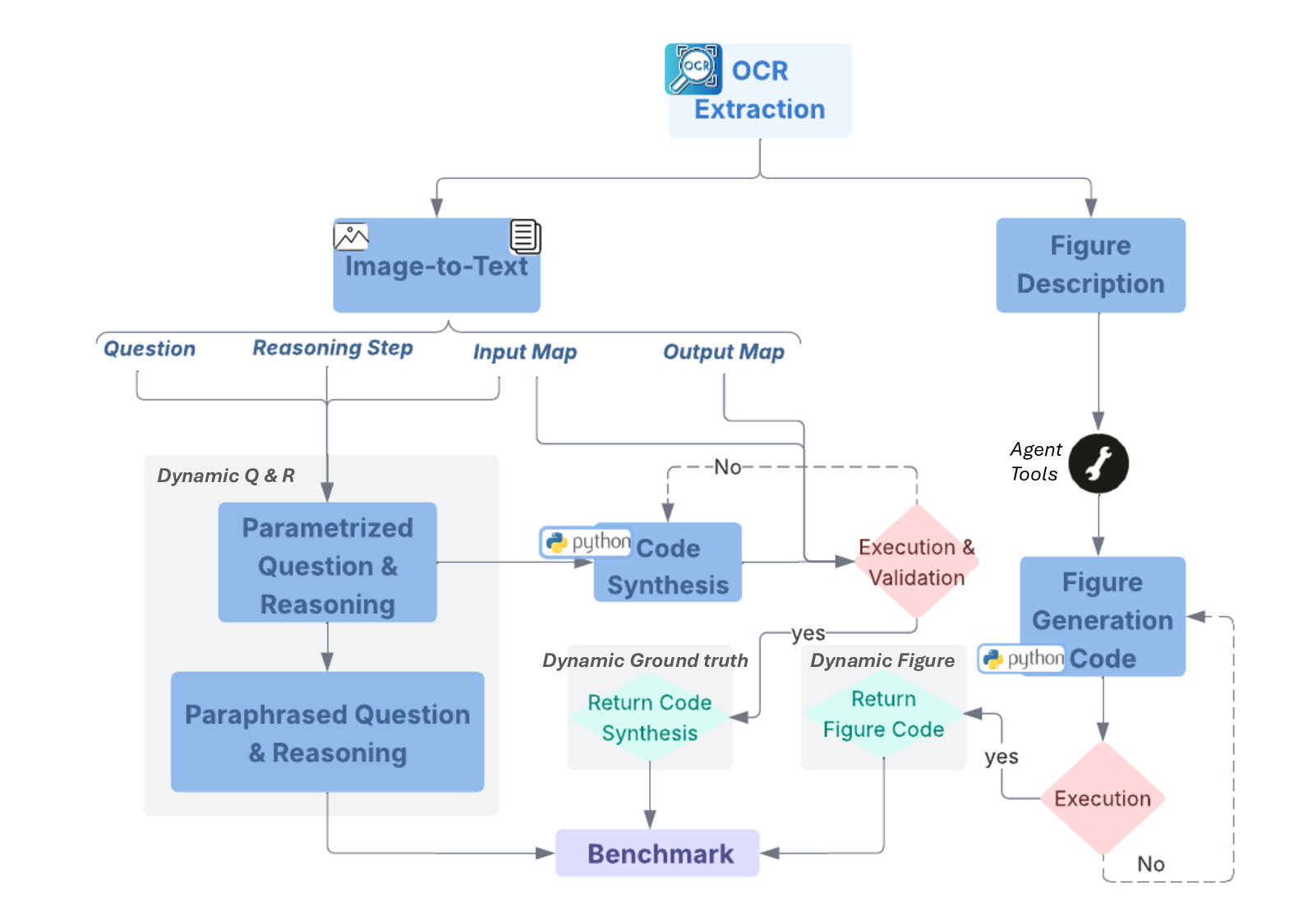

fully dynamic, and multimodal benchmark for evaluating scientific reasoning via grounded Python code. PRiSM

includes over 24,750 university-level physics and math problems, and it leverages our scalable agent-based

pipeline, PrismAgent, to generate well-structured problem instances. Each problem contains dynamic textual

and visual input, a generated figure, alongside rich structured outputs: executable Python code for ground truth

generation and verification, and detailed step-by-step reasoning. The dynamic nature and Python-powered

automated ground truth generation of our benchmark allow for fine-grained experimental auditing of multimodal

VLMs, revealing failure modes, uncertainty behaviors, and limitations in scientific reasoning. To this end,

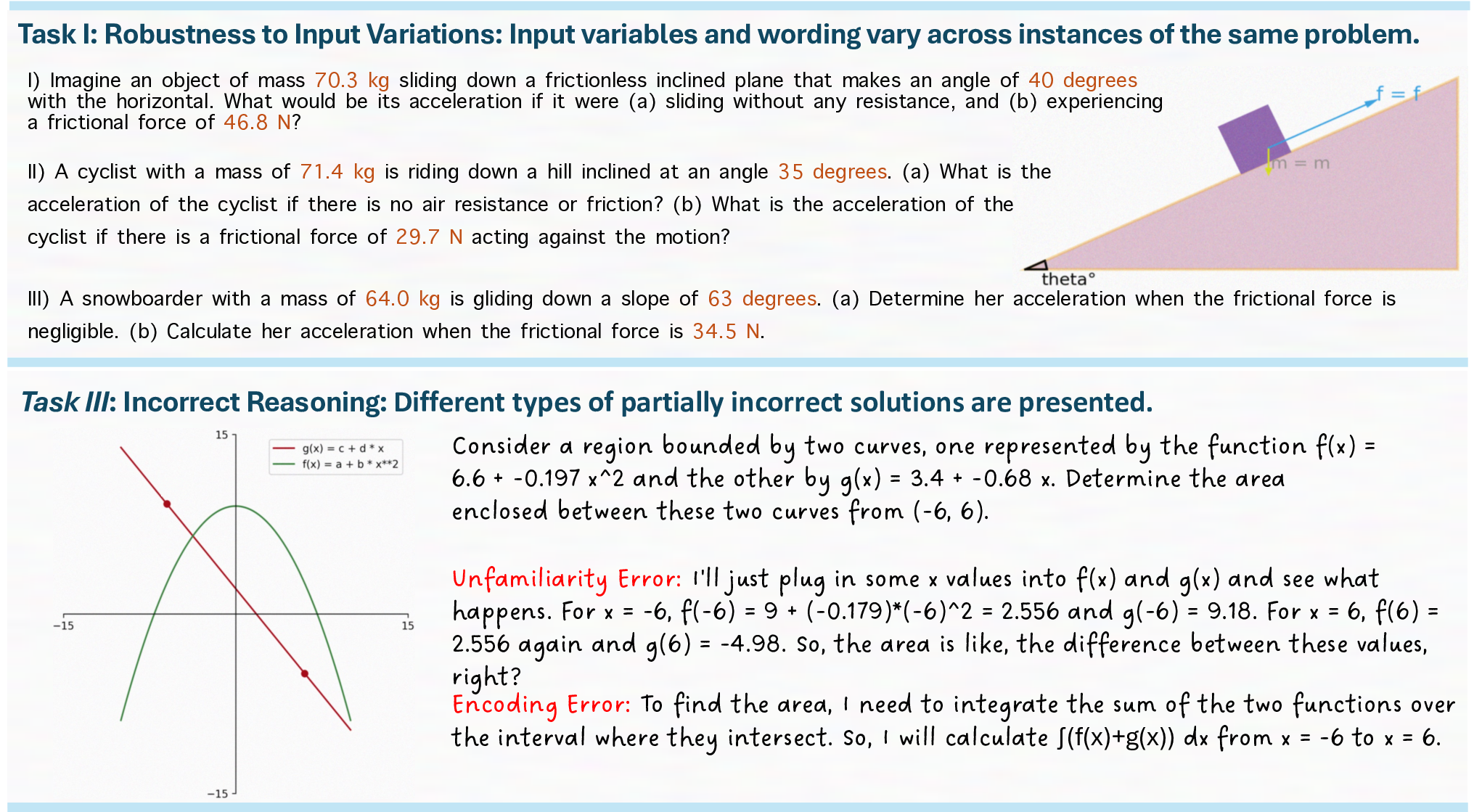

we propose five targeted evaluation tasks covering generalization, symbolic program synthesis, perturbation

robustness, reasoning correction, and ambiguity resolution. Through comprehensive evaluation of existing

VLMs, we highlight their limitations and showcase how PRiSM enables deeper insights into their scientific

reasoning capabilities.

Date: December 8, 2025

Correspondence: First Author at shimaimani@meta.com

1

Introduction

Effective reasoning is fundamental for systematic problem-solving, logical deduction, and structured decision-making.

In complex scientific domains like mathematics and physics, accurate reasoning requires the explicit integration of

theoretical principles, rigorous mathematical processes, and computational verification to ensure dimensional and

numerical validity Lake et al. (2017); Polya (2014); Chi et al. (1981); Newell and Simon (1972).

Recent advancements in multimodal VLMs have significantly improved their reasoning capabilities. Innovations like

advanced prompting techniques (e.g., chain-of-thought, tree-of-thought, and self-reflection), supervised fine-tuning on

reasoning tasks, direct preference optimization (DPO), and reinforcement learning with human feedback (RLHF) have

contributed to these improvements Kojima et al. (2022); Wei et al. (2022); Yao et al. (2023); Renze and Guven (2024);

Ouyang et al. (2022); Rafailov et al. (2023). Alongside these advancements, a variety of datasets and benchmarks have

emerged, explicitly designed to assess and enhance the reasoning skills of models in scientific contexts Lu et al. (2022);

Wang et al. (2023); Hendrycks et al. (2021); Sun et al. (2024).

Nonetheless, existing benchmarks remain limited in several critical ways:

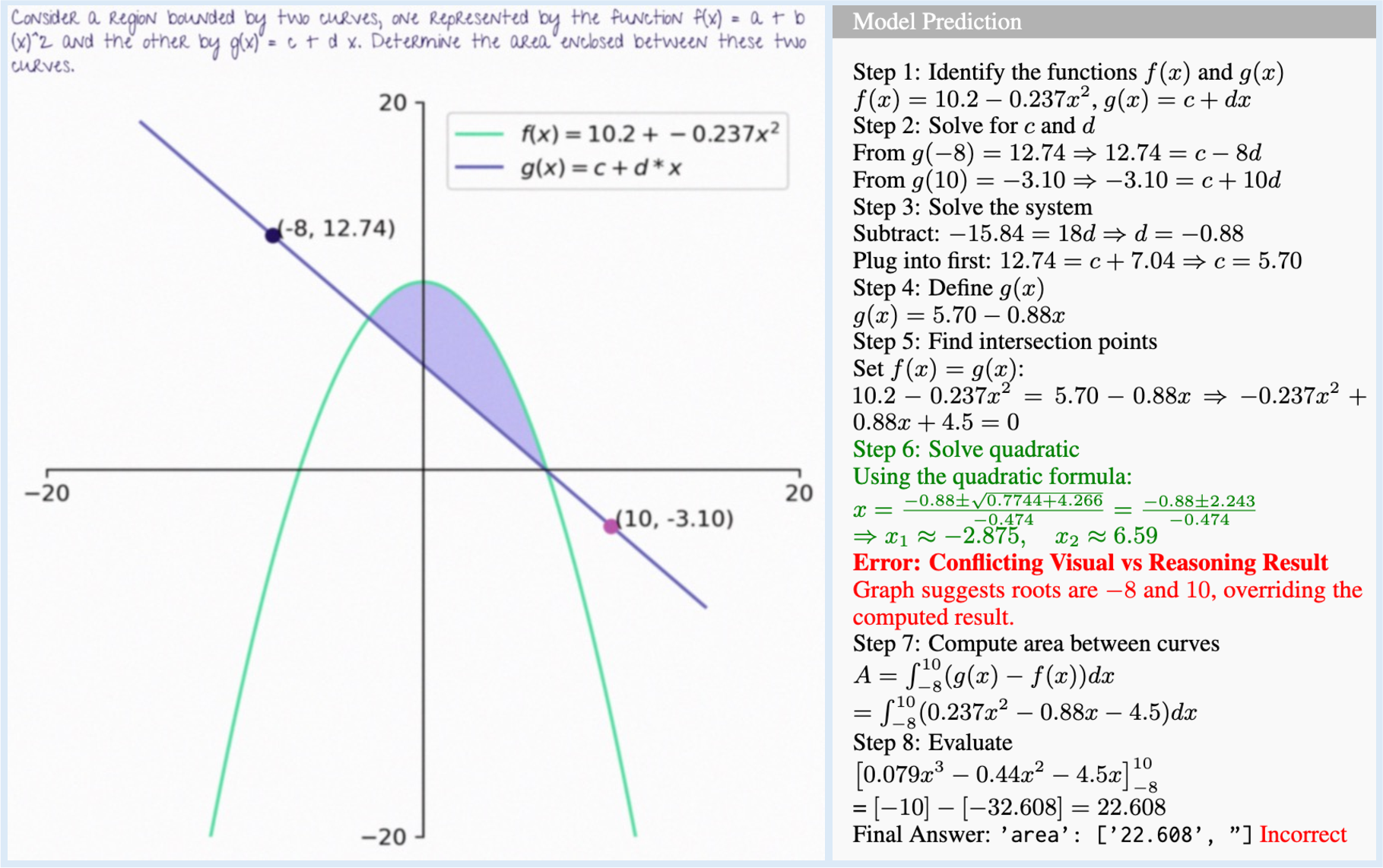

• Limited Generalization: Most benchmarks rely on static problem formulations, lacking systematic variations or

paraphrases. Furthermore, the visual modality is typically static. Consequently, these datasets fail to rigorously assess

models’ generalization and robustness across diverse problem settings and parameter variations.

• Missing Intermediate Reasoning: The majority of datasets provide only final numerical answers, omitting

detailed intermediate reasoning steps. This absence of granular guidance hinders a comprehensive evaluation of a

1

arXiv:2512.05930v1 [cs.AI] 5 Dec 2025

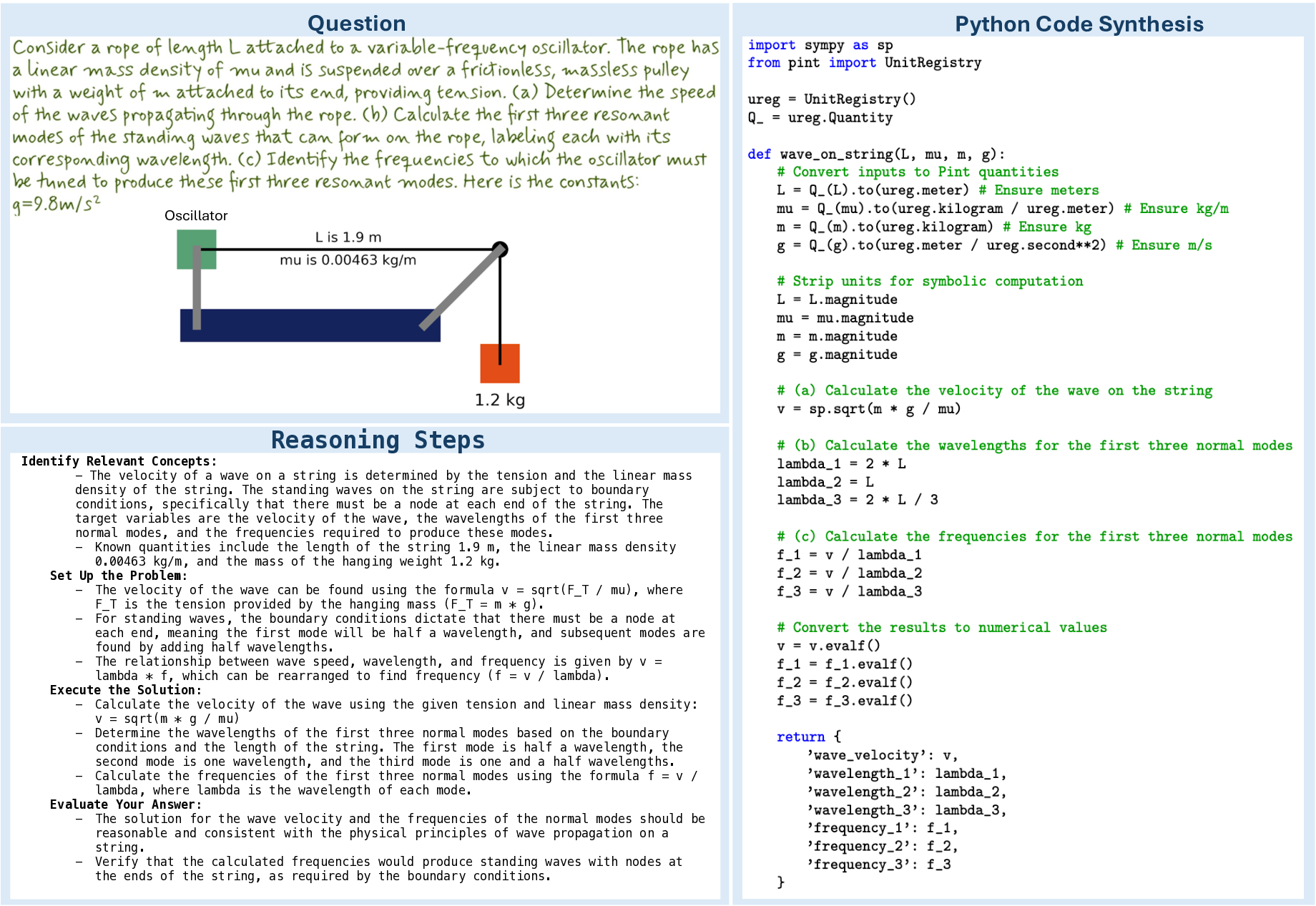

Question

Python Code Synthesis

Identify Relevant Concepts:

- The velocity of a wave on a string is determined by the tension and the linear mass

density of the string. The standing waves on the string are subject to boundary

conditions, specifically that there must be a node at each end of the string. The

target variables are the velocity of the wave, the wavelengths of the first three

normal modes, and the frequencies required to produce these modes.

-

Known quantities include the length of the string 1.9 m, the linear mass density

0.00463 kg/m, and the mass of the hanging weight 1.2 kg.

Set Up the Problem:

-

The velocity of the wave can be found using the formula v = sqrt(F_T / mu), where

F_T is the tension provided by the hanging mass (F_T = m * g).

-

For standing waves, the boundary conditions dictate that there must be a node at

each end, meaning the first mode will be half a wavelength, and subsequent modes are

found by adding half wavelengths.

-

The relationship between wave speed, wavelength, and frequency is given by v =

lambda * f, which can be rearranged to find frequency (f = v / lambda).

Execute the Solution:

-

Calculate the velocity of the w

…(Full text truncated)…

📸 Image Gallery

Reference

This content is AI-processed based on ArXiv data.