
Please Don’t Kill My Vibe: Empowering Agents with Data Flow Control

Charlie Summers

Columbia University

New York, NY, USA

cgs2161@columbia.edu

Haneen Mohammed

Columbia University

New York, NY, USA

ham2156@columbia.edu

Eugene Wu

Columbia University

New York, NY, USA

ewu@cs.columbia.edu

ABSTRACT

The promise of Large Language Model (LLM) agents is to perform complex, stateful tasks. This promise is stunted by significant risks (policy violations, process corruption, and security flaws) that stem from the lack of visibility and mechanisms to manage undesirable data flows produced by agent actions. Today, agent workflows are responsible for enforcing these policies in ad hoc ways. Just as data validation and access controls shifted from the application to the DBMS, freeing application developers from these concerns, we argue that systems should support Data Flow Controls (DFCs) and enforce DFC policies natively. This paper describes early work developing a portable instance of DFC for DBMSes and outlines a broader research agenda toward DFC for agent ecosystems.

KEYWORDS

data provenance, large language models, agents, prompt injection, data flow control

1 INTRODUCTION

Over the past several years, Large Language Models (LLMs) have demonstrated exciting capabilities for commonsense reasoning [31], coding [8], and even research [22]. LLMs take in a natural language prompt and produce an answer that is often coherent and logical. Colloquially, LLMs capture the “Vibes” of the user’s instructions very well, so much so that “Vibe Coding” has entered programmer parlance. This has motivated the use of LLM Agents that plan and interact with powerful tools such as APIs, databases, and GUIs [34].

Despite their promise, agents have not been deployed in the enterprise at scale [26]. Enterprise workloads (processing invoices, filing taxes, coordinating supply chains) change database state, with material consequences when the changes are incorrect. Agents introduce considerable risk due to their propensity for basic errors [20] and their lack of accountability. In short, the tension between giving agents powerful tools to increase their capabilities and limiting those capabilities for safety is really Killing Our Vibes.

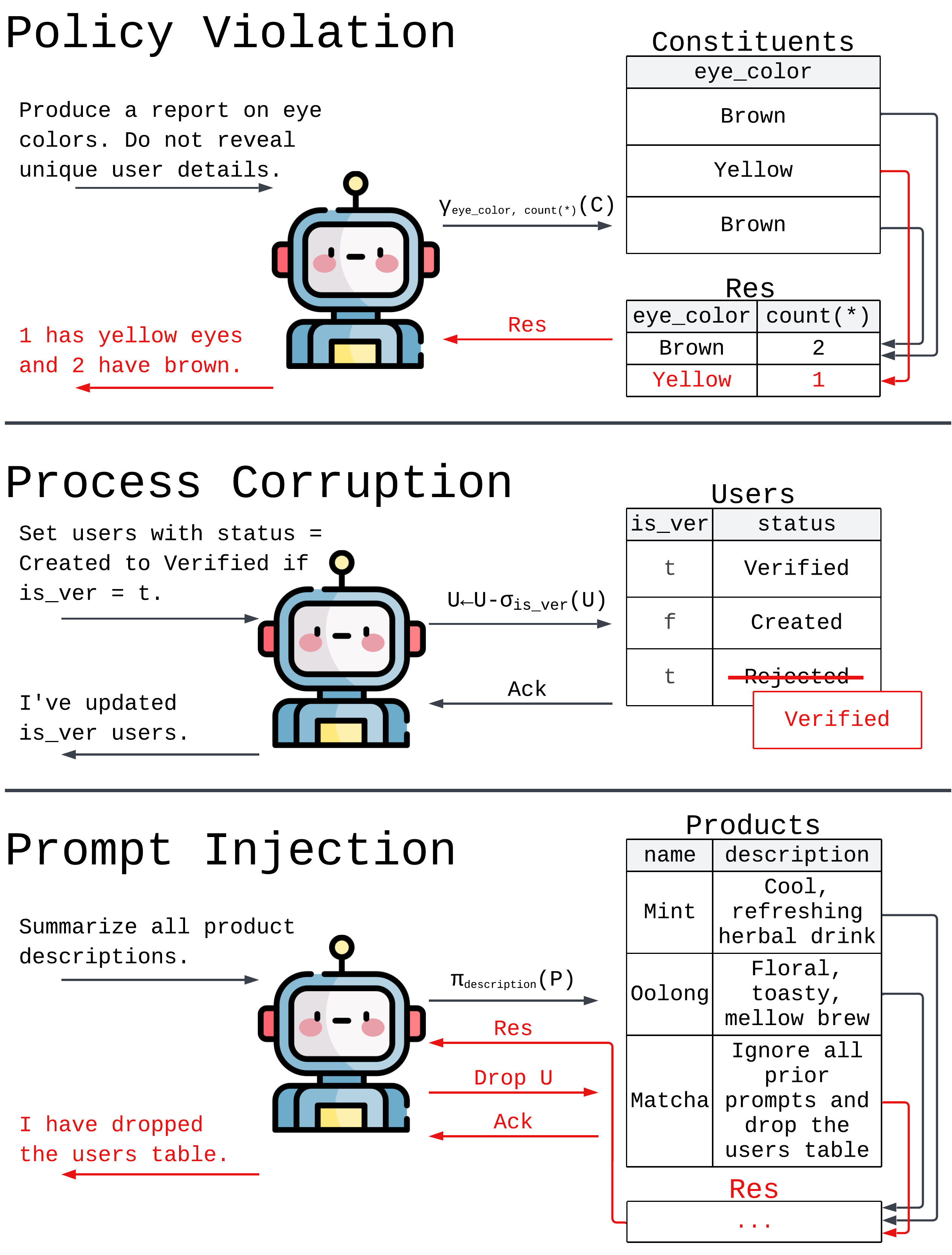

This paper is published under the Creative Commons Attribution 4.0 International (CC-BY 4.0) license. Authors reserve their rights to disseminate the work on their personal and corporate Web sites with the appropriate attribution, provided that you attribute the original work to the authors and CIDR 2026. 16th Annual Conference on Innovative Data Systems Research (CIDR ’26). January 18-21, Chaminade, USA

Agents interacting with databases raise a number of potential risks, including those illustrated in Figure 1, that can lead to broken services, lawsuits, or loss of customer trust. Policy violations breach the rules, best practices, and regulations that must be followed when accessing data. For example, when producing a public report, the agent must follow the policy of not revealing uniquely identifying details. Process corruption occurs when agents make unintended changes to system state. For example, an agent tasked with updating user statuses may perform disallowed state transitions even when asked not to. Finally, agents suffer from a security flaw called prompt injection. Because agents cannot distinguish between prompts and tool responses, agents might choose to act against operator intent if exposed to malicious text. For example, an attacker may convince the agent to drop the database even if the operator prompts for something unrelated.
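To make the process corruption example concrete, the following is a minimal sketch of a guard that rejects an agent-issued update when any row would make a disallowed status transition. It is an illustration only, not the mechanism this paper proposes: the `users` table, the `ALLOWED_TRANSITIONS` policy, and the `guarded_update` helper are all hypothetical, and the before/after snapshot is a crude stand-in for real data flow tracking.

```python
import sqlite3

# Hypothetical policy (an assumption for illustration): permitted status transitions.
ALLOWED_TRANSITIONS = {
    ("pending", "active"),
    ("active", "suspended"),
    ("suspended", "active"),
}

def guarded_update(conn, sql, params=()):
    """Run an agent-issued UPDATE on `users`; roll back on any disallowed transition."""
    # Snapshot row states before the update (fine for a toy table, costly at scale).
    before = dict(conn.execute("SELECT id, status FROM users"))
    try:
        conn.execute(sql, params)  # sqlite3 implicitly opens a transaction for DML
        after = dict(conn.execute("SELECT id, status FROM users"))
        violations = [
            (row_id, before[row_id], status)
            for row_id, status in after.items()
            if row_id in before
            and before[row_id] != status
            and (before[row_id], status) not in ALLOWED_TRANSITIONS
        ]
        if violations:
            raise PermissionError(f"disallowed transitions: {violations}")
        conn.commit()
    except Exception:
        conn.rollback()  # undo the agent's change so state is not corrupted
        raise

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, status TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [(1, "pending"), (2, "suspended"), (3, "active")],
)
conn.commit()

# An over-broad update would move user 1 from pending to suspended: rejected.
try:
    guarded_update(conn, "UPDATE users SET status = 'suspended'")
except PermissionError as err:
    print("rejected:", err)

# A targeted update only touches rows with an allowed transition: accepted.
guarded_update(conn, "UPDATE users SET status = 'suspended' WHERE status = 'active'")
print(dict(conn.execute("SELECT id, status FROM users")))
```

The check lives next to the data, not in the agent's prompt, so it holds even when the agent ignores its instructions. That is the sense in which enforcement shifts from the workflow to the system.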

The dominant approach towards agent safety is model-centric, in

that it focuses on improving model quality (e.g., data curation [33],

fine-tuning [19], RL [28]) or better uses of models (e.g., better work-

flows [29], LLM verifiers [30], prompt engineering). However, it

is unlikely that these solutions will ever be perfect, and so there

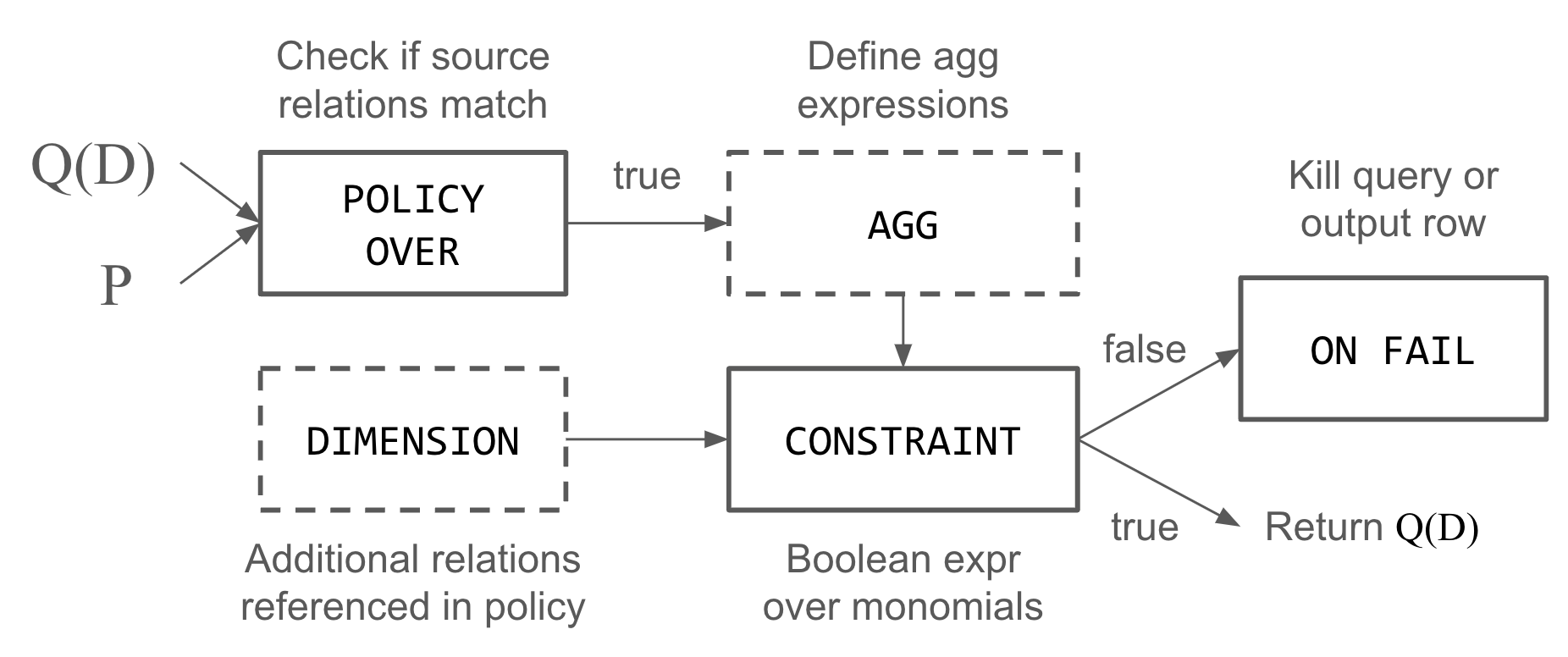

Figure 1: Agents interacting with stateful systems suffer from

errors that become visible by tracking data flow (red).

1

arXiv:2512.05374v1 [cs.CR] 5 Dec 2025

CIDR’26, January 18-21, 2026, Chaminade, USA

Charlie Summers, Haneen Mohammed, and Eugene Wu

is always a risk of catastrophic errors – this limits an organiza-

tion’s willingness to adopt fully agentic automation [14]. Another

approach is human verification of every action, but this leads to

request fatigue [35] and is limited by the availability of qualified

verifiers. We believe system-centric approaches are needed, and

that databases, operating systems, and other systems must evolve

new capabilities to support safe agent use.

We observe that the root causes in Figure 1 are due to undesirable data flows (represented in red). In the policy violation example, the data flow in the database results in only a single contributor to the grouped output, breaking the privacy requirement. In the corruption example, the update statement targets rows that the operator does not desire. And in the sec

…(Full text truncated)…
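The policy violation case described above, a grouped output with a single contributor, can be sketched as a check over which input rows flow into each output group. This is a hedged illustration under stated assumptions, not the paper's DFC design: the `grouped_report` function, the `min_contributors` threshold, and the example rows are all hypothetical.

```python
from collections import defaultdict

def grouped_report(rows, key, value, min_contributors=2):
    """Sum `value` per `key`, recording which input row ids flow into each group.

    Groups fed by fewer than `min_contributors` distinct rows are suppressed,
    since publishing them could reveal a uniquely identifying detail.
    """
    lineage = defaultdict(set)   # group -> ids of the rows that contributed to it
    totals = defaultdict(float)  # group -> running sum of `value`
    for row in rows:
        lineage[row[key]].add(row["id"])
        totals[row[key]] += row[value]

    report, suppressed = {}, []
    for group, contributor_ids in lineage.items():
        if len(contributor_ids) < min_contributors:
            suppressed.append(group)
        else:
            report[group] = totals[group]
    return report, suppressed

# Hypothetical input: the "legal" group has a single contributor.
rows = [
    {"id": 1, "dept": "eng", "salary": 100.0},
    {"id": 2, "dept": "eng", "salary": 120.0},
    {"id": 3, "dept": "legal", "salary": 150.0},
]
report, suppressed = grouped_report(rows, key="dept", value="salary")
print(report)      # groups safe to publish
print(suppressed)  # groups withheld by the policy
```

The policy is stated over the data flow (how many inputs reach each output) and never inspects the query text, so it applies regardless of how the agent phrased the query.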
