Exploring Topological Bias in Heterogeneous Graph Neural Networks

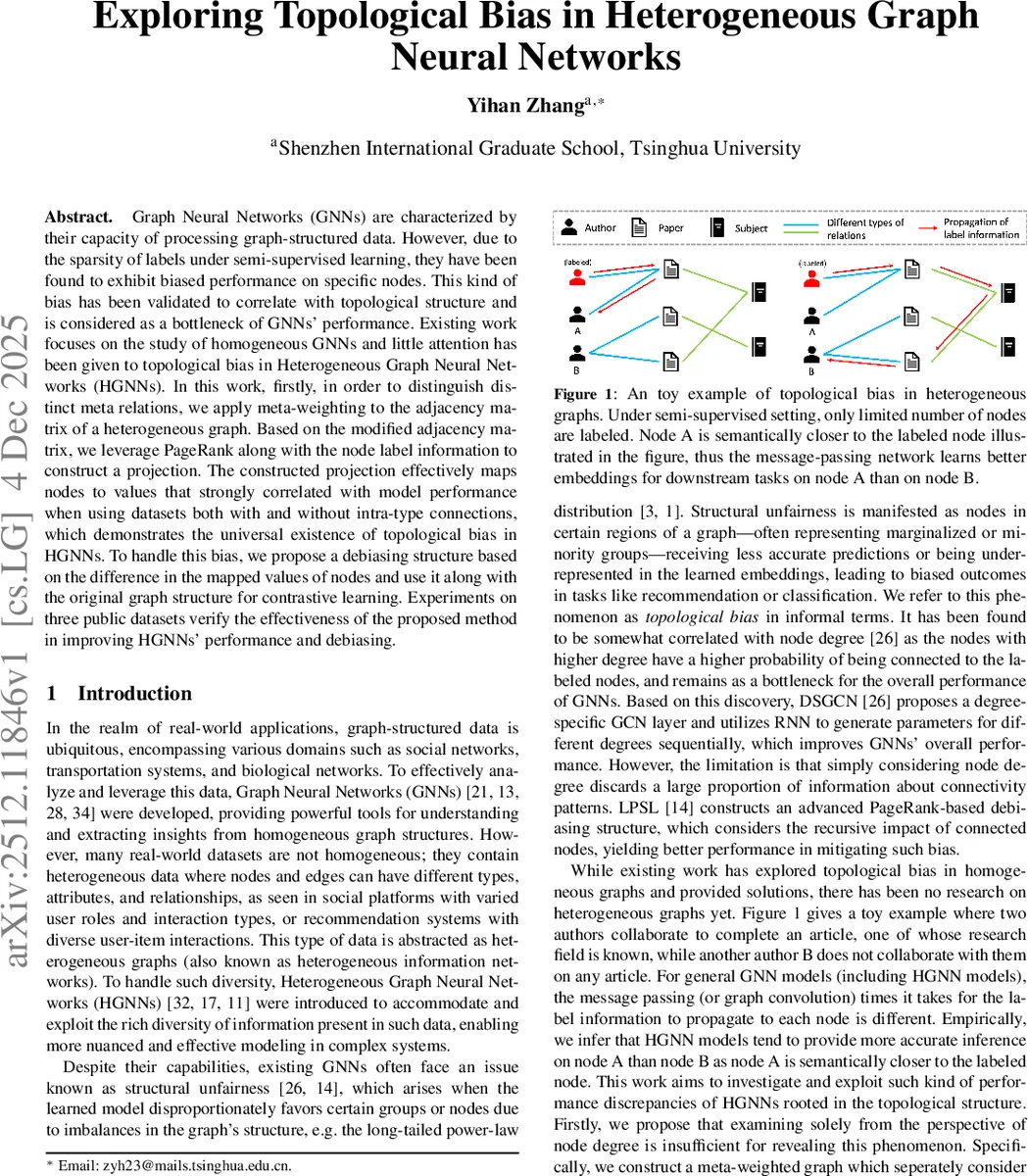

Graph Neural Networks (GNNs) are characterized by their capacity of processing graph-structured data. However, due to the sparsity of labels under semi-supervised learning, they have been found to exhibit biased performance on specific nodes. This kind of bias has been validated to correlate with topological structure and is considered as a bottleneck of GNNs’ performance. Existing work focuses on the study of homogeneous GNNs and little attention has been given to topological bias in Heterogeneous Graph Neural Networks (HGNNs). In this work, firstly, in order to distinguish distinct meta relations, we apply meta-weighting to the adjacency matrix of a heterogeneous graph. Based on the modified adjacency matrix, we leverage PageRank along with the node label information to construct a projection. The constructed projection effectively maps nodes to values that strongly correlated with model performance when using datasets both with and without intra-type connections, which demonstrates the universal existence of topological bias in HGNNs. To handle this bias, we propose a debiasing structure based on the difference in the mapped values of nodes and use it along with the original graph structure for contrastive learning. Experiments on three public datasets verify the effectiveness of the proposed method in improving HGNNs’ performance and debiasing.

💡 Research Summary

This paper investigates a previously under‑explored phenomenon: topological bias in heterogeneous graph neural networks (HGNNs) under semi‑supervised learning with scarce labels. While structural unfairness has been studied for homogeneous graphs, the authors demonstrate that similar bias exists in heterogeneous settings, where nodes of different types and meta‑relations interact.

The authors first construct a meta‑weighted adjacency matrix B by element‑wise multiplying the original adjacency matrix A with a type‑relation weight matrix R. Two scaling parameters, η₁ and η₂, control (i) self‑amplification of intra‑type edges and (ii) regulation of meta‑relations according to edge counts, thereby preserving richer semantic information than a binary adjacency.

Using B, they derive a personalized PageRank‑style influence matrix Q = α (I – (1–α) D̃⁻¹ᐟ² B̃ D̃⁻¹ᐟ²)⁻¹, where B̃ = I + B and D̃ is the degree matrix of B̃. Multiplying Q by a binary label vector J (1 for labeled nodes, 0 otherwise) yields Z = QJ, and the heterogeneous label impact degree (HLID) of node i is defined as HLID(i) = Z_i. HLID quantifies the total influence of all labeled nodes on each unlabeled node, independent of the source node’s degree.

To assess whether HLID captures bias, the authors compute Spearman rank correlation (r_s) between node‑wise HLID values and the prediction accuracy of several HGNN models (HAN, HGT, Simple‑HGN) on three public datasets: IMDB and DBLP (no intra‑type edges) and ACM (both intra‑ and inter‑type edges). HLID consistently achieves high positive r_s (0.82–0.93), whereas simple degree‑based projections yield low or negative correlations. Moreover, the correlation diminishes as the label rate increases, confirming that bias is most pronounced when labeled nodes are sparse.

Building on this insight, the paper proposes a debiasing framework called Heterogeneous Topological‑Aware Debiasing (HT‑AD). HT‑AD treats the difference in HLID values between node pairs as a similarity signal: pairs with similar HLID are considered positive, while pairs with divergent HLID are negative. Two graph views are generated—(1) the original heterogeneous graph and (2) a graph reconstructed from HLID values. For each view, local and global augmentations are applied, and a contrastive loss based on NT‑Xent is computed for both supervised (label‑aware) and unsupervised components. The total loss combines cross‑entropy classification loss with the contrastive terms, allowing seamless integration into existing HGNN pipelines without altering the underlying architecture.

Experimental results show that adding HT‑AD improves overall node classification accuracy by 2–4 percentage points across the three datasets, with the most substantial gains observed at low label rates (1–5%). Ablation studies confirm the importance of both η₁/η₂ weighting and the HLID‑based contrast; removing either component degrades performance. The authors also discuss computational considerations: HLID requires a one‑time inversion of a matrix of size N×N, which is feasible for the benchmark sizes but may need approximation techniques for larger graphs.

In summary, the contributions are: (1) empirical evidence that topological bias exists in heterogeneous graphs; (2) a novel metric (HLID) that quantifies the bias by integrating meta‑relation weights and label influence; (3) a contrastive debiasing method (HT‑AD) that leverages HLID differences to produce fairer node representations; and (4) extensive validation on multiple datasets demonstrating both effectiveness and robustness. Limitations include the fixed design of the relation weight matrix R and the scalability of the PageRank‑based HLID computation, suggesting future work on learnable relation weighting and approximate influence estimators.

Comments & Academic Discussion

Loading comments...

Leave a Comment