Automating Complex Document Workflows via Stepwise and Rollback-Enabled Operation Orchestration

Workflow automation promises substantial productivity gains in everyday document-related tasks. While prior agentic systems can execute isolated instructions, they struggle to automate multi-step, session-level workflows because they have limited control over the operational process. To this end, we introduce AutoDW, a novel execution framework that enables stepwise, rollback-enabled operation orchestration. AutoDW incrementally plans API actions conditioned on user instructions, intent-filtered API candidates, and the evolving state of the document. It further employs robust rollback mechanisms at both the argument and API levels, enabling dynamic correction and fault tolerance. Together, these designs ensure that the execution trajectory of AutoDW remains aligned with user intent and document context across long-horizon workflows. To assess its effectiveness, we construct a comprehensive benchmark of 250 sessions and 1,708 human-annotated instructions, reflecting realistic document processing scenarios with interdependent instructions. AutoDW achieves 90% and 62% completion rates on instruction- and session-level tasks, respectively, outperforming strong baselines by 40% and 76%. AutoDW also remains robust to the choice of backbone LLM and across tasks of varying difficulty. Code and data will be open-sourced.

💡 Research Summary

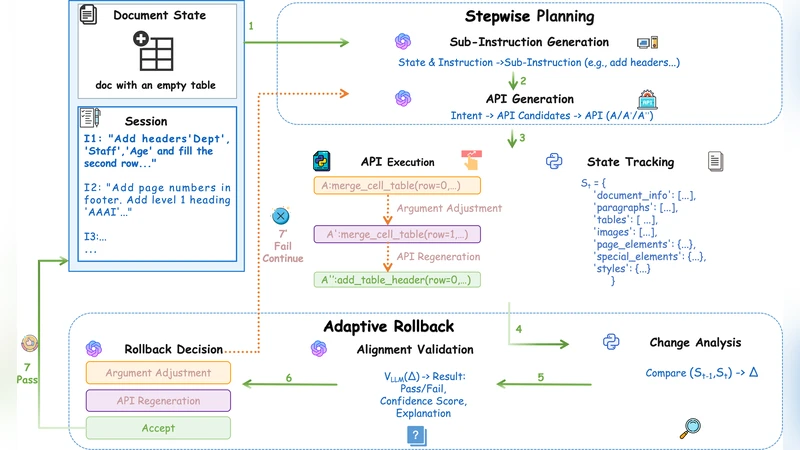

The paper introduces AutoDW, a novel execution framework designed to automate complex, multi‑step document‑processing workflows that span entire user sessions. Traditional agentic systems excel at executing isolated instructions but falter when required to maintain control over a long‑horizon sequence of operations, especially when errors occur. AutoDW addresses this gap by integrating three tightly coupled components: (1) an intent‑filtered planner that parses natural‑language user commands with a large language model (LLM), extracts the underlying intent, and selects candidate APIs from a curated library of document‑centric functions (e.g., insert text, generate tables, convert formats); (2) a state‑conditioned argument generator that inspects the current document’s content, structure, and metadata, then produces concrete API arguments using prompt‑conditioned sampling and assigns confidence scores to each candidate; and (3) a dual‑level rollback mechanism. After each API call, AutoDW immediately validates the result against predefined schemas and against the expected document change. If validation fails, it first attempts an argument‑level rollback—re‑generating only the faulty parameters—and, if necessary, escalates to an API‑level rollback that discards the call and searches for an alternative function. This hierarchical rollback strategy prevents error propagation and keeps the execution trajectory aligned with user intent throughout long sessions.
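The dual-level rollback described above can be illustrated with a minimal sketch. This is not the authors' implementation: the `Api` record, `execute_step`, the `propose_args` stub (standing in for the LLM-based argument generator), and the toy document represented as a dict are all hypothetical names introduced for illustration. The sketch assumes each candidate API comes with a validator that checks the expected document change.

```python
import copy
from dataclasses import dataclass
from typing import Callable

Document = dict  # hypothetical: document state modelled as a plain dict


@dataclass
class Api:
    name: str
    run: Callable[[Document, dict], Document]             # applies the operation
    validate: Callable[[Document, Document, dict], bool]  # checks the expected change


def execute_step(doc, candidates, propose_args, max_arg_retries=1):
    """Try candidate APIs in order; return (resulting_doc, log)."""
    log = []
    for api in candidates:                               # API-level loop
        for attempt in range(max_arg_retries + 1):       # argument-level loop
            args = propose_args(api, doc, attempt)
            snapshot = copy.deepcopy(doc)                # work on a copy so rollback is free
            try:
                result = api.run(snapshot, args)
                ok = api.validate(doc, result, args)
            except Exception:
                ok = False
            if ok:
                log.append((api.name, attempt, "ok"))
                return result, log
            # Argument-level rollback: discard the copy, regenerate arguments.
            log.append((api.name, attempt, "arg_rollback"))
        # API-level rollback: give up on this API and try the next candidate.
        log.append((api.name, None, "api_rollback"))
    return doc, log                                      # nothing succeeded; state unchanged


# --- toy usage (all functions below are illustrative stand-ins) ---
def insert_text(doc, args):
    paragraphs = doc["paragraphs"]
    if not 0 <= args["index"] <= len(paragraphs):
        raise IndexError("index outside document")
    paragraphs.insert(args["index"], args["text"])
    return doc


def inserted_ok(before, after, args):
    return len(after["paragraphs"]) == len(before["paragraphs"]) + 1


def make_table(doc, args):
    raise RuntimeError("unsupported in this toy example")


def propose_args(api, doc, attempt):
    # First guess is out of range; the retry uses the current document length.
    index = 99 if attempt == 0 else len(doc["paragraphs"])
    return {"index": index, "text": "Summary"}


candidates = [
    Api("make_table", make_table, lambda before, after, args: True),
    Api("insert_text", insert_text, inserted_ok),
]
doc = {"paragraphs": ["Intro"]}
new_doc, log = execute_step(doc, candidates, propose_args)
```

In this toy run, `make_table` fails on every argument attempt and is abandoned (API-level rollback), while `insert_text` fails once with a bad index and succeeds after its arguments are regenerated (argument-level rollback). Working on a deep copy keeps the original document untouched until a call validates; a real system would more likely use incremental undo or checkpoints for large documents.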

To evaluate the system, the authors constructed a benchmark comprising 250 realistic sessions and 1,708 human-annotated instructions covering interdependent tasks such as table creation, chart generation, and summary insertion. Using GPT-3.5-Turbo and Claude-2 as backbone LLMs, AutoDW achieved a 90% success rate on individual instruction completion and a 62% success rate on full-session completion. These figures represent relative improvements of 40% and 76% over strong baseline agents that lack stepwise planning and rollback capabilities. Notably, in workflows with deep dependencies (e.g., “create a table, generate a chart from the table, embed the chart in a summary”), the rollback-enabled version outperformed the non-rollback baseline by more than 30% in session success. The experiments also showed that AutoDW’s performance is robust across different LLM backbones and across tasks of varying difficulty, supporting the generality of the approach.
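As a back-of-the-envelope check on what "relative improvement" implies here, the snippet below inverts the reported gains. It assumes the 40% and 76% figures are relative to the baseline's own completion rate (an absolute reading cannot hold for the session-level figure, since 62 minus 76 is negative). The helper `implied_baseline` is a hypothetical name, and the resulting values are derived estimates, not baseline numbers reported in the paper.

```python
def implied_baseline(score: float, relative_gain: float) -> float:
    """Baseline rate b such that score = b * (1 + relative_gain)."""
    return score / (1.0 + relative_gain)


# Reported AutoDW completion rates and relative gains from the summary above.
instruction_baseline = implied_baseline(0.90, 0.40)  # roughly 0.64
session_baseline = implied_baseline(0.62, 0.76)      # roughly 0.35

print(f"implied instruction-level baseline: {instruction_baseline:.1%}")
print(f"implied session-level baseline:     {session_baseline:.1%}")
```

Under this reading, the strongest baseline would complete roughly 64% of instructions and 35% of sessions; the actual baseline figures should be taken from the paper's results tables.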

The paper acknowledges limitations: the current API library must be predefined, which restricts applicability to domains lacking ready‑made functions (e.g., graphic design, code editing). Moreover, overly conservative rollback policies can lead to unnecessary retries, reducing efficiency. Future work is proposed in two directions. First, meta‑learning techniques could be employed to automatically discover and register new APIs from observed user behavior, expanding the system’s functional repertoire without manual engineering. Second, reinforcement‑learning or cost‑aware optimization could be used to fine‑tune the rollback policy, balancing fault tolerance against computational overhead. By open‑sourcing the code and dataset, the authors invite the research community to build upon AutoDW, aiming toward more reliable, autonomous assistants capable of handling real‑world, multi‑step document workflows.