Neural architecture search (NAS) in expressive search spaces is a computationally hard problem, but it also holds the potential to automatically discover completely novel and performant architectures. To achieve this, we need effective search algorithms that can identify powerful components and reuse them in new candidate architectures. In this paper, we introduce two adapted variants of the Smith-Waterman algorithm for local sequence alignment and use them to compute the edit distance in a grammar-based evolutionary architecture search. These algorithms enable us to efficiently calculate a distance metric for neural architectures and to generate a set of hybrid offspring from two parent models. This facilitates the deployment of crossover-based search heuristics and allows us to perform a thorough analysis of the architectural loss landscape and to track population diversity during search. We highlight how our method vastly improves on the computational complexity of previous work and enables us to efficiently compute shortest paths between architectures. When instantiating the crossover in evolutionary searches, we achieve competitive results, outperforming competing methods. Future work can build upon this new tool, discovering novel components that can be used more broadly across neural architecture design, and broadening its applications beyond NAS.

Deep Dive into Evolutionary Architecture Search through Grammar-Based Sequence Alignment.

INTRODUCTION

Neural architecture search (NAS) (Elsken et al., 2019) has traditionally operated in constrained search spaces, defined by limited operations and fixed topologies. Popular benchmarks such as NAS-Bench-101 (Ying et al., 2019) and NAS-Bench-201 (Dong & Yang, 2020) restrict exploration to cell-based architectures built from only a handful of primitives. While these constraints simplify optimisation, they confine the search to narrow structural templates that yield incremental improvements to existing architectures rather than fundamentally better ones.

Recently, more expressive NAS search spaces have been proposed to enable broader architectural discovery. For example, Hierarchical NAS (Schrodi et al., 2023) expands cell-based spaces by including high-level macro design choices such as network topology. Similarly, Ericsson et al. (2024) introduce einspace, a parameterised context-free grammar (PCFG) that spans architectures of varying depth, branching patterns, and operation types. Unlike earlier spaces that only fine-tuned existing templates, these approaches unlock the potential to discover fundamentally new architectures.

However, this expressiveness comes at the cost of scalability. The vast size and complexity of grammar-based spaces make exploration difficult. Evolutionary operators such as mutation and crossover, while effective in small DAG-based spaces (Real et al., 2019), do not easily transfer to these flexible representations. Moreover, the lack of tractable distance metrics for large graphs or trees hinders the ability to control diversity or measure smoothness within the search. Advancing NAS therefore requires efficient metrics and recombination operators tailored to expressive spaces.

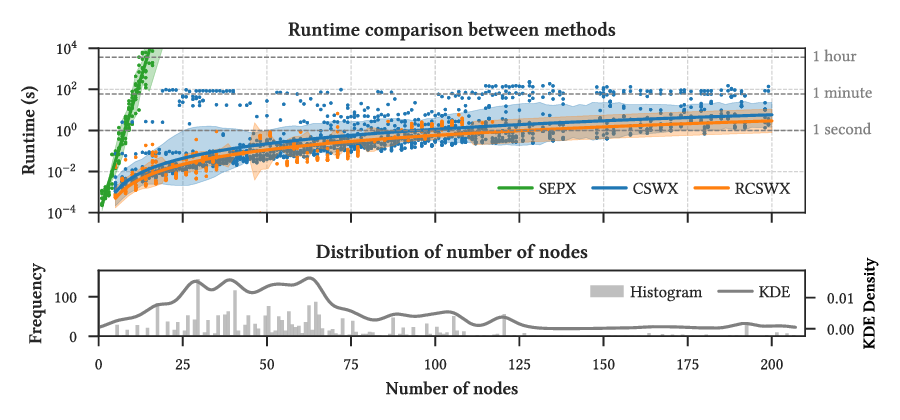

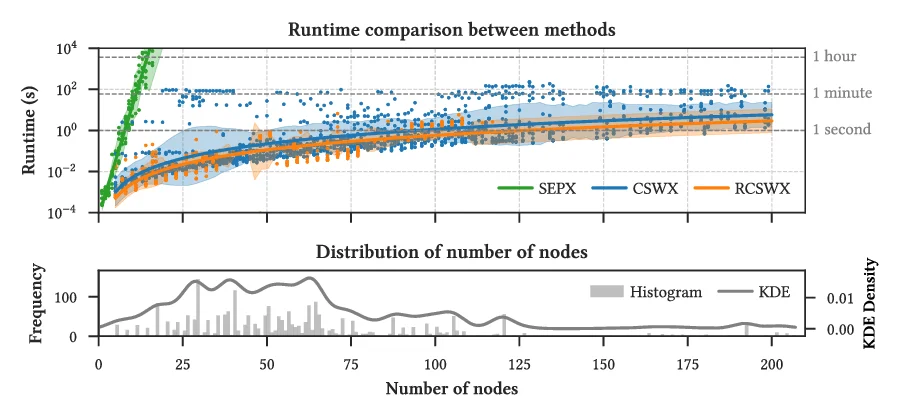

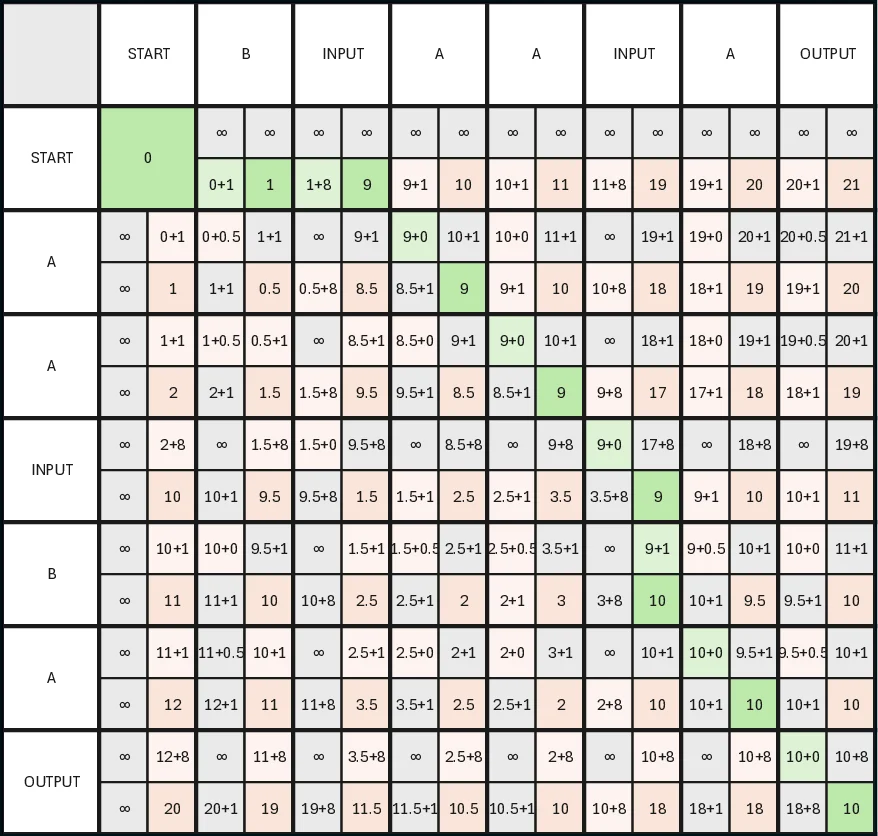

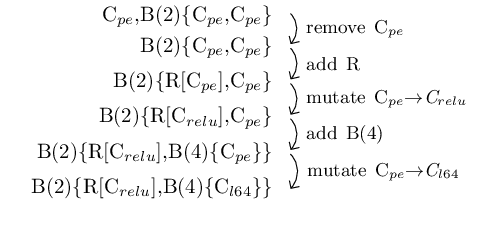

Prior work on shortest edit path crossover (SEPX) demonstrated a theoretically principled method by finding the minimal sequence of graph edits needed to transform one parent into the other (Qiu & Miikkulainen, 2023). This approach addresses the permutation problem where different graph encodings represent equivalent networks. While SEPX can yield high-quality offspring in smaller spaces, it does not scale well to the larger, more complex architectures of modern NAS. Computing the true shortest edit path essentially requires solving a graph edit distance problem, which is NP-hard (Bougleux et al., 2017). As the number of nodes and connections increases, graph matching becomes computationally intractable (as we show in Figure 3), limiting SEPX’s applicability in expressive NAS spaces.

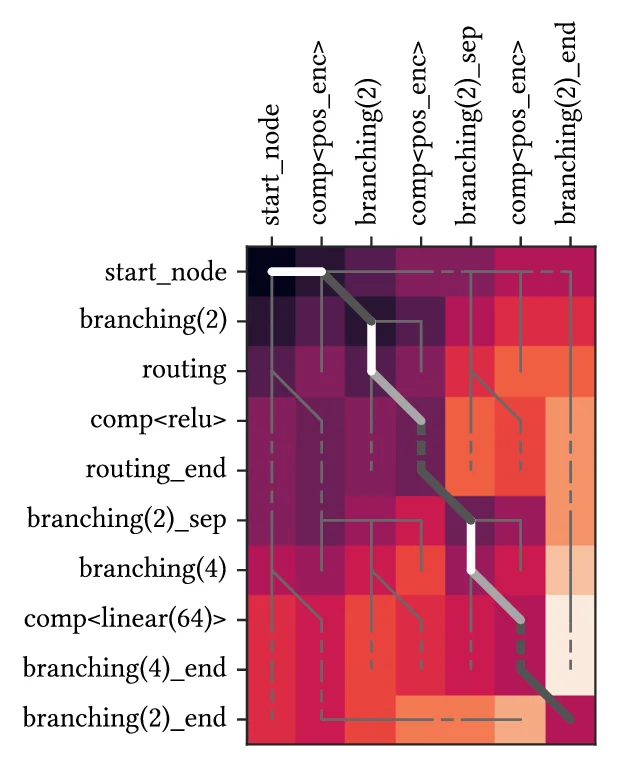

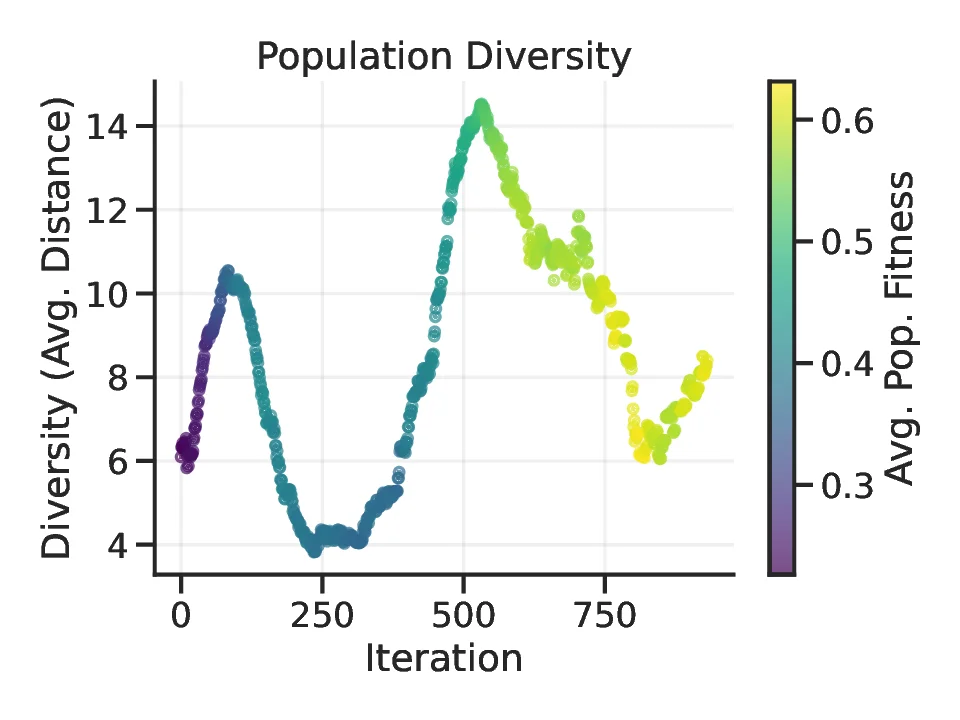

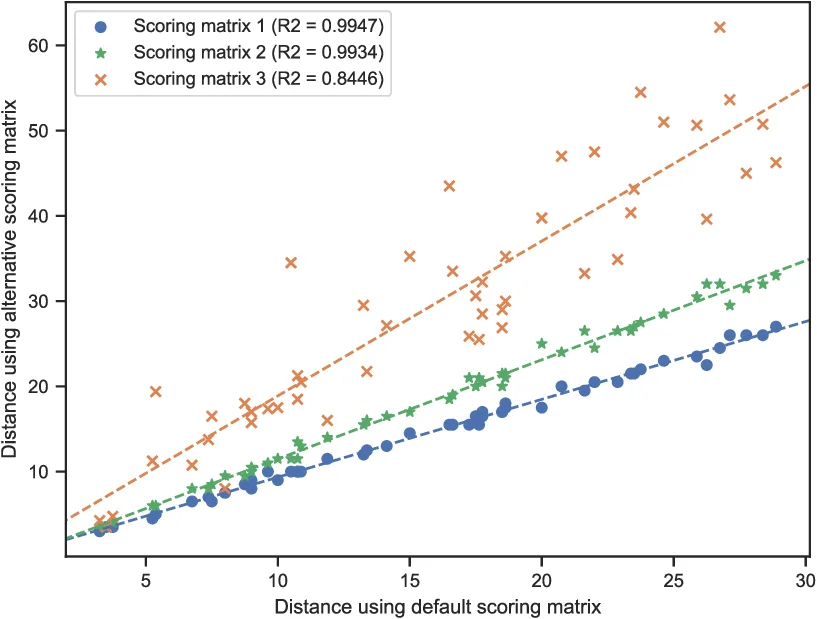

In this work, we propose a scalable alternative: a novel evolutionary crossover operator inspired by local sequence alignment. By leveraging the context-free grammar representation introduced in einspace (Ericsson et al., 2024), our method represents each architecture as a sequence of tokens and employs a constrained variant of the Smith-Waterman algorithm (Smith & Waterman, 1981) to identify high-scoring local alignments between parent sequences. These alignments serve as a shortest edit path between architectures and can be used to guide recombination, ensuring that offspring inherit coherent and functionally analogous substructures. Furthermore, the resulting edit distance is a metric on the space of functional architectures and can be used to aid the search itself by controlling diversity, as well as to perform extensive analysis of the architectural loss landscape. Crucially, these benefits are attainable because the computation is efficient, offering orders-of-magnitude speed-ups compared to SEPX.
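To make the alignment idea concrete, the following is a minimal sketch of the standard Smith-Waterman recurrence applied to token sequences. It is not the constrained, grammar-aware variant proposed here: the scoring values (`match`, `mismatch`, `gap`) and the token names are assumptions chosen purely for illustration, and no syntactic-validity constraint is enforced.

```python
from typing import List, Sequence, Tuple


def smith_waterman(
    a: Sequence[str],
    b: Sequence[str],
    match: int = 2,      # assumed reward for identical tokens
    mismatch: int = -1,  # assumed penalty for differing tokens
    gap: int = -1,       # assumed linear gap penalty
) -> Tuple[int, List[Tuple[str, str]]]:
    """Return the best local alignment score and the aligned token pairs."""
    rows, cols = len(a) + 1, len(b) + 1
    score = [[0] * cols for _ in range(rows)]
    best, best_pos = 0, (0, 0)

    # Fill the dynamic-programming table; scores are clamped at zero so
    # that an alignment may start anywhere in either sequence.
    for i in range(1, rows):
        for j in range(1, cols):
            sub = match if a[i - 1] == b[j - 1] else mismatch
            score[i][j] = max(
                0,
                score[i - 1][j - 1] + sub,  # align a[i-1] with b[j-1]
                score[i - 1][j] + gap,      # gap in b
                score[i][j - 1] + gap,      # gap in a
            )
            if score[i][j] > best:
                best, best_pos = score[i][j], (i, j)

    # Trace back from the best cell until a zero score is reached.
    pairs: List[Tuple[str, str]] = []
    i, j = best_pos
    while i > 0 and j > 0 and score[i][j] > 0:
        sub = match if a[i - 1] == b[j - 1] else mismatch
        if score[i][j] == score[i - 1][j - 1] + sub:
            pairs.append((a[i - 1], b[j - 1]))
            i, j = i - 1, j - 1
        elif score[i][j] == score[i - 1][j] + gap:
            pairs.append((a[i - 1], "-"))
            i -= 1
        else:
            pairs.append(("-", b[j - 1]))
            j -= 1
    pairs.reverse()
    return best, pairs


# Hypothetical token sequences standing in for two parent architectures.
parent_a = ["conv", "relu", "conv", "norm", "linear"]
parent_b = ["pool", "conv", "norm", "linear", "softmax"]
best_score, aligned = smith_waterman(parent_a, parent_b)
```

In this toy example the shared run `conv, norm, linear` is recovered as the local alignment. The table has one cell per pair of token positions, so the cost is proportional to the product of the two sequence lengths, in contrast to the NP-hard graph edit distance underlying SEPX.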

• We introduce an efficient grammar-based sequence-alignment algorithm for computing edit paths and distances between neural architectures in expressive search spaces, guaranteeing syntactic validity. We show that this reduces computation time by orders of magnitude compared to previous methods.

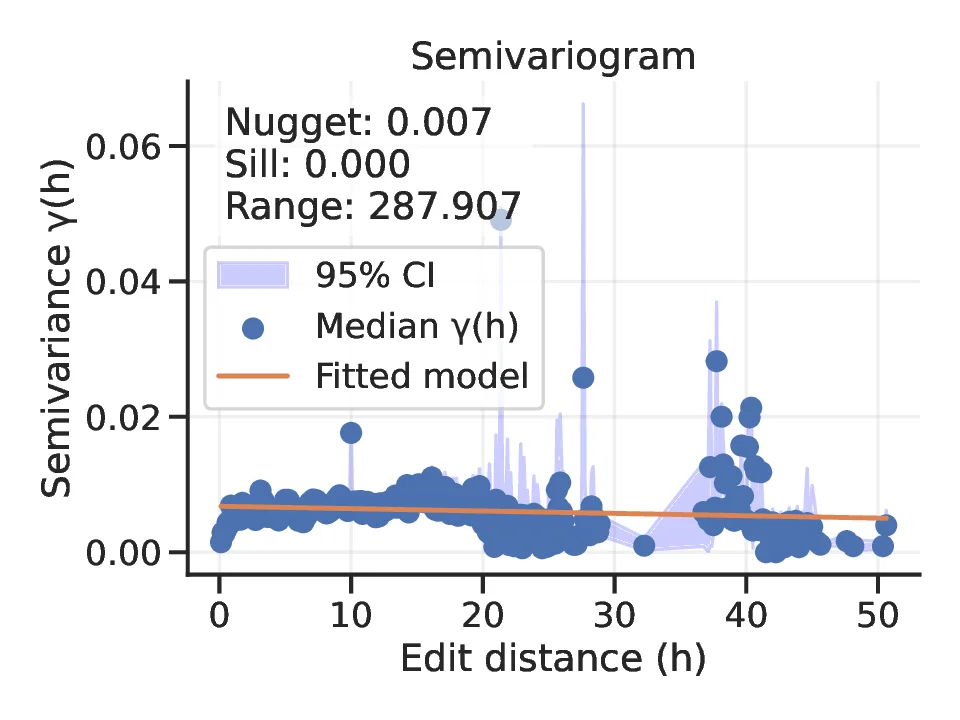

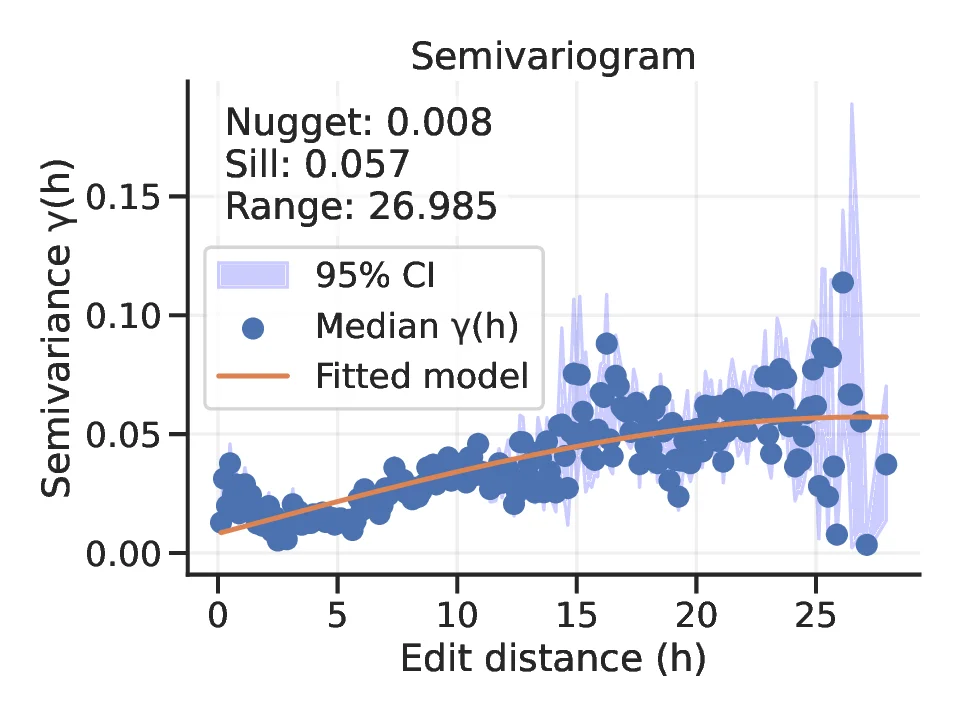

• Our algorithm enables powerful new applications: (a) crossover along the shortest edit path in grammar-based NAS, and (b) diversity measurement and architectural loss landscape analysis through its use as a distance metric.

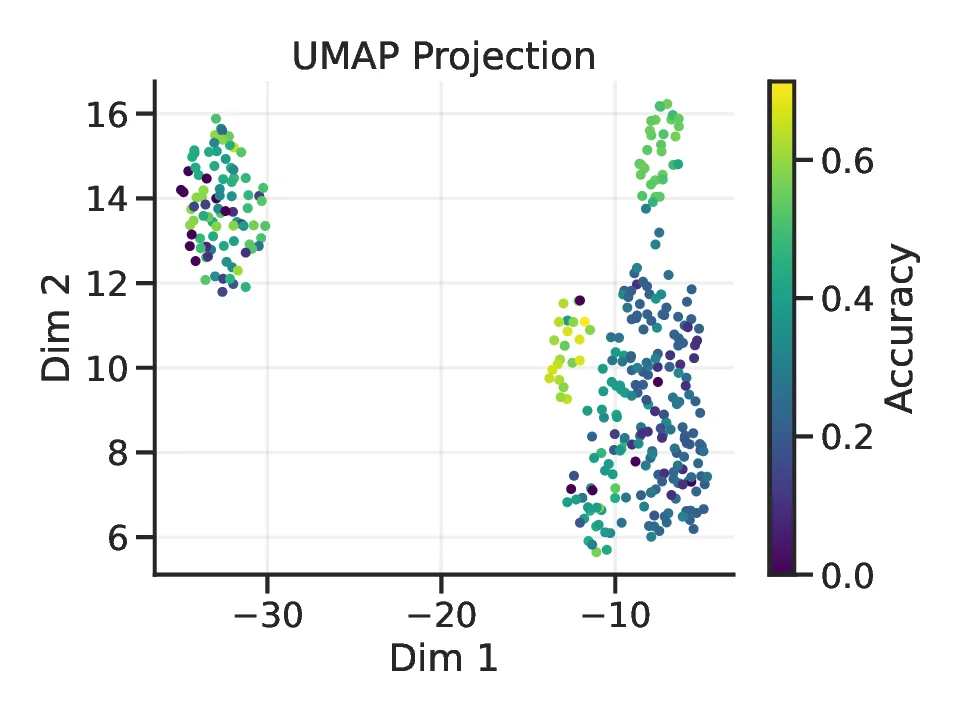

• We demonstrate these applications in one of the most expressive search spaces to date, establishing new tools for both search and interpretability in NAS. Our analysis reveals the loss landscape at unprecedented scale, quantifying its smoothness and clustered structure.
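As an illustration of how a distance metric can be used to track population diversity, the sketch below computes the mean pairwise distance over a population of token sequences. It uses the plain Levenshtein distance with unit costs as a stand-in; the grammar-based edit distance introduced in this work is not reproduced here, and the function names and example tokens are assumptions.

```python
from itertools import combinations
from typing import List, Sequence


def edit_distance(a: Sequence[str], b: Sequence[str]) -> int:
    """Levenshtein distance between two token sequences (unit costs)."""
    prev = list(range(len(b) + 1))
    for i, tok_a in enumerate(a, start=1):
        curr = [i]
        for j, tok_b in enumerate(b, start=1):
            cost = 0 if tok_a == tok_b else 1
            curr.append(min(
                prev[j] + 1,         # delete tok_a
                curr[j - 1] + 1,     # insert tok_b
                prev[j - 1] + cost,  # substitute or keep
            ))
        prev = curr
    return prev[-1]


def population_diversity(population: List[Sequence[str]]) -> float:
    """Mean pairwise edit distance; 0.0 for fewer than two individuals."""
    pairs = list(combinations(population, 2))
    if not pairs:
        return 0.0
    return sum(edit_distance(a, b) for a, b in pairs) / len(pairs)


# Hypothetical population of three small architectures.
population = [
    ["conv", "relu", "linear"],
    ["conv", "norm", "linear"],
    ["conv", "relu", "norm", "linear"],
]
diversity = population_diversity(population)
```

A value that shrinks over generations would indicate that the population is collapsing onto similar architectures, which is the kind of signal a search procedure could use to control diversity.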

Neural architecture search. NAS is commonly described in terms of three components: a search space, which defines the set of candidate architectures; a search strategy, which explores that space; and a performance estimator, which evaluates candidate models (Elsken et al., 2019). This work focuses on methods that enrich search strategies and enable more effective exploration and analysis of expressive search spaces.

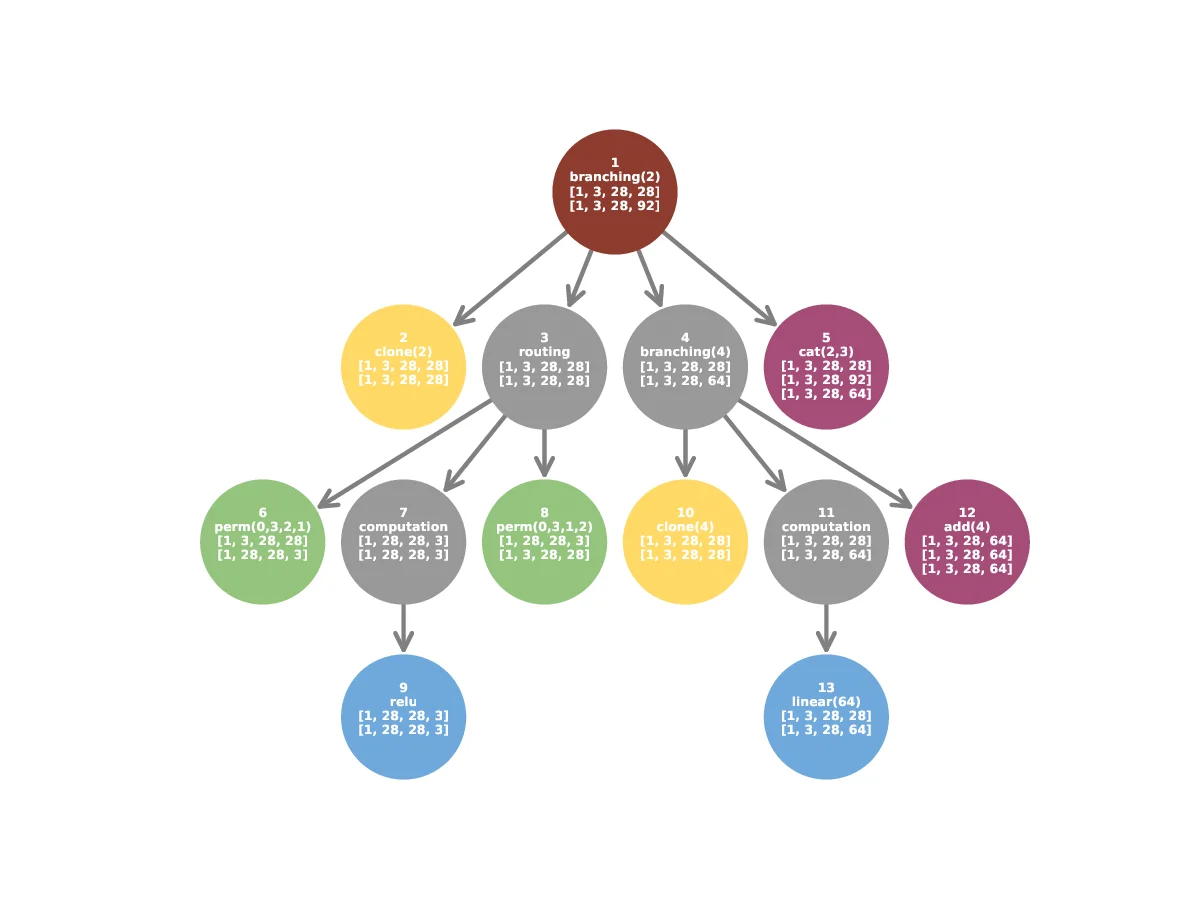

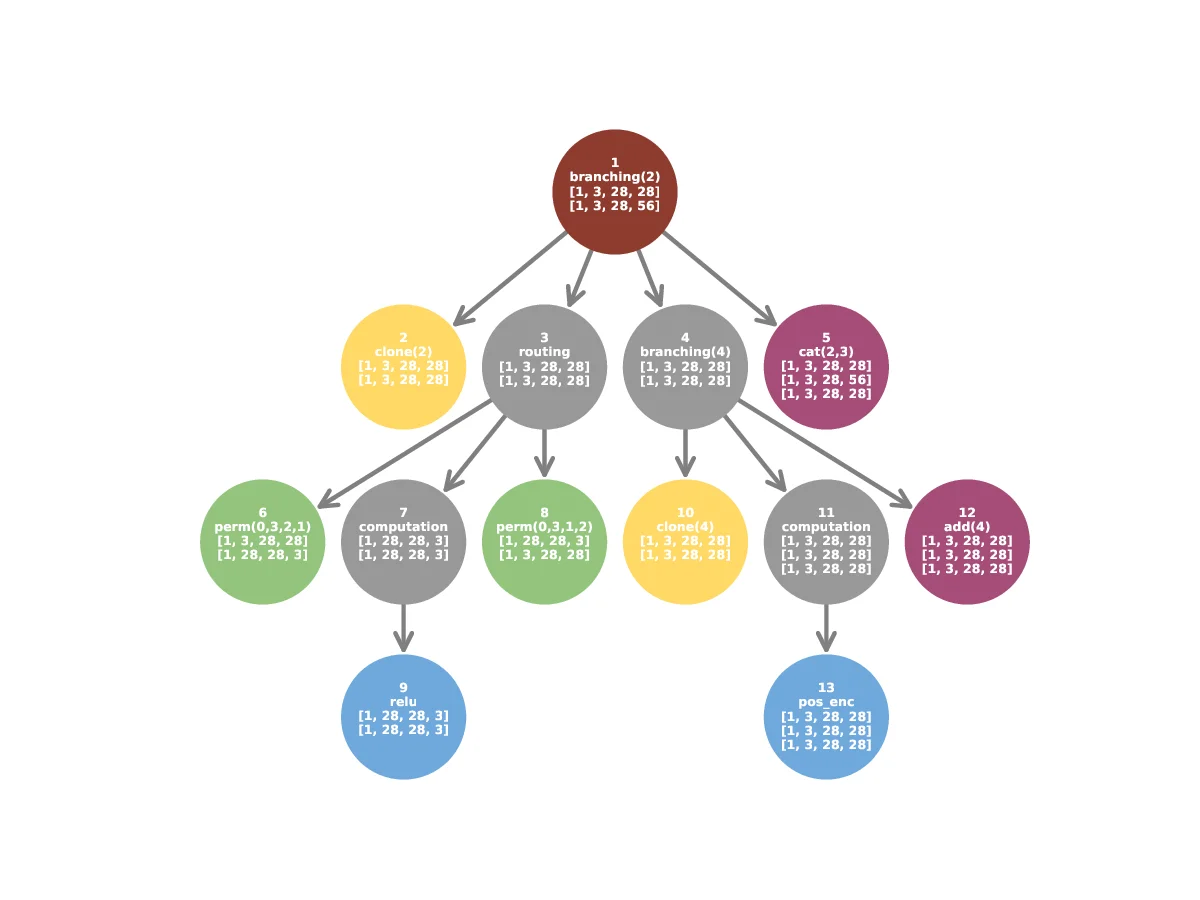

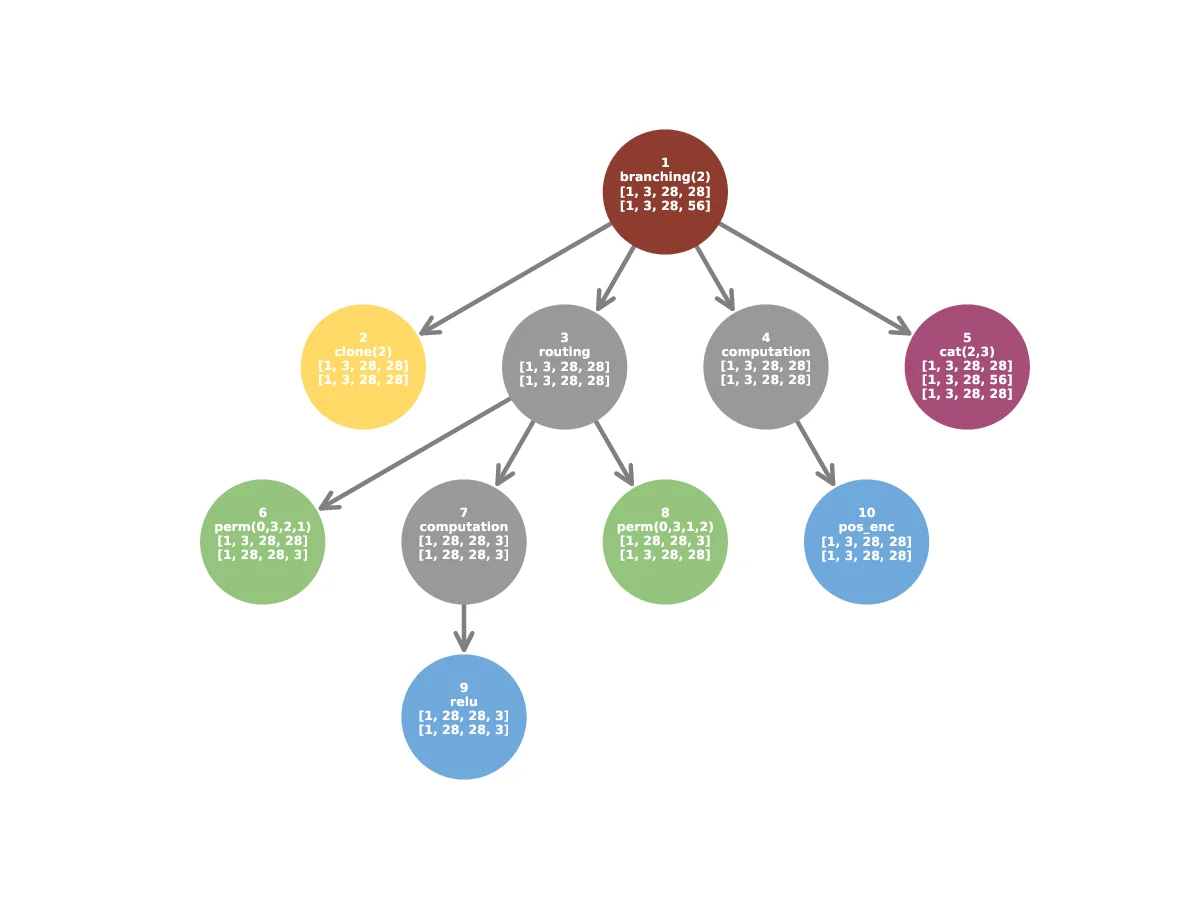

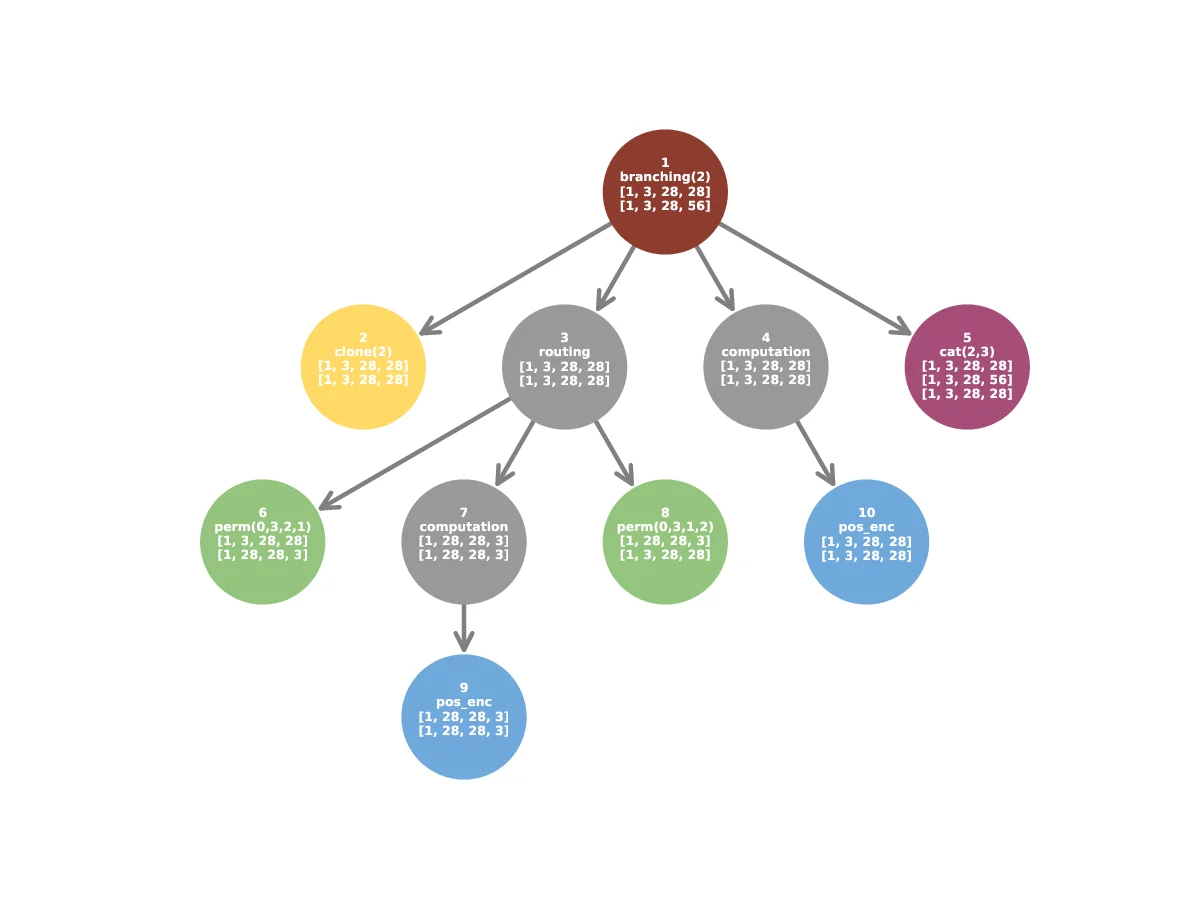

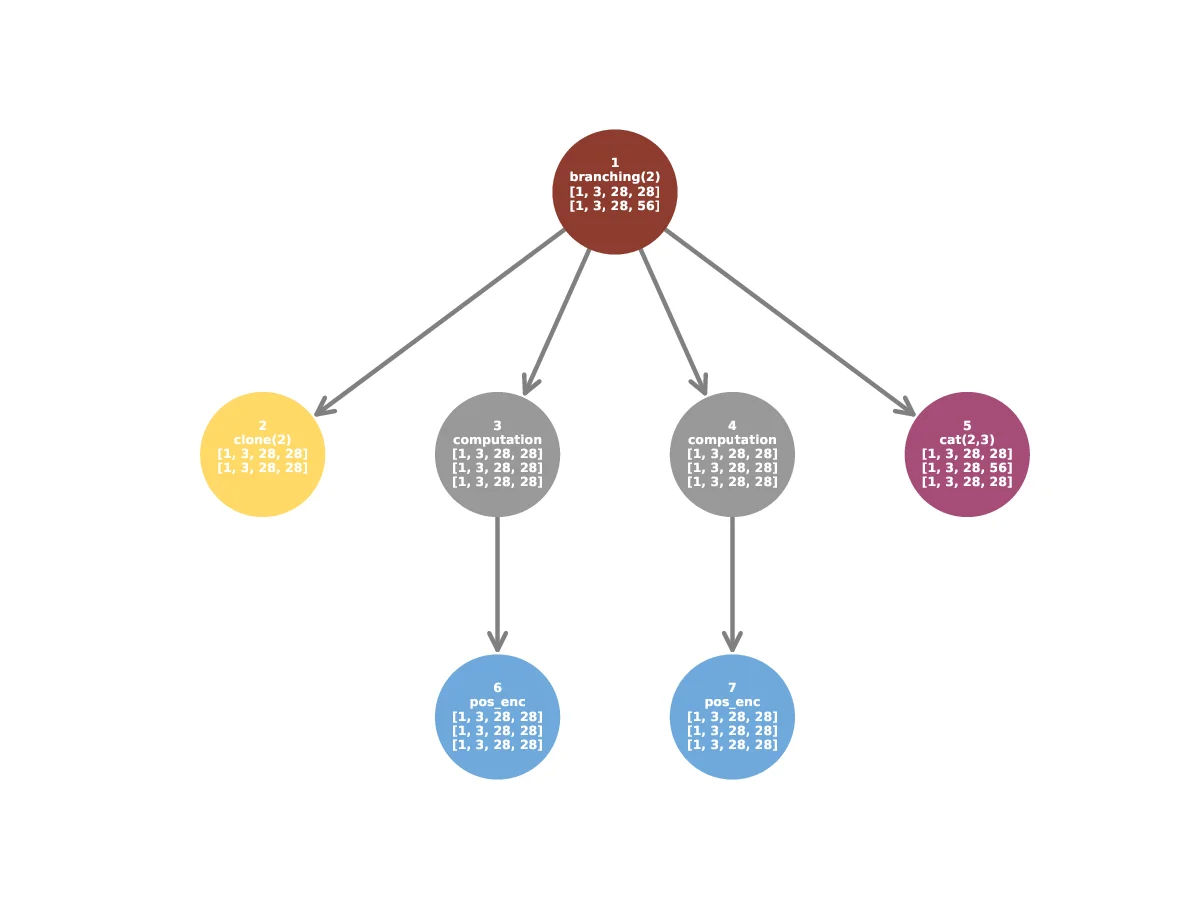

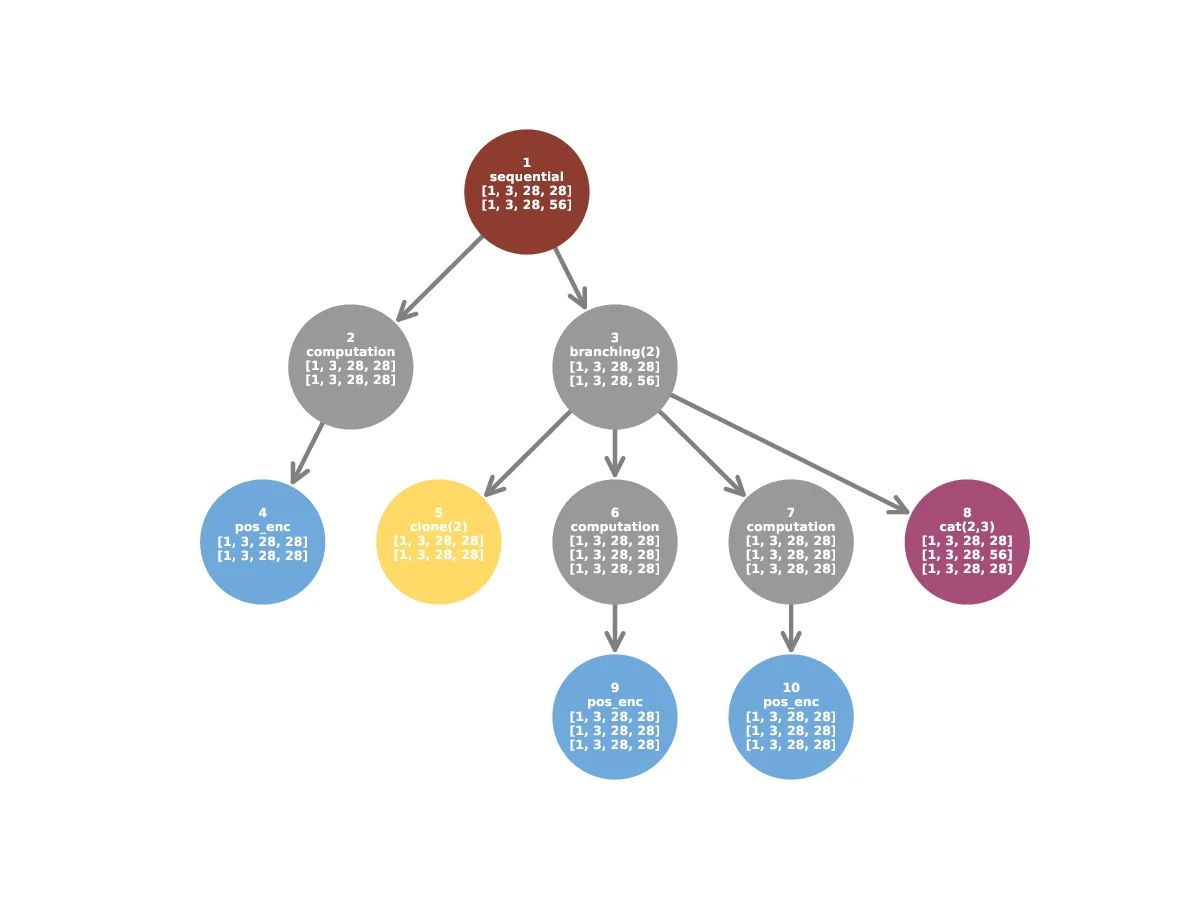

Grammar-based search spaces. Context-free grammars (CFGs) provide a compact and expressive way to encode architectures through a set of production rules. The einspace framework (Ericsson et al., 2024) builds on this idea by constructing a grammar that allows for complex architecture topologies while keeping a simple set of rules. In particular, the rule

M → B M M A

specifies a branching(2) module in which two independent submodules (M) are combined via a branching operator (B) followed by an aggregation operator (A). The resulting component allows for branching structures in the network, and thus breaks the sequential nature of the architectural encodings.

In addition to Branching modules, other production rules of the grammar describe Sequential and Routing modules, and terminal nodes can be grouped into five types: Branching, Aggregation, Pre-Routing, Post-Routing and Computation. For more details, see Ericsson et al. (2024).
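The following toy grammar sketches how production rules of this kind can be expanded into a flat token sequence. It is an illustrative assumption rather than the einspace grammar itself: the shapes of the sequential and routing rules, the nonterminal names `P_pre` and `P_post`, and every terminal name are hypothetical, and rules are sampled uniformly instead of with learned probabilities.

```python
import random
from typing import Dict, List

# Hypothetical toy grammar; only the module and terminal *types* come
# from the text, all concrete rule shapes and names are assumptions.
GRAMMAR: Dict[str, List[List[str]]] = {
    "M": [
        ["M", "M"],              # sequential module
        ["B", "M", "M", "A"],    # branching(2) module
        ["P_pre", "M", "P_post"],  # routing module
        ["C"],                   # single computation
    ],
    "B": [["clone"], ["group"]],           # branching terminals
    "A": [["add"], ["concat"]],            # aggregation terminals
    "P_pre": [["flatten"], ["identity"]],  # pre-routing terminals
    "P_post": [["unflatten"], ["identity"]],  # post-routing terminals
    "C": [["linear"], ["relu"], ["norm"]],  # computation terminals
}


def derive(symbol: str, rng: random.Random, depth: int = 0,
           max_depth: int = 4) -> List[str]:
    """Expand `symbol` into a list of terminal tokens."""
    if symbol not in GRAMMAR:
        return [symbol]  # terminal token
    options = GRAMMAR[symbol]
    if symbol == "M" and depth >= max_depth:
        options = [["C"]]  # force termination at the depth limit
    production = rng.choice(options)
    tokens: List[str] = []
    for child in production:
        tokens.extend(derive(child, rng, depth + 1, max_depth))
    return tokens


architecture = derive("M", random.Random(0))
```

Because every sequence produced this way comes from a derivation of the grammar, it is syntactically valid by construction; the depth limit exists only to keep the toy derivation finite.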

Evolutionary search. Evolutionary algorithms search by maintaining a population of candidate architectures and iteratively applying selection and variation operators (Liu et al., 2023). Variation is typically achieved through mutation, which introduces small random edits, and crossover, which recombines substructures from two parents into new offspring. While mutation drives local exploration, effective crossover can accelerate search by sharing and recombining high-performing architectural motifs across the population.
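The loop described above can be sketched as a small steady-state evolutionary search. This is a generic illustration under stated assumptions: the token vocabulary, the toy fitness function, the tournament size, and the one-point crossover are all hypothetical, and the crossover shown is a naive splice rather than the alignment-based operator proposed in this work.

```python
import random
from typing import Callable, List

Genome = List[str]
VOCAB = ["conv", "relu", "norm", "linear"]  # hypothetical token vocabulary


def mutate(genome: Genome, rng: random.Random) -> Genome:
    """Replace one randomly chosen token (a small random edit)."""
    child = list(genome)
    child[rng.randrange(len(child))] = rng.choice(VOCAB)
    return child


def crossover(a: Genome, b: Genome, rng: random.Random) -> Genome:
    """Naive one-point crossover: a prefix of `a` joined to a suffix of `b`.

    Assumes both parents contain at least two tokens.
    """
    cut_a = rng.randint(1, len(a) - 1)
    cut_b = rng.randint(1, len(b) - 1)
    return a[:cut_a] + b[cut_b:]


def evolve(population: List[Genome], fitness: Callable[[Genome], float],
           generations: int, rng: random.Random,
           crossover_rate: float = 0.5) -> Genome:
    """Steady-state evolution; assumes a population of at least three."""
    pop = [list(g) for g in population]  # copy so the input is untouched
    for _ in range(generations):
        # Tournament selection: best of three random individuals.
        p1 = max(rng.sample(pop, 3), key=fitness)
        p2 = max(rng.sample(pop, 3), key=fitness)
        if rng.random() < crossover_rate:
            child = crossover(p1, p2, rng)
        else:
            child = mutate(p1, rng)
        # Replace the current worst individual if the child is no worse.
        worst = min(range(len(pop)), key=lambda i: fitness(pop[i]))
        if fitness(child) >= fitness(pop[worst]):
            pop[worst] = child
    return max(pop, key=fitness)


def toy_fitness(genome: Genome) -> float:
    """Hypothetical objective: count occurrences of the 'conv' token."""
    return float(genome.count("conv"))


initial = [["relu", "norm"], ["conv", "relu"],
           ["linear", "norm", "relu"], ["norm", "linear"]]
best = evolve(initial, toy_fitness, generations=50, rng=random.Random(0))
```

A naive splice like this can produce sequences that no longer correspond to a valid derivation in a grammar-based space, which is one reason a crossover operator that respects the grammar is needed.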

Recent NAS methods use context-free grammars (CFGs) to create more expressive architectural search spaces. Hierarchical NAS (Schrodi et al., 2023) uses grammars to compose diverse macro- and micro-structures, expanding significantly beyond traditional cell-based spaces. Ericsson et al. (2024) employ probabilistic CFGs with re

…(Full text truncated)…
