📝 Original Info

- Title: NAWOA-XGBoost: A Novel Model for Early Prediction of Academic Potential in Computer Science Students

- ArXiv ID: 2512.04751

- Date: 2025-12-04

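- Authors: Junhao Wei, Yanzhao Gu, Ran Zhang, Mingjing Huang, Jinhong Song, Yanxiao Li, Wenxuan Zhu, Yapeng Wang, Zikun Li, Zhiwen Wang, Xu Yang, Ngai Cheong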

📝 Abstract

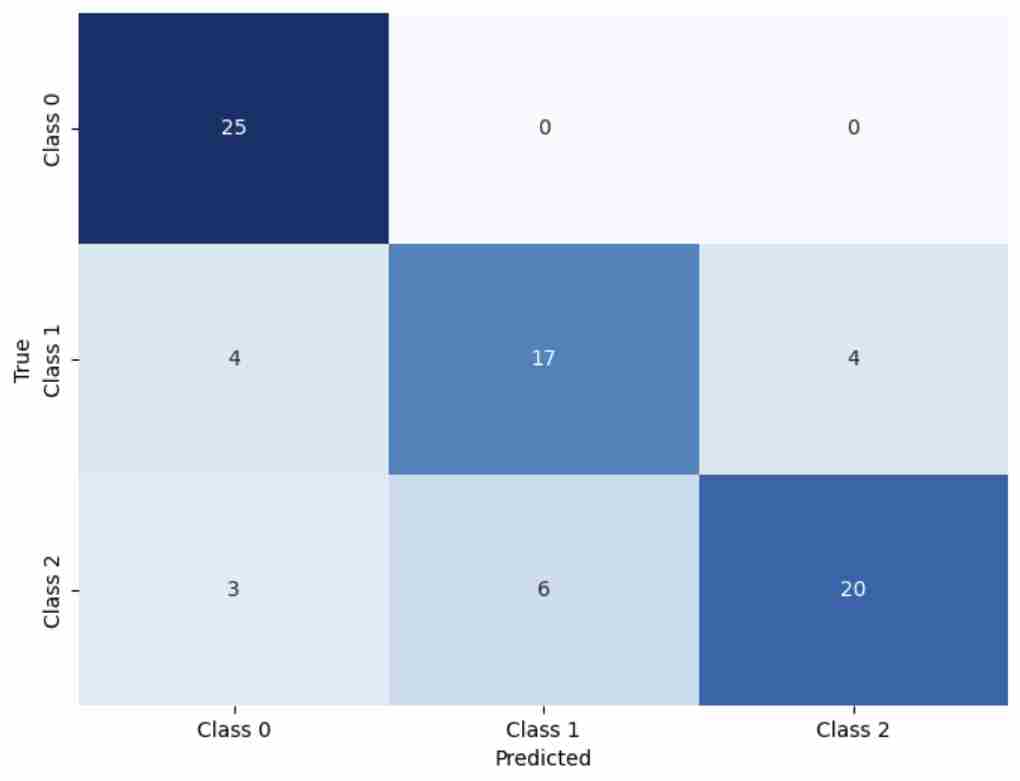

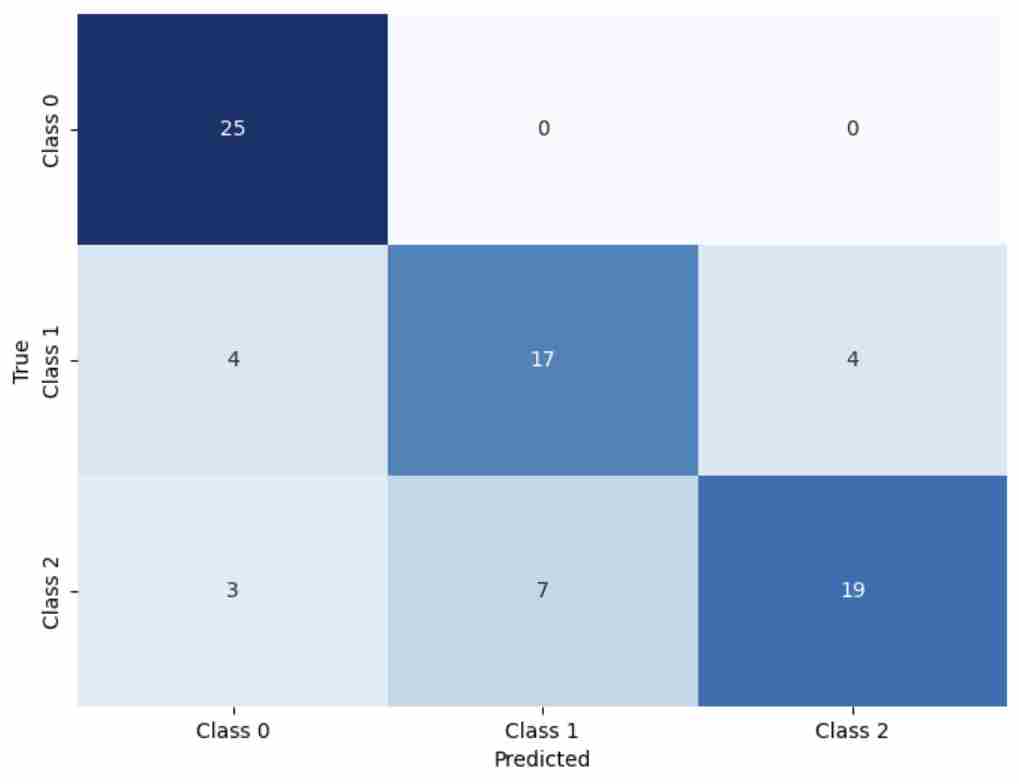

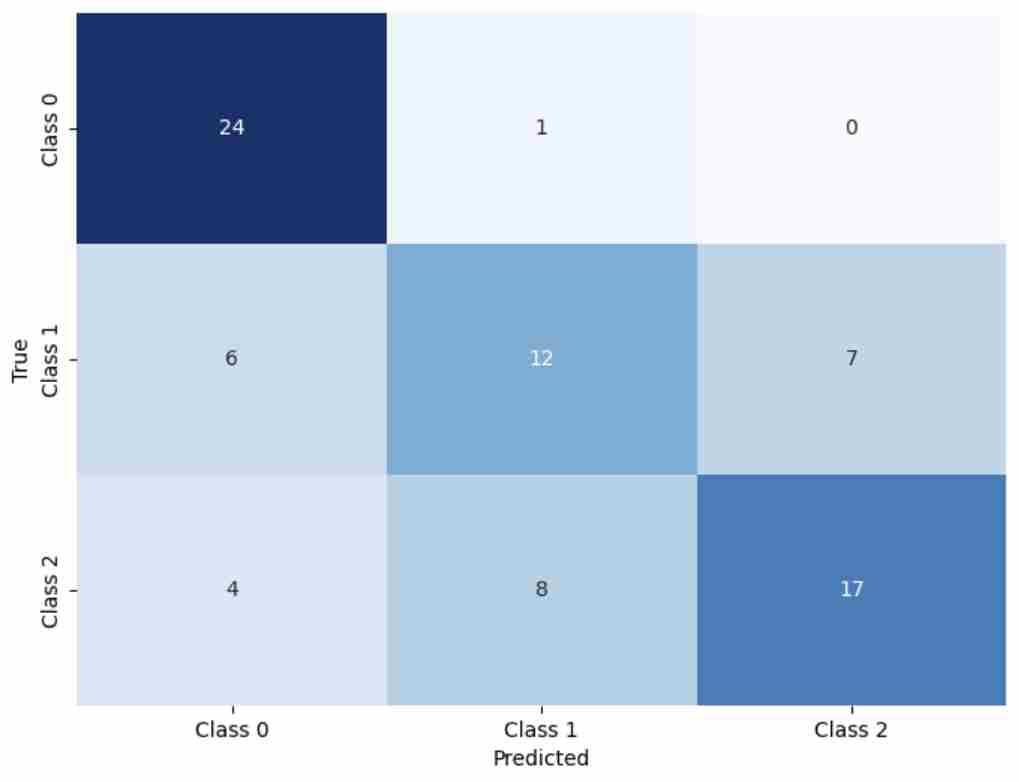

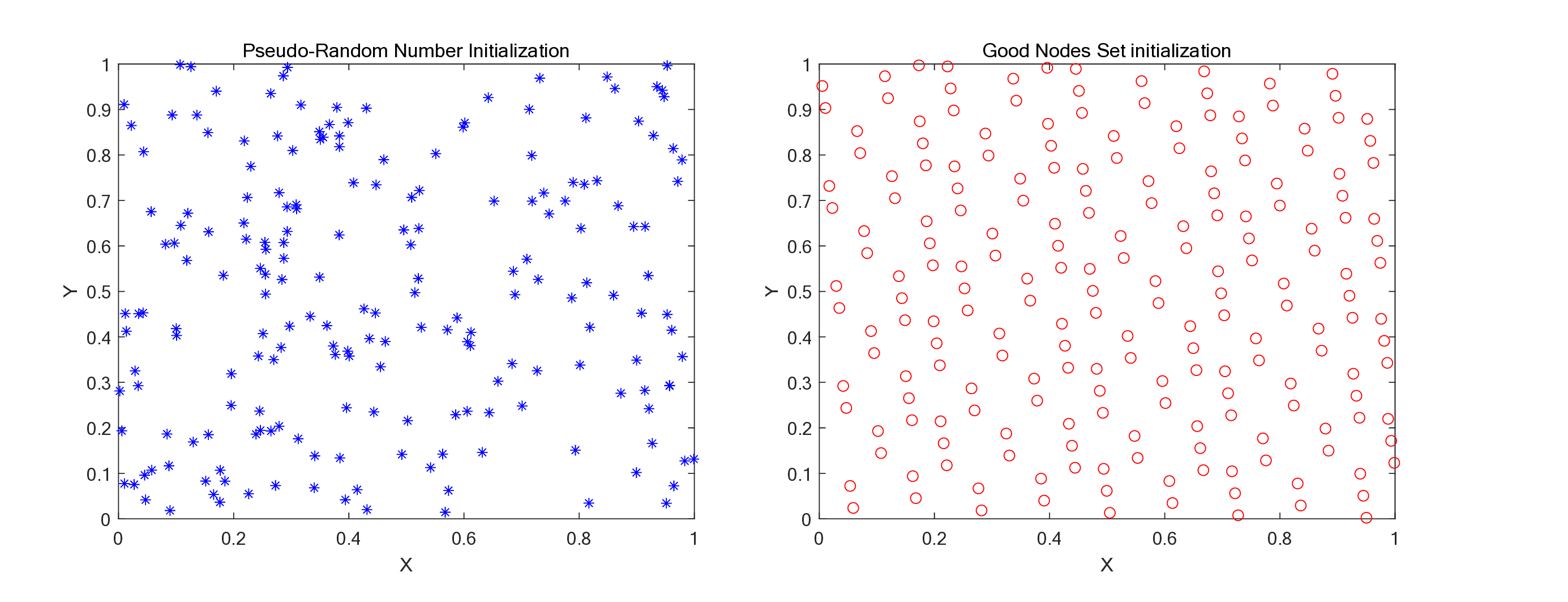

Whale Optimization Algorithm (WOA) suffers from limited global search ability, slow convergence, and tendency to fall into local optima, restricting its effectiveness in hyperparameter optimization for machine learning models. To address these issues, this study proposes a Nonlinear Adaptive Whale Optimization Algorithm (NAWOA), which integrates strategies such as Good Nodes Set initialization, Leader-Followers Foraging, Dynamic Encircling Prey, Triangular Hunting, and a nonlinear convergence factor to enhance exploration, exploitation, and convergence stability. Experiments on 23 benchmark functions demonstrate NAWOA's superior optimization capability and robustness. Based on this optimizer, an NAWOA-XGBoost model was developed to predict academic potential using data from 495 Computer Science undergraduates at Macao Polytechnic University (2009-2019). Results show that NAWOA-XGBoost outperforms traditional XGBoost and WOA-XGBoost across key metrics, including Accuracy (0.8148), Macro F1 (0.8101), AUC (0.8932), and G-Mean (0.8172), demonstrating strong adaptability on multi-class imbalanced datasets.


📄 Full Content

NAWOA-XGBOOST: A NOVEL MODEL FOR EARLY PREDICTION OF ACADEMIC POTENTIAL IN COMPUTER SCIENCE STUDENTS

Junhao Wei, Yanzhao Gu, Ran Zhang, Mingjing Huang, Jinhong Song, Yanxiao Li, Wenxuan Zhu, Yapeng Wang

Faculty of Applied Sciences

Macao Polytechnic University

Macao, China

{p2312195,p2311998,p2512396,P2314640,p2315937,P2525981,p2525620,yapengwang}@mpu.edu.mo

Zikun Li

School of Economics and Management

South China Normal University

18520610821@163.com

Zhiwen Wang

School of Nursing

Peking University

wzwjing@sina.com

Xu Yang*, Ngai Cheong*

Faculty of Applied Sciences

Macao Polytechnic University

{xuyang,ncheong}@mpu.edu.mo

ABSTRACT

Whale Optimization Algorithm (WOA) suffers from limited global search ability, slow convergence, and tendency to fall into local optima, restricting its effectiveness in hyperparameter optimization for machine learning models. To address these issues, this study proposes a Nonlinear Adaptive Whale Optimization Algorithm (NAWOA), which integrates strategies such as Good Nodes Set initialization, Leader-Followers Foraging, Dynamic Encircling Prey, Triangular Hunting, and a nonlinear convergence factor to enhance exploration, exploitation, and convergence stability. Experiments on 23 benchmark functions demonstrate NAWOA’s superior optimization capability and robustness. Based on this optimizer, an NAWOA-XGBoost model was developed to predict academic potential using data from 495 Computer Science undergraduates at Macao Polytechnic University (2009-2019). Results show that NAWOA-XGBoost outperforms traditional XGBoost and WOA-XGBoost across key metrics, including Accuracy (0.8148), Macro F1 (0.8101), AUC (0.8932), and G-Mean (0.8172), demonstrating strong adaptability on multi-class imbalanced datasets.

Keywords Whale Optimization Algorithm · XGBoost · data mining · education technology
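The abstract reports Accuracy, Macro F1, AUC, and G-Mean on a multi-class imbalanced dataset. As a rough illustration of how three of these can be computed from a confusion matrix, the sketch below assumes that G-Mean is the geometric mean of the per-class recalls, a common multi-class definition that this excerpt does not confirm. The confusion matrix and the function names are hypothetical and are not taken from the paper. AUC is omitted because it needs predicted probabilities rather than hard labels.

```python
import math


def per_class_stats(conf):
    """Return (precision, recall, f1) per class.

    conf[i][j] is the number of samples with true class i predicted as class j.
    """
    k = len(conf)
    stats = []
    for c in range(k):
        tp = conf[c][c]
        fn = sum(conf[c]) - tp
        fp = sum(conf[r][c] for r in range(k)) - tp
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        stats.append((precision, recall, f1))
    return stats


def accuracy(conf):
    """Fraction of samples on the diagonal of the confusion matrix."""
    total = sum(sum(row) for row in conf)
    return sum(conf[i][i] for i in range(len(conf))) / total


def macro_f1(conf):
    """Unweighted mean of per-class F1, so minority classes count equally."""
    stats = per_class_stats(conf)
    return sum(f1 for _, _, f1 in stats) / len(stats)


def g_mean(conf):
    """Geometric mean of per-class recalls (assumed definition)."""
    recalls = [recall for _, recall, _ in per_class_stats(conf)]
    return math.prod(recalls) ** (1.0 / len(recalls))


# Hypothetical 3-class confusion matrix (illustrative numbers only).
conf = [
    [50, 5, 5],
    [4, 20, 6],
    [1, 2, 7],
]
print(accuracy(conf), macro_f1(conf), g_mean(conf))
```

Because Macro F1 and G-Mean weight every class equally, a model that ignores a small class is penalized even when its overall accuracy stays high, which is why such metrics are often preferred on imbalanced data.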

1 Introduction

With the rapid development of Educational Data Mining (EDM) [1], predicting students’ future academic potential based on their early learning performance has become an important research direction for quality assurance, resource allocation, and personalized instruction in higher education. In the field of Computer Science (CS), students’ academic records and behavioral data during their first and second years often provide strong indications of their foundational knowledge, learning capability, and future development potential. Therefore, establishing a reliable early academic potential prediction model is of significant importance.

In recent years, machine learning methods have been extensively applied to educational prediction tasks. Among these methods, XGBoost is regarded as a suitable model due to its strong generalization ability and superior performance on structured data. However, the performance of XGBoost depends heavily on its hyperparameter settings. Manual tuning is inefficient and prone to local optima, which restricts further improvements in predictive performance. To address this limitation, researchers have turned their attention to metaheuristic algorithms. Metaheuristic algorithms, which are known for their strong optimization abilities, have been applied to path planning [2][3][4], engineering design, antenna design [5], and hyperparameter optimization for machine learning models. Among them, the Whale Optimization Algorithm (WOA) has attracted attention due to its simplicity, efficiency, and global search capability.


arXiv:2512.04751v2 [cs.CE] 5 Dec 2025


Nevertheless, the conventional WOA tends to suffer from premature convergence and loss of population diversity in later iterations, limiting its ability to effectively explore complex parameter spaces.
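For readers unfamiliar with the baseline, the following is a minimal sketch of the classical WOA update loop (encircling, random search, and spiral moves, driven by a convergence factor `a` that decays linearly from 2 to 0). It is not the NAWOA proposed in this paper, and none of the NAWOA strategies are included. The function name `woa_minimize`, the default settings, and the choice to draw the coefficients `A` and `C` per dimension are assumptions made for illustration.

```python
import math
import random


def woa_minimize(objective, dim, lower, upper,
                 n_agents=30, n_iter=200, b=1.0, seed=0):
    """Minimize `objective` over [lower, upper]^dim with a classical-style WOA."""
    rng = random.Random(seed)
    agents = [[rng.uniform(lower, upper) for _ in range(dim)]
              for _ in range(n_agents)]
    best = min(agents, key=objective)[:]
    best_f = objective(best)
    history = []  # best fitness after each iteration

    for t in range(n_iter):
        a = 2.0 * (1.0 - t / n_iter)  # linear convergence factor: 2 -> 0
        for i in range(n_agents):
            x = agents[i]
            new = []
            if rng.random() < 0.5:
                rand_agent = agents[rng.randrange(n_agents)]
                for d in range(dim):
                    A = a * (2.0 * rng.random() - 1.0)  # A in [-a, a]
                    C = 2.0 * rng.random()              # C in [0, 2]
                    # |A| < 1: shrink toward the best agent (exploitation);
                    # otherwise move relative to a random agent (exploration).
                    ref = best[d] if abs(A) < 1.0 else rand_agent[d]
                    new.append(ref - A * abs(C * ref - x[d]))
            else:
                # Logarithmic spiral around the best agent.
                l = rng.uniform(-1.0, 1.0)
                spiral = math.exp(b * l) * math.cos(2.0 * math.pi * l)
                for d in range(dim):
                    new.append(best[d] + abs(best[d] - x[d]) * spiral)
            # Keep the agent inside the search bounds.
            agents[i] = [min(max(v, lower), upper) for v in new]

        for x in agents:
            f = objective(x)
            if f < best_f:
                best, best_f = x[:], f
        history.append(best_f)

    return best, best_f, history


def sphere(x):
    """Simple unimodal test function with its minimum of 0 at the origin."""
    return sum(v * v for v in x)


best, best_f, history = woa_minimize(sphere, dim=5, lower=-10.0, upper=10.0)
print(best_f)
```

In this sketch the second half of the run has `a < 1`, so every agent moves relative to the current best. That behavior is consistent with the loss of population diversity in later iterations described above.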

Previous studies have integrated the classical WOA with XGBoost and achieved promising prediction results [6]. However, there remains considerable room for improvement. Building upon this foundation, the present study proposes a Nonlinear Adaptive Whale Optimization Algorithm (NAWOA), which incorporates strategies such as Good Nodes Set initialization, Leader-Followers Foraging, and Dynamic Encircling Prey to enhance global exploration ability and optimization stability. Experiments conducted on 23 benchmark functions validate the strong optimization capability of the proposed algorithm [7][8]. Based on this enhanced optimizer, we construct an NAWOA-XGBoost academic potential prediction model and evaluate it using a real dataset of 495 undergraduate students majoring in Computer Science at Macao Polytechnic University (MPU) from 2009 to 2019. The dataset was preprocessed and provided by the institution, enabling direct use for training and comparative analysis.
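Coupling a population-based optimizer to XGBoost usually means treating each agent's position as an encoded hyperparameter vector and scoring it with a validation metric. The sketch below shows one possible encoding, under stated assumptions. The hyperparameter names are real XGBoost parameters, but the set chosen, the search ranges, and the helper names `decode` and `make_fitness` are hypothetical. The excerpt does not state which hyperparameters or ranges the authors tuned.

```python
# Hypothetical search ranges: (low, high, type). Not taken from the paper.
SEARCH_SPACE = {
    "max_depth": (3, 10, int),
    "learning_rate": (0.01, 0.3, float),
    "n_estimators": (50, 500, int),
    "subsample": (0.5, 1.0, float),
    "colsample_bytree": (0.5, 1.0, float),
}


def decode(position):
    """Map a position in [0, 1]^d to a dict of XGBoost-style hyperparameters."""
    params = {}
    for u, (name, (low, high, kind)) in zip(position, SEARCH_SPACE.items()):
        u = min(max(u, 0.0), 1.0)        # clamp out-of-range coordinates
        value = low + u * (high - low)   # linear scaling into the range
        params[name] = int(round(value)) if kind is int else value
    return params


def make_fitness(score_fn):
    """Turn a 'higher is better' score into a cost that an optimizer minimizes.

    `score_fn` takes a hyperparameter dict and returns a score in [0, 1],
    for example a cross-validated Macro F1.
    """
    def fitness(position):
        return 1.0 - score_fn(decode(position))
    return fitness


# Stand-in scorer so the sketch runs without XGBoost or any data.
def dummy_score(params):
    return 0.8


fitness = make_fitness(dummy_score)
print(decode([0.0, 0.5, 1.0, 0.25, 0.75]), fitness([0.5] * 5))
```

In a real pipeline, `score_fn` could wrap something like the mean of scikit-learn's `cross_val_score` over `xgboost.XGBClassifier(**params)` with `scoring="f1_macro"`. That wiring is an assumption and is not described in this excerpt.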

Experimental results indicate that NAWOA can more effectively optimize the hyperparameters of XGBoost, thereby enhancing model stability and predictive performance in multi-class educational prediction tasks. The proposed model provides a valuable reference for early acad

…(Full text truncated)…


This content is AI-processed based on ArXiv data.