📝 Original Info

- Title: An Adaptive Vision-Language Model Attack Framework Exploiting Scaling Vulnerabilities

- ArXiv ID: 2512.04895

- Date: 2025-12-04

- Authors: M Zeeshan, Saud Satti

📝 Abstract

Multimodal Artificial Intelligence (AI) systems, particularly Vision-Language Models (VLMs), have become integral to critical applications ranging from autonomous decision-making to automated document processing. As these systems scale, they rely heavily on preprocessing pipelines to handle diverse inputs efficiently. However, this dependency on standard preprocessing operations, specifically image downscaling, creates a significant yet often overlooked security vulnerability. While intended for computational optimization, scaling algorithms can be exploited to conceal malicious visual prompts that are invisible to human observers but become active semantic instructions once processed by the model. Current adversarial strategies remain largely static, failing to account for the dynamic nature of modern agentic workflows. To address this gap, we propose Chameleon, a novel, adaptive adversarial framework designed to expose and exploit scaling vulnerabilities in production VLMs. Unlike traditional static attacks, Chameleon employs an iterative, agent-based optimization mechanism that dynamically refines image perturbations based on the target model's real-time feedback. This allows the framework to craft highly robust adversarial examples that survive standard downscaling operations to hijack downstream execution. We evaluate Chameleon against the Gemini 2.5 Flash model. Our experiments demonstrate that Chameleon achieves an Attack Success Rate (ASR) of 84.5% across varying scaling factors, significantly outperforming static baseline attacks, which average only 32.1%. Furthermore, we show that these attacks effectively compromise agentic pipelines, reducing decision-making accuracy by over 45% in multi-step tasks. Finally, we discuss the implications of these vulnerabilities and propose multi-scale consistency checks as a necessary defense mechanism.

💡 Deep Analysis

Deep Dive into An Adaptive Vision-Language Model Attack Framework Exploiting Scaling Vulnerabilities.


📄 Full Content

Vision-language models (VLMs) have rapidly advanced and are increasingly deployed in high-stakes applications such as document understanding, autonomous decision-making, and multimodal agentic systems (MAS) [1]-[3]. To handle high-resolution inputs efficiently, these systems frequently preprocess images by scaling (resizing) them to standard dimensions. While such preprocessing is ubiquitous, it exposes a subtle yet potent vulnerability: adversaries can exploit scaling transformations to inject malicious prompts into images that only manifest after downscaling [4]. Recent research by Trail of Bits has demonstrated that carefully crafted high-resolution images can hide semantic payloads that emerge during scaling, bypassing human inspection and fooling VLMs.
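To make the mechanism concrete, here is a minimal sketch of a static scaling attack against a nearest-neighbor downscaler. It assumes grayscale images stored as nested lists and a floor-based index mapping `src[floor(i*H/h)][floor(j*W/w)]`; both are illustrative assumptions, since real libraries differ in sampling offsets and production pipelines often use bilinear or bicubic kernels. The helper names `nearest_downscale` and `craft_scaling_attack` are hypothetical. The attacker overwrites only the pixels the downscaler will actually read, so the full-resolution image looks almost unchanged while its downscaled copy equals the payload.

```python
from typing import List

Image = List[List[int]]  # grayscale image: rows of 0-255 intensities


def nearest_downscale(src: Image, out_h: int, out_w: int) -> Image:
    """Nearest-neighbor downscaling using a floor-based index mapping.

    Assumption: output pixel (i, j) copies src[i*H//h][j*W//w]; real
    libraries may use different sampling offsets.
    """
    in_h, in_w = len(src), len(src[0])
    return [
        [src[(i * in_h) // out_h][(j * in_w) // out_w] for j in range(out_w)]
        for i in range(out_h)
    ]


def craft_scaling_attack(benign: Image, target: Image) -> Image:
    """Overwrite only the source pixels the downscaler samples with the payload."""
    in_h, in_w = len(benign), len(benign[0])
    out_h, out_w = len(target), len(target[0])
    attack = [row[:] for row in benign]  # copy: benign content stays everywhere else
    for i in range(out_h):
        for j in range(out_w):
            attack[(i * in_h) // out_h][(j * in_w) // out_w] = target[i][j]
    return attack


# Toy example: an 8x8 light-gray image hides a 2x2 checkerboard "payload".
benign = [[200] * 8 for _ in range(8)]
target = [[0, 255], [255, 0]]
attack = craft_scaling_attack(benign, target)

changed = sum(a != b for ra, rb in zip(attack, benign) for a, b in zip(ra, rb))
print(changed)                          # 4 (of 64 pixels)
print(nearest_downscale(attack, 2, 2))  # [[0, 255], [255, 0]]
```

Only 4 of the 64 source pixels change, which is why the manipulation is hard to spot at full resolution; a real payload would be rendered text rather than a checkerboard.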

However, a critical limitation exists in the current landscape of adversarial research. Current scaling-based attacks are predominantly static and single-shot; none employ adaptive feedback loops that adjust perturbations based on real-time VLM responses. This lack of adaptability renders existing attacks brittle and model-specific [5]. Moreover, prior work rarely considers the severe implications of such attacks in agentic multi-step systems, where downstream decisions, not just immediate text outputs, are at stake. As modern MAS perform complex reasoning and decision-making, the security gap introduced by static, unoptimized scaling attacks becomes a significant point of failure [6].

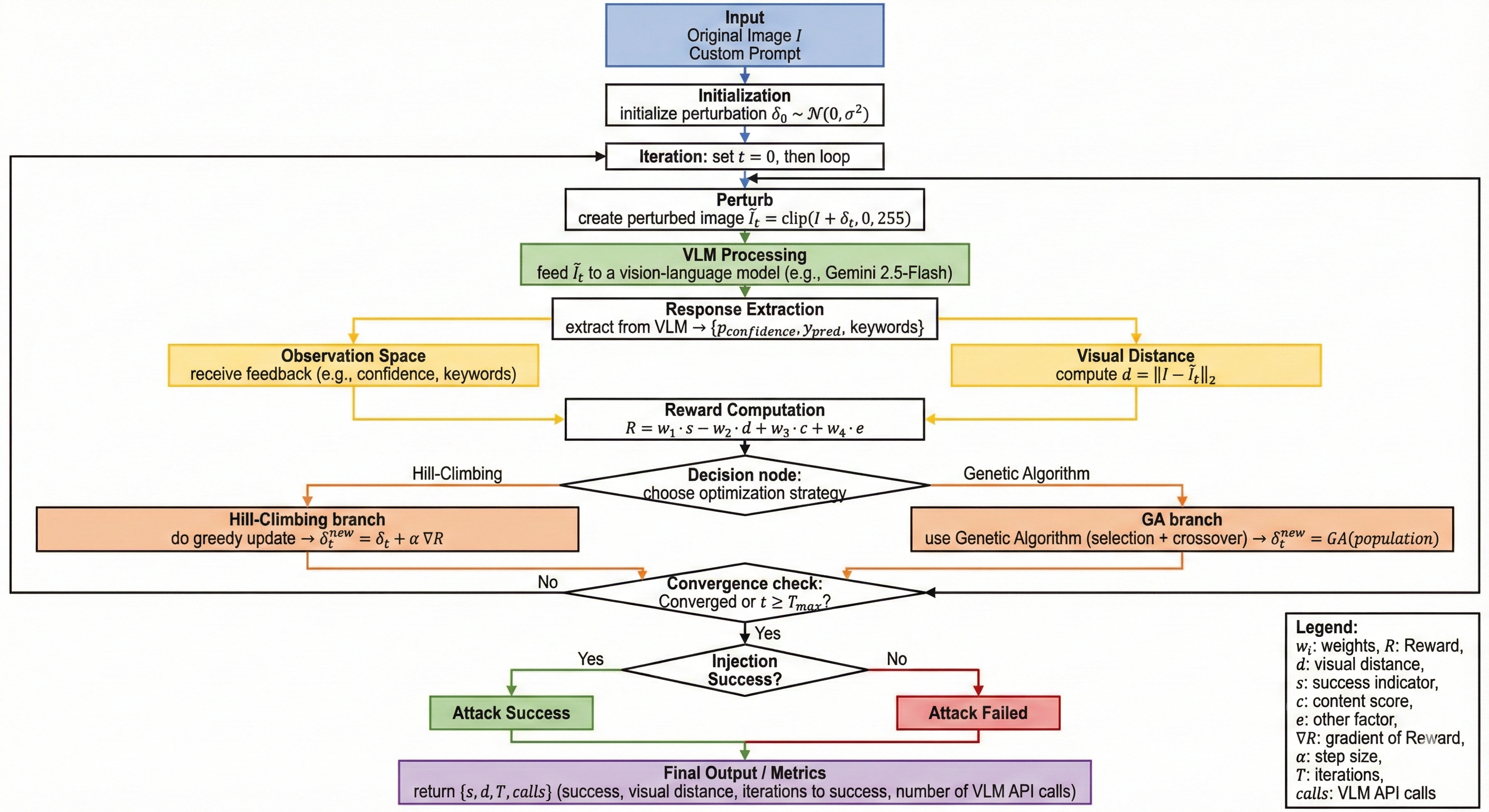

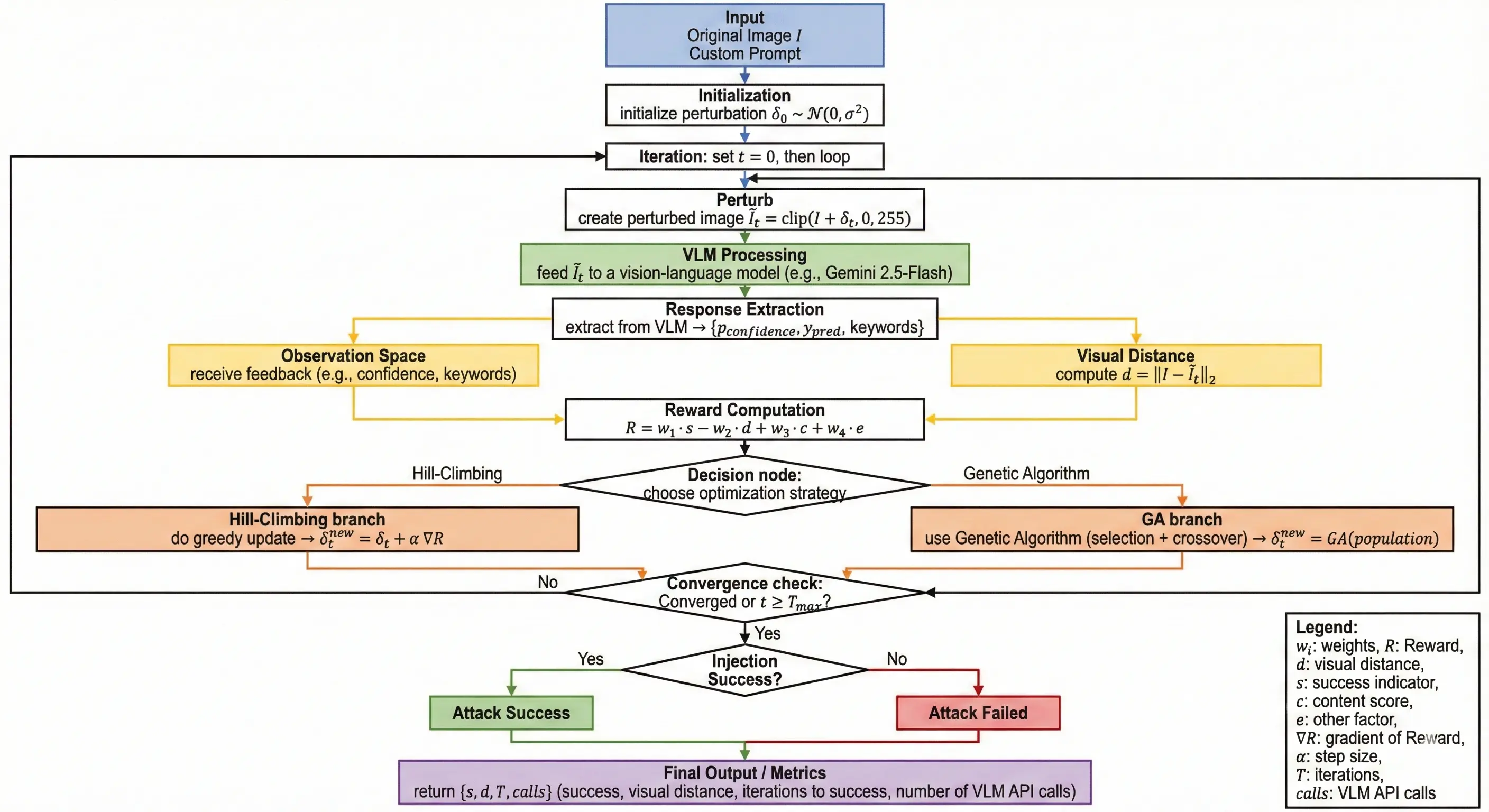

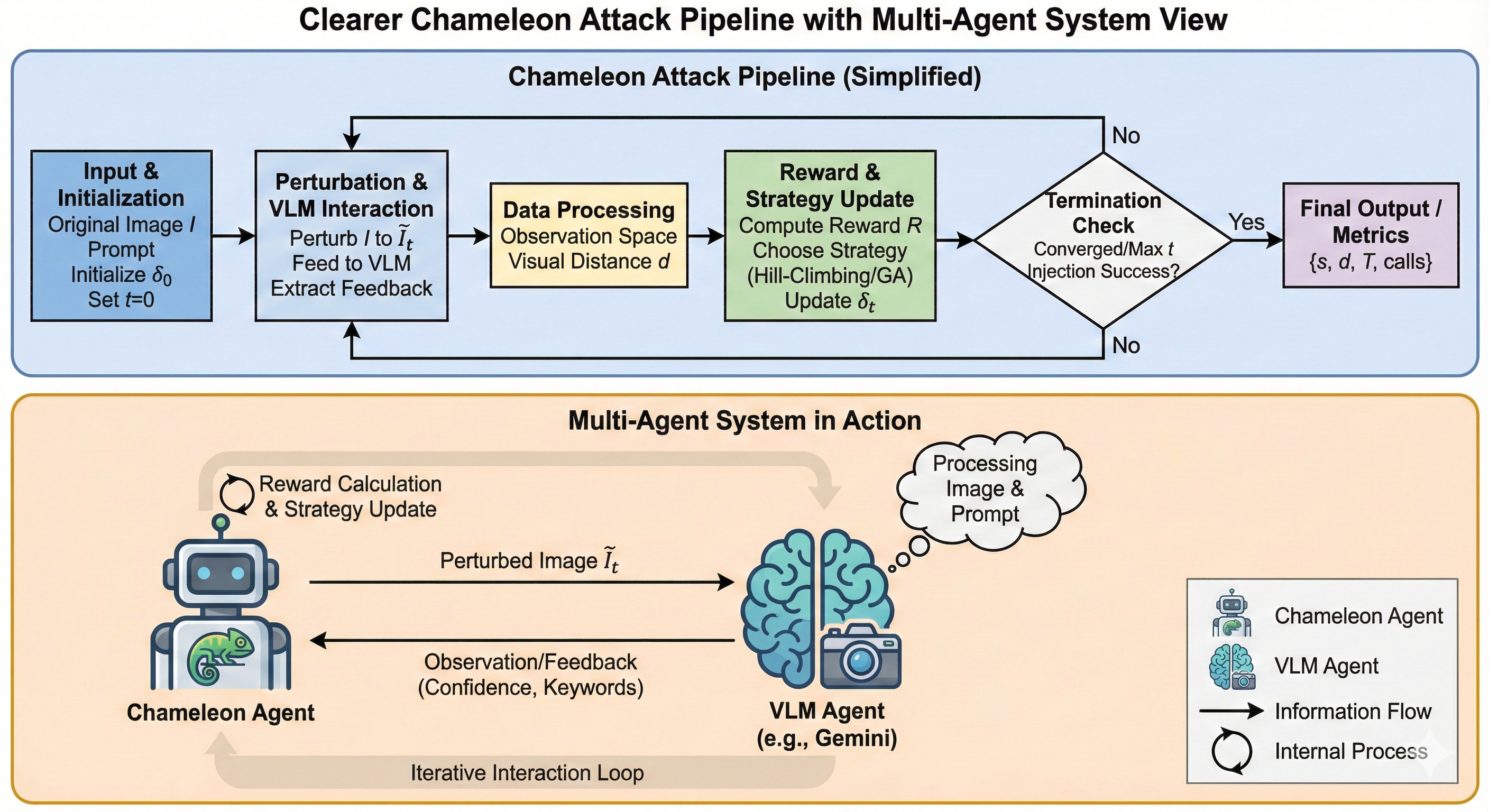

In response, we propose Chameleon [7], an adaptive adversarial framework that iteratively refines image perturbations to inject malicious prompts into VLMs through preprocessing vulnerabilities. Unlike prior static approaches, Chameleon uses a feedback-driven, agent-style optimization loop that dynamically adjusts its strategy based on the target model's responses [8]. We evaluate Chameleon on a production VLM (Gemini 2.5 Flash) and demonstrate that it can reliably hijack downstream decisions in a simulated multimodal agent pipeline. Specifically, the main contributions of this work are: … By … offering an optimizable attack mechanism, our work closes an important security gap in multimodal AI pipelines and paves the way for more robust, trustworthy agentic systems.
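The difference between a static attack and a feedback-driven one can be sketched as a black-box refinement loop. The excerpt does not describe Chameleon's actual optimizer, so the sketch below swaps in plain greedy random search: perturb the attack parameters, query the model, and keep a candidate only if the feedback score improves. `adaptive_attack_loop`, `mock_vlm_feedback`, and the `contrast`/`blur` parameters are all hypothetical; in a real setting the score function would render the attack image, send it to the VLM, and rate how well the hidden instruction was followed.

```python
import random
from typing import Callable, Dict, List, Tuple

Params = Dict[str, float]  # hypothetical attack knobs, each kept in [0, 1]


def adaptive_attack_loop(
    score_fn: Callable[[Params], float],
    init: Params,
    step: float = 0.1,
    budget: int = 50,
    success: float = 0.95,
    seed: int = 0,
) -> Tuple[Params, List[float]]:
    """Greedy random search driven by (simulated) model feedback.

    Not Chameleon's algorithm: a generic stand-in showing the
    query -> score -> refine cycle that static attacks lack.
    """
    rng = random.Random(seed)
    best = dict(init)
    best_score = score_fn(best)
    history = [best_score]
    for _ in range(budget):
        if best_score >= success:
            break  # feedback says the payload already works; stop querying
        # Jitter every parameter and clamp it back into [0, 1].
        candidate = {
            k: min(1.0, max(0.0, v + rng.uniform(-step, step)))
            for k, v in best.items()
        }
        cand_score = score_fn(candidate)
        if cand_score > best_score:  # keep only improvements
            best, best_score = candidate, cand_score
        history.append(best_score)
    return best, history


def mock_vlm_feedback(p: Params) -> float:
    """Hypothetical stand-in for a real VLM query; peaks at contrast=0.7, blur=0.2."""
    return 1.0 - abs(p["contrast"] - 0.7) - abs(p["blur"] - 0.2)


best, history = adaptive_attack_loop(mock_vlm_feedback, {"contrast": 0.3, "blur": 0.5})
print(round(history[0], 2))  # 0.3: score of the initial, unrefined attack
print(round(history[-1], 2), best)
```

Because only improvements are accepted, the recorded score never decreases; the budget and success threshold bound how many queries the attacker spends, which matters when each query hits a rate-limited production API.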

The rapid deployment of large VLMs has necessitated a critical re-evaluation of security in operational environments. Recent surveys, such as that by Tao [9], highlight that while these frameworks enable complex reasoning, their integration into real-world systems introduces significant robustness concerns. Similarly, Shang [10] illustrates a fundamental trade-off: as generative multimodal frameworks become more capable of handling complex reasoning tasks, they concurrently expose new operational risks. Studies on adversarial manipulation further indicate that VLM-driven conversational agents remain highly susceptible to multimodal perturbations, suggesting that increased model capability does not inherently translate into an improved security posture.

In the domain of Multi-Agent Reinforcement Learning (MARL), systematizing security requires moving beyond static analysis. Standen [11] categorizes execution-time adversarial attacks from a novel attack-vector perspective, addressing how perturbations propagate across agent interactions and identifying gaps in current defense methodologies. The resilience of these collaborative architectures was further investigated by Huang [12], who simulated attacks using automated transformation methods. Their research determined that topological structure significantly influences stability: hierarchical configurations limited performance degradation to just 5.5%, whereas flat structures suffered drops of up to 23.7%. This underscores the fragility of agentic systems when individual components are compromised.

A critical, often overlooked attack surface lies within the fundamental machine learning preprocessing pipeline, specifically regarding image scaling. Xiao [13] introduced a foundational framework to generate camouflage images that alter their visual semantics upon downsampling. This work empirically demonstrated that standard scaling algorithms in libraries like OpenCV can be exploited to execute evasion attacks against both deep learning models and black-box cloud services. Building upon this, Quiring [14] provided a rigorous signal processing analysis, identifying that the root cause lies in the interplay between downsampling and convolution operations. More recently, the “Anamorpher” tool, detailed by Trail of Bits [15], demonstrated the weaponization of image scaling against production AI systems, confirming that legacy preprocessing flaws remain a potent vector for injecting malicious payloads.
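The signal-processing argument can be illustrated on a toy example. In our reading of Quiring's analysis, an attack succeeds when the scaling kernel reads only a small subset of source pixels: a nearest-neighbor downscaler with factor β = 4 reads just 1/β² = 1/16 of them, whereas area (box) averaging weighs every pixel and dilutes an isolated payload. The sketch below, under the same assumptions as a nested-list grayscale image and exact integer scale factors, compares the two kernels and flags a large disagreement. This kernel-consistency heuristic is only related in spirit to the multi-scale consistency checks the authors propose, whose details are not in this excerpt; the function names and the threshold of 50 are hypothetical.

```python
from typing import List

Image = List[List[float]]  # grayscale image: rows of 0-255 intensities


def nearest_downscale(src: Image, out_h: int, out_w: int) -> Image:
    """Nearest-neighbor: each output pixel copies a single source pixel."""
    in_h, in_w = len(src), len(src[0])
    return [
        [src[(i * in_h) // out_h][(j * in_w) // out_w] for j in range(out_w)]
        for i in range(out_h)
    ]


def area_downscale(src: Image, out_h: int, out_w: int) -> Image:
    """Box filter: average every source pixel in each block (integer factors assumed)."""
    in_h, in_w = len(src), len(src[0])
    fy, fx = in_h // out_h, in_w // out_w
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            block = [
                src[y][x]
                for y in range(i * fy, (i + 1) * fy)
                for x in range(j * fx, (j + 1) * fx)
            ]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def max_kernel_disagreement(src: Image, out_h: int, out_w: int) -> float:
    """Largest per-pixel gap between the two kernels' outputs."""
    a = nearest_downscale(src, out_h, out_w)
    b = area_downscale(src, out_h, out_w)
    return max(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))


THRESHOLD = 50.0  # hypothetical alarm level, not taken from the paper

benign = [[200] * 8 for _ in range(8)]
attack = [row[:] for row in benign]
# Plant a 2x2 payload exactly where the nearest-neighbor kernel samples.
for (y, x), v in {(0, 0): 0, (0, 4): 255, (4, 0): 255, (4, 4): 0}.items():
    attack[y][x] = v

print(max_kernel_disagreement(benign, 2, 2))  # 0.0
print(max_kernel_disagreement(attack, 2, 2))  # 187.5
print(max_kernel_disagreement(attack, 2, 2) > THRESHOLD)  # True
```

Under area averaging the planted black pixel only pulls its block from 200 to 187.5, so the payload nearly vanishes, while nearest-neighbor reproduces it exactly; that gap is what the check exploits.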

Despite progress in adversarial scaling attacks, to the best of our knowledge, no work combines dynamic optimization with VLM feedback, leaving a gap for adaptive scaling-based prompt injection. Existing approaches remain largely static, failing

…(Full text truncated)…


This content is AI-processed based on ArXiv data.