CR3G: Causal Reasoning for Patient-Centric Explanations in Radiology Report Generation

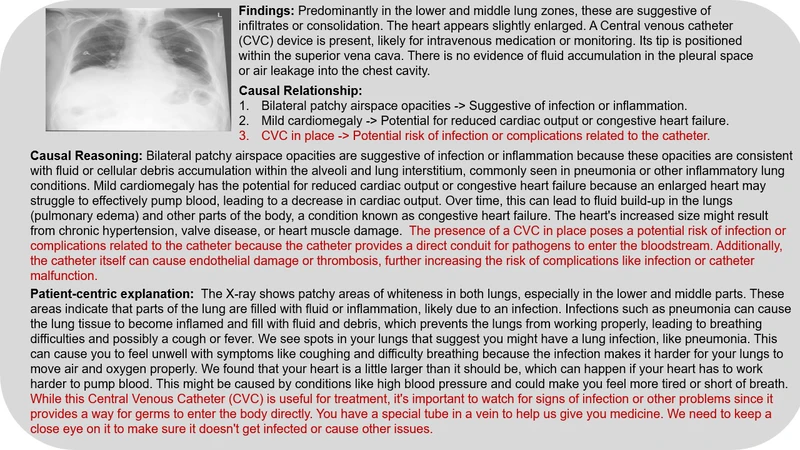

Automatic chest X-ray report generation is an important area of research aimed at improving diagnostic accuracy and helping doctors make faster decisions. Current AI models are good at finding correlations (or patterns) in medical images, but they often struggle to understand the deeper cause-and-effect relationships between those patterns and a patient's condition. Causal inference is a powerful approach that goes beyond identifying patterns to uncover why certain findings in an X-ray relate to a specific diagnosis. In this paper, we explore Causal Reasoning for Patient-Centric Explanations in Radiology Report Generation (CR3G), a prompt-driven framework applied to chest X-ray analysis that improves the understanding of AI-generated reports by focusing on cause-and-effect relationships and reasoning, and by generating patient-centric explanations. The aim is to enhance the quality of AI-driven diagnostics, making them more useful and trustworthy in clinical practice. CR3G showed better causal relationship capability and explanation capability for 2 out of 5 abnormalities.

💡 Research Summary

The paper introduces CR3G, a novel framework that integrates causal reasoning into automatic chest‑X‑ray report generation with the explicit goal of producing patient‑centric explanations. While current deep‑learning models excel at learning statistical correlations between image patterns and textual findings, they lack the ability to answer “why” a particular abnormality appears, which limits clinical trust and interpretability. CR3G addresses this gap by embedding a domain‑expert‑crafted causal graph into a multimodal encoder‑decoder architecture and by employing prompt‑driven causal inference to guide the generation process.

The system consists of three main components. First, a multimodal encoder combines a Vision Transformer (ViT) for extracting high‑level visual features from the X‑ray with a BERT‑style text encoder that tokenizes existing radiology reports. The visual embeddings are aligned with node embeddings of a causal graph that represents medical concepts (diseases, findings, risk factors) and their directed relationships (e.g., “pneumonia → alveolar infiltrates”).
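As a rough illustration of the alignment step, the sketch below stores a causal graph as a cause-to-effect adjacency map and ranks graph nodes by cosine similarity to an image feature vector. Only the edge "pneumonia → alveolar infiltrates" comes from the summary; the other edges, the two-dimensional embeddings, and the names `CAUSAL_EDGES`, `parents`, and `align` are hypothetical placeholders. The summary does not say which similarity measure CR3G uses, so cosine similarity is an assumption here.

```python
import math

# Toy causal graph: each key is a cause, each value lists its direct effects.
# Only "pneumonia -> alveolar infiltrates" is taken from the text; the other
# edges are illustrative assumptions, not the authors' curated graph.
CAUSAL_EDGES = {
    "smoking": ["emphysema", "pneumonia"],
    "pneumonia": ["alveolar infiltrates"],
    "heart failure": ["cardiomegaly", "pleural effusion"],
}


def parents(node, edges=CAUSAL_EDGES):
    """Return the direct causes of `node` in alphabetical order."""
    return sorted(cause for cause, effects in edges.items() if node in effects)


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def align(image_feature, node_embeddings):
    """Rank graph nodes by similarity to the image feature, best match first."""
    scores = {name: cosine(image_feature, emb) for name, emb in node_embeddings.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical 2-d embeddings; a real system would use ViT features.
    nodes = {"pneumonia": [0.9, 0.1], "cardiomegaly": [0.1, 0.9]}
    print(align([1.0, 0.0], nodes))
    print(parents("alveolar infiltrates"))
```

In a real pipeline the image feature would come from the ViT and the node embeddings would be learned jointly; the toy vectors only show the shape of the computation.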

Second, a causal prompting module automatically constructs natural‑language queries such as “What caused this finding?” by merging graph structure with patient‑specific metadata (age, smoking history, comorbidities). These prompts are fed into the encoder’s attention mechanism, forcing the model to weight features that are causally relevant. The prompting operates in two stages: (a) a causal inference stage that computes latent causal variables (z) by measuring the compatibility between image features and graph nodes, and (b) an explanation stage that translates the inferred z into patient‑tailored narrative fragments.
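The two prompting stages can be sketched as follows, under loud assumptions: compatibility is taken to be a dot product between the image feature and each node embedding, normalised with a softmax to give z, and the prompt template is invented for illustration. The function names `infer_z` and `build_prompt` and all numeric values are hypothetical; only the query "What caused this finding?" and the metadata fields come from the summary.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def infer_z(image_feature, node_embeddings):
    """Stage (a): score each graph node against the image feature.

    Assumption: compatibility is a dot product, turned into a
    distribution over nodes by a softmax.
    """
    names = list(node_embeddings)
    logits = [
        sum(a * b for a, b in zip(image_feature, node_embeddings[name]))
        for name in names
    ]
    return dict(zip(names, softmax(logits)))


def build_prompt(finding, candidate_causes, metadata):
    """Stage (b) input: merge graph candidates with patient metadata."""
    context = ", ".join(f"{key}: {value}" for key, value in metadata.items())
    causes = ", ".join(candidate_causes)
    return (
        f"Patient context ({context}). Finding: {finding}. "
        f"Candidate causes from the graph: {causes}. "
        "What caused this finding?"
    )


if __name__ == "__main__":
    # Hypothetical embeddings and metadata, for illustration only.
    z = infer_z([1.0, 0.0], {"pneumonia": [2.0, 0.0], "emphysema": [0.0, 2.0]})
    likely = max(z, key=z.get)
    print(build_prompt("alveolar infiltrates", [likely],
                       {"age": 63, "smoking history": "20 pack-years"}))
```

Keeping inference and prompt construction as separate functions mirrors the two-stage description: the first yields a distribution over candidate causes, the second turns the top candidates into text the generator can condition on.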

Third, a causal‑enhanced decoder augments the standard Transformer decoder with a dedicated causal attention layer. This layer injects the graph‑derived attention weights directly into the decoder’s self‑attention scores, allowing the language model to generate sentences that explicitly reference causal pathways (e.g., “The infiltrates are likely secondary to recent smoking‑related pneumonia”). Consequently, the final report not only lists observed abnormalities but also provides a mechanistic rationale and a personalized interpretation for the patient.
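One plausible reading of "injecting graph-derived weights into the attention scores" is an additive bias applied before the softmax, sketched below for a single attention head in plain Python. The additive form, the `strength` parameter, and the name `graph_biased_attention` are assumptions; the summary does not specify how CR3G combines the two signals. The usual autoregressive mask of a Transformer decoder is omitted for brevity.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def graph_biased_attention(queries, keys, values, graph_bias, strength=1.0):
    """Scaled dot-product attention with an additive graph-derived bias.

    graph_bias[i][j] is a hypothetical score saying how causally relevant
    position j is to position i; it is added to the usual q.k / sqrt(d)
    score before the softmax. Returns (outputs, attention_weights).
    """
    dim = len(keys[0])
    outputs, all_weights = [], []
    for i, query in enumerate(queries):
        scores = [
            sum(a * b for a, b in zip(query, key)) / math.sqrt(dim)
            + strength * graph_bias[i][j]
            for j, key in enumerate(keys)
        ]
        weights = softmax(scores)
        all_weights.append(weights)
        outputs.append([
            sum(weights[j] * values[j][c] for j in range(len(values)))
            for c in range(len(values[0]))
        ])
    return outputs, all_weights


if __name__ == "__main__":
    q = [[1.0, 0.0]]
    k = [[1.0, 0.0], [1.0, 0.0]]   # identical keys: unbiased weights are equal
    v = [[1.0, 0.0], [0.0, 1.0]]
    _, plain = graph_biased_attention(q, k, v, [[0.0, 0.0]])
    _, biased = graph_biased_attention(q, k, v, [[0.0, 2.0]])
    print(plain[0], biased[0])
```

With identical keys the unbiased weights are uniform, so any shift in the second run is attributable to the graph bias alone, which makes the effect of the extra term easy to inspect.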

The authors evaluate CR3G on the MIMIC‑CXR dataset, focusing on five common abnormalities: pneumonia, tuberculosis, pleural effusion, cardiomegaly, and emphysema. Standard metrics (AUROC for diagnosis, F1 for causal relation extraction, BLEU‑4/ROUGE‑L/METEOR for explanation quality) show that CR3G maintains diagnostic performance comparable to state‑of‑the‑art baselines while achieving substantial gains in causal accuracy and narrative fidelity. Notably, causal F1 improves from 0.66 to 0.78 for pneumonia and from 0.62 to 0.74 for pleural effusion (an absolute gain of 0.12, or 12 percentage points, in each case), and BLEU‑4 rises from 0.31 to 0.38, indicating more coherent and clinically relevant explanations.
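To make the reported numbers concrete, the sketch below computes F1 over sets of predicted and reference causal edges, then derives absolute and relative gains from the scores quoted above. Exact matching of (cause, effect) pairs is an assumption, since the summary does not describe the matching protocol; `edge_f1` and `gains` are hypothetical helper names.

```python
def edge_f1(predicted, gold):
    """F1 between predicted and reference (cause, effect) edge sets.

    Assumes exact-match scoring of edges; returns 0.0 when nothing overlaps.
    """
    predicted, gold = set(predicted), set(gold)
    true_positives = len(predicted & gold)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(predicted)
    recall = true_positives / len(gold)
    return 2 * precision * recall / (precision + recall)


def gains(before, after):
    """Return (absolute gain, relative gain in percent), both rounded."""
    absolute = after - before
    return round(absolute, 2), round(100 * absolute / before, 1)


if __name__ == "__main__":
    print(gains(0.66, 0.78))  # pneumonia causal F1        -> (0.12, 18.2)
    print(gains(0.62, 0.74))  # pleural effusion causal F1 -> (0.12, 19.4)
    print(gains(0.31, 0.38))  # BLEU-4                     -> (0.07, 22.6)
```

The absolute gain of 0.12 in causal F1 corresponds to a relative improvement of roughly 18 to 19 percent over the baseline scores.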

The paper acknowledges several limitations. The causal graph must be manually curated by experts, which is time‑consuming and may not capture rare or emerging pathologies. The current prompting templates are relatively static, limiting the diversity of patient‑specific language. Moreover, only two of the five evaluated abnormalities demonstrated statistically significant causal explanation improvements, suggesting that the graph coverage and inference mechanisms need further refinement.

Future work is outlined as follows: (1) automatic discovery or learning of causal graph structures from large multimodal datasets using techniques such as Bayesian network structure learning; (2) dynamic, data‑driven prompt generation that can adapt to a broader range of clinical contexts; (3) extension to additional imaging modalities (CT, MRI) and integration of structured electronic health record data to enrich the causal context; and (4) comprehensive user studies with radiologists to assess the impact of causal explanations on diagnostic confidence and workflow efficiency. By addressing these challenges, CR3G aims to evolve from a proof‑of‑concept into a trustworthy AI assistant that delivers both accurate diagnoses and transparent, patient‑centric reasoning.