NAS-LoRA: Empowering Parameter-Efficient Fine-Tuning for Visual Foundation Models with Searchable Adaptation


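Authors: Renqi Chen (College of Intelligent Robotics and Advanced Manufacturing, Fudan University), Haoyang Su (Australian Institute for Machine Learning, The University of Adelaide, Australia), Shixiang Tang (Shanghai Artificial Intelligence Laboratory)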

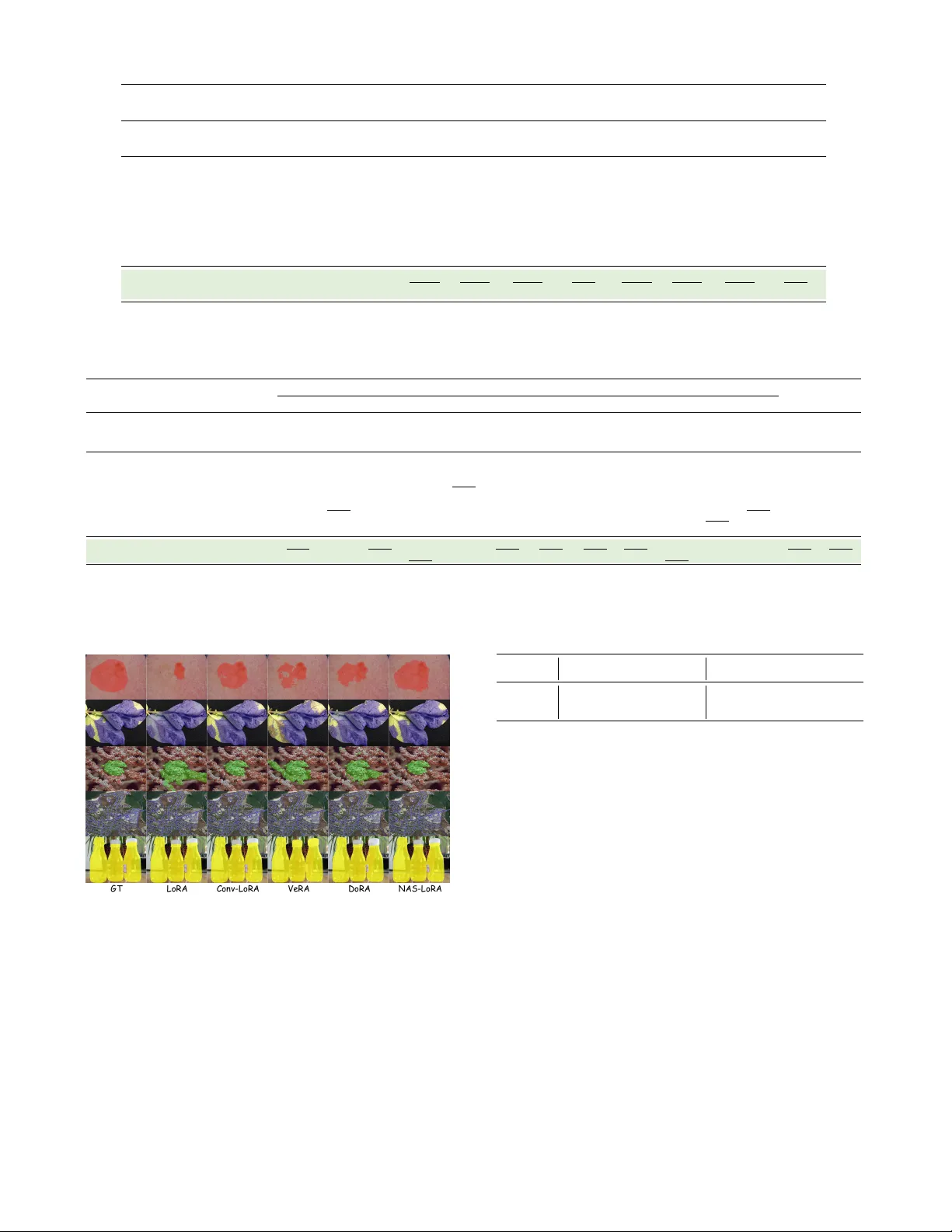

Renqi Chen¹, Haoyang Su², Shixiang Tang³*
¹ College of Intelligent Robotics and Advanced Manufacturing, Fudan University, Shanghai, China
² Australian Institute for Machine Learning, The University of Adelaide, Adelaide, Australia
³ Shanghai Artificial Intelligence Laboratory
rqchen23@m.fudan.edu.cn, haoyang.su@adelaide.edu.cn, tangshixiang@pjlab.org.cn

Abstract
The Segment Anything Model (SAM) has emerged as a powerful visual foundation model for image segmentation. However, adapting SAM to specific downstream tasks, such as medical and agricultural imaging, remains a significant challenge. To address this, Low-Rank Adaptation (LoRA) and its variants have been widely employed to enhance SAM's adaptation performance on diverse domains. Despite these advancements, a critical question arises: can we integrate inductive bias into the model? This is particularly relevant because the Transformer encoder in SAM inherently lacks spatial priors within image patches, potentially hindering the acquisition of high-level semantic information. In this paper, we propose NAS-LoRA, a new Parameter-Efficient Fine-Tuning (PEFT) method designed to bridge the semantic gap between pre-trained SAM and specialized domains. Specifically, NAS-LoRA incorporates a lightweight Neural Architecture Search (NAS) block between the encoder and decoder components of LoRA to dynamically optimize the prior knowledge integrated into weight updates. Furthermore, we propose a stage-wise optimization strategy to help the ViT encoder balance weight updates and architectural adjustments, facilitating the gradual learning of high-level semantic information.
Various experiments demonstrate that NAS-LoRA improves upon existing PEFT methods with a modest 24.14% increase in training cost and no increase in inference cost, highlighting the potential of NAS in enhancing PEFT for visual foundation models.

* Corresponding author.

[Figure 1: (a) LoRA vs. NAS-LoRA (frozen pre-trained weights, trainable LoRA encoder and decoder, and a NAS cell between them in NAS-LoRA); (b) comparison of NAS-LoRA and LoRA on Kvasir, CVC-ClinicDB, ISIC 2017, CAMO, Leaf, Road, and Trans10K-v2; (c) training cost (min/epoch), inference cost (iter/s), and parameter cost (M).]
Figure 1: NAS-LoRA adds a lightweight, trainable NAS cell between LoRA's encoder and decoder to inject optimized prior knowledge. This enhancement shows superiority across all segmentation tasks, with only a minimal increase in training cost and no additional inference overhead.

1 Introduction
Foundation models pre-trained on large-scale general datasets have demonstrated remarkable generalization capabilities across diverse tasks (Touvron et al. 2023; Liu et al. 2023; Chen et al. 2024b). Among visual foundation models, the Segment Anything Model (SAM) (Kirillov et al. 2023) stands out for its prompt-based design and training on over a billion masks, achieving strong zero-shot performance on natural images and common objects. However, SAM struggles with domain-specific tasks such as medical imaging and remote sensing (Chen et al. 2023a; Wu et al. 2023; Osco et al. 2023), where domain knowledge and visual inductive biases are critical (Wu et al. 2023; Chen et al. 2024a). Since fully fine-tuning foundation models for such tasks is often computationally intensive and prone to overfitting, Low-Rank Adaptation (LoRA) (Hu et al.
2022), a Parameter-Efficient Fine-Tuning (PEFT) method (Houlsby et al. 2019; Tu, Mai, and Chao 2023; Xin et al. 2024a; Wang et al. 2024), addresses these challenges by updating only a small subset of parameters. Despite these advancements, its capacity to encode domain knowledge and inductive biases remains underexplored, partly because the ViT encoder lacks inherent task-specific priors. Unlike convolutional architectures, ViTs process input globally without built-in locality or structure-aware mechanisms, making it harder to capture the fine-grained patterns and contextual cues essential for specialized domains such as medical or remote sensing imagery (Zhong et al. 2024).

To address the lack of local priors in SAM, convolutional LoRA (Conv-LoRA) (Zhong et al. 2024) introduces convolutions into the LoRA modules of all encoder layers. However, operating on Q, K, and V embeddings rather than raw pixels may distort spatial structure. Moreover, critical components such as pooling and skip connections (Ronneberger, Fischer, and Brox 2015; He et al. 2016; Chen et al. 2021; Nirthika et al. 2022) are absent in such adaptations. This raises a key question: should convolution be applied uniformly, or can we design a more adaptive way to inject inductive biases?

We argue that different downstream tasks often require tailored inductive biases. For instance, cancer image segmentation relies on capturing fine-grained textures and subtle intensity variations to delineate ambiguous boundaries (Chen et al. 2023b; Wang et al. 2022), while natural image segmentation benefits more from strong edge detection and global spatial context (Kirillov et al. 2023; Zhang et al. 2023). Similarly, remote sensing tasks emphasize geometric regularity and scale invariance to detect structures like roads or buildings under varying resolutions.
These differences highlight the need for flexible adaptation strategies that can selectively inject task-relevant priors, rather than applying a one-size-fits-all design across domains.

Inspired by Neural Architecture Search (NAS) (Liu, Simonyan, and Yang 2018; Xu et al. 2019; Salehin et al. 2024), which can dynamically optimize network structures, we propose NAS-LoRA, an adaptive PEFT framework that generalizes SAM across diverse downstream tasks. As shown in Fig. 1, NAS-LoRA integrates a lightweight NAS cell between the encoder and decoder of LoRA, enabling dynamic selection of the most suitable feature transformation pathways for each task. To avoid sub-optimal results caused by complex search spaces and parameter-architecture interactions, we introduce a stage-wise strategy that decouples the optimization process, ensuring gradual semantic learning in the ViT encoder. Additionally, unlike traditional NAS methods that require a decoding step for final structure selection (Liang et al. 2021; Wang and Zhu 2024; Salmani Pour Avval et al. 2025), NAS-LoRA merges seamlessly into pre-trained weights without re-training or merging loss, maintaining its efficiency. We evaluate NAS-LoRA on nine segmentation benchmarks from different domains, showing its superiority over existing PEFT methods. Moreover, NAS-LoRA outperforms LoRA with only a 24.14% increase in training cost and no increase in inference cost. Our key contributions are summarized as follows:

• We introduce NAS-LoRA, a novel PEFT framework that dynamically selects optimal pathways for inductive bias injection during weight updates, effectively adapting SAM to specialized domains.
• We propose a stage-wise optimization strategy that balances weight adaptation and architectural adjustments, improving high-level semantic learning in SAM's ViT encoder.
• NAS-LoRA eliminates the additional decoding and re-training steps required by traditional NAS approaches, ensuring a parameter-efficient adaptation process.

2 Related work
Parameter-Efficient Fine-Tuning. To reduce computational costs and mitigate overfitting, Parameter-Efficient Fine-Tuning (PEFT) methods adapt pre-trained models to downstream tasks by fine-tuning only a small subset of parameters while keeping the original model weights frozen (Han et al. 2024; Xin et al. 2024b). PEFT techniques can be broadly categorized into adapter-based methods, prompt-based tuning, and Low-Rank Adaptation (LoRA). Adapter-based methods (Houlsby et al. 2019; Yin et al. 2023; Yang et al. 2023; Yin et al. 2025) introduce additional trainable modules between the layers of a pre-trained model, while prompt-based tuning (Lester, Al-Rfou, and Constant 2021; Razdaibiedina et al. 2023; Wang et al. 2024) appends learnable tokens to the input and fine-tunes only these tokens. Despite their effectiveness, both approaches face two main limitations: (1) sensitivity to the initialization of learnable parameters and (2) increased inference latency due to added modules or input modifications.

LoRA (Hu et al. 2022) overcomes these issues by introducing low-rank matrices to approximate weight updates, which are merged into the pre-trained model before inference, avoiding additional computational overhead. LoRA variants (Kopiczko, Blankevoort, and Asano 2023; Liu et al. 2024; Zhong et al. 2024) have been proposed to analyze its mechanism and enhance its effectiveness. For instance, DoRA (Liu et al. 2024) leverages weight decomposition analysis to reveal that the gap between LoRA and full fine-tuning is due not solely to the number of trainable parameters but to the patterns of weight updates. Instead of increasing parameter efficiency through weight decomposition, we focus on optimizing the arrangement of trainable parameters within LoRA.
This design allows LoRA to incorporate not only domain-specific knowledge but also structured inductive biases, improving its adaptation to downstream tasks.

Fine-tuning SAM. Segment Anything (SAM) (Kirillov et al. 2023), a foundation model trained on the SA-1B dataset with over one billion masks using a model-in-the-loop approach, has demonstrated strong generalization and zero-shot segmentation capabilities. Building on this zero-shot performance, existing studies have integrated LoRA or inserted adapters to enhance SAM's segmentation capabilities for specific visual tasks (Chen et al. 2023a; Wu et al. 2023; Zhang and Liu 2023; Zhong et al. 2024). Among these, Conv-LoRA (Zhong et al. 2024) represents a notable attempt to incorporate inductive biases by injecting convolutional operations between the encoder and decoder components of LoRA. While this modification improved performance compared to standard LoRA fine-tuning, it applies convolutions uniformly across all SAM encoder layers, disregarding the structural differences in patch embeddings and their varying sensitivity to local priors. To address this limitation, we propose NAS-LoRA, which dynamically selects the optimal feature mapping pathway for prior incorporation. By adaptively injecting inductive biases, NAS-LoRA achieves a more efficient and effective fine-tuning strategy.

Neural Architecture Search. Neural Architecture Search (NAS) aims to discover optimal neural architectures while minimizing computational costs and reliance on expert knowledge (Ren et al. 2021). To enhance efficiency, gradient-based NAS methods (Liu, Simonyan, and Yang 2018; Xu et al. 2019; Chu, Zhang, and Xu 2021; Ye et al. 2022; He et al.
2024) have been introduced, leveraging continuous relaxation to reallocate path weights across candidate operations within layers or cells. Additionally, efforts to streamline NAS (Xu et al. 2019; Xue and Qin 2022; Gao et al. 2022) focus on reducing the computational burden associated with the search process.

[Figure 2: (a) SAM (image encoder, prompt encoder, mask decoder; frozen and trainable parts marked); (b) NAS-LoRA stage-wise optimization: the selected channels Px pass through candidate operations (e.g., dilated convolution, separable convolution, no connection) while (1 − P)x bypasses the NAS cell; Stage 1 trains the weights w with the architecture parameters α frozen, and Stage 2 trains α with w frozen.]
Figure 2: The proposed NAS-LoRA framework for fine-tuning SAM. The upper part illustrates the design of each SAM component: NAS-LoRA is applied to the self-attention layers of the image encoder, the prompt encoder is frozen to enable automated processing, and the mask decoder is fully fine-tuned without LoRA, as it is a lightweight module. The lower part depicts the end-to-end stage-wise optimization of NAS-LoRA, where Stage 1 and Stage 2 iteratively update model weights and architecture parameters using two independent optimizers. After optimization, the learned architecture parameters and weights are directly merged into the pre-trained model for downstream tasks, following the standard LoRA merging process.

NAS vs. NAS-LoRA. However, integrating conventional NAS methods into the fine-tuning of foundation models poses two critical challenges. First, they typically require a decoding step, such as the Viterbi algorithm, to finalize the selected architecture, which introduces biases and necessitates additional re-training to align the decoded model with the target task. Second, NAS is computationally intensive during training, making it impractical for large-scale models.
To overcome these challenges, we propose NAS-LoRA, which eliminates both decoding and re-training by directly optimizing weight updates, allowing seamless integration with pre-trained models. Furthermore, by exploiting the low rank of LoRA and channel selection, NAS-LoRA introduces a lightweight search framework that efficiently optimizes the allocation of trainable parameters within LoRA. By applying NAS principles appropriately to large-scale models, NAS-LoRA improves adaptation without incurring excessive computational costs, making it the first scalable and practical NAS-based solution for fine-tuning foundation models.

3 Methodology
3.1 Preliminary: LoRA
As shown in the top section of Fig. 1(a), LoRA (Hu et al. 2022) fine-tunes a model by freezing the pre-trained weights and introducing a low-rank adaptation mechanism. Specifically, LoRA injects a pair of trainable low-rank matrices (an encoder and a decoder) into each transformer layer, enabling efficient weight updates with minimal additional parameters. Formally, given a pre-trained weight matrix $W_0 \in \mathbb{R}^{b \times a}$, LoRA introduces an encoder matrix $W_e \in \mathbb{R}^{r \times a}$ and a decoder matrix $W_d \in \mathbb{R}^{b \times r}$, where $r \ll \min(a, b)$. The forward pass with LoRA is defined as:

$$h = W_0 x + \Delta W x = W_0 x + W_d W_e x \tag{1}$$

In contrast, NAS-LoRA, shown in the bottom section of Fig. 1(a), extends this framework by incorporating a trainable NAS cell between the encoder and decoder. Unlike Conv-LoRA (Zhong et al. 2024), which injects inductive biases by applying convolutions indiscriminately across all transformer layers, NAS-LoRA introduces a dynamic selection mechanism. This mechanism adaptively determines the optimal feature mapping pathway between LoRA's encoder and decoder, effectively bridging the semantic gap between pre-trained models and downstream tasks.
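The plain LoRA path of Eq. (1), which NAS-LoRA extends, can be sketched in a few lines of dependency-free Python. This is a minimal illustration under stated assumptions, not the authors' code: matrices are nested lists, $x$ is a single vector rather than a batch of feature maps, and the helper names (`matvec`, `matmul`, `lora_forward`, `lora_merge`) are hypothetical. The last function checks the property used later for inference in Eq. (5): folding $W_d W_e$ into $W_0$ gives the same output as the two-branch forward pass.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Matrix-vector product m @ v."""
    return [sum(row[k] * v[k] for k in range(len(v))) for row in m]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Matrix-matrix product a @ b."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def lora_forward(W0: Matrix, We: Matrix, Wd: Matrix, x: Vector) -> Vector:
    """Eq. (1): h = W0 x + Wd (We x), with W0 frozen and We, Wd trainable."""
    frozen = matvec(W0, x)
    low_rank = matvec(Wd, matvec(We, x))  # a -> r (encoder), then r -> b (decoder)
    return [f + u for f, u in zip(frozen, low_rank)]


def lora_merge(W0: Matrix, We: Matrix, Wd: Matrix) -> Matrix:
    """Eq. (5): W_merged = W0 + Wd We, a single b x a matrix for inference."""
    delta = matmul(Wd, We)
    return [[w + d for w, d in zip(rw, rd)] for rw, rd in zip(W0, delta)]


if __name__ == "__main__":
    W0 = [[1.0, 0.0, 2.0], [0.0, 1.0, -1.0]]  # b = 2, a = 3
    We = [[0.5, -1.0, 0.0]]                    # r = 1
    Wd = [[2.0], [1.0]]
    x = [1.0, 2.0, 3.0]
    print(lora_forward(W0, We, Wd, x))          # [4.0, -2.5]
    print(matvec(lora_merge(W0, We, Wd), x))    # [4.0, -2.5]
```

Because the LoRA update is a product of two matrices, merging is exact and costs nothing at inference time; Eqs. (6) and (7) below extend the same idea by placing the weighted candidate operations between $W'_d$ and $W'_e$.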
By optimizing inductive bias injection, NAS-LoRA enables more adaptive and efficient fine-tuning, ensuring that local priors are incorporated in a task-specific manner.

3.2 NAS-LoRA
NAS-LoRA introduces a NAS cell that dynamically selects the optimal local prior. As illustrated in Fig. 2(b), the NAS cell evolves across different training stages (discussed in Sec. 3.3) and serves as a learnable module that adaptively determines the optimal feature mapping pathway. This pathway is constructed from a set of candidate operations $\mathcal{O}$, with the most suitable operation selected based on architecture parameters $\alpha$. In this work, $\mathcal{O}$ comprises widely used operations in modern CNNs, including:
• 3 × 3 separable convolution
• 5 × 5 separable convolution
• 3 × 3 convolution with dilation rate 2
• 5 × 5 convolution with dilation rate 2
• 3 × 3 average pooling
• 3 × 3 max pooling
• skip connection
• no connection (zero)

Each operation serves a distinct function: convolution captures local features and enables feature transformation, pooling reduces spatial dimensions and assists in the acquisition of invariant features, skip connection enhances gradient flow and facilitates information fusion, and no connection isolates information flow. The forward pass of NAS-LoRA is formulated as:

$$h = W_0 x + W_d \left( \sum_{i=1}^{|\mathcal{O}|} \frac{\exp\{\alpha_i\}}{\sum_{j=1}^{|\mathcal{O}|} \exp\{\alpha_j\}} \, O_i(W_e x) \right), \tag{2}$$

where $W_0 \in \mathbb{R}^{C_{out} \times C_{in}}$, $W_e \in \mathbb{R}^{r \times C_{in}}$, $W_d \in \mathbb{R}^{C_{out} \times r}$, and $x \in \mathbb{R}^{B \times C_{in} \times H \times W}$. Here, $C_{in}$ and $C_{out}$ denote the numbers of input and output channels, $r$ is the rank of LoRA, $B$ is the batch size, and $H$ and $W$ are the spatial dimensions of the input. $O_i$ represents the $i$-th operation in $\mathcal{O}$, and the architecture parameters $\alpha$ are optimized by the continuous relaxation algorithm. This formulation ensures that the most effective prior is incorporated into the parameter updates, enabling a parameter-efficient NAS search.

NAS with PEFT.
Although the NAS cell can autonomously adjust its structure and parameters to enhance representation learning, its built-in search process adds computational overhead, undermining the efficiency goals of PEFT. Therefore, keeping NAS-LoRA lightweight and efficient emerges as a critical challenge. To address this, we take inspiration from Partial Connections (Xu et al. 2019), which show that training with a subset of feature channels maintains performance without degradation. By integrating this Partial Connection mechanism into NAS-LoRA, we introduce NAS-PC-LoRA, where Eq. 2 is reformulated as:

$$h = W_0 x + W_d \left[ (1 - P) \odot (W_e x) + \sum_{i=1}^{|\mathcal{O}|} \frac{\exp\{\alpha_i\}}{\sum_{j=1}^{|\mathcal{O}|} \exp\{\alpha_j\}} \, O_i(P \odot W_e x) \right], \tag{3}$$

where $P$ is a binary mask indicating the selected channels, and $\odot$ denotes the Hadamard product. Notably, when $P$ is a zero matrix, NAS-PC-LoRA degrades to standard LoRA.

3.3 NAS-LoRA in Training
As illustrated in Fig. 2(a), we adopt SAM as the backbone for NAS-LoRA, which consists of three key components: an image encoder, a mask decoder, and a prompt encoder. For the image encoder, we integrate NAS-LoRA along with a stage-wise optimization strategy to effectively adapt the model to downstream tasks (detailed in Alg. 1). The mask decoder is fully fine-tuned without LoRA. To enable end-to-end model optimization and application, we freeze the prompt encoder while keeping the prompt tokens constant. Notably, the original mask decoder is modified from binary mask prediction to multi-class mask prediction by incorporating a classification branch, which predicts classification scores.¹

¹ Following the design in Conv-LoRA (Zhong et al. 2024) and Mask2Former (Cheng et al. 2022), the classification branch produces K + 1 class predictions (with an additional category for 'no object'), assuming the original task has K categories. For semantic inference, pixel-wise predictions are obtained by performing a matrix multiplication between the masks and classification predictions, followed by an additive operation.

Loss Functions. Our final loss function combines the segmentation loss $\mathcal{L}_{seg}$ and the classification loss $\mathcal{L}_{cls}$ as follows:

$$\mathcal{L} = \lambda_{seg} \mathcal{L}_{seg} + \lambda_{cls} \mathcal{L}_{cls}, \tag{4}$$

where $\lambda_{seg}$ and $\lambda_{cls}$ are weighting coefficients that balance the two objectives. The segmentation loss $\mathcal{L}_{seg}$ (Ma et al. 2024) includes a binary cross-entropy (BCE) loss $\mathcal{L}_{BCE}$ and a Dice loss $\mathcal{L}_{Dice}$. The classification loss $\mathcal{L}_{cls}$ is defined as the cross-entropy over all K + 1 classes. Further details about the loss functions are given in Appx. A.

Optimization Strategy. As shown in Fig. 2(b), the stage-wise optimization strategy follows a two-stage iterative process in which architecture parameters and model weights are updated separately using two independent optimizers. The procedure is shown in Alg. 1.

Algorithm 1: NAS-LoRA Optimization
1: Input: weight parameters w, architecture parameters α, optimization steps T and T_B
2: Output: optimized w′, α′
3: for t = 1 to T do
4:     Fix α and update w by ∇_w L(w, α)    → Stage 1
5:     if t > T_B then
6:         Fix w and update α by ∇_α L(w, α)    → Stage 2

3.4 NAS-LoRA in Inference
In LoRA, the fine-tuned weights are merged with the frozen pre-trained weights as follows:

$$h = W_0 x + W'_d W'_e x = \underbrace{(W_0 + W'_d W'_e)}_{W_{merged}} x. \tag{5}$$

Similarly, in NAS-LoRA, the merging process remains straightforward. The weighted combination of candidate operations and architecture parameters can be directly integrated into the pre-trained weights, which can be formulated based on Eq. 2 as:

$$h = \underbrace{\left( W_0 + W'_d \left( \sum_{i=1}^{|\mathcal{O}|} \frac{\exp\{\alpha'_i\}}{\sum_{j=1}^{|\mathcal{O}|} \exp\{\alpha'_j\}} \, O_i(W'_e) \right) \right)}_{W_{merged}} x. \tag{6}$$

For NAS-PC-LoRA, the merged weight is formulated as:

$$W_{merged} = W_0 + W'_d \left[ (1 - P) \odot W'_e + \sum_{i=1}^{|\mathcal{O}|} \frac{\exp\{\alpha'_i\}}{\sum_{j=1}^{|\mathcal{O}|} \exp\{\alpha'_j\}} \, O_i(P \odot W'_e) \right]. \tag{7}$$

A key advantage of NAS-LoRA is that, unlike traditional NAS, it does not need an additional decoding step to finalize a single architecture path (a step that traditional NAS uses to lower deployment costs and speed up inference), thereby avoiding path decoding bias (particularly when the path weights are uniform). Furthermore, NAS-LoRA eliminates the re-training step that traditional NAS requires to correct the performance degradation caused by decoding bias, further easing the training burden while preserving adaptability.

Column groups of Table 1: Medical Images (Kvasir, CVC-ClinicDB, ISIC 2017), Natural Images (CAMO, SBU), Agriculture (Leaf), Remote Sensing (Road).

| Method | Params (M) / Ratio (%) | Kvasir Sα ↑ | Kvasir Eφ ↑ | CVC-ClinicDB Sα ↑ | CVC-ClinicDB Eφ ↑ | ISIC 2017 Jac ↑ | ISIC 2017 Dice ↑ | CAMO Sα ↑ | CAMO Eφ ↑ | CAMO F^w_β ↑ | SBU BER ↓ | Leaf IoU ↑ | Leaf Dice ↑ | Road IoU ↑ | Road Dice ↑ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Non-PEFT methods |
| Domain-Specific | * / 100% | 90.9 | 94.4 | 92.6* | 95.5* | 80.1* | 87.5* | 80.8 | 85.8 | 73.1 | 3.56 | 62.3 | 74.1 | 59.1 | 73.0 |
| SAM (scratch) | 641.09 / 100% | 78.5 | 82.4 | 85.9 | 91.6 | 73.8 | 82.5 | 61.9 | 67.0 | 40.5 | 5.53 | 52.1 | 65.5 | 55.6 | 71.1 |
| PEFT methods |
| Decoder-only | 3.51 / 0.55% | 86.5 | 89.5 | 85.5 | 89.9 | 69.7 | 79.5 | 78.5 | 83.1 | 69.8 | 14.58 | 50.8 | 63.8 | 48.6 | 65.1 |
| SAM-Adapter | 3.98 / 0.62% | 89.6 | 92.5 | 89.6 | 92.4 | 76.1 | 84.6 | 85.6 | 89.6 | 79.8 | 3.14 | 71.4 | 82.1 | 60.6 | 75.2 |
| Mona | 17.08 / 2.66% | 91.7 | 94.4 | 91.2 | 93.9 | 77.1 | 85.3 | 87.6 | 91.6 | 82.4 | 2.72 | 74.0 | 84.1 | 61.9 | 76.1 |
| BitFit | 3.96 / 0.62% | 90.8 | 93.8 | 89.0 | 91.6 | 76.4 | 84.7 | 86.8 | 90.7 | 81.5 | 3.16 | 71.4 | 81.7 | 60.6 | 75.2 |
| VPT | 4.00 / 0.62% | 91.5 | 94.3 | 91.0 | 93.7 | 76.9 | 85.1 | 87.4 | 91.4 | 82.1 | 2.70 | 73.6 | 83.8 | 60.2 | 74.9 |
| LoRA | 4.00 / 0.62% | 91.2 | 93.8 | 90.7 | 92.5 | 76.6 | 84.9 | 88.0 | 91.9 | 82.8 | 2.74 | 73.7 | 83.6 | 62.2 | 76.5 |
| Conv-LoRA | 4.02 / 0.63% | 92.0 | 94.7 | 91.3 | 94.0 | 77.6 | 85.7 | 88.3 | 92.4 | 84.0 | 2.54 | 74.5 | 84.3 | 62.6 | 76.8 |
| DoRA | 4.04 / 0.63% | 91.8 | 94.6 | 91.4 | 94.0 | 77.2 | 85.3 | 87.3 | 91.0 | 82.2 | 3.05 | 73.8 | 83.8 | 61.3 | 75.7 |
| VeRA | 0.32 / 0.05% | 90.6 | 93.4 | 90.2 | 92.9 | 76.5 | 84.8 | 87.1 | 89.9 | 82.0 | 3.08 | 72.9 | 82.7 | 62.0 | 76.3 |
| NAS-LoRA | 4.016 / 0.63% | 92.3 | 94.8 | 91.7 | 94.3 | 78.5 | 86.4 | 88.2 | 92.2 | 83.7 | 2.42* | 75.1 | 84.8 | 62.8 | 77.0 |
| NAS-PC-LoRA | 4.009 / 0.62% | 92.4* | 95.0* | 91.9 | 94.5 | 78.2 | 86.2 | 88.4* | 92.5* | 84.2* | 2.52 | 75.4* | 84.9* | 63.3* | 77.4* |

Table 1: Results of binary-class semantic segmentation. "Domain-Specific" refers to methods specifically designed for the target tasks: PraNet (Fan et al. 2020b) for Kvasir and CVC-ClinicDB, Transfuse (Zhang, Liu, and Hu 2021) for ISIC 2017, SINet-v2 (Fan et al. 2021) for CAMO, FDRNet (Zhu et al. 2021) for SBU, Deeplabv3 (Chen et al. 2017) for Leaf, and LinkNet34MTL (Batra et al. 2019) for Road. "Params (M) / Ratio (%)" represents the number of trainable parameters and their proportion relative to total parameters. The best and second-best results among PEFT methods are highlighted in bold and underlined, respectively, while "*" indicates the best performance among both non-PEFT and PEFT methods. Compared to existing PEFT approaches, NAS-LoRA/NAS-PC-LoRA achieve superior performance with minimal parameter overhead.

4 Experiment
4.1 Experimental Setup
Implementation Details.
We apply NAS-LoRA to the Q, K, and V matrices in the self-attention layers of the image encoder, following the same approach as LoRA. Experiments are conducted on an Nvidia V100 GPU with a batch size of 4. For the weight parameters w, we use the Adam optimizer with an initial learning rate of 1 × 10⁻⁴ and a weight decay of 1 × 10⁻⁴. For the architecture parameters α, the Adam optimizer is used with an initial learning rate of 1 × 10⁻³ and a weight decay of 1 × 10⁻³. The initial values of α before softmax are sampled from a standard Gaussian scaled by 0.001. We set the optimization steps to $T = 40$ and $T_B = 10$. Random horizontal flipping is employed as a data augmentation technique during training. The weight for the segmentation loss, $\lambda_{seg}$, is set to 1, while the weight for the classification loss, $\lambda_{cls}$, is set to 2. The default rank of NAS-LoRA is 3, and the selected feature channel ratio is set to 2/3. To ensure result stability, all experiments are conducted three times, and mean values are reported.

Baselines. We compare NAS-LoRA with the following methods:
1) Fine-tuning only SAM's mask decoder.
2) SAM-Adapter (Chen et al. 2023a), which incorporates domain-specific information into SAM using adapters.
3) BitFit (Zaken, Ravfogel, and Goldberg 2021), which fine-tunes only the bias terms of the model.
4) VPT (Jia et al. 2022), which inserts learnable tokens into the hidden states of each transformer layer.
5) LoRA (Hu et al. 2022).
6) Conv-LoRA (Zhong et al. 2024), which introduces convolutions managed by a Mixture-of-Experts (MoE) into LoRA.
7) DoRA (Liu et al. 2024), which decomposes the pre-trained weights into magnitude and direction for fine-tuning.
8) VeRA (Kopiczko, Blankevoort, and Asano 2023), which shares a single pair of low-rank matrices across all layers and learns small scaling vectors instead of per-layer LoRA matrices.
9) Mona (Yin et al. 2025), which introduces multiple vision-friendly filters into the adapter.
The experimental results of these baselines are either cited from the original papers or re-implemented.

Datasets. Our experiments encompass semantic segmentation datasets from multiple domains (Zhong et al. 2024), including natural images, medical images, agriculture, and remote sensing. In the medical domain, we study polyp segmentation using the CVC-ClinicDB (Bernal et al. 2015) and Kvasir (Jha et al. 2020) datasets, and skin lesion segmentation with ISIC 2017 (Codella et al. 2018). For natural images, we investigate camouflaged object segmentation on COD10K (Fan et al. 2020a), CAMO (Le et al. 2019), and CHAMELEON (Skurowski et al. 2018), and shadow detection on SBU (Vicente et al. 2016). In agriculture, we evaluate on the Leaf Disease Segmentation dataset (Rath 2023), and for remote sensing, we use the Massachusetts Roads dataset (Mnih 2013). Additionally, we explore multi-class segmentation on the transparent object datasets Trans10K-v1 (3 classes) (Xie et al. 2020) and Trans10K-v2 (12 classes) (Xie et al. 2021). Further dataset details are provided in Appx. B.

4.2 Main Results
Binary-Class Semantic Segmentation. Table 1 presents the results of binary-class semantic segmentation across all datasets. NAS-LoRA and NAS-PC-LoRA outperform the baselines on most datasets, highlighting the effectiveness of optimizing priors with NAS techniques and the importance of inductive bias in visual tasks. In terms of parameter efficiency, our methods achieve superior performance with minimal parameter overhead. While NAS-LoRA falls slightly behind domain-specific methods on certain tasks, its strong generalization ability allows it to be easily adapted to various downstream tasks without requiring domain-specific information. Additionally, NAS-PC-LoRA outperforms NAS-LoRA on most datasets, indicating the effectiveness of the partial connection mechanism in the PEFT setting. A possible explanation is that partial channel sampling reduces bias in operation selection and regularizes the preference for weight-free operations (Xu et al. 2019), which improves the stability and performance of NAS.

Multi-Class Semantic Segmentation. Table 2 and Table 3 present the results of multi-class semantic segmentation on Trans10K-v1 and Trans10K-v2. On Trans10K-v1, our proposed method outperforms existing non-PEFT and PEFT approaches on both the Easy and Hard subsets. On Trans10K-v2, NAS-LoRA/NAS-PC-LoRA achieve the highest category IoU in nine categories among PEFT methods and attain the highest overall accuracy compared to all other methods. Notably, existing PEFT approaches underperform non-PEFT methods in mIoU, as SAM's image encoder struggles to extract the high-level semantic information crucial for classification tasks (Zhong et al. 2024). Our method further narrows this gap by dynamically adjusting adaptation configurations, demonstrating its ability to better retain and leverage high-level semantic information.

| Method | Params (M) / Ratio (%) | Easy Acc ↑ | Easy mIoU ↑ | Easy MAE ↓ | Easy MBER ↓ | Hard Acc ↑ | Hard mIoU ↑ | Hard MAE ↓ | Hard MBER ↓ |
|---|---|---|---|---|---|---|---|---|---|
| Non-PEFT methods |
| Translab (Xie et al. 2020) | 42.19 / 100% | 95.77 | 92.23 | 0.036 | 3.12 | 83.04 | 72.10 | 0.166 | 13.30 |
| PEFT methods |
| Decoder-only | 3.51 / 0.55% | 94.68 | 88.54 | 0.050 | 4.24 | 83.53 | 68.30 | 0.186 | 14.37 |
| VPT | 4.00 / 0.62% | 98.31 | 95.73 | 0.017 | 1.52 | 90.42 | 83.38 | 0.083 | 7.21 |
| LoRA | 4.00 / 0.62% | 98.44 | 96.26 | 0.016 | 1.35 | 91.94 | 83.95 | 0.083 | 6.35 |
| Conv-LoRA | 4.02 / 0.63% | 98.63 | 96.45 | 0.015 | 1.27 | 93.05 | 84.37 | 0.075 | 6.25 |
| DoRA | 4.04 / 0.63% | 98.52 | 96.31 | 0.016 | 1.32 | 92.21 | 84.13 | 0.078 | 6.32 |
| VeRA | 0.32 / 0.05% | 98.14 | 95.67 | 0.019 | 1.58 | 90.23 | 83.12 | 0.084 | 7.46 |
| NAS-LoRA | 4.016 / 0.63% | 98.74 | 96.56 | 0.014 | 1.22 | 93.82 | 84.64 | 0.072 | 6.19 |
| NAS-PC-LoRA | 4.009 / 0.62% | 98.78 | 96.60 | 0.013 | 1.19 | 94.00 | 84.78 | 0.070 | 6.16 |

Table 2: Results of multi-class semantic segmentation on the Trans10K-v1 dataset (three-class segmentation). The best and second-best results are highlighted in bold and underlined, respectively. Compared to existing non-PEFT and PEFT methods, NAS-LoRA/NAS-PC-LoRA achieve superior segmentation performance with minimal parameter overhead.

| Method | Params (M) / Ratio (%) | bg IoU ↑ | shelf IoU ↑ | jar IoU ↑ | freezer IoU ↑ | window IoU ↑ | door IoU ↑ | eyeglass IoU ↑ | cup IoU ↑ | wall IoU ↑ | bowl IoU ↑ | bottle IoU ↑ | box IoU ↑ | Acc ↑ | mIoU ↑ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Non-PEFT methods |
| Translab (Xie et al. 2021) | 42.19 / 100% | 93.90 | 54.36* | 64.48 | 65.14* | 54.58 | 57.72 | 79.85 | 81.61 | 72.82 | 69.63 | 77.50 | 56.43 | 92.67 | 69.00 |
| Trans2Seg (Xie et al. 2021) | 56.20 / 100% | 95.35 | 53.43 | 67.82* | 64.20 | 59.64* | 60.56 | 88.52* | 86.67* | 75.99 | 73.98* | 82.43* | 57.17* | 94.14 | 72.15* |
| PEFT methods |
| Decoder-only | 3.51 / 0.55% | 93.66 | 32.75 | 39.96 | 35.87 | 50.70 | 45.89 | 57.38 | 73.16 | 69.36 | 54.23 | 56.58 | 33.77 | 90.66 | 49.97 |
| VPT | 4.00 / 0.62% | 97.41 | 29.76 | 52.82 | 62.09 | 55.54 | 63.61 | 81.12 | 83.40 | 79.61 | 65.29 | 72.92 | 44.77 | 94.42 | 62.81 |
| LoRA | 4.00 / 0.62% | 97.50 | 42.17 | 57.82 | 64.35 | 53.44 | 64.08 | 87.28 | 85.28 | 80.43 | 63.67 | 77.97 | 49.56 | 94.80 | 66.01 |
| Conv-LoRA | 4.02 / 0.63% | 97.66 | 50.51 | 58.44 | 51.70 | 55.69 | 65.22 | 85.23 | 84.84 | 80.97 | 72.84 | 79.83 | 52.73 | 95.07 | 67.09 |
| DoRA | 4.04 / 0.63% | 97.58 | 45.29 | 58.12 | 60.45 | 54.23 | 64.54 | 86.12 | 85.12 | 80.23 | 64.67 | 78.97 | 51.56 | 94.97 | 66.67 |
| VeRA | 0.32 / 0.05% | 97.33 | 30.12 | 53.21 | 61.08 | 54.53 | 63.47 | 82.36 | 83.95 | 80.10 | 64.43 | 75.84 | 46.62 | 94.63 | 64.46 |
| NAS-LoRA | 4.016 / 0.63% | 97.78 | 49.90 | 59.24 | 65.06 | 54.83 | 65.77 | 87.86 | 85.71 | 81.04 | 68.41 | 78.59 | 52.82 | 95.28 | 67.86 |
| NAS-PC-LoRA | 4.009 / 0.62% | 97.80* | 50.71 | 59.42 | 64.88 | 55.01 | 65.98* | 88.23 | 85.91 | 81.35* | 69.45 | 78.78 | 52.08 | 95.31* | 67.94 |

Table 3: Results of multi-class semantic segmentation on the Trans10K-v2 dataset (twelve-class segmentation). "*" denotes the best overall results across both non-PEFT and PEFT methods. Compared to existing approaches, NAS-LoRA/NAS-PC-LoRA achieve superior segmentation performance with minimal parameter overhead.

Visualization Analysis. Fig. 3 presents comparisons of various representative methods across the ISIC 2017, Leaf, CAMO, Road, and Transparent Object datasets. The results demonstrate that NAS-LoRA consistently produces more precise segmentation results.

[Figure 3 columns: GT, LoRA, Conv-LoRA, NAS-LoRA, VeRA, DoRA]
Figure 3: Visual comparisons on sample images from the ISIC 2017 (1st row), Leaf (2nd row), CAMO (3rd row), Road (4th row), and Transparent Object (5th row) datasets.

[Figure 4 columns: Image, GT, LoRA, NAS-LoRA, Conv-LoRA, DoRA]
Figure 4: Heatmap visualization by Grad-CAM (Selvaraju et al. 2017) on Leaf. Compared to previous methods, NAS-LoRA captures more fine-grained details.

| Model | Attention Distance ↓ (Layer 10) | Attention Distance ↓ (Layer 15) | Attention Distance ↓ (Layer 20) | Log Amplitude ↑ (f = 0.4π) | Log Amplitude ↑ (f = 0.6π) | Log Amplitude ↑ (f = 0.8π) |
|---|---|---|---|---|---|---|
| Randomly initialized ViT | 114 | 117 | 115 | -5.0 | -5.2 | -5.4 |
| SAM ViT | 76 | 105 | 93 | -4.2 | -4.4 | -4.5 |
| NAS-LoRA | 65 | 98 | 82 | -4.0 | -4.3 | -4.4 |

Table 4: Evaluation of inductive biases within features.

Enhanced Inductive Bias. We use mean attention distance (Raghu et al. 2021) and relative log amplitude (Park and Kim 2022) to evaluate the inductive biases of the model on 100 randomly sampled segmentation images.
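The mean attention distance reported in Table 4 can be illustrated with a short sketch. As we read the metric of Raghu et al. (2021), it is the attention-weighted spatial distance between each query patch and all key patches, averaged over queries, so smaller values indicate more local attention. The code below is a minimal single-head sketch, not the evaluation code behind Table 4: it assumes a square patch grid indexed row-major and distances measured in pixels (patch offset times patch size); the function name is hypothetical, and how the paper averages over heads, layers, and the 100 sampled images is not specified here.

```python
import math
from typing import List


def mean_attention_distance(attn: List[List[float]], grid: int, patch_size: int) -> float:
    """Mean attention distance (in pixels) for one attention head.

    attn[q][k] is the attention weight from query patch q to key patch k
    (each row sums to 1). Patches are indexed row-major on a grid x grid map,
    and the distance between two patches is their Euclidean offset in patch
    units multiplied by patch_size.
    """
    n = grid * grid
    coords = [(idx // grid, idx % grid) for idx in range(n)]
    total = 0.0
    for q, (qy, qx) in enumerate(coords):
        # Attention-weighted distance from query q to every key patch.
        total += sum(
            attn[q][k] * math.hypot(qy - ky, qx - kx) * patch_size
            for k, (ky, kx) in enumerate(coords)
        )
    return total / n  # average over query patches


if __name__ == "__main__":
    # 2 x 2 patch grid with 16-pixel patches.
    uniform = [[0.25] * 4 for _ in range(4)]                                # global attention
    local = [[1.0 if q == k else 0.0 for k in range(4)] for q in range(4)]  # attends only to itself
    print(mean_attention_distance(uniform, grid=2, patch_size=16))  # about 13.66
    print(mean_attention_distance(local, grid=2, patch_size=16))    # 0.0
```

A head that spreads its attention uniformly scores higher than one that attends only to its own patch, which is the sense in which the decreasing distances in Table 4 indicate a stronger locality bias.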
As shown in Table 4, from the randomly initialized ViT to SAM ViT and finally to NAS-LoRA, the mean attention distance decreases while the high-frequency signals in the features increase, indicating a stronger focus on local information and an inductive bias enhanced by NAS-LoRA. Fig. 4 visualizes Grad-CAM (Selvaraju et al. 2017) heatmaps from SAM's image encoder, where NAS-LoRA provides more accurate information that benefits the subsequent mask prediction, further reflecting the enhanced inductive bias.

| Method | Training iter/s ↑ | Training min/epoch ↓ | Inference iter/s ↑ |
|---|---|---|---|
| BitFit | 1.82 | 18.3 | 4.62 |
| Adapter | 1.73 | 19.3 | 4.38 |
| VPT | 1.41 | 23.6 | 4.39 |
| LoRA | 1.64 | 20.3 | 4.62 |
| Conv-LoRA | 1.22 | 27.3 | 3.64 |
| DoRA | 1.61 | 20.7 | 4.62 |
| VeRA | 13.14 | 2.54 | 4.62 |
| Ours (update w) | 1.57 | 21.1 | 4.62 |
| Ours (update w & α) | - | 25.2 | |

Table 5: Computational cost comparison of NAS-PC-LoRA and other PEFT methods on the ISIC 2017 dataset.

| Method | Jac ↑ | T-Jac ↑ | Dice ↑ | Acc ↑ |
|---|---|---|---|---|
| NAS-PC-LoRA | 78.2 | 71.2 | 86.2 | 94.6 |
| w/o convolution | 76.9 (1.3↓) | 68.6 (2.6↓) | 85.0 (1.2↓) | 93.3 (1.3↓) |
| w/o pooling | 77.9 (0.3↓) | 70.5 (0.7↓) | 85.9 (0.3↓) | 94.2 (0.4↓) |
| w/o skip/no connection | 77.5 (0.7↓) | 69.7 (1.5↓) | 85.6 (0.6↓) | 94.0 (0.6↓) |

Table 6: Effects of candidate operations in the NAS cell (ISIC 2017).

Computational Cost. In Table 5, we compare the computational cost of NAS-PC-LoRA with other PEFT methods during training and inference. The evaluation is conducted on ISIC 2017 using an Nvidia V100 GPU. Although NAS-PC-LoRA has a higher training cost than the original LoRA due to its stage-wise optimization strategy, it introduces no additional inference overhead and delivers robust performance gains across various tasks, as shown in Tables 1, 2, and 3.

4.3 Ablation Study

Effect of Operation. Table 6 presents the effects of different candidate operations in the NAS cell. Convolution contributes the most to performance, followed by skip/no connection, while pooling has a relatively small impact.
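NAS-LoRA places a lightweight NAS block between the encoder and decoder components of LoRA, and the candidate set spans convolution, pooling, and skip/no connection (Table 6). The sketch below illustrates the DARTS-style continuous relaxation (Liu et al. 2018) that a searchable block of this kind typically builds on: every candidate is applied to the input and the outputs are mixed by softmax(α), and after the search only the strongest candidate is kept. It is a single-channel, pure-Python toy under stated assumptions: the 1D operations, the fixed smoothing kernels standing in for learned separable/dilated convolutions, and all names (`CANDIDATES`, `mixed_op`, `discretize`) are hypothetical, not the authors' implementation.

```python
import math
from typing import Callable, Dict, List

Signal = List[float]  # hypothetical: one bottleneck channel over N tokens
Op = Callable[[Signal], Signal]


def _at(x: Signal, i: int) -> float:
    """Zero padding: positions outside the sequence read as 0.0."""
    return x[i] if 0 <= i < len(x) else 0.0


def conv1d(x: Signal, kernel: List[float], dilation: int = 1) -> Signal:
    """Length-preserving 1D cross-correlation with zero padding (odd-length kernel)."""
    half = len(kernel) // 2
    return [
        sum(w * _at(x, i + (k - half) * dilation) for k, w in enumerate(kernel))
        for i in range(len(x))
    ]


def pool1d(x: Signal, size: int, reduce: Callable[[Signal], float]) -> Signal:
    """Length-preserving pooling; the window is truncated at the sequence borders."""
    half = size // 2
    return [reduce(x[max(0, i - half): i + half + 1]) for i in range(len(x))]


# Hypothetical candidate set mirroring the operation families of Table 6.
# Fixed kernels stand in for learned (separable/dilated) convolution weights.
SMOOTH = [0.25, 0.5, 0.25]
CANDIDATES: Dict[str, Op] = {
    "conv_3": lambda x: conv1d(x, SMOOTH),
    "dil_conv_3": lambda x: conv1d(x, SMOOTH, dilation=2),
    "mean_pool_3": lambda x: pool1d(x, 3, lambda w: sum(w) / len(w)),
    "max_pool_3": lambda x: pool1d(x, 3, max),
    "skip_connect": lambda x: list(x),
    "no_connect": lambda x: [0.0] * len(x),
}


def softmax(alpha: List[float]) -> List[float]:
    """Numerically stable softmax over the architecture parameters."""
    m = max(alpha)
    exps = [math.exp(a - m) for a in alpha]
    total = sum(exps)
    return [e / total for e in exps]


def mixed_op(x: Signal, alpha: List[float], candidates: Dict[str, Op] = CANDIDATES) -> Signal:
    """Continuous relaxation: softmax(alpha)-weighted sum of every candidate's output."""
    if len(alpha) != len(candidates):
        raise ValueError("need exactly one architecture parameter per candidate")
    weights = softmax(alpha)
    outputs = [op(x) for op in candidates.values()]
    return [sum(w * out[i] for w, out in zip(weights, outputs)) for i in range(len(x))]


def discretize(alpha: List[float], candidates: Dict[str, Op] = CANDIDATES) -> str:
    """After the search, keep the single candidate with the largest weight."""
    names = list(candidates)
    return names[max(range(len(names)), key=lambda j: alpha[j])]


if __name__ == "__main__":
    x = [1.0, 2.0, 3.0, 4.0]
    print(mixed_op(x, [0.0] * len(CANDIDATES)))  # uniform mixture of all six candidates
    print(discretize([0.1, 0.3, -0.2, 0.0, 0.05, -1.0]))  # dil_conv_3
    # Ablation in the spirit of "w/o pooling": drop a family from the candidate set.
    no_pool = {k: v for k, v in CANDIDATES.items() if "pool" not in k}
    print(mixed_op(x, [0.0] * len(no_pool), no_pool))
```

Removing a family from `CANDIDATES`, as in the `no_pool` line, mirrors the kind of ablation reported in Table 6; the length of `alpha` must shrink accordingly, since each remaining candidate keeps exactly one architecture parameter.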
| LoRA rank | Params (M)/Ratio (%) | Trans10K-v2 Acc ↑ | Trans10K-v2 mIoU ↑ |
|---|---|---|---|
| r = 3 | 4.009/0.62% | 95.31 | 67.94 |
| r = 6 | 4.50/0.70% | 95.72 | 68.36 |
| r = 12 | 5.49/0.86% | 95.77 | 68.48 |
| r = 24 | 7.46/1.16% | 95.76 | 68.65 |

Table 7: Effects of the rank r of NAS-LoRA.

Rank of LoRA. We further investigate the effect of the rank r of NAS-LoRA in Table 7. Performance generally improves as r increases (mIoU rises from 67.94 to 68.65), but a higher rank also increases the parameter overhead (from 4.009M to 7.46M), which runs counter to the parameter-efficiency principle of PEFT.

| LoRA blocks | Params (M)/Ratio (%) | Leaf IoU ↑ | Leaf Dice ↑ | Leaf Acc ↑ |
|---|---|---|---|---|
| Layers {1, 2, 3, ..., 16} | 2.004/0.31% | 73.0 | 82.9 | 95.5 |
| Layers {16, 17, 18, ..., 32} | 2.004/0.31% | 73.6 | 83.4 | 95.7 |
| Layers {1, 2, 3, ..., 32} | 4.009/0.62% | 75.4 | 84.9 | 96.6 |

Table 8: Ablation study on the placement of NAS blocks in ViTs.

Effect of LoRA Placement. Table 8 shows the effect of placing NAS-LoRA in different ViT layers. Although performance is best when NAS blocks are inserted into all layers, inserting them into the last half of the layers performs better than inserting them into the first half.

| Architecture epoch $T_B$ | Jac ↑ | T-Jac ↑ | Dice ↑ | Acc ↑ |
|---|---|---|---|---|
| 0 (update w & α simultaneously) | 77.5 | 70.1 | 85.3 | 93.9 |
| 0 (update w & α stage-wise) | 77.9 | 70.6 | 85.8 | 94.4 |
| 10 (ours) | 78.2 | 71.2 | 86.2 | 94.6 |
| 20 | 78.0 | 70.9 | 85.9 | 94.4 |

Table 9: Effects of the stage-wise optimization strategy (ISIC 2017).

Effect of Stage-wise Optimization. We assess the effect of stage-wise optimization in Table 9. When the architecture parameters are optimized together with the weights, performance degrades (Jac drops from 78.2 to 77.5 and Acc from 94.6 to 93.9). In contrast, stage-wise optimization, in which the architecture parameters are updated after the weights have partially converged, yields better results. Although starting architecture optimization too early or too late affects performance, the impact remains within an acceptable range.

5 Conclusion

In this paper, we propose NAS-LoRA, a PEFT-based segmentation approach that integrates NAS to optimize local priors.
Through an end-to-end stage-wise optimization strategy, NAS-LoRA effectively adapts to downstream tasks while maintaining minimal training parameter overhead and incurring no additional inference cost. Experiments across various segmentation tasks validate its effectiveness.

References

Batra, A.; Singh, S.; Pang, G.; Basu, S.; Jawahar, C.; and Paluri, M. 2019. Improved road connectivity by joint learning of orientation and segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 10385–10393.

Bernal, J.; Sánchez, F. J.; Fernández-Esparrach, G.; Gil, D.; Rodríguez, C.; and Vilariño, F. 2015. WM-DOVA maps for accurate polyp highlighting in colonoscopy: Validation vs. saliency maps from physicians. Computerized Medical Imaging and Graphics, 43: 99–111.

Chen, C.; Miao, J.; Wu, D.; Zhong, A.; Yan, Z.; Kim, S.; Hu, J.; Liu, Z.; Sun, L.; Li, X.; et al. 2024a. MA-SAM: Modality-agnostic SAM adaptation for 3D medical image segmentation. Medical Image Analysis, 98: 103310.

Chen, J.; Hu, H.; Wu, H.; Jiang, Y.; and Wang, C. 2021. Learning the best pooling strategy for visual semantic embedding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 15789–15798.

Chen, L.-C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; and Yuille, A. L. 2017. DeepLab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected CRFs. IEEE Transactions on Pattern Analysis and Machine Intelligence, 40(4): 834–848.

Chen, T.; Zhu, L.; Ding, C.; Cao, R.; Wang, Y.; Li, Z.; Sun, L.; Mao, P.; and Zang, Y. 2023a. SAM Fails to Segment Anything?–SAM-Adapter: Adapting SAM in Underperformed Scenes: Camouflage, Shadow, Medical Image Segmentation, and More. arXiv preprint arXiv:2304.09148.

Chen, X.; Xu, Y.; Zhou, Y.; et al. 2023b. TransNorm: Learning Normalization for Medical Vision Transformers. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR).

Chen, Z.; Wu, J.; Wang, W.; Su, W.; Chen, G.; Xing, S.; Zhong, M.; Zhang, Q.; Zhu, X.; Lu, L.; et al. 2024b. InternVL: Scaling up vision foundation models and aligning for generic visual-linguistic tasks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 24185–24198.

Cheng, B.; Misra, I.; Schwing, A. G.; Kirillov, A.; and Girdhar, R. 2022. Masked-attention mask transformer for universal image segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 1290–1299.

Chu, X.; Zhang, B.; and Xu, R. 2021. FairNAS: Rethinking evaluation fairness of weight sharing neural architecture search. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 12239–12248.

Codella, N. C.; Gutman, D.; Celebi, M. E.; Helba, B.; Marchetti, M. A.; Dusza, S. W.; Kalloo, A.; Liopyris, K.; Mishra, N.; Kittler, H.; et al. 2018. Skin lesion analysis toward melanoma detection: A challenge at the 2017 International Symposium on Biomedical Imaging (ISBI), hosted by the International Skin Imaging Collaboration (ISIC). In 2018 IEEE 15th International Symposium on Biomedical Imaging (ISBI 2018), 168–172. IEEE.

Fan, D.-P.; Ji, G.-P.; Cheng, M.-M.; and Shao, L. 2021. Concealed object detection. IEEE Transactions on Pattern Analysis and Machine Intelligence, 44(10): 6024–6042.

Fan, D.-P.; Ji, G.-P.; Sun, G.; Cheng, M.-M.; Shen, J.; and Shao, L. 2020a. Camouflaged object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2777–2787.

Fan, D.-P.; Ji, G.-P.; Zhou, T.; Chen, G.; Fu, H.; Shen, J.; and Shao, L. 2020b. PraNet: Parallel reverse attention network for polyp segmentation. In International Conference on Medical Image Computing and Computer-Assisted Intervention, 263–273. Springer.
Gao, S.; Li, Z.-Y.; Han, Q.; Cheng, M.-M.; and Wang, L. 2022. RF-Next: Efficient receptive field search for convolutional neural networks. IEEE Transactions on Pattern Analysis and Machine Intelligence, 45(3): 2984–3002.

Han, Z.; Gao, C.; Liu, J.; Zhang, J.; and Zhang, S. Q. 2024. Parameter-efficient fine-tuning for large models: A comprehensive survey. arXiv preprint arXiv:2403.14608.

He, H.; Liu, L.; Zhang, H.; and Zheng, N. 2024. IS-DARTS: Stabilizing DARTS through precise measurement on candidate importance. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 38, 12367–12375.

He, K.; Zhang, X.; Ren, S.; and Sun, J. 2016. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 770–778.

Houlsby, N.; Giurgiu, A.; Jastrzebski, S.; Morrone, B.; De Laroussilhe, Q.; Gesmundo, A.; Attariyan, M.; and Gelly, S. 2019. Parameter-efficient transfer learning for NLP. In International Conference on Machine Learning, 2790–2799. PMLR.

Hu, E. J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W.; et al. 2022. LoRA: Low-rank adaptation of large language models. ICLR, 1(2): 3.

Jha, D.; Smedsrud, P. H.; Riegler, M. A.; Halvorsen, P.; De Lange, T.; Johansen, D.; and Johansen, H. D. 2020. Kvasir-SEG: A segmented polyp dataset. In MultiMedia Modeling: 26th International Conference, MMM 2020, Daejeon, South Korea, January 5–8, 2020, Proceedings, Part II 26, 451–462. Springer.

Jia, M.; Tang, L.; Chen, B.-C.; Cardie, C.; Belongie, S.; Hariharan, B.; and Lim, S.-N. 2022. Visual prompt tuning. In European Conference on Computer Vision, 709–727. Springer.

Kirillov, A.; Mintun, E.; Ravi, N.; Mao, H.; Rolland, C.; Gustafson, L.; Xiao, T.; Whitehead, S.; Berg, A. C.; Lo, W.-Y.; et al. 2023. Segment anything. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 4015–4026.
Kopiczko, D. J.; Blankevoort, T.; and Asano, Y. M. 2023. VeRA: Vector-based random matrix adaptation. arXiv preprint arXiv:2310.11454.

Le, T.-N.; Nguyen, T. V.; Nie, Z.; Tran, M.-T.; and Sugimoto, A. 2019. Anabranch network for camouflaged object segmentation. Computer Vision and Image Understanding, 184: 45–56.

Lester, B.; Al-Rfou, R.; and Constant, N. 2021. The power of scale for parameter-efficient prompt tuning. arXiv preprint arXiv:2104.08691.

Liang, Y.; Lu, L.; Jin, Y.; Xie, J.; Huang, R.; Zhang, J.; and Lin, W. 2021. An efficient hardware design for accelerating sparse CNNs with NAS-based models. IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems, 41(3): 597–613.

Liu, H.; Li, C.; Wu, Q.; and Lee, Y. J. 2023. Visual instruction tuning. Advances in Neural Information Processing Systems, 36: 34892–34916.

Liu, H.; Simonyan, K.; and Yang, Y. 2018. DARTS: Differentiable architecture search. arXiv preprint arXiv:1806.09055.

Liu, S.-Y.; Wang, C.-Y.; Yin, H.; Molchanov, P.; Wang, Y.-C. F.; Cheng, K.-T.; and Chen, M.-H. 2024. DoRA: Weight-decomposed low-rank adaptation. In Forty-first International Conference on Machine Learning.

Ma, J.; He, Y.; Li, F.; Han, L.; You, C.; and Wang, B. 2024. Segment anything in medical images. Nature Communications, 15(1): 654.

Mnih, V. 2013. Machine learning for aerial image labeling. University of Toronto (Canada).

Nirthika, R.; Manivannan, S.; Ramanan, A.; and Wang, R. 2022. Pooling in convolutional neural networks for medical image analysis: A survey and an empirical study. Neural Computing and Applications, 34(7): 5321–5347.

Osco, L. P.; Wu, Q.; De Lemos, E. L.; Gonçalves, W. N.; Ramos, A. P. M.; Li, J.; and Junior, J. M. 2023. The segment anything model (SAM) for remote sensing applications: From zero to one shot. International Journal of Applied Earth Observation and Geoinformation, 124: 103540.

Park, N.; and Kim, S. 2022. How do vision transformers work? arXiv preprint.

Raghu, M.; Unterthiner, T.; Kornblith, S.; Zhang, C.; and Dosovitskiy, A. 2021. Do vision transformers see like convolutional neural networks? Advances in Neural Information Processing Systems, 34: 12116–12128.

Rath, S. R. 2023. Leaf disease segmentation dataset.

Razdaibiedina, A.; Mao, Y.; Hou, R.; Khabsa, M.; Lewis, M.; Ba, J.; and Almahairi, A. 2023. Residual prompt tuning: Improving prompt tuning with residual reparameterization. arXiv preprint arXiv:2305.03937.

Ren, P.; Xiao, Y.; Chang, X.; Huang, P.-Y.; Li, Z.; Chen, X.; and Wang, X. 2021. A comprehensive survey of neural architecture search: Challenges and solutions. ACM Computing Surveys (CSUR), 54(4): 1–34.

Ronneberger, O.; Fischer, P.; and Brox, T. 2015. U-Net: Convolutional networks for biomedical image segmentation. In Medical Image Computing and Computer-Assisted Intervention–MICCAI 2015: 18th International Conference, Munich, Germany, October 5–9, 2015, Proceedings, Part III 18, 234–241. Springer.

Salehin, I.; Islam, M. S.; Saha, P.; Noman, S.; Tuni, A.; Hasan, M. M.; and Baten, M. A. 2024. AutoML: A systematic review on automated machine learning with neural architecture search. Journal of Information and Intelligence, 2(1): 52–81.

Salmani Pour Avval, S.; Eskue, N. D.; Groves, R. M.; and Yaghoubi, V. 2025. Systematic review on neural architecture search. Artificial Intelligence Review, 58(3): 73.

Selvaraju, R. R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; and Batra, D. 2017. Grad-CAM: Visual explanations from deep networks via gradient-based localization. In Proceedings of the IEEE International Conference on Computer Vision, 618–626.

Skurowski, P.; Abdulameer, H.; Błaszczyk, J.; Depta, T.; Kornacki, A.; and Kozieł, P. 2018. Animal camouflage analysis: Chameleon database. Unpublished manuscript, 2(6): 7.
Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.-A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. 2023. LLaMA: Open and efficient foundation language models. arXiv preprint.

Tu, C.-H.; Mai, Z.; and Chao, W.-L. 2023. Visual query tuning: Towards effective usage of intermediate representations for parameter and memory efficient transfer learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 7725–7735.

Vicente, T. F. Y.; Hou, L.; Yu, C.-P.; Hoai, M.; and Samaras, D. 2016. Large-scale training of shadow detectors with noisily-annotated shadow examples. In Computer Vision–ECCV 2016: 14th European Conference, Amsterdam, The Netherlands, October 11–14, 2016, Proceedings, Part VI 14, 816–832. Springer.

Wang, H.; Chang, J.; Zhai, Y.; Luo, X.; Sun, J.; Lin, Z.; and Tian, Q. 2024. LION: Implicit vision prompt tuning. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 38, 5372–5380.

Wang, J. M. J. V.; et al. 2022. DoFA: Domain-specific Feature Augmentation for Medical Image Segmentation. In International Conference on Medical Image Computing and Computer-Assisted Intervention (MICCAI).

Wang, X.; and Zhu, W. 2024. Advances in neural architecture search. National Science Review, 11(8): nwae282.

Wu, J.; Ji, W.; Liu, Y.; Fu, H.; Xu, M.; Xu, Y.; and Jin, Y. 2023. Medical SAM adapter: Adapting segment anything model for medical image segmentation. arXiv preprint arXiv:2304.12620.

Xie, E.; Wang, W.; Wang, W.; Ding, M.; Shen, C.; and Luo, P. 2020. Segmenting transparent objects in the wild. In Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part XIII 16, 696–711. Springer.

Xie, E.; Wang, W.; Wang, W.; Sun, P.; Xu, H.; Liang, D.; and Luo, P. 2021. Segmenting transparent object in the wild with transformer. arXiv preprint arXiv:2101.08461.
Xin, Y.; Du, J.; Wang, Q.; Lin, Z.; and Yan, K. 2024a. VMT-Adapter: Parameter-efficient transfer learning for multi-task dense scene understanding. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 38, 16085–16093.

Xin, Y.; Luo, S.; Zhou, H.; Du, J.; Liu, X.; Fan, Y.; Li, Q.; and Du, Y. 2024b. Parameter-efficient fine-tuning for pre-trained vision models: A survey. arXiv preprint arXiv:2402.02242.

Xu, Y.; Xie, L.; Zhang, X.; Chen, X.; Qi, G.-J.; Tian, Q.; and Xiong, H. 2019. PC-DARTS: Partial channel connections for memory-efficient architecture search. arXiv preprint arXiv:1907.05737.

Xue, Y.; and Qin, J. 2022. Partial connection based on channel attention for differentiable neural architecture search. IEEE Transactions on Industrial Informatics, 19(5): 6804–6813.

Yang, T.; Zhu, Y.; Xie, Y.; Zhang, A.; Chen, C.; and Li, M. 2023. AIM: Adapting image models for efficient video action recognition. arXiv preprint.

Ye, P.; Li, B.; Li, Y.; Chen, T.; Fan, J.; and Ouyang, W. 2022. β-DARTS: Beta-decay regularization for differentiable architecture search. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 10874–10883.

Yin, D.; Hu, L.; Li, B.; Zhang, Y.; and Yang, X. 2025. 5%>100%: Breaking performance shackles of full fine-tuning on visual recognition tasks. In Proceedings of the Computer Vision and Pattern Recognition Conference, 20071–20081.

Yin, D.; Yang, Y.; Wang, Z.; Yu, H.; Wei, K.; and Sun, X. 2023. 1% vs 100%: Parameter-efficient low rank adapter for dense predictions. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 20116–20126.

Zaken, E. B.; Ravfogel, S.; and Goldberg, Y. 2021. BitFit: Simple parameter-efficient fine-tuning for transformer-based masked language-models. arXiv preprint arXiv:2106.10199.

Zhang, E.; et al. 2023. Segformer v2: Learning hierarchical representations for efficient semantic segmentation. In Advances in Neural Information Processing Systems (NeurIPS).

Zhang, K.; and Liu, D. 2023. Customized segment anything model for medical image segmentation. arXiv preprint arXiv:2304.13785.

Zhang, Y.; Liu, H.; and Hu, Q. 2021. TransFuse: Fusing transformers and CNNs for medical image segmentation. In Medical Image Computing and Computer Assisted Intervention–MICCAI 2021: 24th International Conference, Strasbourg, France, September 27–October 1, 2021, Proceedings, Part I 24, 14–24. Springer.

Zhong, Z.; Tang, Z.; He, T.; Fang, H.; and Yuan, C. 2024. Convolution meets LoRA: Parameter efficient finetuning for segment anything model. arXiv preprint.

Zhu, L.; Xu, K.; Ke, Z.; and Lau, R. W. 2021. Mitigating intensity bias in shadow detection via feature decomposition and reweighting. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 4702–4711.

In the supplementary material, we provide additional details and experimental results to deepen the understanding of our proposed NAS-LoRA. This supplementary material is organized as follows:

• In Sec. A, we provide more details about the loss functions used in training.
• In Sec. B, we provide more details about the employed datasets.
• In Sec. C, we provide more details about the training behavior, including the training convergence analysis and the adaptability of NAS-LoRA.
• In Sec. D, we provide more ablation studies, including the effect of the partial connection in NAS-PC-LoRA.

A Loss Functions

Our final loss function combines the segmentation loss $\mathcal{L}_{seg}$ and the classification loss $\mathcal{L}_{cls}$ as follows:

$$\mathcal{L} = \lambda_{seg}\,\mathcal{L}_{seg} + \lambda_{cls}\,\mathcal{L}_{cls}, \tag{8}$$

where $\lambda_{seg}$ and $\lambda_{cls}$ are weighting coefficients that balance the two objectives. The segmentation loss $\mathcal{L}_{seg}$ (Ma et al. 2024) includes a binary cross-entropy (BCE) loss $\mathcal{L}_{BCE}$ and a Dice loss $\mathcal{L}_{Dice}$, defined respectively as:

$$\mathcal{L}_{BCE} = -\frac{1}{N}\sum_{i=1}^{N}\left[y_i\log(\hat{y}_i) + (1-y_i)\log(1-\hat{y}_i)\right], \tag{9}$$

$$\mathcal{L}_{Dice} = 1 - \frac{2\sum_{i=1}^{N} y_i\,\hat{y}_i}{\sum_{i=1}^{N} y_i^2 + \sum_{i=1}^{N} \hat{y}_i^2}. \tag{10}$$

Here, $N$ is the total number of pixels, $y_i$ is the ground-truth label, and $\hat{y}_i$ is the predicted segmentation output. The classification loss $\mathcal{L}_{cls}$ is defined as the cross-entropy over all $K+1$ classes:

$$\mathcal{L}_{cls} = -\frac{1}{N}\sum_{i=1}^{N}\sum_{k=1}^{K+1} y_i^k \log(\hat{y}_i^k),$$

where $y_i^k$ and $\hat{y}_i^k$ denote the ground-truth and predicted probability for class $k$ at pixel $i$, respectively.

B Dataset Details

Medical Images. The polyp segmentation task involves segmenting abnormal growths in gastrointestinal endoscopy images, which is challenging due to complex backgrounds and the diverse sizes and shapes of polyps. We use two polyp segmentation datasets: CVC-ClinicDB (Bernal et al. 2015) (612 images) and Kvasir (Jha et al. 2020) (1,000 images). Following (Fan et al. 2020b; Zhong et al. 2024), we split each dataset into 90% for training and 10% for testing, with 20% of the training set used for validation. Additionally, we evaluate our method on the skin lesion segmentation task, which involves segmenting lesions in dermoscopic images. For this, we use the ISIC 2017 dataset (Codella et al. 2018), comprising 2,000 training images, 150 validation images, and 600 testing images.

Natural Images. Camouflaged object segmentation aims to segment objects concealed within complex or visually cluttered backgrounds. We use three related datasets: COD10K (Fan et al. 2020a), CAMO (Le et al. 2019), and CHAMELEON (Skurowski et al. 2018). COD10K consists of 3,040 training and 2,026 testing images, CAMO includes 1,000 training and 250 testing images, and CHAMELEON provides 76 images for testing.
The model is trained on the training sets of COD10K and CAMO and evaluated on all three datasets, with CAMO highlighted as the most challenging. Additionally, we conduct experiments on the shadow detection dataset SBU (Vicente et al. 2016), which focuses on identifying shadow regions within a scene. SBU contains 4,085 training and 638 testing images. For both tasks, 10% of the training set is randomly selected as the validation set.

Agriculture. The Leaf Disease Segmentation dataset (Rath 2023) is used to identify infected regions on leaves. It includes 498 images for training and 90 images for testing.

Remote Sensing. Road segmentation aims to identify road or street regions in images. We use the Massachusetts Roads Dataset (Mnih 2013), which includes 1,107 training images, 13 validation images, and 48 testing images.

Multi-class Segmentation. For a more comprehensive evaluation, we also explore multi-class segmentation using the transparent object segmentation datasets Trans10K-v1 (Xie et al. 2020), which includes three classes (background and two categories of transparent objects), and Trans10K-v2 (Xie et al. 2021), which consists of 12 classes (background and 11 fine-grained categories of transparent objects). Both datasets contain 5,000 training images, 1,000 validation images, and 4,428 testing images.

C Training Behavior

Figure C1: Convergence behavior of NAS-PC-LoRA on the Leaf dataset over three independent trials (x-axis: epochs, 0–40; y-axis: IoU).

Convergence Analysis. A key concern when integrating NAS with PEFT is ensuring stable convergence. Fig. C1 illustrates the convergence behavior of NAS-PC-LoRA on the Leaf dataset across three independent trials, where the dark blue line denotes the mean value and the light blue shaded area represents the standard deviation. The curve exhibits a rapid initial increase, followed by a more gradual rise before stabilizing.
Notably, a slight fluctuation occurs around the 10th epoch due to the optimization of the architectural parameters, after which the upward trend resumes. These results confirm that NAS-PC-LoRA converges to a stable solution.

Figure C2: The final architecture of NAS-PC-LoRA on the Leaf Disease Segmentation dataset (left) and the Multi-class Transparent Object Segmentation dataset (right). Candidate operations: 3x3_sep_conv, 5x5_sep_conv, 3x3_dil_conv, 5x5_dil_conv, 3x3_mean_pool, 3x3_max_pool, skip_connect, no_connect. Slice values as extracted, left: 30.9%, 20.6%, 5.9%, 7.4%, 8.8%, 2.9%, 14.7%, 8.8%; right: 21.8%, 16.7%, 14.1%, 11.5%, 6.4%, 3.8%, 15.4%, 10.3%.

Adaptability of NAS-PC-LoRA. To better understand the adaptability of NAS-PC-LoRA across different downstream tasks, we analyze the statistical distribution of operation selections on the Leaf Disease Segmentation and Multi-class Transparent Object Segmentation datasets, as shown in Fig. C2. The proportion of each candidate operation $O_i \in \mathcal{O}$ is computed as:

$$P_{O_i} = \frac{\sum_{\text{layers}}\sum_{q,k,v} \alpha_i}{\sum_{\text{layers}}\sum_{q,k,v} 1}.$$

The results reveal distinct operation preferences across the two datasets: the Leaf dataset predominantly selects separable convolutions, while the Transparent Object dataset favors both dilated and separable convolutions. This diversity in operation selection highlights the ability of NAS-PC-LoRA to adapt to the unique characteristics of different tasks.

D More Ablation Studies

| Sampling channels | Params (M)/Ratio (%) | Leaf IoU ↑ | Leaf Dice ↑ | Leaf Acc ↑ |
|---|---|---|---|---|
| 3/3 (NAS-LoRA) | 4.016/0.63% | 75.1 | 84.8 | 96.4 |
| 2/3 (NAS-PC-LoRA) | 4.009/0.62% | 75.4 | 84.9 | 96.6 |
| 1/3 | 4.003/0.62% | 74.8 | 84.6 | 96.2 |

Table D1: Effects of the partial connection in NAS-LoRA.

Partial Connection. A notable feature of NAS-PC-LoRA is its partial connection mechanism, which updates only a subset of feature channels. Table D1 shows the effects of partial connection, indicating that this setting is beneficial in the PEFT scenario.
The optimal sampling ratio is 2/3: sampling too few (1/3) or too many (3/3) channels yields lower IoU, Dice, and Acc.
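A minimal sketch of the partial connection idea follows, assuming (as the matching parameter counts of Tables 7 and D1 suggest for r = 3) that the 3/3, 2/3, and 1/3 settings count the LoRA bottleneck channels routed through the NAS operation. Following the general PC-DARTS recipe (Xu et al. 2019), a subset of channels passes through the searched operation and the rest bypass it. Taking the *first* k channels, omitting the channel shuffle and edge normalization used in PC-DARTS, and the helper names (`num_sampled_channels`, `partial_connection`, `double`) are all hypothetical choices for illustration, not the authors' implementation.

```python
from fractions import Fraction
from typing import Callable, List

Channel = List[float]     # hypothetical: one bottleneck channel over N tokens
Features = List[Channel]  # r channels, e.g. the output of the LoRA down-projection


def num_sampled_channels(rank: int, ratio: Fraction) -> int:
    """How many of the r bottleneck channels pass through the searched operation."""
    k = int(rank * ratio)  # floor(r * ratio), computed exactly with Fraction
    if not 1 <= k <= rank:
        raise ValueError("ratio must select between 1 and `rank` channels")
    return k


def partial_connection(h: Features, op: Callable[[Channel], Channel], k: int) -> Features:
    """PC-DARTS-style partial connection (simplified sketch).

    The first k channels are transformed by `op`; the remaining channels bypass
    it unchanged and are concatenated back in their original order.
    """
    if not 1 <= k <= len(h):
        raise ValueError("k must lie in [1, number of channels]")
    return [op(c) for c in h[:k]] + [list(c) for c in h[k:]]


def double(c: Channel) -> Channel:
    """Toy stand-in for the searched (mixed) operation."""
    return [2.0 * v for v in c]


if __name__ == "__main__":
    r = 3  # rank reported for NAS-PC-LoRA in Table 7
    h = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # toy r x N bottleneck features
    for ratio in (Fraction(3, 3), Fraction(2, 3), Fraction(1, 3)):
        k = num_sampled_channels(r, ratio)
        print(f"{ratio}: {k} channel(s) searched ->", partial_connection(h, double, k))
```

Under these assumptions, the three settings of Table D1 route 3, 2, and 1 channels through the searched operation. `Fraction` keeps r × ratio exact, so 3 × 2/3 yields exactly 2 rather than a float that could floor to 1. PC-DARTS motivates partial connections partly by the memory saved when fewer channels enter the mixed operation.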
