Large Language Models (LLMs) have transformed natural language processing and hold growing promise for advancing science, healthcare, and decision-making. Yet their training paradigms remain dominated by affirmation-based inference, akin to modus ponens, where accepted premises yield predicted consequents. While effective for generative fluency, this onedirectional approach leaves models vulnerable to logical fallacies, adversarial manipulation, and failures in causal reasoning. This paper makes two contributions. First, it demonstrates how existing LLMs from major platforms exhibit systematic weaknesses when reasoning in scientific domains with negation, counterexamples, or faulty premises. 1 Second, it introduces a dualreasoning training framework that integrates affirmative generation with structured counterfactual denial. Grounded in formal logic, cognitive science, and adversarial training, this training paradigm formalizes a computational analogue of "denying the antecedent" as a mechanism for disconfirmation and robustness. By coupling generative synthesis with explicit negation-aware objectives, the framework enables models that not only affirm valid inferences but also reject invalid ones, yielding systems that are more resilient, interpretable, and aligned with human reasoning.

Deep Dive into 이중 추론 학습: 긍정‑부정 논리를 결합한 대형 언어 모델의 과학적 추론 강화.

Large Language Models (LLMs) have transformed natural language processing and hold growing promise for advancing science, healthcare, and decision-making. Yet their training paradigms remain dominated by affirmation-based inference, akin to modus ponens, where accepted premises yield predicted consequents. While effective for generative fluency, this onedirectional approach leaves models vulnerable to logical fallacies, adversarial manipulation, and failures in causal reasoning. This paper makes two contributions. First, it demonstrates how existing LLMs from major platforms exhibit systematic weaknesses when reasoning in scientific domains with negation, counterexamples, or faulty premises. 1 Second, it introduces a dualreasoning training framework that integrates affirmative generation with structured counterfactual denial. Grounded in formal logic, cognitive science, and adversarial training, this training paradigm formalizes a computational analogue of “denying the antecedent” as a

Recent advances in large language models (LLMs) such as GPT-5, LLaMA, and Gemini demonstrate remarkable progress in natural language generation, reasoning, and generalization. These systems are trained on massive corpora with objectives such as autoregressive prediction, masked language modeling, and next-sentence prediction. At their core, such models estimate the most probable continuation of a linguistic sequence, reflecting a probabilistic analogue of modus ponens logic: if P =⇒ Q and P holds, then Q is predicted. This affirmation-based paradigm has fueled generative fluency across applications ranging from dialogue to scientific writing.

However, reliance on affirmation alone exposes critical weaknesses, particularly in scientific domains where causal reasoning, counterfactuals, and robustness are essential. Models to affirm likely consequents often overgeneralize from correlations, misattribute causal direction, or fail to reject faulty premises. For example, when asked whether a patient with traumatic brain injury (TBI) can develop post-traumatic stress disorder (PTSD) (P =⇒ Q), an LLM may correctly affirm the association Walker et al. [2017], Bai et al. [2017]. Yet without exposure to negative or counterfactual cases, the same model may incorrectly infer that PTSD implies a prior TBI (Q =⇒ P ), or that the absence of TBI guarantees the absence of PTSD (¬P =⇒ ¬Q).

Recent reports highlight that young users sometimes treat AI chatbots as close companions, with detrimental psychological outcomes [Horton andLee, 2023, Smith andGomez, 2024]. These concerns underscore the urgency of ensuring that LLMs reason transparently and safely, particularly when deployed in sensitive domains. Such errors illustrate how statistical regularities in training data can mislead reasoning, leading to logical fallacies such as affirming the consequent, denying the antecedent, or reversing causality.

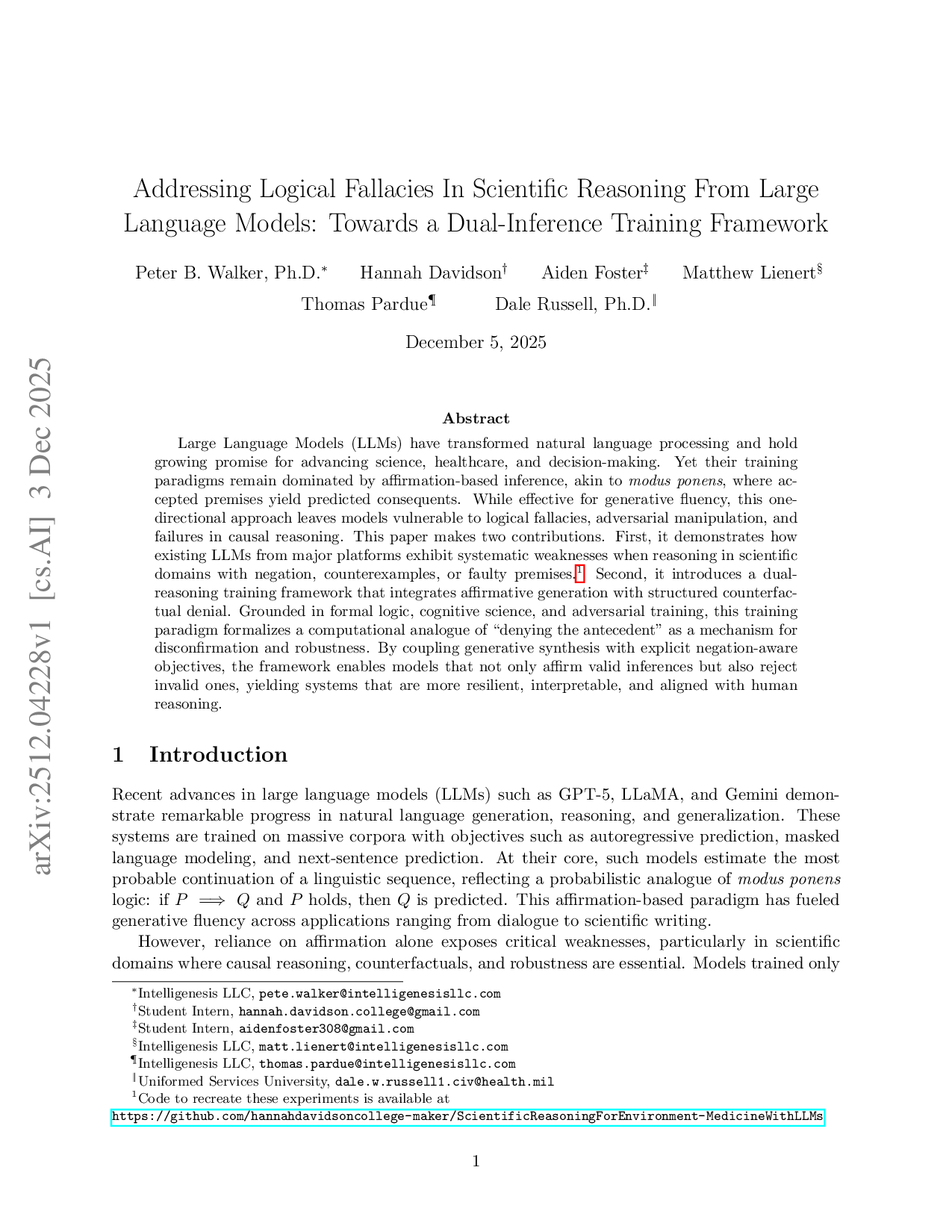

Table 1 enumerates these patterns, contrasting valid inference with fallacies that have been frequently observed in LLM outputs [Wei et al., 2022, Ji et al., 2023].

Building on insights from cognitive science and philosophy, we argue that these so-called fallacies may hold computational value. Human reasoning thrives not only on confirmation but also on disconfirmation: generating counterfactuals, testing negated premises, and learning from absence Kahneman [1973], Roese [1997]. Popper’s falsificationist philosophy Popper [2002] similarly emphasizes the scientific imperative of testing hypotheses against potential refutation. In machine learning, analogous mechanisms appear in adversarial training, out-of-distribution generalization Geirhos et al. [2020], and contrastive learning. Together, these traditions suggest that training models to engage with negation and denial is not a flaw but a pathway to greater robustness.

This paper advances that pathway by introducing a dual-reasoning framework for LLMs. The framework formalizes a taxonomy of logical patterns, extends training objectives beyond affirmation, and operationalizes a computational analogue of denying the antecedent. Through this dual approach, models can affirm valid consequents while simultaneously learning to reject invalid inferences, improving resilience, interpretability, and alignment with human reasoning.

The remainder of the paper is structured as follows. Section 2 reviews foundations in psychology, philosophy, and machine learning that motivate dual reasoning. Section 3 introduces our logical taxonomy and illustrates its application in medical and AI contexts. Section 4 presents the dualreasoning training paradigm, including mathematical formalization and proof of representational benefit. Section 5 discusses evaluation, limitations, and implications for AI safety and scientific discovery, and Section 6 concludes with directions for future research.

As shown in Table 1, these logical patterns illustrate the contrasts between valid inference and the types of fallacies that LLMs often generate. A more detailed discussion of these patterns is provided in Section 4.2.

1.1 Background 1.2 From Modus Ponens to a Need for Logical Negation in LLMs Contemporary LLMs have largely been developed under the paradigm of modus ponens reasoning, where an accepted premise leads to the most likely consequent (“If P then Q; P; therefore Q”). This structure is evident in the architecture and training methodologies employed. Transformer-based LLMs, with their attention mechanisms [Vaswani et al., 2017], learn to map input token sequences (serving as premises, “P”) to highly probable output sequences (serving as consequents, “Q”). This mapping is probabilistic, reflecting the statistical regularities of language rather than strict deduction. Relatedly, Generative Adversarial Networks (GANs) [Goodfellow et al., 2014] employ a generative-discriminative loop, in which the generator produces candidate outputs from a learned distribution and the discriminator evaluates their va

…(Full text truncated)…

This content is AI-processed based on ArXiv data.