Accurate perception of the vehicle's 3D surroundings, including fine-scale road geometry such as bumps, slopes, and surface irregularities, is essential for safe and comfortable vehicle control. However, conventional monocular depth estimation often oversmooths these features, losing critical information for motion planning and stability. To address this, we introduce Gamma-from-Mono (GfM), a lightweight monocular geometry estimation method that resolves the projective ambiguity in single-camera reconstruction by decoupling global and local structure. GfM predicts a dominant road surface plane together with residual variations expressed by 𝛾, a dimensionless measure of vertical deviation from the plane, defined as the ratio of a point's height above it to its depth from the camera, and grounded in established planar parallax geometry. With only the camera's height above ground, this representation deterministically recovers metric depth via a closed form, avoiding full extrinsic calibration and naturally prioritizing near-road detail. Its physically interpretable formulation makes it well suited for self-supervised learning, eliminating the need for large annotated datasets. Evaluated on KITTI and the Road Surface Reconstruction Dataset (RSRD), GfM achieves state-of-the-art near-field accuracy in both depth and 𝛾 estimation while maintaining competitive global depth performance. Our lightweight 8.88M-parameter model adapts robustly across diverse camera setups and, to our knowledge, is the first self-supervised monocular approach evaluated on RSRD.

Deep Dive into Single-Camera Precision Road Surface Reconstruction.

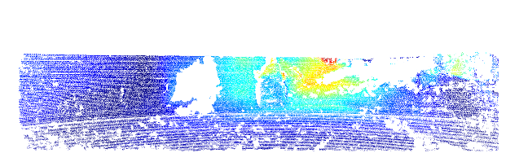

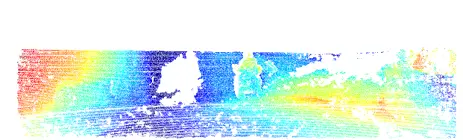

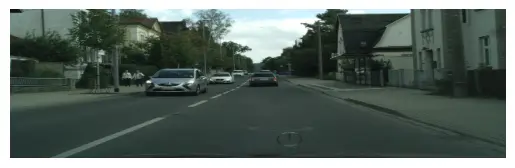

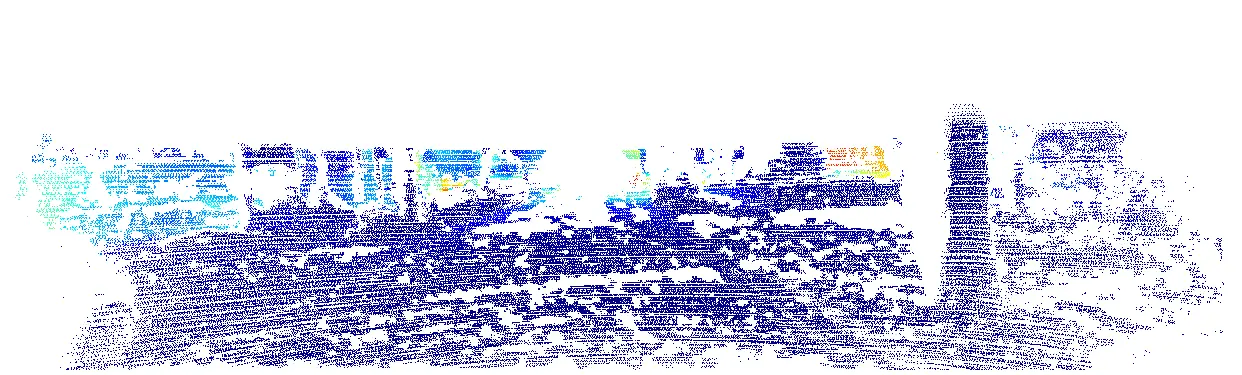

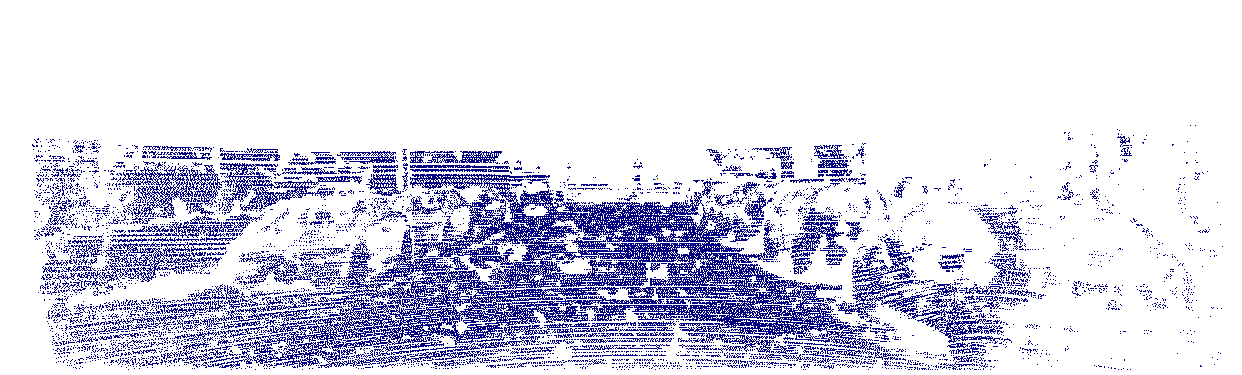

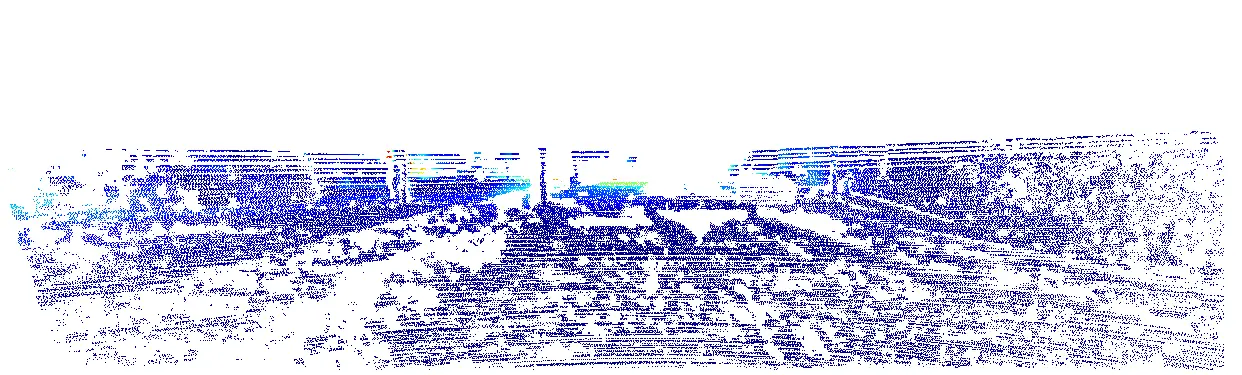

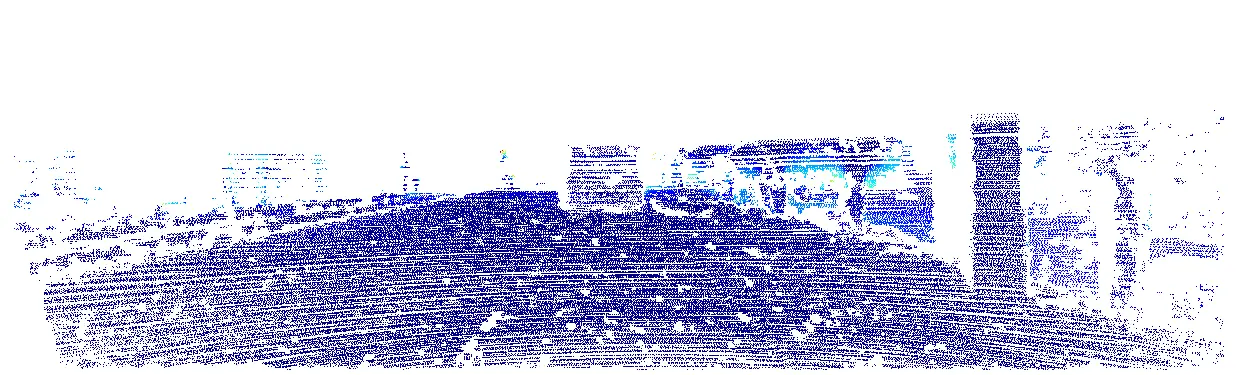

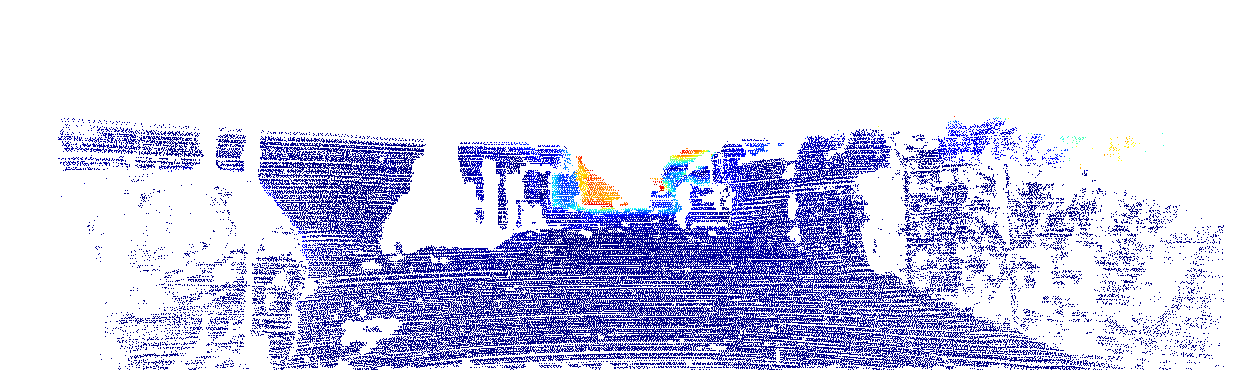

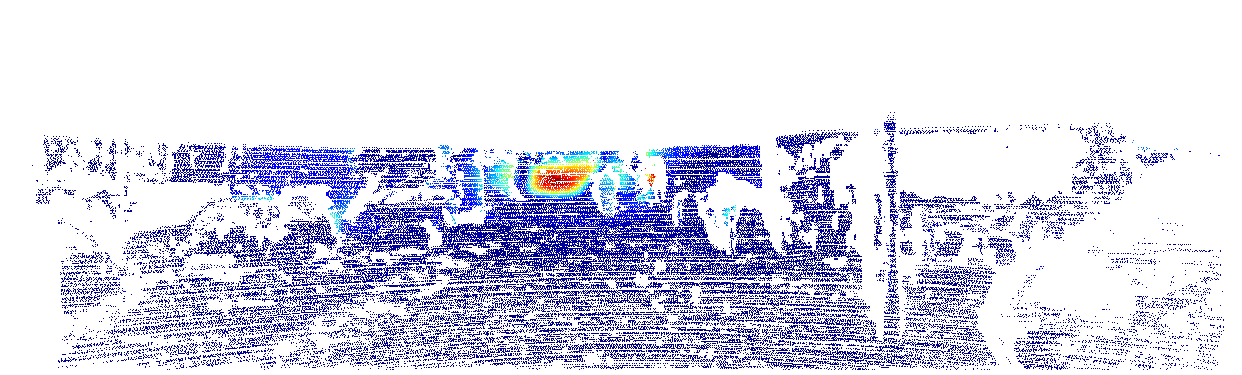

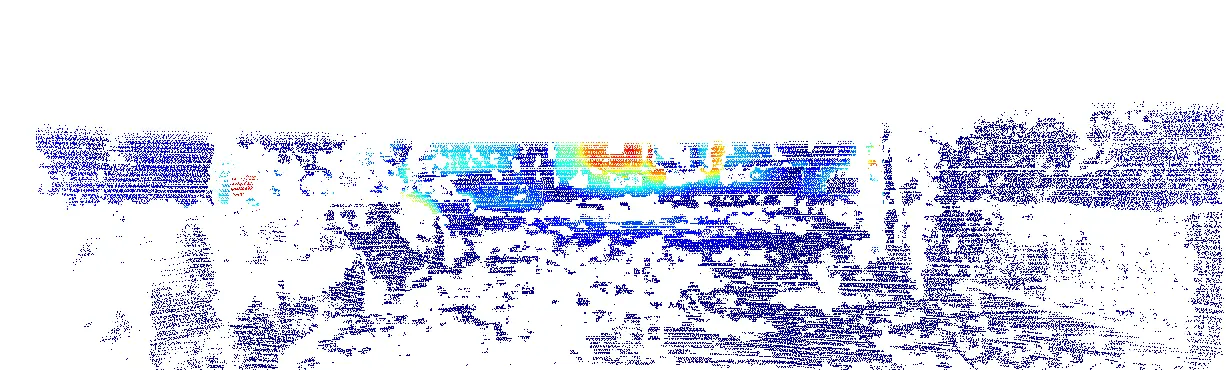

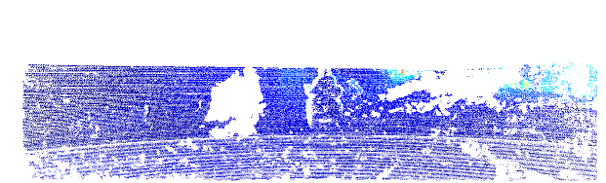

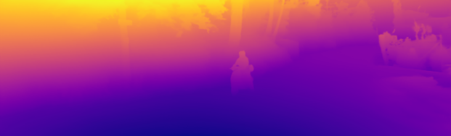

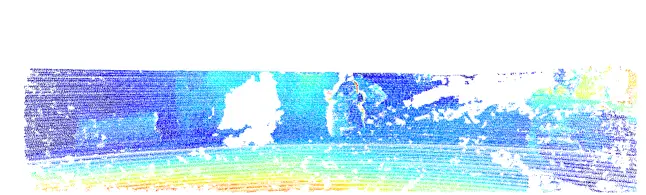

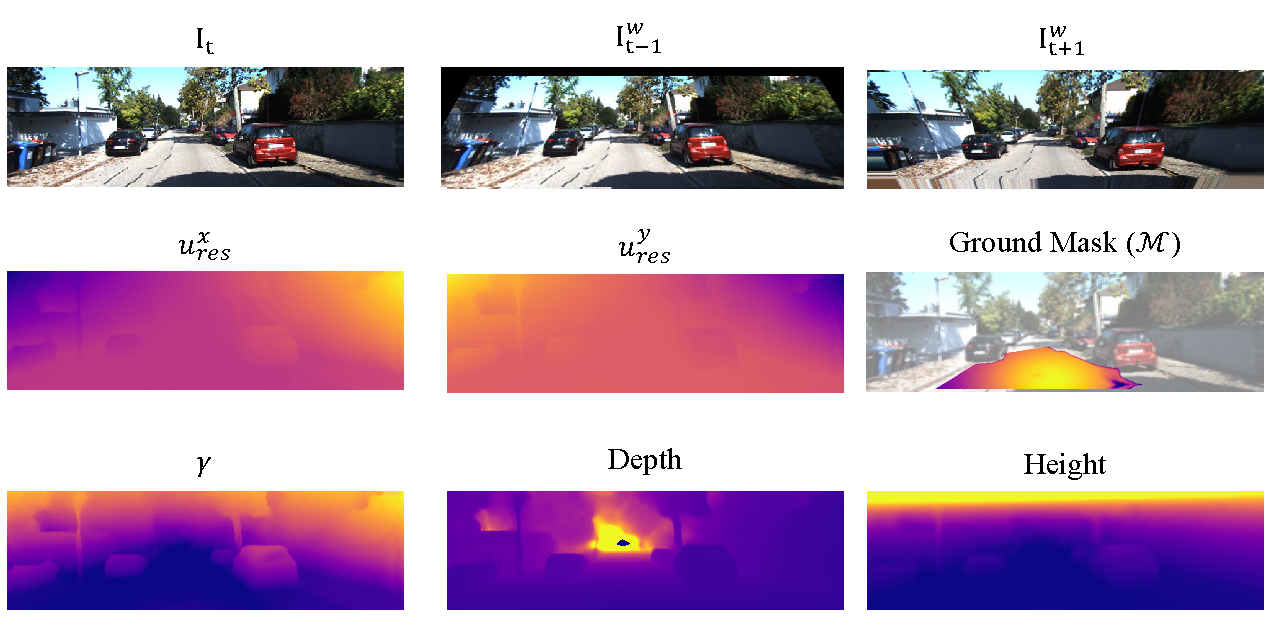

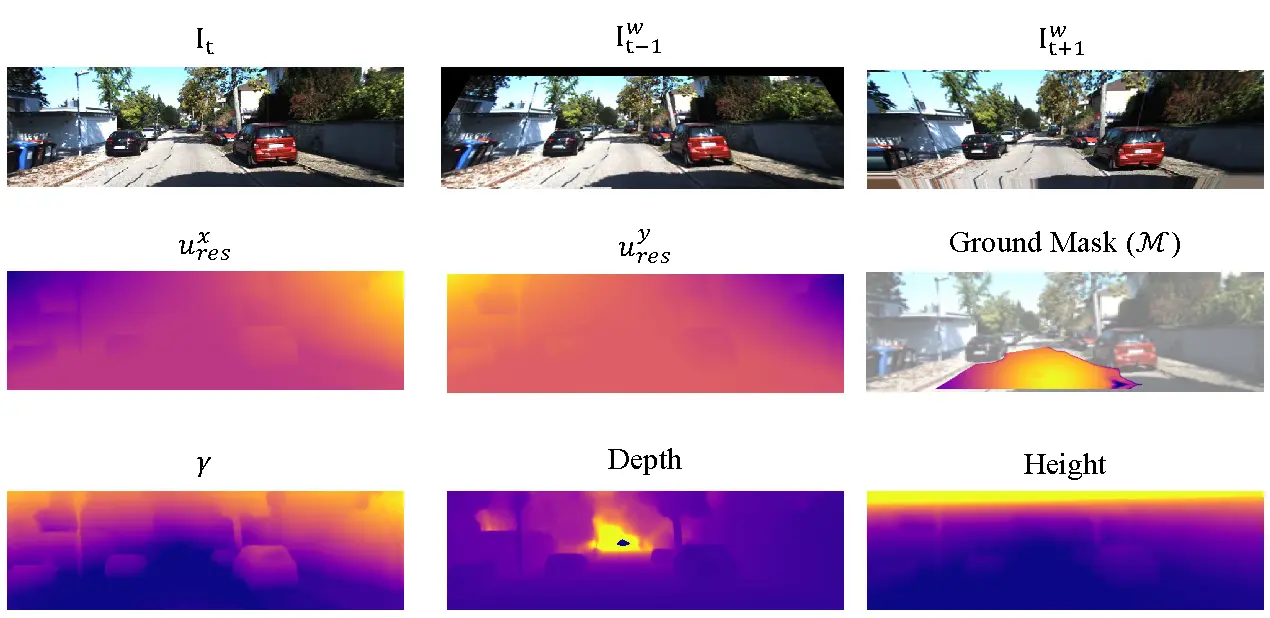

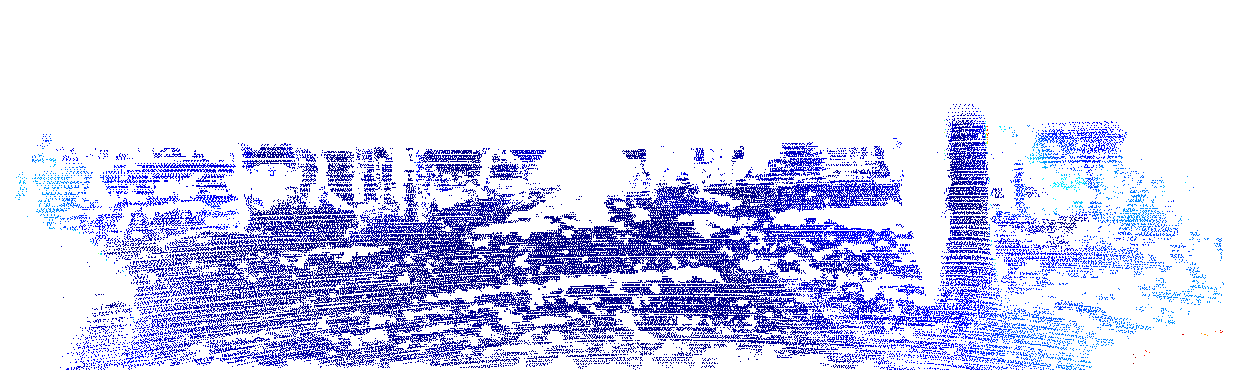

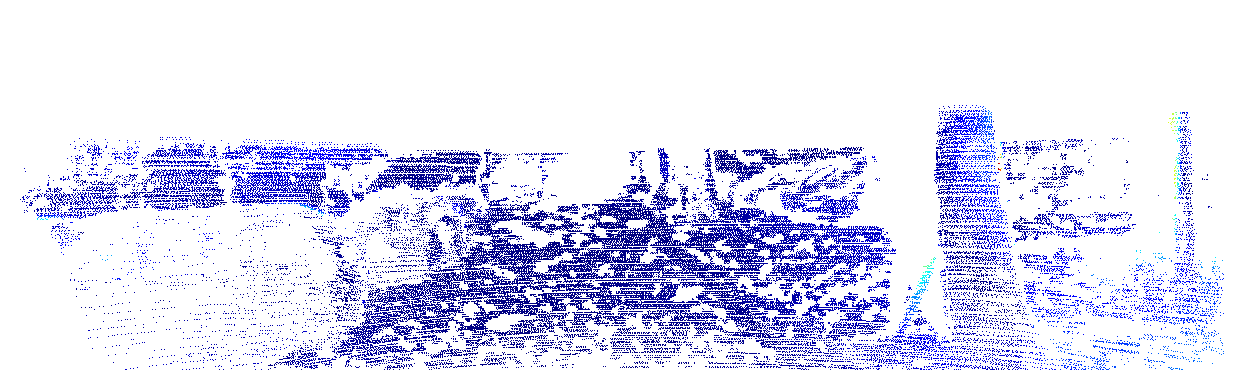

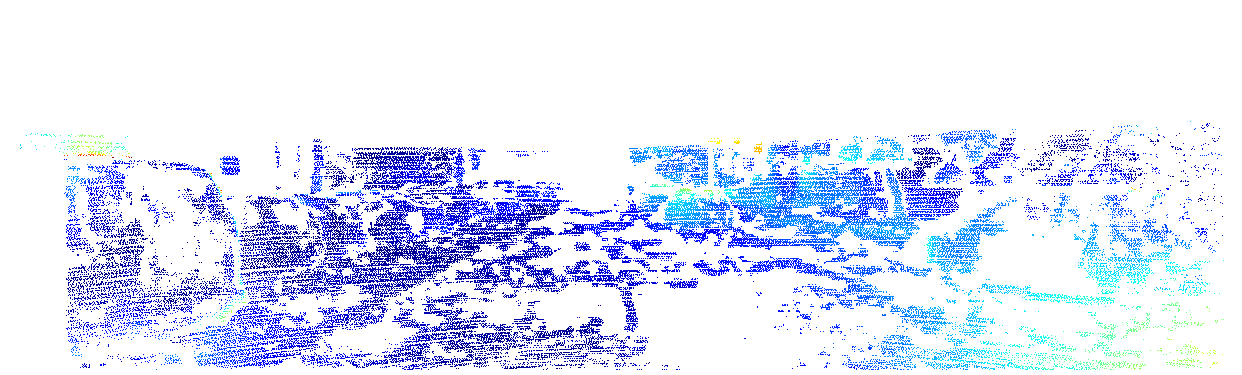

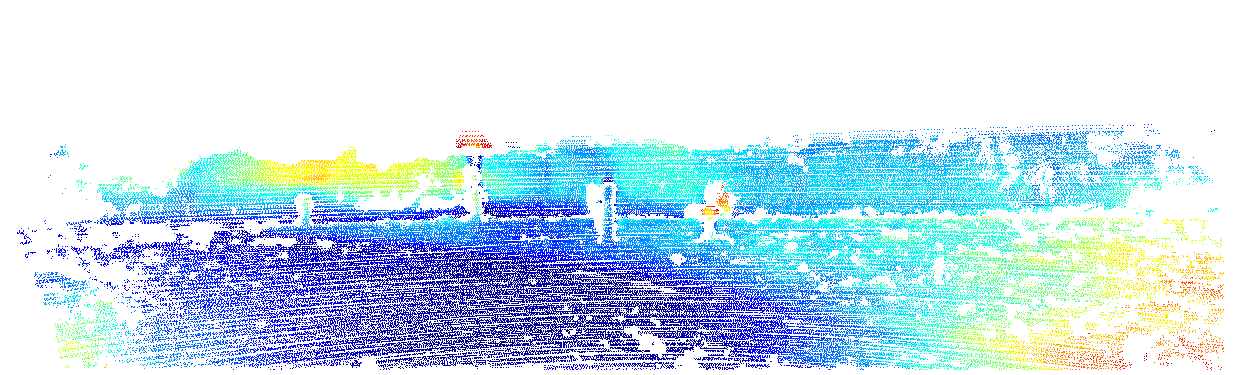

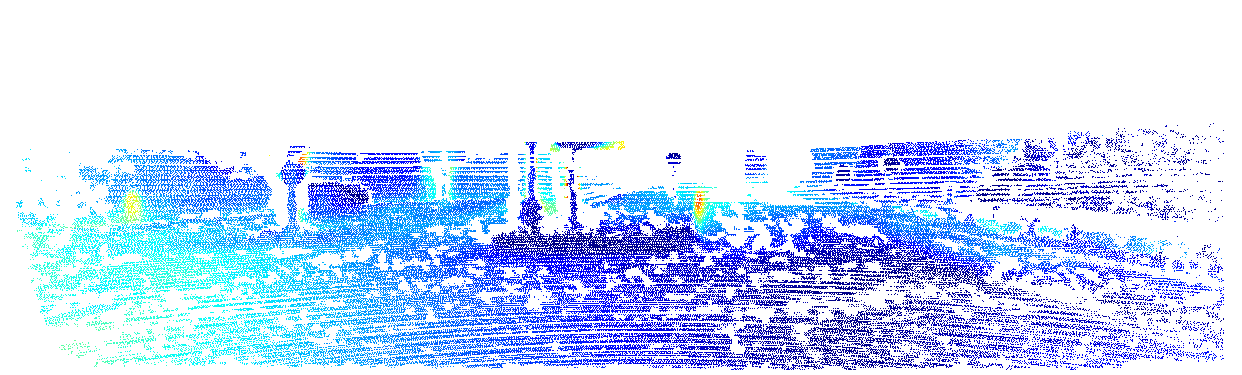

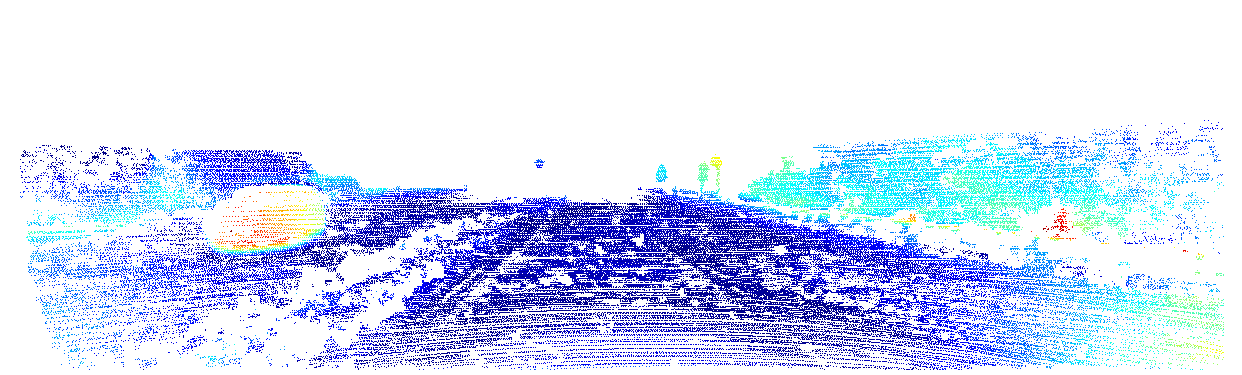

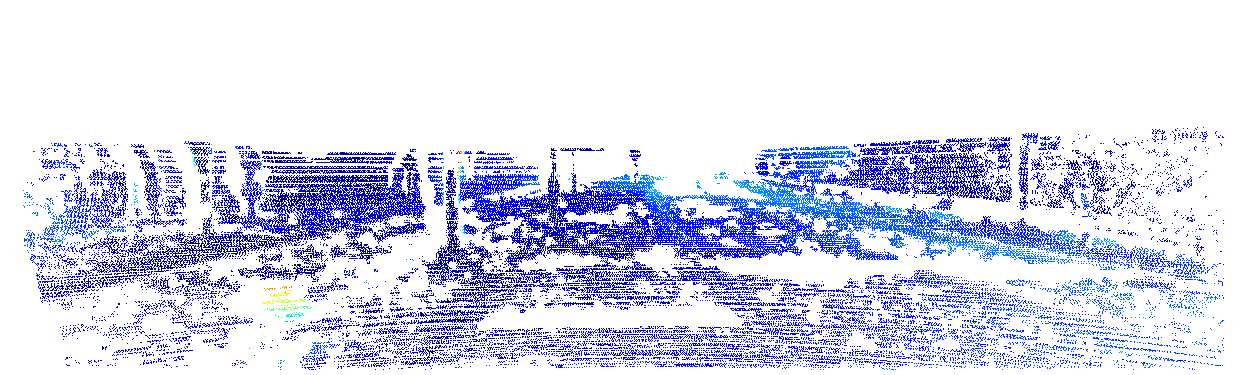

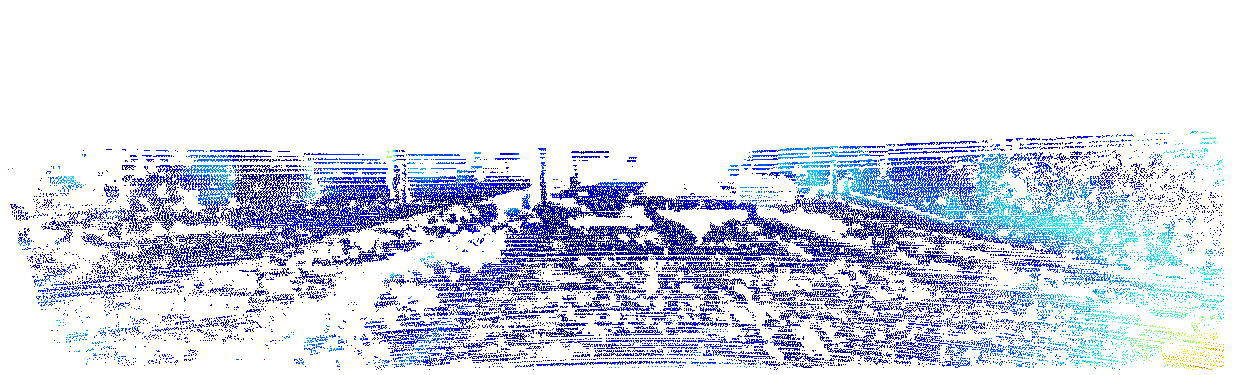

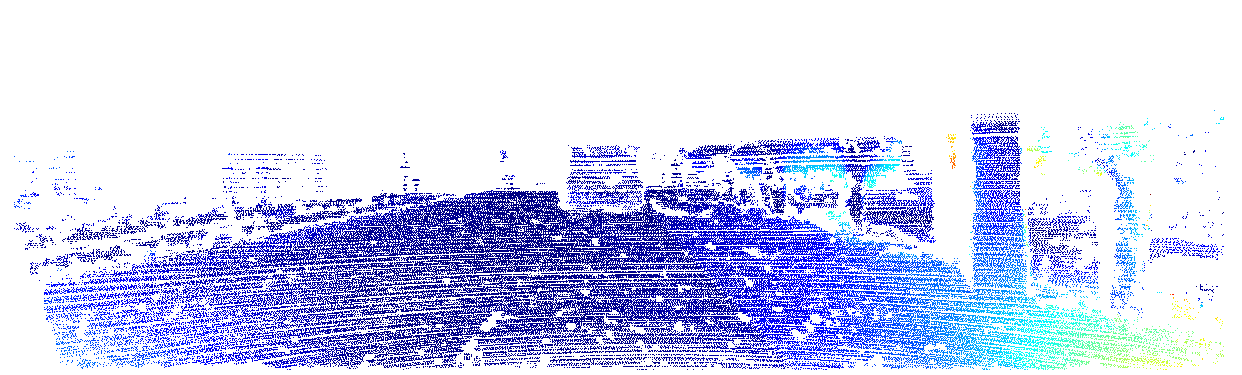

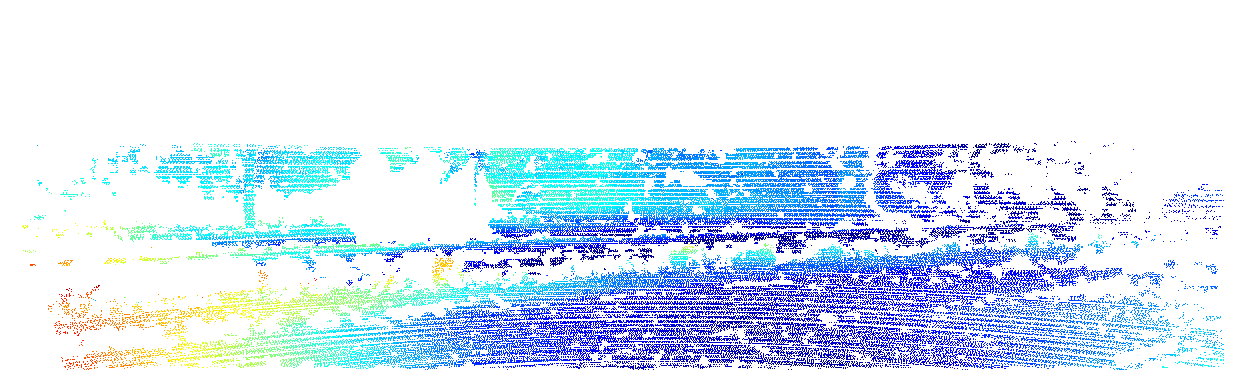

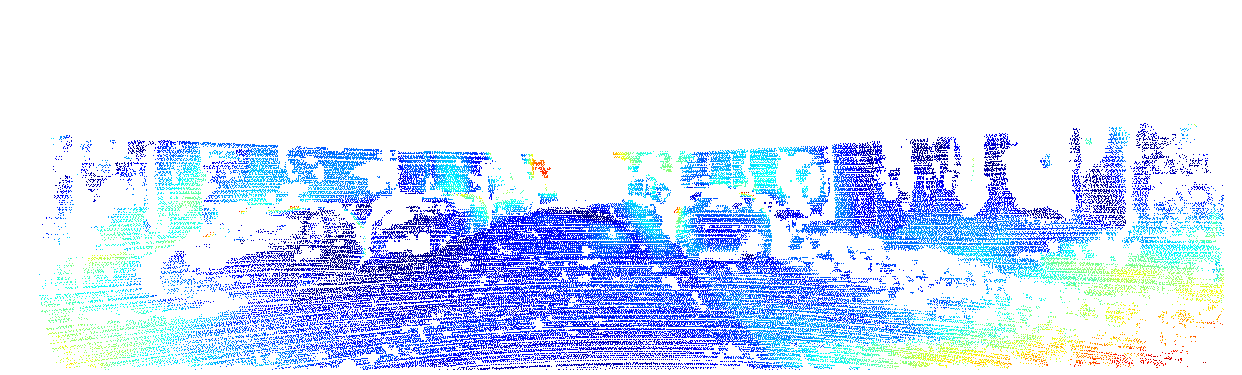

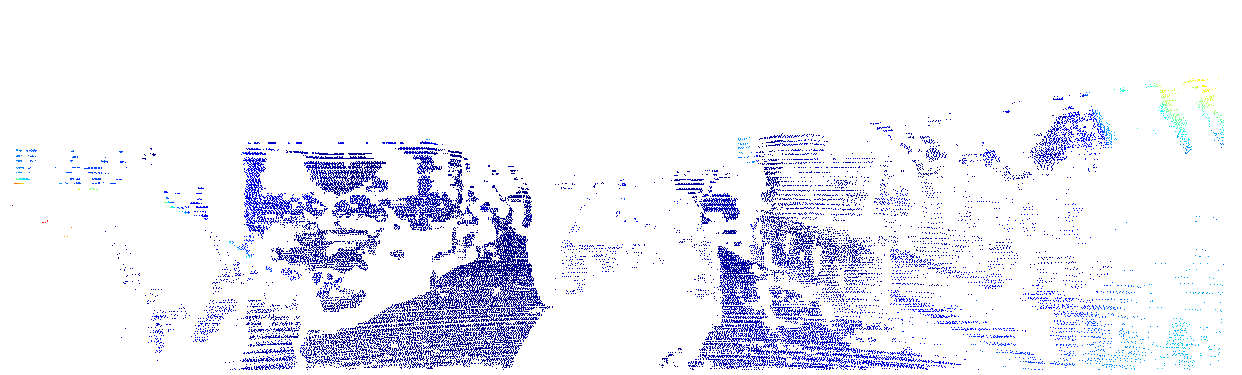

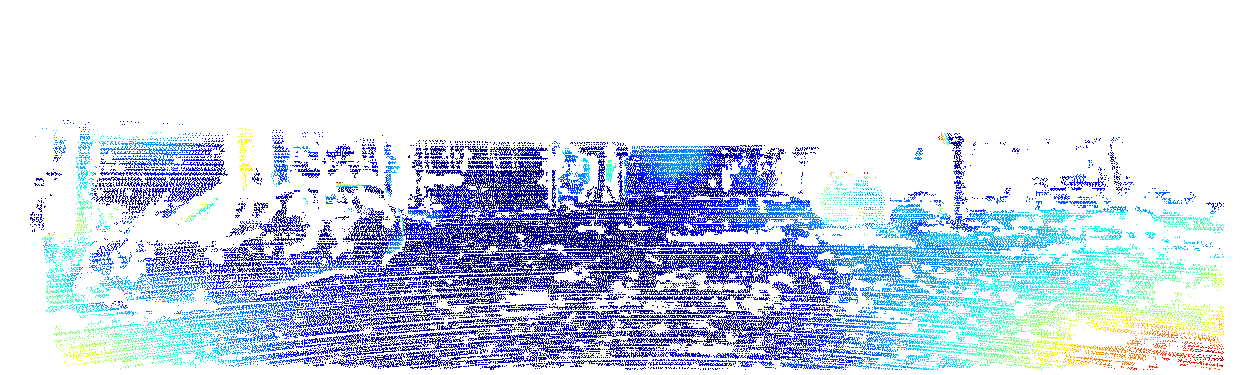

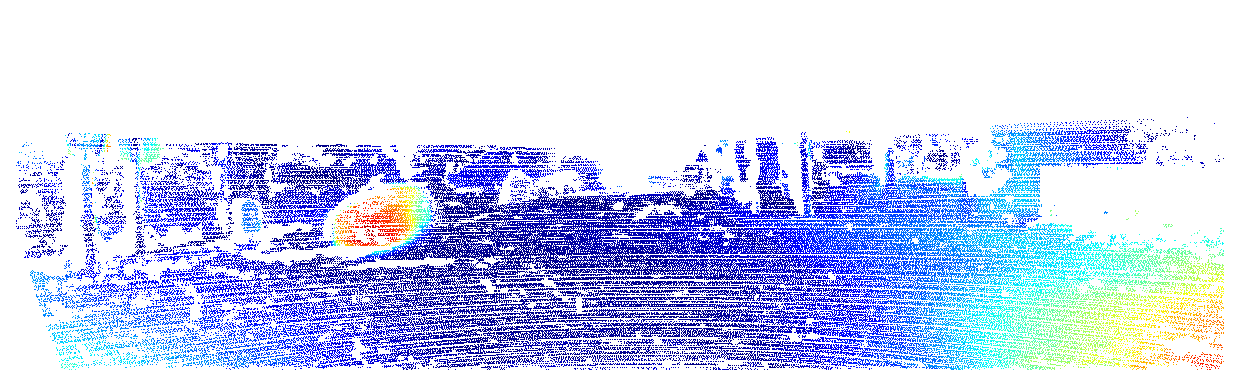

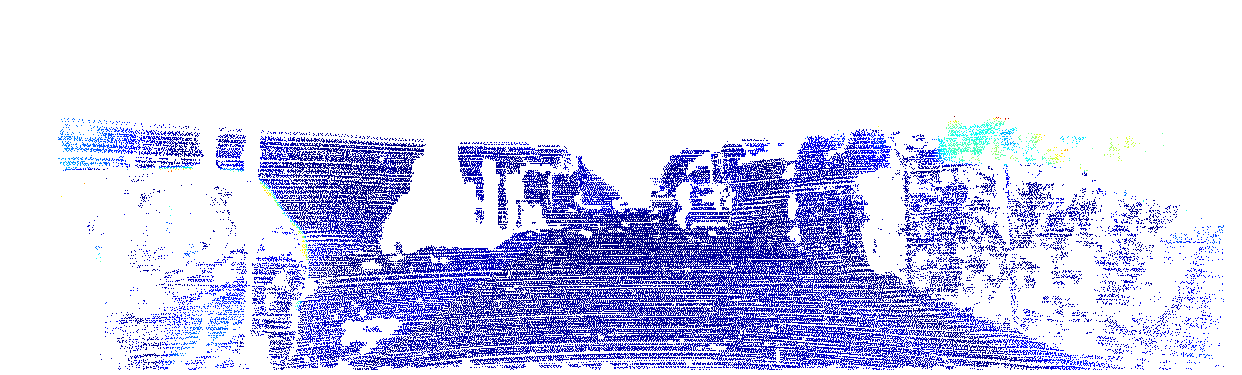

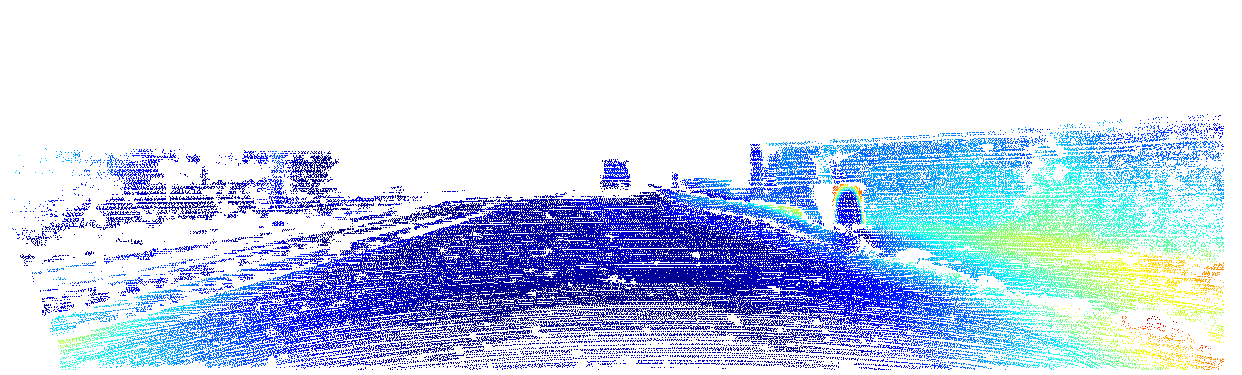

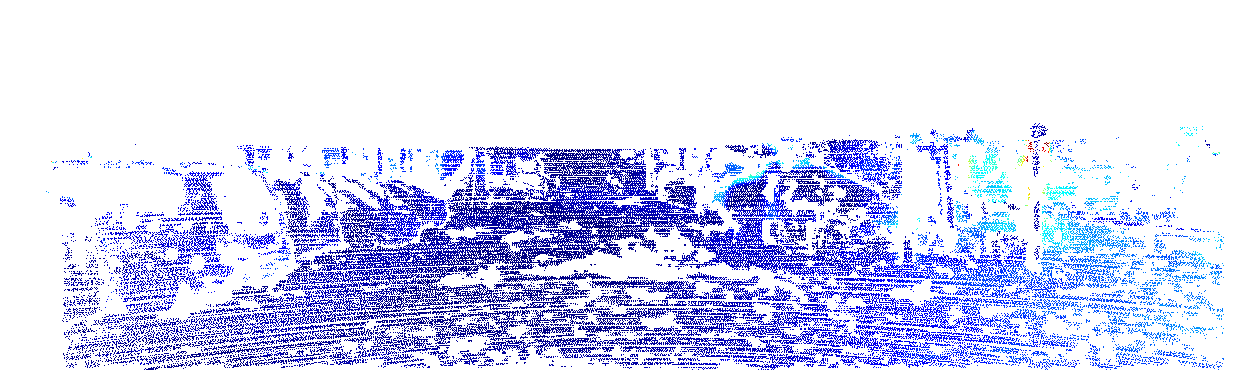

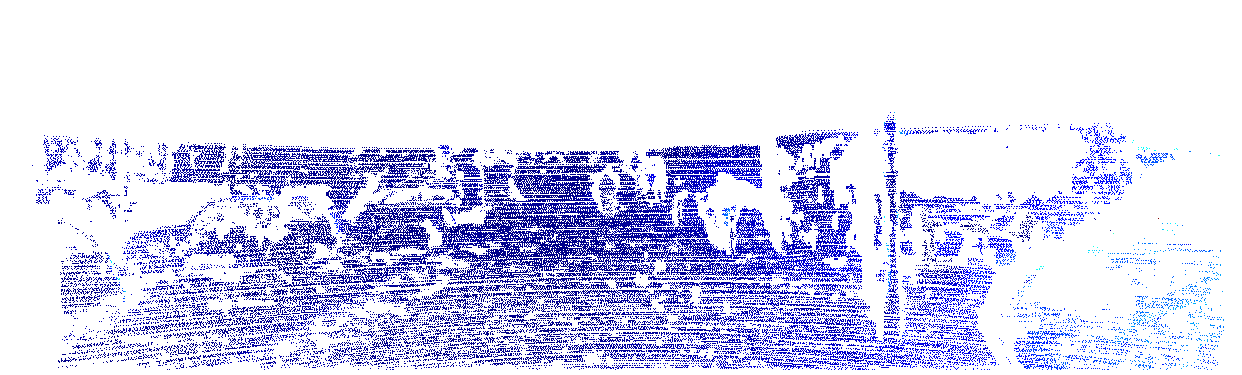

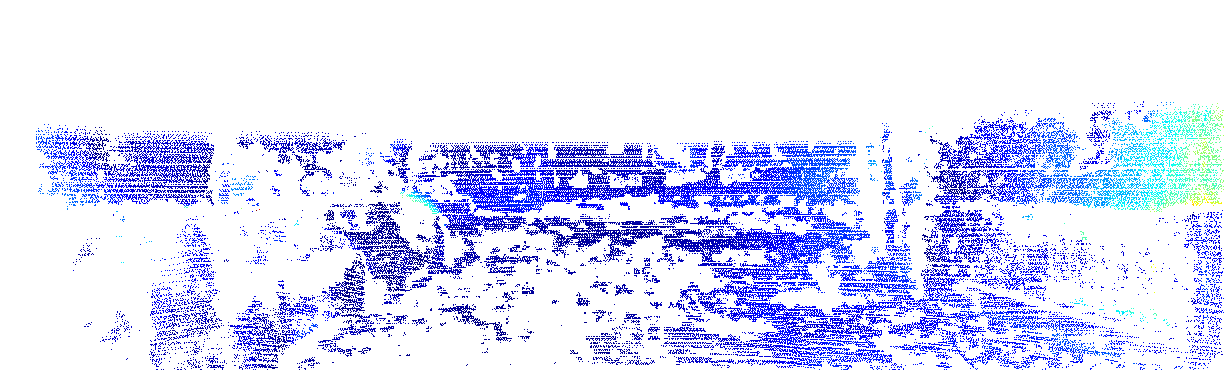

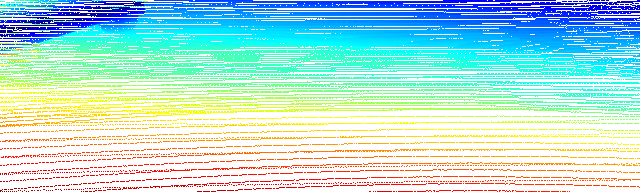

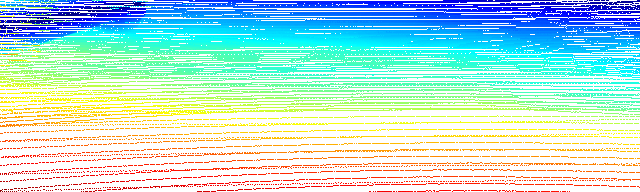

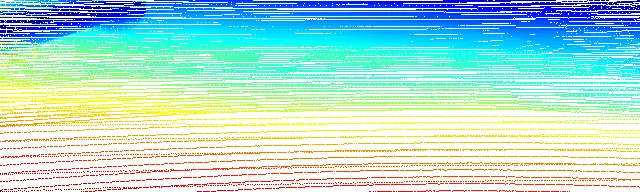

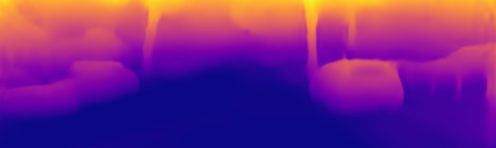

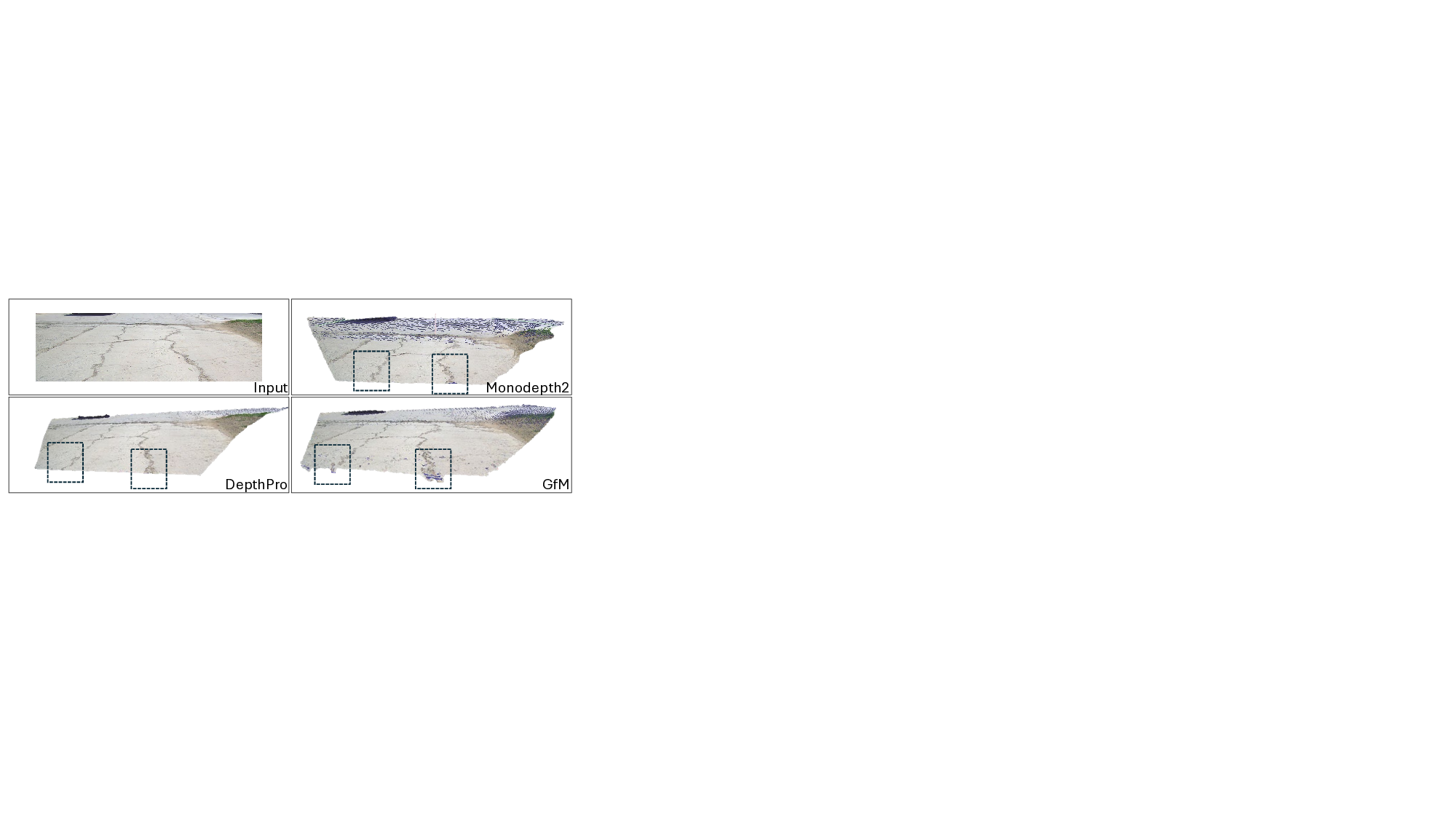

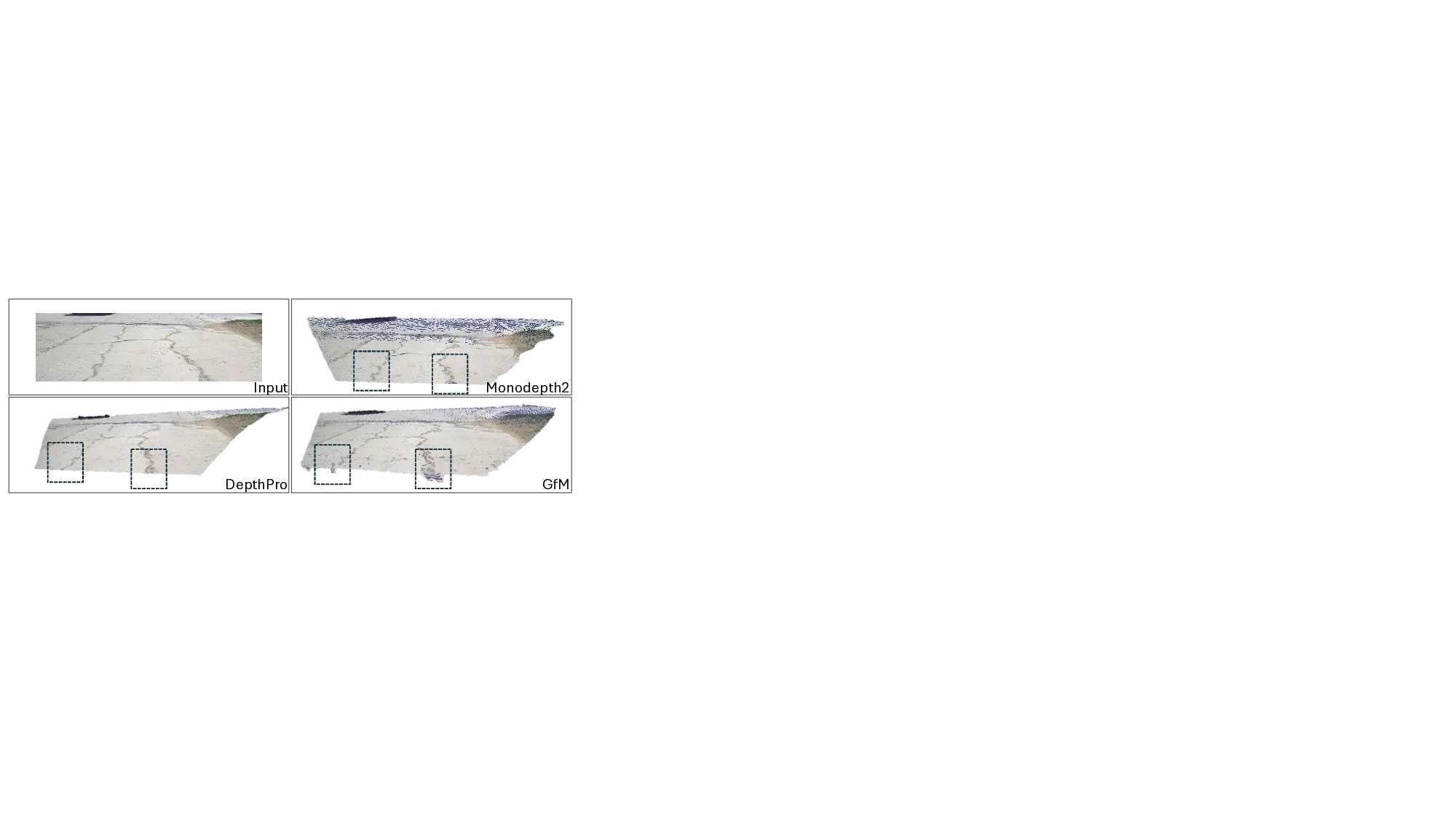

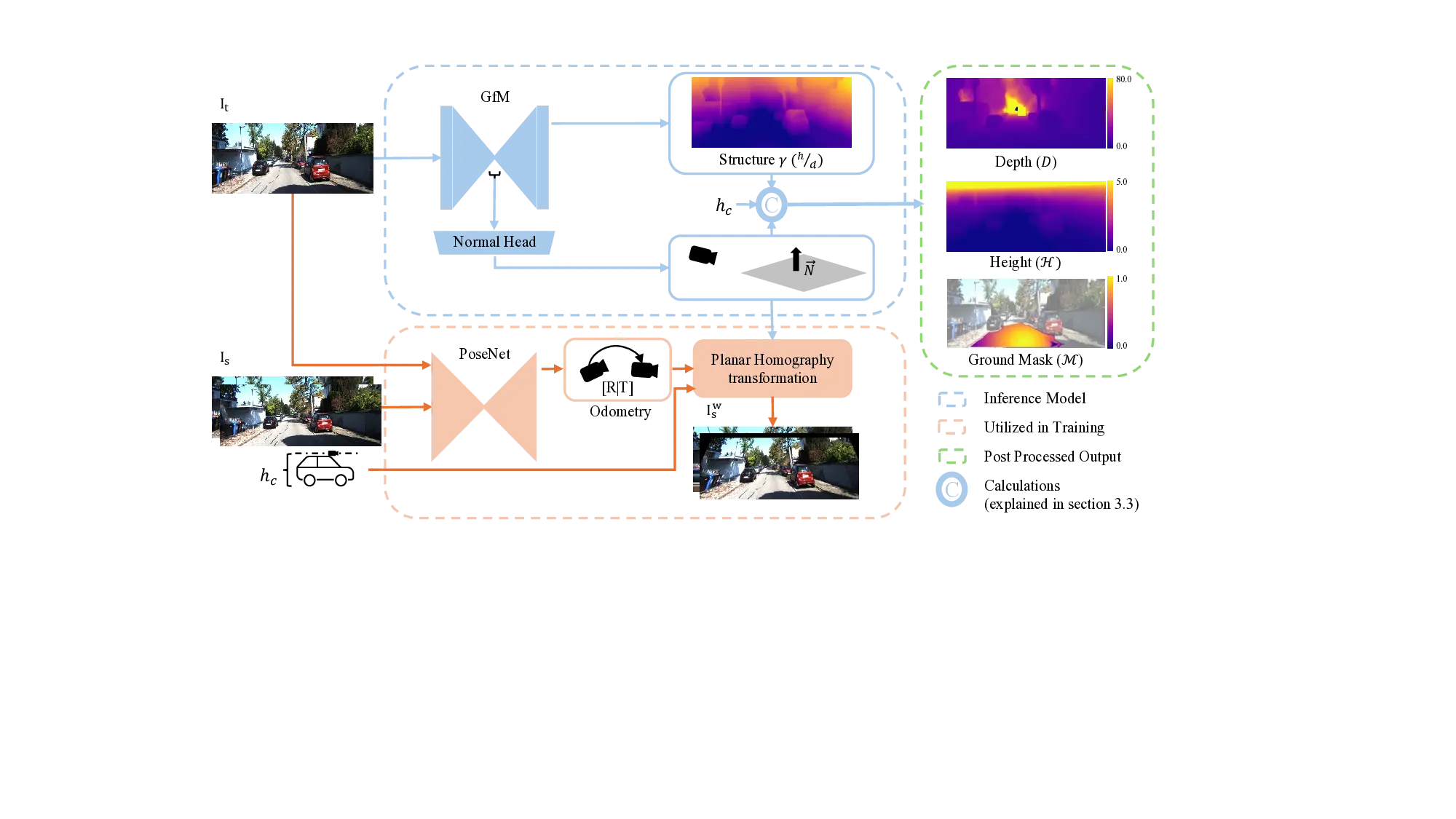

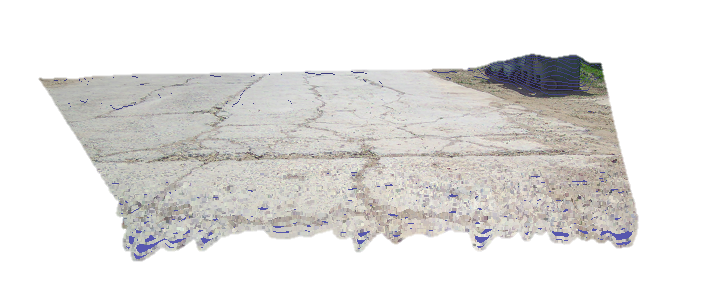

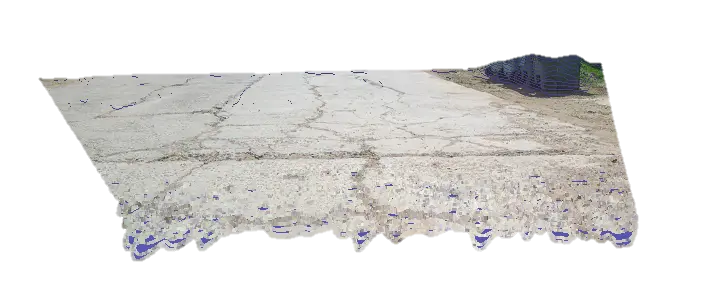

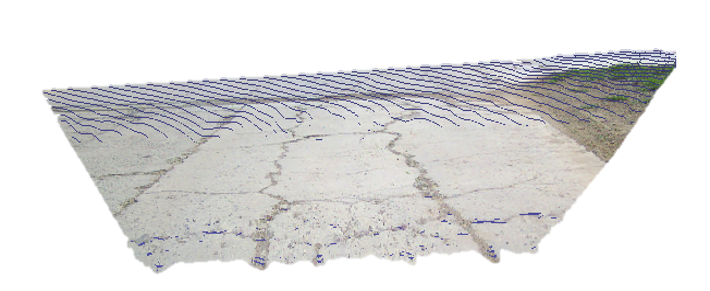

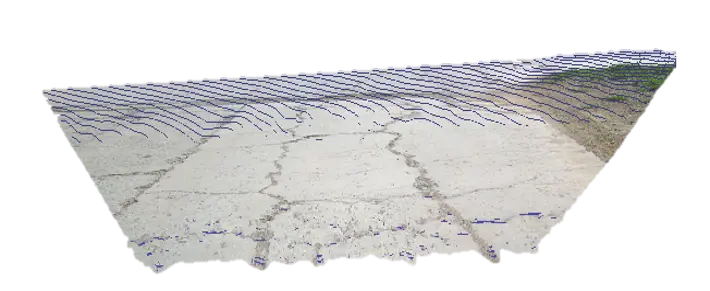

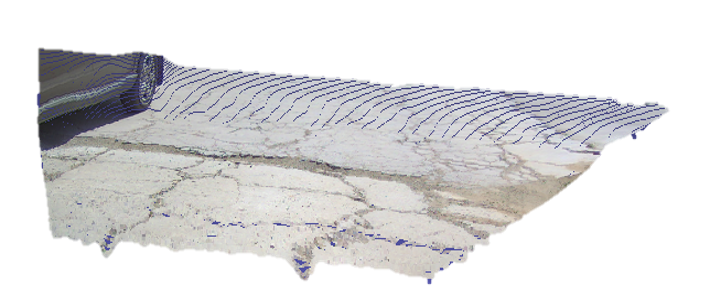

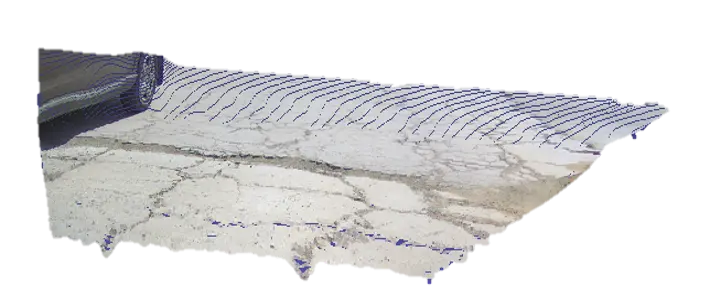

Modern robotics and autonomous vehicles demand scalable 3D perception, but achieving it with a single camera remains challenging [25]. Monocular Geometry Estimation (MGE) reconstructs per-pixel 3D structure from a single image, enabling cost-effective 3D perception without specialized sensors [2]. Specifically, accurate 3D reconstruction of the near-road geometry is crucial for navigation in autonomous driving [17,18], supporting obstacle avoidance and motion planning [34], and it is also vital for off-road and legged robotics, where elevation and local slope guide traversability and footstep planning [23]. However, monocular depth estimation methods, which are commonly used in these domains, struggle to accurately capture road topography [49]. Textureless pavements and low-contrast surfaces often lead to oversmoothing and underestimation of slopes, causing small obstacles and surface irregularities to be missed [1,41,49]. This limitation is critical, as small height variations, such as bumps or road-level changes, can differentiate drivable regions from hazards and negatively impact vehicle dynamics and safety [18,24,29].

[Figure caption, partially recovered: … [9] and RSRD [48], with input images in the top-left.]

Metric foundation depth models [4,19,20,43,44] generalize well across domains and provide strong scene-level predictions. However, they do not explicitly target road-relative quantities such as height above the ground or local slope. Moreover, they often suffer from residual scale drift when deployed in new environments, typically requiring inference-time scale correction. In contrast, self-supervised monocular methods [5,8,12,15,30,32,39,47] attempt to resolve scale ambiguity by incorporating metric anchors such as odometry sensors or ground-plane constraints. While these strategies stabilize global scale, they remain depth-centric, leaving road geometry underconstrained.
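To illustrate what an inference-time scale correction can look like, the sketch below uses per-image median scaling, a common practice in the self-supervised depth literature. The text above does not say which correction the cited models use, so the technique, the function name `median_scale`, and the toy values are all assumptions made for illustration.

```python
import numpy as np

def median_scale(pred_depth, ref_depth, valid_mask=None):
    """Rescale a depth map so its median matches a metric reference.

    Hypothetical helper: median scaling is one common correction,
    not necessarily the one used by the models cited in the text.
    """
    pred = np.asarray(pred_depth, dtype=np.float64)
    ref = np.asarray(ref_depth, dtype=np.float64)
    if valid_mask is None:
        # Use only pixels where both maps hold a positive depth.
        valid_mask = (ref > 0) & (pred > 0)
    scale = np.median(ref[valid_mask]) / np.median(pred[valid_mask])
    return scale * pred, scale

# Toy data: the prediction equals the reference up to a factor of 0.4.
ref = np.array([[2.0, 5.0], [10.0, 0.0]])   # 0.0 marks a pixel without reference depth
pred = 0.4 * np.array([[2.0, 5.0], [10.0, 7.0]])
scaled, s = median_scale(pred, ref)
print(s)        # recovered scale factor
print(scaled)   # prediction rescaled to metric units
```

Note that this kind of correction needs some metric reference at test time, which is the dependency a camera-height-only formulation aims to avoid.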

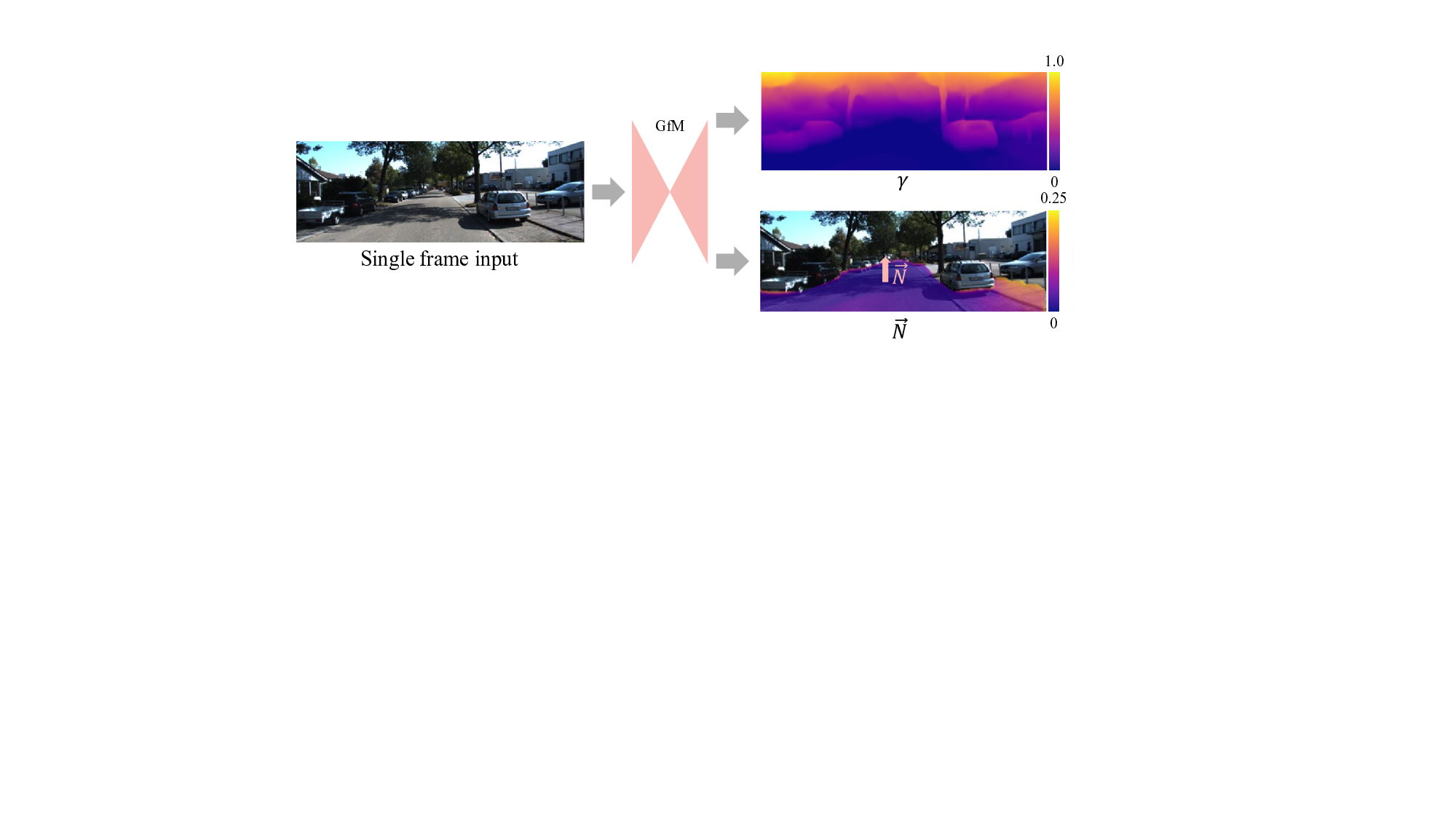

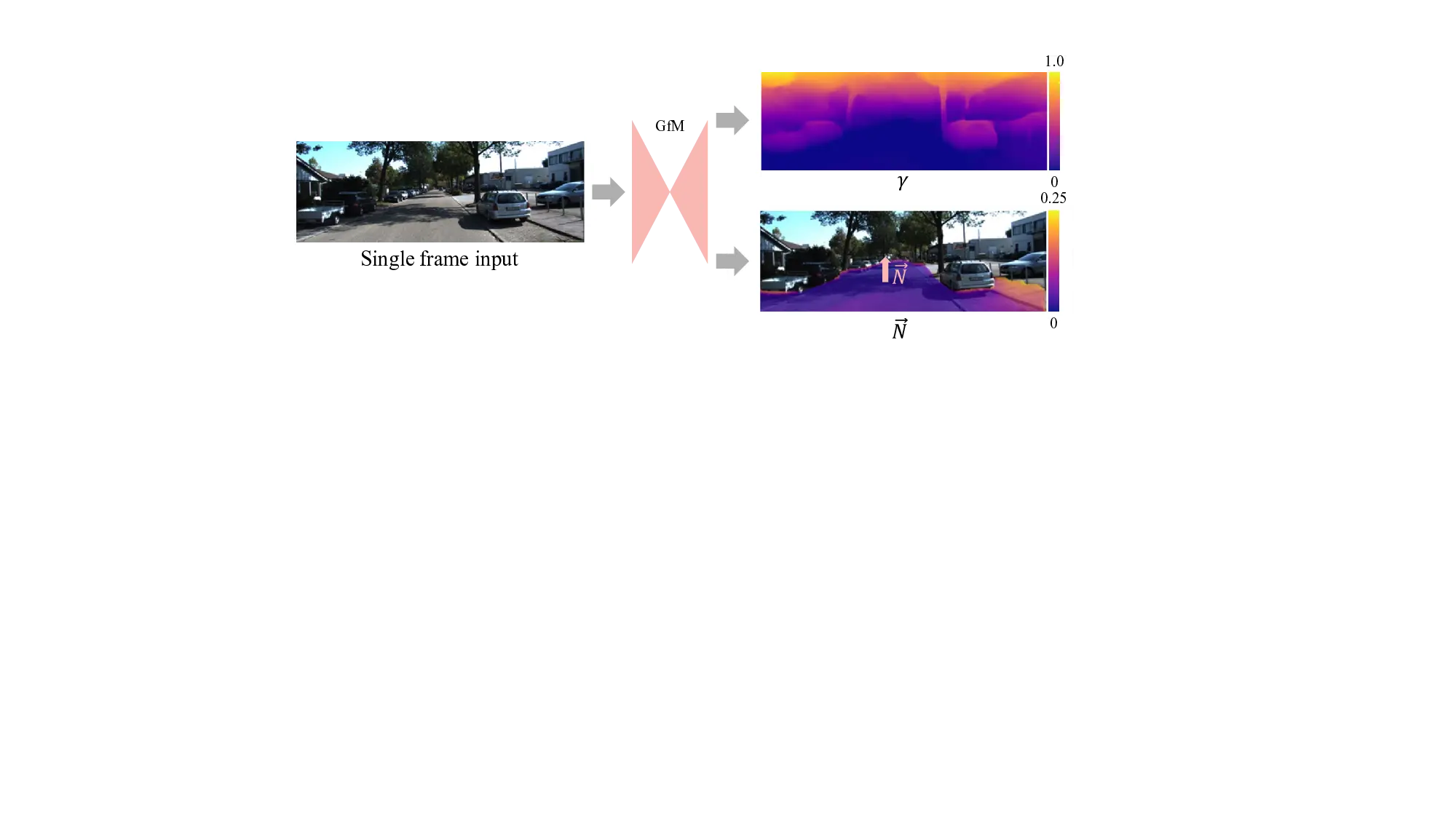

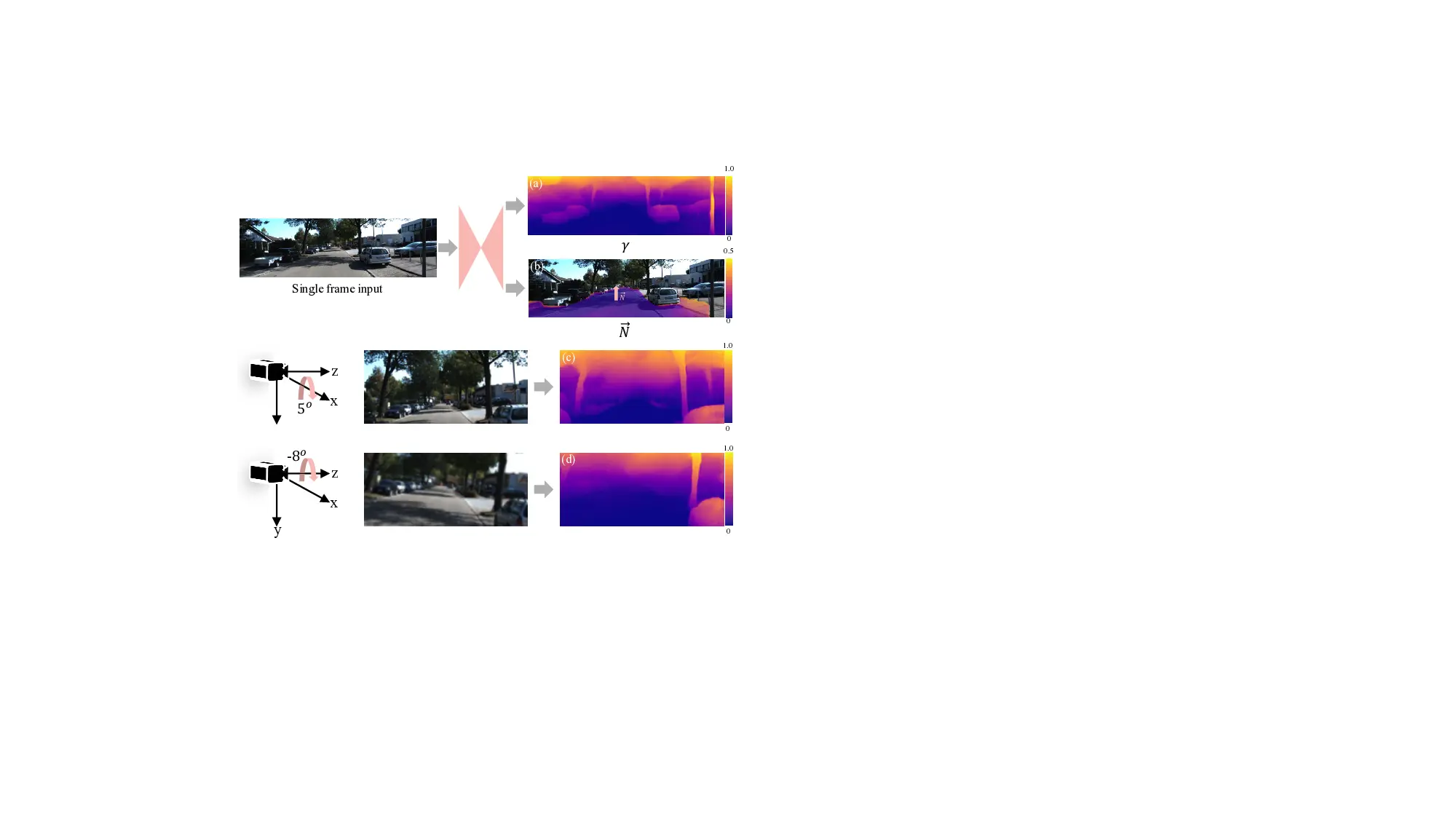

In practice, existing monocular pipelines recover height above the road indirectly via costly post-processing, such as elevation maps [23]. Recent top-down (BEV) approaches [49] model road surfaces explicitly but rely on discretized ground-plane grids and dense ground-truth supervision, limiting resolution and scalability in unlabeled settings. Since single-view depth is projectively ambiguous [26,42], the road-relative height-to-depth ratio 𝛾 = ℎ/𝑑, where ℎ is a point's height above the road plane and 𝑑 is its depth from the camera, offers a complementary representation that is dimensionless and tied to the ground. As shown in Fig. 2, doubling the scale of an object relative to the ground leaves the apparent vertical offset unchanged. From a single image the two metric configurations are indistinguishable, so depth remains scale-ambiguous, whereas in 𝛾 space both configurations take the same dimensionless value, consistently tied to the road. With a known ground plane and camera height, this 𝛾 value converts to absolute height and depth in closed form. Accordingly, we introduce Gamma-from-Mono (GfM), a single-frame approach that reframes monocular geometry around the dominant road plane, mitigating projective ambiguity and reducing scale to a single camera-height parameter. Our key contributions are:
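One way to see how the closed-form conversion can arise is the short derivation below. The notation is an assumption, since this excerpt does not state the paper's own conventions: $K$ is the camera intrinsics matrix, $\mathbf{p}$ a homogeneous pixel, $\mathbf{n}$ the unit road-plane normal in camera coordinates (pointing from the camera toward the road), $h_c$ the camera height, $d$ the depth along the optical axis, and $h$ the point's height above the plane.

$$
\begin{aligned}
\mathbf{X} &= d\,K^{-1}\mathbf{p}, \qquad \mathbf{n}^{\top}\mathbf{X} = h_c \ \text{for points on the road plane},\\
h &= h_c - \mathbf{n}^{\top}\mathbf{X} = h_c - d\,\mathbf{n}^{\top}K^{-1}\mathbf{p},\\
\gamma &= \frac{h}{d} \;\Longrightarrow\; \gamma\,d = h_c - d\,\mathbf{n}^{\top}K^{-1}\mathbf{p}
\;\Longrightarrow\; d = \frac{h_c}{\gamma + \mathbf{n}^{\top}K^{-1}\mathbf{p}}, \qquad h = \gamma\,d .
\end{aligned}
$$

Under these assumptions, scaling the whole scene by a factor $s$ maps $(h, d, h_c)$ to $(sh, sd, sh_c)$ and leaves $\gamma$ unchanged, so the camera height $h_c$ is the only metric quantity the conversion needs. For points on the road itself, $\gamma = 0$ and the expression reduces to the familiar ground-plane depth $d = h_c / (\mathbf{n}^{\top}K^{-1}\mathbf{p})$.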

• We propose a model that directly predicts a road-relative representation, comprising a global road-plane normal and a per-pixel height-to-depth ratio (𝛾), preserving near-road detail.
• To our knowledge, this is the first single-frame, self-supervised method that directly regresses 𝛾 for road-relative geometry, enabling explicit, interpretable estimates of road topography.
• We resolve metric scale from a dimensionless prediction, converting to metric depth using only known camera height and avoiding full extrinsic calibration or test-time fitting.
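The conversion named in the last contribution can be sketched in a few lines of NumPy. This is a minimal illustration under assumed conventions (pinhole intrinsics `K`, a unit plane normal in camera coordinates pointing from the camera toward the road, depth measured along the optical axis), not the authors' implementation. The function name `gamma_to_depth` and all toy values are hypothetical.

```python
import numpy as np

def gamma_to_depth(gamma, normal, K, cam_height):
    """Convert a per-pixel gamma map to metric depth and height.

    Assumed conventions (not taken from the paper):
      gamma      -- (H, W) array of height-to-depth ratios
      normal     -- (3,) unit road normal in camera coordinates,
                    pointing from the camera toward the road
      K          -- (3, 3) pinhole intrinsics
      cam_height -- camera height above the road, in metres
    """
    gamma = np.asarray(gamma, dtype=np.float64)
    H, W = gamma.shape
    u, v = np.meshgrid(np.arange(W), np.arange(H))
    pixels = np.stack([u, v, np.ones_like(u)], axis=-1).astype(np.float64)
    rays = pixels @ np.linalg.inv(K).T        # K^{-1} p for every pixel
    denom = gamma + rays @ normal             # gamma + n^T K^{-1} p
    depth = np.full_like(gamma, np.nan)
    valid = denom > 1e-6                      # rays that do not meet the model stay NaN
    depth[valid] = cam_height / denom[valid]  # d = h_c / (gamma + n^T K^{-1} p)
    height = gamma * depth                    # h = gamma * d
    return depth, height

# Toy setup: 4x4 image, camera y-axis pointing down, flat road 1.5 m below.
K = np.array([[4.0, 0.0, 2.0],
              [0.0, 4.0, 2.0],
              [0.0, 0.0, 1.0]])
normal = np.array([0.0, 1.0, 0.0])
gamma = np.zeros((4, 4))
gamma[3, 2] = 0.05                            # one pixel slightly above the road
depth, height = gamma_to_depth(gamma, normal, K, cam_height=1.5)
print(depth[3])    # bottom image row: road pixels and the raised pixel
print(height[3])
```

In this toy setup the raised pixel comes out closer to the camera than its road neighbours in the same image row, and pixels at or above the horizon are left as NaN because a ray that never meets the plane has no road-relative depth when 𝛾 = 0.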

Most prior work in monocular geometry estimation predicts per-pixel depth [22]. By contrast, the height-to-depth ratio 𝛾 has been a key parameter for multi-view reconstruction via planar parallax [14,27,28]. For example, MonoPP [8] uses 𝛾 only as a training-time scale cue distilled from multi-frame planar-parallax constraints.

Yuan et al. [45], on the other hand, compute per-pixel 𝛾 from multi-frame homography alignment with LiDAR supervision. Both approaches rely on multi-view cues or explicit ground-truth depth, and neither regresses 𝛾 directly from a single image in a self-supervised setting. Beyond these, monocular methods remain depth-centric.
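For readers unfamiliar with the multi-frame cue these methods rely on, the sketch below shows a standard plane-induced homography: after warping one view onto another with the road-plane homography, road points align exactly, while points off the plane leave a residual displacement, which is the signal planar-parallax methods relate to 𝛾. This is a generic textbook construction, not the pipeline of MonoPP or Yuan et al. The conventions (`X2 = R @ X1 + t`, plane `normal @ X1 = plane_dist` in the first view) and all numbers are assumptions.

```python
import numpy as np

def plane_homography(K, R, t, normal, plane_dist):
    """Homography that maps view-1 pixels of plane points to view 2.

    Assumes X2 = R @ X1 + t and that plane points satisfy
    normal @ X1 = plane_dist in view-1 camera coordinates.
    """
    return K @ (R + np.outer(t, normal) / plane_dist) @ np.linalg.inv(K)

def project(K, X):
    """Pinhole projection of a 3D point to pixel coordinates."""
    x = K @ X
    return x[:2] / x[2]

def warp(H, uv):
    """Apply a homography to a single pixel."""
    p = H @ np.array([uv[0], uv[1], 1.0])
    return p[:2] / p[2]

K = np.array([[100.0, 0.0, 50.0],
              [0.0, 100.0, 50.0],
              [0.0, 0.0, 1.0]])
R = np.eye(3)
t = np.array([0.0, 0.0, -1.0])        # camera moves 1 m forward between views
normal = np.array([0.0, 1.0, 0.0])    # camera y-axis points down toward the road
cam_height = 1.5

road_pt = np.array([0.5, 1.5, 8.0])   # lies on the road plane
bump_pt = np.array([0.5, 1.3, 8.0])   # 0.2 m above the road

H = plane_homography(K, R, t, normal, cam_height)
road_residual = np.linalg.norm(warp(H, project(K, road_pt)) - project(K, R @ road_pt + t))
bump_residual = np.linalg.norm(warp(H, project(K, bump_pt)) - project(K, R @ bump_pt + t))
print(road_residual, bump_residual)
```

The road point's residual vanishes up to floating-point error, whereas the raised point keeps a sub-pixel offset after alignment. Extracting this cue requires at least two frames and known relative motion, which is the dependency a single-frame regression of 𝛾 removes.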

Scale ambiguity. Monocular depth estimation (MDE) has advanced greatly, yet models trained solely on monocular images recover depth only up to an unknown scale factor [35,42]. Metric-scale depth, however, is crucial for autonomous driving safety [33]. Recent foundation models such as Metric3D [44], UniDepth [19,20], MoGe [35,36], DepthAnything [42,43], and Depth-Pro [4] include metric-depth variants, yet still exhibit small but persistent scale drift in novel environments. Moreover, these methods are trained on millions of images and use large transformer backbones, making them less suitable for real-time deployment on resource-limited hardware

…(Full text truncated)…

This content is AI-processed based on ArXiv data.