📝 Original Info

- Title: Out-of-the-box: Black-box Causal Attacks on Object Detectors

- ArXiv ID: 2512.03730

- Date: 2025-12-03

- Authors: Melane Navaratnarajah, David A. Kelly, Hana Chockler

📝 Abstract

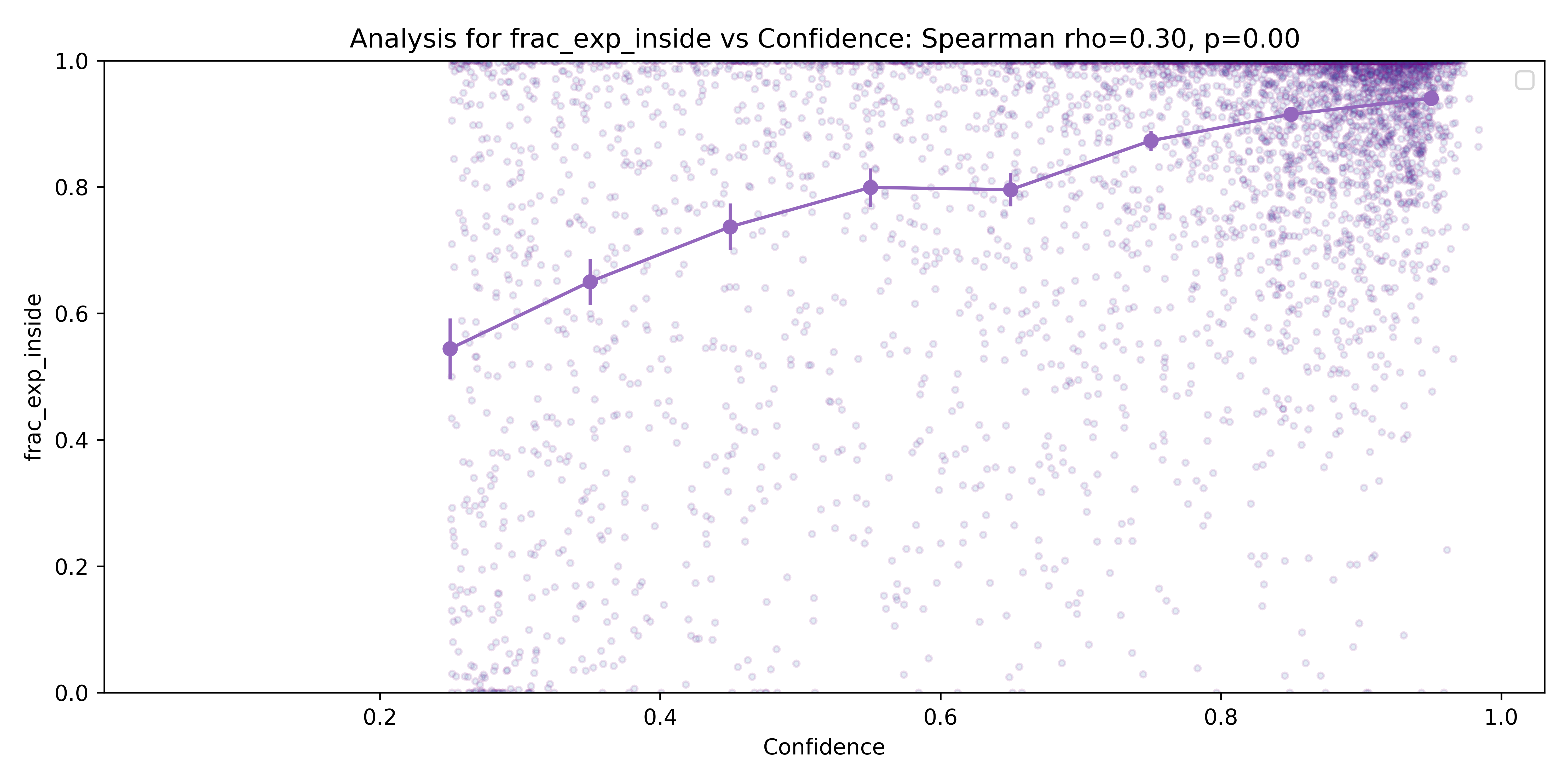

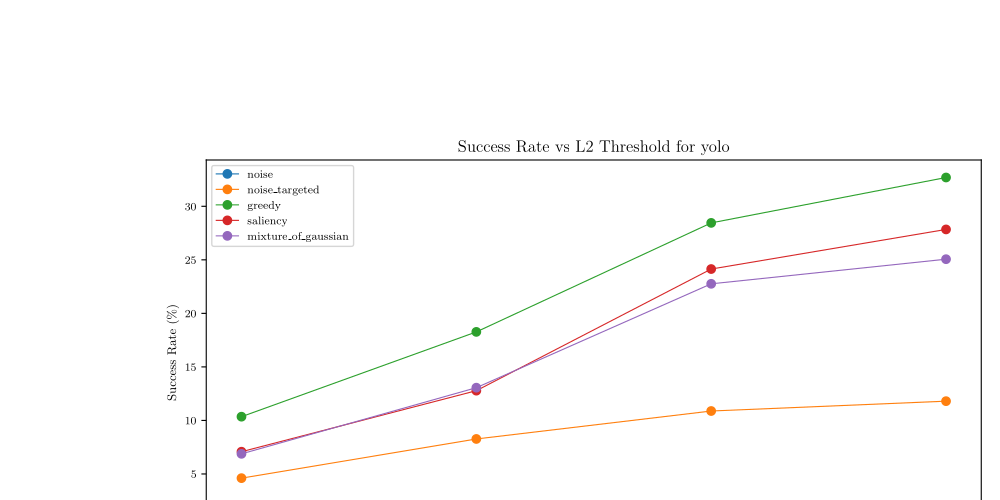

Adversarial perturbations are a useful way to expose vulnerabilities in object detectors. Existing perturbation methods are frequently white-box and architecture specific. More importantly, while they are often successful, it is rarely clear why they work. Insights into the mechanism of this success would allow developers to understand and analyze these attacks, as well as fine-tune the model to prevent them. This paper presents BlackCAtt, a black-box algorithm and a tool, which uses minimal, causally sufficient pixel sets to construct explainable, imperceptible, reproducible, architecture-agnostic attacks on object detectors. BlackCAtt combines causal pixels with bounding boxes produced by object detectors to create adversarial attacks that lead to the loss, modification or addition of a bounding box. BlackCAtt works across different object detectors of different sizes and architectures, treating the detector as a black box. We compare the performance of BlackCAtt with other black-box attack methods and show that identification of causal pixels leads to more precisely targeted and less perceptible attacks. On the COCO test dataset, our approach is 2.7 times better than the baseline in removing a detection, 3.86 times better in changing a detection, and 5.75 times better in triggering new, spurious, detections. The attacks generated by BlackCAtt are very close to the original image, and hence imperceptible, demonstrating the power of causal pixels.


📄 Full Content

Out-of-the-box: Black-box Causal Attacks on Object Detectors

Melane Navaratnarajah∗

King’s College London

Department of Informatics

melane.navaratnarajah@kcl.ac.uk

David A. Kelly∗

King’s College London

Department of Informatics

david.a.kelly@kcl.ac.uk

Hana Chockler

King’s College London

Department of Informatics

hana.chockler@kcl.ac.uk

Abstract

Adversarial perturbations are a useful way to expose vulnerabilities in object detectors. Existing perturbation methods are frequently white-box and architecture specific. More importantly, while they are often successful, it is rarely clear why they work. Insights into the mechanism of this success would allow developers to understand and analyze these attacks, as well as fine-tune the model to prevent them.

This paper presents BlackCAtt, a black-box algorithm and a tool, which uses minimal, causally sufficient pixel sets to construct explainable, imperceptible, reproducible, architecture-agnostic attacks on object detectors. BlackCAtt combines causal pixels with bounding boxes produced by object detectors to create adversarial attacks that lead to the loss, modification or addition of a bounding box. BlackCAtt works across different object detectors of different sizes and architectures, treating the detector as a black box.

We compare the performance of BlackCAtt with other black-box attack methods and show that identification of causal pixels leads to more precisely targeted and less perceptible attacks. On the COCO test dataset, our approach is 2.7 times better than the baseline in removing a detection, 3.86 times better in changing a detection, and 5.75 times better in triggering new, spurious, detections. The attacks generated by BlackCAtt are very close to the original image, and hence imperceptible, demonstrating the power of causal pixels.

1. Introduction

Picture yourself in a self-driving car when you suddenly see a dog in the road in front of you. The car has detected it as well, via its object detector. Then, for no apparent reason, the dog is no longer detected: its bounding box has vanished. The dog is still there, you can see it, but to the car it has become invisible. You have to intervene and apply the brakes to avoid an accident. Why did the dog vanish?

∗These authors contributed equally to this work.

Object detectors (OD) are known to be vulnerable to both accidental and adversarial perturbations [33]. In fact, image classification models in general are quite easy to attack [31, 44]. What is harder to explain is what causes the attack to work. Generic attacks, such as global Gaussian noise, are reproducible and demonstrate the vulnerability of the models, but do not reveal the causal relationship between pixel-level perturbations and failed object detections.
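To make the contrast concrete, here is a minimal sketch of what a global Gaussian noise perturbation looks like. It is an illustration only, not the baseline implementation used in the paper: the function names `add_global_gaussian_noise` and `changed_fraction`, the grayscale list-of-rows image format, and the `sigma` and `seed` parameters are all assumptions made for this example.

```python
import random


def add_global_gaussian_noise(image, sigma, seed=0):
    """Return a noisy copy of a grayscale image (list of rows, values in 0..255).

    Hypothetical helper: every pixel receives independent Gaussian noise,
    so the perturbation is global by construction.
    """
    rng = random.Random(seed)  # seeded so the perturbation is reproducible
    noisy = []
    for row in image:
        noisy.append(
            [min(255.0, max(0.0, px + rng.gauss(0.0, sigma))) for px in row]
        )
    return noisy


def changed_fraction(original, perturbed):
    """Fraction of pixels whose value differs between the two images."""
    total = sum(len(row) for row in original)
    changed = sum(
        1
        for row_a, row_b in zip(original, perturbed)
        for a, b in zip(row_a, row_b)
        if a != b
    )
    return changed / total
```

Because essentially every pixel changes, a successful attack of this kind says nothing about which pixels the detector actually depended on.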

There is growing interest in exploring adversarial attacks on object detectors using eXplainable AI (XAI) techniques [47, 48]. These approaches are mostly white-box methods: they need access to OD hidden layers, which is an unnatural, and generous, attack model. Moreover, saliency maps produced from the hidden layers are well known to be noisy, sensitive to input perturbations and not naturally interpretable [40, 49].

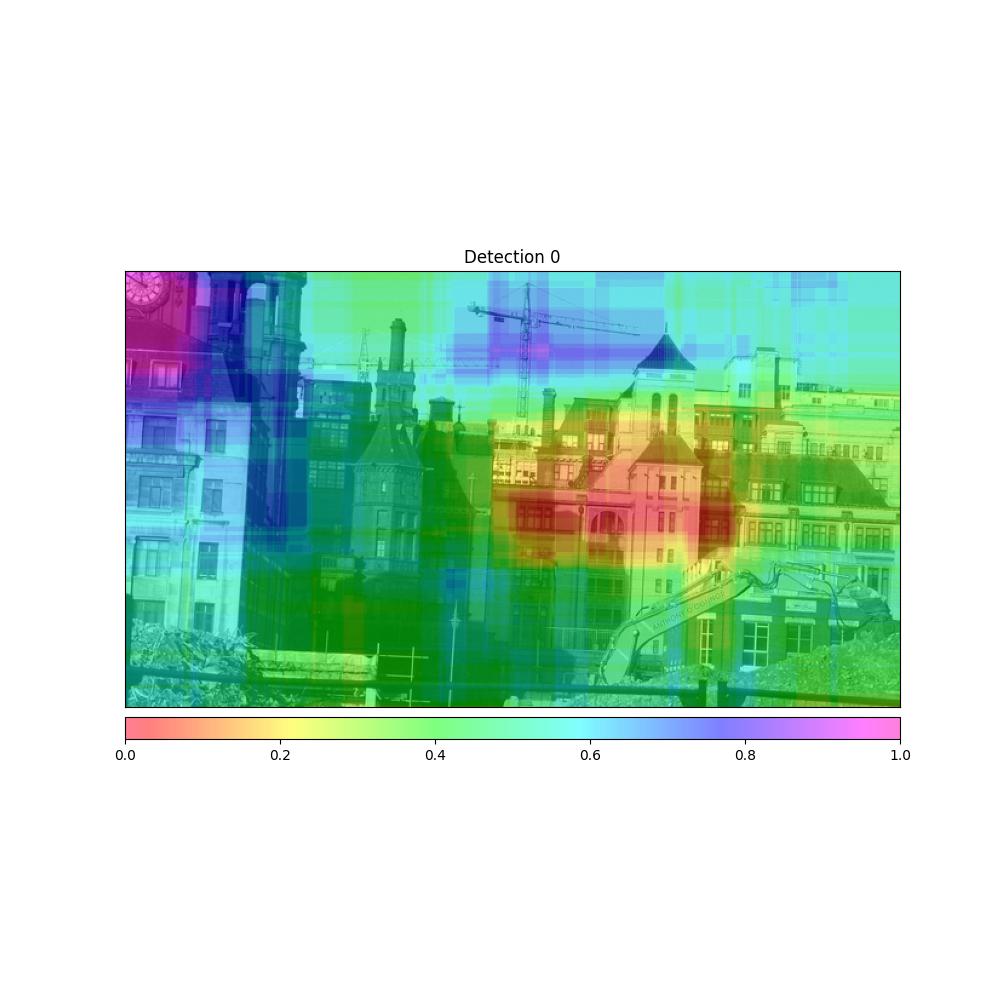

In this paper, we present BlackCAtt (Black-box Causal Attacks), a black-box, causal approach to generating adversarial attacks on object detectors. BlackCAtt discovers minimal, sufficient pixel sets (MSPSs) for a detected object using ReX [7]. These pixels, by themselves, are enough to cause the required detection [6, 15]. BlackCAtt uses these pixels to generate low-distortion attacks that remove, alter, or introduce detections. BlackCAtt attacks the causes of the classification, rather than random pixels or the entire image.
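The notion of a sufficient pixel set can be illustrated with a toy sketch. The code below is not ReX and not BlackCAtt's algorithm: it swaps in a naive one-pass greedy reduction, and `toy_detector`, `keep_only`, `is_sufficient`, `greedy_minimal_sufficient_set`, `perturb`, and the masking `baseline` value are all hypothetical names chosen for illustration. The detector is queried only through its yes/no output, which is the black-box setting described above.

```python
def toy_detector(img):
    """Hypothetical black box: 'detects' when two specific pixels are bright."""
    return img[0][0] > 100 and img[2][2] > 100


def keep_only(image, pixels, baseline=0):
    """Copy of the image with every pixel outside `pixels` set to `baseline`."""
    return [
        [px if (r, c) in pixels else baseline for c, px in enumerate(row)]
        for r, row in enumerate(image)
    ]


def is_sufficient(detector, image, pixels, baseline=0):
    """A pixel set is sufficient if it alone still triggers the detection."""
    return detector(keep_only(image, pixels, baseline))


def greedy_minimal_sufficient_set(detector, image, baseline=0):
    """Naive stand-in for MSPS discovery: drop pixels while detection survives.

    One pass in raster order; the result is subset-minimal only for
    monotone detectors such as the toy one above.
    """
    pixels = {(r, c) for r, row in enumerate(image) for c in range(len(row))}
    if not is_sufficient(detector, image, pixels, baseline):
        return set()
    for p in sorted(pixels):
        candidate = pixels - {p}
        if is_sufficient(detector, image, candidate, baseline):
            pixels = candidate
    return pixels


def perturb(image, pixels, value=0):
    """Copy of the image with only the chosen pixels overwritten."""
    return [
        [value if (r, c) in pixels else px for c, px in enumerate(row)]
        for r, row in enumerate(image)
    ]
```

On a 3x3 image of uniform brightness 200, the greedy pass keeps only the two pixels the toy detector reads, and overwriting a single one of them with `perturb` is enough to suppress the detection. This mirrors, in miniature, the idea of attacking the cause of a detection rather than the whole image.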

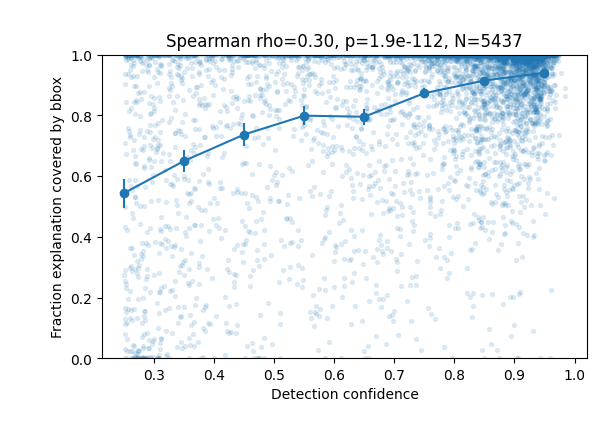

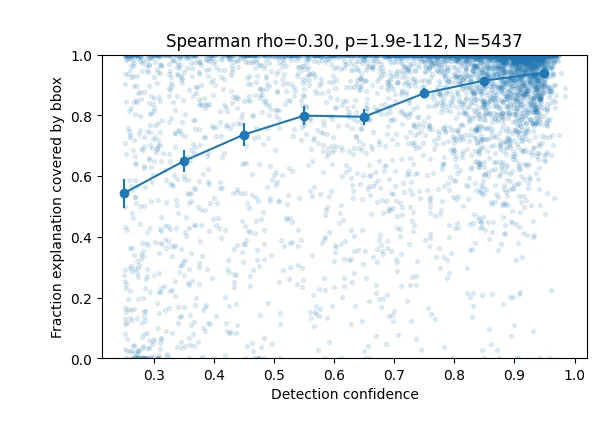

One might expect that all MSPSs would be contained within the bounding box. One of the most surprising results in this paper is that MSPSs are, in fact, frequently either fully outside the OD bounding box or only partially contained within it. We exploit this phenomenon, showing that perturbing causal pixels outside the box often makes the box disappear (Section 6). This happens across different detector architectures (single-stage, two-stage, and transformer-based). We also compare the accuracy and precision of BlackCAtt’s native MSPSs by applying our extraction and perturbation techniques to another popular black-box saliency tool, DRISE, and quantify attack success with a number of measures, including perceptual distortion [50].
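How much of an MSPS lies outside the bounding box is easy to measure once both are available. The sketch below is an illustrative assumption, not the paper's code: the `(top, left, bottom, right)` inclusive box convention, the `(row, col)` pixel coordinates, the Chebyshev distance, and the function names are all hypothetical. The ordering helper reflects the outside-first strategy described in the Figure 1 caption, where perturbation starts with causal pixels outside the box and works inwards.

```python
def distance_outside_box(pixel, box):
    """Chebyshev distance from a (row, col) pixel to an inclusive box.

    `box` is (top, left, bottom, right); pixels inside the box get 0.
    """
    r, c = pixel
    top, left, bottom, right = box
    dr = max(top - r, 0, r - bottom)
    dc = max(left - c, 0, c - right)
    return max(dr, dc)


def fraction_outside(pixel_set, box):
    """Share of the pixel set that falls outside the bounding box."""
    if not pixel_set:
        return 0.0
    outside = sum(1 for p in pixel_set if distance_outside_box(p, box) > 0)
    return outside / len(pixel_set)


def outside_in_order(pixel_set, box):
    """Pixels sorted from farthest outside the box to inside it.

    Ties are broken by coordinate so the order is deterministic.
    """
    return sorted(pixel_set, key=lambda p: (-distance_outside_box(p, box), p))
```

A fraction of 1.0 would correspond to an MSPS fully outside the box, and any value strictly between 0 and 1 to one that straddles the box boundary.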


arXiv:2512.03730v1 [cs.CV] 3 Dec 2025

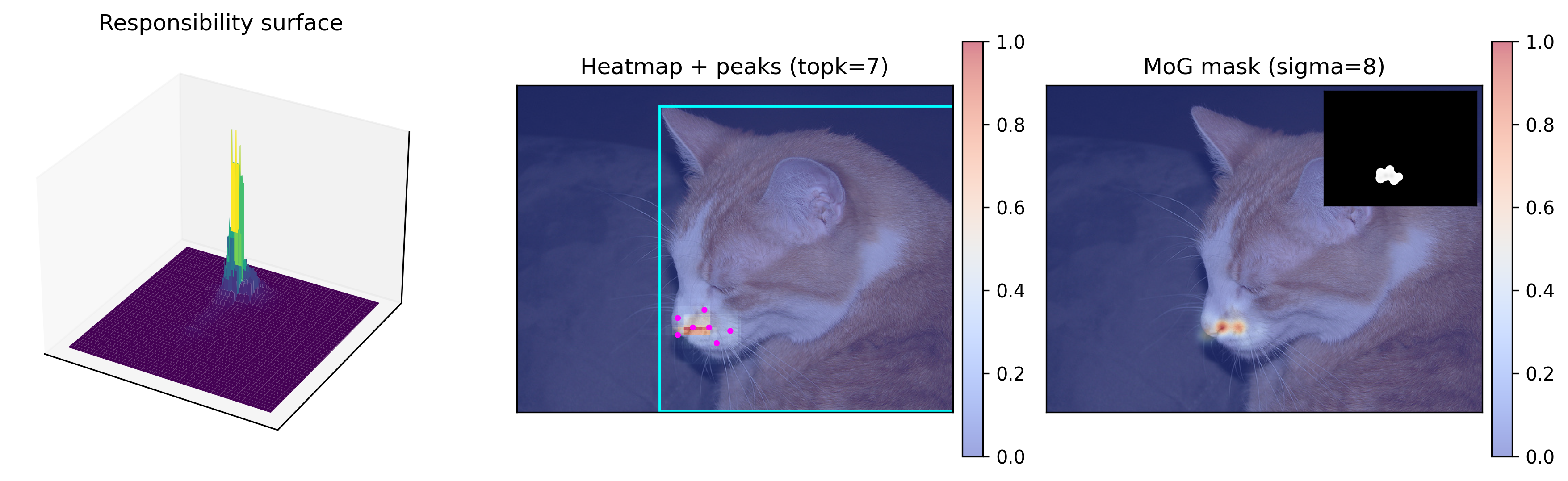

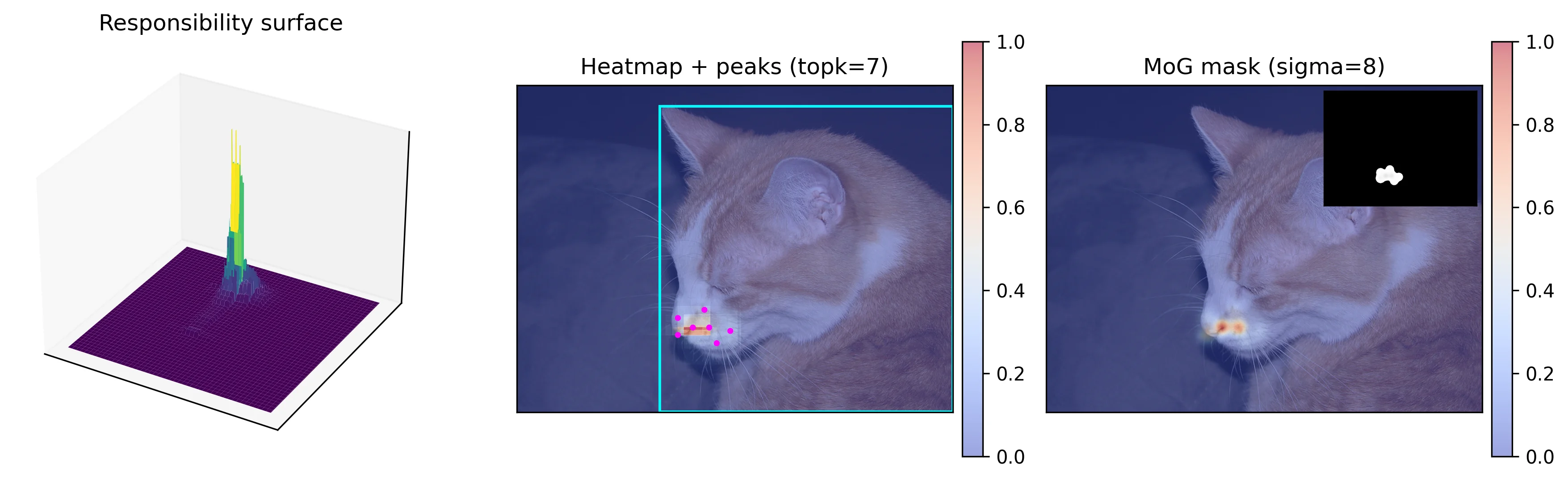

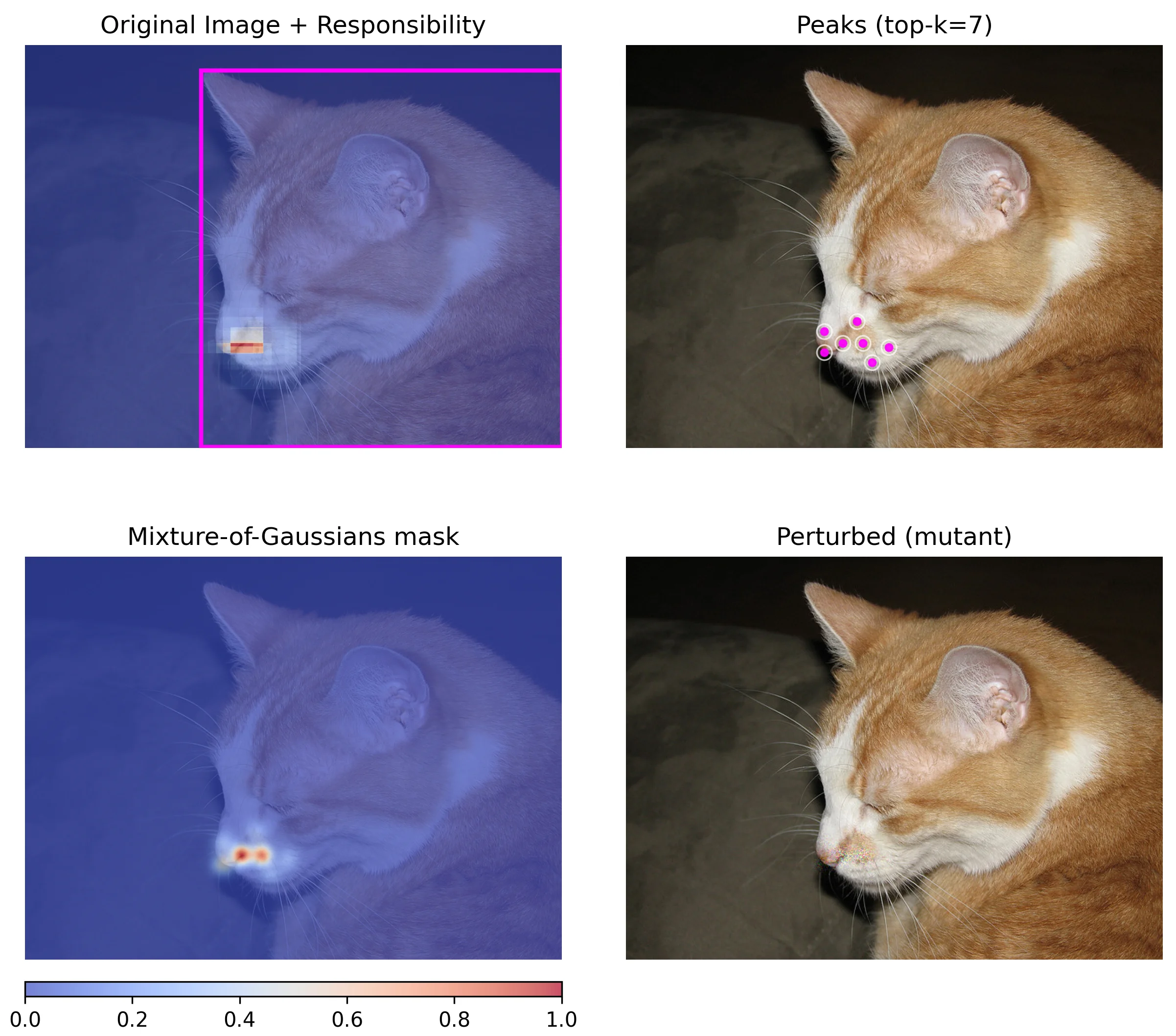

(a) Cat and its bounding box

(b) Bounding box and MSPS

(c) No cat 1: blur

(d) No cat 2: black

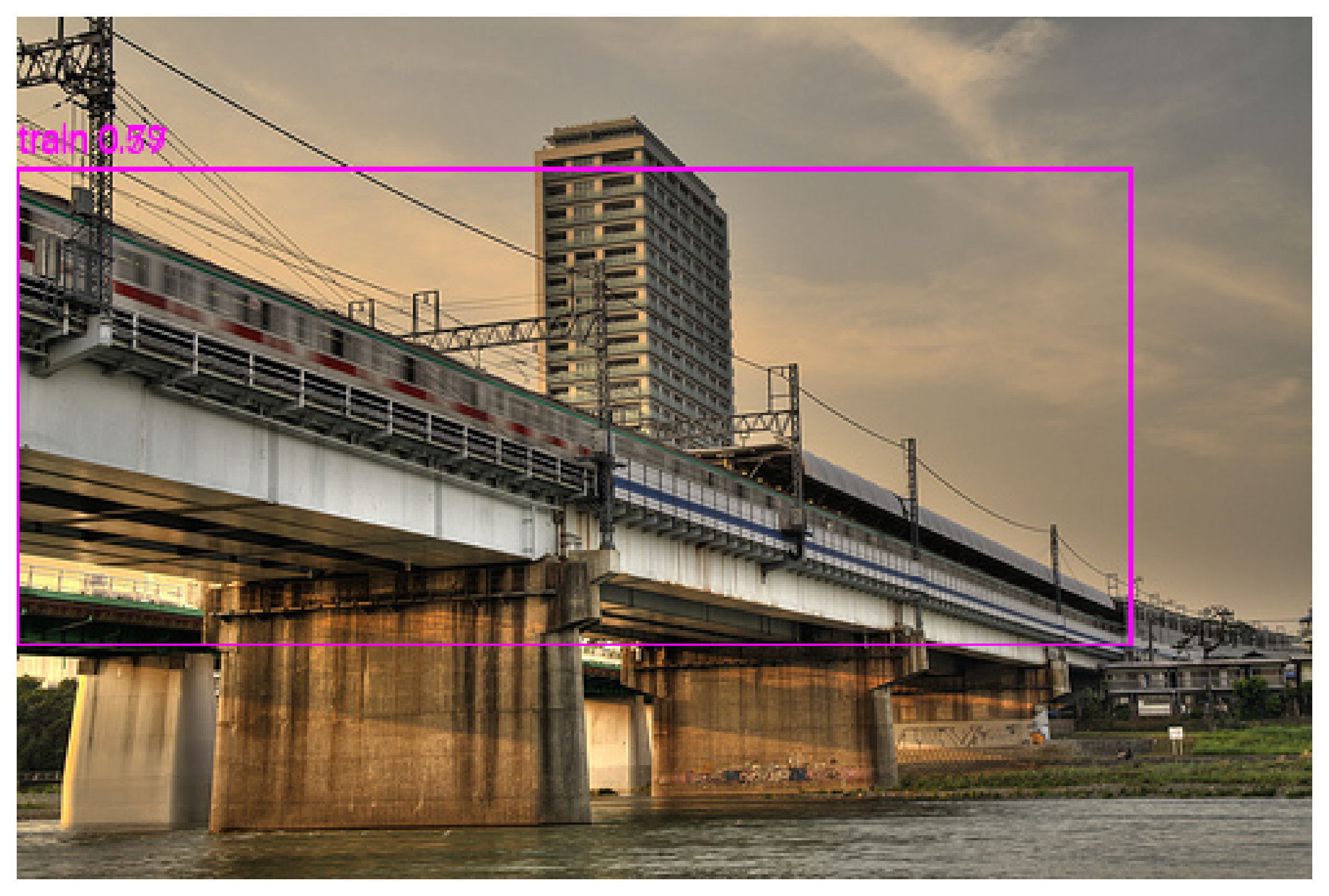

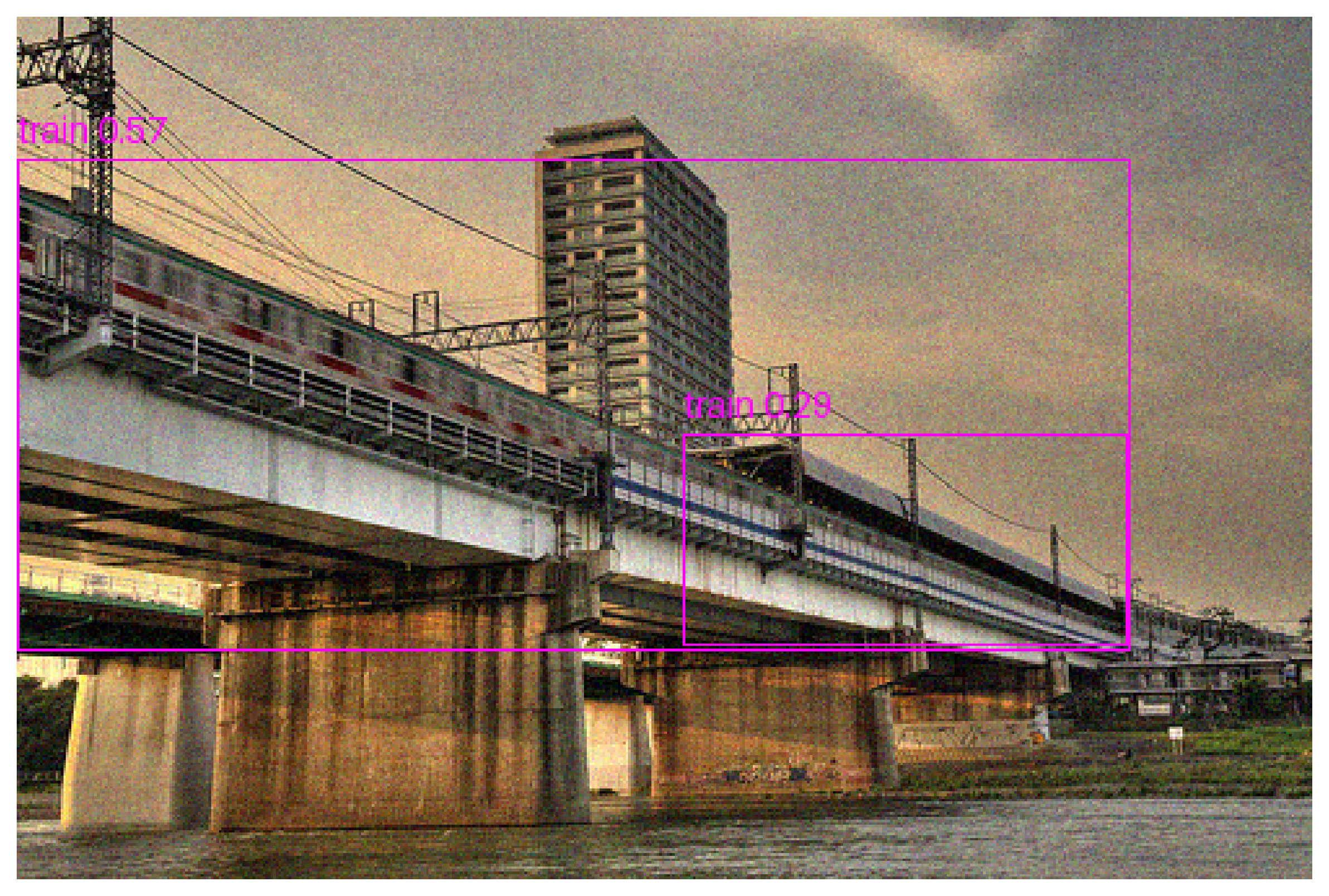

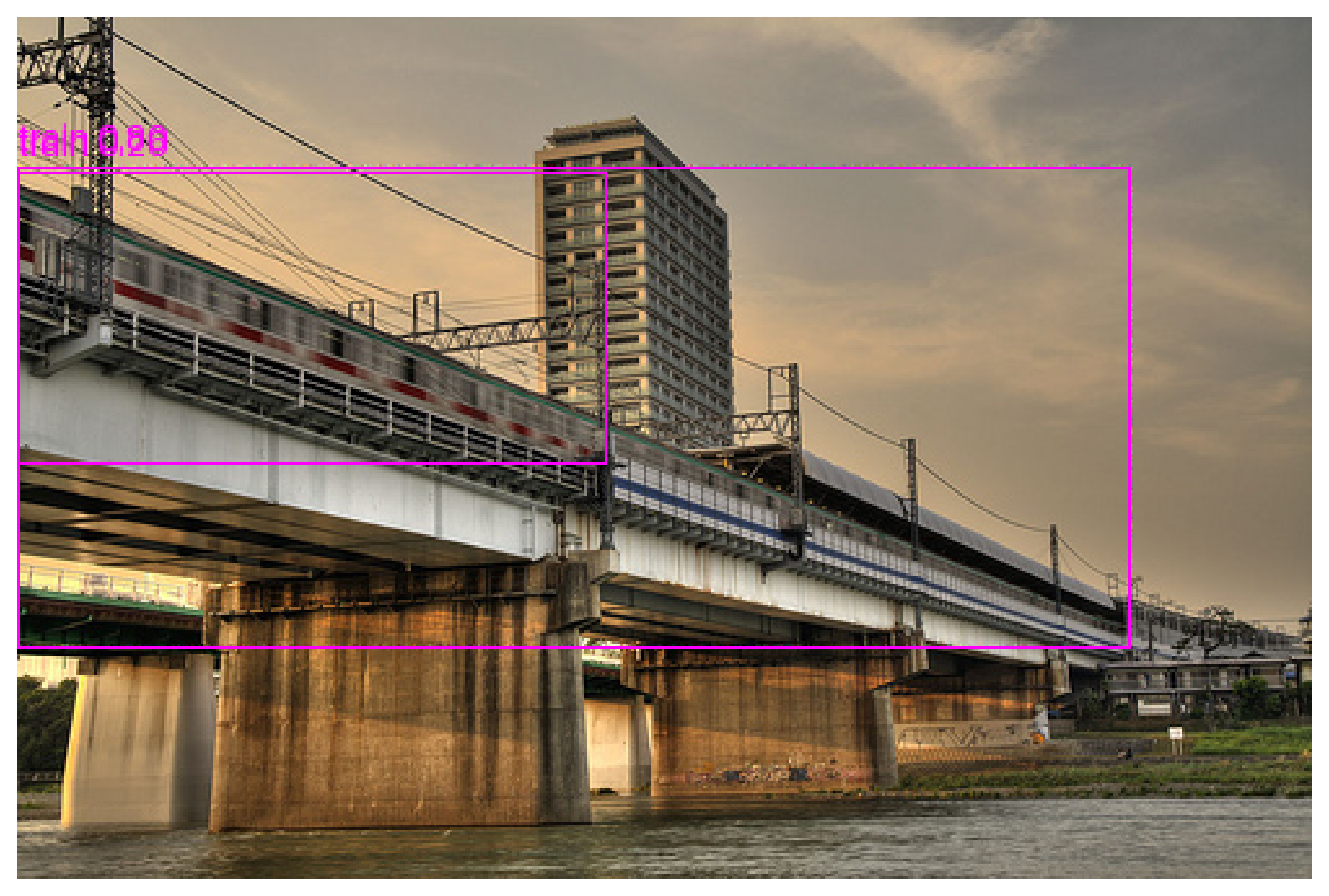

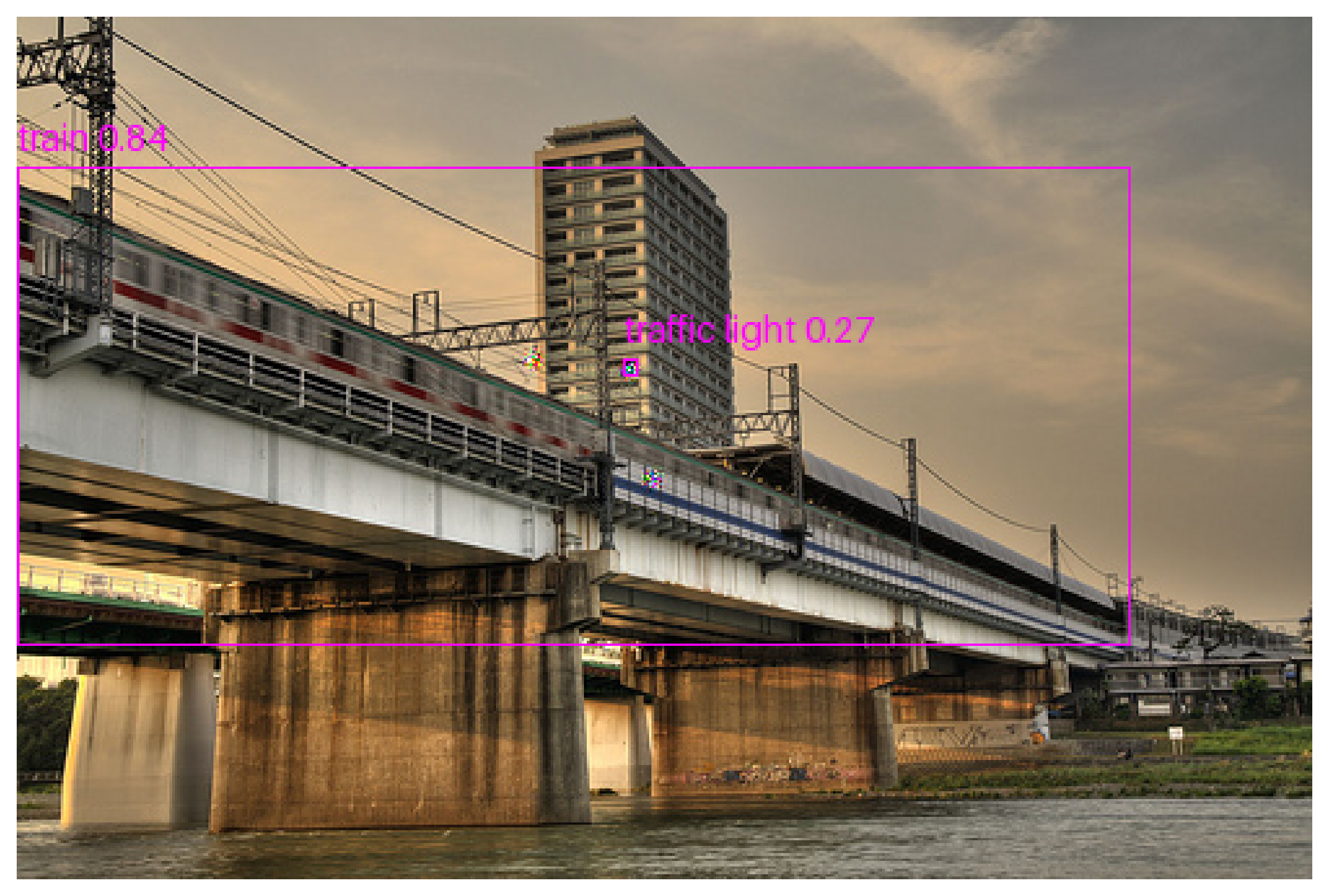

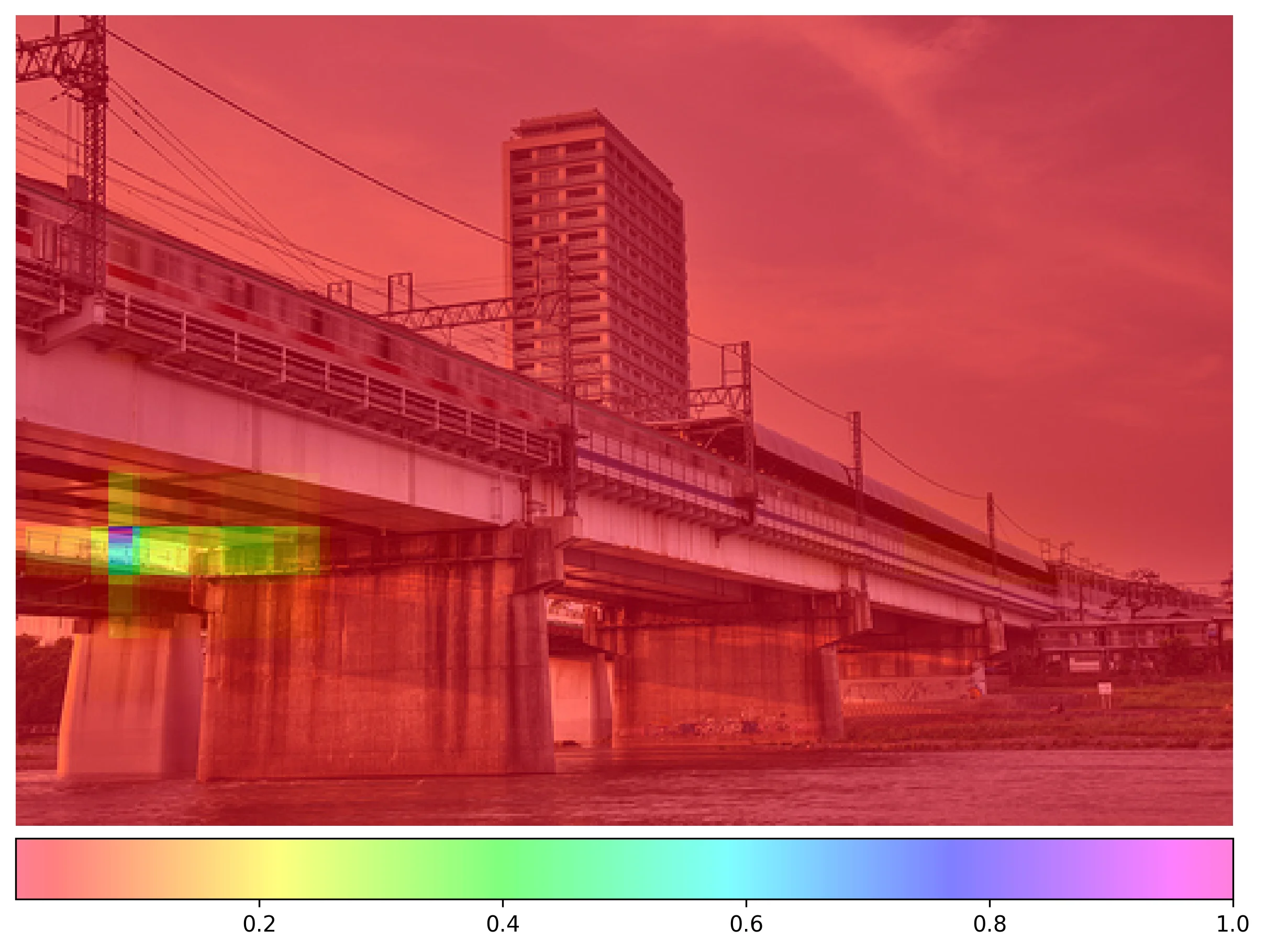

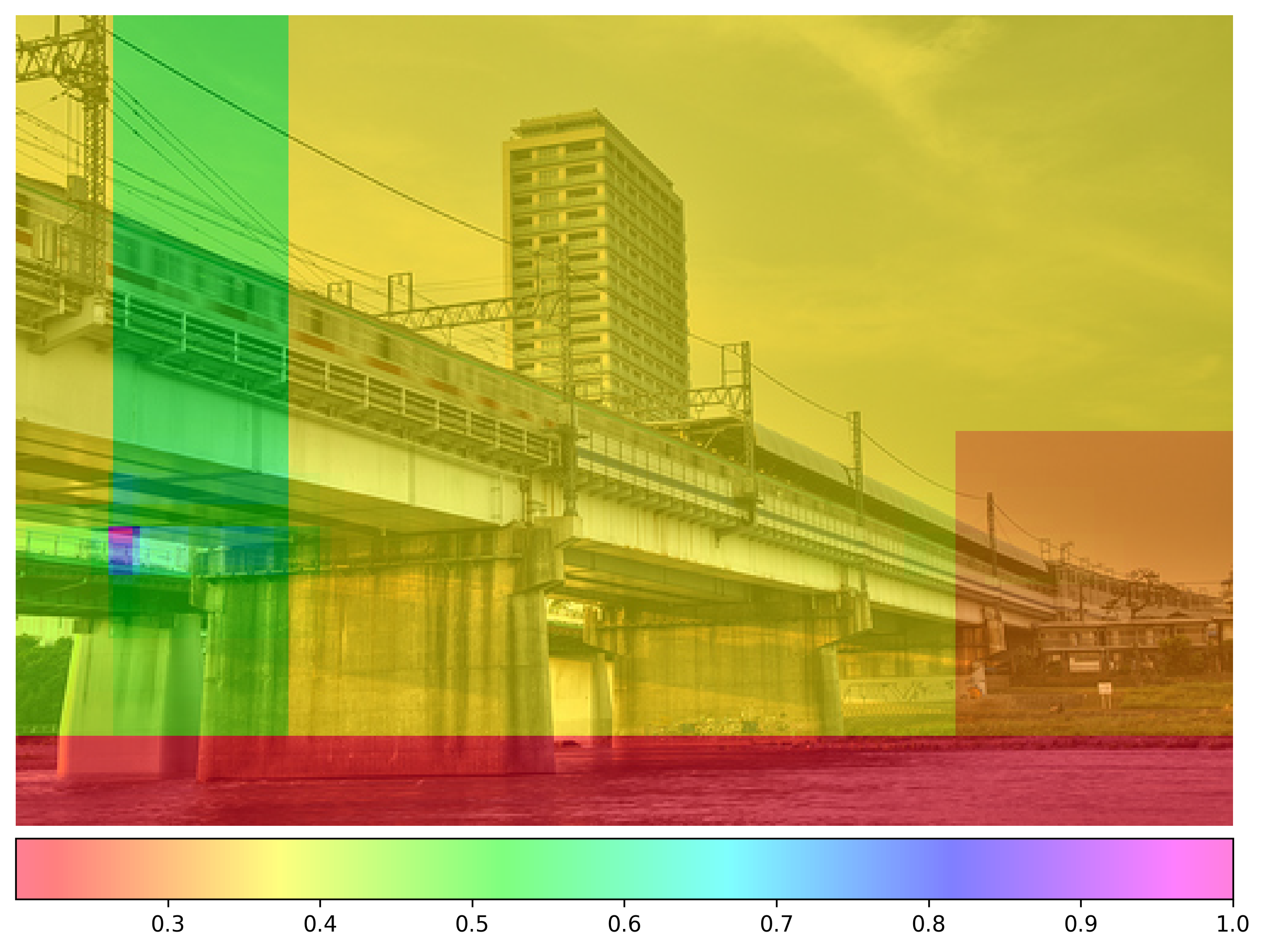

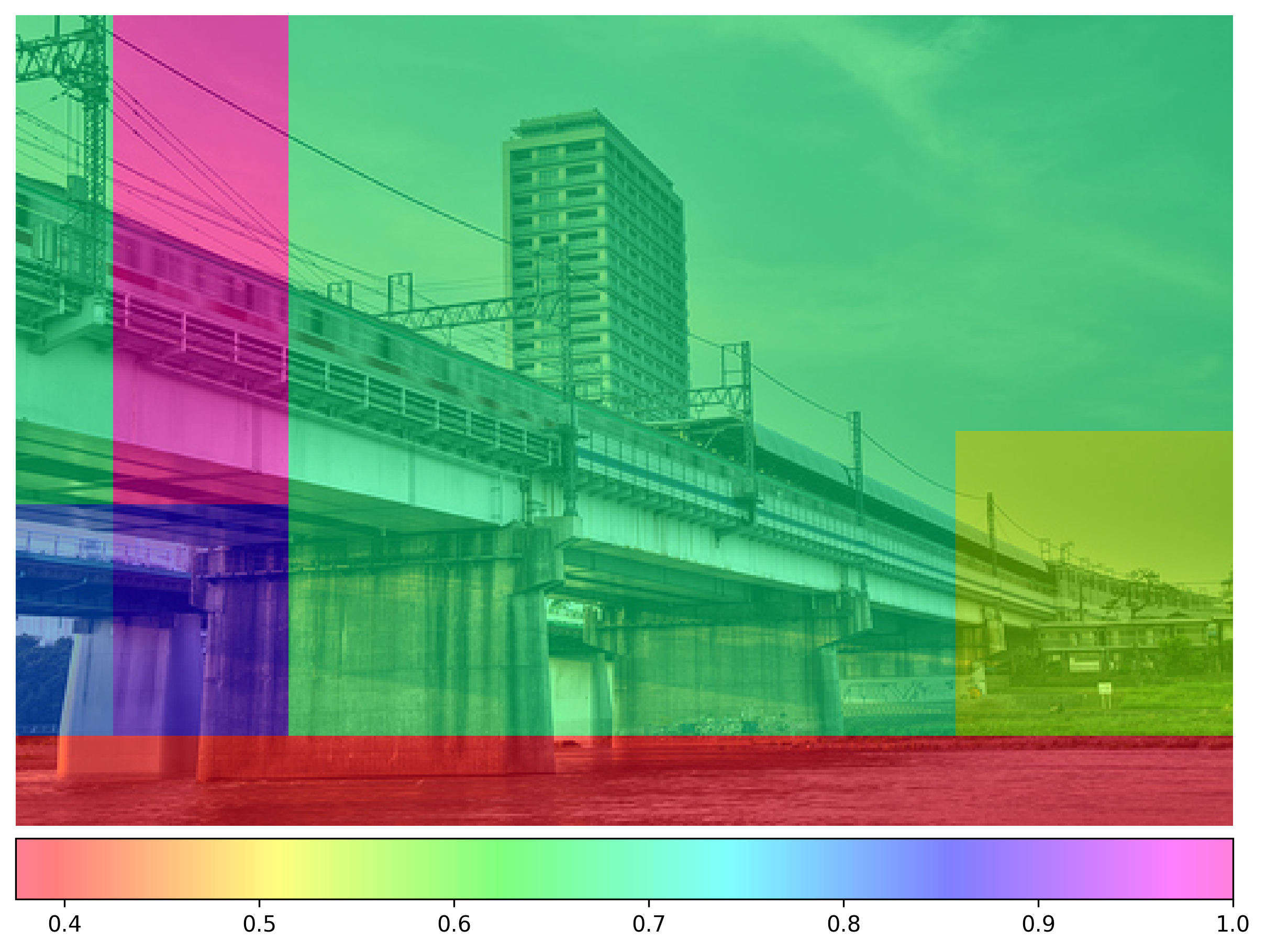

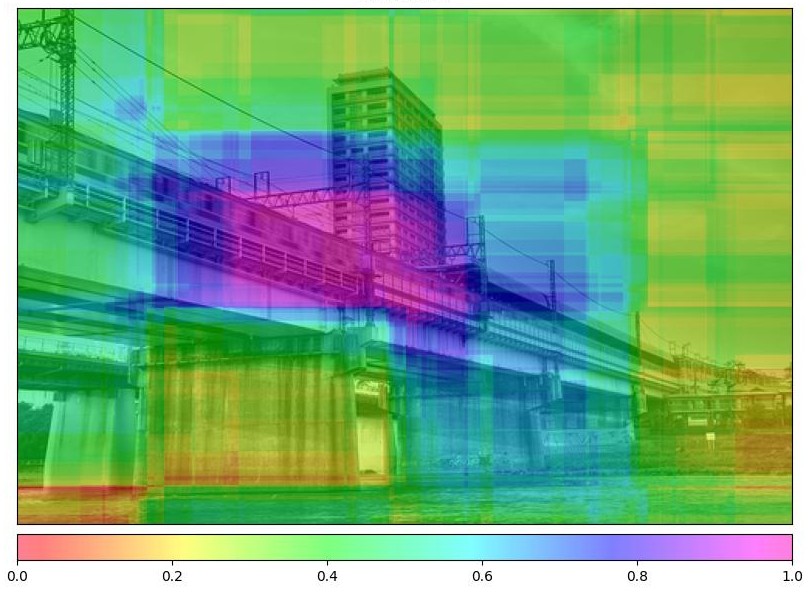

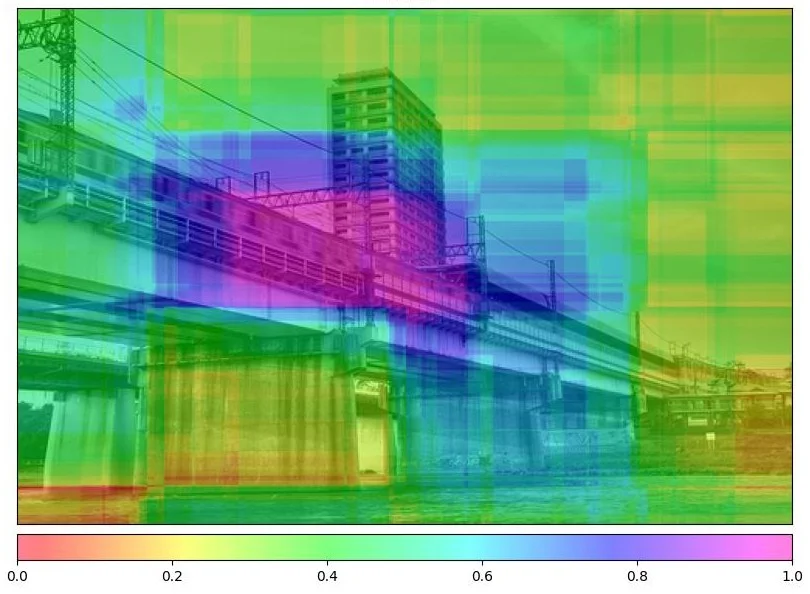

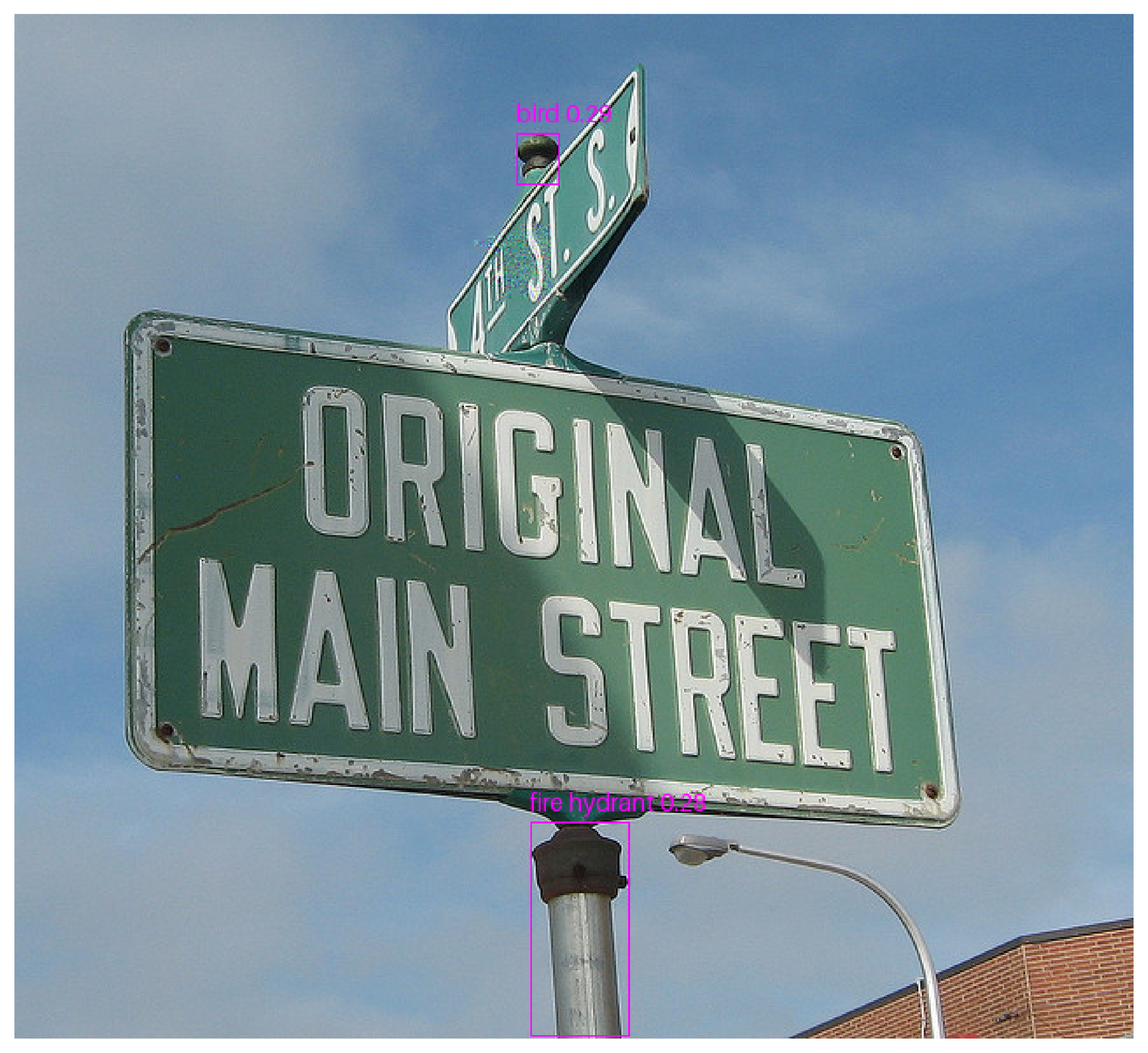

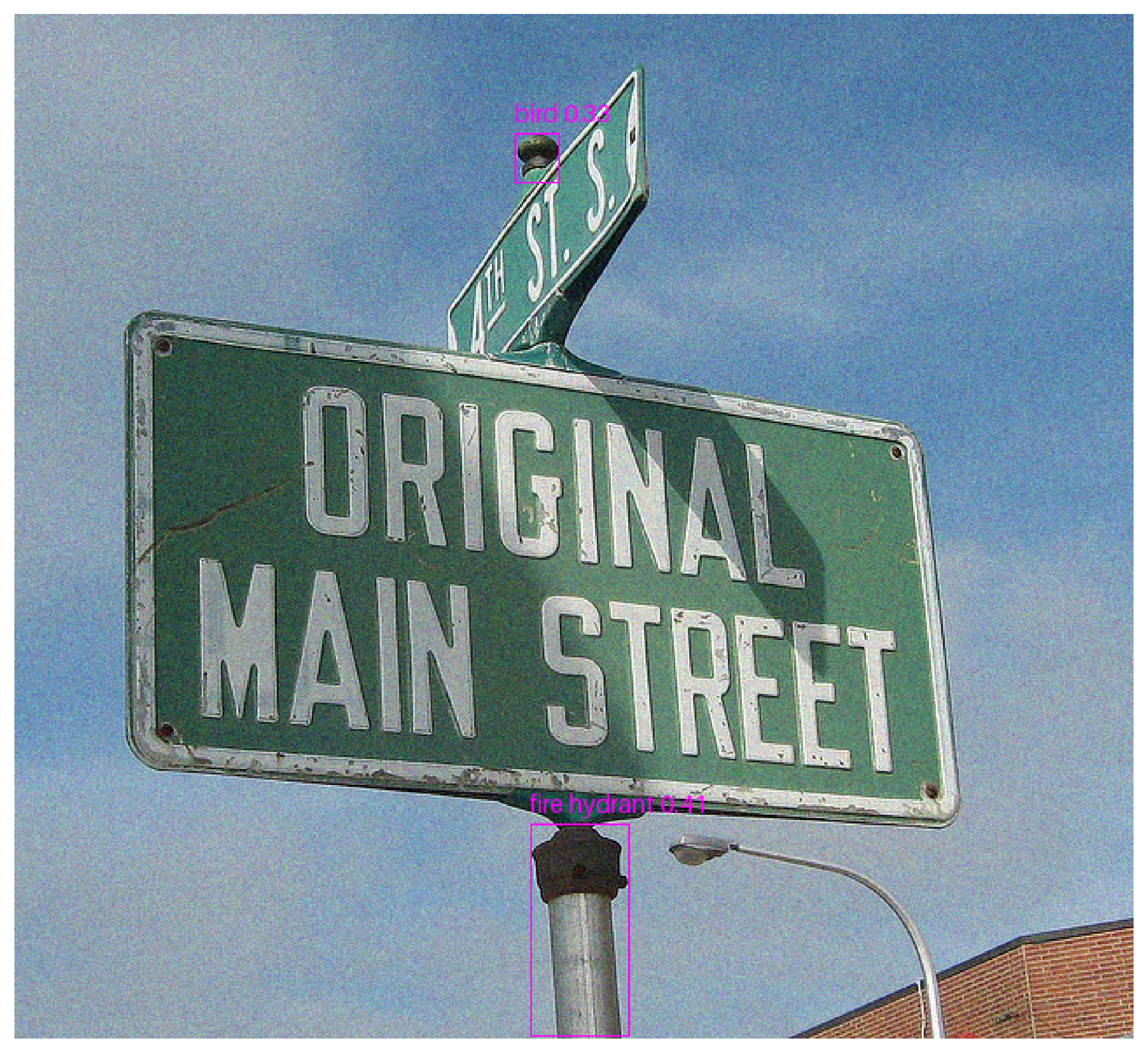

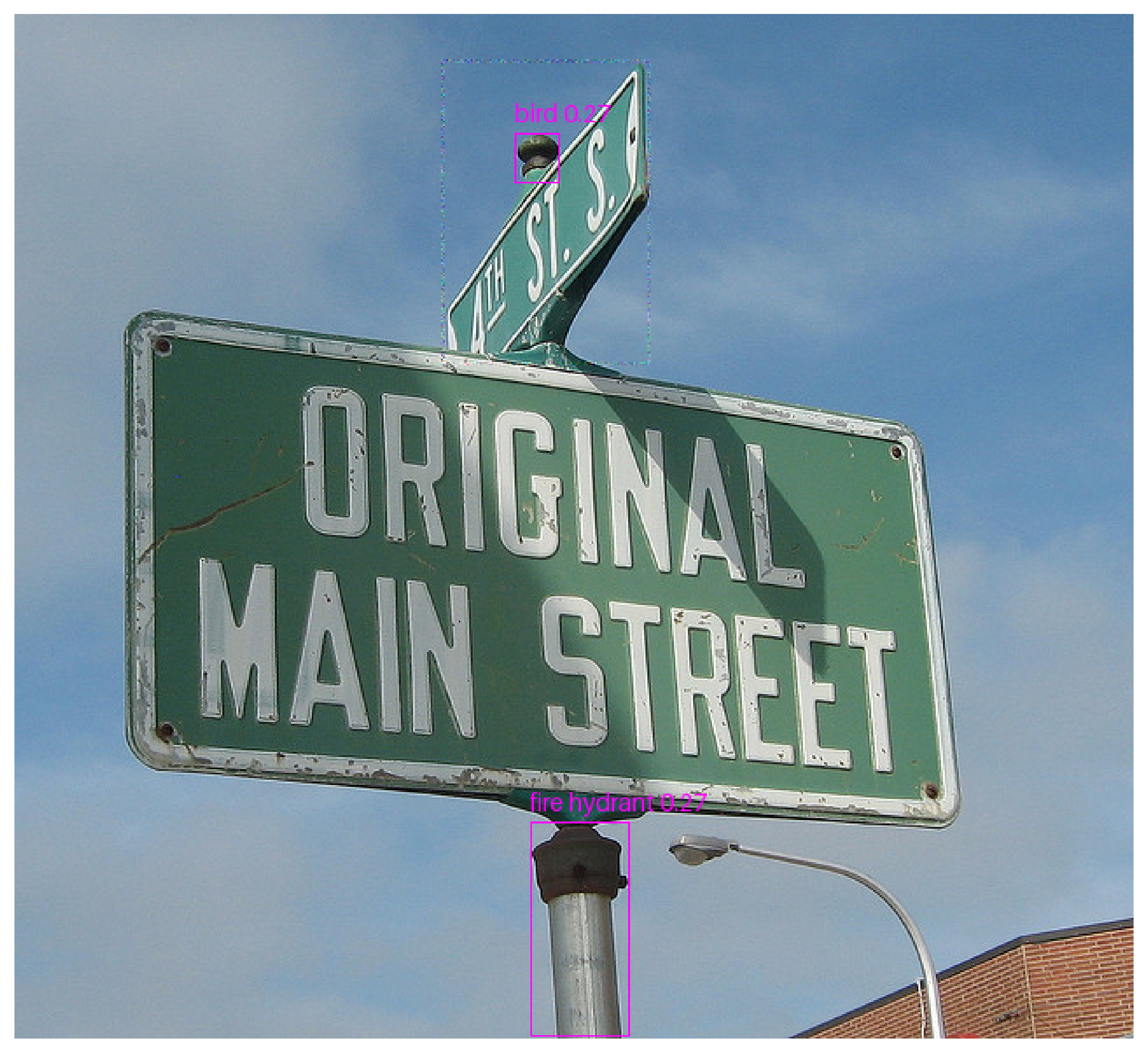

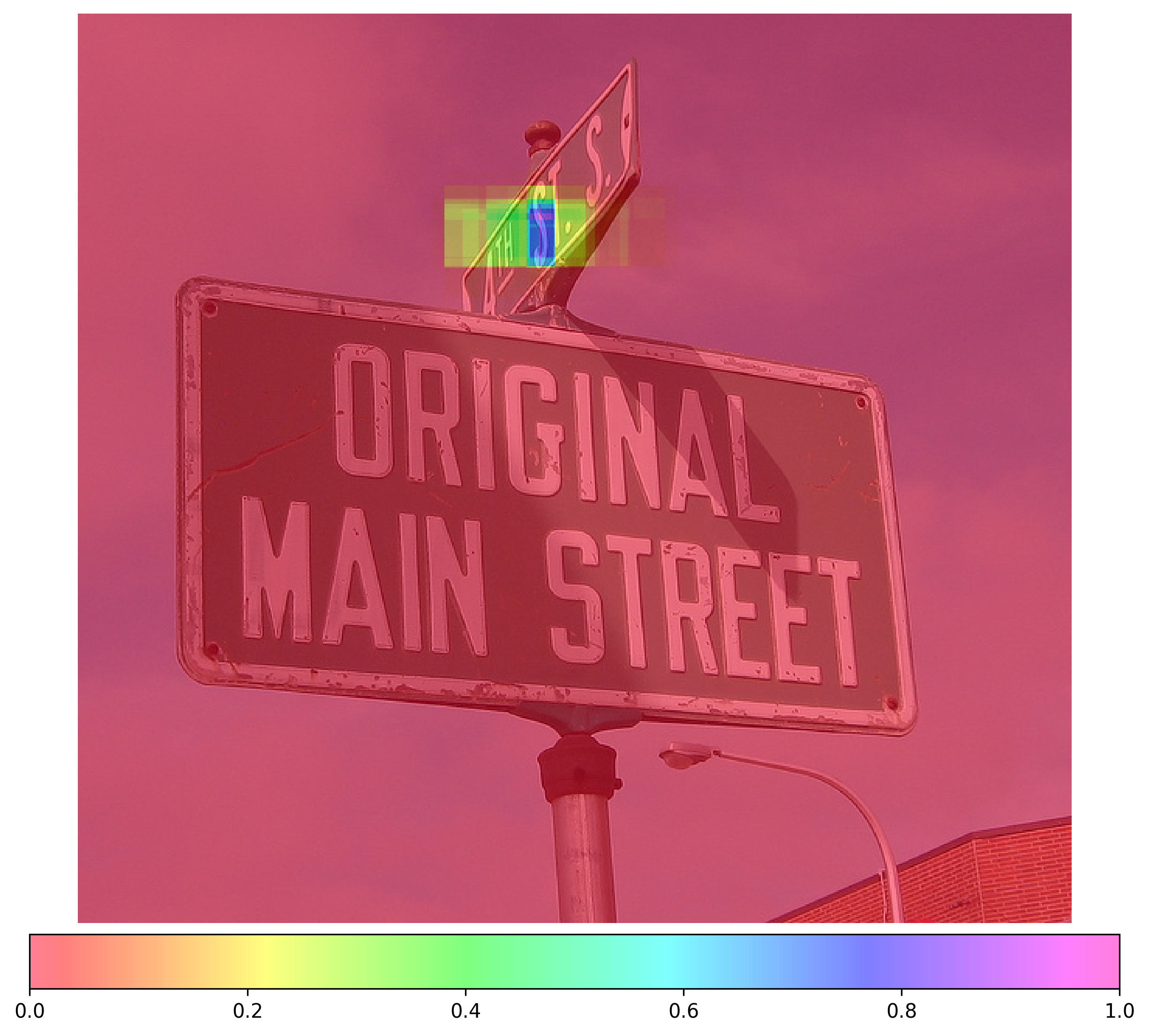

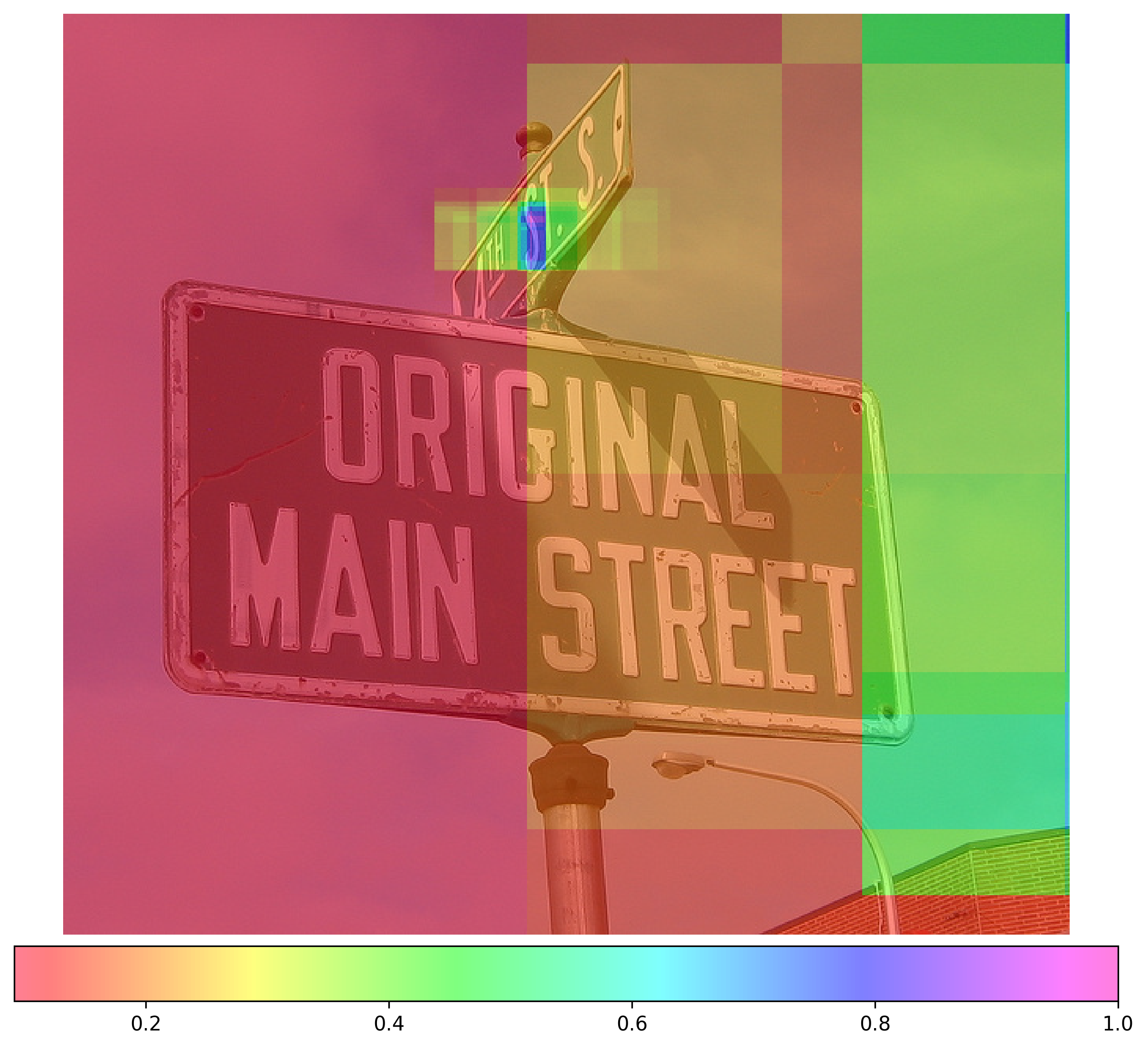

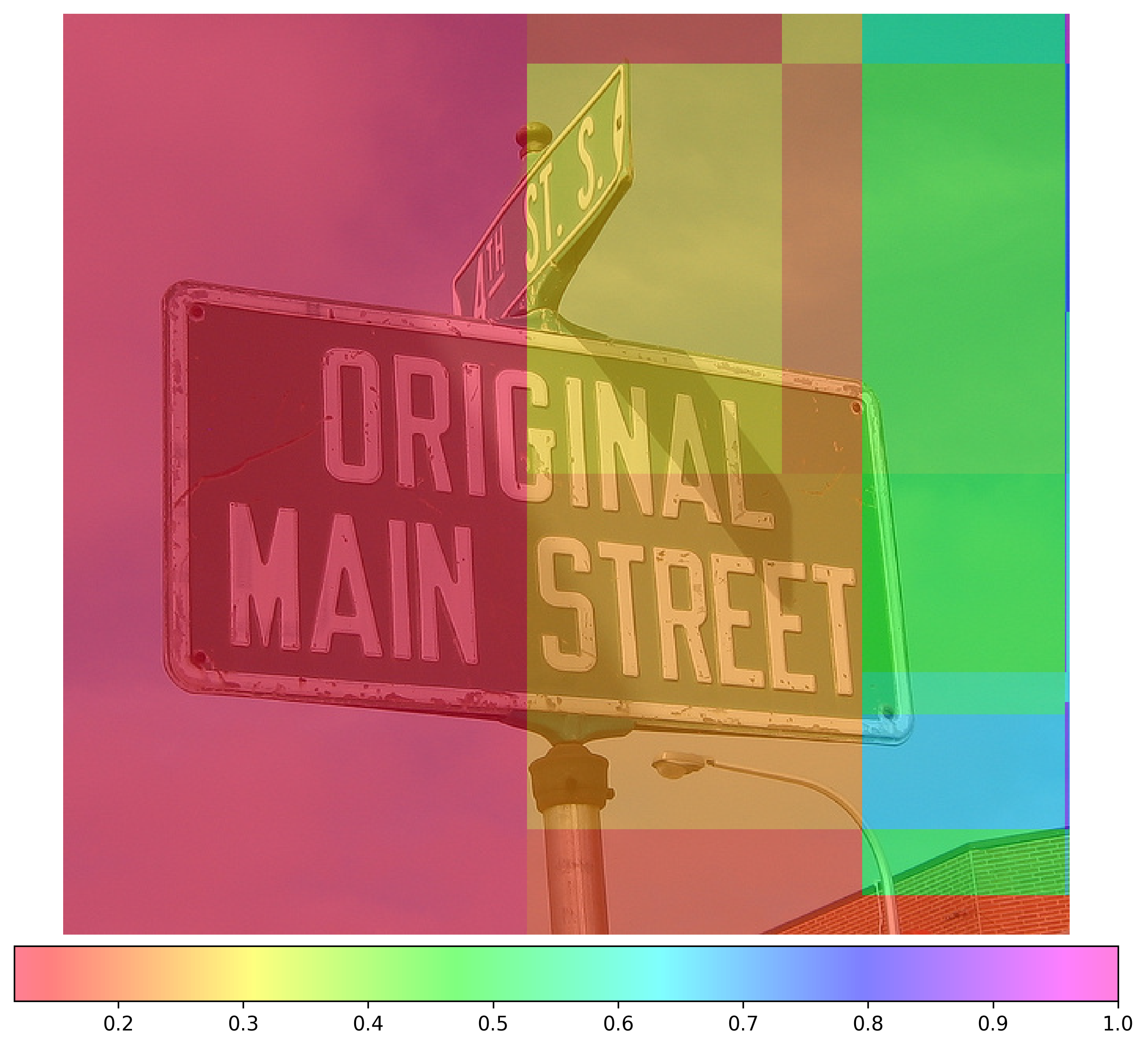

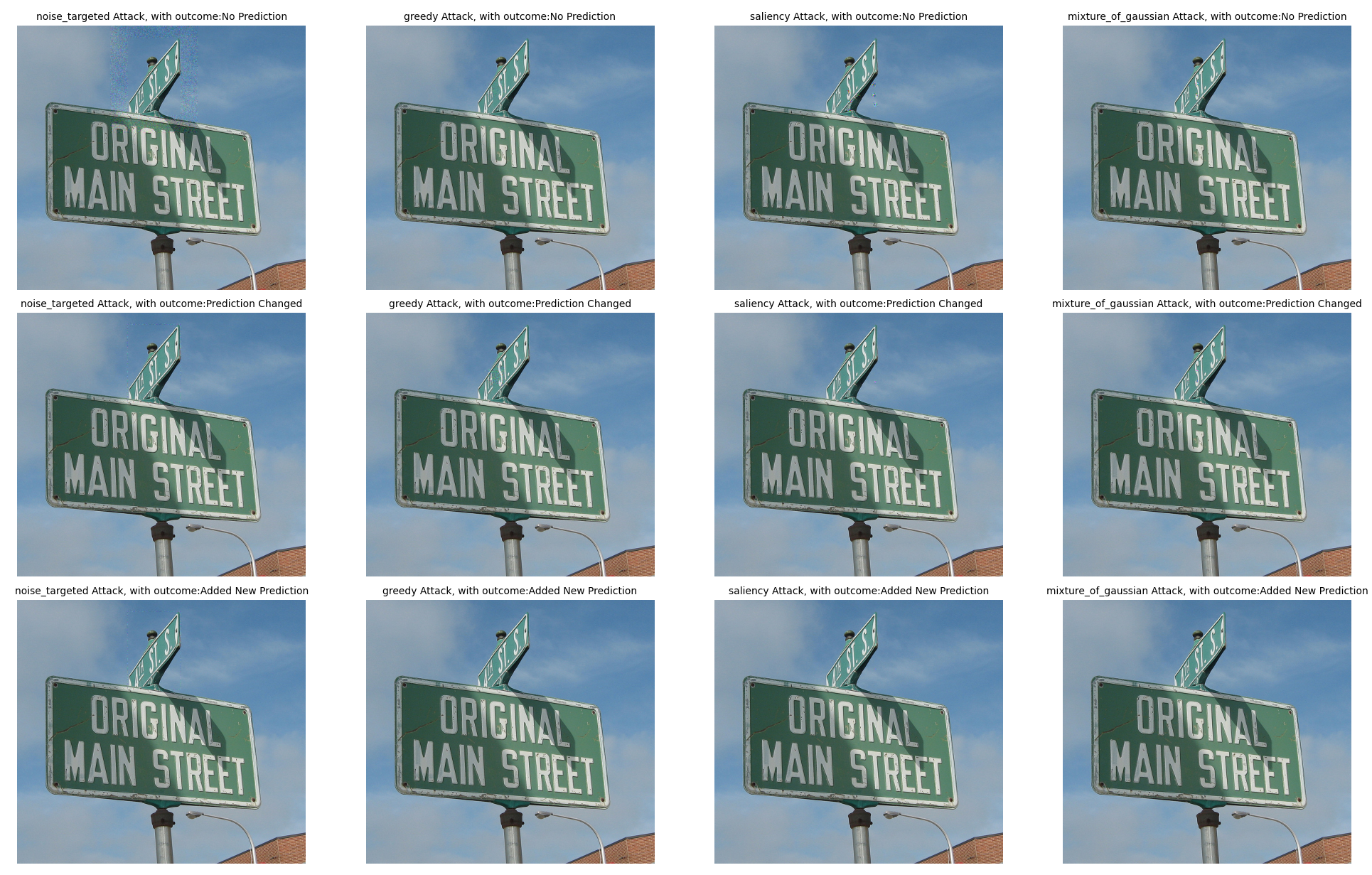

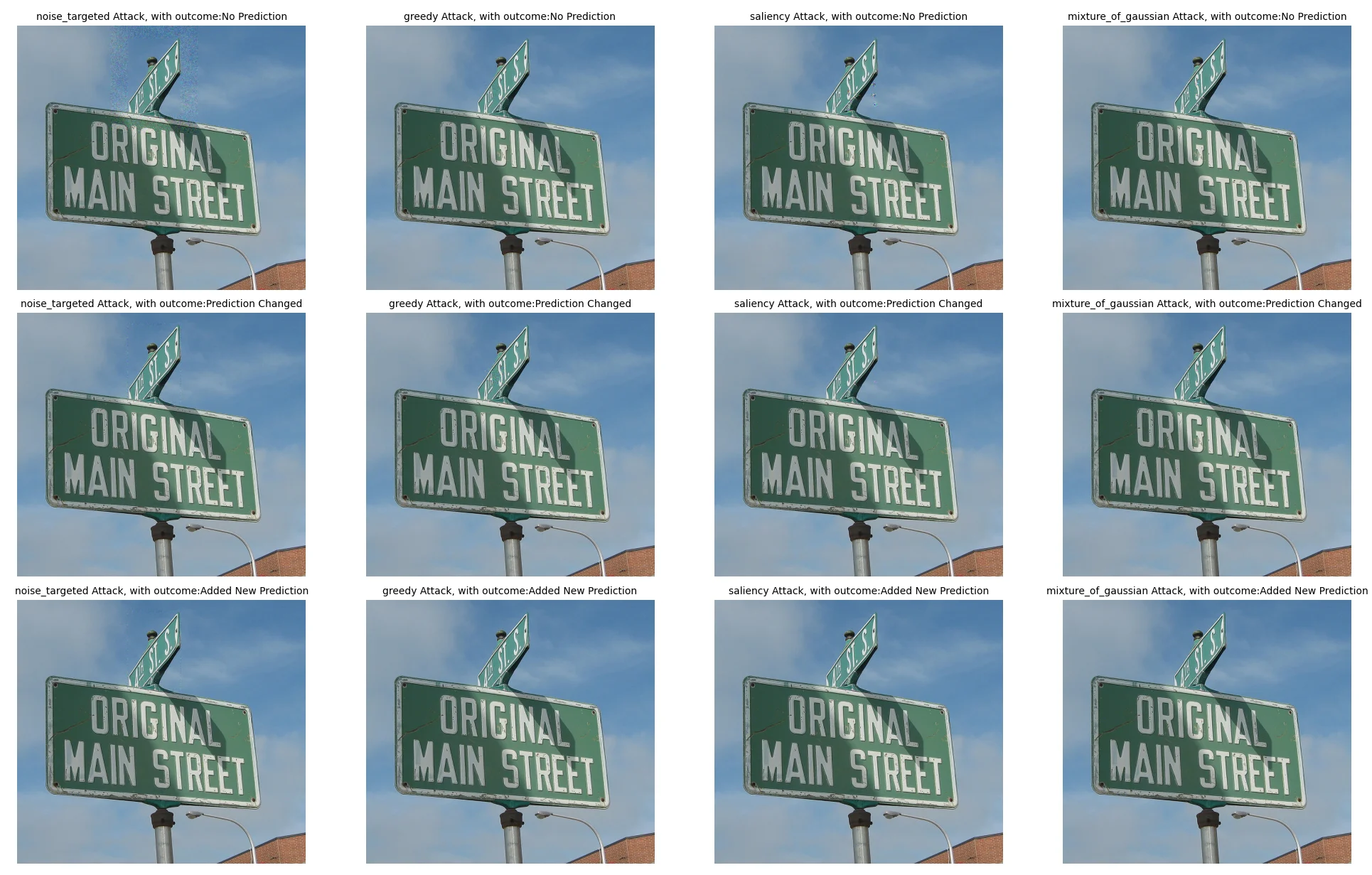

Figure 1. The MSPS for cat (Figure 1b) reveals a dependency on the surrounding context. BlackCAtt starts with causal pixels outside of the bounding box and works inwards in order to maximize imperceptibility. In both Figures 1c and 1d the cat is still clearly present and complete, but YOLO no longer detects the cat. The attack works because BlackCAtt changes part of the cause of the detec

…(Full text truncated)…
