📝 Original Info

- Title: When, How Long and How Much? Interpretable Neural Networks for Time Series Regression by Learning to Mask and Aggregate

- ArXiv ID: 2512.03578

- Date: 2025-12-03

- Authors: Florent Forest, Amaury Wei, Olga Fink

📝 Abstract

Time series extrinsic regression (TSER) refers to the task of predicting a continuous target variable from an input time series. It appears in many domains, including healthcare, finance, environmental monitoring, and engineering. In these settings, accurate predictions and trustworthy reasoning are both essential. Although state-of-the-art TSER models achieve strong predictive performance, they typically operate as black boxes, making it difficult to understand which temporal patterns drive their decisions. Post-hoc interpretability techniques, such as feature attribution, aim to explain how the model arrives at its predictions, but often produce coarse, noisy, or unstable explanations. Recently, inherently interpretable approaches based on concepts, additive decompositions, or symbolic regression have emerged as promising alternatives. However, these approaches remain limited: they require explicit supervision on the concepts themselves, often cannot capture interactions between time-series features, lack expressiveness for complex temporal patterns, and struggle to scale to high-dimensional multivariate data.

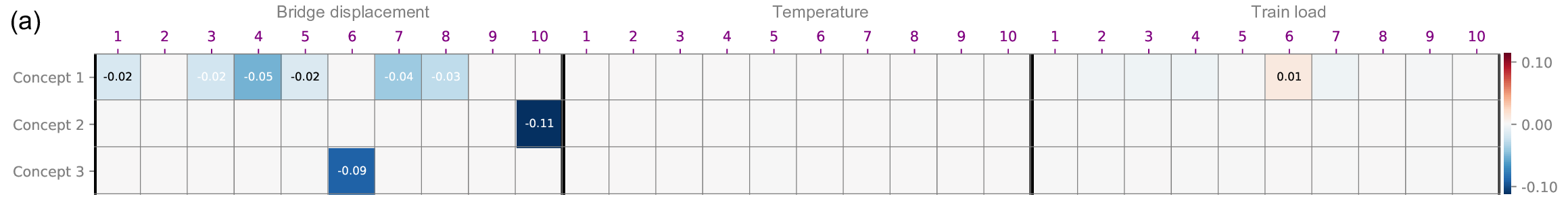

To address these limitations, we propose MAGNETS (Mask-and-AGgregate NEtwork for Time Series), an inherently interpretable neural architecture for TSER. MAGNETS learns a compact set of human-understandable concepts without requiring any annotations. Each concept corresponds to a learned, mask-based aggregation over selected input features, explicitly revealing both which features drive predictions and when they matter in the sequence. Predictions are formed as combinations of these learned concepts through a transparent, additive structure, enabling clear insight into the model's decision process.
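To make the mask-and-aggregate idea concrete, here is a minimal NumPy sketch of one plausible reading of the description above; it is not the MAGNETS code from the linked repository. It assumes that a concept is computed by (i) a soft, sigmoid-relaxed weighting over input features, (ii) a soft temporal mask, and (iii) a masked mean over time, and that the prediction is a bias plus a weighted sum of concept values. The function names (`mask_and_aggregate`, `predict`) and the fixed, input-independent logits are hypothetical simplifications: in a trained model these parameters would be learned, and the masks could well be produced by a network conditioned on the input.

```python
import numpy as np


def sigmoid(z):
    """Elementwise logistic function, mapping logits to (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))


def mask_and_aggregate(x, feature_logits, time_logits):
    """Compute one concept value from a multivariate series (hypothetical sketch).

    x:              array of shape (D, T), D variables over T time steps.
    feature_logits: shape (D,), scores for WHICH variables the concept uses.
    time_logits:    shape (T,), scores for WHEN the concept looks at them.
    """
    feature_weights = sigmoid(feature_logits)  # soft feature selection
    time_mask = sigmoid(time_logits)           # soft temporal mask
    selected = feature_weights @ x             # weighted sum of variables, shape (T,)
    # Masked mean: average the selected signal over the masked-in time steps.
    return float((time_mask * selected).sum() / (time_mask.sum() + 1e-8))


def predict(x, concepts, weights, bias):
    """Additive prediction: bias + sum_k weights[k] * concept_k(x).

    Returns the prediction and the per-concept contributions.
    """
    values = np.array([mask_and_aggregate(x, f, t) for f, t in concepts])
    contributions = weights * values
    return bias + contributions.sum(), contributions


# Toy input: D = 2 variables, T = 6 time steps.
x = np.array([[1.0, 1.0, 1.0, 4.0, 5.0, 6.0],
              [9.0, 9.0, 9.0, 9.0, 9.0, 9.0]])

# Large logits make the soft masks nearly binary, which keeps the example readable.
concepts = [
    # Concept 0: variable 0, last three time steps only (about the mean of 4, 5, 6).
    (np.array([10.0, -10.0]), np.array([-10.0, -10.0, -10.0, 10.0, 10.0, 10.0])),
    # Concept 1: variable 1, all time steps (about 9).
    (np.array([-10.0, 10.0]), np.full(6, 10.0)),
]
weights = np.array([2.0, -0.5])
bias = 1.0

prediction, contributions = predict(x, concepts, weights, bias)
# prediction ≈ 1 + 2 * 5 - 0.5 * 9 = 6.5
```

Because the output is a sum of per-concept terms, the `contributions` array shows how much each concept moved the prediction, while each concept's feature weights and time mask show which variables it uses and over which time steps.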

The code implementation and datasets are publicly available at https://github.com/FlorentF9/MAGNETS.


📄 Full Content


When, How Long and How Much? Interpretable Neural Networks for Time Series Regression by Learning to Mask and Aggregate

Florent Forest, Amaury Wei, Olga Fink

Abstract—Time series extrinsic regression (TSER) refers to the task of predicting a continuous target variable from an input time series. It appears in many domains, including healthcare, finance, environmental monitoring, and engineering. In these settings, accurate predictions and trustworthy reasoning are both essential. Although state-of-the-art TSER models achieve strong predictive performance, they typically operate as black boxes, making it difficult to understand which temporal patterns drive their decisions. Post-hoc interpretability techniques, such as feature attribution, aim to explain how the model arrives at its predictions, but often produce coarse, noisy, or unstable explanations. Recently, inherently interpretable approaches based on concepts, additive decompositions, or symbolic regression have emerged as promising alternatives. However, these approaches remain limited: they require explicit supervision on the concepts themselves, often cannot capture interactions between time-series features, lack expressiveness for complex temporal patterns, and struggle to scale to high-dimensional multivariate data.

To address these limitations, we propose MAGNETS (Mask-and-AGgregate NEtwork for Time Series), an inherently interpretable neural architecture for TSER. MAGNETS learns a compact set of human-understandable concepts without requiring any annotations. Each concept corresponds to a learned, mask-based aggregation over selected input features, explicitly revealing both which features drive predictions and when they matter in the sequence. Predictions are formed as combinations of these learned concepts through a transparent, additive structure, enabling clear insight into the model’s decision process.

Experiments on synthetic and real-world univariate and multivariate TSER datasets show that MAGNETS closely matches the accuracy of black-box models while substantially outperforming existing interpretable baselines, particularly on multivariate datasets involving feature interactions. Finally, we also show that MAGNETS provides more faithful and informative explanations than post-hoc methods.

The code implementation and datasets are publicly available at https://github.com/FlorentF9/MAGNETS.

Index Terms—Time series regression, Machine learning, Explainability, Concept learning, Interpretability.

I. INTRODUCTION

Time series extrinsic regression (TSER) refers to the task of predicting a continuous target variable from an input time series [1]. It appears in many domains, including healthcare (e.g., vital-sign forecasting), finance (e.g., volatility prediction), engineering (e.g., predicting battery state-of-charge or estimating remaining useful life from sensor streams), and environmental monitoring (e.g., pollution estimation). In these settings, accurate predictions and trustworthy reasoning are both essential [2]. However, despite recent gains in accuracy, the opacity of modern TSER models remains an obstacle to adoption, particularly when predictions must be understood and validated by domain experts.
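For reference, the TSER task can be written compactly as below. The notation ($N$ samples, $D$ variables, $T$ time steps, model $f_\theta$ with parameters $\theta$) and the choice of a squared-error training loss are illustrative assumptions, not definitions taken from this excerpt.

$$
\mathcal{D} = \{(\mathbf{X}^{(i)}, y^{(i)})\}_{i=1}^{N}, \qquad \mathbf{X}^{(i)} \in \mathbb{R}^{D \times T}, \qquad y^{(i)} \in \mathbb{R},
$$

$$
\hat{\theta} = \arg\min_{\theta} \; \frac{1}{N} \sum_{i=1}^{N} \big( f_\theta(\mathbf{X}^{(i)}) - y^{(i)} \big)^2 .
$$

The univariate setting corresponds to $D = 1$ and the multivariate setting to $D > 1$.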

The demand for interpretability becomes especially difficult to satisfy with current state-of-the-art TSER models. Although recent approaches achieve strong predictive performance, they typically operate as black boxes. Leading approaches include deep neural networks [3], [4], large ensembles [5], and RandOm Convolutional KErnel Transform (ROCKET) models [6]–[8]. Their complexity, large parameter counts, and lack of transparency make it difficult to understand which temporal features drive predictions or how multivariate interactions across variables influence the model’s output. These limitations hinder deployment in sensitive or regulated contexts. For example, if a TSER model predicts an impending system failure, engineers need to trace that prediction back to specific sensor behaviors and time intervals, rather than relying on an alert that cannot be meaningfully explained or validated.

These interpretability challenges have motivated growing interest in Explainable AI (XAI) research for time series, which aims to bridge the gap between predictive accuracy and actionable insight. While this research direction has made meaningful progress, existing approaches still fall short in several ways. Post-hoc explanation methods, such as saliency maps and feature attribution [9]–[11], attempt to rationalize a model’s decisions after training, but their explanations are often coarse, unstable, or poorly aligned with the model’s true internal reasoning [12].

In contrast, inherently interpretable models embed transparency directly into their architecture. Neural additive models (NAMs) [13] and their time-series extension, Neural Additive Time-series Models (NATMs) [14], offer interpretable decompositions but remain

…(Full text truncated)…


Reference

This content is AI-processed based on ArXiv data.