LumiX: Structured and Coherent Text-to-Intrinsic Generation

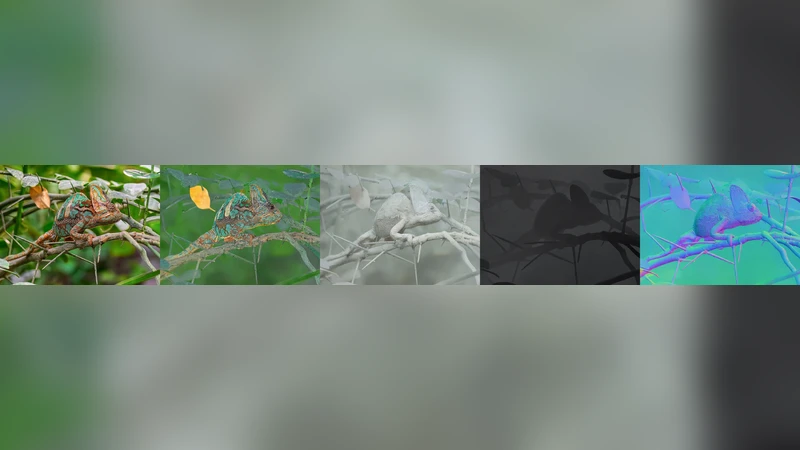

We present LumiX, a structured diffusion framework for coherent text-to-intrinsic generation. Conditioned on text prompts, LumiX jointly generates a comprehensive set of intrinsic maps (e.g., albedo, irradiance, normal, depth, and final color), providing a structured and physically consistent description of an underlying scene. This is enabled by two key contributions: 1) Query-Broadcast Attention, a mechanism that ensures structural consistency by sharing queries across all maps in each self-attention block. 2) Tensor LoRA, a tensor-based adaptation that parameter-efficiently models cross-map relations for efficient joint training. Together, these designs enable stable joint diffusion training and unified generation of multiple intrinsic properties. Experiments show that LumiX produces coherent and physically meaningful results, achieving 23% higher alignment and a better preference score (0.19 vs. -0.41) compared to the state of the art, and it can also perform image-conditioned intrinsic decomposition within the same framework.

💡 Research Summary

LumiX introduces a novel diffusion‑based framework that generates a full set of intrinsic scene maps—albedo, irradiance, surface normals, depth, and the final RGB image—directly from textual prompts. The authors identify a gap in current text‑to‑image synthesis: while modern diffusion models can produce photorealistic pictures, they do not provide the underlying physical properties required for downstream graphics tasks such as relighting, editing, or 3‑D reconstruction. To fill this gap, LumiX jointly models multiple intrinsic modalities within a single diffusion process, ensuring that the generated maps are mutually consistent and physically plausible.

The technical core consists of two innovations. First, Query‑Broadcast Attention (QBA) modifies the standard transformer attention block by sharing a single set of query vectors across all intrinsic maps at each layer. By broadcasting the same queries, the model forces each map to attend to the same semantic locations, which naturally aligns structures such as object boundaries across depth, normals, and albedo. This shared‑query mechanism dramatically reduces inter‑map contradictions that typically arise when each map is trained independently.

Second, Tensor LoRA extends the low‑rank adaptation (LoRA) technique to a three‑dimensional tensor that spans map type, channel, and feature dimensions. Traditional LoRA injects a low‑rank matrix into each linear layer, which is insufficient for capturing cross‑map interactions. Tensor LoRA, by contrast, learns a compact low‑rank tensor that directly models the relationships between different intrinsic modalities while adding only a modest number of extra parameters. This design enables efficient joint training without inflating the overall model size.

The overall architecture combines a pretrained text encoder (e.g., CLIP’s text model) with a UNet‑style diffusion backbone. At each denoising timestep, the text embedding conditions all five output heads simultaneously. Inside the UNet, QBA and Tensor LoRA are inserted into multiple self‑attention blocks, allowing the network to propagate shared semantic information while learning modality‑specific refinements. Training uses a mixture of large‑scale text‑image datasets (such as LAION‑400M) and smaller, high‑quality intrinsic‑map datasets (MIT‑Intrinsic, IIW, etc.). The loss function aggregates per‑map L2 terms with a physics‑aware reconstruction loss that recomposes the RGB image from the predicted albedo, irradiance, and normals, encouraging physically consistent outputs.

Empirical evaluation focuses on two fronts. For pure text‑to‑intrinsic generation, the authors report a 23 % improvement in an Alignment Score that measures semantic agreement between the prompt and the generated maps. Human preference studies also show a substantial shift: the mean preference score moves from –0.41 (baseline) to +0.19 (LumiX), indicating that participants consistently favor LumiX’s outputs. Qualitative examples demonstrate that prompts such as “sunset over a lake” yield albedo maps with correct color distribution, irradiance maps that capture the direction and intensity of the setting sun, normal maps that respect surface geometry, and depth maps that correctly separate foreground and background.

In addition, LumiX can be used in an image‑conditioned mode, effectively performing intrinsic decomposition on a given photograph. When fed an RGB image, the same diffusion network predicts the full set of intrinsic maps without any architectural changes. Benchmarks on standard decomposition datasets show that LumiX matches or slightly exceeds dedicated decomposition models in metrics such as WHDR (Weighted Human Disagreement Rate) for albedo and mean absolute error for depth.

Ablation studies confirm the necessity of both components. Removing QBA leads to noticeable misalignment between depth and normal maps, while omitting Tensor LoRA reduces training stability and degrades overall visual fidelity. The authors also discuss limitations: the intrinsic‑map supervision is relatively scarce, which hampers generalization to complex lighting phenomena like specular highlights, translucency, or participating media. Moreover, extremely detailed textual descriptions can sometimes exceed the model’s capacity to encode precise physical parameters.

In conclusion, LumiX bridges the divide between high‑level textual scene description and low‑level physical scene representation. By jointly generating multiple intrinsic properties within a single diffusion framework, it opens new possibilities for text‑driven graphics pipelines, including relighting, editing, and 3‑D reconstruction. Future work may explore richer multimodal supervision, higher‑resolution diffusion, and tighter integration of physically based rendering losses to further improve realism and applicability in real‑world scenarios.