AI-Driven Document Redaction in UK Public Authorities: Implementation Gaps, Regulatory Challenges, and the Human Oversight Imperative

Document redaction in public authorities faces critical challenges as traditional manual approaches struggle to balance growing transparency demands with increasingly stringent data protection requirements. This study investigates the implementation of AI-driven document redaction within UK public authorities through Freedom of Information (FOI) requests. While AI technologies offer potential solutions to redaction challenges, their actual implementation within public sector organizations remains underexplored. Based on responses from 44 public authorities across healthcare, government, and higher education sectors, this study reveals significant gaps between technological possibilities and organizational realities. Findings show highly limited AI adoption (only one authority reported using AI tools), widespread absence of formal redaction policies (50 percent reported “information not held”), and deficiencies in staff training. The study identifies three key barriers to effective AI implementation: poor record-keeping practices, lack of standardized redaction guidelines, and insufficient specialized training for human oversight. These findings highlight the need for a socio-technical approach that balances technological automation with meaningful human expertise. This research provides the first empirical assessment of AI redaction practices in UK public authorities and contributes evidence to support policymakers navigating the complex interplay between transparency obligations, data protection requirements, and emerging AI technologies in public administration.

💡 Research Summary

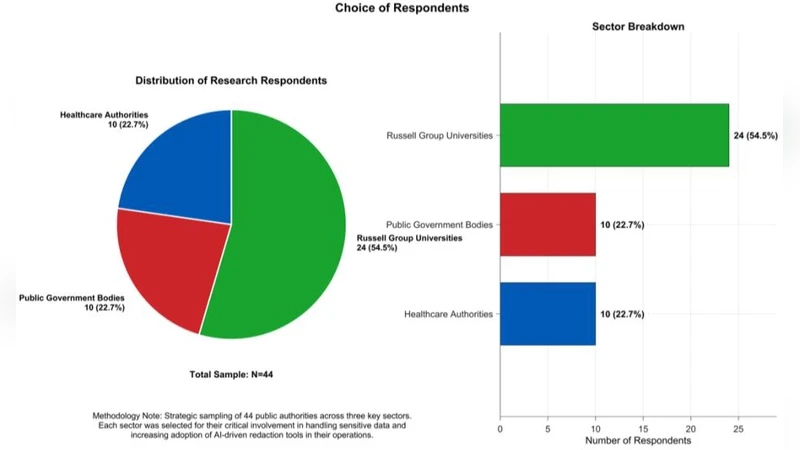

This paper investigates the real‑world adoption of artificial‑intelligence (AI)‑driven document redaction within United Kingdom public authorities, a topic that has received scant empirical attention despite growing policy pressure for both transparency and stringent data‑protection compliance. The authors employed a Freedom of Information (FOI) request methodology, targeting a purposive sample of 44 organisations across three sectors—healthcare (including NHS bodies), central and local government, and higher education. All requests were identical, asking for (i) any formal redaction policies or standard operating procedures, (ii) whether AI or machine‑learning tools are currently used for redaction, (iii) the existence of staff training programmes on redaction and AI, and (iv) the nature of human‑oversight mechanisms applied to automated redaction outputs. Responses were received from 30 authorities (68 % response rate), providing a robust basis for mixed‑methods analysis: quantitative descriptive statistics were generated for binary and categorical items, while qualitative content analysis of free‑text replies identified recurring themes and nuanced concerns.

The key empirical findings are stark. Only one authority (2.3 %, that is, 1 of the 44 in the sample) reported active deployment of an AI‑based redaction system; the remaining organisations either relied on manual processes (27 %) or stated that no redaction activity was performed at all (45 %). Half of the sample (22 authorities) explicitly stated that they “do not hold the information” or that no formal redaction policy exists, indicating a systemic absence of documented guidance. Moreover, 70 % of respondents highlighted poor record‑keeping practices: documents are inconsistently catalogued, metadata is sparse, and classification schemes are ad hoc, which makes it difficult to generate the labelled training data that modern NLP models require. In terms of human factors, 71 % of authorities reported that staff involved in redaction have never received AI‑specific training, and 58 % said that there is no documented human‑oversight step to review or validate AI‑generated redactions. Respondents also voiced legal and ethical anxieties, notably the risk of GDPR breaches, uncertainty over liability if an AI system misclassifies personal data, and the tension between FOIA‑driven openness and automated privacy safeguards.
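The headline proportions can be checked against the sample size with a few lines of arithmetic. The sketch below assumes a denominator of 44 (the sample size stated in the text) and uses only the counts reported in this summary; the helper name `pct` is introduced here for illustration.

```python
# Counts are taken from the summary text; 44 is the sample size it reports.
SAMPLE_SIZE = 44


def pct(count: int, total: int = SAMPLE_SIZE) -> float:
    """Return count/total as a percentage rounded to one decimal place."""
    return round(100 * count / total, 1)


ai_adopters = pct(1)      # one authority reporting AI-based redaction
not_held = pct(22)        # "information not held" / no formal policy
response_rate = pct(30)   # 30 replies against 44 requests

print(ai_adopters, not_held, response_rate)
```

Under this assumption the 2.3 % adoption figure and the 50 % "information not held" figure both use the full sample of 44 as their base, and 30 replies out of 44 requests gives the reported response rate of roughly 68 %.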

From these observations the authors distilled three principal barriers to effective AI implementation: (1) inadequate records management that undermines data quality for AI training and validation; (2) the lack of standardized, organisation‑wide redaction guidelines and SOPs, which prevents consistent application and accountability; and (3) insufficient specialised training for staff, eroding the essential “human‑in‑the‑loop” oversight that safeguards against algorithmic error and ethical lapse. The paper frames these barriers within a socio‑technical lens, arguing that technology alone cannot resolve the redaction dilemma; instead, a coordinated strategy that aligns technical tools with organisational processes, governance structures, and workforce development is required.
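To make the "human‑in‑the‑loop" idea concrete, the following is a minimal sketch of one way such a workflow could be structured; it is not a method described by the authors. It assumes a simple regular expression for e‑mail addresses as a stand‑in for an AI detector, and every name in it (`Proposal`, `propose`, `review`, `apply_redactions`) is hypothetical. The point it illustrates is that the automated step only proposes redactions, while a human reviewer decides which ones are applied.

```python
import re
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical stand-in for an AI/NLP detector: a plain e-mail pattern.
# A real deployment would use a trained model, not a single regex.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


@dataclass
class Proposal:
    """A candidate redaction awaiting a human decision."""
    start: int
    end: int
    text: str
    approved: bool = False


def propose(document: str) -> List[Proposal]:
    """Automated step: suggest spans to redact, but change nothing."""
    return [Proposal(m.start(), m.end(), m.group())
            for m in EMAIL.finditer(document)]


def review(proposals: List[Proposal],
           reviewer: Callable[[Proposal], bool]) -> List[Proposal]:
    """Human step: record the reviewer's decision on every proposal."""
    for p in proposals:
        p.approved = reviewer(p)
    return proposals


def apply_redactions(document: str, proposals: List[Proposal]) -> str:
    """Apply only approved proposals, right to left so offsets stay valid."""
    out = document
    for p in sorted(proposals, key=lambda p: p.start, reverse=True):
        if p.approved:
            out = out[:p.start] + "[REDACTED]" + out[p.end:]
    return out
```

In use, the reviewer callback would be a person's decision captured through some interface; for example, a reviewer might approve redaction of a private individual's address while leaving a published press‑office contact visible. Keeping the list of proposals together with their `approved` flags also gives a basic audit trail of what the tool suggested and what the human accepted.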

Policy recommendations are concrete. First, public bodies should develop a centralised redaction policy framework that defines scope, risk thresholds, and verification procedures, and that is harmonised across departments to ensure uniformity. Second, investment in digital records‑management infrastructure is needed to produce clean, metadata‑rich corpora that can serve as reliable training sets for supervised machine‑learning models. Third, a mandatory, ongoing training curriculum should be instituted, covering AI fundamentals, model limitations, data‑protection law, and ethical decision‑making, so that staff can critically assess AI outputs. Fourth, legislators and regulators are urged to issue clear interpretative guidance that reconciles FOIA obligations with GDPR requirements in the context of AI‑assisted redaction and explicitly delineates liability for automated decisions.

In conclusion, the study provides the first systematic, evidence‑based snapshot of AI‑driven redaction practices in UK public authorities, revealing a pronounced gap between the theoretical promise of AI and the operational realities on the ground. The authors contend that bridging this gap demands a socio‑technical approach that couples robust automation with vigilant human oversight, thereby enabling public institutions to meet both transparency imperatives and privacy safeguards in an increasingly data‑intensive environment.
