Suppressing Bias in Image Captioning and Text-to-Image Models for Difference-Aware Gender Fairness

Vision-Language Models (VLMs) inherit significant social biases from their training data, notably in gender representation. Current fairness interventions often adopt a difference-unaware perspective that enforces uniform treatment across demographic groups. These approaches, however, fail to distinguish between contexts where neutrality is required and those where group-specific attributes are legitimate and must be preserved. Building upon recent advances in difference-aware fairness for text-only models, we extend this concept to the multimodal domain and formalize the problem of difference-aware gender fairness for image captioning and text-to-image generation. We advocate for selective debiasing, which aims to mitigate unwanted bias in neutral contexts while preserving valid distinctions in explicit ones. To achieve this, we propose BioPro (Bias Orthogonal Projection), an entirely training-free framework. BioPro identifies a low-dimensional gender-variation subspace through counterfactual embeddings and applies projection to selectively neutralize gender-related information. Experiments show that BioPro effectively reduces gender bias in neutral cases while maintaining gender faithfulness in explicit ones, thus providing a promising direction toward achieving selective fairness in VLMs. Beyond gender bias, we further demonstrate that BioPro can effectively generalize to continuous bias variables, such as scene brightness, highlighting its broader applicability.

💡 Research Summary

This paper addresses gender bias in vision‑language models (VLMs) for image captioning and text‑to‑image generation by introducing a “difference‑aware” fairness framework, which distinguishes between neutral contexts where gender information should be suppressed and explicit contexts where gender is a legitimate attribute that must be preserved. Existing bias‑mitigation methods are largely “difference‑unaware”: they treat all demographic groups uniformly, often removing gender cues even when they are semantically required. The authors extend recent work on difference‑aware fairness for text‑only models to the multimodal domain and formalize the problem for VLMs.

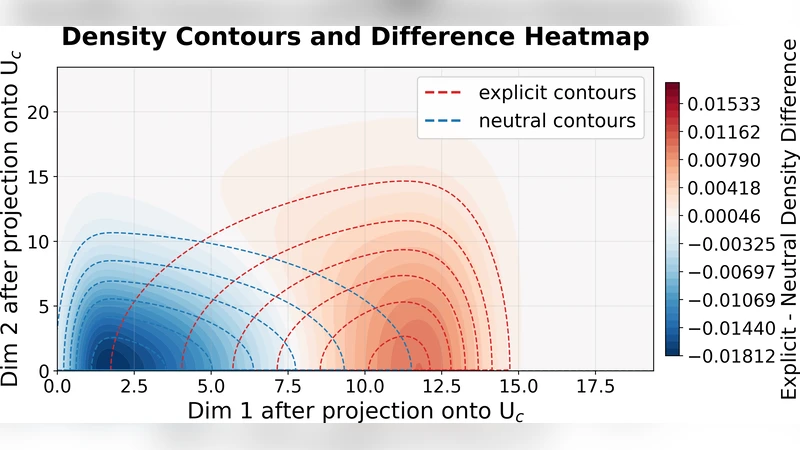

The proposed solution, BioPro (Bias Orthogonal Projection), is a training-free method that operates in three stages. First, counterfactual embeddings are generated by swapping gendered terms (e.g., “man” ↔ “woman”) in otherwise identical image-caption pairs, producing pairs of embeddings that differ only in gender. Second, principal component analysis on the difference vectors of these counterfactual pairs identifies a low-dimensional subspace spanned by the directions of maximal gender variation; this subspace represents the gender-related component of the multimodal representation. Third, an input embedding is orthogonally projected onto the complement of this subspace, which removes the gender component that the subspace captures. Crucially, the projection is applied selectively: a lightweight classifier or rule-based filter first determines whether a prompt is neutral or explicitly gendered. Neutral prompts are projected; for explicit prompts the projection is skipped or applied at reduced strength, preserving gender fidelity.
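The three stages can be sketched in NumPy as below. This is a minimal illustration under stated assumptions, not the authors' implementation: embeddings are plain arrays, the subspace comes from an uncentred SVD of the difference vectors, and the neutral/explicit detector is a hypothetical keyword filter (`GENDERED_TERMS`, `is_explicit`) standing in for whatever classifier or rule set the paper actually uses. The names `gender_subspace`, `project_out` and `selective_debias`, and the `strength` / `explicit_strength` parameters, are likewise illustrative.

```python
import re

import numpy as np

# Hypothetical keyword list standing in for the paper's neutral/explicit
# detector; a real system might use a trained classifier instead.
GENDERED_TERMS = {
    "man", "men", "woman", "women", "boy", "boys", "girl", "girls",
    "he", "him", "his", "she", "her", "hers", "male", "female",
}


def gender_subspace(emb_a: np.ndarray, emb_b: np.ndarray, k: int = 1) -> np.ndarray:
    """Top-k principal directions of counterfactual difference vectors.

    emb_a, emb_b: (n, d) embeddings of paired inputs that differ only in gender.
    Returns a (d, k) matrix with orthonormal columns.
    """
    diffs = emb_a - emb_b  # (n, d), one row per counterfactual pair
    # Uncentred PCA (plain SVD): centring would subtract the shared
    # gender direction, which is exactly the signal we want to keep.
    _, _, vt = np.linalg.svd(diffs, full_matrices=False)
    return vt[:k].T


def project_out(x: np.ndarray, basis: np.ndarray, strength: float = 1.0) -> np.ndarray:
    """Remove `strength` times the component of x lying in span(basis).

    strength=1.0 is the full orthogonal projection x - U U^T x;
    strength=0.0 leaves x unchanged. Accepts a (d,) vector or an (n, d) batch.
    """
    return x - strength * (x @ basis) @ basis.T


def is_explicit(prompt: str) -> bool:
    """Rule-based stand-in: a prompt is 'explicit' if it names a gender."""
    tokens = re.findall(r"[a-z]+", prompt.lower())
    return any(t in GENDERED_TERMS for t in tokens)


def selective_debias(x, prompt, basis, explicit_strength: float = 0.0):
    """Full projection for neutral prompts, reduced (default: none) for explicit ones."""
    strength = explicit_strength if is_explicit(prompt) else 1.0
    return project_out(x, basis, strength)


# Toy check on synthetic 8-d embeddings whose only gender signal lies on axis 0.
rng = np.random.default_rng(0)
base = rng.normal(size=(50, 8))
g = np.eye(8)[0]
emb_man = base + g + 0.01 * rng.normal(size=(50, 8))
emb_woman = base - g + 0.01 * rng.normal(size=(50, 8))
U = gender_subspace(emb_man, emb_woman, k=1)

x = rng.normal(size=8)
neutral = selective_debias(x, "a photo of a doctor", U)
explicit = selective_debias(x, "a photo of a woman doctor", U)
print(abs(U[0, 0]))               # close to 1: recovered the gender axis
print(np.abs(neutral @ U).max())  # ~0: gender component removed
print(np.allclose(explicit, x))   # True: explicit prompt left untouched
```

The SVD is deliberately uncentred: every counterfactual difference points roughly along the same gender direction, so subtracting their mean, as standard PCA does, would discard most of the signal. Setting `explicit_strength` between 0 and 1 corresponds to the "reduced strength" option for explicit prompts.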

Experiments were conducted on two state-of-the-art VLMs: BLIP for image captioning and Stable Diffusion for text-to-image synthesis. Two evaluation metrics were used. The Gender Bias Ratio (GBR) measures the proportion of stereotypical gender assignments in neutral contexts, while Gender Faithfulness (GF) quantifies how accurately gendered prompts are reflected in the output. BioPro reduced GBR from 45% to 12% in neutral cases, indicating strong mitigation of stereotypical bias. At the same time, GF on explicit prompts remained high (0.92 for the original model versus 0.90 after BioPro), showing that legitimate gender information is retained.
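The summary does not spell out how GBR and GF are computed, so the following is only one plausible reading, written as a sketch. It assumes that some external detector has already labelled the gender expressed in each output (`None` if none is expressed) and that each neutral prompt has a known stereotypical gender. The function names are hypothetical, and the counts in the usage lines are toy numbers, not results from the paper.

```python
from typing import Optional, Sequence


def _match_rate(predicted: Sequence[Optional[str]], target: Sequence[str]) -> float:
    """Fraction of positions where the predicted gender equals the target label."""
    if len(predicted) != len(target):
        raise ValueError("predicted and target must have the same length")
    if not predicted:
        raise ValueError("need at least one output to score")
    return sum(p == t for p, t in zip(predicted, target)) / len(predicted)


def gender_bias_ratio(predicted: Sequence[Optional[str]], stereotype: Sequence[str]) -> float:
    """Neutral prompts: share of outputs assigned the stereotypical gender.

    `predicted` holds the gender detected in each output (None if no gender
    is expressed); `stereotype` holds the stereotypical gender for that prompt.
    Lower is better.
    """
    return _match_rate(predicted, stereotype)


def gender_faithfulness(predicted: Sequence[Optional[str]], requested: Sequence[str]) -> float:
    """Explicit prompts: share of outputs showing the gender the prompt asked for.

    Higher is better.
    """
    return _match_rate(predicted, requested)


# Toy usage: 100 neutral outputs, 30 of which follow the stereotype.
preds = ["male"] * 30 + ["female"] * 20 + [None] * 50
stereo = ["male"] * 100
print(gender_bias_ratio(preds, stereo))  # 0.3

# 50 explicit prompts, 47 rendered with the requested gender.
print(gender_faithfulness(["female"] * 47 + ["male"] * 4 + [], ["female"] * 51))
```

Under this reading, debiasing should push GBR down on neutral prompts without pulling GF down on explicit ones, which is the trade-off the reported numbers describe.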

To demonstrate generality beyond gender, the authors applied BioPro to a continuous bias variable, scene brightness. By extracting a brightness-variation subspace and projecting out its component, they reduced brightness bias by more than 30% without noticeable degradation of image quality, suggesting that the method extends to bias dimensions other than gender, including continuous ones.
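One way to extend the counterfactual-pair idea to a continuous variable is sketched below; the summary does not describe the authors' exact procedure, so treat this as an assumption. Each content item is embedded at several attribute levels (here, synthetic brightness levels), each item's mean over levels is subtracted so that only level-driven variation remains, and PCA on the pooled residuals yields the attribute subspace. `variation_subspace` and the synthetic data are hypothetical.

```python
import numpy as np


def variation_subspace(embs: np.ndarray, k: int = 1) -> np.ndarray:
    """Principal directions of within-item variation along a continuous attribute.

    embs: (n_items, n_levels, d) embeddings of the same content rendered at
    several attribute levels (e.g. brightness from dark to bright).
    Returns a (d, k) matrix with orthonormal columns.
    """
    # Subtract each item's mean over levels so only the attribute-driven
    # variation remains, then pool all items and run PCA via SVD.
    centred = embs - embs.mean(axis=1, keepdims=True)
    pooled = centred.reshape(-1, embs.shape[-1])
    _, _, vt = np.linalg.svd(pooled, full_matrices=False)
    return vt[:k].T


# Synthetic check: 20 items, 5 brightness levels, 16-d embeddings,
# with brightness acting along a hidden unit direction `u`.
rng = np.random.default_rng(1)
u = rng.normal(size=16)
u /= np.linalg.norm(u)
levels = np.linspace(-1.0, 1.0, 5)
content = rng.normal(size=(20, 1, 16))
embs = content + levels[None, :, None] * u + 0.01 * rng.normal(size=(20, 5, 16))

B = variation_subspace(embs, k=1)
x = embs[0, 4]                  # brightest version of item 0
x_clean = x - (x @ B) @ B.T     # project out the brightness direction
print(abs(B[:, 0] @ u))         # close to 1: hidden direction recovered
```

With only two levels per item, per-item centring turns each pair (a, b) into ±(a - b)/2, so this reduces to the difference-vector construction used for gender.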

Key technical strengths of BioPro include: (1) no additional model training, allowing immediate deployment on existing VLMs; (2) low computational overhead due to the use of a compact subspace; (3) flexibility to swap or fine‑tune the neutral‑vs‑explicit detection module for different domains. Limitations are acknowledged: the gender subspace may not capture all sociocultural nuances, the neutral/explicit classifier may need domain‑specific adaptation, and extending the approach to multi‑attribute or intersecting biases remains an open challenge.
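Point (2) can be made concrete. Assuming the subspace is stored as a matrix U with k orthonormal columns (k ≪ d) and a strength s in [0, 1] (s = 1 is the full projection onto the complement, s = 0 the identity), the projector never has to be formed as a dense d × d matrix. The values d = 768 and k = 4 below are illustrative, not taken from the paper.

```latex
\begin{aligned}
P_s &= I_d - s\,U U^\top, \qquad U \in \mathbb{R}^{d \times k},\ U^\top U = I_k,\ s \in [0, 1],\\
P_s x &= x - s\,U\,(U^\top x) \qquad (\approx 2dk \text{ multiply-adds, vs. } d^2 \text{ for a dense } P_s),\\
d = 768,\ k = 4 &: \quad 2 \cdot 768 \cdot 4 = 6{,}144 \quad \text{vs.} \quad 768^2 = 589{,}824 \quad (96\times \text{ fewer}).
\end{aligned}
```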

Future work is outlined as follows: (i) learning multi‑dimensional subspaces that jointly model gender, race, age, and other protected attributes; (ii) developing more sophisticated, possibly end‑to‑end, neutral‑context detectors; (iii) creating user‑controllable interfaces that let practitioners set the strength of bias removal per attribute. In sum, BioPro offers a practical, scalable pathway toward selective fairness in multimodal AI systems, moving the field beyond blanket debiasing toward nuanced, context‑aware interventions.
