Cross-Domain Facial Expression Recognition via Graph-Attention-Based Adversarial Domain Alignment

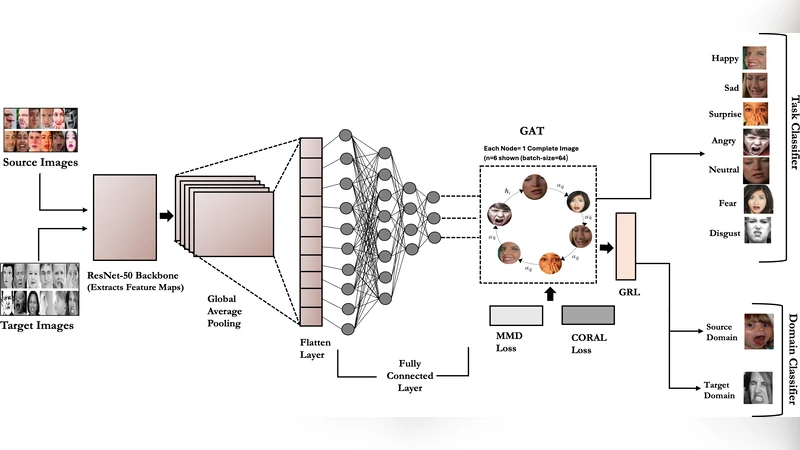

Cross-domain facial expression recognition (CD-FER) remains difficult due to severe domain shift between training and deployment data. We propose Graph-Attention Network with Adversarial Domain Alignment (GAT-ADA), a hybrid framework that couples a ResNet-50 backbone with a batch-level Graph Attention Network (GAT) to model inter-sample relations under shift. Each mini-batch is cast as a sparse ring graph so that attention aggregates cross-sample cues that are informative for adaptation. To align distributions, GAT-ADA combines adversarial learning via a Gradient Reversal Layer (GRL) with statistical alignment using CORAL and MMD. GAT-ADA is evaluated under a standard unsupervised domain adaptation protocol: training on one labeled source (RAF-DB) and adapting to multiple unlabeled targets (CK+, JAFFE, SFEW 2.0, FER2013, and ExpW). GAT-ADA attains 74.39% mean cross-domain accuracy. On RAF-DB to FER2013, it reaches 98.0% accuracy, an improvement of approximately 36 percentage points over the best baseline we re-implemented with the same backbone and preprocessing.

💡 Research Summary

The paper tackles the challenging problem of cross‑domain facial expression recognition (CD‑FER), where severe distribution shifts between the labeled source and unlabeled target domains degrade performance. The authors propose GAT‑ADA, a hybrid framework that integrates a ResNet‑50 backbone with a batch‑level Graph Attention Network (GAT) and combines two complementary domain‑alignment mechanisms: adversarial learning via a Gradient Reversal Layer (GRL) and statistical alignment using CORAL and MMD.

In GAT‑ADA, each mini‑batch is transformed into a sparse ring graph whose nodes correspond to individual samples. The GAT operates on this graph, allowing each sample to attend to its immediate neighbors and aggregate cross‑sample cues that are informative for adaptation. This design captures inter‑sample relationships that are often ignored by conventional convolutional pipelines, thereby strengthening domain‑invariant cues while preserving discriminative expression features.
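The batch-level graph described above can be illustrated with a small, framework-free sketch. It is not the authors' implementation: the function names `ring_edges` and `gat_head`, the self-loops, the identity projection (no learned weight matrix), and the LeakyReLU slope of 0.2 are all assumptions chosen to keep the example short. It shows only how a ring over the batch indices restricts which samples may attend to one another, and how one attention head turns neighbor scores into a weighted average.

```python
import math


def ring_edges(n, k=1):
    """Edges (i, j) meaning "node i attends to node j".

    Each of the n batch samples is linked to its k nearest neighbours on
    each side of a ring, plus itself (self-loops are an assumption here).
    """
    edges = set()
    for i in range(n):
        edges.add((i, i))
        for d in range(1, k + 1):
            edges.add((i, (i + d) % n))
            edges.add((i, (i - d) % n))
    return sorted(edges)


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def gat_head(feats, edges, a_src, a_dst):
    """One GAT-style attention head with an identity projection (assumed).

    score(i, j) = LeakyReLU(a_src . h_i + a_dst . h_j), normalised with a
    softmax over the neighbours j of node i.
    """
    neigh = {i: [] for i in range(len(feats))}
    for i, j in edges:
        neigh[i].append(j)

    out = []
    for i, h_i in enumerate(feats):
        scores = [leaky_relu(dot(a_src, h_i) + dot(a_dst, feats[j]))
                  for j in neigh[i]]
        top = max(scores)  # subtract the max for numerical stability
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        alphas = [e / total for e in exps]
        out.append([sum(a * feats[j][d] for a, j in zip(alphas, neigh[i]))
                    for d in range(len(h_i))])
    return out


# Toy batch of four 1-D "features" (hypothetical values).
batch = [[1.0], [2.0], [3.0], [4.0]]
edges = ring_edges(len(batch))
# Zero attention vectors give uniform weights over each neighbourhood.
aggregated = gat_head(batch, edges, a_src=[0.0], a_dst=[0.0])
```

With `k=1` every sample has three incoming connections (itself and two ring neighbors), so the edge count grows linearly with batch size rather than quadratically as in a fully connected batch graph.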

For alignment, the GRL creates an adversarial game between the feature extractor and a domain classifier, encouraging the extractor to produce features that confuse the classifier. Simultaneously, CORAL aligns the covariance matrices (second-order statistics) of source and target features, and MMD minimizes the distance between their mean embeddings in a reproducing kernel Hilbert space. The joint use of adversarial and statistical objectives mitigates both high‑level and low‑level distribution discrepancies.
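To make the three alignment terms concrete, here is a minimal pure-Python sketch. It rests on several assumptions: the CORAL term uses the common Deep CORAL scaling of 1/(4d²), the MMD term is the biased estimator with an RBF kernel of hypothetical bandwidth `gamma`, and `GradReverse` is a hand-written stand-in for what an autograd framework would do. The summary does not state the authors' kernel choice, loss weights, or GRL schedule, so none of those are reproduced here.

```python
import math


def covariance(X):
    """Unbiased sample covariance of the rows of X (a list of equal-length lists)."""
    n, d = len(X), len(X[0])
    mean = [sum(row[k] for row in X) / n for k in range(d)]
    return [[sum((row[a] - mean[a]) * (row[b] - mean[b]) for row in X) / (n - 1)
             for b in range(d)] for a in range(d)]


def coral_loss(Xs, Xt):
    """Squared Frobenius distance between covariances, scaled by 1/(4 d^2)."""
    d = len(Xs[0])
    Cs, Ct = covariance(Xs), covariance(Xt)
    return sum((Cs[a][b] - Ct[a][b]) ** 2
               for a in range(d) for b in range(d)) / (4 * d * d)


def rbf(x, y, gamma):
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))


def mmd2(Xs, Xt, gamma=1.0):
    """Biased estimate of squared MMD with an RBF kernel (assumed kernel)."""
    def mean_kernel(A, B):
        return sum(rbf(a, b, gamma) for a in A for b in B) / (len(A) * len(B))
    return mean_kernel(Xs, Xs) + mean_kernel(Xt, Xt) - 2 * mean_kernel(Xs, Xt)


class GradReverse:
    """Conceptual gradient reversal: identity forward, gradient times -lam backward."""

    def __init__(self, lam):
        self.lam = lam

    def forward(self, x):
        return list(x)

    def backward(self, grad_out):
        return [-self.lam * g for g in grad_out]


# Hypothetical 2-D features: the target is the source shifted by a constant.
source = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
target = [[x + 5.0, y + 5.0] for x, y in source]

coral_value = coral_loss(source, target)  # covariance ignores a pure shift
mmd_value = mmd2(source, target)          # mean embeddings differ under a shift
reversed_grad = GradReverse(0.5).backward([2.0, -4.0])
```

The toy data illustrates why the statistical terms complement each other: a pure translation leaves the covariances identical, so CORAL alone reports no discrepancy, while MMD still detects the shift.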

The authors evaluate the method under a standard unsupervised domain adaptation protocol: RAF‑DB serves as the sole labeled source, while five widely used facial expression datasets (CK+, JAFFE, SFEW 2.0, FER2013, ExpW) act as unlabeled targets. Using the same ResNet‑50 backbone and preprocessing as baseline methods, GAT‑ADA achieves a mean cross‑domain accuracy of 74.39%. Notably, on the RAF‑DB → FER2013 transfer it reaches 98.0% accuracy, a gain of roughly 36 percentage points over the strongest re‑implemented baseline.

Ablation studies reveal that the sparse ring topology balances computational efficiency with sufficient contextual information, and multi‑head attention further enriches the relational modeling. Removing either the adversarial component or the statistical alignment degrades performance to the low‑70 % range, confirming the synergistic effect of the combined alignment strategy.

In summary, GAT‑ADA demonstrates that explicitly modeling batch‑level sample relations through graph attention, together with a dual adversarial‑statistical alignment scheme, can substantially close the domain gap in facial expression recognition. The approach is generic enough to be extended to other vision tasks suffering from domain shift, especially scenarios where labeled data are scarce and real‑time deployment is required.
